By Judy Zhou, Head of Content Strategy

Key Takeaways

- A true AI marketing agent plans, uses tools, retains memory across sessions, and self-corrects — rule-based automation tools do none of these things, regardless of how they're marketed.

- The latest flagship AI models (Claude Opus 4.5, Gemini 3 Pro) show a roughly 9% accuracy drop on SEO tasks compared to their predecessors in Previsible's benchmark research, so teams should move from raw prompts to structured contextual environments to avoid performance regression.

- According to ConvertMate's 2026 GEO benchmark study, 83% of AI Overview citations come from pages outside the organic top 10, and AI search traffic converts at 4.4x the rate of traditional organic search. That makes generative engine visibility a separate metric to measure your agent's output quality against.

- Start agent integration with keyword clustering and brief generation; treat the first 90 days as provisional; and keep human editorial judgment in any content where a lived perspective is what separates a real asset from a ranking liability.

As someone who oversees AI-driven content research and publishing across hundreds of brands, I get asked some version of this question constantly: "What exactly is a marketing agent?" The answer has changed dramatically — and the confusion between a marketing agent and plain marketing automation is costing teams real money.

The short version: a marketing agent is an AI system that can set goals, use tools, remember context across sessions, and self-correct — not just execute a fixed sequence of steps. That distinction matters more than almost anything else in content strategy right now.

Marketing Agent vs. Marketing Automation — Not the Same Thing

Marketing automation is a decision tree with a nice UI. You define the triggers, the actions, the conditions — and the tool executes them faithfully, every time, in exactly the order you specified. HubSpot workflows, Mailchimp sequences, Zapier chains: all of these are automation. They're powerful. They're also fundamentally rule-based. They do exactly what you told them to do, nothing more.

A true AI marketing agent is different in kind, not just degree. It receives a goal — "increase organic traffic to our product pages by 30% over 90 days" — and then decides which tools to use, in what order, and how to adjust based on what it finds. It doesn't wait for you to define every step. It plans. It executes. It observes results. It revises its approach. That loop — plan, act, observe, revise — is what makes something agentic. Without it, you have automation. With it, you have an agent.
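To make the loop concrete, here is a minimal Python sketch of plan, act, observe, revise. Everything in it is hypothetical: the tactic names, the lift estimates, and the `execute` callback stand in for whatever tools and metrics a real agent would use. It is an illustration of the loop's shape, not a production design.

```python
def run_agent(goal, tactics, execute, max_steps=5):
    """Toy plan-act-observe-revise loop.

    goal: target cumulative lift (e.g. percentage points of traffic growth).
    tactics: dict mapping a tactic name to its estimated lift per run.
    execute: callback that runs a tactic and returns the observed lift.
    """
    estimates = dict(tactics)  # working copy the agent is free to revise
    progress = 0.0
    log = []
    for _ in range(max_steps):
        if progress >= goal:
            break
        # Plan: pick the tactic with the best current estimate.
        choice = max(estimates, key=estimates.get)
        # Act: run it through the (hypothetical) tool layer.
        observed = execute(choice)
        # Observe: record what actually happened.
        progress += observed
        log.append((choice, observed))
        # Revise: replace the estimate with the observed result.
        estimates[choice] = observed
    return progress, log
```

A rule-based workflow would stop at "act". The revise step is what lets the loop abandon a tactic whose real-world result falls short of its estimate and move on to the next best option.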

The practical difference shows up fast. Automation breaks when conditions fall outside the rules you wrote. An agent handles novel situations by reasoning through them. Automation is brittle at the edges; agents are designed for the edges.

I'd push back on one thing I hear constantly: that "AI-powered" in a vendor's marketing copy means the tool is agentic. It almost never does. Most tools labeled "AI marketing" are using LLMs to generate copy inside an otherwise rule-based pipeline. That's not an agent — that's autocomplete with better branding.

What a Marketing Agent Actually Does (With Real Examples)

The clearest way to understand agentic behavior is to watch a loop run. Here's what a real keyword research loop looks like when an agent handles it:

The agent receives a goal: find high-potential keyword opportunities in the "project management software" category with a keyword difficulty (KD) below 50 and commercial intent. It queries a keyword research API, pulls 800 candidates, filters by difficulty and intent, clusters semantically related terms, cross-references against existing content to flag cannibalization risks, and returns a prioritized brief that flags which gaps represent the fastest path to ranking. A human didn't specify each of those steps. The agent reasoned through the workflow.
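The filter, cluster, and cross-reference steps in that loop can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: the candidate fields (`keyword`, `kd`, `intent`, `volume`), the `cluster_of` function, and ranking by total volume are all hypothetical choices, and a real agent would use semantic clustering rather than a caller-supplied label.

```python
from collections import defaultdict


def build_keyword_brief(candidates, existing_keywords, cluster_of, max_kd=50):
    """Filter, cluster, and rank keyword candidates.

    candidates: list of dicts with "keyword", "kd", "intent", "volume".
    existing_keywords: set of keywords the site already targets.
    cluster_of: function mapping a keyword to a cluster label
                (a stand-in for real semantic clustering).
    """
    clusters = defaultdict(list)
    for cand in candidates:
        # Filter: keep commercial-intent terms below the difficulty ceiling.
        if cand["kd"] >= max_kd or cand["intent"] != "commercial":
            continue
        clusters[cluster_of(cand["keyword"])].append(cand)

    brief = []
    for label, members in clusters.items():
        brief.append({
            "cluster": label,
            "keywords": [m["keyword"] for m in members],
            "total_volume": sum(m["volume"] for m in members),
            # Flag terms the site already targets as cannibalization risks.
            "cannibalization_risk": [
                m["keyword"] for m in members
                if m["keyword"] in existing_keywords
            ],
        })
    # Prioritize: largest combined search volume first.
    brief.sort(key=lambda c: c["total_volume"], reverse=True)
    return brief
```

The agentic part is not this function itself but the decision to call it, inspect the result, and choose what to do next.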

Content brief generation works similarly. Feed an agent a target keyword and a competitor set, and it will scrape the SERPs, analyze heading structures, identify semantic gaps in the top-ranking pages, check entity coverage against Google's Knowledge Graph, and produce a brief that includes not just an outline but a recommended word count, internal linking targets, and a suggested angle based on what's missing from existing results. That's not automation. That's judgment.

The publish-and-monitor cycle is where agents start to get genuinely interesting — and where most current tools still fall short. A true agent would publish content, monitor ranking movement over 30–60 days, identify underperforming sections based on scroll depth and engagement data, generate a revision hypothesis, and re-optimize without being prompted. I've seen early versions of this working in closed environments. It's not science fiction. It's also not what most vendors are selling today.

Is your current AI tool actually agentic — or just automation with a smarter label?

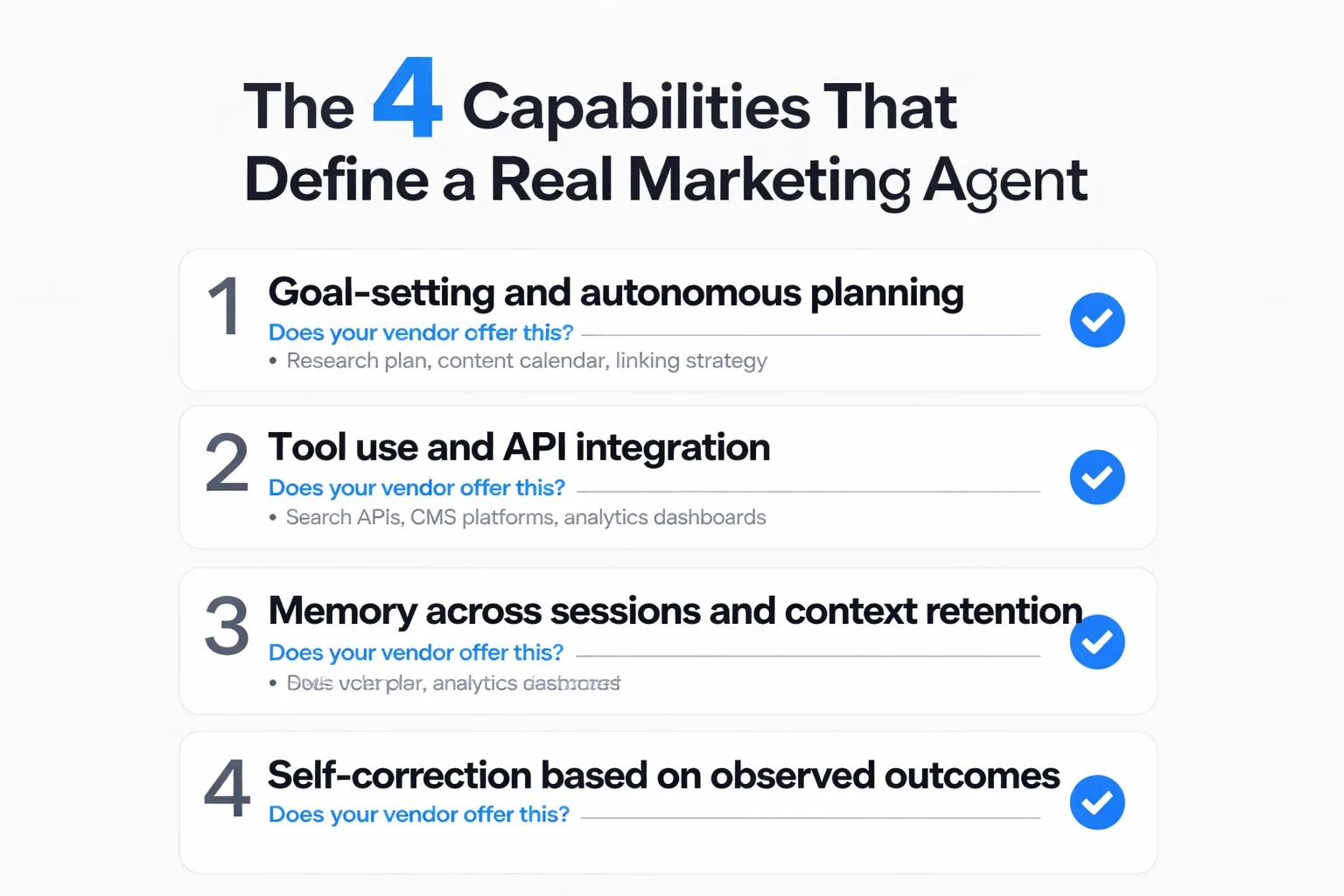

The 4 Capabilities That Define a Real Marketing Agent

Use this as a vendor evaluation checklist. If a platform can't demonstrate all four, it's not a marketing agent — it's automation with an AI label.

1. Goal-setting and autonomous planning. The agent should be able to receive a high-level objective and decompose it into a task sequence on its own. If you have to specify every step, you're the agent. A real system can take "grow our topical authority in B2B SaaS" and produce a research plan, content calendar, and internal linking strategy without hand-holding.

2. Tool use. Agents need to call external tools — search APIs, CMS platforms, analytics dashboards, structured data validators — and interpret what those tools return. This is what separates an LLM from an agent. The model alone can generate text. The agent can query Ahrefs, read the output, and decide what to write based on what it found. Tool use is the bridge between language and action.

3. Memory across sessions. This is the capability most often missing from tools claiming to be agents. A real marketing agent remembers that last month's content push targeted informational intent, that a particular cluster underperformed, that your brand voice guidelines prohibit certain framings. Cross-session memory is what allows an agent to build a coherent strategy over time rather than starting from zero every conversation. Without it, you're running disconnected sprints, not a continuous program.

4. Self-correction. The agent should observe its own outputs, compare them against goals, and revise its approach. This is the hardest capability to fake. True self-correction requires feedback loops — ranking data, engagement signals, conversion metrics — and the ability to update strategy based on what those signals say. A tool that generates content but never learns from how that content performs isn't self-correcting. It's just productive.
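For the memory capability in item 3, the simplest possible version is a store that survives between runs. The sketch below uses a JSON file for illustration; the `AgentMemory` class, its method names, and the file-based storage are assumptions made for this example, and a real deployment would more likely use a database or a vector store.

```python
import json
from pathlib import Path


class AgentMemory:
    """Minimal cross-session memory backed by a JSON file (illustrative only)."""

    def __init__(self, path):
        self.path = Path(path)
        # Load notes from a previous session if the file already exists.
        if self.path.exists():
            self.notes = json.loads(self.path.read_text())
        else:
            self.notes = {}

    def remember(self, key, value):
        # Persist immediately so the note survives the end of the session.
        self.notes[key] = value
        self.path.write_text(json.dumps(self.notes, indent=2))

    def recall(self, key, default=None):
        return self.notes.get(key, default)
```

A second `AgentMemory` pointed at the same file picks up where the first one left off, which is the property that separates a continuous program from disconnected sprints.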

When you're evaluating any vendor making "AI marketing agent" claims, run through these four. Ask for a demo that shows the agent handling an unexpected result — a keyword that suddenly became competitive, a piece of content that dropped in rankings. How it responds tells you more than any feature list.
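If you want to record vendor evaluations consistently, the four-part checklist maps naturally onto a small data structure. This is a hypothetical sketch: the `VendorEvaluation` class and its field names are invented here, and the booleans still depend on a human judging the demo.

```python
from dataclasses import dataclass, fields


@dataclass
class VendorEvaluation:
    autonomous_planning: bool
    tool_use: bool
    cross_session_memory: bool
    self_correction: bool

    def missing(self):
        """Return the names of the capabilities the vendor failed to demonstrate."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def is_agent(self):
        """All four capabilities must be present to count as an agent."""
        return not self.missing()
```

Recording what is missing, rather than a single pass or fail, makes it easier to compare platforms that are each strong on different capabilities.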

Which Platforms Are Closest to True Marketing Agents Right Now

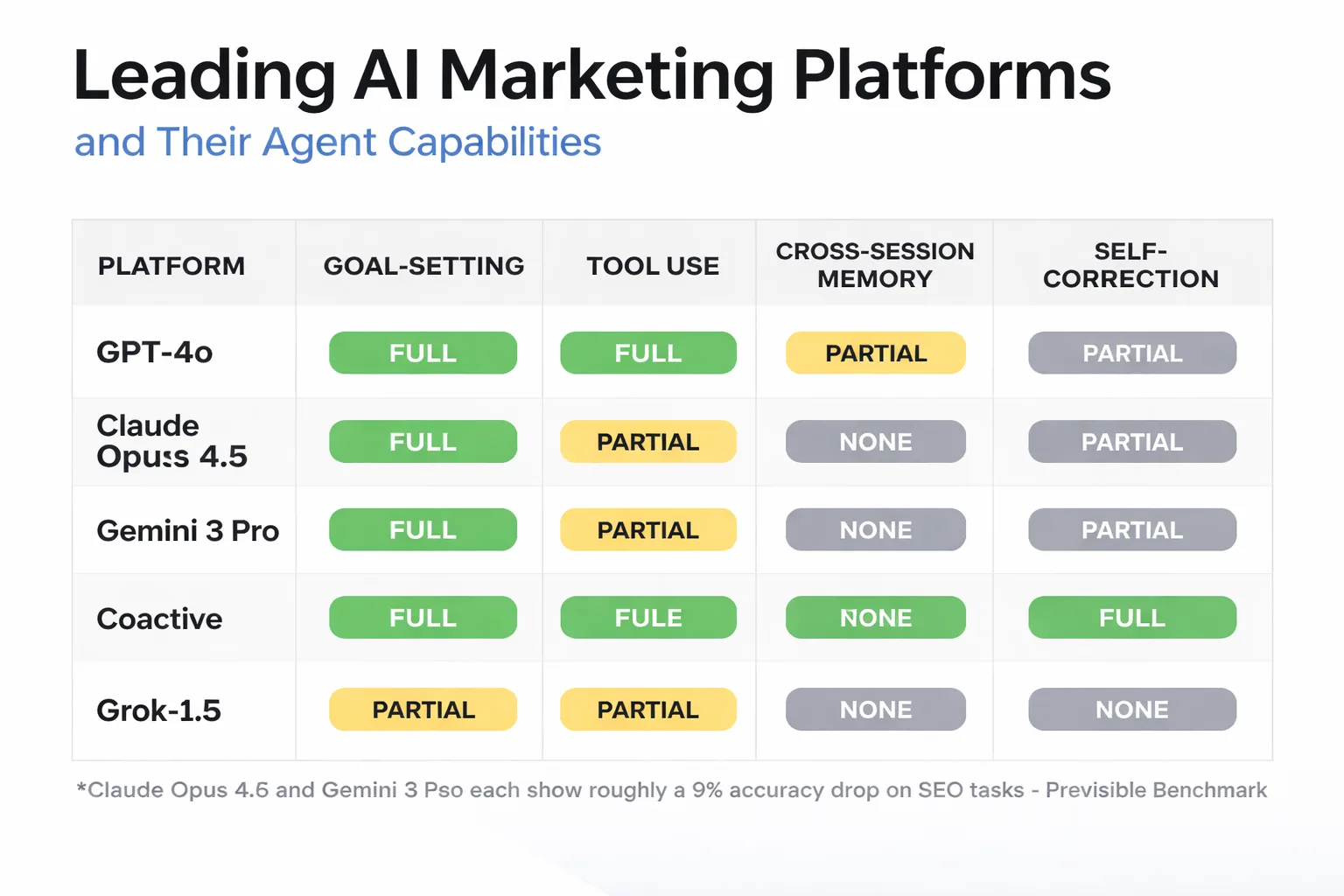

Honest answer: we're early. Most platforms in 2026 are strong on one or two of the four capabilities and weak on the rest.

Platforms built on OpenAI's GPT-4o architecture with custom tool integrations — think Custom GPTs with connected APIs — come closest to genuine tool use. They can query external data sources, interpret results, and act on them. Where they typically fall down is memory and self-correction. Without persistent memory infrastructure, each session starts fresh.

There's a finding from Previsible's benchmark research that genuinely surprised me: the latest flagship models — Claude Opus 4.5, Gemini 3 Pro — show roughly a 9% accuracy drop on SEO tasks compared to their predecessors. Nine percent. That's not noise. It runs directly counter to the assumption that newer always means better for content workflows. What the data suggests is that as models optimize for deep reasoning and agentic tasks, they regress on the structured, pattern-matching work that SEO requires. The implication for anyone building on raw API calls is uncomfortable: you can't assume the newest model is your best tool for content creation.

The shift this points to — and I've been pushing my own team on this — is away from raw prompts toward what practitioners are calling "contextual containers": Custom GPTs, Claude Projects, Gemini Gems. These structured environments persist instructions, brand voice, and workflow logic across sessions. They're not full agents, but they're the closest practical approximation available right now. If you're still running raw prompts with the latest flagship model for SEO work, you're likely getting worse results than you were six months ago.

For a deeper look at how these models compare on actual content tasks, the AI Agent Benchmarks breakdown on Meev is worth reading — it gets into the mechanics of where model performance actually diverges for marketing workflows.

On the platform side: tools like Jasper and Copy.ai have moved toward workflow orchestration but remain largely rule-based in their sequencing. They use AI for generation but automation for routing. That's a meaningful distinction. Purpose-built agent frameworks — AutoGPT derivatives, custom LangChain deployments — offer more genuine autonomy but require significant engineering to deploy reliably at content-team scale.

The honest 2026 answer is: no single off-the-shelf platform fully delivers on all four capabilities. The teams getting the most out of AI marketing agents are assembling composites — a structured environment for memory and brand context, a tool-use layer for data retrieval, a human review checkpoint for self-correction. It's more architecture than software purchase.

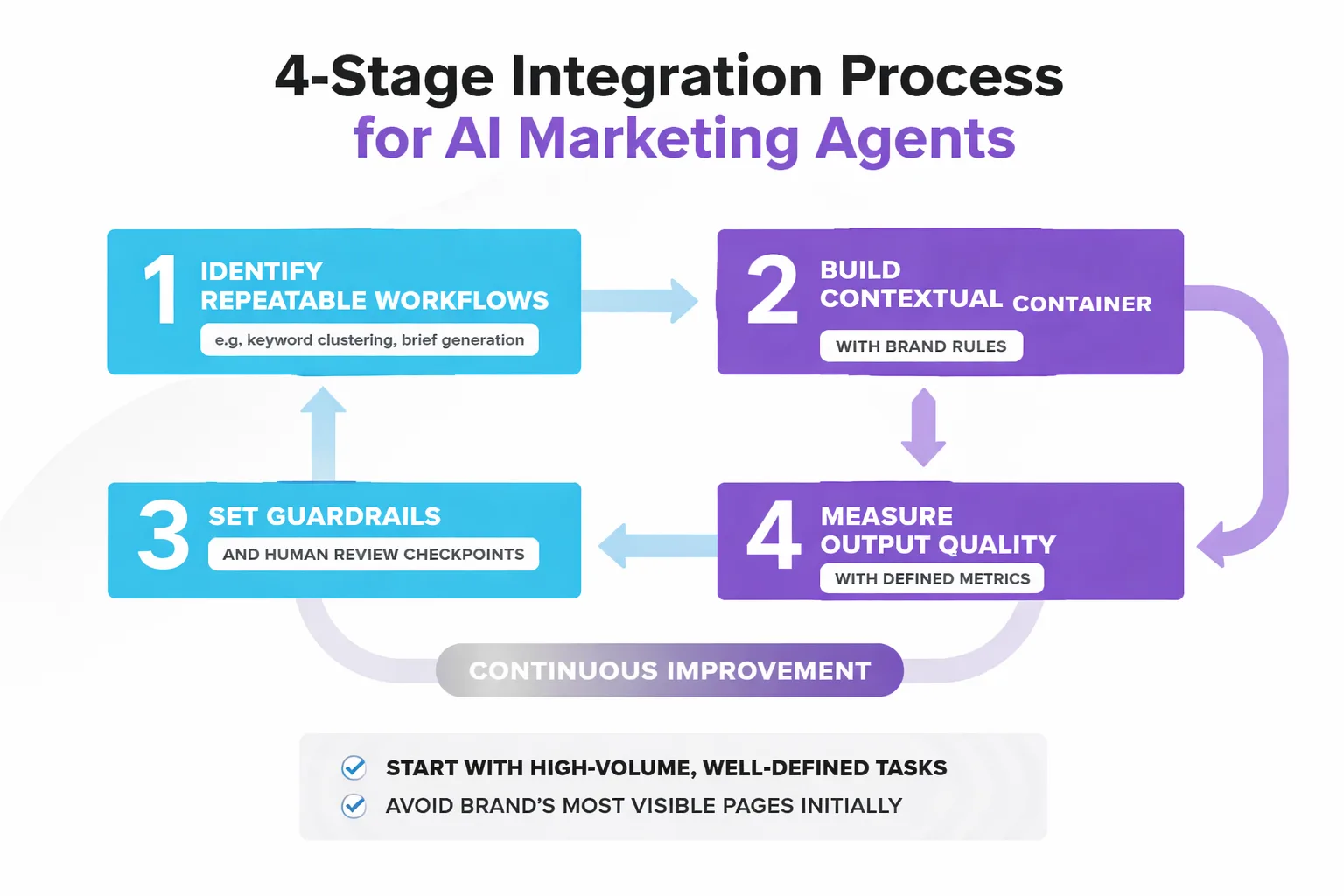

How to Integrate a Marketing Agent Into Your Content Stack

Start with the workflows that are high-volume, well-defined, and currently eating your team's time without requiring much creative judgment. Keyword clustering is the canonical first handoff. Brief generation from a keyword and competitor set is a close second. These are tasks where the inputs and outputs are clear, the quality bar is measurable, and the cost of a bad output is low.

Don't start with content that touches your brand's most visible pages. The failure mode I keep seeing is teams handing off homepage copy or pillar content to an agent before they've calibrated its outputs. The first three months of any AI content program should be treated as provisional — I tell my team this explicitly. The question isn't whether the content got published. It's whether a person with domain knowledge touched it in a way that left a mark. A perspective, a data point gathered internally, a comparison that required judgment. Without that, you're renting visibility, not building it.

On guardrails: the most effective ones aren't content filters — they're structural. Define what the agent is allowed to publish autonomously versus what requires human sign-off. In practice, this means: keyword research and brief generation can be fully automated; first drafts require human review before publishing; any content touching product claims, pricing, or legal territory requires editor approval. That tiered structure keeps the agent productive without exposing you to the reputational or ranking risk of fully unsupervised output.
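The tiered structure above can be expressed as a small routing rule. The sketch below is one possible encoding, not a prescribed implementation: the task and topic names are hypothetical labels, and defaulting unknown tasks to human review is an assumption chosen for safety.

```python
from enum import Enum


class Approval(Enum):
    AUTONOMOUS = "autonomous"
    HUMAN_REVIEW = "human_review"
    EDITOR_APPROVAL = "editor_approval"


# Hypothetical labels mirroring the tiers described in the text.
SENSITIVE_TOPICS = {"product_claims", "pricing", "legal"}
AUTONOMOUS_TASKS = {"keyword_research", "brief_generation"}


def required_approval(task, topics=()):
    """Return the sign-off level a piece of agent output needs."""
    # Sensitive topics always escalate, whatever the task type.
    if SENSITIVE_TOPICS & set(topics):
        return Approval.EDITOR_APPROVAL
    if task in AUTONOMOUS_TASKS:
        return Approval.AUTONOMOUS
    # Assumption: anything else, including first drafts, gets human review.
    return Approval.HUMAN_REVIEW
```

Checking sensitive topics first means a first draft that mentions pricing is routed to an editor rather than to ordinary review.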

Measuring output quality is where most teams underinvest. The metrics that matter aren't word count or publication velocity — they're ranking movement at 60 and 90 days, time-on-page relative to query intent, and whether the content is getting cited in AI Overviews. That last one matters more than most people realize. According to ConvertMate's 2026 GEO benchmark study, 83% of AI Overview citations come from pages outside the organic top 10 — meaning there's a parallel visibility surface that traditional ranking metrics won't capture. If your measurement framework is purely rank-based, you're missing a significant portion of the return.

The same research found that AI search traffic converts at 4.4x the rate of traditional organic search. That number reframes the ROI calculation entirely. A page that ranks 15th organically but gets cited in AI Overviews may be generating more qualified traffic than a page sitting at position 3. Your agent's output quality measurement needs to account for this.
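A quick back-of-the-envelope calculation shows how the 4.4x figure changes the comparison. The visit counts and the 2% baseline conversion rate below are made-up inputs for illustration only; the multiplier is the one cited above.

```python
AI_CONVERSION_MULTIPLIER = 4.4  # the ConvertMate figure cited in the text


def conversions(visits, base_rate, from_ai_search=False):
    """Expected conversions for a given number of visits."""
    rate = base_rate * AI_CONVERSION_MULTIPLIER if from_ai_search else base_rate
    return visits * rate


def break_even_ai_visits(organic_visits):
    """AI-search visits needed to match the conversions from organic_visits."""
    return organic_visits / AI_CONVERSION_MULTIPLIER


# Hypothetical inputs: 1,000 organic visits vs. 300 AI-search visits at a 2% base rate.
organic = conversions(1000, 0.02)
ai_search = conversions(300, 0.02, from_ai_search=True)
print(f"organic: {organic:.1f}, AI search: {ai_search:.1f}")
print(f"break-even AI visits per 1,000 organic: {break_even_ai_visits(1000):.0f}")
```

With these inputs, 300 AI-search visits produce about 26 conversions against 20 from 1,000 organic visits, and the break-even point is roughly 227 AI-search visits per 1,000 organic ones. Whether a given page clears that bar is something only your own analytics can answer.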

One practical starting point that's worked in my experience: run your agent's output through a structured review against Google's Search Quality Rater Guidelines before publishing. Not because Google's raters will see it — they won't — but because the E-E-A-T framework is a useful proxy for whether a piece of content has anything in it that a language model couldn't have generated by summarizing existing web content. If it doesn't, it's a liability. The sites that ignored that distinction after the 2024 algorithm cycles mostly didn't recover.

For AI content automation to work at scale, the human role doesn't disappear; it shifts. You move from writer to editor, from editor to architect. The agent handles the repeatable. You handle the irreplaceable: the take, the lived experience, the judgment call that requires knowing your audience in a way no model has been trained to know.

FAQ

What's the difference between a marketing agent and a chatbot? A chatbot responds to inputs within a single conversation, with no persistent memory or ability to take actions outside the chat. A marketing agent can plan multi-step workflows, call external tools, retain context across sessions, and adjust its approach based on observed results. The gap is substantial.

Can an AI marketing agent replace a content strategist? Not in 2026, and probably not for a while. What it can replace is the repeatable, low-judgment work that currently consumes a strategist's time — keyword clustering, brief generation, performance monitoring. The work that requires genuine expertise — positioning, audience insight, editorial judgment — still needs a person. The strategist who learns to direct agents effectively will outperform the one who ignores them.

How do I know if a vendor's tool is actually agentic? Ask for a demo that shows the tool handling an unexpected result without human intervention. Can it receive a goal, build a plan, execute across multiple tools, and revise based on what it finds? If the demo requires you to specify every step, it's automation with an AI layer — not an agent.

Does a newer AI model always mean better results for SEO tasks? No, and this is the counterintuitive finding that should change how teams build. Previsible's benchmark data shows roughly a 9% accuracy drop on SEO tasks in the latest flagship models compared to their predecessors. Teams need to test model performance on their specific workflows, not assume the newest release is the best fit.

What workflows should I hand off to a marketing agent first? Start with keyword clustering, content brief generation, and performance monitoring. These are high-volume, well-defined, and low-risk. Avoid handing off brand-visible content until you've calibrated the agent's outputs over at least 60–90 days of production.

How does a marketing agent affect AI search visibility? Significantly, if you use it correctly. The ConvertMate 2026 GEO benchmark found that 83% of AI Overview citations come from pages outside the organic top 10. An agent that monitors AI citation patterns and optimizes for generative engine visibility — not just traditional rankings — creates a meaningful advantage that rank-tracking alone won't show you.

What this means for your content program in 2026: The marketing agent isn't a tool you buy — it's a capability you build. The teams winning right now are the ones treating AI not as a content factory but as an autonomous collaborator with specific strengths (volume, consistency, data synthesis) and specific weaknesses (judgment, lived experience, novelty). Map your workflows to those strengths. Keep humans in the loop where judgment is irreplaceable. Measure the right outputs. That's the actual definition of a marketing agent strategy that works.

About the Author

Judy Zhou, Head of Content Strategy

Judy Zhou leads content strategy at Meev, where she oversees AI-driven content research and publishing for hundreds of brands. With a background in SEO and editorial operations, she focuses on building content systems that rank on Google, get cited by AI search engines, and drive measurable business results.

See how Meev's AI-driven publishing system handles the repeatable work so your team can focus on what actually requires judgment.