The OpenAI ecosystem has evolved far beyond the simple chat interface most content teams rely on. While many organizations still treat these tools as glorified autocomplete, the reality is that they now function as a full content operating system. If your team is still stuck on basic ChatGPT prompts, you are essentially running a Ferrari in first gear.

As Head of Content Strategy at Meev, I've watched this play out across dozens of content operations over the past 18 months. Teams that understand the full OpenAI ecosystem — what each tool does, where it fits, and where it breaks — are producing content at 3-4x the velocity of teams that don't. The ones that don't understand it are either underusing it or, worse, using it wrong and wondering why their rankings are sliding.

Key Takeaways

- Most content teams use ChatGPT for drafting but ignore the GPT-4o API, Assistants API, and agentic workflows that could automate 60-70% of their production pipeline.

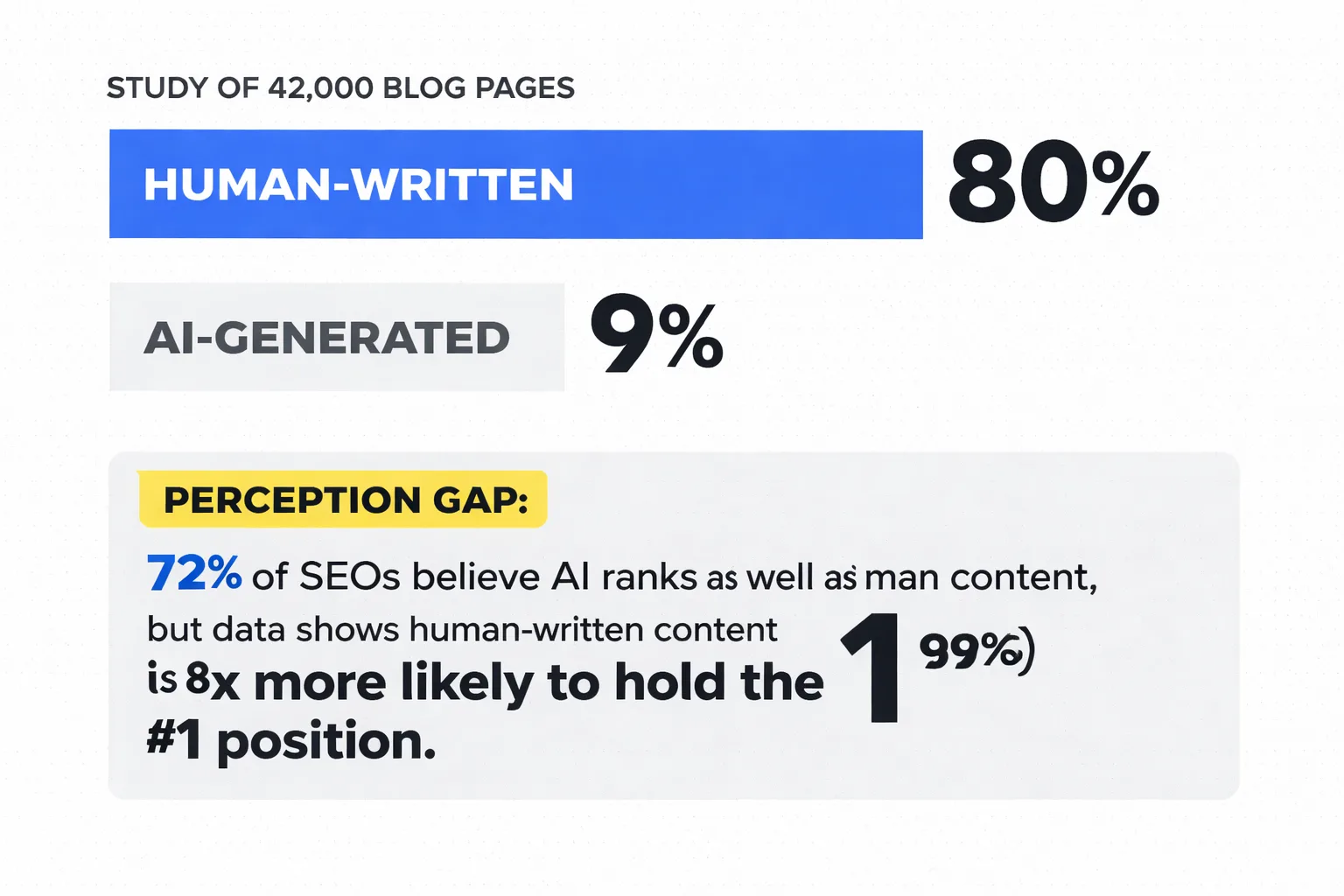

- Position 1 results are 8x more likely to be human-written than purely AI-generated content — the ecosystem works best as a human-AI collaboration layer, not a replacement.

- The biggest integration failure isn't hallucination — it's context fragmentation across long projects, which breaks quality consistency at scale.

- A 3-person content team can realistically save 52+ hours per month using the full ecosystem, cutting per-article time from 11 hours to 5.5 hours at roughly $55/month in API costs.

"The OpenAI ecosystem isn't a single tool; it's a content operating system. Teams that treat it as a single tool are leaving 90% of the value on the table."

What is the Full OpenAI Ecosystem Beyond ChatGPT?

Most content teams know ChatGPT. Far fewer have mapped the full OpenAI product surface and what each layer actually does for a content operation.

Here's the current ecosystem as I'd draw it for a content team:

ChatGPT (consumer interface): The front door. Best for ad-hoc tasks — quick research, tone checks, headline brainstorming, rephrasing. The Projects feature (rolled out in late 2024) now lets you maintain persistent context across conversations, which is genuinely useful for ongoing content series. Canvas mode adds a collaborative document layer — you can edit AI output inline, ask for targeted rewrites, and track changes. It's closer to a co-writing environment than a chat interface.

GPT-4o API: The engine under the hood. This is where you build repeatable, scalable workflows — content briefs generated from a keyword list, bulk meta description generation, structured data extraction from raw research. The API gives you programmatic control: you can set system prompts, temperature, output format, and chain multiple calls together. If ChatGPT is a hammer, the API is a CNC machine.

Assistants API: Persistent AI agents with memory, file access, and tool use. For content teams, this is the key to long-form research projects — you can upload a 200-page industry report and have the assistant answer specific questions against it, maintain context across a multi-week project, or run a content audit against a style guide. The file search capability alone has replaced what used to be a 4-hour manual research task for my team.

Operator agents (and the broader agentic layer): This is where the ecosystem gets genuinely powerful — and where most content teams haven't gone yet. Agents can execute multi-step tasks autonomously: pull a keyword list from a spreadsheet, generate briefs, draft outlines, flag low-quality outputs for human review, and push approved drafts to a CMS. The AI Agent Benchmarks research shows workers save up to one hour per day on average — but that number jumps significantly when you move from chat-based AI to agentic workflows.

Sora: Video generation. For content teams, the immediate use case is social content, explainer video scripts, and visual asset creation. It's not production-ready for long-form brand video yet, but for short-form distribution content, it's already viable.
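To make the "programmatic control" point about the GPT-4o API concrete, here is a minimal sketch of a bulk meta description request. The function names, the 155-character limit, and the temperature value are illustrative assumptions, not a recommended configuration. The `client` argument is assumed to be an instance of the official `openai` Python client; the request builder itself needs no network access.

```python
import json


def build_meta_description_request(title, summary, brand_voice):
    """Build keyword arguments for a Chat Completions call (no network access here)."""
    system_prompt = (
        f"You write meta descriptions. Brand voice: {brand_voice}. "
        "Reply as JSON with a single key 'meta_description' of at most 155 characters."
    )
    return {
        "model": "gpt-4o",
        "temperature": 0.3,  # assumed value: lower temperature for more repeatable output
        "response_format": {"type": "json_object"},  # ask for a JSON object back
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": f"Title: {title}\nSummary: {summary}"},
        ],
    }


def generate_meta_description(client, title, summary, brand_voice):
    """Send the request using an OpenAI client passed in by the caller."""
    request = build_meta_description_request(title, summary, brand_voice)
    response = client.chat.completions.create(**request)
    return json.loads(response.choices[0].message.content)["meta_description"]
```

Keeping the request builder separate from the network call makes the prompt reviewable and testable on its own, and looping `generate_meta_description` over a list of articles is what turns a one-off chat into a repeatable workflow.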

Where Does Each Tool in the OpenAI Ecosystem Fit in Your Pipeline?

The OpenAI ecosystem works best when you stop thinking about it as "AI for content" and start thinking about it as a task-routing system. Different tools have different strengths, and misrouting tasks is the most common failure mode I see.

Here's the mapping I use across content operations:

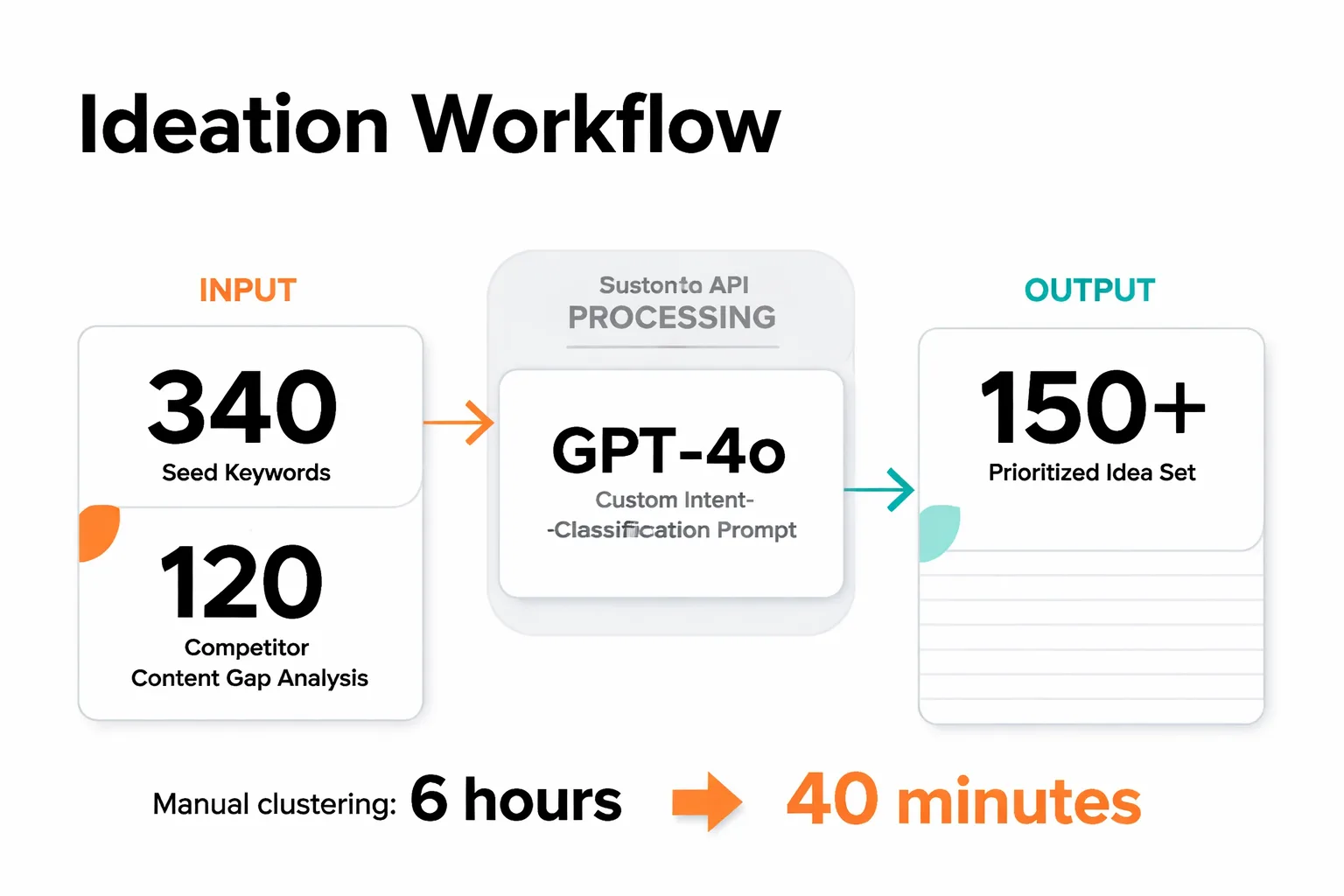

Ideation stage → ChatGPT (Projects) + GPT-4o API: For one-off ideation sessions, ChatGPT with a well-structured system prompt works fine. For teams generating 50+ content ideas per month, the API is the right tool: you can feed it a keyword list, a competitor content gap analysis, and your existing content inventory, and get back a prioritized idea set with intent classification. I ran this exact workflow for a B2B SaaS client earlier this year: we fed 340 seed keywords into a GPT-4o API call with a custom intent-classification prompt, and it collapsed what would have been a 6-hour manual clustering session into 40 minutes. The output still needed human review (the AI created 3 clusters that would have cannibalized each other), but the acceleration was real.

Research stage → Assistants API: This is the most underused part of the ecosystem for content teams. The Assistants API with file search lets you upload source documents (competitor articles, industry reports, customer interviews, internal data) and query against them with persistent context. For a 3,000-word thought leadership piece, I'll upload 8-10 source documents and run structured research queries before drafting. The output quality is meaningfully higher than asking ChatGPT to "research" a topic from its training data, because you're grounding it in verified sources rather than relying on what it may or may not have learned.

Drafting stage → GPT-4o API with structured prompts: Not ChatGPT. The API gives you control over output format, length, and style consistency that the consumer interface doesn't. For teams producing 20+ articles per month, the difference between a well-engineered API prompt and a ChatGPT conversation is the difference between consistent output and constant rework. What most teams skip is the editing step that follows: position 1 results are 8x more likely to be human-written than purely AI-generated content. The API draft is a starting point, not a finished product. Human editing isn't optional if you care about rankings.

Editing and QA stage → ChatGPT Canvas: Canvas mode is genuinely useful here. You can paste a draft, ask for targeted improvements ("tighten the intro," "make the examples more specific," "check for passive voice"), and edit inline without losing context. It's faster than back-and-forth prompting and produces cleaner output than asking for a full rewrite.

Repurposing stage → GPT-4o API (batch processing): Take a finished 2,500-word article and generate: 5 LinkedIn posts, 3 email newsletter snippets, 10 social captions, a YouTube script outline, and a short-form video hook. This is a batch API call, not a manual ChatGPT session. The whole repurposing workflow for a single article takes under 10 minutes when it's properly automated.

Distribution and scheduling → Operator agents: This is the frontier for most content teams right now. Agents that can push approved content to a CMS, schedule social posts, update internal content calendars, and flag content for review based on performance data. If you want to understand how this fits into a broader automated content pipeline, "How to Build a Content Pipeline That Runs Without You" is worth reading alongside this.
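For the ideation stage described above, a sketch of the clustering step might look like the following. The prompt wording, the JSON shape, and the 0.5 overlap threshold are all assumptions for illustration. The overlap check is a simple word-level Jaccard heuristic, swapped in here as one possible way to surface cluster pairs that may cannibalize each other; it is not the method used in the client project mentioned above, and it only produces candidates for human review.

```python
import json
from itertools import combinations


def build_clustering_prompt(keywords):
    """Build a prompt asking the model for clusters with an intent label, as JSON."""
    lines = "\n".join(f"- {kw}" for kw in keywords)
    return (
        "Group these keywords into topic clusters and label each cluster's search "
        "intent (informational, commercial, transactional, or navigational). "
        'Reply as JSON: {"clusters": [{"name": str, "intent": str, "keywords": [str]}]}\n'
        + lines
    )


def parse_clusters(raw_json):
    """Return {cluster_name: set_of_keywords} from the model's JSON reply."""
    data = json.loads(raw_json)
    return {c["name"]: set(c["keywords"]) for c in data["clusters"]}


def overlapping_clusters(clusters, threshold=0.5):
    """Flag cluster pairs whose vocabularies overlap (Jaccard similarity on words)."""
    def words(keywords):
        return {w for kw in keywords for w in kw.lower().split()}

    flagged = []
    for (a, kws_a), (b, kws_b) in combinations(clusters.items(), 2):
        wa, wb = words(kws_a), words(kws_b)
        union = wa | wb
        score = len(wa & wb) / len(union) if union else 0.0
        if score >= threshold:
            flagged.append((a, b, round(score, 2)))
    return flagged
```

Anything returned by `overlapping_clusters` goes to a strategist to merge, split, or keep; the heuristic catches near-duplicate phrasing but not clusters that overlap in meaning with different words.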

| Workflow Stage | Best OpenAI Tool | Time Saved (est.) | Human Checkpoint Needed? |
| --- | --- | --- | --- |
| Ideation | GPT-4o API | 3-4 hrs/week | Yes (intent review) |
| Research | Assistants API | 4-5 hrs/article | Yes (source verification) |
| Drafting | GPT-4o API | 2-3 hrs/article | Yes (always) |
| Editing/QA | ChatGPT Canvas | 1-2 hrs/article | Yes (final read) |
| Repurposing | GPT-4o API (batch) | 2-3 hrs/article | Light (spot check) |
| Distribution | Operator agents | 3-4 hrs/week | Yes (approval gate) |
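One way to implement the batch repurposing row is OpenAI's Batch API, which takes a JSONL file with one request per line. The sketch below only builds that file; the task names, prompts, and `article_id` scheme are assumptions. Note that the Batch API is asynchronous and results are not guaranteed to arrive within minutes, so if you need the output right away, sending the same request bodies as ordinary Chat Completions calls in a script is the alternative.

```python
import json

# Assumed task list, mirroring the repurposing outputs described in this article.
REPURPOSE_TASKS = {
    "linkedin": "Write 5 LinkedIn posts based on the article.",
    "newsletter": "Write 3 email newsletter snippets based on the article.",
    "social": "Write 10 short social captions based on the article.",
    "youtube": "Write a YouTube script outline based on the article.",
    "video_hook": "Write one short-form video hook based on the article.",
}


def build_batch_lines(article_id, article_text, model="gpt-4o"):
    """Return one JSON string per repurposing task, in Batch API request format."""
    lines = []
    for task, instruction in REPURPOSE_TASKS.items():
        request = {
            "custom_id": f"{article_id}-{task}",  # lets you match results to tasks
            "method": "POST",
            "url": "/v1/chat/completions",
            "body": {
                "model": model,
                "messages": [
                    {"role": "system", "content": instruction},
                    {"role": "user", "content": article_text},
                ],
            },
        }
        lines.append(json.dumps(request))
    return lines


def write_batch_file(path, lines):
    """Write the requests as JSONL, one request per line."""
    with open(path, "w", encoding="utf-8") as f:
        f.write("\n".join(lines) + "\n")
```

The `custom_id` is what ties each result back to its article and task when the output file comes back, so it is worth making it human-readable.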

The Integration Problem Nobody Warns You About

Here's where I'm going to be blunt, because most of the content about the OpenAI ecosystem skips this entirely: the integration problems are real, they're not edge cases, and they will break your quality at scale if you don't design for them upfront.

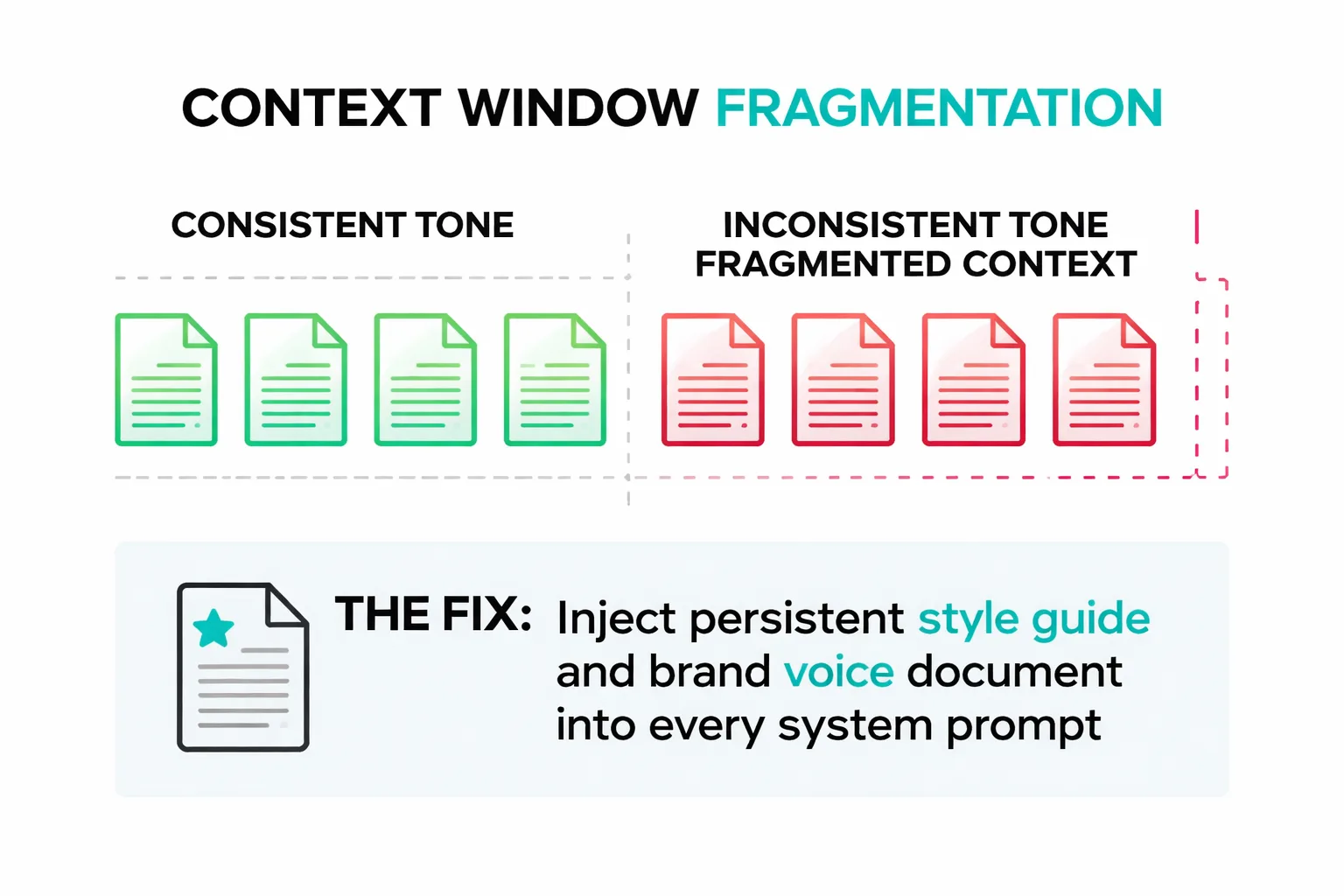

Context window fragmentation is the one that hits hardest. The GPT-4o API has a 128K token context window — which sounds enormous until you're running a 10-article content series where each piece needs to be consistent with the others in tone, terminology, and argument structure. Each API call starts fresh. The model doesn't remember what it wrote in article 3 when it's writing article 7. The fix is a persistent style guide and brand voice document that gets injected into every system prompt — but building that document correctly takes real time, and most teams skip it because it's not glamorous work. I've seen this exact failure mode on 4 separate content operations in the last 6 months: the first 3 articles look great, articles 4-8 start drifting in tone, and by article 10 the series feels like it was written by different people. Because, effectively, it was.
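The style guide injection described above can be sketched as a small prompt builder that every call in the series goes through. The section labels, the glossary, and the idea of passing short summaries of earlier articles are assumptions added for illustration; the core point from the text is only that the same brand voice document is prepended to every system prompt.

```python
def build_system_prompt(style_guide, glossary, prior_summaries):
    """Combine the shared series context into one system prompt string."""
    terms = "\n".join(
        f"- {term}: {definition}" for term, definition in sorted(glossary.items())
    )
    recaps = "\n".join(f"{i}. {s}" for i, s in enumerate(prior_summaries, start=1))
    return (
        "You are drafting one article in a series. Follow the style guide exactly.\n\n"
        f"STYLE GUIDE:\n{style_guide}\n\n"
        f"TERMINOLOGY:\n{terms or '(none)'}\n\n"
        f"EARLIER ARTICLES (summaries):\n{recaps or '(none yet)'}"
    )


def build_messages(style_guide, glossary, prior_summaries, brief):
    """Return the messages list for one article, always led by the shared context."""
    return [
        {"role": "system", "content": build_system_prompt(style_guide, glossary, prior_summaries)},
        {"role": "user", "content": brief},
    ]
```

Because article 7 receives the same style guide and terminology as article 3, plus a recap of what came before, the model no longer starts from a blank slate on each call.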

Hallucination risk in research tasks is the second failure mode, and it's more dangerous than most teams realize. The Assistants API with file search is much safer than asking ChatGPT to research from memory — but it's not immune. I've caught the model confidently citing a statistic from an uploaded document that it slightly misquoted, changing a percentage in a way that changed the meaning. The fix is a mandatory human verification step for any statistic that will appear in a published article. Not a spot check — every stat, every time. This adds time, but the alternative is publishing incorrect data, which is a much bigger problem for E-E-A-T and reader trust.
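A small script can support that verification step by listing every number in a draft that does not appear verbatim in any source document. This is a triage aid under simple assumptions (a regex for numbers, exact string matching), not a replacement for the human check: a number that matches a source can still be attached to the wrong claim.

```python
import re

# Matches tokens such as 42,000 or 9% or $15 or 3.5%
NUMBER_PATTERN = re.compile(r"\$?\d+(?:,\d{3})*(?:\.\d+)?%?")


def extract_stats(text):
    """Return every numeric token in the text, in order of appearance."""
    return NUMBER_PATTERN.findall(text)


def unverified_stats(draft, sources):
    """List numbers from the draft that appear in none of the source texts."""
    source_stats = set()
    for source in sources:
        source_stats.update(extract_stats(source))
    return [stat for stat in extract_stats(draft) if stat not in source_stats]
```

For example, if a source says "ranked first 9% of the time" and the draft says "19%", the draft's "19%" is returned for review. Numbers that do match still go through the editor; the list just tells them where to look first.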

API rate limits at scale become a real operational constraint faster than most teams expect. If you're running a content operation producing 50+ pieces per month with batch API calls for repurposing and distribution, you will hit rate limits during peak production periods. The practical fix is staggered batch processing — don't run all your API calls at the same time — and having a fallback workflow for when the API is throttled. Teams that don't plan for this end up with production bottlenecks that feel like the AI "stopped working."
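Staggered processing with a fallback can be sketched as below. `RateLimited` is a hypothetical stand-in exception; with the official `openai` Python client you would most likely catch its `RateLimitError` instead. The delay and retry values are assumptions to tune against your own account limits.

```python
import random
import time


class RateLimited(Exception):
    """Hypothetical stand-in for whatever rate-limit error your client raises."""


def run_staggered(jobs, call, delay_seconds=1.0, max_retries=5, sleep=time.sleep):
    """Run jobs one at a time with spacing; retry throttled calls with backoff."""
    results, failed = [], []
    for job in jobs:
        for attempt in range(max_retries):
            try:
                results.append(call(job))
                break
            except RateLimited:
                # Wait 1x, 2x, 4x... the base delay, plus jitter so retries do not align.
                sleep(delay_seconds * (2 ** attempt) + random.uniform(0, delay_seconds))
        else:
            failed.append(job)  # retries exhausted: hand off to the fallback workflow
        sleep(delay_seconds)  # stagger consecutive jobs
    return results, failed
```

The `failed` list is the hook for the fallback workflow: those jobs can be queued for an off-peak rerun or handled manually, rather than silently disappearing.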

The human checkpoint design problem is the one that's hardest to get right. Too many checkpoints and you've negated the time savings. Too few and quality degrades. My current framework: mandatory human review at research verification, final draft editing, and pre-publication QA. Everything else (ideation, brief generation, repurposing, distribution prep) can run with light spot-checking. That ratio (3 mandatory checkpoints, 4 light-touch stages) is what keeps quality high without turning your human editors into AI babysitters.
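Writing the checkpoint framework down as configuration makes it harder for a stage to skip review by accident. In this sketch the stage names follow the framework above, while the 20% spot-check sample rate is an assumed value, not a recommendation.

```python
import random
from enum import Enum


class Review(Enum):
    MANDATORY = "mandatory"
    SPOT_CHECK = "spot_check"


# Stage names follow the framework in the text; adjust them to your own pipeline.
CHECKPOINTS = {
    "ideation": Review.SPOT_CHECK,
    "brief_generation": Review.SPOT_CHECK,
    "research_verification": Review.MANDATORY,
    "final_draft_editing": Review.MANDATORY,
    "repurposing": Review.SPOT_CHECK,
    "distribution_prep": Review.SPOT_CHECK,
    "pre_publication_qa": Review.MANDATORY,
}


def needs_human_review(stage, sample_rate=0.2, rng=random.random):
    """Return True if this item must go to a human before moving on."""
    if CHECKPOINTS[stage] is Review.MANDATORY:
        return True
    return rng() < sample_rate  # spot-check a random sample of light-touch stages
```

An unknown stage name raises a `KeyError`, which is deliberate: a new pipeline stage should not run until someone has decided what level of review it gets.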

Is Your AI Content Actually Ranking?

The AI chatbot content creation conversation always eventually lands here: is this stuff actually working? And the honest answer is: it depends entirely on how you're using it.

The Semrush analysis of 42,000 blog pages found that position 1 results are 8x more likely to be human-written. Purely AI-generated content appeared in the top spot just 9% of the time. Human-written content was there 80% of the time. That number stopped me cold when I first saw it — not because it surprised me, but because 72% of SEOs in the same study said they believed AI content ranks at least as well as human-written content. That's a massive perception gap.

What this means for your AI content workflow: the ecosystem is a production accelerator, not a quality replacement. The teams winning with AI content are using it to handle the structural and mechanical work — research synthesis, outline generation, first drafts, repurposing — while keeping human judgment in the loop for the things that actually drive rankings: original insight, specific examples, editorial voice, and E-E-A-T signals. The teams losing are the ones who've fully automated drafting and publishing without human editing, and are now wondering why their traffic looks like a cliff edge. The case studies from Hastewire and LowFruits document exactly what that failure mode looks like in practice — it's not a slow fade, it's a sudden drop.

For GEO (Generative Engine Optimization) specifically, the OpenAI ecosystem creates an interesting feedback loop: content that gets cited by ChatGPT and Perplexity tends to be content that's structured for extractability — clear definitions, quotable standalone sentences, specific data points. The Assistants API is actually useful here because it can help you structure content to be citation-friendly for AI search engines, not just Google. This is a workflow most content teams haven't built yet, and it's one of the cleaner competitive advantages available right now.

How Do You Calculate ROI from the OpenAI Ecosystem?

Calculating SEO ROI from an AI ecosystem investment requires a more granular framework than most teams use. Here's the audit I run for content operations:

Step 1: Baseline your current time-per-content-type. Not "how long does an article take" but broken down by stage: research (X hours), brief (Y hours), draft (Z hours), edit (A hours), repurposing (B hours). Most teams have never done this. Do it for 5 pieces across different content types before you implement anything.

Step 2: Implement the ecosystem by stage, not all at once. Start with the highest time-cost stage. For most teams, that's research or drafting. Measure time savings after 4 weeks.

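Step 3: Calculate API cost vs. time saved. GPT-4o API pricing is roughly $5 per 1M input tokens and $15 per 1M output tokens (as of mid-2025; rates change, so confirm against the current pricing page). A well-structured article research + drafting workflow uses approximately 15,000-25,000 tokens total. At those rates that works out to somewhere between about $0.08 (15,000 tokens, all input) and $0.38 (25,000 tokens, all output), so call it under $0.50 per article in API costs. If that workflow saves 3 hours of a content writer's time at $50/hour ($150), the ROI math is not complicated.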
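The Step 3 arithmetic is easy to script so it can be rerun when prices or token counts change. The default prices below are the per-1M-token figures quoted in this article, and the 20,000/5,000 input/output split in the usage note is an assumption for illustration.

```python
def api_cost_usd(input_tokens, output_tokens,
                 input_price_per_m=5.0, output_price_per_m=15.0):
    """API cost in dollars, given token counts and prices per 1M tokens."""
    return (input_tokens * input_price_per_m
            + output_tokens * output_price_per_m) / 1_000_000


def net_saving_usd(hours_saved, hourly_rate, api_cost):
    """Value of the writer time saved, minus what the API calls cost."""
    return hours_saved * hourly_rate - api_cost
```

With an assumed split of 20,000 input tokens and 5,000 output tokens, `api_cost_usd(20_000, 5_000)` comes to about $0.18, and `net_saving_usd(3, 50, 0.175)` comes to roughly $149.83 per article.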

Worked example (3-person content team):

- Team: 1 content strategist, 2 writers
- Current output: 12 articles/month
- Current time allocation: ~60% of each writer's time on research + drafting

After implementing GPT-4o API for research synthesis and first drafts, Assistants API for source document analysis, and batch API calls for repurposing:

- Research time per article: from 4 hours to 1.5 hours (62% reduction)
- First draft time: from 5 hours to 2 hours (60% reduction)
- Repurposing: from 2 hours to 20 minutes per article
- Monthly API cost: approximately $45-60
- Monthly time saved: ~52 hours across both writers
- Output increase: from 12 to 18-20 articles/month without adding headcount

That's not a hypothetical. That's the pattern I've seen play out across multiple content operations when the ecosystem is implemented correctly — with proper human checkpoints, not as a full replacement.

The quality delta question is harder to measure but equally important. Track rankings, organic traffic, and engagement metrics (time on page, return visits) for AI-assisted content vs. your pre-AI baseline. If quality is dropping — longer time to rank, lower engagement, higher bounce rates — the workflow needs more human editing, not less. If quality is holding or improving, you've found the right balance.

- Do this if: your team is spending more than 50% of writing time on research and first drafts. The API ROI is immediate and measurable.
- Do this if: you're producing 10+ pieces per month and repurposing is manual. Batch API calls will save 2-3 hours per article.
- Skip this for now if: your team is under 3 people and producing fewer than 8 pieces per month. The setup cost of proper API integration won't pay back quickly enough.

Building topical authority at scale with AI assistance requires getting this infrastructure right first. If you're thinking about the broader content strategy layer, The Complete Guide to Building Topical Authority With AI Content covers how the content strategy layer sits on top of the operational infrastructure we've covered here.

What’s the One Thing You Should Do Today in the OpenAI Ecosystem?

Pull your last 30 days of content production data and time-log it by stage: research, brief, draft, edit, repurpose. If you don't have that data, spend 20 minutes estimating it from memory for your last 5 articles. Find the single highest time-cost stage. That's where you start with the OpenAI ecosystem — not with a full workflow overhaul, not with API integrations, not with agents. One stage, one tool, four weeks of measurement. The teams that try to implement everything at once end up with a fragmented workflow that's slower than what they replaced. The teams that start with one stage and measure it properly end up with a system they can actually trust.
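If the time log lives in a spreadsheet export, finding the highest time-cost stage takes a few lines. The `(article, stage, hours)` row layout and the sample numbers in the usage note are assumptions; substitute your own data.

```python
from collections import defaultdict


def hours_by_stage(time_log):
    """Sum logged hours per stage. time_log is a list of (article, stage, hours)."""
    totals = defaultdict(float)
    for _article, stage, hours in time_log:
        totals[stage] += hours
    return dict(totals)


def highest_cost_stage(time_log):
    """Return the stage with the most total hours logged."""
    totals = hours_by_stage(time_log)
    return max(totals, key=totals.get)
```

For instance, with rows such as `("a1", "research", 4)`, `("a1", "draft", 5)`, `("a2", "research", 4.5)`, and `("a2", "draft", 4)`, the totals are 8.5 hours of research and 9 hours of drafting, so drafting is where the four-week trial starts.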

FAQ

What is the OpenAI ecosystem for content teams?

The OpenAI ecosystem is a full content operating system beyond just ChatGPT, including tools like the GPT-4o API, Assistants API, and agentic workflows. Content teams that map these tools to specific workflow stages can automate 60-70% of their production pipeline. This leads to 3-4x higher content velocity compared to teams using only ChatGPT.

How many OpenAI products do most content teams actually use?

Most content teams only use ChatGPT and sometimes the API, treating the ecosystem like a fancy autocomplete tool. This underutilization leaves 90% of the value on the table, as teams ignore advanced features like Assistants API for structured tasks. Fully leveraging the ecosystem can save a 3-person team 15-20 hours per week.

Why is human-AI collaboration better than pure AI content?

Position 1 search results are 8x more likely to be human-written than purely AI-generated content. The OpenAI ecosystem excels as a collaboration layer, enhancing human work rather than replacing it. This approach maintains quality and avoids issues like declining rankings from over-reliance on AI.

What is the biggest failure when integrating OpenAI tools?

The primary issue isn't hallucination but context fragmentation across long projects, which breaks quality at scale. Teams must map tools to workflow stages to maintain consistency. Proper integration turns the ecosystem into a seamless operating system for content production.

See how Meev maps AI tools to your specific content workflow and builds the human checkpoints that keep quality high at scale.