By Judy Zhou, Head of Content Strategy

Key Takeaways

- The correlation between AI-generated content and Google ranking is just 0.011 (Ahrefs 2025) — AI origin doesn't determine ranking, but missing quality gates and E-E-A-T signals absolutely do.

- HubSpot achieved a 642% increase in AI citations through semantic optimization, not more content volume — structure and specificity matter more than publication frequency.

- Most AI blog writers optimize for traditional SEO signals while ignoring GEO and citation architecture; only a handful of platforms (Meev, Sight AI, Writesonic) include any AI search visibility tracking at all.

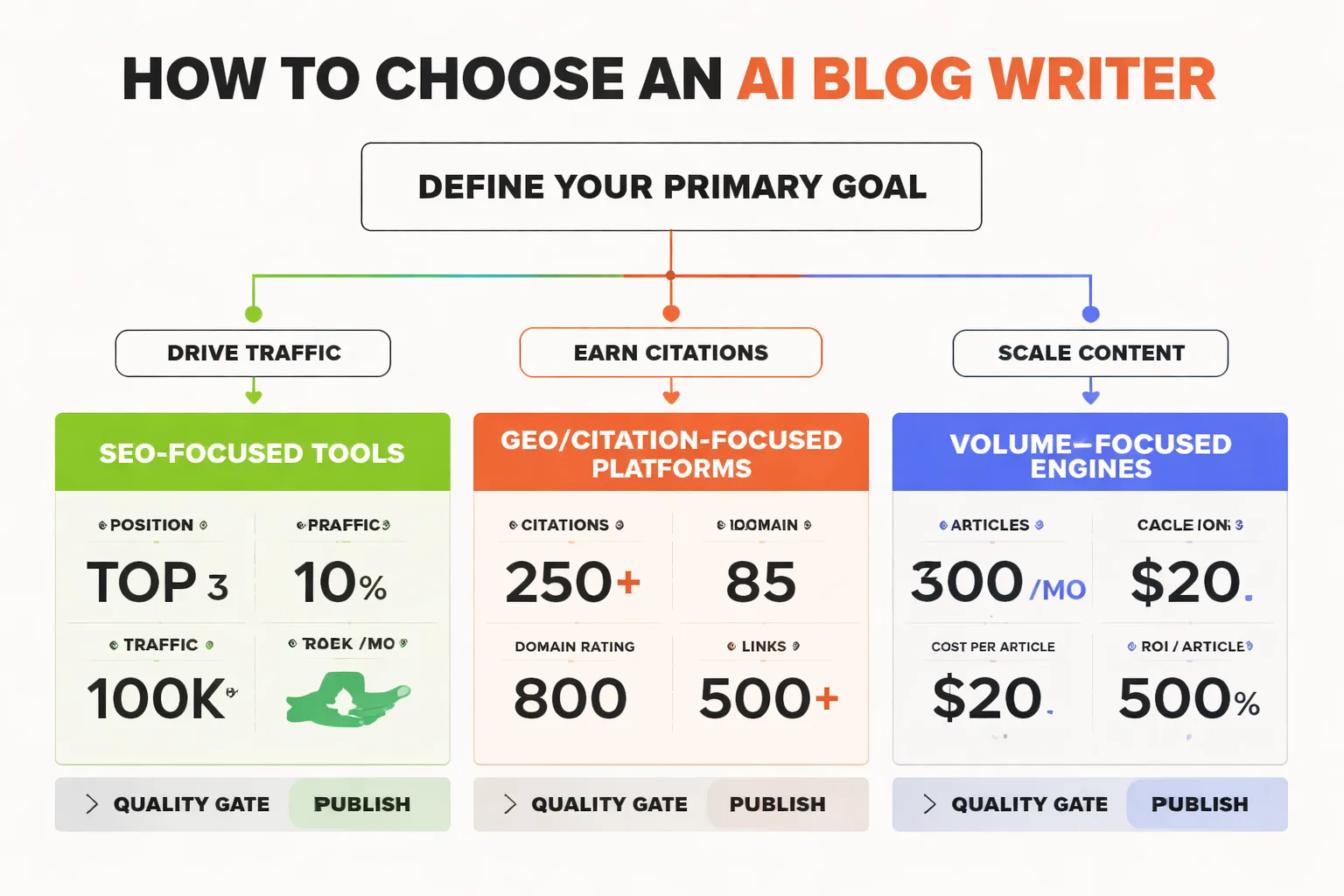

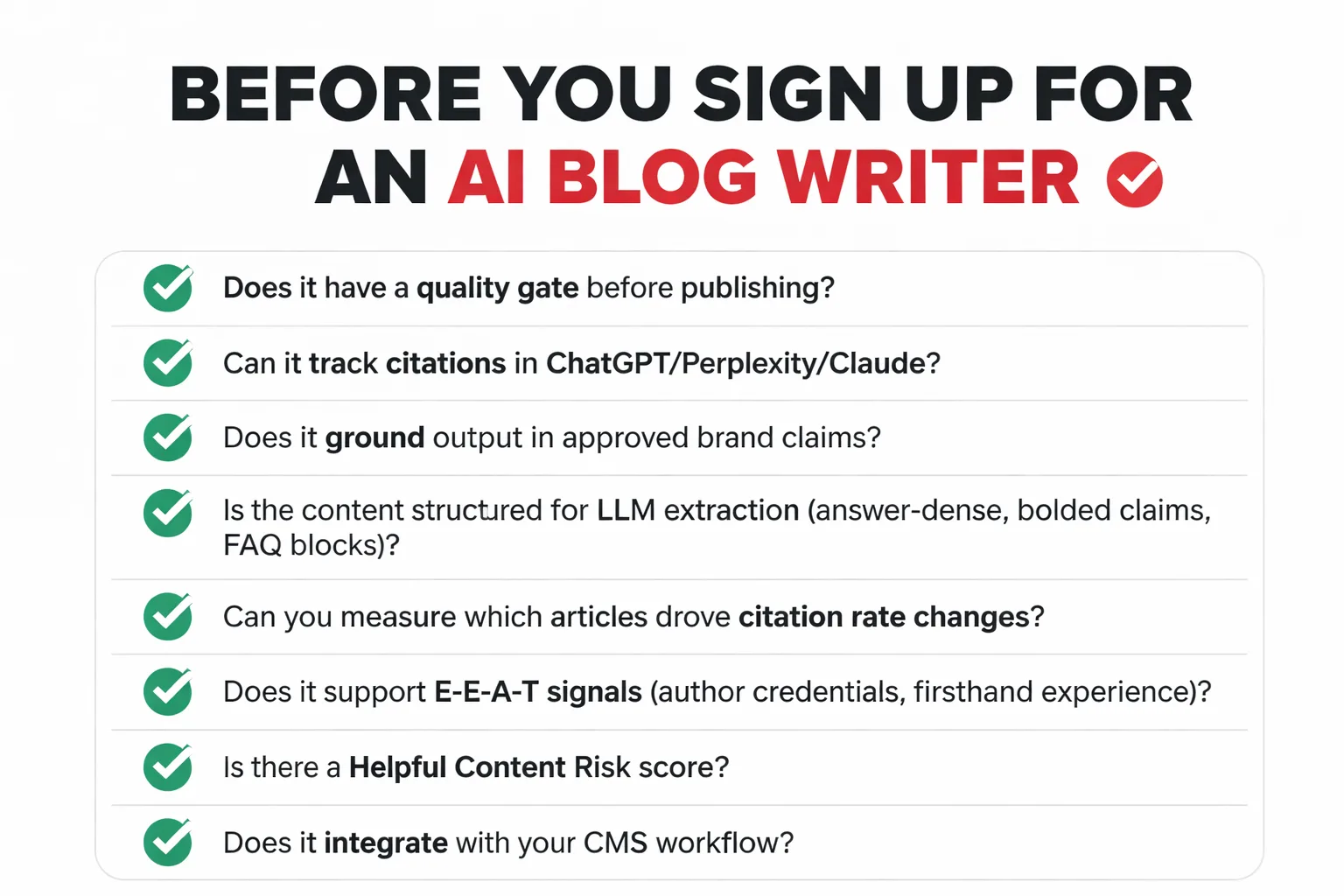

- The right evaluation framework covers four criteria: quality gating before publish, citation-aware content structure, knowledge grounding to prevent hallucination, and a measurement loop that connects articles to citation rate changes.

Your content manager pulls up the analytics dashboard on a Monday morning and something is wrong. Organic traffic is flat, but stranger still, your brand is nowhere in ChatGPT's answers about your industry — even for topics where you've published thirty articles. The AI blog writer your team onboarded six months ago has been producing content at scale, hitting every deadline, and yet your AI search visibility is effectively zero. The posts exist. The citations don't. This is the problem nobody warned you about when you signed that SaaS contract.

Choosing the right AI blog writer in 2026 is no longer just about output speed or cost-per-word. According to an Ahrefs 2025 study, the correlation between AI content share and Google ranking is just 0.011 — essentially zero. What actually determines whether your content performs is quality, E-E-A-T signals, and increasingly, whether LLMs cite you at all. HubSpot documented a 642% increase in AI citations through semantic optimization alone — not more content, smarter content. And yet over 50% of all new web articles are now AI-generated, per a Graphite study of 65,000+ articles, which means the average AI content tool is helping you blend into a sea of sameness.

The framework I'm laying out here is built for teams that want more than a writing assistant. It's for businesses that need their AI content tool to actively support Generative Engine Optimization (GEO), survive Google's Helpful Content System, and produce work that LLMs actually want to cite.

The Hidden Cost of Choosing Wrong

Most teams pick an AI blog writer the same way they pick a SaaS tool for anything else: a free trial, a feature comparison, a pricing tier that fits the budget. That's fine for a project management app. It's a real problem for content.

Here's what I keep seeing in practice: teams optimize for production metrics — articles per month, words per dollar, turnaround time — and completely skip the question of what happens to that content after it publishes. Does it earn citations in Perplexity? Does it surface in Google AI Overviews? Does it have enough information gain to differentiate itself from the five other AI-generated articles covering the same keyword?

Rankability's 2025 case study describes ChatGPT-generated content for a competitive keyword being completely deindexed after an algorithm update. Once it was replaced with human-written content, the page was reindexed within hours and ranked in the top 10. That's not an argument against AI writing. It's an argument against AI writing without editorial oversight and quality gating.

The penalty isn't the automation. It's skipping the review step.

Stop Optimizing for Word Count

The conventional wisdom about AI content tools is wrong. Most buyers evaluate them on generation speed, template variety, and price. Those are the least predictive variables for whether the content will actually perform in 2026.

The criteria that actually matter, in my experience overseeing content operations across hundreds of brands at Meev, break into four categories.

Quality gating. Does the tool have a mechanism to score content before it publishes — or does it just ship whatever the model outputs? This is the single biggest differentiator between tools that survive algorithm updates and tools that don't. Look for content scoring against E-E-A-T dimensions, not just readability or keyword density.

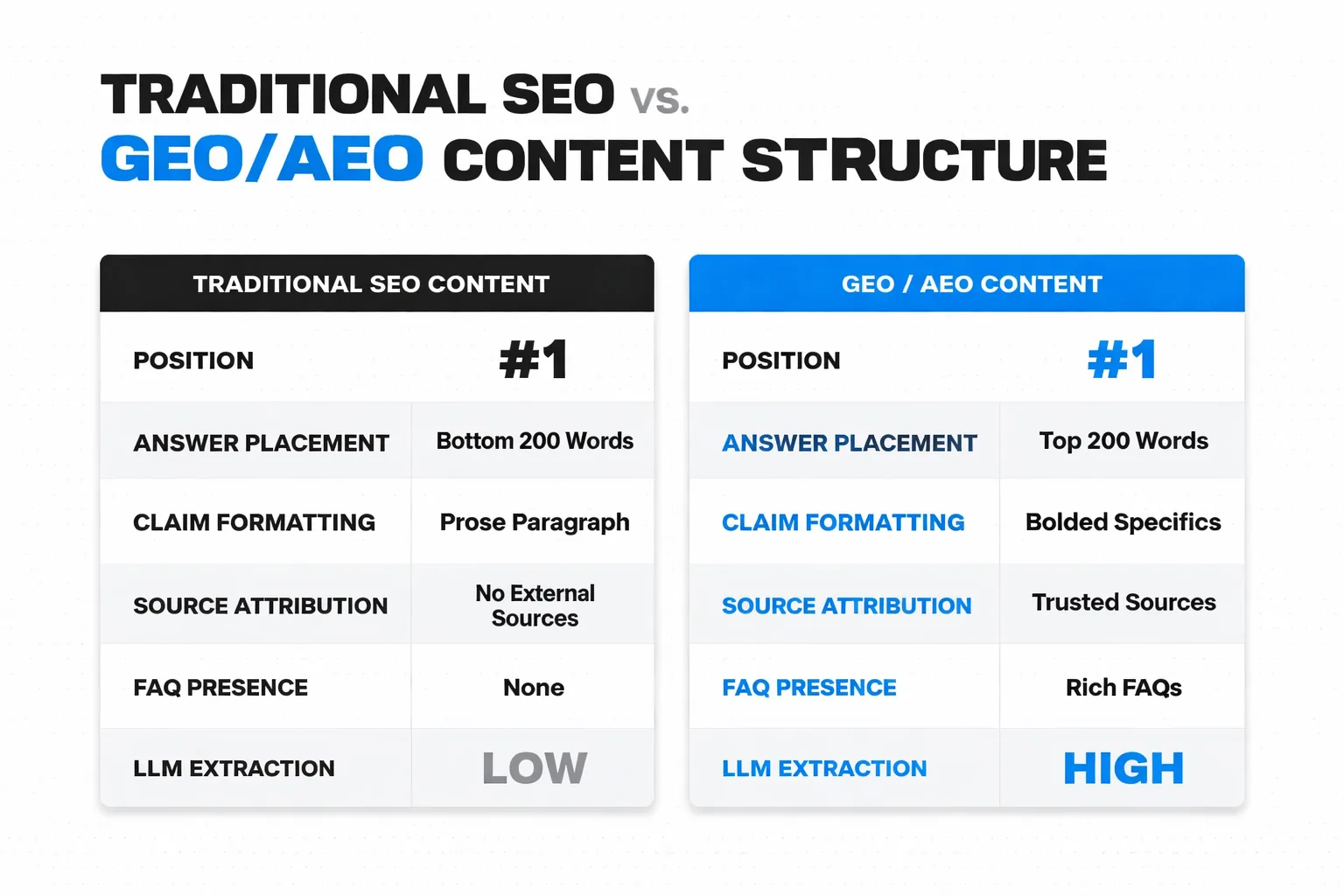

Citation architecture. Is the content structured to be extracted by LLMs? This means direct answers near the top of articles, bolded claims with specific numbers, named sources, and FAQ blocks that mirror how users query ChatGPT or Perplexity. Most AI writers don't think about this at all. They optimize for Google's traditional ranking signals while their target audience's search behavior has already shifted toward ChatGPT and Perplexity.

Knowledge grounding. Does the tool let you enforce approved claims, brand facts, and proprietary data — or does it hallucinate freely and leave fact-checking to whoever reviews the draft? For regulated industries or brands with specific positioning, this is non-negotiable. An AI writer that confidently invents statistics is a liability, not an asset.

Measurement loop. Can you trace a specific article back to a change in citation rate or AI search visibility? Without this, you're flying blind. You'll keep producing content without knowing whether any of it is actually moving the needle on the metrics that matter in 2026.
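To make the knowledge grounding criterion above concrete, here is a minimal sketch of one way a review step could flag numeric claims that are not on an approved list. Everything in it is an assumption for illustration: the `APPROVED_FIGURES` set, the `unapproved_figures` helper, and the regex-based matching are hypothetical and do not describe how any tool in this article works.

```python
from __future__ import annotations

import re

# Hypothetical approved figures a brand might keep in its knowledge base.
APPROVED_FIGURES = {"0.011", "642%", "50%"}

# Matches integers, decimals, thousands-separated numbers, and percentages,
# e.g. 642%, 0.011, 65,000.
NUMBER_PATTERN = re.compile(r"\d+(?:,\d{3})*(?:\.\d+)?%?")


def unapproved_figures(draft: str, approved: set[str]) -> list[str]:
    """Return numeric claims in the draft that are not in the approved set."""
    flagged: list[str] = []
    for figure in NUMBER_PATTERN.findall(draft):
        if figure not in approved and figure not in flagged:
            flagged.append(figure)
    return flagged


draft = "AI citations rose 642% while correlation stayed at 0.011. Traffic grew 87%."
flagged = unapproved_figures(draft, APPROVED_FIGURES)
print(flagged)
```

A check like this only catches figures. It would not catch an invented quote or an unsupported qualitative claim, so it complements a human reviewer rather than replacing one.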

How Does the Google Helpful Content System Actually Work?

Google's Helpful Content System evaluates whether content was made primarily for people or primarily for search engines. The distinction sounds simple. In practice it's where most AI content operations quietly fail.

The Ahrefs study found that AI origin alone doesn't predict ranking, but that doesn't mean AI content is safe by default. It means Google isn't running an AI detector and penalizing whatever it flags. It's evaluating quality signals: depth of coverage, specificity of claims, evidence of firsthand experience, and whether the content answers what the user actually needed. AI-generated content that scores poorly on those dimensions gets penalized. AI-generated content that scores well doesn't. The model that wrote it is irrelevant.

What this means practically: the AI blog writer you choose needs to produce content that passes quality thresholds a human editor would recognize, not just content that clears a plagiarism check or hits a keyword density target. The tools that will hurt you are the ones that optimize for surface signals — word count, heading structure, keyword placement — without building in any mechanism to evaluate whether the content is actually good. I've watched teams publish hundreds of articles using tools like this and then wonder why their traffic collapsed after a core update. The answer is almost always the same: they automated production but left quality to chance.

The teams that came through Google's March 2024 core update intact (the update that folded the helpful content system into core ranking) had one thing in common. They had a human editorial layer. Not necessarily a human writer, but a human reviewer enforcing topical depth, verifying claims, and making sure each article added something the existing top results didn't already cover. That's the information gain strategy that separates content that compounds over time from content that decays.

Why AI Search Visibility Changes the Evaluation Criteria

Here's the contrarian take that most AI blog writer comparisons completely ignore: if your audience is increasingly finding answers through ChatGPT, Perplexity, or Claude rather than clicking through Google results, then optimizing purely for traditional SEO rankings is optimizing for a shrinking share of your actual discovery surface.

I've been watching Perplexity citation patterns closely, and the pattern is consistent. The same 2-3 sources surface across queries in a given niche, and they're not always the highest-authority domains by traditional SEO metrics. They're the sources that answered the question most directly, with specific claims that could be extracted and attributed. Community content — Reddit threads, Quora answers — punches well above its weight here because LLMs were trained on conversational content at massive scale, and that training has baked in a different trust signal than editorial authority.

This has a direct implication for which AI blog writer you choose. A tool that generates smooth, brand-safe prose without any structural awareness of how LLMs extract answers is going to produce content that ranks fine in 2023-era Google but gets ignored by every AI search engine your audience is now using. You need a tool that understands answer-dense structure: direct responses early, bolded claims, named sources, FAQ architecture. That's not a nice-to-have feature. It's the difference between AI search visibility and AI search invisibility.
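As a rough illustration of what "structural awareness" could mean in practice, the sketch below runs a few heuristic checks on a markdown draft: a short opening paragraph, bolded claims that contain a number, an FAQ heading, and question-style lines. The function name `structure_report`, the 60-word opening limit, and the regexes are all assumptions I picked for the example. It does not check for named sources, and it is not a description of any vendor's scoring.

```python
import re

# A bold span (**...**) that contains at least one digit.
BOLD_WITH_NUMBER = re.compile(r"\*\*[^*]*\d[^*]*\*\*")

# A line that starts (optionally after '#' marks) with an FAQ-style heading.
FAQ_HEADING = re.compile(
    r"^\s*#*\s*(frequently asked questions|faq)\b",
    re.IGNORECASE | re.MULTILINE,
)


def structure_report(markdown: str, max_opening_words: int = 60) -> dict:
    """Heuristic checks for answer-dense structure in a markdown draft."""
    paragraphs = [p.strip() for p in markdown.split("\n\n") if p.strip()]
    opening_words = len(paragraphs[0].split()) if paragraphs else 0
    return {
        "short_opening_answer": 0 < opening_words <= max_opening_words,
        "bolded_numeric_claims": len(BOLD_WITH_NUMBER.findall(markdown)),
        "has_faq_block": bool(FAQ_HEADING.search(markdown)),
        "question_lines": sum(
            1 for line in markdown.splitlines() if line.strip().endswith("?")
        ),
    }


sample = """AI origin alone does not predict ranking; the reported correlation is **0.011**.

Longer discussion goes here.

Frequently Asked Questions

Does using an AI blog writer hurt my Google rankings?
"""

report = structure_report(sample)
print(report)
```

Checks like these say nothing about whether the answer is correct or useful. They only confirm the draft has the shape described above.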

When Should You Prioritize Citation Tracking Over Content Generation?

This is the question most teams get backwards. They buy a content generation tool first, produce hundreds of articles, and then — six months later — try to bolt on some kind of measurement to figure out whether any of it is working for AI search visibility.

The right sequence is the opposite. Before you scale production, you need to know what your current citation baseline looks like across the LLMs your audience uses. Are you appearing in Claude's answers? Are you cited in Perplexity when someone asks a question you've written about? If you don't have that baseline, you have no way to know whether the content you're producing is moving the needle or just adding to the noise.
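A baseline does not need to be sophisticated. The sketch below assumes you have a simple log of polled queries per LLM and whether your brand was cited in each answer, then computes a citation rate per LLM and the change between two measurement windows. The `POLLS` data, the record layout, and both function names are hypothetical.

```python
from collections import defaultdict

# Hypothetical poll log: (llm, query, brand_was_cited). Real records would come
# from whatever tracking platform or manual checks you use.
POLLS = [
    ("ChatGPT", "best ai blog writer", True),
    ("ChatGPT", "what is geo", False),
    ("Perplexity", "best ai blog writer", True),
    ("Perplexity", "what is geo", True),
    ("Claude", "best ai blog writer", False),
    ("Claude", "what is geo", False),
]


def citation_rate_by_llm(records):
    """Share of polled answers per LLM that cited the brand."""
    totals = defaultdict(int)
    cited = defaultdict(int)
    for llm, _query, was_cited in records:
        totals[llm] += 1
        if was_cited:
            cited[llm] += 1
    return {llm: cited[llm] / totals[llm] for llm in totals}


def rate_change(before, after):
    """Per-LLM change in citation rate between two measurement windows."""
    return {llm: round(after.get(llm, 0.0) - before[llm], 3) for llm in before}


baseline = citation_rate_by_llm(POLLS)
print(baseline)
```

Rerunning the same query set after a publishing push and passing both results to `rate_change` gives a per-LLM before-and-after comparison, provided the query set and polling cadence stay fixed.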

I've stopped trusting any single AI visibility platform as a source of truth after watching teams get burned by platform-level discrepancies throughout 2026. One tool shows strong citation rates; another shows near-zero presence for the same brand. Neither is necessarily wrong — they're polling different LLMs, at different cadences, with different query sets. The fix is cross-referencing at least two platforms and documenting exactly which LLMs each one is tracking before you present any citation data to a stakeholder who might act on it. For a detailed breakdown of how platforms differ, the Meev vs Profound comparison is worth reading before you commit to any measurement stack.
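The cross-referencing step can be sketched as a small comparison that records which LLMs each platform polls and flags large gaps before the numbers reach a stakeholder. The `PlatformReport` type, the example figures, and the 0.10 tolerance are illustrative assumptions, not values from any real platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlatformReport:
    name: str
    llms_tracked: frozenset
    citation_rate: float  # share of polled queries citing the brand, 0.0 to 1.0


def cross_reference(a: PlatformReport, b: PlatformReport, tolerance: float = 0.10) -> dict:
    """Compare two visibility reports and flag gaps larger than the tolerance."""
    gap = abs(a.citation_rate - b.citation_rate)
    return {
        "shared_llms": sorted(a.llms_tracked & b.llms_tracked),
        "only_" + a.name: sorted(a.llms_tracked - b.llms_tracked),
        "only_" + b.name: sorted(b.llms_tracked - a.llms_tracked),
        "rate_gap": round(gap, 3),
        "needs_review": gap > tolerance,
    }


# Hypothetical reports for the same brand from two tracking tools.
tool_a = PlatformReport("tool_a", frozenset({"ChatGPT", "Claude", "Perplexity"}), 0.42)
tool_b = PlatformReport("tool_b", frozenset({"ChatGPT", "Perplexity", "Google AI Overviews"}), 0.05)

comparison = cross_reference(tool_a, tool_b)
print(comparison)
```

When `needs_review` is true, the `only_` fields are the first place to look, since a gap often traces back to the two tools polling different LLMs.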

If your primary goal right now is scaling content production and you're not yet thinking about GEO, a dedicated content generation tool makes sense. If you're already publishing consistently and your problem is that the content isn't earning citations or surfacing in AI answers, you need a platform that closes the loop between what you publish and how LLMs respond to it.

The AI Blog Writer Comparison: 11 Tools for 2026

The table below covers the dimensions that actually matter for choosing an AI blog writer in 2026. I've weighted it toward GEO and citation features because that's where the gap between tools is widest and where the stakes are highest for businesses that depend on AI search visibility.

| Tool | Best For | AI Citation Tracking | Quality Gating | GEO/AEO Features | Starting Price |
| --- | --- | --- | --- | --- | --- |
| Meev | AI citation tracking + quality-gated publishing | Yes (5 LLMs + Google AIO) | 12-dimension matrix + HCS risk score | Yes (full GEO stack) | $49/mo |
| Sight AI | Blog content + AI visibility | Yes (ChatGPT, Claude, Perplexity) | Multi-agent workflow | Partial | $29/mo |
| Writesonic | Small business content + GEO tracking | Yes (ChatGPT, Perplexity, Google AIO) | Article Writer 5.0 | Partial | $49/mo |
| Jasper | Enterprise brand voice | No | Brand voice training | No | $59/seat/mo |
| Surfer SEO | SEO content optimization | No | Content Score vs. SERPs | No (SEO only) | $89/mo |
| Copy.ai | Multi-format writing | No | Brand Voice feature | No | $49/mo |
| Frase | Budget SEO briefs | No | SERP-based scoring | No | $12.66/mo |
| GrowthBar | Bloggers + keyword research | No | Basic | No | $29/mo |
| Rytr | Short-form, budget | No | None | No | $7.50/mo |
| Grammarly | Editing + polish | No | Grammar/clarity | No | $12/mo |
| Writer | Enterprise compliance | No | Policy guardrails | No | $39/mo |

1. Meev — Best for AI citation tracking plus quality-gated publishing

Best for: Teams that want AI citation tracking plus a content engine they can trust to publish — not just a dashboard that surfaces problems.

Meev is the only platform on this list that treats content generation and citation measurement as a single closed loop rather than two separate problems you solve with two separate tools. Every article passes through a 12-dimension Quality Matrix and a Helpful Content Risk score before it ships — the publish gate is 70/100 on both. That's not a soft recommendation from an AI; it's a hard threshold. Articles that don't clear it don't publish.
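The gate logic described above is simple to express. The sketch below is not Meev's implementation. It assumes both scores are on a 0 to 100 scale where higher is better and that a score of exactly 70 passes, and the function and parameter names are hypothetical.

```python
PUBLISH_GATE = 70  # threshold out of 100, as stated in the paragraph above


def clears_publish_gate(
    quality_score: float,
    hcs_risk_score: float,
    gate: float = PUBLISH_GATE,
) -> bool:
    """Return True only if both scores meet the gate.

    Assumption: both scores are scaled so that higher is better and the
    threshold is inclusive.
    """
    return quality_score >= gate and hcs_risk_score >= gate


print(clears_publish_gate(82, 75))
print(clears_publish_gate(82, 69))
```

The point of a hard gate is that a strong score on one dimension cannot compensate for a failing score on the other.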

Key features:

- Citation tracking across ChatGPT, Claude, Gemini, Perplexity, and Grok on a rolling cadence; Google AI Overviews and AI Mode refresh daily
- 12-dimension Quality Matrix plus Helpful Content Risk score with a 70/100 publish gate on both
- Knowledge Base enforcement that grounds articles in your approved claims rather than AI-generated assumptions
- Autopilot topic pool with gap detection from competitor citation patterns

Pricing: 7-day free trial (no credit card, no auto-charge). Lite $49/mo, Starter $99/mo, Pro $269/mo, Agency $599/mo. 20% annual discount. Cancel anytime; hard-cap quotas with no overage fees.

For teams already using other AI writing tools and wondering why citations aren't materializing, the Meev vs Sight AI comparison breaks down exactly where the gap tends to appear in quality-gated workflows.

2. Sight AI — Best for content teams tracking AI brand mentions

Best for: Content teams and growth marketers who want blog content optimized for both traditional search engines and AI-powered answer engines like ChatGPT and Perplexity.

Sight AI takes a multi-agent approach to content production — instead of routing everything through a single prompt, it assigns specialized agents to research, outlining, writing, and optimization. The result is more structured output than a generic AI writer, and the AI visibility tracking layer means you can actually see whether the content is earning brand mentions in LLM responses.

Key features:

- 13+ specialized writing agents for research, outlining, writing, and optimization
- AI visibility tracking across ChatGPT, Claude, and Perplexity for brand mention monitoring
- Automated website indexing via IndexNow for faster content discovery
- Multi-agent architecture that routes content through purpose-built agents rather than a single prompt

Pricing: Paid plans start at $29/month; tiered plans scale with content volume and AI visibility tracking features.

The multi-agent workflow is genuinely more sophisticated than most competitors at this price point, but it comes with a steeper learning curve. Teams that want to get content out the door quickly without configuring agent pipelines may find the setup friction frustrating early on.

3. Writesonic — Best for small businesses needing GEO tracking on a budget

Best for: Small business owners, solo marketers, and early-stage startups that need broad content coverage with factual accuracy and AI search visibility without enterprise pricing.

Writesonic's Article Writer 5.0 pulls live Google Search data during generation, which meaningfully reduces the hallucination problem that plagues most AI writers working from static training data. The GEO tracking layer monitors brand appearance in ChatGPT, Perplexity, and Google AI Overviews — useful for teams starting to think about AI search visibility without committing to a dedicated measurement platform.

Key features:

- Article Writer 5.0 with live Google Search data integration to reduce AI hallucinations
- GEO tracking that monitors brand appearance in ChatGPT, Perplexity, and Google AI Overviews
- Chatsonic conversational AI layer for research, brainstorming, and content refinement
- WordPress and Zapier publishing integrations for streamlined content distribution

Pricing: Lite plan from $49/month for SEO and content generation; Professional from $249/month includes GEO tracking features; free tier available.

The credit-based system can be confusing to budget around, and the interface is busier than simpler alternatives. But for a small team that wants both content generation and a first layer of AI visibility measurement without paying for two separate tools, Writesonic is one of the more practical options at this price range.

4. Jasper — Best for enterprise brand voice consistency

Best for: Marketing teams of 3 or more at mid-size to enterprise companies that need consistent brand voice enforced across multiple writers, channels, and campaigns.

Jasper's core strength is brand governance at scale. The Brand Voice Training system ingests your guidelines and sample content, then enforces consistent tone across all output — which matters enormously for enterprises where multiple writers are producing content simultaneously across different channels. The 100+ marketing-specific agents and Content Pipelines make it genuinely capable for campaign-level production.

Key features:

- Brand Voice Training that ingests guidelines and sample content to enforce consistent tone across all output
- 100+ marketing-specific agents including Canvas workspace for campaign management
- Content Pipelines for automating entire content campaigns
- SOC 2 compliance and role-based permissions for enterprise content governance

Pricing: Pro plan starts at $59/seat/month billed annually or $69/seat/month billed monthly; Business plan is custom pricing with a 12-month minimum; 7-day free trial available.

Jasper is genuinely excellent at what it does, but what it does is brand-voice-consistent content production — not GEO, not citation building, not AI search visibility. If those are your priorities, you'll need to layer in additional tooling. Teams evaluating Jasper against alternatives with more GEO focus should read the Meev vs Jasper comparison before committing to the per-seat pricing.

5. Surfer SEO — Best for SEO managers closing the ranking gap

Best for: SEO managers, content strategists, and agencies that need data-driven content optimization and want to close the gap between AI-generated drafts and top-ranking pages.

Surfer's real-time Content Score benchmarks your draft against the top-ranking pages for your target keyword as you write. It's the most direct implementation of the "write to the SERP" philosophy, and for teams whose primary success metric is organic ranking, it's genuinely effective. The Topical Map feature helps build the kind of topical authority clusters that support long-term ranking performance.

Key features:

- Real-time Content Score that benchmarks drafts against top-ranking SERP competitors
- AI-powered Surfer AI article writer that generates NLP-optimized long-form drafts
- Topical Map feature for building comprehensive topical authority clusters
- Direct Jasper integration for combined brand-voice and SEO optimization workflow

Pricing: Essential plan starts at $89/month; Scale plan at $129/month; Scale AI at $219/month; all billed annually. No permanent free plan.

Surfer is built for traditional search, and it's honest about that. There's no AI citation tracking, no GEO features, and no mechanism to evaluate whether your content is structured for LLM extraction. For teams whose audience is still primarily discovering content through Google organic results, that's fine. For teams whose audience has shifted toward AI-powered search, Surfer alone won't cover the gap.


6. Copy.ai — Best for multi-format writing across teams

Best for: Freelancers, small business owners, and marketing teams who need powerful AI writing assistance across multiple content formats without the complexity or cost of enterprise platforms.

Copy.ai's 90+ templates cover more content formats than almost any other tool on this list — blogs, emails, social posts, product descriptions, ad copy. The Infobase feature lets teams store and reuse company-specific information, which reduces the hallucination problem for brand-specific claims. For teams that need a versatile writing assistant rather than a specialized content engine, it's a solid option. Teams comparing it directly against Meev's feature set should check the Meev vs Copy.ai breakdown.

Key features:

- 90+ content templates covering blogs, emails, social posts, product descriptions, and ad copy
- Brand Voice feature for maintaining consistent tone across all generated content
- Infobase for storing and reusing company-specific information across projects
- Collaborative chat interface designed for team-based content workflows

Pricing: Free tier with 2,000 words/month; Pro plan starts at $49/month; Team and Enterprise plans available at custom pricing.

Copy.ai's SEO features are thin compared to dedicated tools like Surfer or Frase, and there's no AI citation tracking or GEO capability. The free tier runs out quickly for any team publishing with real frequency. But as a versatile multi-format writing assistant for teams that need breadth over depth, it's well-priced and genuinely easy to use.

7. Frase — Best for budget-conscious SEO brief builders

Best for: Small businesses and content managers who want to improve SEO performance on a budget, especially teams managing external writers who need structured, research-backed briefs.

Frase's SERP-based content brief builder is its real differentiator. It analyzes the top-ranking pages for any target keyword and structures a brief around the gaps and angles those pages are covering — which makes it particularly useful for teams managing freelance or agency writers who need clear direction before they start writing.

Key features:

- SERP-based content brief builder that analyzes top-ranking pages for any target keyword
- Long-form AI Writer that generates content informed by competitive NLP analysis
- Content scoring against live competitor data for gap identification
- Document sharing and project status tools for managing external writer workflows

Pricing: Solo plan starts at $12.66/month for 4 SEO articles; Basic plan at $38.25/month for 30 optimized articles; unlimited AI generation (without SEO tools) at $35/month; 5-day trial for $1.

Frase is genuinely good at what it does, and the pricing is hard to argue with at the entry tier. The limitations are real though: no AI citation tracking, no GEO features, and SEO tools that are less comprehensive than Surfer. It's a strong choice for budget-conscious teams focused on traditional SEO content, less so for teams building toward AI search visibility.

8. GrowthBar — Best for individual bloggers combining keyword research and drafting

Best for: Individual bloggers, website owners, and marketers who want keyword research and AI drafting in one place without a steep learning curve or enterprise-level pricing.

GrowthBar packs keyword research, AI outline generation, and long-form drafting into a single lightweight interface that doesn't require much setup. The Brand Voice tool learns your writing style over time, and the on-page SEO audit feature is useful for improving existing content rather than just creating new pieces.

Key features:

- Blog Topic Generator and AI Blog Outline Writer for rapid content ideation
- Integrated keyword analysis visible throughout the writing and editing process
- On-page SEO audit tool for analyzing and improving existing published content
- Brand Voice tool that learns and applies your brand's writing style

Pricing: Standard plan starts at $29/month for 2 user accounts, 25 articles, 500 paragraphs, and SEO tools; 14-day free trial available; no permanent free plan.

For an individual blogger or small site owner who needs both keyword research and AI drafting without managing two separate subscriptions, GrowthBar is a practical choice. The article limits on lower tiers become restrictive quickly for teams publishing at any real volume, and the SEO tools aren't deep enough to replace a dedicated platform for serious content operations.

9. Rytr — Best for freelancers needing fast, affordable short-form copy

Best for: Freelancers, individual bloggers, and small businesses that need a fast, affordable AI assistant for everyday short-form writing tasks without complex workflows.

Rytr is the simplest tool on this list, and that's intentional. Forty-plus use cases, twenty-plus tone options, a clean interface, and pricing that starts at $7.50/month. It's built for speed and accessibility, not depth.

Key features:

- 40+ pre-built use cases including social posts, emails, ads, and landing pages
- 20+ tone-of-voice options for matching brand personality across content types
- Simple, no-frills interface with minimal setup required
- Saver plan for occasional users who need more than the free tier without full commitment

Pricing: Free plan with 10,000 characters/month; Unlimited plan at $7.50/month (billed annually); Premium plan at $24.16/month (billed annually).

Rytr is honest about what it is: a fast, cheap writing assistant for short-form tasks. There are no AI agents, no SEO tools, no citation tracking, and no GEO features. For long-form blog production or any content strategy with AI search visibility goals, it's the wrong tool. But for a freelancer who needs to draft social posts, email subject lines, or short ad copy quickly, the price-to-output ratio is hard to beat.

10. Grammarly — Best for editing and polishing any AI-generated content

Best for: Anyone who writes emails, documents, blog posts, or business communications and wants an AI-powered editing safety net with light generative capabilities built in.

Grammarly is the only tool on this list that's primarily an editing layer rather than a content generator. The real-time grammar, clarity, tone, and engagement suggestions work across browser, desktop, and mobile — which means it integrates into whatever writing environment you're already using. The GrammarlyGO generative features are capable for light drafting and rephrasing tasks.

Key features:

- Real-time grammar, clarity, tone, and engagement suggestions across browser, desktop, and mobile
- GrammarlyGO generative AI for rephrasing, expanding, and drafting content from prompts
- Eight specialized AI agents including Reader Reactions for predicting audience takeaways
- Citation Finder for automatically formatting sources in business and academic content

Pricing: Free plan available; Pro plan at $12/month (billed annually); Business plan at $15/member/month (billed annually).

Grammarly's AI generation is capped at 2,000 prompts per month on Pro, which makes it a complement to a primary content tool rather than a replacement. For teams already using an AI blog writer and looking for an editing layer that catches clarity and tone issues before content publishes, it's an excellent addition at a low cost.

11. Writer — Best for enterprises in regulated industries

Best for: Enterprises with 50+ employees in regulated industries like financial services or healthcare that need AI writing with strict brand governance, data privacy, and compliance requirements.

Writer's differentiator is the compliance layer. The platform trains on your internal brand guidelines, terminology, and style guides, then flags unsupported claims and off-brand language in real time. For enterprises in regulated industries where an AI-generated factual error carries legal or reputational risk, that guardrail is genuinely valuable.

Key features:

- Company-specific AI model training on internal brand guidelines, terminology, and style guides
- Legal compliance and policy guardrails that flag unsupported claims and off-brand language
- Role-based permissions and approval workflows for enterprise content governance
- AI agent platform with purpose-built agents for marketing, support, and operations content

Pricing: Team plan starts at $39/month; Enterprise plan is custom pricing. No consumer or solo-creator plan available.

Writer is built for enterprises, and the pricing and minimum commitment reflect that. There's no path in for small teams or individual creators, and the compliance focus means it's not optimized for the kind of high-velocity content production that most content marketing teams need. For the specific use case it targets — regulated enterprise content at scale — it's one of the most serious options available.

Making the Right Choice for Your Content Strategy

The right AI blog writer for your business in 2026 depends on one question more than any other: what does success look like six months after you start publishing?

If success is organic traffic from traditional Google search, tools like Surfer SEO and Frase are built for that outcome. They score content against live SERPs and optimize for ranking signals that Google's traditional algorithm rewards.

If success is AI search visibility — appearing in ChatGPT answers, getting cited by Perplexity, surfacing in Google AI Overviews — you need a different stack entirely. You need content structured for LLM extraction, a quality gate that enforces E-E-A-T signals before anything publishes, and a measurement layer that tells you whether your citation rate is actually moving. That combination doesn't exist in most AI content tools. It's the specific gap that platforms like Meev are built to close.

If success is brand voice consistency across a large team, Jasper or Writer will serve you better than anything else on this list.

The mistake I see most often: teams buy a content generation tool when what they actually need is a content system. Generation is one piece. Quality gating, citation architecture, and measurement are the other three. Any tool that only solves one of those four problems is going to leave you with content that exists but doesn't perform.

Frequently Asked Questions

What's the difference between an AI blog writer and an AI search visibility platform?

An AI blog writer generates content. An AI search visibility platform measures whether that content earns citations in LLM-powered search engines like ChatGPT, Perplexity, and Google AI Overviews, and ideally helps you close the gap when it doesn't. Most AI blog writers have no visibility tracking at all. A few, like Writesonic and Sight AI, include basic GEO monitoring. Of the tools covered here, Meev is the only one that integrates quality-gated content generation with citation tracking across five LLMs as a unified system.

Does using an AI blog writer hurt my Google rankings?

Not inherently. The Ahrefs 2025 study found the correlation between AI content share and ranking is just 0.011. But AI content without editorial oversight, E-E-A-T signals, or quality gating can absolutely get penalized — not because it's AI-generated, but because it's shallow, unverified, or fails Google's Helpful Content System thresholds. The automation isn't the liability. Skipping the quality review is.

How many LLMs should my AI visibility tool track?

At minimum, track ChatGPT, Perplexity, and Google AI Overviews — those three represent the majority of AI-powered search queries for most B2B audiences. Claude and Gemini matter depending on your audience's tool preferences. The important thing is to document exactly which LLMs your tracking tool polls, because discrepancies between platforms are common and can lead to misleading conclusions about your actual citation performance.

What is an information gain strategy in the context of AI content?

Information gain means your content adds something the existing top results don't already cover: original data, a specific example, a named case study, a direct answer to a question that's currently buried in paragraph five of every competing article. LLMs preferentially cite sources that answer questions directly and specifically. Content that restates what's already everywhere doesn't earn citations. Content that says something new, or says something familiar with more precision, does.

Can I use multiple AI writing tools at once?

Yes, and for most mature content operations, you probably should. A common stack: a content generation tool for drafts, a quality scoring layer for pre-publish review, and a citation tracking platform for post-publish measurement. The risk is that adding tools without a clear workflow creates gaps where quality checks get skipped. The cleaner solution is a platform that handles multiple stages of that workflow natively — but if you're assembling tools, be explicit about who owns each stage of the process.

About the Author

Judy Zhou, Head of Content Strategy

Judy Zhou leads content strategy at Meev, where she oversees AI-driven content research and publishing for hundreds of brands. With a background in SEO and editorial operations, she focuses on building content systems that rank on Google, get cited by AI search engines, and drive measurable business results.

See exactly which LLMs are citing your content — and which articles are driving the lift — with Meev's citation tracking and quality-gated publishing platform.