By Judy Zhou, Head of Content Strategy

Key Takeaways

- Publishing AI content at volume without quality controls causes measurable SEO damage — one documented case of 100 AI posts per hour with zero human editing triggered ranking penalties rather than gains.

- 88% of ChatGPT citations come through the general search index, but 80% of those cited pages don't rank in Google's top 100 for the original query — meaning traditional keyword ranking alone is insufficient for AI citation visibility.

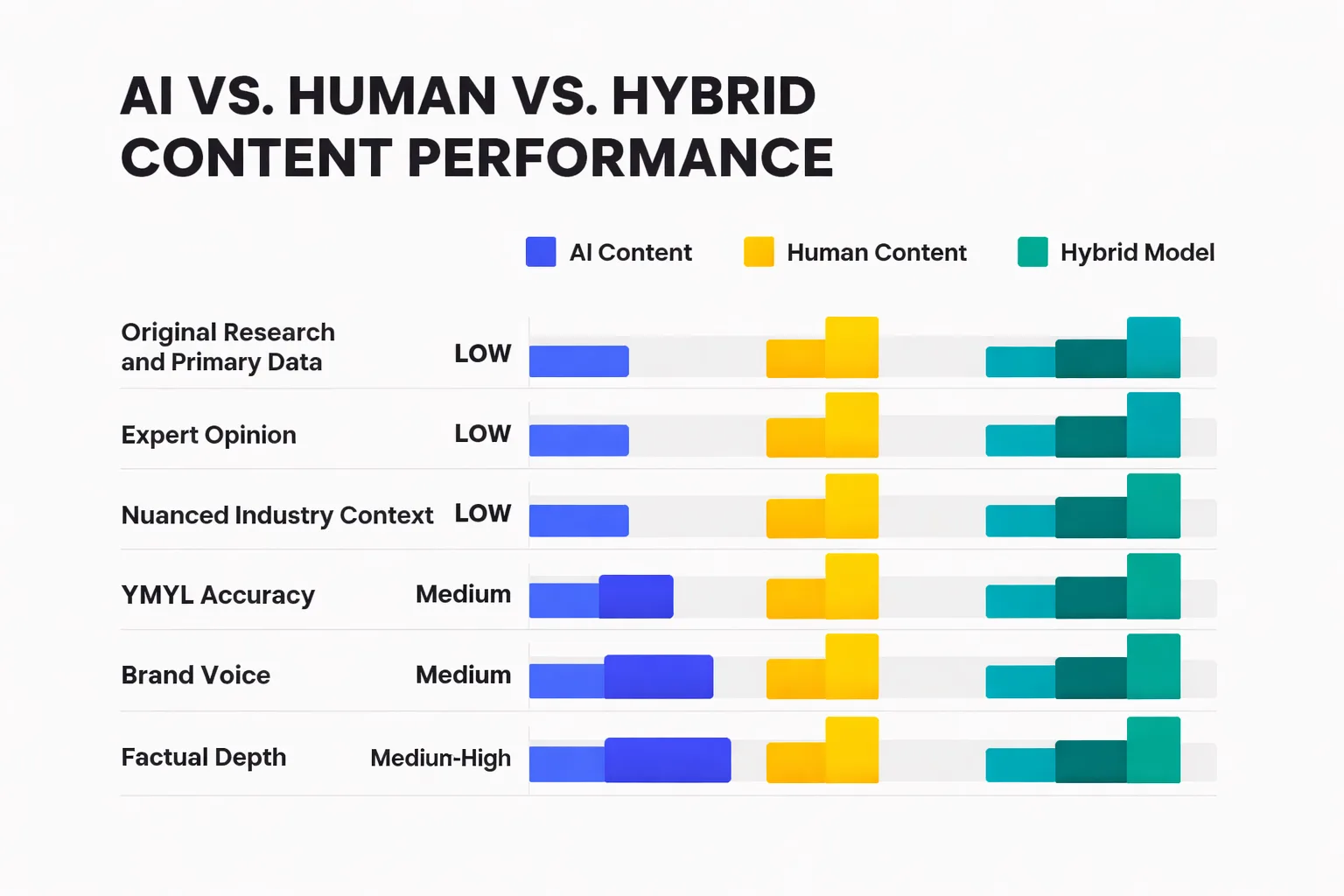

- The hybrid model (AI-drafted, human-refined) consistently outperforms both pure AI and pure human output by allocating AI to structure and synthesis, and humans to original insight, factual verification, and expert perspective.

- E-E-A-T in 2026 requires explicit human signals — named authors with real credentials, firsthand experience language, and external citations — not just well-structured AI prose.

In March 2025, a mid-sized SaaS company celebrated a milestone: their AI content pipeline had published its 10,000th article. Traffic was up. The team was lean. The CEO sent a congratulatory Slack message. Six weeks later, a broad core update rolled through Google's index and erased sixty percent of their organic visibility overnight. Nobody had noticed that 80% of their content answered questions no human had ever actually searched. This is not a cautionary tale about AI — it is a cautionary tale about what happens when speed replaces strategy.

Artificial intelligence content creation in 2026 is not a question of whether to use it. The question is where to deploy it, what to keep human, and how to avoid the traps that are currently wiping out content programs that got the sequencing wrong. As Head of Content Strategy at Meev, where I oversee AI-driven content research and publishing for hundreds of brands, I've watched the gap between teams that are winning with AI and teams that are quietly bleeding rankings widen every quarter. The difference is almost never the tool. It's the workflow design.

Key facts for 2026: AI Overviews now reduce organic click-through rates by up to 58% on affected queries (Ahrefs). An Ahrefs study of 1.4 million ChatGPT prompts found that 88% of citations come through the general search index — but 80% of those cited pages don't rank in Google's top 100 for the original query. Google's Search Quality Rater Guidelines treat E-E-A-T as a primary quality signal, not a checkbox. And the Stanford HAI 2026 AI Index confirms that frontier model capabilities are still advancing — but content quality benchmarks remain task-dependent and not universally transferable.

What AI Content Creation Actually Looks Like at Scale (Not the Demo Version)

The demo version looks frictionless. You type a brief, the model returns 1,200 words, you hit publish. The production version — the one running at 50, 500, or 5,000 posts per month — looks nothing like that.

At 50 posts per month, the bottleneck is brief quality. Most teams underestimate how much human thinking goes into a good AI prompt. The output is only as good as the input: target audience, search intent, angle differentiation, competitor gaps, brand voice constraints. Teams at this volume typically spend more time on briefs and post-publish editing than they save on drafting. That's fine — the ROI still works — but it's not the zero-effort pipeline vendors sell.

At 500 posts per month, the bottleneck shifts to quality control infrastructure. You need systematic review workflows, not ad-hoc editing. You need factual accuracy checks, especially for any YMYL-adjacent content. You need a way to catch the specific failure mode I see constantly: AI drafts that are technically coherent but topically hollow — they hit the keyword, they have the right headers, they pass a surface-level read, and they tell the reader nothing they couldn't have found in the first three Google results. At this volume, that failure mode scales catastrophically.

At 5,000 posts per month, you're essentially running a media operation. The teams doing this successfully have built editorial systems, not just AI prompts. They have content taxonomies, intent classification layers, human subject-matter reviewers for specific verticals, and automated flagging for content that hits certain risk thresholds. The teams doing it unsuccessfully look like the case SEO practitioner Jessica Bowman documented: an organization publishing 100 AI-generated posts per hour with zero human editing. That program didn't just plateau; it triggered SEO damage. Bowman's assessment is blunt and correct: "producing a large amount of low quality content can actually hurt SEO." Volume without quality control is not a content strategy. It's a liability.

The real bottlenecks nobody puts in the pitch deck: internal linking governance at scale (I've watched AI linking tools create cannibalization signals on 500+ page sites by cross-linking pages with 60-70% topical overlap), content deduplication as the library grows, and the compounding cost of fixing AI-generated factual errors after they've been indexed and cited elsewhere.
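One way to catch the cross-linking problem before it ships is to score topical overlap between candidate pages and hold back any pair above a threshold. The sketch below is a rough illustration, not a description of any particular linking tool: it assumes each page has already been reduced to a set of topic terms, it measures overlap with Jaccard similarity (my choice of metric, since "topical overlap" can be measured many ways), and the URLs and terms are hypothetical.

```python
from itertools import combinations


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of terms two pages have in common, from 0.0 to 1.0."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def flag_risky_links(
    pages: dict[str, set[str]], threshold: float = 0.6
) -> list[tuple[str, str, float]]:
    """Return page pairs whose overlap meets the threshold, highest first."""
    flagged = []
    for (url_a, terms_a), (url_b, terms_b) in combinations(pages.items(), 2):
        score = jaccard(terms_a, terms_b)
        if score >= threshold:
            flagged.append((url_a, url_b, round(score, 2)))
    return sorted(flagged, key=lambda row: row[2], reverse=True)


# Hypothetical pages, each reduced to a set of topic terms.
pages = {
    "/ai-content-tools": {"ai", "content", "tools", "seo", "writing"},
    "/ai-writing-tools": {"ai", "writing", "tools", "seo", "content", "drafts"},
    "/schema-markup-guide": {"schema", "markup", "structured", "data", "seo"},
}

risky = flag_risky_links(pages)
for url_a, url_b, score in risky:
    print(f"Review before linking: {url_a} <-> {url_b} (overlap {score})")
```

The 0.6 default mirrors the low end of the 60-70% range mentioned above. A flagged pair is a prompt for a human decision (merge, differentiate, or link anyway), not an automatic block.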

The Quality Ceiling — Where AI Content Still Falls Short

Every AI model available in 2026 — GPT-5, Claude 4 Sonnet, Gemini 1.5 Pro — has gotten meaningfully better at surface-level quality. Grammar is clean. Structure is logical. The prose doesn't read as robotic as it did two years ago. The quality ceiling isn't about prose mechanics anymore. It's about epistemic depth.

Here's where the ceiling is real and documented:

Original research and primary data. AI cannot conduct a survey, run an experiment, or produce a proprietary dataset. It can synthesize what already exists. For content categories where original research is the differentiation — industry benchmarks, original case studies, primary interviews — AI is a production tool, not a replacement for the intellectual work that makes the content worth citing.

Expert opinion with genuine stakes. There's a difference between a model generating a plausible-sounding expert perspective and an actual domain expert putting their name and reputation behind a claim. Google's raters are trained to notice this. Readers in sophisticated verticals notice it too. In my experience, the content that earns editorial citations and backlinks almost always contains a named human perspective that couldn't have been generated — a practitioner's counterintuitive take, a specific failure story, a prediction grounded in firsthand experience.

YMYL accuracy under pressure. For health, finance, legal, and safety content, AI hallucination rates — even at frontier model capability — remain unacceptable without human verification. The Stanford HAI 2026 AI Index confirms that model performance varies significantly by task type, and factual precision on high-stakes queries is not a solved problem. Publishing AI-generated YMYL content without expert review isn't just an SEO risk — it's an ethical one.

Nuanced industry context. The best content in any vertical reflects an understanding of the field's internal debates, the practitioners who are wrong in interesting ways, the conventional wisdom that's starting to crack. AI models trained on historical web data reproduce the consensus. They don't challenge it with the kind of earned skepticism that makes content genuinely useful to an expert reader.

When comparing AI model capabilities for content specifically, the Artificial Analysis methodology is worth understanding: their Intelligence Index v4.0 incorporates 10 evaluations, but as the Toolsify analysis of Claude 4 vs. GPT-5 noted, "the answer to 'which is better?' starts with 'better at what?'" — a point that applies directly to content generation. GPT-5 scores 91.5% on HumanEval+ (a coding benchmark), but coding performance doesn't translate to nuanced long-form editorial quality. Choosing your AI model based on the wrong benchmark is a real mistake I see teams make.

The Hybrid Model That's Outperforming Pure AI and Pure Human Output

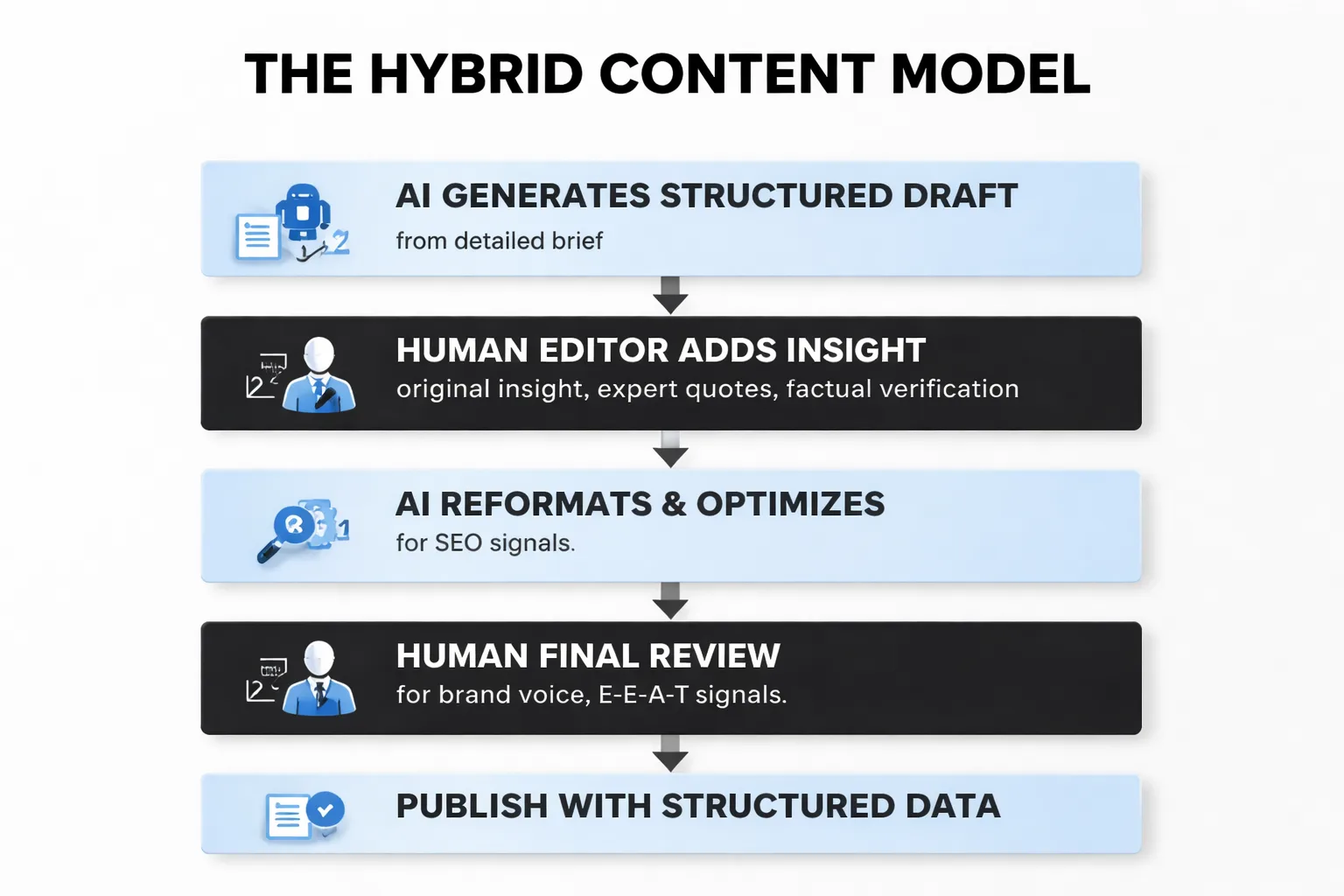

The pattern I keep seeing across content programs that are actually growing organic traffic in 2026: they're not running pure AI, and they're not running pure human. They've found the specific division of labor where each mode does what it's actually good at.

AI is genuinely excellent at: generating structured first drafts from detailed briefs, producing consistent formatting and header hierarchies, synthesizing existing research into readable prose, generating semantic variations for internal linking anchor text, and producing volume across content types that don't require original insight — product descriptions, FAQ expansions, location-based pages with genuine differentiation.

Humans are irreplaceable for: the opening hook that reflects a real perspective, the counterintuitive claim that earns a link, the expert quote that makes a piece citable, the factual verification pass that catches the hallucination buried in paragraph four, and the brand voice calibration that makes content feel like it comes from a specific organization rather than a generic language model.

The teams winning with this model aren't spending less time on content — they're spending time differently. Less time on structural drafting, more time on the intellectual inputs that make content worth reading. That reallocation is where the ROI actually lives. For a deeper look at how different AI writing tools support or undermine this hybrid approach, the comparison between Meev and Jasper AI is instructive — the tradeoffs between auto-publishing infrastructure and manual optimization workflows map directly onto the hybrid model question.

The contrarian take here — and I'll say it plainly: most content teams are applying human review in the wrong place. They're editing AI prose (fixing sentences, adjusting tone) when they should be adding AI-resistant inputs before the draft is generated (original data, expert perspective, proprietary angles). Editing AI output for style is polishing. Adding original intellectual content before the draft is the actual value creation.

E-E-A-T in an AI Content World — What Google's Raters Are Actually Checking

Google's Search Quality Rater Guidelines are public, and most content teams have read a summary of them. Fewer have actually worked through what the guidelines mean operationally for AI-generated content at scale.

Here's what raters are instructed to evaluate, translated into workflow decisions:

Experience means evidence that the content creator has firsthand exposure to the topic. For AI content, this means the human contributor's experience needs to be explicit — not implied. Author bios with specific credentials, first-person anecdotes grounded in real situations, references to specific projects or outcomes. A generic "our team of experts" byline does not satisfy this signal.

Expertise means demonstrated subject-matter knowledge at the level the topic requires. For YMYL content, this means named credentials. For non-YMYL content, it means the kind of specificity that only comes from someone who has actually worked in the field — the specific failure mode, the exception to the rule, the thing the textbook gets wrong.

Authoritativeness is largely an external signal — who else in the field cites, references, or links to this content? This is where the content volume trap becomes dangerous. Publishing 5,000 AI articles that no one references doesn't build authority. Publishing 50 articles that earn editorial citations does. The strategic shift I made in late 2024 was treating external relational signals as a co-equal input to topical clustering — before launching any content cluster, I now ask: can we name three external sources likely to reference this content organically within 90 days? If not, we reposition the angle.

Trustworthiness is the foundation of the other three. For AI content, the primary trust risk is factual error — a hallucinated statistic, a misattributed quote, a claim that was true in 2022 and isn't anymore. Building a verification step into every AI content workflow isn't optional for trust-sensitive topics. It's the baseline.

For teams building AEO (Answer Engine Optimization) signals alongside traditional SEO, the E-E-A-T framework applies equally to AI citation engines. The Ahrefs 1.4M-prompt study finding that 80% of ChatGPT-cited pages don't rank in the top 100 for the original query is genuinely surprising — it means ChatGPT is surfacing content through "fanout queries" where ranking dynamics differ from traditional SERP position. Understanding this distinction matters for AEO vs. SEO strategy: optimizing purely for top-10 Google rankings may be insufficient for AI citation visibility.

The practical implication: structured data markup, clear entity signals, and self-contained quotable sentences (the kind an AI engine can extract and attribute) are becoming first-order content decisions, not afterthoughts. Teams still treating schema markup as a technical SEO nice-to-have are leaving AI citation visibility on the table.
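As a concrete illustration of the author and entity signals described here, the following sketch builds schema.org `Article` markup with a named `Person` author. The `@type` values and the `headline`, `author`, `jobTitle`, and `worksFor` properties come from the schema.org vocabulary. The function name and the headline string are placeholders of mine, and which properties a given search or answer engine actually uses is not something this example can confirm.

```python
import json


def article_jsonld(headline: str, author_name: str, job_title: str, employer: str) -> str:
    """Build JSON-LD for an article with an explicit, named human author."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": job_title,
            "worksFor": {"@type": "Organization", "name": employer},
        },
    }
    return json.dumps(data, indent=2)


markup = article_jsonld(
    headline="Placeholder headline for this article",  # hypothetical value
    author_name="Judy Zhou",
    job_title="Head of Content Strategy",
    employer="Meev",
)
print(markup)
```

The resulting string would typically be embedded in the page inside a `<script type="application/ld+json">` element.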


Your 2026 AI Content Stack — What to Automate, What to Keep Human

Here's the decision framework I use with content teams. It's organized by three variables: content type, risk level, and audience sophistication.

Automate with confidence (low risk, low sophistication requirement):

- Product and category page descriptions with genuine differentiating data inputs
- FAQ expansions from existing support documentation
- Location-based content with verified local data
- Content refreshes updating statistics and dates in existing high-performing articles
- Meta descriptions and title tag variants for A/B testing
- Internal linking anchor text suggestions (with mandatory human review before implementation; see the cannibalization risk above)

Automate with human review (medium risk):

- Informational blog content in non-YMYL verticals
- Thought leadership first drafts (AI generates structure, human adds original perspective)
- Social media content derived from longer-form human-authored pieces
- Email newsletters synthesizing existing content
- Competitor comparison content (high factual accuracy requirement: verify every claim)

Keep human-led (high risk, high sophistication):

- Any YMYL content: health, finance, legal, safety
- Original research, data studies, and industry reports
- Executive byline content and named expert pieces
- Crisis communications and brand-sensitive announcements
- Content targeting expert-level audiences who will immediately recognize shallow treatment
- Link-bait content designed to earn editorial citations
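For teams that want to encode the three tiers above in a content pipeline, a minimal routing sketch might look like the following. The content-type labels, the function name, and the rule that unknown types default to human-led are all assumptions made for illustration. The framework as described is a judgment aid, and a real implementation would need a richer notion of risk than two boolean flags.

```python
from enum import Enum


class Tier(Enum):
    AUTOMATE = "automate with confidence"
    AUTOMATE_WITH_REVIEW = "automate with human review"
    HUMAN_LED = "keep human-led"


# Hypothetical labels for the content types listed in each tier.
LOW_RISK = {
    "product_description", "faq_expansion", "location_page",
    "content_refresh", "meta_description",
    "anchor_text_suggestion",  # still needs human review before implementation
}
MEDIUM_RISK = {
    "informational_blog", "thought_leadership_draft", "social_post",
    "email_newsletter", "competitor_comparison",
}


def assign_tier(content_type: str, is_ymyl: bool = False,
                expert_audience: bool = False) -> Tier:
    """Route a piece of content to a tier; risk flags override content type."""
    if is_ymyl or expert_audience:
        return Tier.HUMAN_LED
    if content_type in LOW_RISK:
        return Tier.AUTOMATE
    if content_type in MEDIUM_RISK:
        return Tier.AUTOMATE_WITH_REVIEW
    # Anything unrecognized (original research, executive bylines,
    # crisis communications, link-bait) falls to the safest tier.
    return Tier.HUMAN_LED


print(assign_tier("faq_expansion").value)
print(assign_tier("faq_expansion", is_ymyl=True).value)
```

Checking the risk flags first is deliberate: an FAQ expansion on a health topic is still YMYL content, so the content type alone should never be enough to earn the lowest-review tier.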

The AI model comparison question (GPT-5 vs. Claude 4 Sonnet vs. others) matters less than most teams think for content production. What matters more is the brief quality, the verification layer, and the human inputs that make the content AI-resistant. That said, context window size has real operational implications: Claude 4 Sonnet's 1M-token context window versus GPT-5's 400k tokens means meaningfully different capabilities for long-form content synthesis and document-level consistency.

For teams evaluating their tooling, the Meev vs. Frase comparison and Meev vs. Surfer SEO comparison are useful starting points — the key question is whether you need a research and optimization layer or a full publishing automation layer, and those are genuinely different product categories.

One workflow decision that's consistently undervalued: where you put the SEO optimization pass. Most teams run it after the draft. The teams I've seen get the best results run a keyword and intent analysis before the brief, build the semantic structure into the brief itself, and use the AI draft to fill in the structure rather than retrofitting SEO signals onto a draft that was generated without them. It's a sequencing change, not a tooling change — and it's the difference between content that ranks and content that technically covers a topic.

For teams thinking about how AI content intersects with Google AI Overviews specifically, optimizing for Claude Opus 4.7 and AI Overview citation is worth reading alongside this framework — the citation dynamics for AI search require a different set of structural decisions than traditional SERP optimization.

The honest 2026 state of play: artificial intelligence content creation is a production capability, not a strategy. The teams treating it as strategy are the ones celebrating 10,000 articles and then watching their traffic disappear. The teams treating it as infrastructure — with clear human inputs, systematic quality controls, and a genuine understanding of where AI stops and editorial judgment begins — are the ones building content programs that compound.

Frequently Asked Questions

Does AI-generated content violate Google's guidelines? No — Google's current guidance focuses on content quality and helpfulness, not the method of production. Content created primarily to manipulate search rankings is what violates guidelines, regardless of whether a human or AI wrote it. The practical implication: AI content that genuinely serves user intent, passes E-E-A-T evaluation, and contains accurate information is treated the same as human-written content in Google's index.

How do I maintain brand voice when using AI at scale? The most effective method I've seen is a detailed style guide embedded directly into the system prompt — not just tone descriptors, but specific vocabulary preferences, sentence length targets, banned phrases, and example passages from your best-performing human-written content. AI models will approximate your voice reasonably well with enough examples. The gap that persists is the idiosyncratic perspective — the brand's specific point of view on contested industry questions — which still requires human input at the brief stage.
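To make the "style guide in the system prompt" idea concrete, here is a small sketch that assembles a system prompt from structured style-guide fields. The field names, the wording of the instructions, and the sample values are all hypothetical. It only builds a string and does not call any model API.

```python
from dataclasses import dataclass, field


@dataclass
class StyleGuide:
    tone: str
    max_sentence_words: int
    preferred_terms: list[str] = field(default_factory=list)
    banned_phrases: list[str] = field(default_factory=list)
    example_passages: list[str] = field(default_factory=list)


def build_system_prompt(guide: StyleGuide) -> str:
    """Turn a structured style guide into system-prompt text."""
    lines = [
        f"Write in this tone: {guide.tone}.",
        f"Keep sentences under {guide.max_sentence_words} words where possible.",
    ]
    if guide.preferred_terms:
        lines.append("Prefer these terms: " + ", ".join(guide.preferred_terms) + ".")
    if guide.banned_phrases:
        lines.append("Never use these phrases: " + "; ".join(guide.banned_phrases) + ".")
    for i, passage in enumerate(guide.example_passages, start=1):
        lines.append(f"Example passage {i}:\n{passage}")
    return "\n".join(lines)


# Hypothetical style guide values for illustration only.
guide = StyleGuide(
    tone="direct, practitioner-to-practitioner",
    max_sentence_words=25,
    preferred_terms=["content program", "workflow"],
    banned_phrases=["in today's fast-paced world", "game-changer"],
    example_passages=["Volume without quality control is not a content strategy."],
)
prompt = build_system_prompt(guide)
print(prompt)
```

Keeping the guide as structured data rather than free text makes it easier to version, review, and reuse across briefs. The brand's point of view on contested questions still has to arrive through the brief itself.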

What's the minimum viable human review process for AI content? For non-YMYL content at medium volume, the minimum I'd recommend: one factual accuracy pass (checking all statistics, quotes, and named claims against primary sources), one brand voice pass (adjusting the opening hook and any passages that read as generic), and one intent alignment check (confirming the content actually answers the specific search query it's targeting, not just the broad topic). That's roughly 20-30 minutes per piece. Below that threshold, you're accepting risk that compounds as your content library grows.
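The three passes above can be enforced as a simple publish gate. This is a minimal sketch under my own naming: the pass identifiers and function names are hypothetical, and how each pass gets recorded as complete is left to whatever editorial tooling a team already uses.

```python
# The three review passes named above, as hypothetical identifiers.
REQUIRED_PASSES = ("factual_accuracy", "brand_voice", "intent_alignment")


def missing_passes(completed: set[str]) -> list[str]:
    """List the required review passes that have not been completed yet."""
    return [name for name in REQUIRED_PASSES if name not in completed]


def ready_to_publish(completed: set[str]) -> bool:
    """A piece is publishable only when every required pass is done."""
    return not missing_passes(completed)


draft_status = {"factual_accuracy", "brand_voice"}
print(ready_to_publish(draft_status))
print(missing_passes(draft_status))
```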

How does AI content affect topical authority building? This is where the volume trap is most dangerous. Topical authority comes from comprehensive, high-quality coverage of a subject — not just comprehensive coverage. A cluster of 200 AI-generated articles that are topically shallow may actually dilute authority signals compared to 50 deeply researched pieces. Google's systems are increasingly capable of evaluating depth, not just coverage. The right approach is to use AI to achieve coverage breadth while ensuring the anchor pieces in each cluster — the ones targeting your highest-value queries — have genuine depth and human expertise.

When will AI be ready to replace human writers entirely? Not in 2026, and probably not for the content types that actually drive business outcomes. The specific capabilities that make content worth reading — original research, expert perspective, counterintuitive claims grounded in firsthand experience, the kind of nuanced industry context that only comes from working in a field — are not capabilities frontier models have solved. What AI has solved is the production layer: structure, synthesis, consistency, and volume. The teams that understand this distinction are building durable content programs. The teams that don't are the cautionary tale in the opening paragraph.

About the Author

Judy Zhou, Head of Content Strategy

Judy Zhou leads content strategy at Meev, where she oversees AI-driven content research and publishing for hundreds of brands. With a background in SEO and editorial operations, she focuses on building content systems that rank on Google, get cited by AI search engines, and drive measurable business results.
