By Judy Zhou, Head of Content Strategy

Key Takeaways

- 58% of U.S. adults ran at least one Google search in March 2025 that produced an AI-generated summary, which makes AI Overview optimization a non-optional priority for content teams in 2025.
- 82% of desktop AI Overviews appear for queries with under 1,000 monthly searches, so long-tail informational content is your highest-leverage entry point.
- 58% of marketers report AI-referred visitors convert at higher rates than traditional organic traffic. Getting cited in an AI Overview is a conversion signal, not just a visibility win.
- Claude Opus 4.7's extended context window enables full content cluster analysis in under 30 minutes. Use it in diagnostic mode before generation mode.

If you're trying to optimize for Google AI Overviews in 2025, the rules have shifted fast enough that most content teams are still playing catch-up. Google AI Overviews now reach 2 billion monthly users. In March 2025, 58% of U.S. adults in a Pew Research Center study ran at least one Google search that produced an AI-generated summary. That's not a trend; that's a structural shift in how the web distributes attention.

As Head of Content Strategy at Meev, where I oversee AI-driven content research and publishing for hundreds of brands, I've watched teams scramble to respond. Most of them are making the same mistake: treating AI Overviews like a featured snippet problem. It isn't. The optimization logic is fundamentally different, and the teams using Claude's advanced reasoning capabilities to close that gap are pulling ahead.

Here's what actually works.


What Google AI Overviews Actually Are

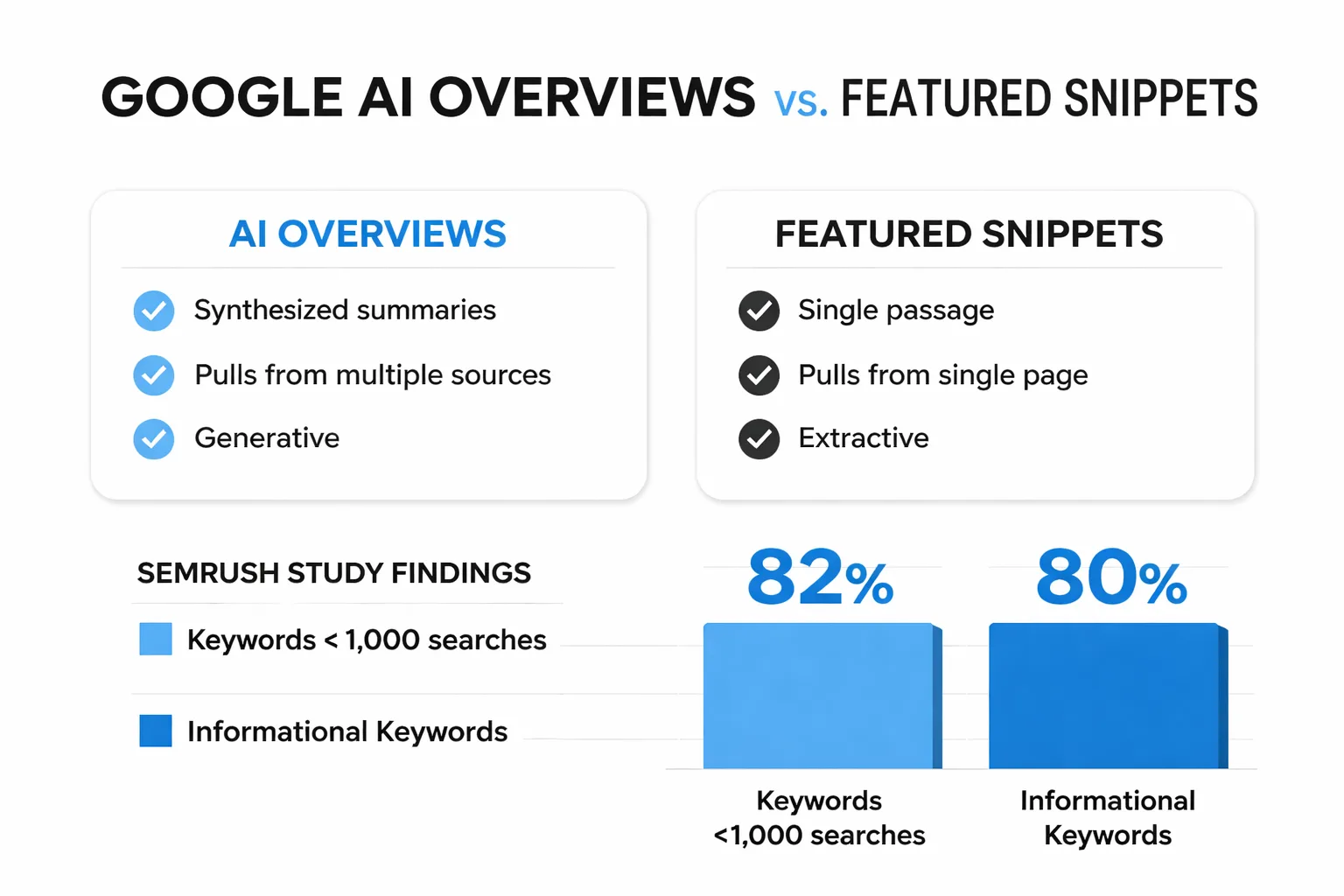

Google AI Overviews are synthesized summaries that appear above traditional organic results, pulling from multiple sources to answer a query directly. They're not the same as featured snippets, which pull a single passage from a single page. AI Overviews are generative — Google's model reads across sources, synthesizes an answer, and cites the pages it drew from.

That distinction matters enormously for how you write. Featured snippet optimization meant owning one clean definition or list. AI Overview optimization means being a credible, citable source that a language model trusts to quote accurately. The bar is different, and frankly higher.

Semrush's study of 200,000 keywords found that 82% of desktop AI Overviews appeared for keywords with under 1,000 monthly searches, and 80% of desktop AI Overviews targeted informational keywords. That 82% figure stopped me cold. It means the sweet spot isn't your high-volume head terms. It's the long-tail, question-based queries where your actual expertise lives.
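To put that finding to work, you can filter a keyword export down to long-tail, informational candidates before doing anything else. The sketch below is a minimal illustration. It assumes a list of (phrase, monthly volume) rows. The `Keyword` type, the marker word list, and the volumes are all hypothetical, and the question-word check is only a crude stand-in for a real intent classifier.

```python
from dataclasses import dataclass

# Rough informational-intent markers. This word list is an assumption,
# not Semrush's intent classification.
INFORMATIONAL_MARKERS = {"what", "how", "why", "when", "which", "who",
                         "can", "does", "vs", "guide", "examples"}


@dataclass
class Keyword:
    phrase: str
    monthly_volume: int


def looks_informational(phrase: str) -> bool:
    """True if any word in the phrase is an informational marker."""
    return any(word in INFORMATIONAL_MARKERS for word in phrase.lower().split())


def long_tail_targets(keywords, max_volume=1000):
    """Informational keywords strictly below the volume ceiling, lowest volume first."""
    picks = [k for k in keywords
             if k.monthly_volume < max_volume and looks_informational(k.phrase)]
    return sorted(picks, key=lambda k: k.monthly_volume)


# Illustrative rows from a hypothetical keyword export (volumes are made up).
export = [
    Keyword("seo tools", 40500),
    Keyword("how to audit internal links for a topic cluster", 210),
    Keyword("what is faq schema", 880),
    Keyword("buy seo software", 320),
]

for k in long_tail_targets(export):
    print(f"{k.monthly_volume:>5}  {k.phrase}")
```

The head term is dropped on volume and the transactional phrase is dropped on intent. The two question-style queries remain as starting points.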

Why Claude Opus 4.7 Changes the Workflow

Most AI writing tools treat content optimization as a generation problem: give me a topic, I'll write you an article. Claude Opus 4.7 is genuinely different because its extended context window and advanced reasoning capabilities make it a powerful analysis tool, not just a generation tool. That distinction is what makes it useful for content optimization at scale.

Here's what I mean practically. When I'm auditing a content cluster for AI Overview readiness, I need to understand: Does this content answer questions directly? Is the entity structure clear? Are the claims specific and attributable? Doing that manually across 20 articles takes a full day. With Claude, I can paste in multiple full articles simultaneously and prompt it to evaluate them against a rubric — flagging vague claims, identifying missing direct-answer paragraphs, and surfacing where the content drifts from the core query intent.
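Here is a minimal sketch of that multi-article audit. It assumes you paste the result into Claude yourself or send it through an API client. `build_audit_prompt` and `batch_articles` are hypothetical helpers, not part of any SDK. The 400,000-character default is an assumption based on the common rule of thumb of roughly four characters per English token, so set it to your model's actual context limit.

```python
# Rubric items paraphrased from the audit questions described above.
RUBRIC = [
    "Does each section answer its question directly in its first two sentences?",
    "Is the entity structure clear (things are named, not 'the tool' or 'this approach')?",
    "Are claims specific and attributable to a named source or number?",
    "Where does the content drift from the core query intent?",
]


def build_audit_prompt(query: str, articles: dict) -> str:
    """One prompt that asks the model to grade every article against RUBRIC."""
    lines = [f"Target query: {query}", "", "Evaluate every article below against this rubric:"]
    lines += [f"{i}. {item}" for i, item in enumerate(RUBRIC, start=1)]
    for title, body in articles.items():
        # Tags let the model refer to each article unambiguously by title.
        lines.append(f'<article title="{title}">\n{body}\n</article>')
    lines.append("For each article, quote every sentence that fails a rubric item and name the item.")
    return "\n".join(lines)


def batch_articles(articles: dict, max_chars: int = 400_000) -> list:
    """Group articles so each batch's combined length stays under max_chars.

    An article longer than max_chars on its own still gets a batch to itself.
    """
    batches, current, size = [], {}, 0
    for title, body in articles.items():
        if current and size + len(body) > max_chars:
            batches.append(current)
            current, size = {}, 0
        current[title] = body
        size += len(body)
    if current:
        batches.append(current)
    return batches


# Placeholder texts; in practice these are the full articles in the cluster.
cluster = {"Pillar page": "Full text of the pillar...", "Spoke 1": "Full text of a spoke..."}
prompts = [build_audit_prompt("how to audit internal links", batch)
           for batch in batch_articles(cluster)]
```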

The workflow I've settled on has three phases: diagnostic, rewrite, and validation. In the diagnostic phase, I feed Claude the target query, the existing article, and the top three AI Overview citations for that query (when they exist). I ask it to identify the specific passages being cited in those overviews and compare them structurally to my content. What makes those passages citable? Are they more direct? More specific? Do they open with the answer rather than building to it? The gap analysis that comes back is genuinely useful — not generic SEO advice, but specific structural observations about why a competitor's paragraph is getting pulled while mine isn't.
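The diagnostic phase can be packaged as a request in the shape of Anthropic's Messages API (`model`, `max_tokens`, `system`, `messages`). Treat this as a sketch only. The function name, the prompt wording, the fallback instruction used when no AI Overview exists, and the model ID placeholder are all my assumptions.

```python
def diagnostic_request(query, article, citations, model="YOUR-MODEL-ID", max_tokens=2000):
    """Build a Messages-API-shaped request for the diagnostic phase.

    `citations` holds the passages currently cited in the AI Overview for
    `query` (at most three are used); it may be empty when no overview exists.
    """
    cited = list(citations)[:3]
    sections = [f"Query: {query}", f"<my_article>\n{article}\n</my_article>"]
    for i, passage in enumerate(cited, start=1):
        sections.append(f'<cited_passage n="{i}">\n{passage}\n</cited_passage>')
    if cited:
        task = ("Identify what makes each cited passage citable and compare it structurally "
                "to my article. Is it more direct? More specific? Does it open with the answer "
                "rather than building to it? Point to the paragraphs in my article that fall short.")
    else:
        # Assumed fallback: no overview to compare against, so audit the article alone.
        task = ("No AI Overview citations exist for this query. Identify paragraphs in my "
                "article that bury the answer or make vague, unattributed claims.")
    sections.append(task)
    return {
        "model": model,
        "max_tokens": max_tokens,
        "system": "You are auditing content for citability in Google AI Overviews. Diagnose; do not rewrite.",
        "messages": [{"role": "user", "content": "\n\n".join(sections)}],
    }


request = diagnostic_request("what is faq schema", "Full article text...",
                             ["Passage one...", "Passage two..."])
# With the official SDK this could be sent as: anthropic.Anthropic().messages.create(**request)
```

The system instruction is deliberately limited to diagnosis. That keeps the rewrite phase as a separate, reviewable step.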

The Prompt Engineering That Actually Works

Prompt engineering for AI Overview optimization is not the same as prompting for content generation. You're not asking Claude to write — you're asking it to reason about how Google's AI will read and cite your content. The framing matters.

The prompts I return to most often:

For direct-answer gap analysis: "Here is my article on [topic] and the query [exact query]. Identify every question a user with this query might have that my article doesn't answer in the first two sentences of a section. For each gap, write a replacement opening sentence that leads with the answer."

For entity and specificity audit: "Review this article and flag every claim that uses vague language (words like 'many,' 'often,' 'some experts') where a specific number, named study, or concrete example could replace it. Output a list of the flagged sentences with suggested replacements."

For competitive citation analysis: "Here are three passages that appear in Google's AI Overview for [query]. Here is my article covering the same topic. Explain structurally why those passages are more citable — focus on sentence structure, specificity, and answer proximity."
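To keep those three prompts consistent across a team, you can store them as templates instead of retyping them. This is a minimal sketch. The template keys and placeholder names are my own. The article and passages are wrapped in tags so the model can tell instructions apart from content.

```python
# The three prompts as reusable templates. Placeholder names
# ({topic}, {query}, {article}, {passages}) are illustrative choices.
PROMPTS = {
    "direct_answer_gaps": (
        "Here is my article on {topic} and the query {query}. Identify every question "
        "a user with this query might have that my article doesn't answer in the first "
        "two sentences of a section. For each gap, write a replacement opening sentence "
        "that leads with the answer.\n\n<article>\n{article}\n</article>"
    ),
    "specificity_audit": (
        "Review this article and flag every claim that uses vague language (words like "
        "'many,' 'often,' 'some experts') where a specific number, named study, or concrete "
        "example could replace it. Output a list of the flagged sentences with suggested "
        "replacements.\n\n<article>\n{article}\n</article>"
    ),
    "citation_analysis": (
        "Here are three passages that appear in Google's AI Overview for {query}. Here is "
        "my article covering the same topic. Explain structurally why those passages are "
        "more citable. Focus on sentence structure, specificity, and answer proximity."
        "\n\n<passages>\n{passages}\n</passages>\n\n<article>\n{article}\n</article>"
    ),
}


def render(name: str, **fields: str) -> str:
    """Fill one template; a missing placeholder raises KeyError instead of sending a broken prompt."""
    return PROMPTS[name].format(**fields)


prompt = render("citation_analysis", query="what is faq schema",
                passages="Passage one...\nPassage two...\nPassage three...",
                article="Full article text...")
```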

That third prompt is the one that changed how I think about this work. The answers Claude returns aren't mystical — they're consistent. Citable passages tend to open with the answer, use specific numbers, name their sources, and avoid subordinate clauses that bury the key claim. Once you see that pattern clearly, you can edit for it systematically.
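That pattern can be turned into a rough pre-screen before you spend a model call on a passage. The checks below are heuristic proxies assumed for illustration: a short first sentence, a digit somewhere, an attribution verb, and subordinate-clause words in the lead. They are not signals Google has documented.

```python
import re

# Heuristic proxies for the pattern above. The word lists and the 30-word
# cutoff are assumptions, not published ranking signals.
SUBORDINATORS = {"although", "because", "while", "whereas", "since", "unless", "though", "which"}
ATTRIBUTION = re.compile(r"\b(according to|found|reported|study|survey|analysis)\b", re.IGNORECASE)


def first_sentence(text: str) -> str:
    """Text up to the first ., ! or ? that is followed by whitespace or the end."""
    match = re.match(r"(.+?[.!?])(?:\s|$)", text.strip(), re.DOTALL)
    return match.group(1) if match else text.strip()


def citability_signals(passage: str) -> dict:
    lead = first_sentence(passage)
    lead_words = re.findall(r"[a-z']+", lead.lower())
    return {
        "short_lead": len(lead.split()) <= 30,          # answer stated early and briefly
        "has_number": bool(re.search(r"\d", passage)),   # a specific figure is present
        "names_source": bool(ATTRIBUTION.search(passage)),
        "lead_subordinate_clauses": sum(w in SUBORDINATORS for w in lead_words),
    }


print(citability_signals(
    "Semrush found AI Overviews appeared for 6.49% of keywords in January 2025. More text follows."))
print(citability_signals(
    "Because many factors matter, which we discuss later, traffic can increase."))
```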


E-E-A-T Is the Floor, Not the Ceiling

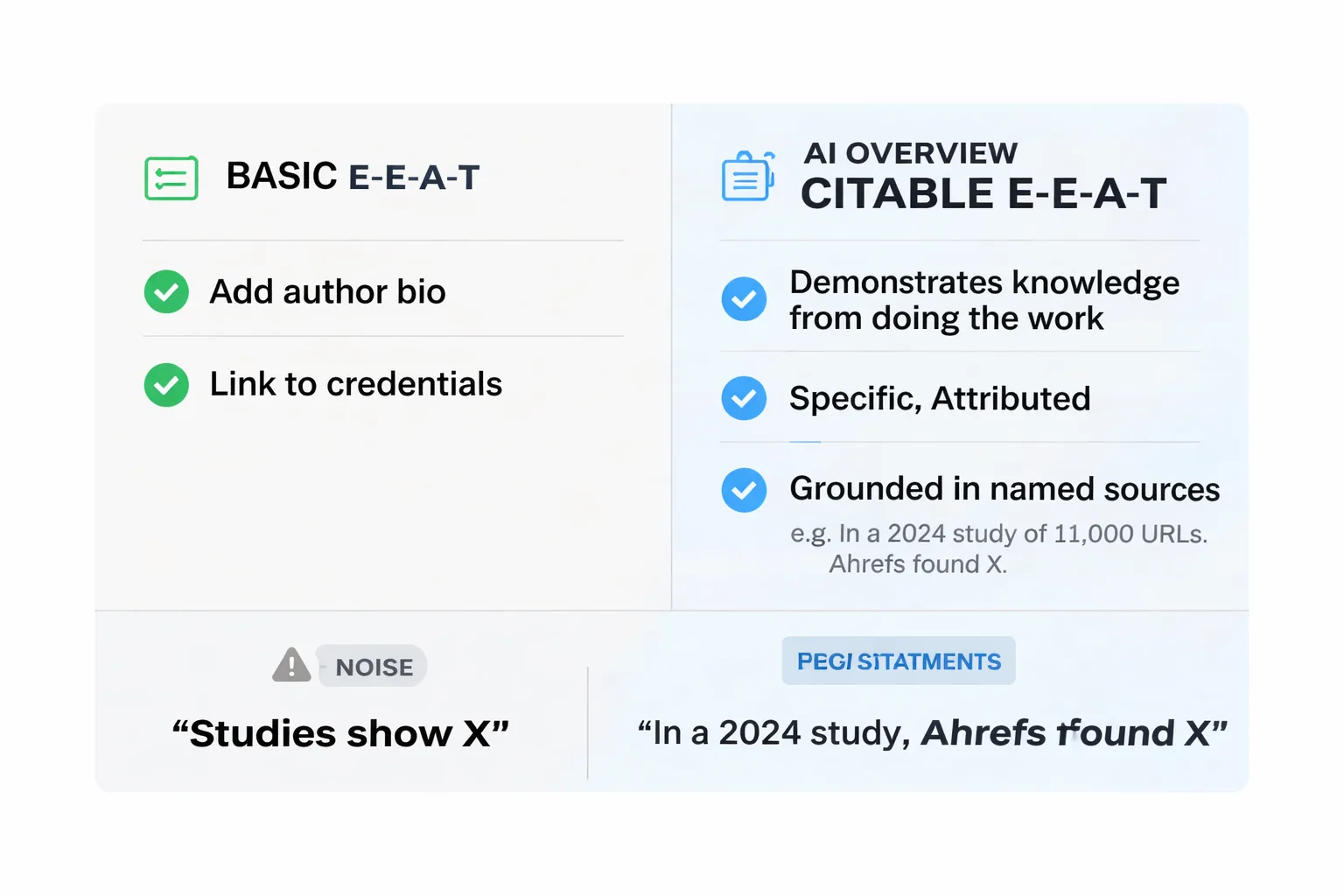

I want to push back on something I see constantly in SEO content: teams treating E-E-A-T as a checklist item. Add an author bio. Link to credentials. Done. That's not what Google's quality rater guidelines are actually asking for — and it's definitely not what gets content cited in AI Overviews.

E-E-A-T for AI Overviews means demonstrating that your content contains knowledge you could only have from doing the work. A passage that says "studies show X" is not the same as a passage that says "in a 2024 study of 11,000 URLs, Ahrefs found X, which tracks with what I see when auditing content clusters." The second version is citable because it's specific, attributed, and grounded in a named source. The first version is noise.

The failure mode I see most often is articles that are technically well-structured but contain nothing a person with actual domain knowledge would say. I saw this play out clearly in how the March 2024 Core Update hit content farms. Those articles hit the keywords. They have H2s and bullet points. They don't contain a single sentence that required expertise to write. Google's systems have gotten genuinely good at telling that kind of content apart from writing grounded in real expertise, and AI Overviews pull almost exclusively from the latter.

This is also why I've been skeptical of the "slop content" shortcut — the pattern of using AI to generate plausible-sounding paragraphs that technically answer a question but don't demonstrate any real understanding of the problem. It worked briefly. It doesn't work now. The teams that scaled that approach in 2023 and early 2024 are the ones who got hit hardest.

Structuring Content for AI Citation

The structural pattern that gets content cited in AI Overviews is consistent enough that I've turned it into a formatting rule for every brief I write. Here's the framework:

The Direct Answer Opening. Every section that could answer a standalone question should open with a 40-60 word direct answer. Not a setup. Not a transition. The answer, stated clearly, in the first sentence. This mirrors how AI Overviews pull content — they look for passages that are self-contained and answer-first.

Specific, Attributable Claims. Every data point should be named and sourced. "Traffic can increase" is uncitable. "Semrush's AI Overviews study found AI Overviews appeared for 6.49% of keywords in January 2025, peaking at roughly 25% in July 2025" is citable. The difference isn't length — it's specificity and attribution.

Entity Clarity. Name the thing you're talking about on first use. Don't refer to "the tool" or "this approach" — name it. AI systems parsing your content for citation need clear entity signals to attribute claims correctly.

Scannable Hierarchy. Short paragraphs for punchy claims. Longer paragraphs for complex mechanics. Never bury the key claim in the middle of a long paragraph — AI systems and human readers both miss it.
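The four rules translate into a simple draft linter. This sketch assumes sections are plain text with paragraphs separated by blank lines. The vague-term and unnamed-reference lists are illustrative and would need to grow with your own style guide.

```python
import re

# Illustrative word lists; extend them with your own style guide.
VAGUE_TERMS = ["many", "often", "some experts", "various", "numerous", "studies show"]
UNNAMED_REFERENCES = ["the tool", "this approach", "this method"]


def lint_section(text: str, min_words: int = 40, max_words: int = 60) -> list:
    """Return warnings for one section, checked against the framework above."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    if not paragraphs:
        return ["empty section"]
    warnings = []
    # Direct Answer Opening: the first paragraph should be a 40-60 word answer.
    opening = len(paragraphs[0].split())
    if not min_words <= opening <= max_words:
        warnings.append(f"opening paragraph is {opening} words (target {min_words}-{max_words})")
    lowered = text.lower()
    # Specific, Attributable Claims: flag hedge words that hide a missing number or source.
    for term in VAGUE_TERMS:
        if re.search(rf"\b{re.escape(term)}\b", lowered):
            warnings.append(f"vague claim: '{term}'")
    # Entity Clarity: flag references that never name the thing.
    for ref in UNNAMED_REFERENCES:
        if re.search(rf"\b{re.escape(ref)}\b", lowered):
            warnings.append(f"unnamed entity: '{ref}'")
    return warnings


print(lint_section("Many teams use the tool.\n\nSecond paragraph."))
```

The word-count check is the one that catches answer-burying. A section that spends its first paragraph on setup usually fails it in one direction or the other.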

When I run existing content through Claude using the prompts above, the most common structural failure I find is answer-burying. Writers build to their point. They set up context, provide background, then deliver the answer in paragraph three. For AI Overview citation, that structure actively works against you. The answer needs to come first.

Technical SEO Still Matters

SEO for Google AI Overviews isn't purely a content problem — the technical layer still determines whether your content gets crawled and parsed correctly in the first place.

A few specifics worth tracking:

Structured data and schema markup. Google Search Console's structured data reports will show you which pages have valid schema and which have errors. For content targeting AI Overviews, FAQ schema and Article schema are the most relevant. They don't guarantee inclusion, but they give Google's systems clearer signals about what your content is and what questions it answers. The Google Search Console structured data reports are worth auditing quarterly — errors accumulate silently.
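For the markup itself, a small generator keeps FAQ and Article JSON-LD consistent across pages. This minimal sketch uses schema.org's `FAQPage`, `Question`, `Answer`, and `Article` types, and the helper names are hypothetical. Since August 2023, Google has shown FAQ rich results only for well-known government and health sites. Treat this markup as a machine-readable description of the page rather than a route to a rich result, and check the output with Google's Rich Results Test.

```python
import json


def faq_jsonld(pairs):
    """schema.org FAQPage JSON-LD from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {"@type": "Question", "name": q,
             "acceptedAnswer": {"@type": "Answer", "text": a}}
            for q, a in pairs
        ],
    }


def article_jsonld(headline, author, date_published, date_modified=None):
    """schema.org Article JSON-LD; dates are ISO 8601 strings."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
    }
    if date_modified:
        data["dateModified"] = date_modified
    return data


def script_tag(data):
    """Wrap JSON-LD in the script element that goes into the page head or body."""
    return '<script type="application/ld+json">' + json.dumps(data, indent=2) + "</script>"


faq = faq_jsonld([("What is Google AI Overviews optimization?",
                   "The practice of structuring content so Google's AI cites it as a source.")])
print(script_tag(faq))
```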

Crawl equity and internal linking. This is the gap I find most consistently in content audits. Teams build out topic clusters and then do almost nothing to connect them structurally. I reviewed a site last quarter with 60+ cluster articles across three pillar topics, and Screaming Frog showed the supporting pages averaging fewer than two internal links each. Google couldn't parse the hierarchy. The content existed; the crawl equity didn't flow. For AI Overview optimization specifically, this matters because Google needs to understand your topical authority before it will cite you as a source — and topical authority signals come partly from how your content links together.
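The audit that surfaced that problem boils down to counting unique internal inlinks per cluster page. This sketch assumes you have exported (source URL, target URL) pairs from a crawler. The two-link minimum mirrors the example above. It is a judgment call, not a Google threshold.

```python
from collections import defaultdict


def inlink_counts(edges, cluster_pages):
    """Unique internal pages linking to each cluster page; self-links ignored.

    `edges` is an iterable of (source_url, target_url) pairs, e.g. rows from a
    crawler's inlinks export (mapping its columns to pairs is up to you).
    """
    sources = defaultdict(set)
    for source, target in edges:
        if target in cluster_pages and source != target:
            sources[target].add(source)
    return {page: len(sources[page]) for page in cluster_pages}


def under_linked(edges, cluster_pages, minimum=2):
    """Cluster pages with fewer than `minimum` unique linking pages, sorted."""
    counts = inlink_counts(edges, cluster_pages)
    return sorted(page for page, n in counts.items() if n < minimum)


# Toy link graph: a duplicate link and a self-link should not inflate counts.
edges = [("/pillar", "/a"), ("/pillar", "/b"), ("/b", "/a"), ("/a", "/a"), ("/pillar", "/a")]
cluster = {"/a", "/b", "/c"}
counts = inlink_counts(edges, cluster)
print("average inlinks:", sum(counts.values()) / len(counts))
print("under-linked:", under_linked(edges, cluster))
```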

Page experience signals. Core Web Vitals still factor into whether Google trusts your pages enough to surface them in AI Overviews. A technically broken page with great content will lose to a technically sound page with good content. This isn't new SEO advice — but it's worth restating because I see teams invest heavily in content quality while ignoring the technical debt that's quietly suppressing their pages.
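To check where a page stands, compare its 75th-percentile field values against Google's published Core Web Vitals thresholds: LCP 2.5 s / 4 s, INP 200 ms / 500 ms, and CLS 0.1 / 0.25 for the good / poor boundaries. This is a sketch. The nearest-rank percentile is a simplification, and tools such as CrUX may compute percentiles differently.

```python
import math

# (good upper bound, poor lower bound) for each metric, assessed at the 75th percentile.
THRESHOLDS = {
    "LCP_s": (2.5, 4.0),   # Largest Contentful Paint, seconds
    "INP_ms": (200, 500),  # Interaction to Next Paint, milliseconds
    "CLS": (0.1, 0.25),    # Cumulative Layout Shift, unitless
}


def p75(samples):
    """75th percentile by the nearest-rank method."""
    ordered = sorted(samples)
    return ordered[math.ceil(0.75 * len(ordered)) - 1]


def rate(metric, value):
    good, poor = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= poor:
        return "needs improvement"
    return "poor"


def page_report(samples_by_metric):
    """Rate each metric from its raw field samples."""
    return {m: rate(m, p75(s)) for m, s in samples_by_metric.items()}


print(page_report({
    "LCP_s": [1.8, 2.1, 2.4, 3.9],
    "INP_ms": [120, 180, 350, 600],
    "CLS": [0.05, 0.3, 0.3, 0.4],
}))
```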

For teams building out their technical SEO stack, the guide to the SEO tool stack that's actually worth it in 2025 covers which tools move the needle and which ones just add dashboard noise.

Claude vs Other Models — What the Data Says

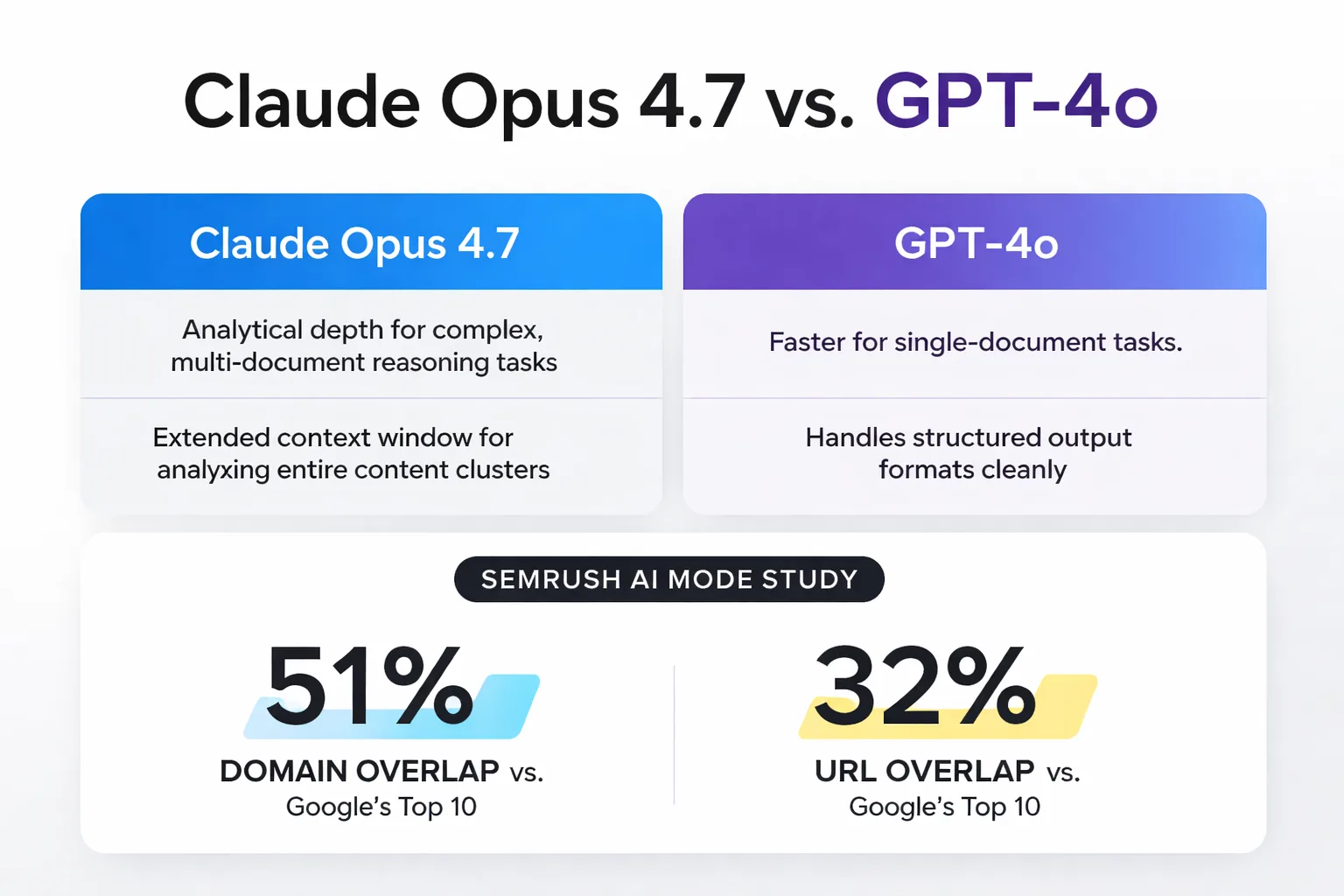

Here's the honest answer: there's no published study comparing Claude Opus 4.7 to GPT-4o on Google AI Overviews inclusion rates. Anyone claiming specific percentages for one model versus another is making it up. The Semrush AI Mode comparison study found 51% domain overlap and 32% URL overlap between AI Mode responses and Google's top 10 results — but that data doesn't break down by which AI model generated the content.
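The gap between those two numbers comes from how overlap is measured. Two pages on the same site count as a domain match but not a URL match. Below is a sketch of one plausible way to compute both shares for a single query. Semrush's exact methodology isn't described here, and real data would also need URL normalization (trailing slashes, query strings), which this sketch omits.

```python
from urllib.parse import urlsplit


def domain(url: str) -> str:
    """Lowercased host with a leading 'www.' removed."""
    host = urlsplit(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def overlap(cited_urls, top10_urls) -> dict:
    """Share of cited sources that also appear in the top 10, by URL and by domain."""
    cited, top = set(cited_urls), set(top10_urls)
    cited_domains = {domain(u) for u in cited}
    top_domains = {domain(u) for u in top}
    return {
        "url_overlap": len(cited & top) / len(cited) if cited else 0.0,
        "domain_overlap": (len(cited_domains & top_domains) / len(cited_domains)
                           if cited_domains else 0.0),
    }


# Toy data: only one exact URL matches, but two of three cited domains rank.
cited = ["https://example.com/a", "https://example.com/b",
         "https://other.org/x", "https://third.net/y"]
top10 = ["https://example.com/a", "https://example.com/c", "https://www.other.org/z"]
print(overlap(cited, top10))
```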

What I can tell you from working with both tools is this: the difference isn't in the output quality for standard content tasks. It's in the analytical depth for complex, multi-document reasoning tasks. Claude's extended context window is genuinely useful when you're analyzing an entire content cluster — something I need to do regularly when auditing for topical authority gaps. GPT-4o is faster for single-document tasks and handles structured output formats slightly more cleanly in my experience.

For the specific workflow of optimizing content for AI Overviews — which involves reading multiple competitor pages, analyzing structural patterns, and generating targeted rewrites — Claude's context handling gives it a practical edge. That's not a model benchmark; it's a workflow observation.

| Task | Claude Opus 4.7 | GPT-4o |
|---|---|---|
| Multi-document cluster analysis | Strong: large context window handles full clusters | Good: may require chunking longer clusters |
| Structural gap analysis vs. competitors | Strong: detailed reasoning about citation patterns | Good: less granular on structural specifics |
| Direct-answer rewrite generation | Strong | Strong |
| Structured data / schema generation | Good | Strong: cleaner JSON output |
| Speed for single-document tasks | Moderate | Fast |
| Prompt sensitivity | High: rewards detailed prompts | Moderate: handles vague prompts reasonably |

The contrarian take I'll offer: the model you use matters less than the prompts you write and the editorial judgment you apply to the output. I've seen teams get mediocre results from Claude with bad prompts and excellent results from GPT-4o with precise ones. The tool is not the strategy.

The Conversion Case for AEO

I want to address the ROI question directly, because I hear it constantly: "If AI Overviews reduce clicks, why are we optimizing for them?"

The answer is in the conversion data. According to HubSpot's 2026 State of Marketing report, 58% of marketers report that visitors referred by AI tools convert at higher rates than traditional organic traffic. That's not a small signal. It suggests that users who click through from an AI-generated summary are further along in their decision process — they've already gotten the overview, they're clicking because they want depth or want to act.

The pattern I keep seeing in analytics across content programs is that AI-referred traffic has shorter time-to-conversion and lower bounce rates on product or service pages. The AI Overview is doing pre-qualification work. Users arrive already oriented to the topic and looking for a specific next step.
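If you want to test that pattern on your own analytics, the comparison is a per-channel aggregation. This sketch assumes each session record already carries a channel label. That labeling is the hard part, because clicks from AI Overviews generally arrive with a google.com referrer and are hard to separate from ordinary organic traffic. The field names and sample sessions are hypothetical.

```python
from collections import defaultdict
from statistics import median


def channel_metrics(sessions):
    """Conversion rate, bounce rate and median hours-to-conversion per channel.

    Each session is a dict with 'channel' (str), 'converted' (bool),
    'bounced' (bool) and 'hours_to_convert' (float or None).
    """
    grouped = defaultdict(list)
    for s in sessions:
        grouped[s["channel"]].append(s)
    report = {}
    for channel, rows in grouped.items():
        times = [r["hours_to_convert"] for r in rows
                 if r["converted"] and r["hours_to_convert"] is not None]
        report[channel] = {
            "sessions": len(rows),
            "conversion_rate": sum(r["converted"] for r in rows) / len(rows),
            "bounce_rate": sum(r["bounced"] for r in rows) / len(rows),
            "median_hours_to_convert": median(times) if times else None,
        }
    return report


# Toy sessions, purely to show the output shape.
sessions = [
    {"channel": "ai_assistant", "converted": True, "bounced": False, "hours_to_convert": 2.0},
    {"channel": "ai_assistant", "converted": False, "bounced": True, "hours_to_convert": None},
    {"channel": "organic", "converted": True, "bounced": False, "hours_to_convert": 10.0},
    {"channel": "organic", "converted": False, "bounced": True, "hours_to_convert": None},
    {"channel": "organic", "converted": False, "bounced": True, "hours_to_convert": None},
    {"channel": "organic", "converted": False, "bounced": False, "hours_to_convert": None},
]
for channel, stats in channel_metrics(sessions).items():
    print(channel, stats)
```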

This reframes the optimization goal. You're not just trying to get cited for visibility — you're trying to get cited by the right queries so the traffic you do receive is high-intent. That means being selective about which queries you target for AEO optimization, not just which ones have volume. A citation in an AI Overview for a bottom-of-funnel informational query is worth more than one for a broad awareness query, even if the broader query has more searches.
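One way to make that selectivity concrete is to rank candidate queries by the estimated value of a citation rather than by volume alone. In this sketch the formula is a simple expected-value product, and every input (click-through rate, conversion rate, value per conversion) is an estimate you supply. The numbers in the example are made up to show the mechanics.

```python
def citation_value(q):
    """Rough monthly value of an AI Overview citation for one query.

    All inputs are your own estimates: monthly searches, click-through rate
    from the citation, conversion rate of that traffic, value per conversion.
    """
    return q["searches"] * q["ctr"] * q["cvr"] * q["value"]


def prioritize(queries):
    """Highest estimated citation value first."""
    return sorted(queries, key=citation_value, reverse=True)


# Hypothetical numbers purely to show the mechanics.
candidates = [
    {"query": "broad awareness query", "searches": 5000, "ctr": 0.02, "cvr": 0.005, "value": 200},
    {"query": "bottom-of-funnel query", "searches": 300, "ctr": 0.05, "cvr": 0.08, "value": 200},
]
for q in prioritize(candidates):
    print(q["query"], round(citation_value(q)))
```

With these made-up inputs, the query with about one-seventeenth of the volume still ranks first because its traffic is far more likely to convert.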

When I think about SEO for Google AI Overviews and Claude as a combined strategy, the ROI case is clearest for brands that have strong topical authority in a specific domain. Generalist content doesn't get cited. Specific, expert, well-structured content does.

Topical Authority Is Non-Negotiable

I used to think content drift was a minor SEO hygiene issue. I stopped thinking that after watching a client's traffic stall for six months despite consistent publishing — their cluster had quietly expanded into loosely related topics, none of it reinforcing the core subject. Google had no clear signal about what the site was actually the authority on. We cut about 30% of the cluster, tightened the remaining pieces around a coherent topic spine, and rankings started moving within about 10 weeks.

For AI Overview optimization, topical authority is the prerequisite. Google's AI systems don't cite random pages that happen to answer a question — they cite pages from domains that have established authority on the subject. If your content cluster is scattered, you won't get cited even if individual pages are well-structured.

The practical implication: before you spend time optimizing individual articles for AI Overviews, audit your cluster for coherence. Are all the pieces reinforcing a single core topic? Are they internally linked in a way that signals hierarchy? Do they collectively demonstrate more depth on this subject than any single competitor page?
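For the first question, a quick and admittedly crude check is to score each article's vocabulary against a short description of the core topic, then flag low scorers for review. This sketch uses bag-of-words cosine similarity. The stopword list and the 0.2 threshold are arbitrary assumptions, and embeddings would give a better similarity measure.

```python
import math
import re
from collections import Counter

# Arbitrary stopword list for illustration; swap in a fuller one for real audits.
STOPWORDS = {"the", "a", "an", "and", "or", "of", "to", "in", "for", "on",
             "is", "are", "with", "your", "how", "what", "why"}


def vector(text: str) -> Counter:
    """Word counts for lowercase alphanumeric tokens, minus stopwords."""
    return Counter(w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS)


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def drift_report(core_topic: str, articles: dict, threshold: float = 0.2):
    """Similarity of each article to the core topic, plus titles below threshold."""
    core = vector(core_topic)
    scores = {title: cosine(core, vector(body)) for title, body in articles.items()}
    drifting = sorted(title for title, score in scores.items() if score < threshold)
    return scores, drifting


scores, drifting = drift_report(
    "internal linking topic clusters crawl equity",
    {
        "Linking guide": "Internal linking passes crawl equity across topic clusters.",
        "Off-topic post": "Our favorite banana bread recipe with walnuts.",
    },
)
print(drifting)
```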

If the answer to any of those is no, the optimization work you do on individual articles will have a ceiling. Fix the architecture first.

The goal when you optimize for Google AI Overviews isn't to game a single query — it's to become the source Google's AI trusts enough to cite repeatedly across a topic area. That's a content strategy problem, not a formatting problem. And it's the one that, once solved, compounds over time in a way that individual article tweaks never will.

FAQ

What is Google AI Overviews optimization?

Google AI Overviews optimization is the practice of structuring content so Google's generative AI system selects it as a cited source in the synthesized summaries that appear above organic search results. Unlike featured snippet optimization, which targets a single passage from a single page, AI Overviews pull from multiple sources. The goal is therefore to be a credible, specific, and clearly structured source that the AI can quote accurately.

How does Claude Opus 4.7 help with AI Overview optimization?

Claude Opus 4.7's extended context window allows you to feed entire content clusters (multiple full articles at once) for gap analysis against competitor AI Overview citations. The most effective workflow uses Claude in diagnostic mode: comparing your content's structure to passages currently being cited in AI Overviews and identifying specific structural differences like answer proximity, claim specificity, and entity clarity.

Do AI Overviews reduce organic traffic?

Pew Research found that Google users are less likely to click on links when an AI summary appears. However, HubSpot's 2026 State of Marketing report found that 58% of marketers report AI-referred visitors convert at higher rates than traditional organic traffic. That suggests clicks from AI-cited sources are higher-intent, partially offsetting the volume reduction.

What content types get cited most in AI Overviews?

According to Semrush's study of 200,000 keywords, 80% of desktop AI Overviews target informational keywords, and 82% appear for queries with under 1,000 monthly searches. Long-tail, question-based content with direct-answer structure and specific, attributed claims is the highest-leverage content type for AI Overview citation.

Is structured data required for AI Overview inclusion?

Structured data isn't a hard requirement for AI Overview inclusion, but it gives Google's systems clearer signals about your content's purpose and structure. FAQ schema and Article schema are the most relevant for content targeting AI Overviews. Errors in your structured data implementation, which are visible in Google Search Console, can suppress pages that would otherwise be strong candidates for citation.

How is AEO different from traditional SEO?

AEO (Answer Engine Optimization) focuses on making content directly citable by AI systems. It prioritizes answer-first structure, specific attributable claims, and entity clarity over keyword density and backlink volume. Traditional SEO optimizes for ranking position in a list of blue links. AEO optimizes for being the source an AI selects when synthesizing an answer, which is a fundamentally different signal.

How long does it take to see results from AI Overview optimization?

There's no published benchmark with a specific timeline, and anyone giving you a precise number is guessing. The pattern I observe is that structural improvements, such as rewriting sections to open with direct answers and adding specific attributed claims, can influence AI Overview citations within a few weeks of re-crawl. Topical authority improvements require cluster-level work and take longer: typically two to three months before the domain-level signals shift meaningfully.

About the Author

Judy Zhou, Head of Content Strategy

Judy Zhou leads content strategy at Meev, where she oversees AI-driven content research and publishing for hundreds of brands. With a background in SEO and editorial operations, she focuses on building content systems that rank on Google, get cited by AI search engines, and drive measurable business results.

Meev's AI-driven content system is built to produce the kind of specific, expert, well-structured content that Google's AI actually cites — at scale, without the manual overhead.