Most SEO tool stacks are built by accident: one subscription added after a conference, another after a competitor analysis, a third because someone on the team swore by it at their last job. The result is a bloated, overlapping mess that costs $800–$2,000/month and produces the same insights you could get from two well-chosen tools. As a content strategist who's audited stacks across more than 30 sites over the past two years, I can tell you: the problem isn't a lack of search engine optimization tools. It's a lack of stack discipline.

"The average content team I audit is paying for 6–8 SEO tools and getting unique, non-duplicated insight from maybe 3 of them."

TLDR:

- Most SEO stacks have 4 overlapping tool categories (rank tracking, auditing, keyword research, and content optimization) where teams routinely pay for the same capability twice.

- A lean 4-tool core (crawler + keyword tracker + content optimizer + analytics layer) covers 90% of what most content-first operations actually need.

- AI-native tools are now replacing legacy software for specific workflows, particularly keyword clustering, AEO content structuring, and AI model performance tracking.

- You can audit your entire stack in 30 minutes using a cost-per-insight framework: map each tool to one unique deliverable, and cut anything that can't pass the test.

Key Takeaways

- Most SEO stacks duplicate capabilities across rank tracking, auditing, keyword research, and content optimization — teams routinely pay for the same insight twice across 6–8 tools.

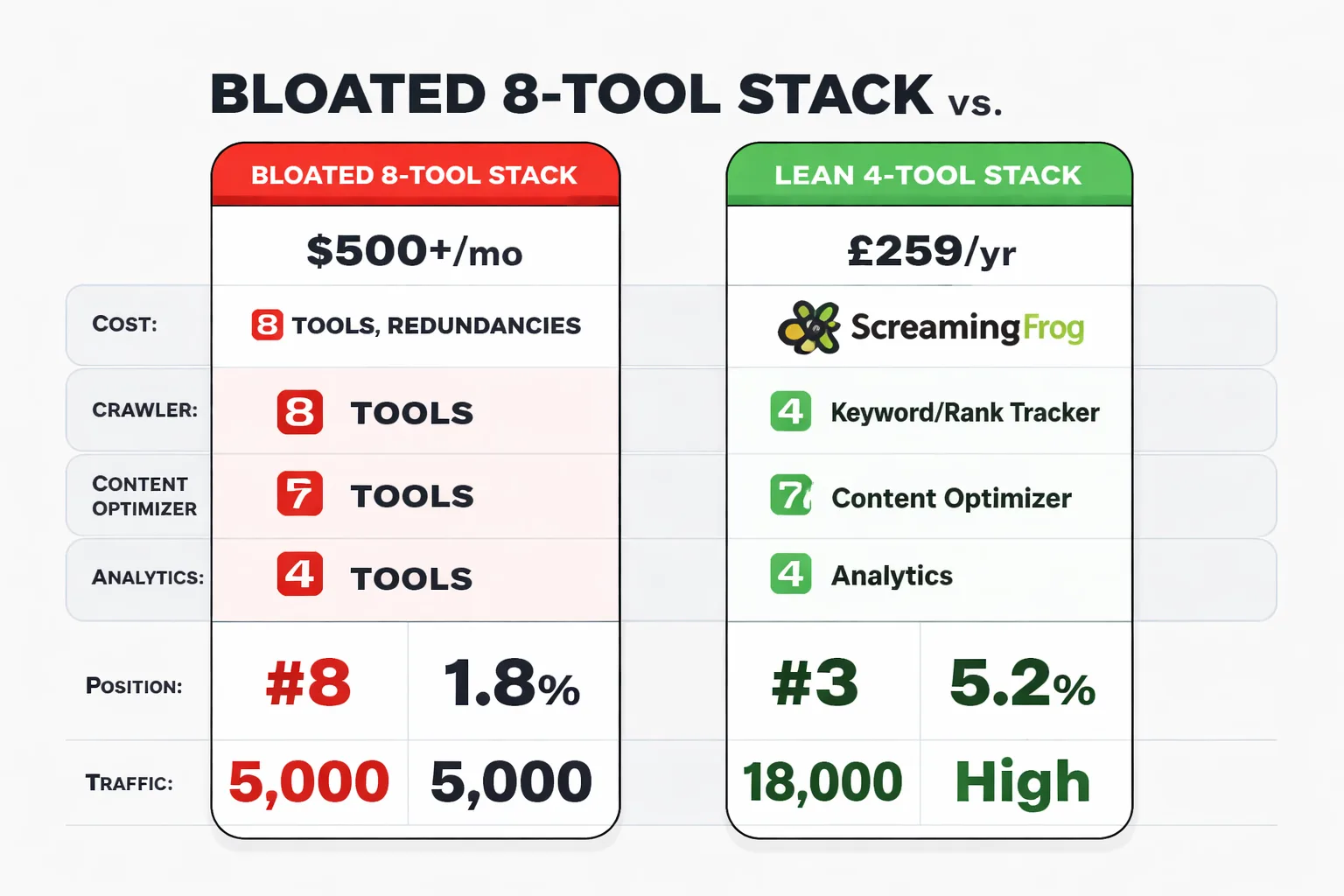

- A lean 4-tool core (Screaming Frog + Semrush or Ahrefs + Frase + GSC/GA4) covers 90% of content-first SEO needs for $283–$323/month.

- AI-native tools are replacing legacy software for specific workflows — keyword intent classification, AEO content structuring, and AI model citation tracking — not wholesale, but surgically.

- The 30-minute stack audit framework (map each tool to one unique deliverable, calculate cost-per-insight, cut anything that can't pass the test) consistently eliminates $500–$800/month in redundant subscriptions with zero operational impact.

Why Most SEO Tool Stacks Are Bloated and Redundant

Here's the overlap pattern I see constantly. A team is running Semrush for keyword research and rank tracking. They're also running Ahrefs because someone trusts its backlink index more. They added Screaming Frog for technical crawls, but they're also running Semrush's site audit. They brought in Clearscope or Surfer for content optimization, but Semrush's Writing Assistant is already sitting unused in the same subscription they're paying for. That's four tools doing the work of two — and the billing reflects all four.

The four categories where overlap kills ROI are predictable: rank tracking, site auditing, keyword research, and content optimization. Every major platform (Semrush, Ahrefs, Moz) has built features in all four. Most teams pick one platform for its strongest feature, then add a second platform for a different strength, and end up with full redundancy across the other three. I've seen teams with $1,400/month in SEO subscriptions where a single Semrush Pro plan at $139/month would have covered 80% of their actual workflow. The other $1,261 was paying for dashboards nobody logged into.

The problem of calculating SEO ROI compounds this. According to the CMO Survey, 65% of marketers can't quantitatively demonstrate the impact of their marketing, which means most teams can't even tell you whether their $600/month Ahrefs subscription is generating more pipeline than a $99/month alternative would. If you can't measure the tool's contribution to revenue, you can't justify the cost. And most teams aren't measuring it.

The fix isn't finding better tools. It's forcing every tool to justify its seat at the table with a unique deliverable it owns exclusively.

What Is the Minimum Viable Search Engine Optimization Tool Stack for a Content-First SEO Operation

A content-first operation — one where the primary growth lever is organic search driven by editorial output — needs exactly four tool categories. Not eight. Four. Here's how I'd build it from scratch today:

Layer 1, Crawler: Screaming Frog (free up to 500 URLs, £259/year for unlimited)

Nothing in this price range touches Screaming Frog for technical SEO. It surfaces crawl errors, redirect chains, duplicate content, missing meta tags, and structured data issues faster than any cloud-based alternative. The trade-off: it's desktop software, not a shared dashboard. If your team needs collaborative access to crawl data, you'll want to export reports into a shared drive. For most content-first operations, that's a non-issue, because crawls happen monthly, not daily.

Layer 2, Keyword Research + Rank Tracking: Semrush Pro ($139/month) OR Ahrefs Lite ($99/month)

I'm not going to pretend there's a clear winner here. The honest answer is that Semrush wins on keyword database breadth and Ahrefs wins on backlink index accuracy. For content-first teams that care more about finding topical gaps than obsessing over link metrics, Semrush's keyword clustering and topic research tools are more immediately useful. For teams doing active link building, Ahrefs' link data is worth the premium. Pick one. Do not run both. If you want a deeper breakdown of how domain authority scores differ between the two, this comparison of Moz vs Ahrefs domain authority is worth reading before you commit.

Layer 3, Content Optimizer: Frase ($45/month) or NeuronWriter

This is the layer most teams skip or overbuy. Frase earns its seat by combining SERP research, content briefing, and optimization scoring in one workflow: you can go from keyword to publishable brief in under 20 minutes. The trade-off is that its AI writing output is mediocre; use it for structure and NLP scoring, not draft generation. If your team is already using a dedicated AI writing tool, Frase's brief-building alone justifies the cost.

Layer 4, Analytics: Google Search Console (free) + GA4 (free)

I'll be blunt: most teams underuse what they already have here. Google Search Console's Query report alone, filtered by CTR under 2% and sorted by impressions descending, gives you a prioritized list of optimization targets that most $300/month rank trackers can't match for actionability. GA4's content grouping, when set up correctly, lets you track content ROI at the cluster level, not just the page level. The tool isn't the problem. The setup is.
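If you'd rather run that Search Console filter on an exported file than in the UI, here is a minimal Python sketch. The column names (`query`, `impressions`, `ctr`) and the sample rows are assumptions for illustration; check them against the headers in your own export before relying on it.

```python
import csv
import io

# Hypothetical sample of a Search Console query export.
# Real exports may use different column names or CTR formatting.
SAMPLE_EXPORT = """query,clicks,impressions,ctr,position
seo tool stack,12,1800,0.67%,8.2
screaming frog pricing,40,900,4.44%,3.1
keyword clustering,5,2500,0.20%,11.4
content brief template,30,1200,2.50%,5.0
"""


def low_ctr_targets(csv_text, max_ctr_pct=2.0):
    """Return queries with CTR below max_ctr_pct, highest impressions first."""
    rows = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        ctr_pct = float(row["ctr"].rstrip("%"))
        if ctr_pct < max_ctr_pct:
            rows.append({
                "query": row["query"],
                "impressions": int(row["impressions"]),
                "ctr_pct": ctr_pct,
            })
    # Sort by impressions descending so the biggest missed opportunities come first.
    return sorted(rows, key=lambda r: r["impressions"], reverse=True)


targets = low_ctr_targets(SAMPLE_EXPORT)
for t in targets:
    print(f'{t["query"]}: {t["impressions"]} impressions at {t["ctr_pct"]}% CTR')
```

The 2% threshold is the one used above; it is exposed as a parameter because a sensible cutoff will vary by site and by average position.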

Total cost for this stack: $283–$323/month. That's it. Everything else is overhead until you've maxed out what these four layers can tell you.

Is your current SEO tool stack actually earning its cost — or are you paying for overlap?

Where AI-Native Tools Are Replacing Legacy Software

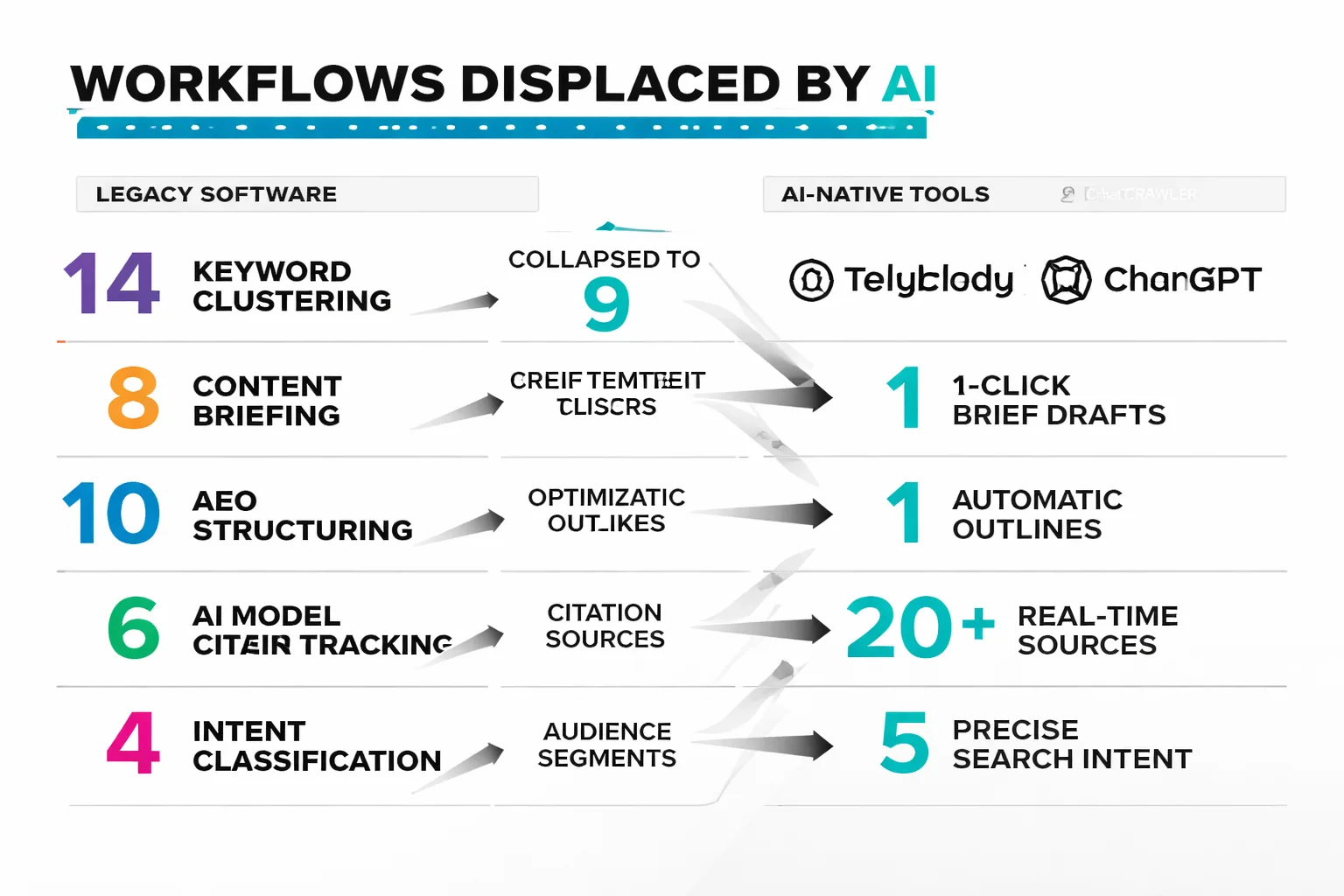

The shift I've been watching closely over the past 12 months isn't that AI tools are replacing Semrush or Ahrefs wholesale. It's more surgical than that — AI-native tools are taking over specific workflows where legacy platforms were always mediocre.

Keyword clustering is the clearest example. I tested this directly with a B2B SaaS client earlier this year — we ran the same 200-keyword seed list through Semrush's keyword grouping tool and then through a manual intent-classification pass using an AI workflow. Semrush produced 14 clusters. The AI intent pass collapsed those into 9 and flagged 3 of the Semrush clusters as cannibalization risks — keywords that looked topically related but actually served different user intents. If we'd built content around all 14 clusters, we would have created internal competition before we ever competed externally. That's the core limitation of volume-based clustering: it groups by semantic similarity, not by what the searcher is actually trying to accomplish.
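To make the mixed-intent problem concrete, here is a rough Python sketch that flags topical clusters whose keywords point at more than one intent. It is not the AI workflow from the client test: the intent labels come from a crude keyword-modifier heuristic that I'm using as a stand-in, and the modifier lists and cluster data are hypothetical.

```python
# Stand-in heuristic: classify intent from common modifier words.
# These lists are illustrative assumptions, not a validated taxonomy.
INTENT_MODIFIERS = {
    "transactional": ("buy", "pricing", "price", "discount", "trial"),
    "commercial": ("best", "vs", "review", "alternative", "alternatives", "comparison"),
    "informational": ("how", "what", "why", "guide", "tutorial"),
}


def classify_intent(keyword):
    """Return the first intent whose modifier list matches a word in the keyword."""
    words = keyword.lower().split()
    for intent, modifiers in INTENT_MODIFIERS.items():
        if any(word in modifiers for word in words):
            return intent
    return "unclassified"


def mixed_intent_clusters(clusters):
    """Flag clusters whose keywords resolve to more than one known intent."""
    flagged = {}
    for name, keywords in clusters.items():
        intents = {classify_intent(k) for k in keywords}
        intents.discard("unclassified")
        if len(intents) > 1:
            flagged[name] = sorted(intents)
    return flagged


# Hypothetical topical clusters, as a volume-based grouping tool might produce them.
CLUSTERS = {
    "crm software": ["best crm software", "crm software pricing", "what is crm software"],
    "email automation": ["how to automate emails", "email automation guide"],
}

flagged = mixed_intent_clusters(CLUSTERS)
print(flagged)
```

A flagged cluster is a prompt for a human review, not a verdict. Modifier matching misses plenty of intent signals that an LLM pass or a SERP comparison would catch.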

The other workflow where AI-native tools are winning is Answer Engine Optimization (AEO) content structuring. As AI chatbots become a primary discovery channel, with ChatGPT, Perplexity, and Google's AI Overviews now synthesizing answers rather than just ranking pages, the structural requirements for content have changed. Legacy content optimization tools score against top-10 SERP competitors. They don't tell you whether your content is structured to be extracted and cited by an LLM. How answer engines like Perplexity retrieve sources, along with emerging AI agent benchmarks, points toward a different set of signals: direct answer paragraphs, structured data markup, and topical completeness over keyword density.

For teams building toward topical authority with AI content, this matters enormously. A content cluster that's optimized for traditional rank tracking but ignores AEO structuring is increasingly leaving citation traffic on the table — and that traffic doesn't show up in your rank tracker at all.

The honest caveat: AI-native tools for SEO are still maturing. I wouldn't replace Semrush's keyword database with a ChatGPT workflow for primary keyword research — the volume data isn't there. But for intent classification, content structuring, and tracking how your content performs inside AI models rather than just on traditional SERPs, the AI-native layer is no longer optional. It's the gap in every legacy stack I audit.

One pattern worth flagging: the rise of Google-Extended bot blocking as a signal. Teams are starting to selectively block AI crawlers from scraping their content while simultaneously optimizing for AI citation. That tension doesn't have a clean answer yet, but your stack needs a position on it. One distinction to get right: per Google's crawler documentation, Google-Extended controls whether your content is used for Gemini and Vertex AI, and it does not affect inclusion in Google Search. If your content isn't appearing in AI Overviews, look at Googlebot access and snippet controls first, not your Google-Extended rule.
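Before you take a position, it helps to know what your robots.txt currently says to each crawler. Here is a minimal sketch using Python's standard-library `urllib.robotparser`. The robots.txt content and the URL are hypothetical; `Googlebot`, `Google-Extended`, and `GPTBot` are the real user-agent tokens published by Google and OpenAI.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: blocks Google-Extended, allows everything else.
ROBOTS_TXT = """\
User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""


def crawler_access(robots_txt, user_agents, url):
    """Report, per user-agent token, whether robots.txt permits fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {ua: parser.can_fetch(ua, url) for ua in user_agents}


access = crawler_access(
    ROBOTS_TXT,
    ["Googlebot", "Google-Extended", "GPTBot"],
    "https://example.com/blog/post",
)
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

This only tells you what your rules say. Whether a given crawler honors them, and what each token actually controls, is down to the vendor's own documentation.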

How to Audit Your Current SEO Tool Stack in 30 Minutes

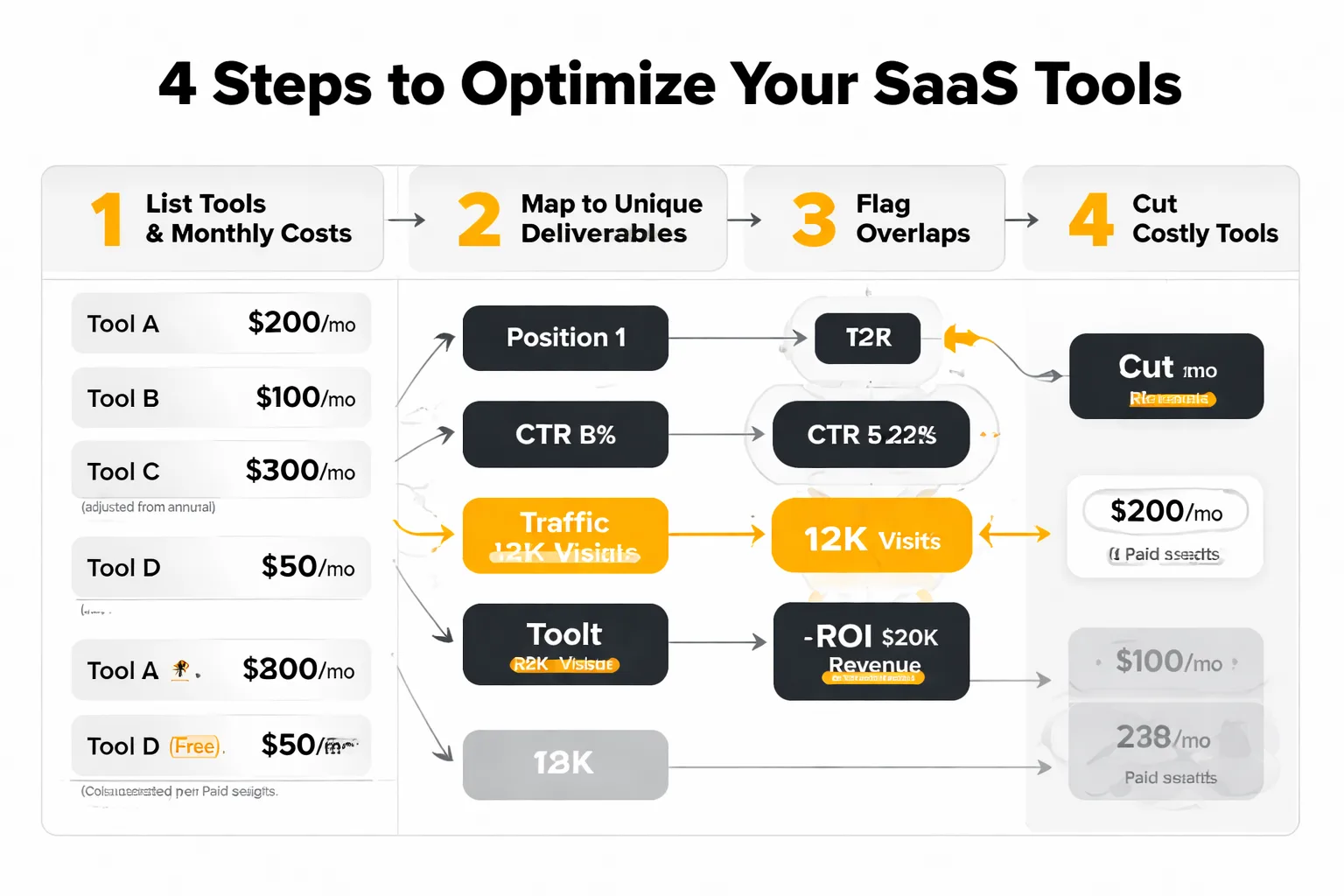

This is the framework I run in team meetings when a client wants to cut tool costs without losing capability. It takes 30 minutes if you come prepared with your current tool list and monthly costs.

Step 1: List every tool and its monthly cost. Include annual subscriptions converted to monthly. Include tools that are technically "free" but require paid team seats. Be exhaustive — include tools that haven't been logged into in 90 days.

Step 2: Assign each tool ONE unique deliverable it owns. Not a feature. A deliverable — a specific output that someone on your team uses to make a decision or produce work. "Rank tracking" is not specific enough. "Weekly rank movement report for our 50 target keywords, reviewed in Monday standup" is a deliverable. If two tools produce the same deliverable, you're paying for the same insight twice.

Step 3: Flag every tool that can't claim a unique deliverable. These are your cut candidates. Don't cancel them yet — first ask whether the deliverable they were supposed to own is actually being produced by another tool, or whether it's just not being produced at all. Sometimes a "redundant" tool is covering a workflow gap that nobody noticed was important until you tried to remove it.

Step 4: Calculate cost-per-insight for your remaining tools. Divide the monthly cost by the number of times per month a specific decision or piece of work is produced from that tool's output. A $200/month tool that drives 4 decisions per month costs $50 per insight. A $50/month tool that drives 1 decision per month costs $50 per insight. Same efficiency. But a $200/month tool that drives 1 decision per month — or zero — is the problem.
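If you'd rather run Steps 2 through 4 in a script than on a whiteboard, here is a minimal Python sketch. The tool names, deliverables, and the `Tool` structure are hypothetical; the dollar figures mirror the examples in Step 4.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass
class Tool:
    name: str
    monthly_cost: float       # annual plans already converted to monthly (Step 1)
    deliverable: str          # the one output it owns; "" if it owns none (Step 2)
    decisions_per_month: int  # decisions or work items driven by that output


def cut_candidates(tools):
    """Step 3: tools with no deliverable, or whose deliverable another tool also claims."""
    owners = defaultdict(list)
    for tool in tools:
        if tool.deliverable:
            owners[tool.deliverable].append(tool.name)
    flagged = []
    for tool in tools:
        if not tool.deliverable or len(owners[tool.deliverable]) > 1:
            flagged.append(tool.name)
    return flagged


def cost_per_insight(tool) -> Optional[float]:
    """Step 4: monthly cost divided by decisions driven; None if it drives none."""
    if tool.decisions_per_month == 0:
        return None
    return tool.monthly_cost / tool.decisions_per_month


# Hypothetical stack for illustration.
STACK = [
    Tool("Tool A", 200.0, "weekly rank movement report", 4),
    Tool("Tool B", 50.0, "monthly technical crawl report", 1),
    Tool("Tool C", 200.0, "weekly rank movement report", 1),
    Tool("Tool D", 120.0, "", 0),
]

candidates = cut_candidates(STACK)
costs = {tool.name: cost_per_insight(tool) for tool in STACK}
print("Cut candidates:", candidates)
print("Cost per insight:", costs)
```

When two tools share a deliverable, both are flagged; the cost-per-insight figure is what tells you which of the pair to keep. A `None` means the tool drove no decisions at all that month.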

The teams I've run this with consistently find the same thing: 2–3 tools survive the audit cleanly, 1–2 tools need workflow fixes to justify their cost, and 1–3 tools get cut immediately with no operational impact. In one audit of an eight-person content team in Q1 of this year, we eliminated $740/month in subscriptions in 45 minutes — none of which required replacing with anything else, because the deliverables those tools were supposed to own were already being produced by tools that stayed.

The SEO keyword cannibalization check belongs in this audit too. Pull your top 20 target keywords in Google Search Console and check which pages are ranking for each. If two pages from your own domain are competing for the same query, you have a tool problem and a content problem — and adding another SEO tool won't fix either one. The audit reveals the overlap; the fix is consolidation.
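The cannibalization check can be sketched in a few lines of Python, assuming you have pulled (query, page) pairs from Search Console for your target keywords. The rows below are made up for illustration.

```python
from collections import defaultdict

# Hypothetical (query, ranking page) pairs from Search Console.
ROWS = [
    ("seo tool stack", "/blog/seo-tool-stack"),
    ("seo tool stack", "/blog/best-seo-tools"),
    ("keyword clustering", "/blog/keyword-clustering"),
    ("content brief template", "/templates/content-brief"),
    ("content brief template", "/blog/content-brief-guide"),
    ("content brief template", "/templates/content-brief"),
]


def cannibalized_queries(rows):
    """Return queries for which more than one distinct page on the site ranks."""
    pages_by_query = defaultdict(set)
    for query, page in rows:
        pages_by_query[query].add(page)
    return {
        query: sorted(pages)
        for query, pages in pages_by_query.items()
        if len(pages) > 1
    }


conflicts = cannibalized_queries(ROWS)
for query, pages in conflicts.items():
    print(f"{query}: {', '.join(pages)}")
```

Each flagged query is a consolidation candidate: decide which page should own it, then merge or redirect the other.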

One more thing worth saying plainly: the best search engine optimization tool stack is the one your team actually uses consistently. I've seen $2,000/month stacks produce worse results than a $200/month stack because the expensive one required workflow changes nobody made. Technical SEO improvements, content optimization, and rank tracking only compound when the data flows into actual decisions. A lean stack that gets used beats a bloated stack that gets ignored — and the 30-minute audit is how you find out which one you actually have.

FAQ

What is the minimum SEO tool stack for a small team?

A lean four-tool core covers most needs: Screaming Frog for technical crawling, Semrush or Ahrefs for keyword research and rank tracking, Frase for content optimization, and Google Search Console plus GA4 for analytics. Total cost runs $283–$323/month, far below what most small teams currently spend.

Is Semrush or Ahrefs better for content-first SEO?

Semrush is stronger for content-first teams because its keyword clustering and topic research tools are more developed. Ahrefs wins on backlink data accuracy, making it the better choice for teams with active link building programs. Running both simultaneously is never justified.

How do AI tools fit into a traditional SEO stack?

AI-native tools are best used for specific workflows where legacy platforms underperform: intent classification, AEO content structuring, and tracking content performance inside AI models like ChatGPT and Perplexity. They don't replace keyword databases, but they fill gaps that traditional rank trackers don't address.

What is AEO and why does it matter for SEO tool selection?

Answer Engine Optimization (AEO) is the practice of structuring content to be extracted and cited by AI-powered answer engines, including Google's AI Overviews, ChatGPT, and Perplexity. It matters for tool selection because most legacy SEO tools don't score content against AEO criteria, creating a blind spot in traditional optimization workflows.

How do I calculate SEO ROI from my tool stack?

Divide each tool's monthly cost by the number of revenue-linked decisions or content outputs it produces per month. A tool that can't be traced to a specific deliverable that connects to traffic, leads, or revenue is a cost center, not an investment. According to the CMO Survey, 65% of marketers can't demonstrate marketing impact quantitatively, meaning most teams have never run this calculation at all.

Should I block AI crawlers with Google-Extended bot blocking?

Only if you have a specific reason to prevent your content from being used in AI training or synthesis. Per Google's documentation, Google-Extended governs use of your content for Gemini and Vertex AI and does not affect inclusion in Google Search, so it is not the lever for AI Overviews; those follow Googlebot access and snippet controls. Decide on a position for each AI crawler, whether to optimize for citation or to block, and configure your robots.txt accordingly.

How often should I audit my SEO tool stack?

At minimum, once per year during budget planning, but the 30-minute framework in this article is light enough to run quarterly. Tool utility changes as your content strategy evolves; a tool that earned its seat during an aggressive link building phase may have zero unique deliverable once you shift to a content-first model.

Build a leaner, smarter content operation. See how AI-powered content tools can replace half your stack without losing a single insight.