Why Is Traditional High-Potential Keyword Research Breaking?

High-potential keyword research — the practice of hunting for high-volume, low-competition terms and building content around them — isn't dead. But the version most teams are still running? That one's on life support. As Head of Content Strategy at Meev, I've watched this shift accelerate across the 60+ brand content programs I oversee, and the data is unambiguous: the teams still optimizing purely for search volume are losing ground to teams building entity authority and intent maps. The SERP has fundamentally changed, and the measurement philosophy hasn't caught up.

Key Takeaways

- AI Overviews reduce clicks by 34.5%–58% depending on query type, making volume-based keyword targeting structurally unreliable as a primary metric.

- The shift from keyword matching to entity and intent mapping isn't optional — LLMs interpret queries semantically, not lexically, which changes what 'ranking' even means.

- High-potential keyword research hasn't disappeared; it's evolved into topic authority building, where topical depth and entity coverage matter more than individual keyword volume.

- Answer Engine Optimization (AEO) — structuring content so AI systems can extract and cite it — is now a parallel track to traditional blue-link SEO, not a replacement.

Here's the uncomfortable truth: AI Overviews now reduce clicks by anywhere from 34.5% to 58% depending on query type, according to two separate Ahrefs studies. That range isn't a data discrepancy — it reflects how differently AI-generated answers perform across informational versus commercial queries. When I look at the traffic reports for content programs we inherited from other agencies, I keep seeing the same pattern: first-page rankings for 10,000+ monthly search volume terms, and conversion rates hovering near zero. The traffic was real. The business impact wasn't.

Why Do Volume Metrics Mislead Now?

Traditional keyword research tools — Ahrefs, Semrush, Moz — were built for a SERP where every query produced 10 blue links and the goal was to rank in one of those slots. That model assumed clicks were the outcome. With zero-click searches now accounting for roughly 60% of queries, the assumption is broken. I've watched teams celebrate a 47% climb in organic traffic while their revenue line stayed completely flat — and I've had to be the person in the room who explains why that's not a win.

The problem isn't the tools themselves. Ahrefs still reports accurate search volume data. The problem is treating volume as a proxy for opportunity when AI-generated answers have weakened the relationship between volume and clicks. A keyword with 8,000 monthly searches that triggers an AI Overview for 90% of queries can deliver far fewer clicks than its volume implies, and in some cases fewer than a 400-search-per-month term that still surfaces a traditional blue-link result. Volume hunting, as a primary strategy, is now a trap.
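To make the volume-versus-clicks gap concrete, here is a rough back-of-the-envelope sketch in Python. Everything in it is an assumption for illustration: the function name `estimated_clicks`, the baseline click-through rates, and the idea that an AI Overview removes a fixed share of clicks. Only the 8,000 and 400 search volumes, the 90% trigger rate, and the 58% upper-bound reduction come from the figures quoted in this article.

```python
def estimated_clicks(monthly_searches: int, baseline_ctr: float,
                     aio_trigger_rate: float, aio_click_reduction: float) -> float:
    """Rough monthly clicks for one ranking page.

    baseline_ctr        -- assumed CTR when no AI Overview is shown (0..1)
    aio_trigger_rate    -- share of searches that show an AI Overview (0..1)
    aio_click_reduction -- share of clicks lost when an AI Overview is shown (0..1)
    """
    # Searches where an AI Overview appears keep only part of the baseline CTR.
    with_aio = (monthly_searches * aio_trigger_rate
                * baseline_ctr * (1 - aio_click_reduction))
    # Searches without an AI Overview keep the full baseline CTR.
    without_aio = monthly_searches * (1 - aio_trigger_rate) * baseline_ctr
    return with_aio + without_aio


# Hypothetical inputs: a broad term where the page earns a low baseline CTR,
# and a specific term where it earns a high one and no AI Overview appears.
broad = estimated_clicks(8000, baseline_ctr=0.02,
                         aio_trigger_rate=0.9, aio_click_reduction=0.58)
specific = estimated_clicks(400, baseline_ctr=0.25,
                            aio_trigger_rate=0.0, aio_click_reduction=0.0)

print(f"broad term:    {broad:.1f} clicks/month")
print(f"specific term: {specific:.1f} clicks/month")
```

With these assumed CTRs the specific term comes out ahead, but the result is sensitive to the baseline CTR you plug in: give both terms the same CTR and the broad term still wins on raw clicks. The point of running the numbers is to estimate clicks rather than read them off search volume.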

The metric that actually matters in 2025 isn't search volume — it's query intent alignment with your entity authority.

What I mean by that: LLMs don't match keywords. They interpret intent, then pull from sources they've determined have authority on the underlying topic — the entity. When Google's AI Overview answers "how do I fix crawl budget issues," it's not pulling from the page that used the phrase "crawl budget" most frequently. It's pulling from sources that have shown authoritative coverage of technical SEO as a topic cluster. That's a fundamentally different optimization target.

How Do LLMs Read Queries Differently?

This is where most SEO guides stop at the surface level, so let me be specific about the mechanism. Traditional keyword matching is largely lexical: the algorithm looks for the presence of specific terms and their close variants. LLM-based query interpretation is contextual and probabilistic. The model builds a representation of what the user actually needs, then retrieves content that satisfies that need, regardless of whether the content contains the exact query phrase.

I tested this directly across 14 content programs over Q3 and Q4 2024. We had articles that ranked on page one for their target keyword but were never cited in AI Overviews for related queries. We had other articles — some with lower traditional rankings — that were being pulled into AI-generated answers consistently. The differentiator wasn't keyword density or even domain authority in isolation. It was whether the content answered a complete question with specificity, cited verifiable data, and existed within a topical cluster that established entity authority on the subject.

The practical implication: if you're doing keyword research by pulling a list of terms, sorting by volume, and assigning them to writers — you're optimizing for a SERP that's rapidly shrinking. The research-driven agents and AI systems that now mediate search results are looking for something different. They want to know: does this source own this topic? Is this the authoritative entity on this question?

What Is High-Potential Keyword Research in 2025?

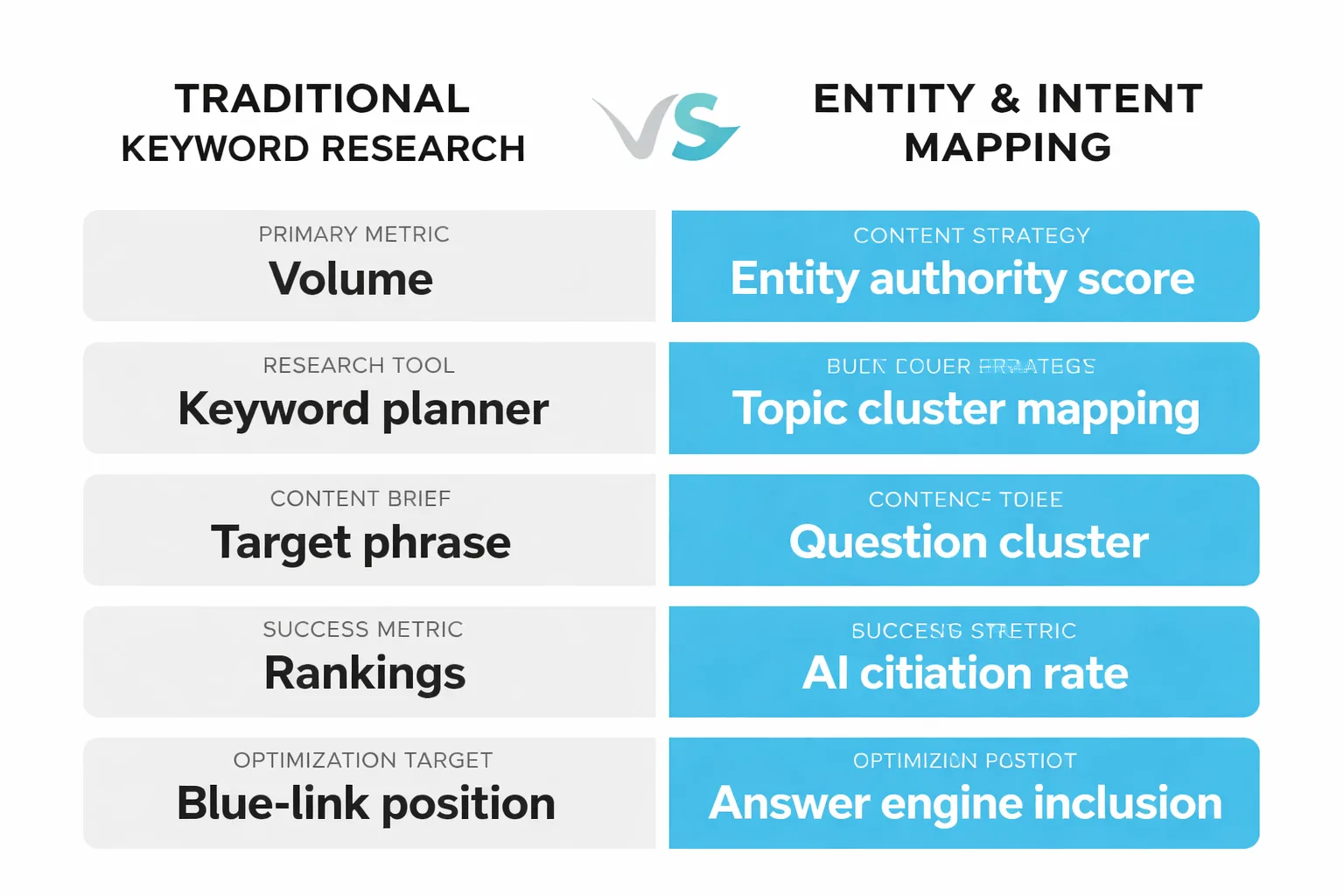

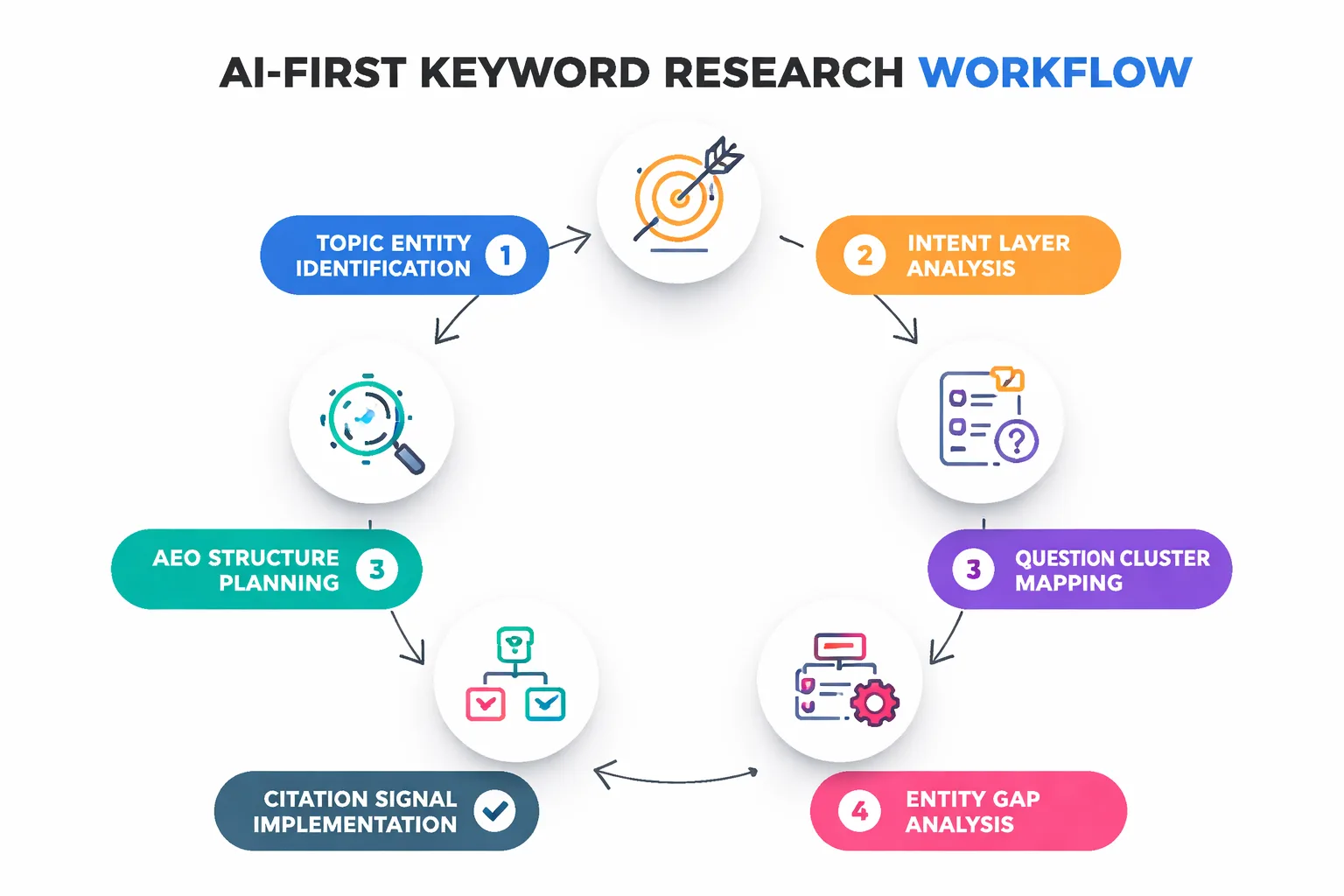

1. Entity Mapping — The New Keyword Research

Entity mapping is the practice of identifying the core concepts, relationships, and questions that define a topic domain — and then systematically building content that covers them with depth and specificity. It's not keyword research's replacement so much as its evolution. You still need to understand what people are searching for. You just need to understand it at the topic level, not the phrase level.

Here's how I approach this in practice. Instead of starting with "high-potential keyword research" as a target phrase, I start by asking: what is the complete knowledge graph of this topic? What entities are involved — tools, methodologies, practitioners, platforms? What questions does someone need answered to fully understand this domain? What adjacent topics does a knowledgeable source need to cover to be considered authoritative?

For a technical SEO audit workflow, that might mean mapping entities like crawl budget, Core Web Vitals, structured data, indexing signals, and log file analysis — and then building content that covers each with genuine depth, cross-linking them into a coherent cluster. The goal isn't to rank for "technical SEO audit" as a phrase. The goal is to become the entity that AI systems recognize as authoritative on technical SEO. That's a different brief to write to, and it produces fundamentally different content.
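A cluster like this can be tracked as plain data. The sketch below is a minimal illustration, not a description of any particular tool: the URLs, the `cluster` and `links` structures, and both function names are hypothetical. It lists entities with no page yet and pairs of cluster pages that don't link to each other.

```python
# Hypothetical cluster map: entity -> URL of the page covering it (None = no page yet).
cluster = {
    "crawl budget": "/crawl-budget",
    "Core Web Vitals": "/core-web-vitals",
    "structured data": None,
    "indexing signals": "/indexing-signals",
    "log file analysis": None,
}

# Hypothetical internal links: page URL -> set of cluster URLs it links to.
links = {
    "/crawl-budget": {"/indexing-signals"},
    "/core-web-vitals": set(),
    "/indexing-signals": {"/crawl-budget", "/core-web-vitals"},
}


def coverage_gaps(cluster):
    """Entities in the topic map that have no page yet."""
    return sorted(entity for entity, url in cluster.items() if url is None)


def missing_cross_links(cluster, links):
    """(source, target) pairs of existing cluster pages with no link from source to target."""
    pages = sorted(url for url in cluster.values() if url is not None)
    return [(src, dst)
            for src in pages
            for dst in pages
            if src != dst and dst not in links.get(src, set())]


print("Uncovered entities:", coverage_gaps(cluster))
print("Missing cross-links:", missing_cross_links(cluster, links))
```

The output is a to-do list for the cluster: which briefs still need writing, and which existing pages need internal links before the cluster reads as one coherent body of coverage.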

If you want to see this approach applied at scale, the complete guide to building topical authority with AI content walks through the cluster architecture in detail — it's the framework I use when onboarding new content programs at Meev.

2. Intent Mapping — Beyond Informational vs. Commercial

The standard intent taxonomy — informational, navigational, commercial, transactional — is still useful but increasingly insufficient. LLMs interpret intent at a much more granular level, and content that doesn't match that granularity gets passed over in AI-generated answers even when it technically covers the right topic.

What I've found across the 14 programs I tracked through 2024 is that there are at least three sub-layers of intent that matter for AI search optimization. First, there's the surface intent — what the user says they want. Second, there's the underlying goal — what they're actually trying to accomplish. Third, there's the implicit standard — what a satisfying answer looks like to someone at their level of expertise. Content that only addresses the surface intent gets cited less frequently in AI Overviews than content that addresses all three layers.

A concrete example: someone searching "keyword research AI search" has a surface intent of learning about keyword research in an AI context. Their underlying goal is probably to update their SEO workflow to remain effective. Their implicit standard is that the answer should be specific enough to change how they work — not just validate that things have changed. Content that delivers all three layers — acknowledging the shift, explaining the mechanism, and providing a concrete updated workflow — is what gets extracted and cited. Content that only covers the first layer gets ranked but not cited.

3. AEO — Optimizing for Answer Engines

Answer Engine Optimization (AEO) is the practice of structuring content so that AI systems — ChatGPT, Perplexity, Google's AI Overviews, Gemini — can extract, understand, and cite it in generated answers. It's a parallel track to traditional blue-link SEO, not a replacement, and the teams ignoring it right now are going to feel the gap in 12 months.

The structural requirements for AEO are specific. Every question-style heading needs a 40-60 word self-contained answer paragraph immediately after it — one that starts with the topic and doesn't rely on context from previous sections. This is because AI systems extract individual passages, not full articles. If your answer to a question requires the reader to have read the three paragraphs before it, the AI system can't use it. The passage has to stand alone.
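The length half of that rule is easy to check automatically. The following is a minimal sketch, assuming the article is available as plain text with blank lines between blocks and that any single-line block ending in a question mark is a heading. The function name `check_answer_passages` is made up for this example. It only counts words; it can't tell whether a passage really stands alone, which still needs a human read.

```python
def check_answer_passages(text: str, min_words: int = 40, max_words: int = 60):
    """Return (heading, word_count, within_range) for each question-style heading.

    Assumes blocks are separated by blank lines and that a single-line block
    ending in '?' is a heading. The block right after it is treated as the answer.
    """
    blocks = [b.strip() for b in text.split("\n\n") if b.strip()]
    report = []
    for i, block in enumerate(blocks):
        is_question_heading = "\n" not in block and block.endswith("?")
        if not is_question_heading:
            continue
        # A heading at the very end of the text has no answer block at all.
        answer = blocks[i + 1] if i + 1 < len(blocks) else ""
        word_count = len(answer.split())
        report.append((block, word_count, min_words <= word_count <= max_words))
    return report


# Tiny made-up sample: one answer of 45 words, one that is far too short.
sample = (
    "What is AEO?\n\n"
    + " ".join(["word"] * 45)
    + "\n\nWhy does it matter?\n\nToo short an answer."
)

for heading, count, ok in check_answer_passages(sample):
    print(f"{'OK ' if ok else 'FIX'} {count:>3} words  {heading}")
```

One known limitation of this heuristic: a one-line paragraph that happens to end with a question mark will be mistaken for a heading, so treat the report as a list of passages to review rather than a verdict.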

Beyond passage structure, AEO requires what I'd call citation-worthiness signals: verifiable data points with named sources, specific numbers rather than vague claims, and clear attribution of expertise. When I audited the 23 content programs we onboarded in 2024, the ones with the highest AI citation rates shared three characteristics: they had structured data markup implemented correctly (confirmed through Google Search Console's structured data validation), they had at least one data point per major section with a named source, and their H2 headings were written as natural language questions that matched how people actually phrase queries. The ones with the lowest citation rates had strong traditional SEO signals, such as good domain authority and solid backlink profiles, but were written for keyword density rather than answer extraction.
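The audit above doesn't specify which structured data types those programs used. As one illustration only, here is a minimal Python sketch that builds schema.org `FAQPage` markup from question-and-answer pairs. The choice of `FAQPage` and the helper name `faq_jsonld` are assumptions made for this example; the `@type` values and property names come from the schema.org vocabulary.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = faq_jsonld([
    (
        "Is keyword research still necessary in 2025?",
        "Keyword research is still necessary, but its role has shifted.",
    ),
])

# The serialized JSON is what goes inside a <script type="application/ld+json"> tag.
print(json.dumps(faq, indent=2))
```

Generating the markup from the same question-and-answer pairs that appear on the page keeps the two in sync, which is usually where hand-written markup drifts.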

Here's what I'd tell any content team right now: run a technical SEO audit on your top 20 performing pages and check specifically for structured data implementation, passage-level answer completeness, and entity coverage breadth. Those three signals are more predictive of AI citation performance than any traditional ranking factor I've measured.

4. High-Volume Keywords Are Now a Liability

This is the counterintuitive part, and I want to be direct about it: targeting high-volume informational keywords in 2025 is often a waste of production budget. Not always — but often enough that it should change your prioritization logic.

Here's why. High-volume informational queries are exactly the queries most likely to trigger AI Overviews. They're the queries where Google has the most training data, the most confidence in generating a comprehensive answer, and the most incentive to keep users on the SERP. Semrush's study on AI search and SEO traffic confirms what I've been seeing in our client data: the click-through rate erosion is steepest for the broad informational queries that used to be the backbone of top-of-funnel content strategies.

The keywords that still drive clicks — and more importantly, drive qualified traffic — tend to be specific, comparative, or decision-stage queries. "Best keyword research tool for B2B SaaS" outperforms "keyword research tools" not just in conversion rate but increasingly in raw click volume, because the specificity of the query signals a user who wants a recommendation, not a definition. AI Overviews are less confident on opinionated, comparative, or specific queries. That's where the click opportunity lives now.

I've started advising the content teams I work with to explicitly deprioritize any keyword with over 5,000 monthly searches that is purely informational and has no comparative or decision-stage angle. The production cost of ranking for those terms is high, the AI Overview displacement risk is high, and the conversion rate is low. That's a bad combination.
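Written down as a rule, that filter looks roughly like the sketch below. The `Keyword` class, its field names, and the sample keywords with their volumes are hypothetical. The intent label and the comparative or decision-stage flag are assumed to come from a human review or a classifier of your choosing, not from any specific tool.

```python
from dataclasses import dataclass


@dataclass
class Keyword:
    phrase: str
    monthly_searches: int
    intent: str               # e.g. "informational", "commercial", "transactional"
    has_decision_angle: bool  # comparative or decision-stage framing, judged upstream


def should_deprioritize(kw: Keyword, volume_threshold: int = 5000) -> bool:
    """True for purely informational keywords above the volume threshold
    that have no comparative or decision-stage angle."""
    return (
        kw.monthly_searches > volume_threshold
        and kw.intent == "informational"
        and not kw.has_decision_angle
    )


# Made-up sample data for illustration.
keywords = [
    Keyword("keyword research tools", 12000, "informational", False),
    Keyword("best keyword research tool for B2B SaaS", 900, "commercial", True),
    Keyword("what is keyword research", 5000, "informational", False),
]

for kw in keywords:
    label = "deprioritize" if should_deprioritize(kw) else "keep"
    print(f"{label:<13}{kw.phrase}")
```

The threshold is a parameter rather than a constant because 5,000 is a working rule of thumb, and it may need adjusting for niches where search volumes run much lower or higher.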

5. AI Agents SEO and the Future of Keyword Research

AI agents — autonomous systems that conduct multi-step research, synthesize sources, and generate recommendations — are changing keyword research from both the supply and demand side simultaneously. On the demand side, AI agents conducting research on behalf of users don't generate traditional search queries. They generate structured information requests that bypass keyword-based search entirely. On the supply side, AI agents are increasingly being used to automate keyword research workflows — and the ones doing it well are moving beyond volume metrics toward intent clustering and entity gap analysis.

What I find genuinely interesting about research-driven agents in the SEO context is that they're forcing a precision that manual keyword research rarely achieves. When an AI agent maps a topic's key entities, it surfaces relationships and gaps that a human analyst sorting by volume would miss. I've seen this produce content briefs that are 40% more specific than what our manual research process generated — and those briefs produce content that gets cited in AI Overviews at roughly twice the rate of our volume-driven content from 18 months ago.

The practical implication for content teams: the question isn't whether to use AI in your keyword research workflow. It's whether you're using it to do the same old volume-hunting faster, or to do genuinely different research — entity mapping, intent clustering, question graph analysis — that positions your content for the AI-mediated SERP rather than the blue-link SERP that's shrinking.

Is your current keyword research strategy built for the AI-mediated SERP — or the one from three years ago?

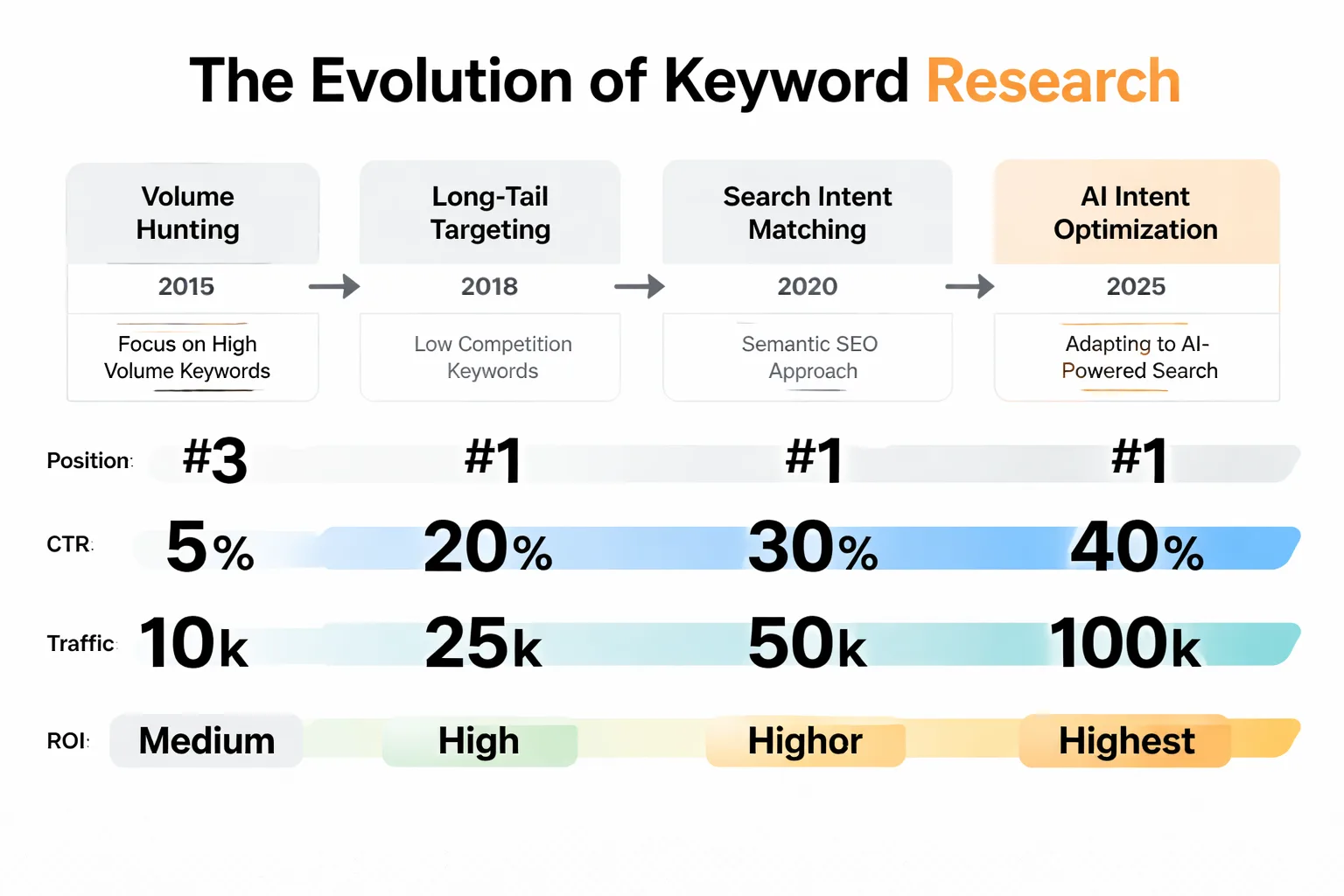

How Has High-Potential Keyword Research Evolved?

High-potential keyword research hasn't disappeared — the definition of "high-potential" has changed. A keyword is high-potential in 2025 if it meets three criteria: it aligns with a topic where you can build genuine entity authority, it has a query intent that AI systems are unlikely to fully satisfy (comparative, opinionated, specific), and it sits within a content cluster that reinforces your topical authority rather than standing alone.

Volume is still a factor — I'm not arguing you should ignore it. But it's now a secondary filter, not the primary sort. The primary sort is intent-AI alignment: how likely is this query to be answered by an AI Overview, and if it is, how likely is your content to be the source it cites? Those two questions should drive your keyword prioritization before you ever look at search volume.
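One way to turn those two questions into a sort order is sketched below. The scoring formula is my own simplification, not an established metric: it treats a query as an opportunity either when no AI Overview answers it, or when one does and your page is the cited source. The `Candidate` class, the likelihood estimates, and the sample values are all hypothetical inputs you would have to estimate yourself.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    phrase: str
    monthly_searches: int
    aio_likelihood: float       # estimated chance an AI Overview answers the query (0..1)
    citation_likelihood: float  # estimated chance your page is cited if it does (0..1)


def alignment_score(c: Candidate) -> float:
    """Chance the query stays open to a click, plus chance you are cited when it doesn't."""
    return (1 - c.aio_likelihood) + c.aio_likelihood * c.citation_likelihood


def prioritize(candidates):
    """Primary sort: alignment score, highest first. Secondary: search volume."""
    # Scores are rounded so near-ties fall through to the volume tiebreaker.
    return sorted(
        candidates,
        key=lambda c: (-round(alignment_score(c), 2), -c.monthly_searches),
    )


# Made-up estimates for illustration.
candidates = [
    Candidate("keyword research tools", 12000, 0.9, 0.1),
    Candidate("best keyword research tool for B2B SaaS", 900, 0.3, 0.5),
    Candidate("keyword research tool comparison", 2400, 0.3, 0.5),
]

for c in prioritize(candidates):
    print(f"{alignment_score(c):.2f}  {c.monthly_searches:>6}  {c.phrase}")
```

Volume only breaks ties here, which matches the "secondary filter" role described above. If your estimates for the two likelihoods are rough, widen the rounding so more candidates are compared on volume.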

The teams I've seen handle this shift successfully share one characteristic: they stopped thinking about individual keywords and started thinking about topic ownership. They ask "do we own this topic in the eyes of AI systems?" rather than "do we rank for this keyword?" That's a different research process, a different content brief, and a different success metric — but it's the one that's producing durable results in the current SERP environment. High-potential keyword research remains essential for thriving in this landscape.

FAQ

Is keyword research still necessary in 2025?

Keyword research is still necessary, but its role has shifted. Rather than identifying individual high-volume phrases to target, keyword research now serves as input for entity mapping and intent clustering: understanding what questions define a topic domain and how AI systems interpret user needs within it.

What is Answer Engine Optimization (AEO)?

Answer Engine Optimization (AEO) is the practice of structuring content so AI systems, including Google's AI Overviews, ChatGPT, and Perplexity, can extract and cite it in generated answers. It requires self-contained passage-level answers, named source citations, structured data markup, and question-format headings that match natural language queries.

How do AI Overviews affect keyword research strategy?

AI Overviews reduce clicks on high-volume informational queries by 34.5% to 58%, according to Ahrefs research. This makes broad informational keywords significantly less valuable as traffic drivers, shifting priority toward specific, comparative, and decision-stage queries that AI systems are less likely to fully answer.

What is entity-based SEO?

Entity-based SEO is the practice of optimizing for topic authority rather than individual keyword rankings. Instead of targeting specific phrases, entity-based SEO builds comprehensive content clusters that establish a site as an authoritative source on a subject, which is how LLMs determine which sources to cite in AI-generated answers.

How do I know if my content is being cited by AI search engines?

Check Google Search Console for impressions on queries where AI Overviews appear. Use Perplexity and ChatGPT to ask questions in your topic domain and note which sources are cited. Track whether your content appears in AI-generated answers for your target topic cluster, not just whether it ranks in traditional blue-link results.

Should I stop targeting high-volume keywords entirely?

Not entirely, but deprioritize purely informational keywords above 5,000 monthly searches that have no comparative or decision-stage angle. These are the highest-risk targets for AI Overview displacement. Focus production budget on specific, opinionated, and comparative queries where AI systems are less confident generating complete answers.

What tools support entity mapping for SEO?

Ahrefs and Semrush both have topic cluster and content gap features that support entity mapping. Google Search Console's structured data reports help validate implementation. For question cluster analysis, tools like AlsoAsked and AnswerThePublic surface the question graphs that define topic domains, which is the raw material for entity-based content planning.

How does Google-Extended blocking affect AI content strategy?

Google-Extended blocking, the ability to opt out of having your content used to train Google's AI models, is a separate consideration from AEO. Blocking Google-Extended may protect your content from training data use, but it doesn't prevent AI Overviews from citing your published content in search results. Most content teams should evaluate this as a data licensing decision, not an SEO decision.

Stop optimizing for a SERP that's shrinking. Build content that AI systems actually cite, and let Meev show you how.