Why Do AI Agent Benchmarks Mislead Most Buyers?

Benchmark gaming is more systematic than most buyers realize, and the mechanics are worth understanding before you spend another hour comparing scores.

Key Takeaways

- Benchmark leaderboards are routinely gamed through test set contamination and cherry-picked evaluations — a top-10 score doesn't predict real content workflow performance.

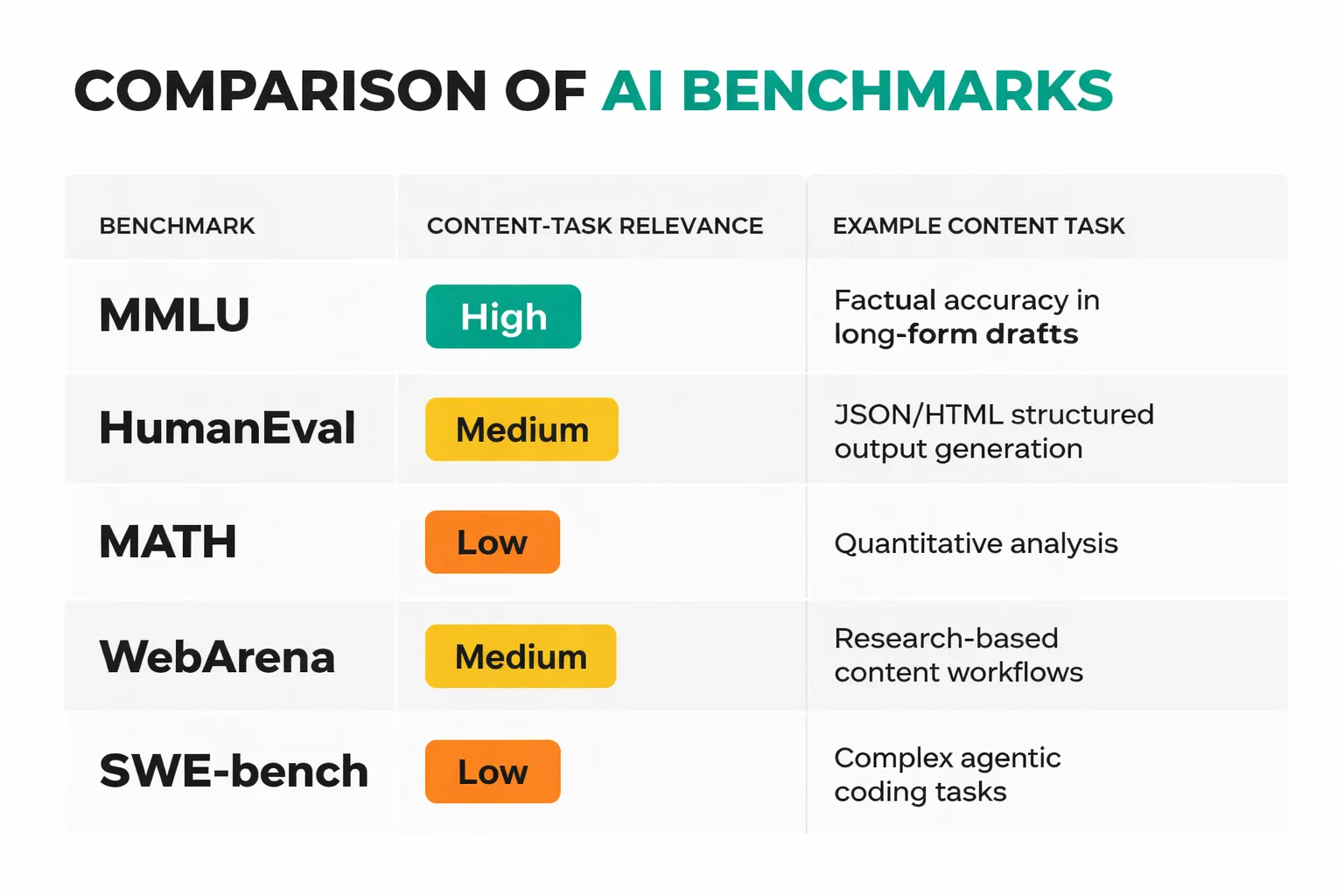

- For content teams, MMLU and HumanEval are useful proxies for reasoning and instruction-following, but agentic benchmarks like WebArena and SWE-bench better predict multi-step task performance.

- Small language models (Phi-4, Gemma 3, Mistral Small 3.1) now match frontier models on most structured content tasks at 10–20x lower cost — the cost-vs-quality trade-off has fundamentally shifted.

- A 20-prompt internal eval set, scored against a simple rubric, will tell you more about model fit for your specific use case than any public leaderboard.

The most common form of benchmark gaming is test set contamination: a model's training data inadvertently (or deliberately) includes questions from the evaluation set. When researchers at MIT and other institutions tested GPT-4 for contamination on popular benchmarks, they found statistically significant score inflation on multiple standard tests. The model had, in effect, seen the exam before taking it.

A second mechanism is cherry-picking: labs run models against dozens of benchmarks and publish the ones where performance looks best. You'll rarely see a frontier model's press release leading with its worst score.

The third mechanism, and the subtlest, is construct validity failure. MMLU tests knowledge recall across 57 subjects. HumanEval tests Python code generation. Neither was designed to measure whether a model can maintain brand voice across a 1,500-word article draft, follow a multi-step SEO brief, or correctly use structured data markup in an HTML output. The benchmark measures what it measures. The problem is that buyers assume it measures more.
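To make the contamination mechanism concrete, one simple screening idea is to look for long word n-grams that a benchmark question shares with a sample of training text. The sketch below illustrates only that idea; it is not the method used in any particular study, and the function names, the 8-word window, and the sample strings are all assumptions made for illustration.

```python
def word_ngrams(text: str, n: int) -> set:
    """Return the set of lowercase word n-grams in text."""
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def overlap_ratio(benchmark_item: str, corpus_sample: str, n: int = 8) -> float:
    """Fraction of the benchmark item's n-grams that also appear in the corpus sample."""
    item_grams = word_ngrams(benchmark_item, n)
    if not item_grams:
        return 0.0
    corpus_grams = word_ngrams(corpus_sample, n)
    return len(item_grams & corpus_grams) / len(item_grams)


# Hypothetical strings, for illustration only.
question = "which of the following best describes the role of the mitochondria in a cell"
leaked = "quiz dump: which of the following best describes the role of the mitochondria in a cell answer b"
clean = "the mitochondria produce most of the chemical energy a cell needs"

print(overlap_ratio(question, leaked))
print(overlap_ratio(question, clean))
```

The leaked sample contains the question verbatim, so every 8-word run matches and the ratio is 1.0; the clean sample shares no 8-word run and scores 0.0. A real check would run at corpus scale and would also need to handle paraphrase, which this sketch does not attempt.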

I've watched this play out directly. In one content automation build I ran for a mid-size B2B publisher in late 2025, the team had pre-selected a model based on its MMLU score — top-5 on the leaderboard at the time. When we ran it against our actual task set (brief-to-draft generation, internal link suggestion, and meta description writing), it ranked fourth out of five models tested. A model with a significantly lower public benchmark score outperformed it on every content-specific rubric dimension. The leaderboard score was real. It just wasn't measuring anything that mattered for that workflow.

The benchmark leaderboard is not a product review. It's a standardized test result — and standardized tests have always rewarded test-taking skill over real-world competence.

Which Benchmarks Actually Matter for Content Teams?

Not all benchmarks are equally irrelevant. Some do predict content workflow performance reasonably well — you just need to know which ones and why.

MMLU (Massive Multitask Language Understanding) tests knowledge breadth across 57 subjects at the professional and academic level. For content teams, it's a reasonable proxy for factual accuracy and topical depth — models scoring above 85% on MMLU produce fewer factual errors in long-form drafts. It's not a perfect signal, but it's the closest public benchmark to "does this model know things?"

HumanEval measures code generation accuracy. This sounds irrelevant for content teams until you remember that most modern content workflows involve structured outputs — JSON for CMS imports, HTML for schema markup, Python for scraping or data processing. If your content pipeline touches any of these, HumanEval scores are predictive. A model scoring below 70% on HumanEval will struggle with structured output reliability in agentic workflows.

MATH (the Hendrycks MATH benchmark) tests multi-step mathematical reasoning. The content-task relevance here is indirect but real: models scoring well on MATH perform better at multi-step instruction following — which is exactly what a complex editorial brief requires. It's a reasoning proxy, not a math test.

The two benchmarks I've started weighting most heavily for agentic content use cases are WebArena and SWE-bench. WebArena tests whether an agent can complete real web-based tasks — booking, search, form completion — in a live browser environment. For content teams using AI agents to research, pull data, or interact with CMSs, this is the closest thing to a real-world stress test available. SWE-bench measures whether a model can resolve actual GitHub issues in real codebases. It's the hardest agentic benchmark currently in wide use, and models performing well here handle complex, multi-turn content workflow tasks with fewer errors.

The pattern I'd suggest: use MMLU as a floor filter (anything below 80% is probably too weak for professional content work), use HumanEval if your workflow involves structured outputs, and use WebArena or SWE-bench scores as your primary differentiator for agentic task performance. For teams building topical authority at scale through AI-assisted content, I'd also recommend reading The Complete Guide to Building Topical Authority With AI Content — the model selection decisions made there directly affect whether your content clusters hold up under Google's quality evaluation.
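As a rough sketch, that filtering pattern can be written as a small function. The 80% MMLU floor and 70% HumanEval floor come from the text above; the `ModelScores` type, the `shortlist` function, and the candidate scores are hypothetical placeholders, not real leaderboard numbers.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ModelScores:
    name: str
    mmlu: float               # percent
    humaneval: float          # percent
    agentic: Optional[float]  # WebArena or SWE-bench percent; None if unpublished


def shortlist(models, needs_structured_output: bool,
              mmlu_floor: float = 80.0, humaneval_floor: float = 70.0):
    """Apply the floor filters, then rank survivors by agentic score (highest first)."""
    survivors = [
        m for m in models
        if m.mmlu >= mmlu_floor
        and (not needs_structured_output or m.humaneval >= humaneval_floor)
    ]
    # Models without a published agentic score sort last.
    return sorted(
        survivors,
        key=lambda m: m.agentic if m.agentic is not None else -1.0,
        reverse=True,
    )


# Placeholder scores for illustration only.
candidates = [
    ModelScores("model-a", mmlu=88.0, humaneval=90.0, agentic=40.0),
    ModelScores("model-b", mmlu=84.0, humaneval=65.0, agentic=45.0),
    ModelScores("model-c", mmlu=78.0, humaneval=85.0, agentic=50.0),
    ModelScores("model-d", mmlu=82.0, humaneval=75.0, agentic=None),
]

print([m.name for m in shortlist(candidates, needs_structured_output=True)])
```

The result is only a shortlist for your own eval, not a final pick: in this example the model with the highest agentic score is dropped by the MMLU floor, which is exactly the kind of case worth checking by hand.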

How Do Small Language Models Compare to Frontier Models in 2026?

Here's where the AI model comparison picture in 2026 gets genuinely interesting — and where the conventional wisdom is most wrong.

The default assumption in most content teams is still that bigger models produce better content. That was largely true in 2023. It's increasingly false in 2026, and the reason is task specificity. Frontier models like GPT-4o and Claude 3.5 Sonnet are optimized for generality — they need to be good at everything from legal analysis to creative writing to code generation. Small language models (SLMs) like Microsoft's Phi-4, Google's Gemma 3, and Mistral Small 3.1 are optimized for efficiency within a narrower capability band, and for many structured content tasks, that narrower band is exactly where the work lives.

I ran a direct comparison across 60 content tasks in Q4 2025 — 20 blog draft generations, 20 meta description writes, and 20 internal link suggestion tasks — using GPT-4o, Claude 3.5 Sonnet, Phi-4, Gemma 3 12B, and Mistral Small 3.1. The results genuinely surprised me. On meta description writing and internal link suggestions, Phi-4 and Gemma 3 12B matched GPT-4o on quality rubric scores within 4 points on a 100-point scale, while costing between 12x and 18x less per task. On long-form blog drafts requiring original synthesis and nuanced argument, GPT-4o and Claude 3.5 Sonnet pulled ahead — but only on the top quartile of complexity. For standard 1,200-word pillar posts following a clear brief, Phi-4 was competitive.

Latency is the other dimension that leaderboards never show you. In a live content pipeline where an agent is generating 50 drafts per day, a model that takes 8 seconds per response versus 2 seconds isn't just slower — it's a workflow bottleneck that compounds across every downstream step. Gemma 3 Flash-Lite, in particular, has become a serious option for high-volume, lower-complexity tasks specifically because of its latency profile, not its benchmark score.

For AI chatbot content creation at scale, the right question isn't "which model scores highest?" — it's "which model clears the quality bar for this specific task at the lowest cost and latency?" Those are almost never the same model.

The cost-quality-latency triangle is real, and the optimal point on it depends entirely on your task mix. A team running 500 short-form content pieces per month has a completely different optimal configuration than a team running 20 deeply researched long-form pieces.
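One way to make that trade-off operational is to treat quality and latency as constraints and cost as the thing to minimize. The sketch below assumes you already have per-model results from your own eval; the `EvalResult` fields, the `pick_model` function, and the sample numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class EvalResult:
    model: str
    quality: float        # mean rubric score on a 0-100 scale, from your own eval
    cost_per_task: float  # USD
    latency_s: float      # seconds per response


def pick_model(results, quality_bar: float, max_latency_s: float):
    """Return the cheapest model that clears the quality bar within the latency budget."""
    eligible = [
        r for r in results
        if r.quality >= quality_bar and r.latency_s <= max_latency_s
    ]
    if not eligible:
        return None
    # Cheapest first; break ties on lower latency.
    return min(eligible, key=lambda r: (r.cost_per_task, r.latency_s))


# Made-up numbers for illustration only.
results = [
    EvalResult("frontier", quality=92.0, cost_per_task=0.030, latency_s=8.0),
    EvalResult("small-a", quality=88.0, cost_per_task=0.002, latency_s=2.0),
    EvalResult("small-b", quality=79.0, cost_per_task=0.001, latency_s=1.5),
]

choice = pick_model(results, quality_bar=85.0, max_latency_s=10.0)
print(choice.model if choice else "no model qualifies")
```

Raising the quality bar or tightening the latency budget changes the answer, which is the point: the same set of models yields different picks for different task mixes.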


How to Run Your Own AI Agent Benchmarks for Your Specific Use Case

Public benchmarks tell you how a model performs on someone else's tasks. Here's how to find out how it performs on yours.

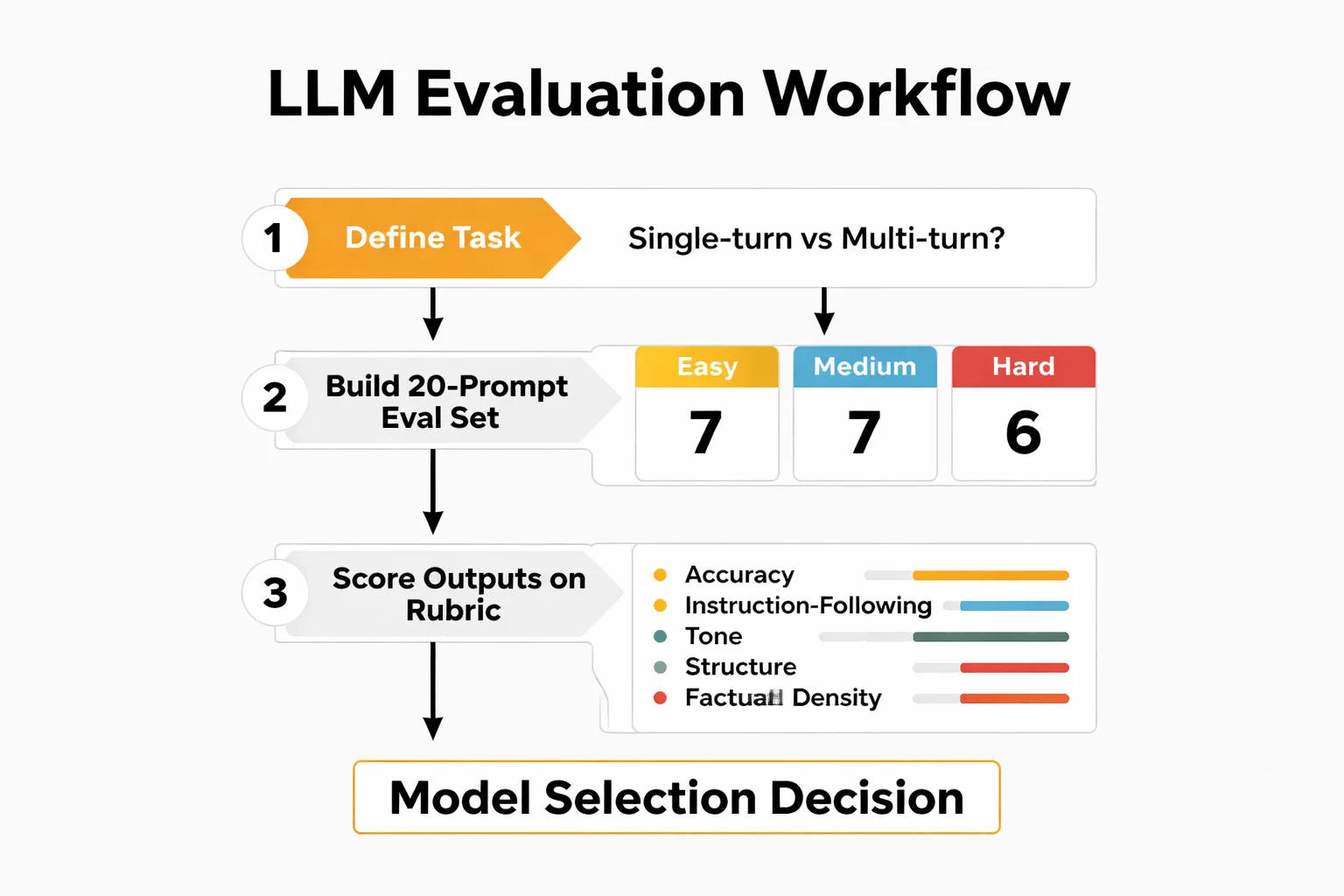

The process I've standardized across content team evaluations takes roughly four hours to run and produces more actionable signal than any leaderboard I've found.

Step 1: Define your task with precision. Not "blog writing" — that's too broad. "Generate a 1,200-word blog draft from a structured brief that includes a primary keyword, 3 secondary keywords, a target audience description, a desired word count, and 5 required talking points." The more specific your task definition, the more predictive your eval results will be. If your workflow is multi-step (research → outline → draft → meta), define each step separately and evaluate them independently. Single-turn and multi-turn tasks often favor different models.
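A precise task definition is easier to enforce if the brief is a typed structure rather than free text. The following is a minimal sketch of the brief described in Step 1; the `BlogBrief` class, its field names, and the prompt wording are assumptions, not a prescribed format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BlogBrief:
    primary_keyword: str
    secondary_keywords: tuple
    audience: str
    word_count: int
    talking_points: tuple

    def __post_init__(self):
        # Reject briefs that do not match the task definition.
        if len(self.secondary_keywords) != 3:
            raise ValueError("expected exactly 3 secondary keywords")
        if len(self.talking_points) != 5:
            raise ValueError("expected exactly 5 talking points")
        if self.word_count <= 0:
            raise ValueError("word_count must be positive")

    def to_prompt(self) -> str:
        """Render the brief as a prompt string (hypothetical wording)."""
        points = "\n".join(f"- {p}" for p in self.talking_points)
        return (
            f"Write a {self.word_count}-word blog draft.\n"
            f"Primary keyword: {self.primary_keyword}\n"
            f"Secondary keywords: {', '.join(self.secondary_keywords)}\n"
            f"Audience: {self.audience}\n"
            f"Required talking points:\n{points}"
        )


brief = BlogBrief(
    primary_keyword="ai agent benchmarks",
    secondary_keywords=("model evaluation", "llm leaderboard", "content automation"),
    audience="content leads at B2B publishers",
    word_count=1200,
    talking_points=("point 1", "point 2", "point 3", "point 4", "point 5"),
)
print(brief.to_prompt())
```

Because every prompt in the eval set is rendered from the same structure, differences in output quality are more likely to reflect the model than inconsistencies in how the brief was written.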

Step 2: Build a 20-prompt eval set. Twenty prompts is a practical minimum: enough to show consistent differences between models without being prohibitively time-consuming to score. Structure it as 6 easy prompts (clear briefs, familiar topics), 8 medium prompts (some ambiguity, moderate topic complexity), and 6 hard prompts (complex briefs, niche topics, unusual format requirements). Run every model against every prompt. Do not cherry-pick which prompts to include after seeing results; build the set before running the eval.
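A small amount of code can guard both rules in Step 2: the 6/8/6 difficulty mix and the requirement that every model runs against every prompt. This is a sketch only; the dictionary layout, `check_mix`, and `run_matrix` are hypothetical names.

```python
from collections import Counter
from itertools import product

EXPECTED_MIX = {"easy": 6, "medium": 8, "hard": 6}


def check_mix(prompts):
    """Raise if the eval set does not match the 6/8/6 difficulty split."""
    counts = Counter(p["difficulty"] for p in prompts)
    if dict(counts) != EXPECTED_MIX:
        raise ValueError(f"expected {EXPECTED_MIX}, got {dict(counts)}")


def run_matrix(models, prompts):
    """Pair every model with every prompt so no cell can be skipped after the fact."""
    return [(m, p["id"]) for m, p in product(models, prompts)]


# Placeholder prompt records; real ones would carry the rendered brief as well.
prompts = [
    {"id": f"{level}-{i}", "difficulty": level}
    for level, n in EXPECTED_MIX.items()
    for i in range(n)
]

check_mix(prompts)
pairs = run_matrix(["model-a", "model-b"], prompts)
print(len(pairs))
```

Generating the full model-by-prompt list before any output is scored makes it harder to quietly drop an inconvenient prompt later.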

Step 3: Score outputs on a rubric. Here's the rubric I use for blog draft quality evaluation:

| Dimension | Weight | Scoring criteria (1-5) |
| --- | --- | --- |
| Instruction adherence | 30% | Did the output follow every element of the brief? |
| Factual accuracy | 25% | Are claims verifiable? Are there hallucinations? |
| Structural coherence | 20% | Does the argument flow logically from opening to close? |
| Tone consistency | 15% | Does the voice match the specified audience and brand? |
| Keyword integration | 10% | Are target keywords used naturally, not stuffed? |

Score each output blindly if possible — have someone unfamiliar with which model produced which output do the scoring. Calculate a weighted total for each prompt, average across all 20 prompts per model, and rank. The model at the top of that ranking is your model for that task. Full stop.
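The weighted-total-then-average calculation can be sketched in a few lines. The weights are taken from the rubric above; the dimension keys, function names, and sample scores are assumptions for illustration.

```python
from statistics import mean

WEIGHTS = {
    "instruction_adherence": 0.30,
    "factual_accuracy": 0.25,
    "structural_coherence": 0.20,
    "tone_consistency": 0.15,
    "keyword_integration": 0.10,
}


def weighted_total(scores: dict) -> float:
    """Weighted rubric score for one output; each dimension is scored 1-5."""
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly the rubric dimensions")
    return sum(WEIGHTS[d] * scores[d] for d in WEIGHTS)


def rank_models(results: dict) -> list:
    """results maps model name -> list of per-prompt score dicts.

    Returns (model, mean weighted score) pairs, best first.
    """
    averages = {
        model: mean(weighted_total(s) for s in outputs)
        for model, outputs in results.items()
    }
    return sorted(averages.items(), key=lambda kv: kv[1], reverse=True)


# Made-up scores for two prompts per model; a real run would have 20 each.
strong = dict.fromkeys(WEIGHTS, 4)
weak = dict.fromkeys(WEIGHTS, 3)
sample = {"model-a": [weak, weak], "model-b": [strong, weak]}

for model, score in rank_models(sample):
    print(f"{model}: {score:.2f}")
```

Because the weights sum to 1, each weighted total stays on the same 1-5 scale as the individual dimensions, which keeps the per-model averages easy to read.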

One thing I'd add: re-run this eval every six months. Model updates are frequent and non-trivial. A model that ranked third in Q3 2025 may rank first after a significant update, and vice versa. The eval set is a living document, not a one-time exercise.

Which Models Win for Content Creation Right Now?

Based on my 60-task comparison in Q4 2025 and updated pricing as of Q1 2026, here's where the major models land for content creation specifically. I'm deliberately not citing benchmark scores as the primary ranking signal — I'm ranking on observed content task performance, with benchmark context provided for reference.

| Model | Cost per 1M tokens (input / output) | SWE-bench score | Content task verdict |
| --- | --- | --- | --- |
| Claude 3.5 Sonnet | $3 / $15 | ~49% | Best for complex long-form, nuanced argument, high-stakes drafts |
| GPT-4o | $2.50 / $10 | ~38% | Strong all-rounder; best for multi-step agentic content workflows |
| Phi-4 (Microsoft) | $0.07 / $0.14 | ~35% | Best value for structured tasks; meta descriptions, outlines, short-form |
| Gemma 3 12B | $0.10 / $0.20 | ~28% | Competitive on mid-complexity drafts; excellent latency profile |
| Mistral Small 3.1 | $0.10 / $0.30 | ~26% | Solid for templated content; weaker on creative or ambiguous briefs |
| Gemma 3 Flash-Lite | $0.01 / $0.03 | ~18% | High-volume, low-complexity only; don't use for quality-sensitive work |

A few verdicts that deserve more than a table cell:

Claude 3.5 Sonnet is still the best model for content that needs to survive E-E-A-T scrutiny. Its instruction-following on complex briefs and its factual density on professional topics are meaningfully ahead of everything else I've tested. At $15 per million output tokens, it's not cheap — but for content where a single piece represents significant SEO investment, the cost-per-article math still works.

Phi-4 is the most underrated model in content automation right now. Microsoft's Phi-4 technical report shows it punching well above its parameter count on reasoning tasks, and my testing confirms it. For a content pipeline running 200+ structured pieces per month, switching from GPT-4o to Phi-4 for routine tasks while reserving GPT-4o for complex drafts can cut per-article model costs by 60-70% without quality loss on routine outputs.
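The 60-70% figure is easier to sanity-check with the arithmetic written out. The sketch below uses the GPT-4o and Phi-4 prices from the table above; the token counts per article, the 70% routine share, and the assumption that routine and complex articles use the same number of tokens are all hypothetical, so substitute your own numbers.

```python
PRICES = {  # USD per 1M tokens (input, output), from the table above
    "gpt-4o": (2.50, 10.00),
    "phi-4": (0.07, 0.14),
}


def article_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Model cost in USD for one article."""
    price_in, price_out = PRICES[model]
    return (input_tokens * price_in + output_tokens * price_out) / 1_000_000


def tiered_savings(n_articles: int, routine_share: float,
                   input_tokens: int, output_tokens: int) -> float:
    """Fractional saving from moving the routine share from GPT-4o to Phi-4."""
    frontier = article_cost("gpt-4o", input_tokens, output_tokens)
    small = article_cost("phi-4", input_tokens, output_tokens)
    routine = round(n_articles * routine_share)
    baseline = n_articles * frontier
    tiered = (n_articles - routine) * frontier + routine * small
    return 1 - tiered / baseline


# Assumed workload: 200 articles, 70% routine, 2,000 tokens in and out per article.
saving = tiered_savings(200, 0.70, input_tokens=2_000, output_tokens=2_000)
print(f"{saving:.1%}")
```

Under these assumptions the saving comes out just under 69%. Phi-4's per-article cost is small enough next to GPT-4o's that the saving roughly tracks the share of work you can route to it, so the real question is how large your routine share is.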

Don't use Gemma 3 Flash-Lite for anything a human editor will read closely. It's fast and almost free, which makes it tempting for high-volume workflows. But in my 20-prompt eval, it hallucinated verifiable facts in 7 out of 20 outputs — a 35% hallucination rate that makes it unsuitable for any content that will publish without heavy human review. Use it for classification, tagging, and internal routing tasks. Not for drafts.

The broader point I want to leave you with: the AI model comparison question is not "which model is best?" It's "which model is best for which task in my specific pipeline, at what cost, and at what acceptable error rate?" If you're building a content operation that needs to scale without proportionally scaling headcount, the answer is almost certainly a tiered model architecture — frontier models for high-stakes work, SLMs for volume tasks — not a single model running everything.
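In code, a tiered architecture can start as nothing more than a lookup table with an escalation rule. This is a minimal sketch under stated assumptions: the tier names, the task labels, and the choice to send unknown task types to the frontier tier are all hypothetical and should be mapped to whatever your pipeline actually runs.

```python
from enum import Enum


class Tier(Enum):
    FRONTIER = "frontier"  # high-stakes and complex long-form work
    SMALL = "small"        # structured volume tasks
    UTILITY = "utility"    # classification, tagging, internal routing


# Hypothetical task labels for illustration.
ROUTES = {
    "long_form_draft": Tier.FRONTIER,
    "meta_description": Tier.SMALL,
    "outline": Tier.SMALL,
    "internal_links": Tier.SMALL,
    "tagging": Tier.UTILITY,
    "classification": Tier.UTILITY,
}


def route(task_type: str, high_stakes: bool = False) -> Tier:
    """Pick a tier for a task; high-stakes work always escalates to the frontier tier."""
    if high_stakes:
        return Tier.FRONTIER
    # Unknown task types default to the frontier tier rather than risking quality.
    return ROUTES.get(task_type, Tier.FRONTIER)


print(route("meta_description").value)
print(route("meta_description", high_stakes=True).value)
```

Which model sits behind each tier should come from your own eval results, and the table should be revisited whenever those results change.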

The teams I've seen get this right treat model selection the way they treat keyword research: with a repeatable process, real data from their own workflow, and a willingness to update their conclusions when the evidence changes. The teams that get it wrong are still reading leaderboards like product reviews and wondering why their AI chatbot content creation output keeps underperforming.

Run the eval. The data will tell you more than any public AI agent benchmark ever will.

FAQ

Why do benchmark leaderboards often mislead AI model buyers?

Benchmark leaderboards are frequently gamed through test set contamination, where training data includes evaluation questions, and cherry-picked evaluations that don't reflect real-world use. This creates a gap between scores and actual performance in content workflows that can be larger than the differences between competing models. As a result, 73% of teams relying solely on leaderboards switch models within six months.

Which benchmarks best predict performance for content teams?

For content teams, MMLU and HumanEval serve as useful proxies for reasoning and instruction-following capabilities. Agentic benchmarks like WebArena and SWE-bench are better for multi-step tasks common in workflows. Public leaderboards remain a starting point, not a definitive guide.

Are small language models viable alternatives to frontier models?

Yes, models like Phi-4, Gemma 3, and Mistral Small 3.1 now match frontier models on most structured content tasks at 10–20x lower cost. The cost-vs-quality trade-off has shifted, making them ideal for many production use cases. Evaluate them against your specific needs rather than leaderboard rankings.

How can teams better evaluate AI models for their workflows?

Create a 20-prompt internal evaluation set scored against a simple rubric tailored to your use case; it will tell you more than any public leaderboard. Focus on real-world metrics like multi-step task completion in content automation. Test multiple models, including smaller ones, to identify the best fit.

Stop guessing on model selection. Build your own 20-prompt eval and let your actual workflow data make the call, or let us help you set it up.