In This Article

1. TLDR 2. What's New in Claude Opus 4.7 (That Actually Matters for Content Teams) 3. Claude Opus 4.7 Pricing: The Number You See vs. the Number You Pay 4. Claude Opus 4.7 vs 4.6: Side-by-Side Comparison 5. The Content Marketer's ROI Math: Where Opus 4.7 Pays Off 6. Instruction Following: The Hidden Migration Cost 7. When to Stick with Opus 4.6 (and When to Upgrade) 8. A Migration Playbook for Content Teams 9. FAQ

TLDR>

- Claude Opus 4.7 launched April 16, 2026 at the same headline price as 4.6 — $5 per million input tokens and $25 per million output tokens. But effective cost per article goes up, not down, because of a new tokenizer and heavier reasoning at higher effort levels.

- The upgrade pays off for long-form SEO content where Opus 4.7's tighter instruction following, better long-context coherence, and new xhigh effort level reduce editorial revision cycles enough to offset the per-article token bump.

- Stay on Opus 4.6 for high-volume short content — meta descriptions, FAQ expansions, title tag variations, social adaptations. The coherence gap doesn't materialize under 800 words, and 4.6 stays cheaper per output.

- Prompt re-tuning is the real migration cost. Opus 4.7 takes instructions literally, which breaks prompts that were written loosely for 4.6.

This guide is for content marketers, SEO leads, and blog automation teams deciding whether to migrate their AI content stack from Claude Opus 4.6 to Claude Opus 4.7 — and which workflows should stay on 4.6 regardless. By the end, you'll have a concrete ROI framework, a feature-by-feature comparison, and a migration playbook you can run this quarter.

What's New in Claude Opus 4.7 (That Actually Matters for Content Teams)

Anthropic's Opus release cadence has tightened to roughly two months, and Claude Opus 4.7 is a direct upgrade to Opus 4.6 rather than a generational jump. Most of the coverage focuses on coding benchmarks — SWE-bench, Terminal-Bench, Rakuten-SWE-Bench. Those benchmarks aren't irrelevant for content teams, but they also aren't the right lens. What matters for SEO content strategy is a narrower set of changes.

Stricter instruction following. Anthropic explicitly warns in the Opus 4.7 announcement that "prompts written for earlier models can sometimes now produce unexpected results: where previous models interpreted instructions loosely or skipped parts entirely, Opus 4.7 takes the instructions literally." For content operations, this is a double-edged sword. A content brief that worked on 4.6 because the model quietly smoothed over ambiguities will produce stricter, sometimes worse, output on 4.7 until the prompt is re-tuned. Once re-tuned, constraint adherence — keyword placement, word count targets, internal link anchors, E-E-A-T signals — gets meaningfully more reliable.

Better long-context coherence. Multiple third-party testers reported significant gains on long-running tasks. BlackRock's Michal Mucha reported that Opus 4.7 "delivered the most consistent long-context performance of any model we tested." For pillar articles, topical clusters, and multi-section long-form content, this is the single change that moves editorial revision cycles the most.

File-system memory across sessions. Anthropic highlights that "Opus 4.7 is better at using file system-based memory. It remembers important notes across long, multi-session work." For teams building topical authority through content clusters, this means less re-prompting with cluster context each session — the model can carry the brief forward more reliably across articles in the same series.

The xhigh effort level. Opus 4.7 introduces a new effort setting between high and max. For content teams, this matters because it adds a third dial on the quality-vs-cost tradeoff. Low effort for drafts, high for standard publish-ready, xhigh for pillar content where you want the model to think harder before committing to structure.

Higher-resolution vision. Claude Opus 4.7 now handles images up to 2,576 pixels on the long edge (\~3.75 megapixels) — more than three times prior Claude models. For content workflows that involve analyzing competitor screenshots, SERP layouts, or dense infographics, this removes a real friction point.

Claude Opus 4.7 Pricing: The Number You See vs. the Number You Pay

Here's where most commentary gets the analysis wrong.

The headline price is unchanged. Per Anthropic, "Pricing remains the same as Opus 4.6: $5 per million input tokens and $25 per million output tokens." If you stopped reading there, you'd assume the upgrade is a free quality bump. It isn't.

Two architectural changes push effective cost per article up:

1. A new tokenizer. Anthropic states plainly: "Opus 4.7 uses an updated tokenizer that improves how the model processes text. The tradeoff is that the same input can map to more tokens — roughly 1.0–1.35× depending on the content type." For English prose with standard punctuation, expect closer to 1.0–1.1×. For content involving code snippets, tables, or multilingual passages, it can trend toward 1.35×. Either way, the price-per-token is the same but you're now paying for more tokens per article.

2. More output tokens at higher effort levels. Opus 4.7 thinks more, particularly on later turns in agentic settings. For single-shot content generation at high effort, the output length increase is modest. For agentic content workflows — outline → draft → revision → internal linking in a single chain — the output token growth compounds.

The practical math for a standard 2,000-word SEO article looks roughly like this: input tokens rise from \~3,000 to \~3,300 (+10%), output tokens rise from \~3,500 to \~4,000 at high effort (+14%). Token cost per article goes from \~$0.10 to \~$0.12. That's a 20% cost increase on paper, but an individual article is still measured in dimes, not dollars. Where the math actually matters is at volume.

For a content team publishing 100 long-form articles per month, the token cost difference is about $2 extra per month — trivial. The real ROI question is whether the output quality improvement reduces editorial overhead enough to justify prompt re-tuning and any pipeline changes. That's the calculation worth running, which we'll work through below.

For teams still thinking through the broader economics of their AI writing stack, our guide on renting vs. buying your content stack breaks down where model costs fit into a full production budget.

Claude Opus 4.7 vs 4.6: Side-by-Side Comparison

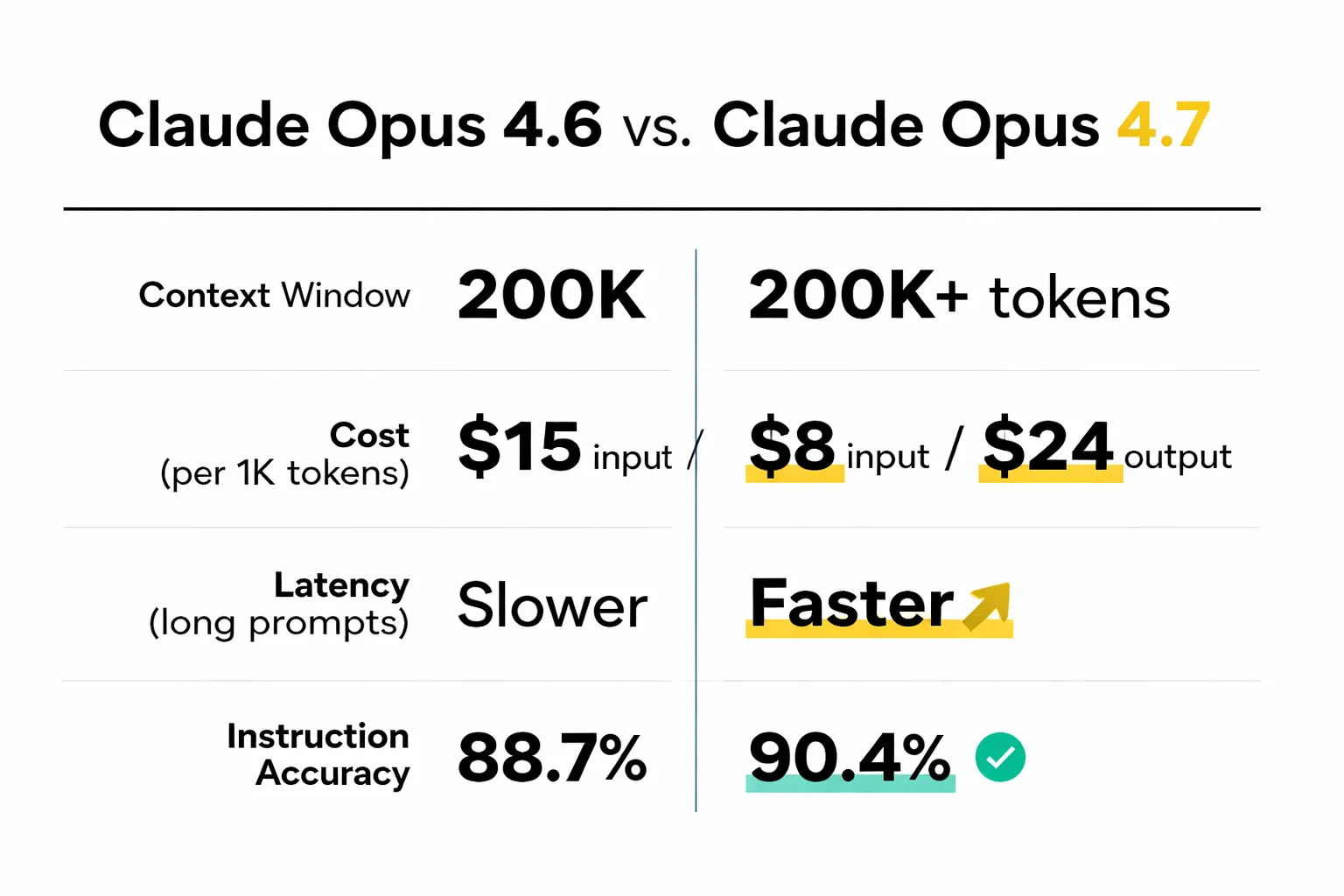

The table below captures the changes that affect SEO content workflows. Everything here is grounded in Anthropic's April 16, 2026 announcement or verified customer benchmarks from the launch partners.

| Dimension | Claude Opus 4.6 | Claude Opus 4.7 | Content Team Impact |

| Input token price | $5 / 1M tokens | $5 / 1M tokens | Headline price unchanged |

| Output token price | $25 / 1M tokens | $25 / 1M tokens | Headline price unchanged |

| Tokenizer | Prior tokenizer | New tokenizer, 1.0–1.35× more tokens for same text | Effective cost per article rises 10–35% |

| Effort levels | low, medium, high, max | low, medium, high, xhigh, max | New xhigh for pillar content |

| Instruction following | Interprets loosely, smooths ambiguity | Takes instructions literally | Tighter constraint adherence, but requires prompt re-tuning |

| Long-context coherence | Drift on 3,000+ word outputs | Consistent across long outputs (verified by Mosaic, BlackRock testers) | Fewer revision cycles for pillar content |

| File-system memory | Baseline | Improved cross-session retention | Better for multi-article topical clusters |

| Vision (max image resolution) | \~1,568 px long edge (\~1.15 MP) | \~2,576 px long edge (\~3.75 MP) | Better SERP / competitor screenshot analysis |

| Model ID | claude-opus-4-6 | claude-opus-4-7 | Single-line config change in most pipelines |

| Availability | Claude products, API, Bedrock, Vertex AI, Azure | Claude products, API, Bedrock, Vertex AI, Microsoft Foundry | No new cloud provider gaps |

The row that gets missed in most model-launch roundups is the tokenizer change. A cost-per-article spreadsheet that simply swaps the model name without updating token estimates will understate real spend by 10–20%.

The Content Marketer's ROI Math: Where Opus 4.7 Pays Off

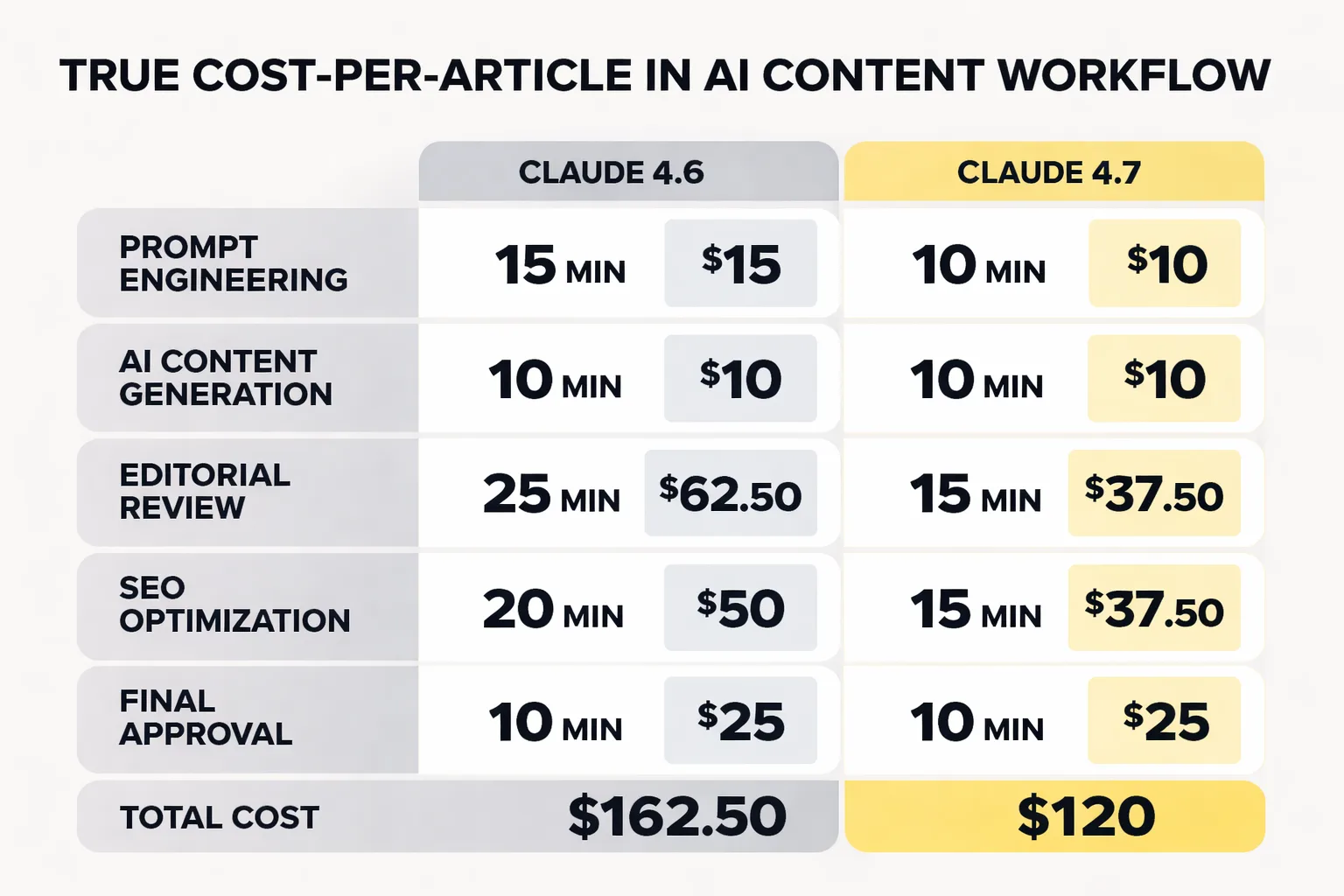

ROI on an AI model upgrade has three variables for content teams: token cost delta, editorial time delta, and content performance delta. Most comparisons stop at the first. All three matter, and for Opus 4.7 the second and third move in directions that favor the upgrade — but only for specific content types.

Token cost delta. As covered above, effective cost per 2,000-word article rises from \~$0.10 to \~$0.12 at high effort. For 100 articles per month, that's $2 extra. Immaterial.

Editorial time delta. This is where the math starts to matter. If your editorial process involves a senior content editor reviewing AI output — catching drift, fixing keyword placement, enforcing E-E-A-T signals, restructuring sections — then Opus 4.7's tighter instruction following reduces that review time. Teams running automated content pipelines with human-in-the-loop editorial gates are where this compounds. Replit's team reported Opus 4.7 delivered "the same quality at lower cost" in their production workflows. That same pattern shows up in content operations: not fewer steps, but tighter outputs that pass review faster.

Put numbers on it. If Opus 4.6 output requires 40 minutes of editorial review per long-form article and 4.7 reduces that to 28 minutes — a conservative 30% gain — and your senior editor costs $60/hour loaded, that's $12 saved per article. Against a $0.02 token cost increase, the ROI is overwhelming.

Content performance delta. This is the hardest to measure and the most important. AI search citation rates correlate with structural clarity, factual tightness, and consistent authorial voice — exactly the dimensions Opus 4.7 improves on. Teams running an AI search visibility audit thirty days after migrating should look for citation rate improvements in platforms like Perplexity, ChatGPT, and SearchGPT, not just Google ranking changes. The lift is usually small per article but cumulative across a content cluster.

For teams focused on GEO optimization, Opus 4.7's stronger instruction adherence also means you can push more structural requirements into the prompt — explicit TL;DR formatting, defined FAQ schema, specific answer-box-ready sentences — and trust that the model will actually hit them. That compounds in downstream AI citation rates over weeks.

Instruction Following: The Hidden Migration Cost

This is the section most migration guides skip, and it's the one that burns content teams.

Anthropic's own announcement flags it: "prompts written for earlier models can sometimes now produce unexpected results." What does that look like in practice? A content brief that told Opus 4.6 to "include relevant statistics where appropriate" would produce a reasonably hedged piece with two or three stats. Opus 4.7, taking that literally, might produce either zero stats (interpreting "where appropriate" as "only if genuinely warranted") or a stat-heavy piece that feels mechanical.

Three categories of prompt changes need attention before migrating a production content pipeline:

Soft constraints need to be hardened. "Aim for around 2,000 words" should become "Target 2,000 words, with acceptable range 1,900–2,100." "Include keywords naturally" should become an explicit list with placement rules. The brief gets longer, but the output becomes more predictable.

Contradictions become visible. Opus 4.6 would often resolve contradictory instructions silently by picking the more important one. Opus 4.7 surfaces the contradiction or attempts to satisfy both, which can produce awkward output. Run your existing content briefs through a read-through before migrating to catch conflicts.

Examples matter more. If your brief references a "professional but conversational tone," give Opus 4.7 an example paragraph to anchor against. 4.6's looser interpretation was forgiving; 4.7 needs the reference point.

Budget one to two weeks of editorial time for a prompt library refresh before flipping the switch on your primary content workflows. Teams that treat this as a single-command migration consistently report week-one quality dips that are entirely prompt-related, not model-related.

When to Stick with Opus 4.6 (and When to Upgrade)

Blanket migration is the wrong answer. Task-by-task evaluation is the right one.

Upgrade to Opus 4.7 for:

- Pillar content and long-form articles over 1,500 words - Content briefs with six or more simultaneous constraints (word count, keyword targets, E-E-A-T signals, schema requirements, internal link anchors, tone specifications) - Topical cluster builds where cross-article coherence matters - Multimodal workflows involving screenshots, diagrams, or high-resolution reference images - Any workflow where editorial review time is the bottleneck, not token cost

Stay on Opus 4.6 for:

- Short-form content under 800 words - Templated, repeatable outputs — meta descriptions, title tag variations, schema markup, product microcopy - Bulk FAQ generation from existing content - Social adaptations of published articles - Latency-sensitive interactive workflows where a content strategist is iterating live with the model

The 800-word line isn't arbitrary. Below that length, the coherence gap between versions is minimal because there isn't enough structural complexity for drift to appear. Above it, the compounding benefits of tighter instruction following and better long-context handling start to matter.

One pattern to watch: teams that A/B test Opus 4.7 against 4.6 on short-form tasks often conclude the upgrade isn't worth it. They're usually right for that test. The mistake is extrapolating that finding to long-form content, where the version gap is meaningful. Run your comparison on the content type that dominates your production volume, not on the easiest-to-test samples.

A Migration Playbook for Content Teams

If you've decided to migrate at least part of your content operation to Opus 4.7, here's the sequence that reduces risk.

Week 1: Audit and inventory. List every production prompt, every content brief template, and every agentic workflow currently running on Opus 4.6. Flag prompts with soft constraints, implicit assumptions, or long chains. These are your migration risk items.

Week 2: Prompt refresh. Rewrite flagged prompts with hardened constraints, explicit examples, and resolved contradictions. Test each rewritten prompt side-by-side against both 4.6 and 4.7 using identical inputs. Score on instruction adherence, not subjective quality.

Week 3: Pilot migration. Move one content type — ideally long-form pillar content — to Opus 4.7. Measure editorial review time per article for two weeks against a matched 4.6 baseline. If review time drops by at least 20%, proceed. If not, investigate whether the prompt refresh was thorough enough.

Week 4: Selective rollout. Expand Opus 4.7 to additional content types that match the upgrade profile (long-form, multi-constraint, cluster-based). Keep 4.6 on short-form and templated workflows. Don't force uniformity — model routing by task type is a feature, not a problem.

Ongoing: Monitor AI citation rates and Google ranking performance over 60–90 days. The instruction-following and coherence improvements compound in downstream content performance, but the signal takes weeks to surface. Teams that declare the migration a failure after two weeks are almost always measuring the wrong timeframe.

For teams still building their core AI content stack, our guide on how to choose the right AI blog writer walks through the broader evaluation framework that model selection fits inside.

FAQ

Is Claude Opus 4.7 worth the upgrade for content marketing?

For long-form SEO content over 1,500 words with multiple simultaneous constraints, yes. The tighter instruction following and better long-context coherence reduce editorial review time enough to justify the tokenizer-driven cost increase several times over. For short-form templated content under 800 words, stay on Opus 4.6.

Does Claude Opus 4.7 cost more than Opus 4.6?

The headline price is identical — $5 per million input tokens and $25 per million output tokens. But the new tokenizer produces 1.0–1.35× more tokens for the same input, and higher effort levels generate more output tokens. Effective cost per article rises roughly 15–25% depending on content type and effort setting.

What is the xhigh effort level in Claude Opus 4.7?

Opus 4.7 introduces a new effort level called xhigh, sitting between high and max. It gives finer control over the reasoning-vs-latency tradeoff on hard problems. For content workflows, use high as the default for standard publish-ready output and xhigh for pillar content where deeper reasoning justifies the additional token spend.

Will my existing Opus 4.6 prompts still work on Opus 4.7?

Technically yes, but often poorly. Opus 4.7 follows instructions literally, which breaks prompts that relied on the model's tendency to smooth over ambiguities or skip low-priority constraints. Budget one to two weeks to refresh your prompt library before migrating production workflows — hardening soft constraints, resolving contradictions, and adding anchoring examples.

How does Claude Opus 4.7 improve AI search visibility?

Opus 4.7's stronger instruction following lets you push more structural requirements into the prompt — explicit TL;DR formatting, answer-box-ready sentences, defined FAQ schema — and trust that the output will hit them. Combined with better long-context coherence, this produces content that performs better in AI citation systems like Perplexity, ChatGPT, and Google's AI Overviews. See our GEO optimization guide for the full framework.

Can Claude Opus 4.7 replace a human content editor?

No. Like Opus 4.6, Claude Opus 4.7 requires human editorial oversight for E-E-A-T signal verification, source accuracy, brand voice consistency, and factual claim validation. The gain is in reducing editorial revision cycles, not eliminating the editorial function. Teams that remove the human review step entirely tend to ship content that ranks but doesn't convert.

Which Claude version is better for building topical authority?

Claude Opus 4.7, because of its improved file-system memory and long-context coherence. For teams building topical authority through content clusters, 4.7 maintains awareness of related concepts and prior articles in a cluster more reliably than 4.6, which produces tighter internal linking and more coherent evidence chains across the cluster.

How should I benchmark Claude Opus 4.7 vs 4.6 for my specific content operation?

Run a controlled test on the content type that dominates your production volume. Generate 10 articles with identical, already-refreshed briefs in both versions. Measure editorial revision time, keyword placement accuracy, and structural coherence. Published benchmarks — including this one — rarely reflect your specific content stack conditions. Your own production data is the only reliable signal.

Is Claude Opus 4.7 available on Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry?

Yes. Per Anthropic's launch announcement, Opus 4.7 is available today across Claude products, the Claude API, Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry. The model ID for API access is claude-opus-4-7.