By Judy Zhou, Head of Content Strategy

Key Takeaways

- Implement quality gates that block AI drafts from reaching your CMS unless they pass measurable thresholds for information gain, unlike the agency case that saw traffic declines after scaling to 100 posts per hour with zero human review.

- Anchor every automated topic to a defined topical map to avoid topical drift that lowers crawl frequency and softens rankings on core subjects.

- Treat SEO automation as a quality-control problem rather than a volume problem, since Google's Helpful Content system suppresses scaled output that lacks real information gain.

- Place all review gates before content publishes, not after, because 91% of frequent AI users now rely on LLMs for searches and will ignore thin, unoriginal results.

Automated SEO content is only a problem when it has nothing real to say.

To automate SEO effectively in 2026, you need quality gates before content reaches your CMS, not after. Google's Helpful Content system now actively suppresses scaled output that lacks information gain. 91% of frequent AI users rely on LLMs for internet searches, according to research cited in a Nature Communications framework study on LLM citation accuracy. A documented agency case study showed traffic decline and ranking loss after producing AI content at 100 posts per hour with zero human review, per Search Engine Journal's analysis. Quality-gated content publishing. Where automated drafts are blocked from going live unless they pass measurable thresholds. Is the only approach that scales without triggering spam signals.

I run content strategy at Meev, where we oversee AI-driven publishing for hundreds of brands. The pattern I keep seeing is this: teams treat automation as a volume problem and then wonder why their traffic charts look like a ski slope going down. The fix isn't less automation. It's smarter gates.

Why Most SEO Automation Backfires

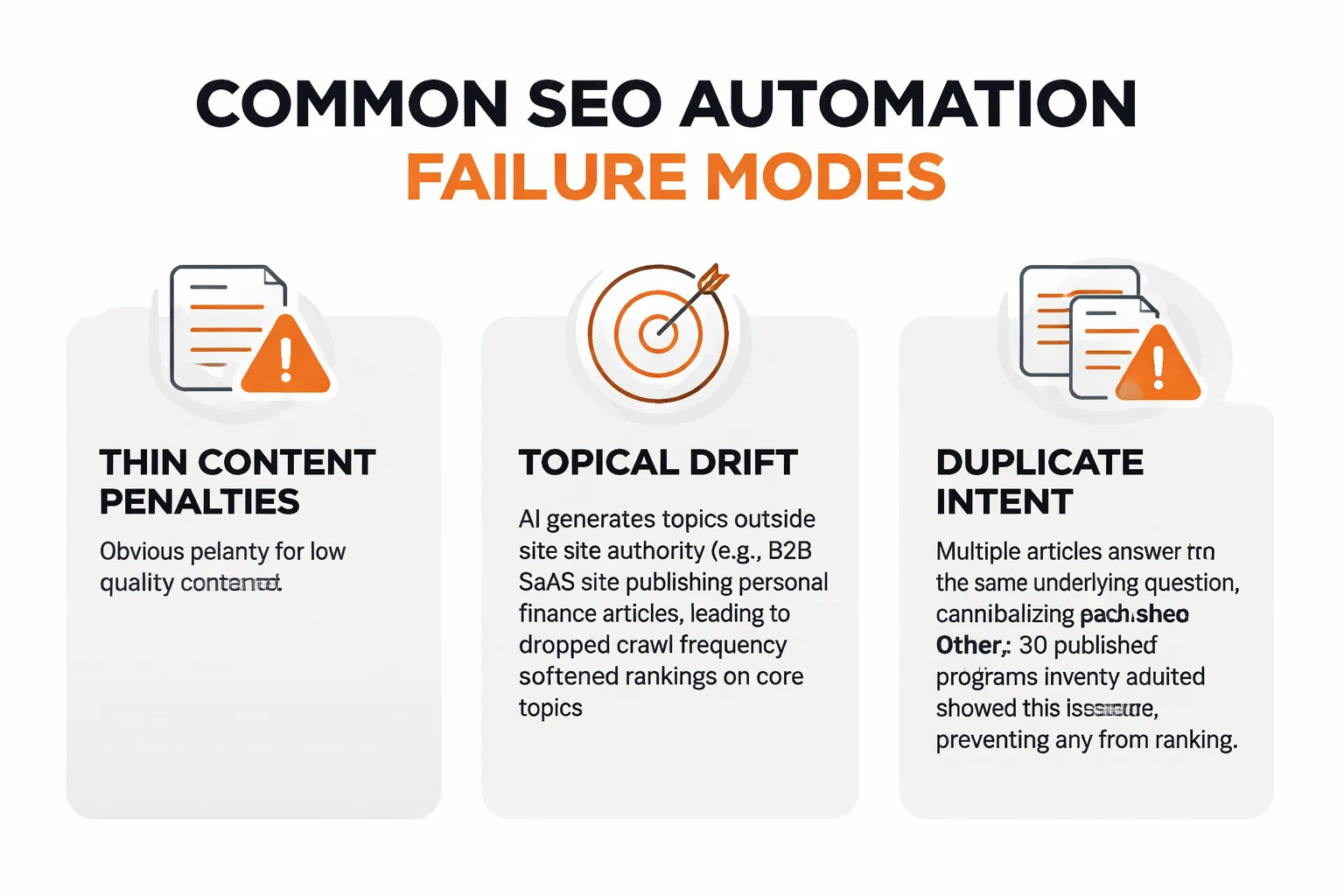

The failure modes are specific, and they're worth naming precisely because most post-mortems I read treat them as one undifferentiated mess called "low quality content."

Thin content penalties are the obvious one. But the more insidious problem is topical drift. When you let an AI generate topics autonomously without anchoring to a defined topical map, the output starts covering adjacent subjects your site has no authority on. Google's systems notice when a site about B2B SaaS suddenly publishes three articles about personal finance. The crawl frequency drops. Rankings on your core topics soften, even when those articles were strong.

Duplicate intent is the failure mode nobody talks about. Two articles targeting different keyword phrases can answer the same underlying question. At scale, you end up with five pieces cannibalizing each other, none of them ranking because Google can't determine which one to surface. I've audited content programs where 30% of the published inventory was competing against itself. The solution isn't to delete everything. It's to catch duplication at the planning stage, before generation starts.

Then there's the Google Helpful Content system. Search Engine Journal's reporting quotes agency partner Dan Taylor directly: "Google's quality threshold is quietly killing scaled AI content at ranking. The real problem isn't that content is AI-generated, but that multiple factors, including lack of human review and quality control, prevent indexing and serving." SEO practitioner Jessica Bowman is blunter in her LinkedIn case study: "Producing a large amount of low quality content can actually hurt SEO." These aren't warnings about AI in general. They're warnings about a specific workflow failure: generation without a gate.

Step 1. Define Your Quality Gates Before You Automate Anything

This is the step most teams skip because it feels like admin work. It isn't. Your quality gates are the architecture of your entire automation program.

Start with three measurable thresholds. First, an information gain standard: every article must contain at least one claim, data point, or perspective that isn't already present in the top three ranking results for its target keyword. This sounds abstract until you operationalize it. In practice, it means your brief-generation stage needs to pull the current SERPs and explicitly identify the gaps your piece will fill. If you can't name the gap, you don't have a brief. You have a topic.

Second, an E-E-A-T signal requirement. For AI search visibility specifically, this means every published piece needs a named author with a verifiable profile, at least two outbound citations to primary sources, and language that reflects direct experience rather than generic instruction. 83% of AI users find AI-powered tools more efficient than traditional search, per the same Nature Communications citation framework. Which means your content is competing not just for Google rankings but for inclusion in AI-generated answers. Generic content loses that competition every time.

Third, a keyword cannibalization check before the article enters the generation queue. Not after. If your planning stage doesn't include a scan against your existing published inventory, you're building the problem into the workflow from the start. Tools like Google Search Console's performance report can surface this manually; a proper ai powered content creation platform should run it automatically at the topic-selection stage.

The number that should anchor your quality standard: Meev's quality firewall scores articles across 16 dimensions and blocks anything below 70/100 from auto-publishing. That threshold isn't arbitrary. It reflects the floor at which content demonstrates enough information gain, structural integrity, and E-E-A-T signals to compete in 2026 SERPs. Set your own threshold explicitly before you run a single generation job.

Step 2. Build a Workflow That Separates Generation from Approval

The mistake teams make is treating AI generation and publishing as one continuous pipe. They're not. They're two separate stages with different quality criteria, and conflating them is exactly what produces the 100-posts-per-hour disasters.

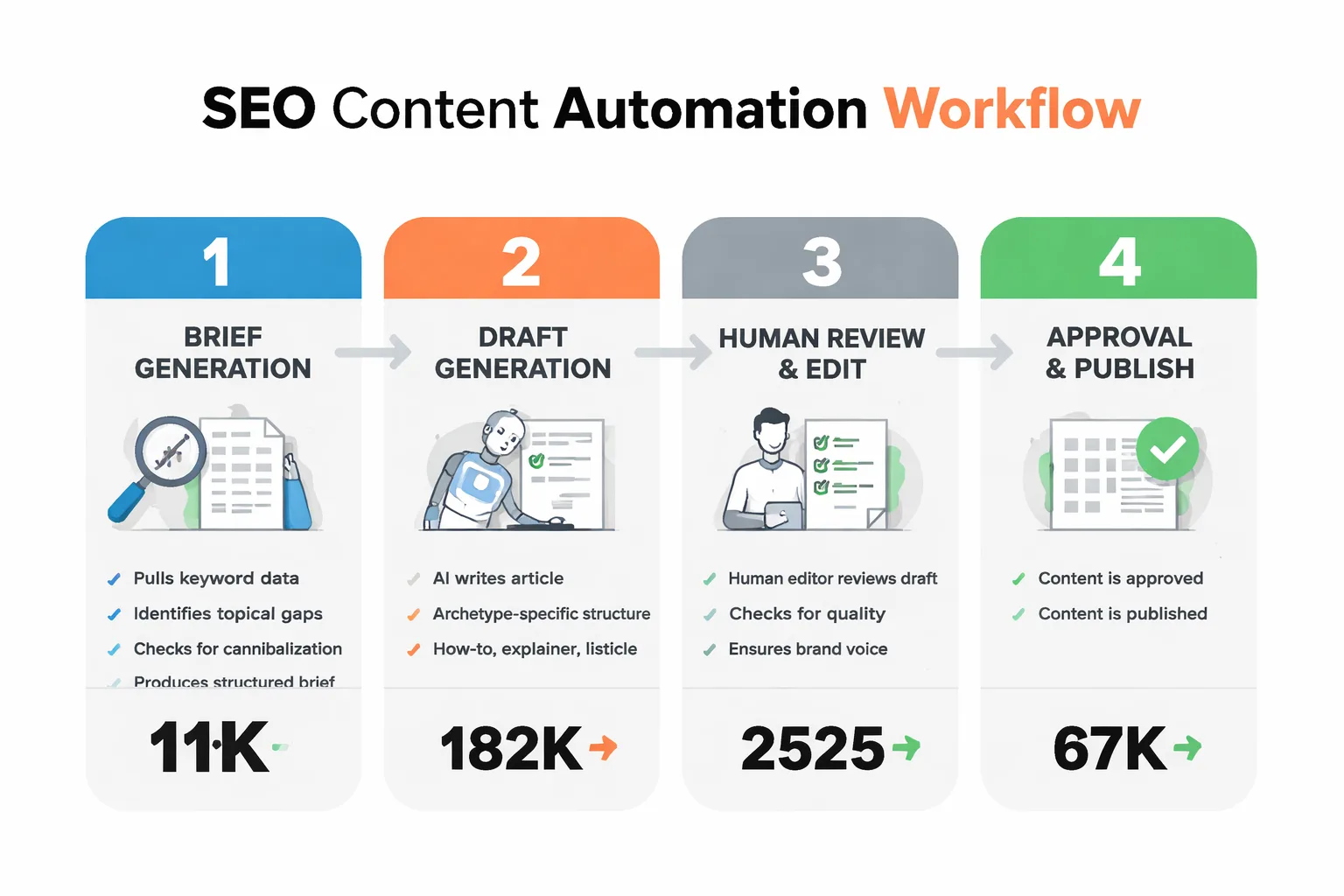

Here's the workflow I recommend:

Stage 1: Brief generation. AI pulls keyword data, identifies topical gaps against current SERPs, checks for cannibalization against existing content, and produces a structured brief. No prose yet. The brief includes: target keyword, information gain angle (what this piece will say that the top results don't), required data points or primary sources, and author entity to attach.

Stage 2: Draft generation. AI writes the article against the brief. This is where archetype matters. A how-to article has different structural requirements than an explainer or a listicle. Treating every topic the same way produces content that technically covers the keyword but doesn't match what the reader expects to find.

Stage 3: Automated QA. Before any human sees the draft, run it through automated checks: plagiarism scan, factual flag review (any claim that can't be source-traced gets flagged), keyword cannibalization confirmation, and your quality score threshold. Anything below your floor goes back to generation with a specific failure reason, not a generic rejection.

Stage 4: Human editorial review. This is not a full rewrite. It's a 10-15 minute pass that answers three questions: Does this contain a genuine information gain claim? Does it read like a practitioner wrote it? Are the outbound citations authoritative? If yes to all three, approve. If no to any, return with specific notes.

Stage 5: Publish with indexing signals. Submit to CMS, trigger IndexNow ping, and submit to Google Search Console sitemap. These two indexing steps are skipped by most auto-blog setups and they matter for crawl frequency.

The human review layer is non-negotiable. I know that sounds like a concession to manual work, but the math holds: a 15-minute review on a piece that takes 8 minutes to generate is still 75% faster than traditional production. You're not eliminating human judgment. You're repositioning it at the approval gate instead of the generation stage.

Step 3. Monitor Output Quality at Scale With the Right Signals

Publishing is not the end of the workflow. It's the beginning of the measurement loop.

The metrics most teams track (pageviews, sessions) are lagging indicators. By the time they tell you something is wrong, you've published 40 more pieces with the same problem. Here are the signals that give you earlier warning:

AI citation rate. Are your published articles being cited by ChatGPT, Perplexity, or Google AI Overviews? This is now a meaningful quality proxy. A Virayo GEO case study documented a SaaS client generating 20+ free trial signups per month directly from ChatGPT citations after implementing content clustering and internal linking optimization. If your automated content isn't earning AI citations, it's likely failing the information gain threshold even if it's passing your automated QA. Tracking ChatGPT visibility and Perplexity citation share gives you a leading signal on content quality that organic rankings don't surface for weeks.

Crawl frequency. If Google is crawling your new content less frequently over time, that's a signal your site-level quality score is declining. Check this in Google Search Console's crawl stats. A healthy automated content program should maintain or improve crawl frequency as the topical authority of the domain builds.

Content decay signals. Impressions dropping on pieces published more than 90 days ago, with no ranking change, indicates the content was never strong enough to hold a position. This is different from normal ranking volatility. Build a monthly decay audit into your workflow: any piece with declining impressions and no top-10 ranking after 90 days gets evaluated for consolidation or deletion.

Organic CTR against expected. If a piece ranks in positions 1-3 but has a CTR below 2%, the title or meta description is mismatched to search intent. At scale, this pattern suggests your brief-generation stage is targeting keywords without properly modeling what the searcher actually wants to find.

For Google AI Overviews optimization specifically, note that Google AIO has roughly a 54% overlap with organic rankings. If you're ranking, you have a real head start on AIO inclusion. That overlap means your quality gates for organic SEO and for AI Overviews visibility are largely the same. Which simplifies the measurement problem considerably.

Want to see how a 16-dimension quality firewall blocks weak drafts before they reach your CMS?

The Automation Stack That Actually Holds Up

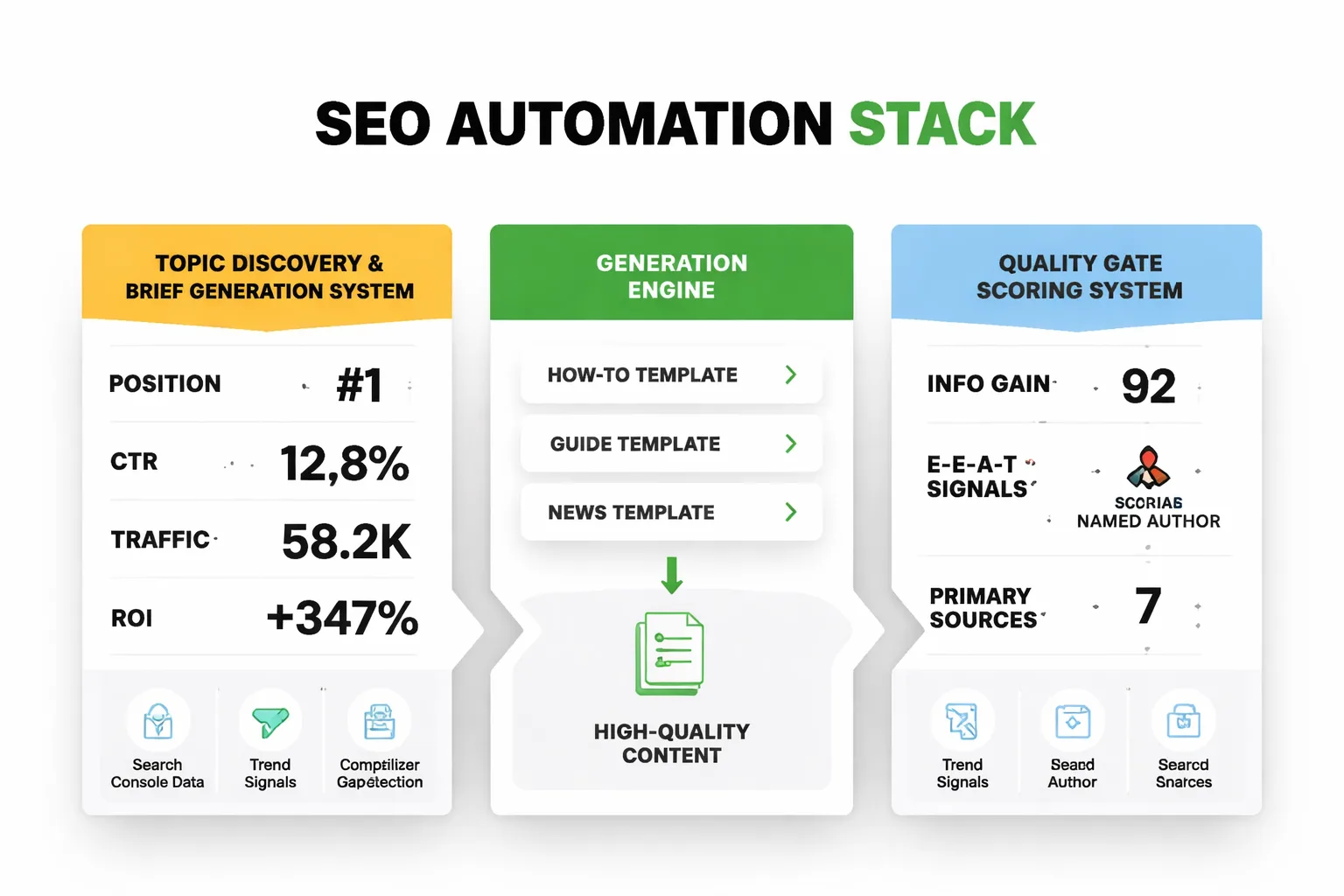

I'll give you the concrete stack, not a list of generic tool categories.

For topic discovery and brief generation, you need a system that pulls from multiple keyword sources (Search Console data, trend signals, competitor gap analysis) and runs cannibalization detection before any generation starts. This is where most off-the-shelf solutions fail. They generate topics without checking what you've already covered.

For generation, archetype-aware prompting matters more than which LLM you use. A how-to article needs different structural scaffolding than an explainer. The model doesn't know the difference unless the prompt encodes it. If you're running your own prompts, build separate templates for each content type and test them against your quality threshold before deploying at scale.

For the quality gate, you need a scoring system that evaluates multiple dimensions simultaneously: information gain (is there a unique claim?), E-E-A-T signals (named author, primary source citations), structural integrity (does the piece have a coherent argument?), and Google Penalty Risk (thin content, keyword stuffing, duplicate intent). Meev's 16-dimension quality firewall blocks drafts below 70/100 automatically. If you're building your own gate, start with those four categories and assign weighted scores.

For publishing and indexing, multi-CMS support matters if you're running content programs across multiple client domains. The indexing step (IndexNow + GSC sitemap submission) should be automatic on every publish, not manual. This is a detail that compounds at scale. Skipping it on 50 articles per month means 50 pieces sitting in a crawl queue instead of getting indexed promptly.

For AI search visibility measurement, you need per-engine tracking. Perplexity source selection and Google AI Overviews reward different content properties. Perplexity leans toward conversational, direct-answer formats; Google AIO has that 54% organic ranking overlap. Tracking them separately tells you which content types are working where. Claude visibility and Gemini visibility round out the picture for brands that need full-spectrum AI search coverage.

A realistic weekly output cadence for a solo founder or small team: 8-10 articles per week, with 2-3 hours of human review time distributed across the week (roughly 15-20 minutes per piece). That cadence produces 400-500 articles per year without triggering spam signals, assuming your quality gate is holding. Compare that to the 100-posts-per-hour failure case. The difference isn't the AI model. It's the gate.

Where This Approach Breaks Down

I want to be honest about the scenarios where quality-gated automation doesn't save you.

Highly regulated or YMYL topics. Health, legal, and financial content requires more than a quality score and a named author. The E-E-A-T bar for these categories is genuinely high. Google expects demonstrable professional credentials, not just an author bio. An automated workflow with a 15-minute human review is not sufficient for a medical information site. The gate needs to be a credentialed human editor with domain expertise, not a generalist reviewer.

Topics with no information gain available. Some keyword targets are genuinely saturated. The top three results are comprehensive, well-cited, and regularly updated. If your brief-generation stage can't identify a real gap, the honest answer is to skip the topic, not generate a weaker version of what already exists. Automation makes it tempting to publish anyway because the marginal cost of generation is low. That temptation is exactly how you accumulate the thin content inventory that triggers Helpful Content suppression.

New domains with no topical authority. A quality-gated workflow on a brand-new domain still faces the cold-start problem. Google needs to establish what your site is about before it rewards your content with meaningful rankings. Publishing 10 quality articles per week across five different topic clusters on a new domain reads as topical confusion, not authority. Narrow the focus for the first 90 days, then expand.

FAQ

How many articles per week can I safely automate without triggering Google spam signals?

There's no universal number, but the pattern I've observed is that cadence matters less than consistency and quality gate integrity. A site publishing 10 quality-gated articles per week will outperform one publishing 3 thin articles per week. The risk threshold isn't volume. It's the ratio of low-quality to high-quality output. If your gate is blocking 20-30% of drafts as failing quality checks, that's a healthy signal your filter is working. If it's blocking 0%, your threshold is probably too low.

Does E-E-A-T optimization actually improve LLM citation rates?

For Google AI Overviews, yes. There's a meaningful overlap with organic rankings, and E-E-A-T signals that improve rankings also improve AIO inclusion. For Perplexity and ChatGPT, the evidence is less direct. Reddit accounts for roughly 40% of LLM citation share, and those threads aren't E-E-A-T optimized. What seems to matter more for conversational AI engines is directness of answer and platform distribution. E-E-A-T is necessary but not sufficient for full-spectrum AI search visibility.

What's the minimum viable human review for automated content?

Three questions, 15 minutes: Does this contain a genuine information gain claim? Does it read like a practitioner wrote it? Are the outbound citations authoritative? If you can't answer yes to all three in 15 minutes, the draft needs more work. This isn't a full editorial review. It's a gate check. Full rewrites belong in the generation stage, not the approval stage.

How do I track whether my automated content is being cited by AI engines?

You need per-engine visibility tracking, not just organic rank monitoring. Meev's AI visibility platform tracks mention position and citation share across ChatGPT, Perplexity, Google AI Overviews, Claude, Gemini, and Grok with daily refresh on SERP-driven surfaces. The citation data gives you a leading quality signal. If automated content isn't earning citations within 60-90 days of publish, it's likely failing the information gain threshold even if it passed your automated QA.

Can I automate SEO for multiple client domains without managing separate workflows?

Yes, but you need multi-domain infrastructure with per-domain quality settings, not a single workflow applied uniformly. Different clients have different E-E-A-T requirements, different topical authority baselines, and different CMS targets. A workflow that works for a SaaS blog will fail for a local services site. The quality gate thresholds and brief templates should be configured per domain, even if the underlying generation and review process is standardized.

About the Author

Judy Zhou, Head of Content Strategy

Judy Zhou leads content strategy at Meev, where she oversees AI-driven content research and publishing for hundreds of brands. With a background in SEO and editorial operations, she focuses on building content systems that rank on Google, get cited by AI search engines, and drive measurable business results.

Stop publishing AI content that tanks your rankings. Meev's quality-gated auto-blog engine scores every draft across 16 dimensions and blocks anything below 70/100 — automatically.