By Judy Zhou, Head of Content Strategy

Key Takeaways

- AI search visibility tools measure citation sourcing — which URLs models pull from when answering queries — not just brand mention counts; the mention-citation gap is the metric that actually matters.

- Meev is the only tool in this roundup that combines multi-LLM citation tracking with a quality-gated content engine, making it the strongest choice for teams that need to act on visibility gaps, not just monitor them.

- Profound leads on enterprise analytics depth (up to 10 LLMs, SOC2, competitive brand perception graphing), but its Starter tier is ChatGPT-only — Growth at $399/month is the minimum for multi-engine coverage.

- Before paying for any AI visibility tool, demand five things: named LLM coverage list, URL-level citation attribution, 90-day trend history, export capability, and documented accuracy methodology — tools that can't answer these questions aren't ready.

In early 2023, when ChatGPT crossed 100 million users and brands first noticed their organic traffic curves bending in strange new directions, nobody had a tool to explain what was happening inside the model. Marketers could see the effect (answer engines absorbing queries that once drove clicks), but the mechanism was a black box. By 2024, a handful of startups began instrumenting that black box: crawling LLM outputs, logging citations, mapping the gap between brand mentions and actual sourced references. By 2026, that niche has matured into a legitimate software category, and the best AI search visibility tools in it are genuinely worth paying for.

I lead content strategy at Meev, where we work with brands trying to earn citations across ChatGPT, Perplexity, Claude, and Google AI Overviews — not just rank in the ten blue links. The evaluation framework I use for these tools is different from how I'd evaluate a rank tracker, and I think most buyers get this wrong. So before I rank anything, let me explain what these tools actually do.

What These Tools Actually Measure (and What They Don't)

AI search visibility, as HubSpot's playbook for marketers defines it, measures how often a brand is mentioned, how owned content is cited, and how mentions are framed in model responses. Those are three distinct things, and most tools only do one or two of them well.

Traditional rank tracking asks: "Where does my URL appear in a SERP?" AI visibility tracking asks something harder: "When a user asks a question my brand should answer, does the model cite me, mention me without attribution, or ignore me entirely?" That last distinction — the mention-citation gap — is the thing I wish more teams understood before they buy. A tool that only counts brand mentions is measuring the wrong thing. Mentions without citations don't drive traffic. They don't build authority. They're noise.

Here are the mechanics: LLMs generate responses by predicting tokens based on training data and, in retrieval-augmented systems, live web content. When Perplexity answers a query, it's pulling from indexed sources and attributing them. When ChatGPT answers with web browsing enabled, it's doing something similar. The HubSpot AI citation tracking guide makes the point clearly: "visibility inside AI answers is no longer a vanity metric." It's a sourcing question. Whether your content gets pulled as a source, or just referenced vaguely, determines whether you get the downstream traffic and authority signal. AI visibility tools instrument that sourcing layer. The good ones tell you which of your URLs are being cited, for which prompts, across which engines, and how that's trending over time.
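To make the mention-citation gap concrete, here is a minimal Python sketch of how it could be computed from a log of AI answers. Everything in it is an assumption for illustration: the `Response` record shape, the `Acme` brand, and the `acme.example` domain are hypothetical, and no vendor's actual method is implied. It counts an answer as a mention when the brand name appears in the text, and as a citation only when at least one cited URL sits on the owned domain.

```python
from collections import Counter
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class Response:
    """One logged answer from an AI engine (hypothetical record shape)."""
    engine: str
    prompt: str
    text: str
    cited_urls: list[str] = field(default_factory=list)


def is_owned(url: str, owned_domain: str) -> bool:
    """True if the URL's host is the owned domain or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return host == owned_domain or host.endswith("." + owned_domain)


def mention_citation_gap(responses, brand, owned_domain):
    """Return mention rate, citation rate, their difference, and cited owned URLs."""
    mentioned = cited = 0
    cited_pages = Counter()
    for r in responses:
        if brand.lower() in r.text.lower():
            mentioned += 1
        owned = [u for u in r.cited_urls if is_owned(u, owned_domain)]
        if owned:
            cited += 1
            cited_pages.update(owned)
    total = len(responses) or 1  # avoid division by zero on an empty log
    return {
        "mention_rate": mentioned / total,
        "citation_rate": cited / total,
        "gap": (mentioned - cited) / total,
        "cited_pages": cited_pages,
    }


# Hypothetical sample log: three mentions, only one citation of an owned URL.
log = [
    Response("perplexity", "best crm", "Acme is a popular option.",
             ["https://acme.example/guide"]),
    Response("chatgpt", "best crm", "Many teams use Acme.",
             ["https://reviews.example/acme"]),
    Response("claude", "best crm", "Options include Other Co.", []),
    Response("gemini", "best crm", "Acme and Other Co both fit.", []),
]
stats = mention_citation_gap(log, "Acme", "acme.example")
print(stats["mention_rate"], stats["citation_rate"], stats["gap"])
```

In this sample the brand is mentioned in three of four answers but cited in only one, so the gap is half the prompt set. The `cited_pages` counter is the URL-level view: it shows which owned pages are doing the work.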

What they don't measure — and this is the uncomfortable truth I've had to sit with — is direct revenue impact. I've audited articles optimized heavily for AI citation that Perplexity was pulling from regularly, and Google Analytics showed almost nothing. That's not a content failure. That's a measurement gap the industry hasn't closed yet. Keep that in mind when a vendor promises ROI dashboards.

E-E-A-T is worth mentioning here too, because it comes up constantly in GEO conversations. Google's own documentation and analysis from BrightEdge both confirm that E-E-A-T isn't a score you can measure — it's a qualitative framework that influences how algorithms evaluate content. There's no correlation coefficient between an "E-E-A-T score" and AI citation rates, because no such score exists. What does exist, per Quattr's analysis, is a binary inclusion/exclusion dynamic: credibility gaps that once cost you ranking positions now cost you inclusion in AI answers entirely. AI visibility tools can't score your E-E-A-T. But they can show you whether you're being included — which is the outcome E-E-A-T signals are supposed to drive.

The 5 Tools, Ranked by Real-World Utility

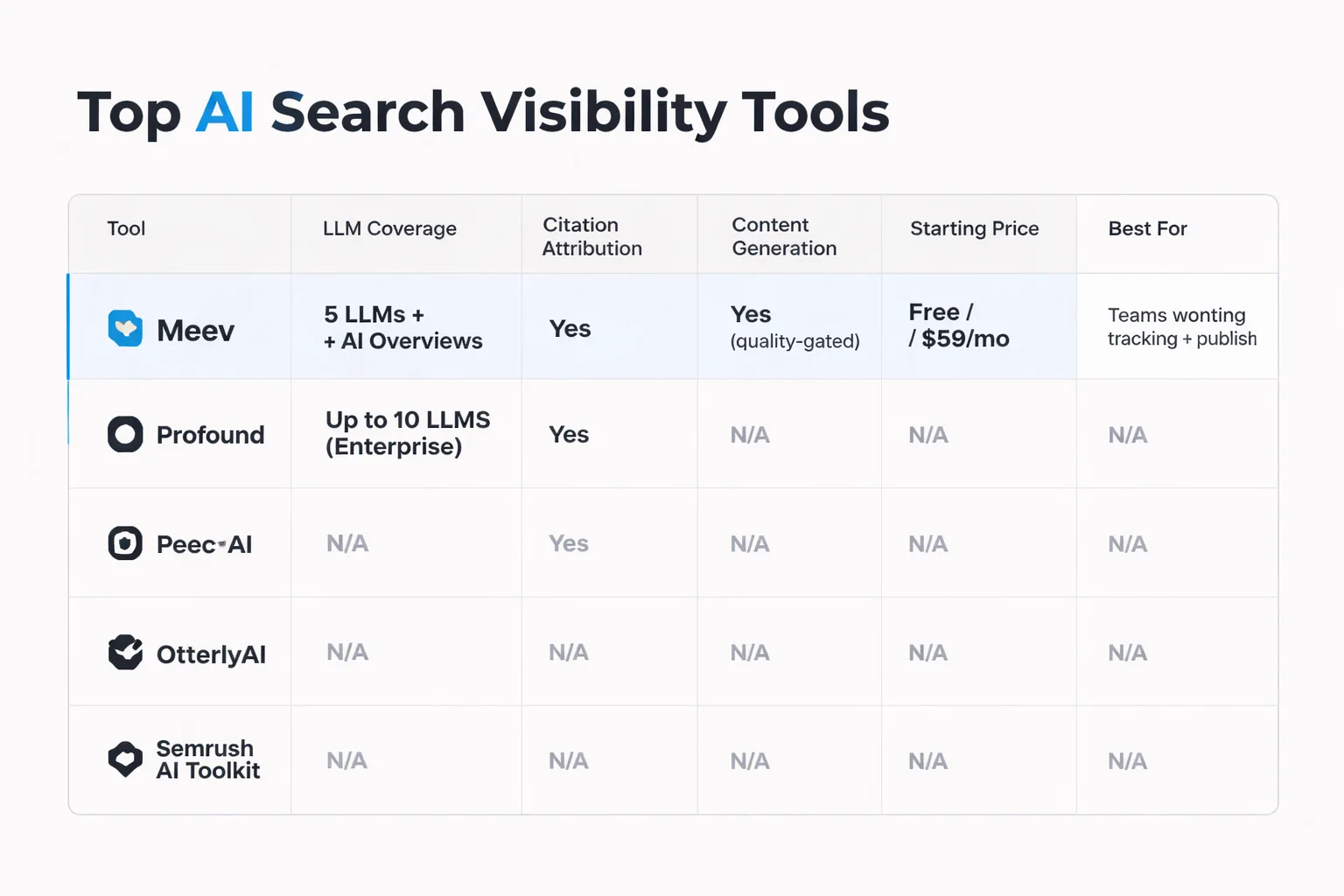

Image: Comparison infographic ranking five AI search visibility tools (Meev, Profound, Peec AI, OtterlyAI, and Semrush AI Toolkit) across six dimensions: LLM coverage (number of engines tracked), citation source attribution (yes/no/partial), trend tracking over time, export capability, pricing transparency, and content generation included, with color-coded cells.

The tools below are ranked by how useful they are for teams doing actual generative engine optimization work — not just dashboarding. I'm looking at LLM coverage breadth, citation source attribution, pricing transparency, and whether the tool closes the loop between insight and action.

| Tool | LLM Coverage | Citation Attribution | Content Generation | Starting Price | Best For |
| --- | --- | --- | --- | --- | --- |
| Meev | 5 LLMs + AI Overviews | Yes | Yes (quality-gated) | Free / $59/mo | Teams wanting tracking + publishing |
| Profound | Up to 10 LLMs (Enterprise) | Yes | No | $99/mo | Enterprise analytics teams |
| Peec AI | 9+ AI engines | Yes | No | €89/mo | Mid-market SaaS, multi-language |
| OtterlyAI | ChatGPT, Perplexity, Google AI Overviews, Claude | Yes | No | $29/mo | Solopreneurs, small agencies |
| Semrush AI Toolkit | 7 engines + Google AI Overviews | Yes | Partial (via Semrush) | $99/mo add-on | Existing Semrush subscribers |

1. Meev — Best for Teams That Need Tracking and Publishing

Best for: Teams that want AI citation tracking plus a content engine they can trust to publish — not just a dashboard that surfaces problems.

Meev tracks citations across ChatGPT, Claude, Gemini, Perplexity, and Grok on a hybrid daily and twice-weekly cadence, which matters more than it sounds. Citation patterns in volatile query categories can shift within days, and weekly-only refresh tools miss those moves. What separates Meev from every other tool on this list is the closed loop: it doesn't just show you where you're missing citations. It generates content designed to earn them, gates every article through a 12-dimension Quality Matrix and a Google Penalty Risk Matrix (70/100 publish threshold on both), and maps each article back to the citation-rate delta it drove.

Key features:

- Citation tracking across ChatGPT, Claude, Gemini, Perplexity, and Grok with a hybrid daily and twice-weekly cadence

- 12-dimension Quality Matrix plus Google Penalty Risk Matrix (70/100 publish gate on both)

- Knowledge Base enforcement: articles grounded in your approved claims, not AI hallucination

- Autopilot topic pool with gap detection from competitor citation patterns

Pricing: Free forever tier. Starter $59/mo, Pro $199/mo, Agency $599/mo. 20% annual discount. Cancel anytime; hard-cap quotas with no overage fees.

The quality gate is the thing I find most credible about Meev's approach. The pattern I keep seeing across content teams is that AI visibility tracking creates a firehose of gap data with no systematic way to act on it. Meev's architecture treats content quality scoring as a pre-publication requirement, not an afterthought. For teams that have been burned by scaled content abuse — publishing volume without editorial standards — that gate is the difference between building topical authority and accumulating penalty risk. If you want a direct comparison against Profound's analytics-first approach, Meev's head-to-head breakdown is worth reading before you commit to either.

2. Profound — Best for Enterprise AI Visibility Intelligence

Best for: Enterprise marketing and SEO teams that need research-grade AI visibility intelligence and multi-engine competitive analysis at scale.

Profound is the most analytically rigorous tool in this category. Its prompt-level tracking with AI-generated prompt suggestions, citation analysis down to the URL level, and competitive brand perception graphing give enterprise teams a level of visibility intelligence that smaller tools can't match. If your job is to present AI share-of-voice data to a CMO or brief an agency on where a brand stands across ten LLMs simultaneously, Profound is built for that workflow.

Key features:

- Prompt-level tracking with AI-generated prompt suggestions across up to 10 LLMs on Enterprise

- Citation analysis showing which URLs, domains, and pages are sourced by AI engines

- Share of Voice and brand visibility scoring by topic, region, and platform

- Competitive benchmarking with brand perception graphing and deep competitor mapping

Pricing: Starter $99/month (ChatGPT-only, 50 prompts); Growth $399/month (3 engines, 200+ prompts); Enterprise custom (up to 10 engines, multi-company tracking, SSO/SOC2). First month of Self-Serve available at $95 (67% off).

The honest limitation: the Starter tier is ChatGPT-only. If you need Perplexity or Google AI Overviews data — and you should, given how differently those engines select sources — you're on the Growth plan at $399/month before you've even started. The dashboards are data-rich, which is a strength, but teams without a dedicated analyst will find the learning curve real.

3. Peec AI — Best for Multi-Language, Multi-Engine Tracking

Best for: Mid-market SaaS and e-commerce brands that need fast, structured AI visibility reporting across multiple engines without a complex enterprise setup.

Peec AI tracks across 9+ AI engines including ChatGPT, Perplexity, Gemini, Claude, DeepSeek, Copilot, Llama, and Grok — the broadest engine coverage of any tool in this roundup at the mid-market price point. Its entity and product-level tracking is genuinely useful for e-commerce brands that need to track individual SKUs or sub-brands in AI answers, not just the parent brand. The 115+ language support makes it the default choice for teams running global campaigns. If you're comparing Peec against Meev's approach to citation outreach and content generation, this detailed breakdown covers where each tool's workflow diverges.

Key features:

- Multi-platform monitoring across 9+ AI engines with unlimited seats on all plans

- Entity and product-level tracking that drills into individual SKUs, features, or sub-brands in AI answers

- Visibility, Position, and Sentiment scoring (0–100) with prompt-level strategy recommendations

- 115+ language support for global brand tracking with multi-country dashboards

Pricing: Starter €89/month (up to 25 prompts, ~2,250 answers/month, unlimited seats); Pro €199/month (up to 100 prompts, ~9,000 answers/month); Enterprise €499+/month (300+ prompts, ~27,000 answers/month, dedicated account rep). Additional AI engines available as paid add-ons.

The gap: Peec doesn't generate content. It's a pure analytics layer, which means you'll need a separate workflow to act on what it surfaces. For teams that already have a content operation and just need the measurement infrastructure, that's fine. For teams still building their GEO content engine, the lack of an action layer is a real friction point.

4. OtterlyAI — Best for Small Teams and Agencies on a Budget

Best for: Solopreneurs, small marketing teams, and agencies wanting affordable, fast brand monitoring across major AI engines without committing to enterprise pricing.

OtterlyAI earned a 2025 Gartner Cool Vendor designation, which is notable for a tool at this price point. Its Prompt Research Tool — which identifies the queries buyers actually use across AI engines — is genuinely useful for informing a citation building strategy, not just monitoring. The GEO Audit Tool analyzing 25+ on-page factors gives small teams an actionable optimization checklist that would otherwise require a consultant. At $29/month for the Lite tier, it's the lowest-friction entry point into structured AI visibility monitoring.

Key features:

- Prompt Research Tool that identifies the queries buyers actually use across AI engines

- GEO Audit Tool analyzing 25+ on-page factors for generative engine optimization

- Citation and link tracking identifying source URLs and AI-driven referral opportunities

- Competitive share-of-voice analysis with brand sentiment monitoring and Looker Studio connector

Pricing: Lite $29/month; Standard $189/month; Premium/Pro $489/month; Enterprise custom pricing. 14-day free trial available with no credit card required.

The ceiling is real, though. No direct API for data export limits how far you can push OtterlyAI into a larger analytics stack. And it doesn't estimate AI-driven traffic, so attribution stays manual. For a solo content marketer or a small agency managing a handful of clients, those gaps are acceptable. For anyone running LLM optimization at scale, you'll outgrow this tool.


5. Semrush AI Toolkit — Best for Existing Semrush Users

Best for: SEO and content teams already using Semrush who want AI visibility data in the same environment they use for rank tracking, keyword research, and site audits.

The Semrush AI search visibility checker is the most convenient option on this list — if you're already paying for Semrush. Tracking brand presence across ChatGPT, Google AI Overviews, Gemini, Claude, Grok, Perplexity, and DeepSeek from the same dashboard you use for keyword research removes the context-switching that makes multi-tool workflows slow. The competitor citation comparison is particularly useful: seeing which domains and URLs rivals are cited from in AI answers maps directly to a publisher pitching and brand mention outreach strategy.

Key features:

- AI Brand Visibility tracking leveraging four popular LLMs alongside Google AI Overview desktop and mobile monitoring

- Competitor citation comparison showing which domains and URLs rivals are cited from in AI answers

- Integrated keyword research and content workflows so AI visibility gaps can be closed without exporting CSVs

- Weekly AI Overview trend tracking with a free AI Overview Study Tool showing trigger frequency data

Pricing: AI Visibility Toolkit starts at $99/month per domain; Semrush One (full SEO Toolkit + AI visibility) starts at $199/month; Enterprise AIO available at custom pricing for multi-brand and agency scale.

The honest caveat: this is an add-on cost on top of your existing Semrush subscription. If you're on a $249/month Standard plan and add the AI Toolkit at $99/month, you're at $348/month before you've touched enterprise features. For teams not already in the Semrush ecosystem, purpose-built tools like Profound or Peec AI will give you more for less. Confirm which LLMs and language markets are live before committing — coverage for newer engines is still expanding.

Head-to-Head — Which Tool Fits Which Use Case

The AI tool comparison question I get most often isn't "which tool is best" but "which tool is best for my situation." Here's how I'd map it.

Solo content marketer or early-stage startup: OtterlyAI at $29/month gets you structured monitoring and a GEO audit checklist without overcommitting budget. If you're also producing content and want the tracking and publishing loop in one place, Meev's free tier is worth testing before you pay anything.

Agency managing multiple client brands: The unlimited seats on Peec AI's plans make it structurally attractive for agencies — you're not paying per user as your team grows. OtterlyAI's Looker Studio connector is useful for client reporting. Meev's Agency plan at $599/month is designed explicitly for multi-brand publishing at scale, with the quality gate protecting you from the reputation risk of shipping low-quality content under client names.

In-house SEO or content team at a mid-market company: This is where Meev's Pro plan or Peec AI's Pro tier compete most directly. The deciding factor is whether you need content generation (Meev) or just analytics (Peec). If your team already has writers and just needs the measurement layer, Peec's €199/month Pro plan is strong. If you're trying to scale content output alongside tracking, Meev's closed-loop architecture justifies the additional cost.

Enterprise marketing or brand team: Profound is the default recommendation at this level — the multi-engine coverage, SOC2 compliance, SSO, and research-grade competitive analysis are built for enterprise procurement. AthenaHQ is worth evaluating as well, particularly for organizations already using GA4 and Google Search Console as their measurement layer. Semrush AI Toolkit wins if you're already a Semrush Enterprise customer and want to minimize vendor sprawl.

One pattern I keep seeing: teams at every size underinvest in the action layer. Tracking citations without a systematic content response is like taking your temperature and skipping the medicine: you have good data and no treatment. The best AI visibility analytics for search optimization aren't the ones with the prettiest dashboards. They're the ones that connect measurement to content decisions you can actually execute. For a broader view of how this category is evolving, the best AI visibility tools roundup for 2026 covers additional players worth knowing.

What to Demand From Any AI Visibility Tool Before You Pay

Five things. Non-negotiable.

1. LLM coverage breadth — and specificity about which engines. "We track major AI engines" is not an answer. Get the list. ChatGPT, Claude, Gemini, Perplexity, Grok, DeepSeek, Copilot — which ones, which versions, which interfaces (web vs. API vs. app)? Coverage gaps matter because citation patterns differ meaningfully across engines. Perplexity source selection is structurally different from how ChatGPT with browsing picks references. A tool that only tracks one engine is giving you a partial picture.

2. Citation source attribution down to the URL level. Brand mention counts are noise. What you need is: when the model cited my brand, which specific URL did it pull from? Was it my homepage, a third-party review, a press mention? That URL-level data tells you whether your content strategy is working and where to focus publisher pitching for AI citations.

3. Trend tracking over time — not just point-in-time snapshots. Citation rates fluctuate. A tool that only shows you today's state can't tell you whether you're gaining or losing ground after a content push. Minimum viable: 90 days of historical data, weekly granularity. Better: daily tracking with anomaly alerts.

4. Export capability. If you can't get your data out of the tool — into a CSV, via API, or into a BI layer — you're renting insight you can't own. This matters especially for agencies that need to merge AI visibility data with other performance signals for client reporting.

5. Accuracy methodology disclosure. This one is the hardest to evaluate and the most important. How does the tool generate its prompt set? How often does it re-run queries? Does it use the same prompts over time (for trend validity) or rotate them (for coverage breadth)? The best tools document this. The ones that don't are selling you a black box to explain a black box — which is exactly where we started in 2023.
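The trend-tracking demand is easier to hold a vendor to once you see how little logic the core calculation needs. Below is a rough Python sketch, assuming you have a log of dated observations where each entry records whether a fixed prompt produced a citation of your content. The function names, the sample data, and the 10-point drop threshold are all assumptions for illustration, not any tool's documented method.

```python
from collections import defaultdict
from datetime import date


def weekly_citation_rate(observations):
    """Group (day, was_cited) pairs by ISO week and return the cited share per week."""
    buckets = defaultdict(lambda: [0, 0])  # week -> [cited, total]
    for day, was_cited in observations:
        iso = day.isocalendar()
        week = (iso[0], iso[1])  # (ISO year, ISO week number)
        buckets[week][0] += int(was_cited)
        buckets[week][1] += 1
    return {week: cited / total for week, (cited, total) in sorted(buckets.items())}


def flag_drops(weekly, max_drop=0.10):
    """Return weeks whose rate fell by more than max_drop versus the previous week."""
    weeks = list(weekly)
    return [
        cur for prev, cur in zip(weeks, weeks[1:])
        if weekly[prev] - weekly[cur] > max_drop
    ]


# Hypothetical log: the same prompt set re-run on four days in each of two weeks.
observations = [
    (date(2026, 1, 5), True), (date(2026, 1, 6), True),
    (date(2026, 1, 7), False), (date(2026, 1, 8), True),
    (date(2026, 1, 12), True), (date(2026, 1, 13), False),
    (date(2026, 1, 14), False), (date(2026, 1, 15), False),
]
weekly = weekly_citation_rate(observations)
print(weekly)
print(flag_drops(weekly))
```

The comparison only means something if the prompt set stays fixed from week to week, which is why the methodology question in demand 5 matters: a tool that rotates prompts will show movement that is partly an artifact of the rotation.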

HubSpot launched a CRM-native deep research connector with ChatGPT on June 4, 2025, letting over 250,000 HubSpot customers query CRM data directly through ChatGPT. That's a signal about where the category is heading: AI visibility isn't just a monitoring problem, it's an integration problem. The tools that survive the next two years will be the ones that embed into existing workflows rather than demanding a separate tab.

The honest state of this category: it's good, not great. The tracking infrastructure has matured faster than the attribution layer. If you want a clear-eyed view of how AI content creation fits into this picture — and where the quality standards are heading — the AI content creation state of play for 2026 is worth reading alongside this tool comparison. The tools are only as useful as the content strategy behind them.

Frequently Asked Questions

Do I need a separate AI visibility tool if I already use Semrush or Ahrefs? Probably yes, at least for now. The Semrush AI search visibility checker and Ahrefs Brand Radar are useful additions to existing subscriptions, but their AI visibility feature depth is narrower than dedicated platforms like Profound or Peec AI. If AI citation tracking is central to your strategy — not a side metric — a purpose-built tool will give you more granular data, faster refresh cadence, and better citation source attribution than a bolted-on module.

How is AI search visibility different from traditional SEO rank tracking? Rank tracking tells you where your URL appears in a list of results. AI visibility tracking tells you whether your content is being sourced, mentioned, or ignored when a model generates an answer. The mechanics are different: AI engines don't return ranked lists, they synthesize responses. That means the measurement question changes from "what position am I in" to "am I cited, and for which prompts."

What is the mention-citation gap and why does it matter? The mention-citation gap is the difference between an AI model referencing your brand by name and actually sourcing your content as a cited URL. Mentions without citations don't drive referral traffic and don't signal to the model that your content is authoritative. Tools that only count mentions are measuring a weaker signal. The tools worth paying for distinguish between the two and tell you which of your URLs are being cited — not just whether your brand name appeared.

How many prompts do I need to track for meaningful AI visibility data? This depends on your category, but the pattern I keep seeing is that teams underestimate prompt volume. A narrow prompt set gives you clean data on a small surface area. Profound's Growth plan at $399/month includes 200+ prompts — that's a reasonable floor for a mid-market brand with multiple product lines. For a focused single-product startup, 25–50 well-chosen prompts can be sufficient if they're genuinely representative of how buyers research your category.

Can these tools tell me why I'm not being cited? Partially. Citation source attribution tells you which competitors are being cited for the prompts where you're absent — that's actionable for content gap analysis and publisher pitching. But the underlying model weighting that determines source selection isn't fully transparent. Tools like AthenaHQ's Action Center and OtterlyAI's GEO Audit get closest to answering "why" by analyzing on-page factors correlated with citation inclusion. That's still inference, not ground truth.

Is there a free way to start tracking AI search visibility before committing to a paid tool? Yes. Meev has a free forever tier. OtterlyAI offers a 14-day free trial with no credit card required. LLMrefs has a freemium tier. Semrush has a free AI Overview Study Tool. For a team just getting started, I'd recommend using the free tier of one dedicated tool alongside manual spot-checking: querying ChatGPT and Perplexity with your target prompts every two weeks and logging what you find. It's not scalable, but it builds intuition for what the paid tools are actually measuring before you spend money on them.
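If you go the manual route, a consistent log format makes the spot-checks comparable over time. Here is a minimal sketch in Python that serializes hand-recorded observations to CSV using only the standard library. The column names and sample rows are assumptions of mine, not a standard schema; adjust them to whatever you actually record.

```python
import csv
import io

# Hypothetical columns for a manual spot-check log.
FIELDS = ["checked_on", "engine", "prompt", "brand_mentioned", "cited_url"]


def spot_checks_to_csv(rows):
    """Serialize spot-check records (dicts keyed by FIELDS) to CSV text."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Two hand-recorded observations for the same prompt on the same day.
rows = [
    {"checked_on": "2026-01-05", "engine": "chatgpt",
     "prompt": "best crm for startups", "brand_mentioned": "yes",
     "cited_url": ""},
    {"checked_on": "2026-01-05", "engine": "perplexity",
     "prompt": "best crm for startups", "brand_mentioned": "yes",
     "cited_url": "https://acme.example/guide"},
]
csv_text = spot_checks_to_csv(rows)
print(csv_text)
```

An empty `cited_url` alongside a "yes" in `brand_mentioned` is the mention-citation gap in its rawest form, and a CSV like this can later be merged with whatever a paid tool exports.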

About the Author

Judy Zhou, Head of Content Strategy

Judy Zhou leads content strategy at Meev, where she oversees AI-driven content research and publishing for hundreds of brands. With a background in SEO and editorial operations, she focuses on building content systems that rank on Google, get cited by AI search engines, and drive measurable business results.
