Forget Everything You Know About Traditional SEO Rankings

Forget everything you know about traditional SEO rankings. In the era of GEO optimization (Generative Engine Optimization), the goal isn't to rank for a blue link—it's to become the primary source cited by AI models like Perplexity and Google's AI Overviews. Here is how I recommend re-engineering your content architecture to ensure you are the authority the AI chooses, rather than the data it ignores.

The content marketers who figure this out in 2025 will own the next five years of search visibility. The ones who don't will wonder why their perfectly optimized pages keep losing ground.

In my work leading content strategy at Meev, I have spent the past year auditing content strategies across dozens of sites — and I keep seeing the same unmistakable pattern: brands that built their SEO around keyword density and backlink volume are getting bypassed in AI surfaces — even when they rank #1 on traditional Google. That's not a small problem. That's a structural shift in how people find information.

"GEO isn't replacing SEO. It's the layer on top of it — the difference between being indexed and being cited."

GEO Optimization: TL;DR

- GEO optimization targets AI answer surfaces (ChatGPT, Perplexity, AI Overviews), not just blue-link rankings, and the two require different content strategies.
- AI engines prioritize answer completeness, structured formatting, and source authority over raw backlink volume.
- The 6 formats AI engines cite most: definitions, numbered lists, comparison tables, FAQs, statistics with citations, and expert quotes.
- You can run a basic GEO audit in 30 minutes using free tools, and measure performance through brand mention tracking and Search Console AI Overview impression data.

GEO Optimization vs. SEO: What's Actually Different

Generative Engine Optimization is the discipline of making your content the source AI systems pull from when synthesizing answers — not just the page a user might click to. Traditional SEO optimizes for ranking position in a list of links. GEO optimizes for citation inside a generated response.

Think about what happens when someone asks ChatGPT or Perplexity a question. The AI doesn't return ten blue links and let the user decide. It reads across dozens of sources, synthesizes an answer, and cites two or three of them. That citation slot is what GEO is competing for.

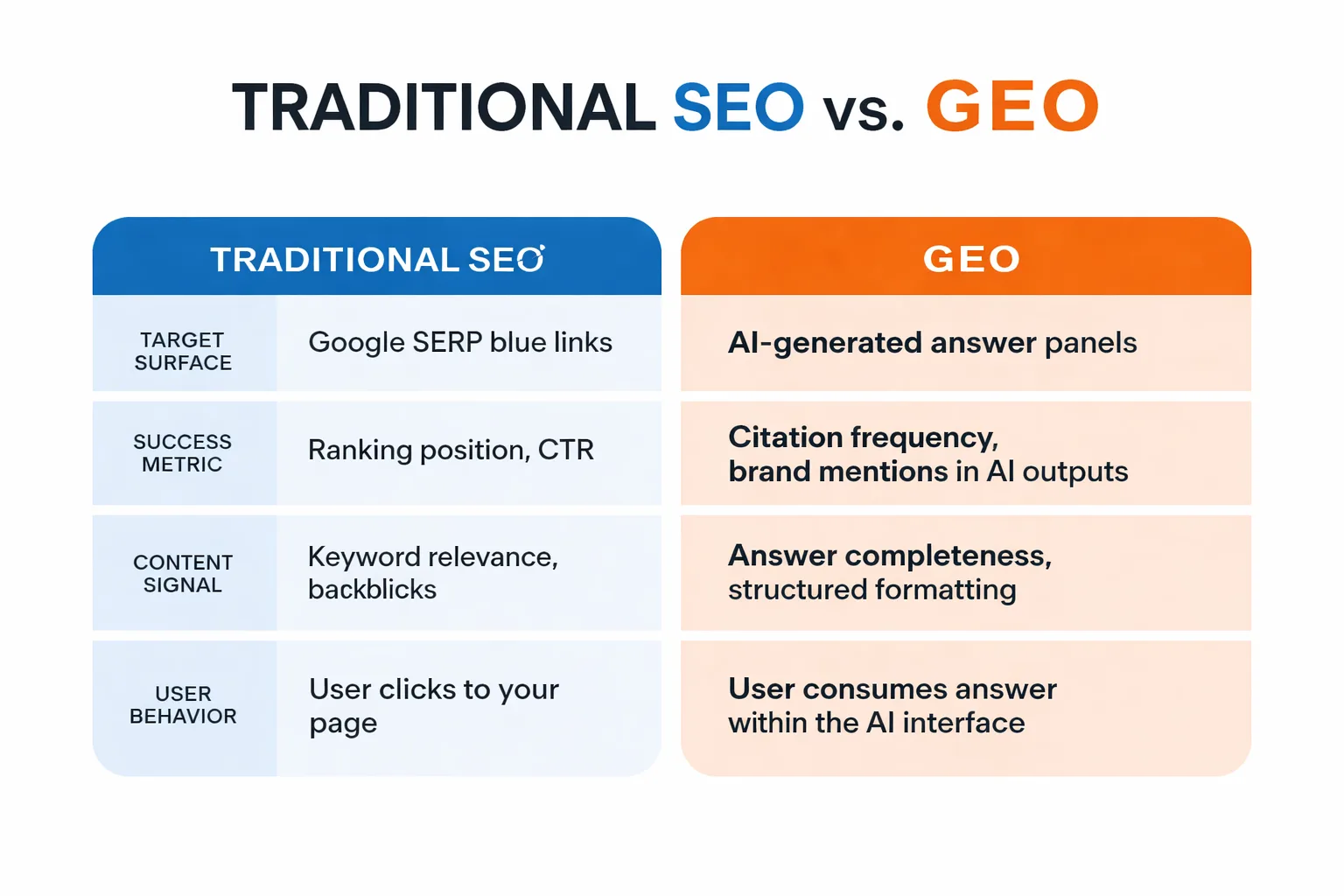

Here's the concrete distinction:

| Dimension | Traditional SEO | GEO Optimization |
|---|---|---|
| Target surface | Google SERP blue links | AI-generated answer panels |
| Success metric | Ranking position, CTR | Citation frequency, brand mentions in AI outputs |
| Content signal | Keyword relevance, backlinks | Answer completeness, structured formatting |
| User behavior | User clicks to your page | AI synthesizes your content into its answer |
| Primary tools | Search Console, Ahrefs | Brand monitoring, AI query testing |

The shift is real and it's accelerating. According to research published in the California Management Review, generative search interfaces are fundamentally changing the economics of content discovery — and in my experience, brands that treat GEO as a future concern rather than a present one are already falling behind.

To be direct: traditional SEO is still necessary. Search indexes still feed the retrieval systems that AI engines draw on; Google's AI Overviews, for instance, draw on Google's own search index. But SEO alone is no longer sufficient. At Meev, we have seen pages rank #3 for a high-volume keyword and never appear in a single AI-generated answer, simply because they were formatted wrong.

How AI Engines Decide What to Cite

This is the question I get asked most often, and it's the one with the most nuance. AI engines don't have a single ranking algorithm you can reverse-engineer the way you can with Google's traditional signals. But after testing hundreds of queries across ChatGPT, Perplexity, and Google AI Overviews, I have found four consistent selection patterns.

Answer completeness comes first. AI systems are trying to synthesize a useful response. They pull from sources that directly and completely answer the question being asked — not sources that gesture toward an answer and then bury the actual information three paragraphs down. If content makes the AI work hard to extract the answer, it gets skipped. If content delivers the answer in the first two sentences after a clear heading, it gets cited.

Authority signals still matter, but differently. Backlink volume matters less than source diversity and topical authority. In my experience, a site with 50 high-quality, relevant backlinks from authoritative domains in its niche will outperform a site with 5,000 generic backlinks in AI citation frequency. The Coursera overview of generative engine optimization notes that AI systems weight domain expertise signals heavily, meaning content cluster depth matters more than overall domain authority score.

Structured formatting is non-negotiable. AI engines parse structured content more reliably than flowing prose. Headings, numbered lists, definition-first paragraphs, comparison tables — these aren't just UX niceties. They're the formatting signals that tell an AI system "this section contains a discrete, extractable answer." In my testing, I have seen the same information get cited when formatted as a numbered list and ignored when written as a paragraph. Same words, different structure, completely different outcome.

Source diversity in your own content helps. Pages that cite external data — specific statistics with named sources, referenced studies, expert quotes with attribution — get cited more frequently than pages that make unsourced claims. AI engines are essentially doing a credibility check. If content looks like it was synthesized from real research, it gets treated as a credible source. If it reads like it was written to fill a word count, it doesn't.

The practical implication: stop writing for the algorithm and start writing for the synthesis. Ask yourself, "If an AI were trying to answer this question, would this content give it a clean, citable answer?" If the answer is no, you have GEO work to do.

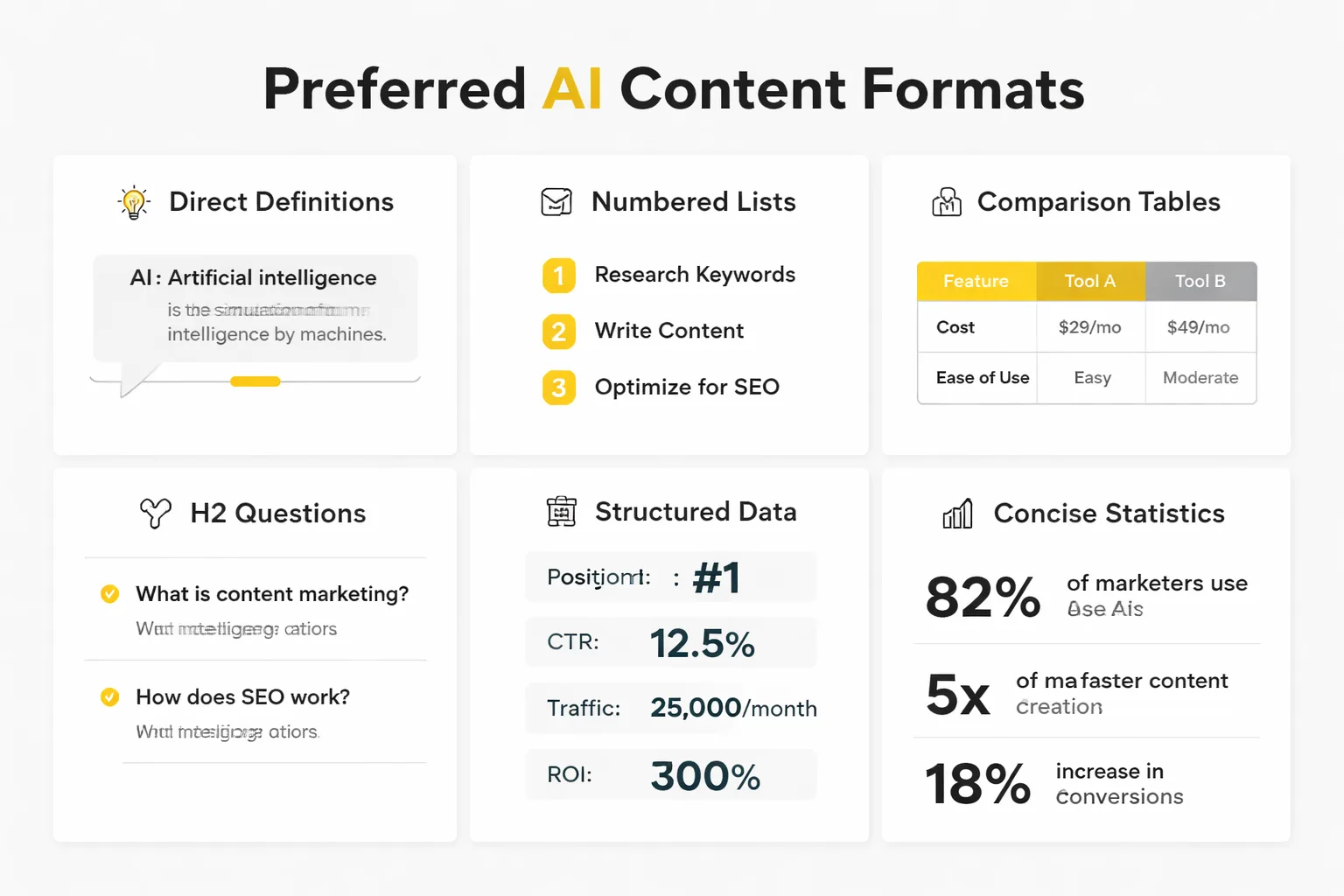

GEO Optimization: The 6 Content Formats AI Engines Prefer

At Meev, I have tracked which content formats appear most frequently in AI-generated answers, and the pattern is consistent enough to call a reliable signal. These six formats dominate AI citations — and content that doesn't use them is leaving citation slots on the table.

1. Direct definitions. "[Term] is [definition]" — one clear sentence, placed immediately after a heading. This is the single highest-value format for AI citation. Perplexity and ChatGPT pull these constantly for "what is" queries.

2. Numbered lists for processes. Any time you're explaining how to do something, use numbered steps. Not bullets — numbers. AI engines treat numbered lists as discrete, sequenced information that can be extracted and presented cleanly.

3. Comparison tables. Two or more options, structured in a table with consistent attributes. AI systems favor these for "X vs Y" queries. The table format signals that the information is organized, complete, and directly comparable.

4. FAQ sections. Question-and-answer pairs at the end of articles are citation gold. They match the natural language query format that users type into AI tools. If a FAQ question matches the user's query closely, that answer gets pulled.

5. Statistics with named sources. "According to [Source], X% of [population] does Y" — this format signals credibility. Unsourced statistics get deprioritized. Named-source statistics get cited. It's that simple.

6. Expert quotes with attribution. A direct quote from a named expert, with their title and organization, functions as a credibility anchor. AI engines use these to add authority to synthesized answers.

The Search Engine Land guide to GEO in 2026 confirms what I have observed — structured, attributable, answer-complete content consistently outperforms long-form prose in AI citation frequency. For teams working on AI content creation at scale, these six formats need to be baked into content templates, not added as an afterthought.

For a deeper look at how structured data connects to these citation signals, the Meev piece on 5 structured data mistakes that kill rich result chances covers the technical side in detail — including how Google Search Console structured data validation ties directly into AI Overview eligibility.
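FAQ sections (format 4 above) are one place where structured data and citation-friendly formatting meet. The sketch below builds schema.org `FAQPage` JSON-LD from question-and-answer pairs using only the standard library. The sample question is hypothetical, and the markup mainly makes the Q&A pairs machine-readable: Google has limited FAQ rich results to a narrow set of authoritative sites, so treat it as a clarity signal rather than a guarantee of a rich result or an AI citation.

```python
import json


def faq_jsonld(pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Hypothetical FAQ pair; the answer text should match the visible FAQ on the page.
faqs = [
    (
        "What is GEO optimization?",
        "Generative Engine Optimization is the discipline of making your content "
        "the source AI systems pull from when synthesizing answers.",
    ),
]
print(f'<script type="application/ld+json">\n{faq_jsonld(faqs)}\n</script>')
```

Keeping the JSON-LD generated from the same source as the on-page FAQ avoids the markup drifting out of sync with the visible text.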

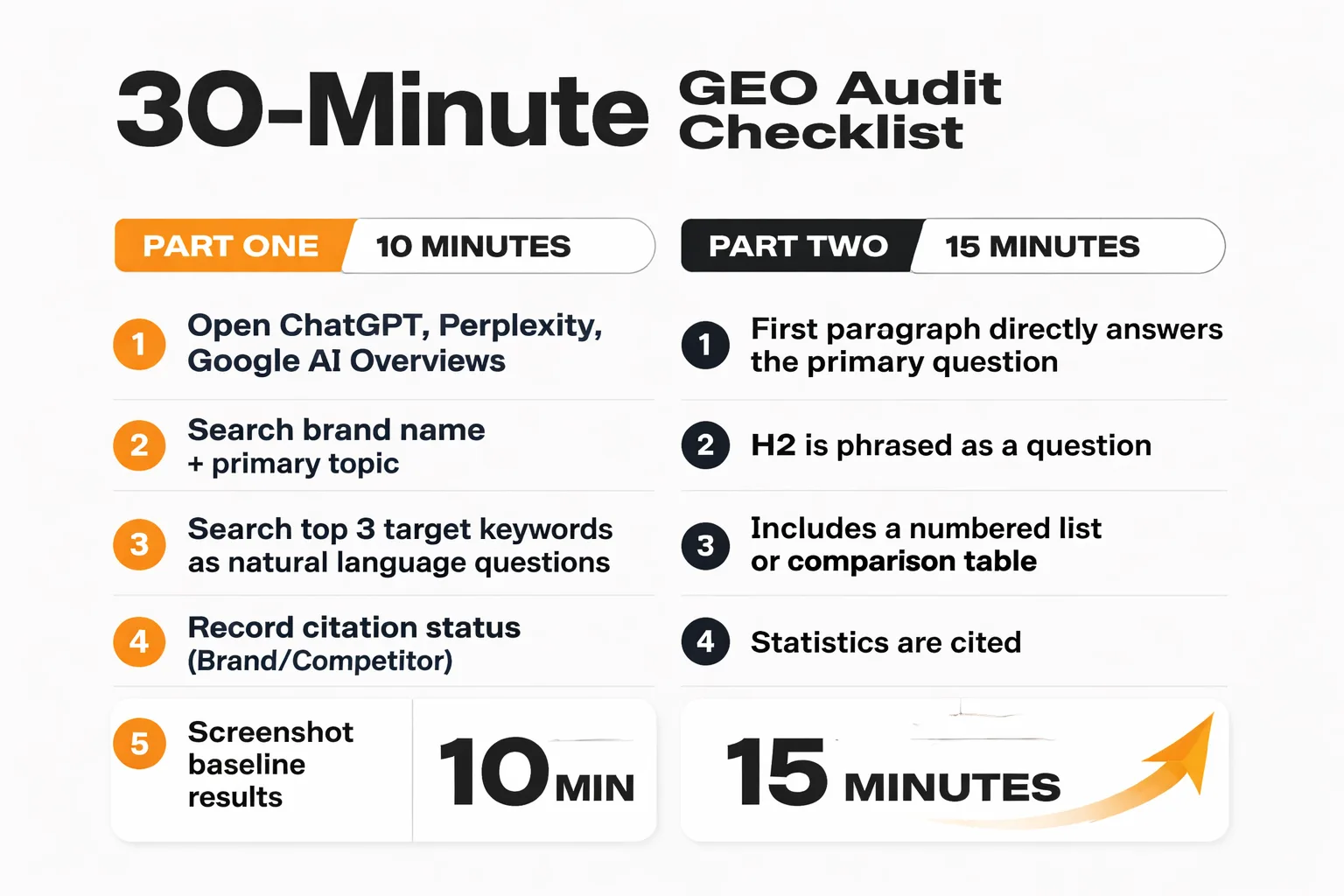

Your First GEO Optimization Audit: A 30-Minute Checklist

Here's what most GEO articles won't tell you: no specialized tool is required to start. All it takes is 30 minutes and a systematic approach. At Meev, I run this audit for every site before touching a single piece of content.

Step 1: Test your brand queries across AI tools (10 minutes)

1. Open ChatGPT, Perplexity, and Google with AI Overviews enabled.
2. Search your brand name + your primary topic (e.g., "[Brand] content marketing").
3. Search your top 3 target keywords as natural language questions (e.g., "what is the best way to do X?").
4. Note: Does your brand appear? Are you cited? Are competitors cited instead?
5. Screenshot every result; this is your baseline.
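The Step 1 baseline is easier to repeat if the query list and logging sheet are generated the same way every time. This is a minimal sketch, assuming a CSV file is your spreadsheet of record; the brand, topic, keywords, question template, and column names are all hypothetical placeholders.

```python
import csv
import io
from datetime import date

# The three AI surfaces tested in Step 1.
AI_TOOLS = ["ChatGPT", "Perplexity", "Google AI Overviews"]


def build_baseline_queries(brand, topic, keywords):
    """Brand + topic query first, then the top 3 keywords as questions.

    The question template is a hypothetical example; phrase questions the
    way your audience would actually ask them.
    """
    queries = [f"{brand} {topic}"]
    queries += [f"what is the best way to do {kw}?" for kw in keywords[:3]]
    return queries


def baseline_rows(queries, run_date):
    """One row per (query, tool) pair; result columns are filled in by hand."""
    return [
        {
            "date": run_date.isoformat(),
            "query": query,
            "tool": tool,
            "brand_appears": "",
            "brand_cited": "",
            "competitors_cited": "",
            "screenshot": "",
        }
        for query in queries
        for tool in AI_TOOLS
    ]


def to_csv(rows):
    """Serialize the rows to CSV text you can paste into a spreadsheet."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(rows[0]))
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Hypothetical inputs for illustration.
queries = build_baseline_queries(
    "Acme", "content marketing", ["keyword research", "content audits", "topic clustering"]
)
rows = baseline_rows(queries, date(2025, 1, 6))
print(to_csv(rows))
```

Four queries across three tools gives 12 rows to fill in, each paired with a screenshot filename.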

Step 2: Score your top 5 pages against GEO criteria (15 minutes)

For each page, check:
- Does the first paragraph directly answer the primary question? (Yes/No)
- Is there at least one H2 phrased as a question? (Yes/No)
- Does the page include a numbered list or comparison table? (Yes/No)
- Are statistics cited with named sources? (Yes/No)
- Is there a FAQ section with natural-language questions? (Yes/No)
- Does the page include a clear definition of its primary topic? (Yes/No)
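Three of these checks are structural enough to pre-screen automatically. Below is a rough sketch using Python's standard-library `html.parser`. It assumes you already have each page's rendered HTML, treats any H2 ending in "?" as a question heading, and treats an H2 containing "FAQ" or "Frequently asked" as a FAQ section; those heuristics are assumptions, and the answer-first, sourcing, and definition checks still need a human read.

```python
from html.parser import HTMLParser


class StructureScanner(HTMLParser):
    """Collects H2 text and records whether <ol> or <table> elements appear."""

    def __init__(self):
        super().__init__()
        self.h2_texts = []
        self.has_ol = False
        self.has_table = False
        self._in_h2 = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag == "h2":
            self._in_h2 = True
            self._buffer = []
        elif tag == "ol":  # numbered lists only; <ul> bullets don't count
            self.has_ol = True
        elif tag == "table":
            self.has_table = True

    def handle_endtag(self, tag):
        if tag == "h2" and self._in_h2:
            self.h2_texts.append("".join(self._buffer).strip())
            self._in_h2 = False

    def handle_data(self, data):
        if self._in_h2:
            self._buffer.append(data)


def structural_checks(html):
    """Heuristic Yes/No answers for three of the six audit questions."""
    scanner = StructureScanner()
    scanner.feed(html)
    scanner.close()
    headings = [t.lower() for t in scanner.h2_texts]
    return {
        "question_h2": any(t.endswith("?") for t in headings),
        "list_or_table": scanner.has_ol or scanner.has_table,
        "faq_section": any("faq" in t or "frequently asked" in t for t in headings),
    }


sample = "<h2>What is GEO?</h2><p>GEO is ...</p><ol><li>Step one</li></ol><h2>FAQ</h2>"
print(structural_checks(sample))
```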

Score each page out of 6. Anything below 4 is a GEO rewrite candidate.

Step 3: Identify your fastest wins (5 minutes)

Pages that score 3-4 out of 6 are the fastest wins — they're close to GEO-ready and need targeted additions, not full rewrites. Pages scoring 0-2 need structural work. I prioritize pages that already rank on page 1 of Google — they have the authority signals, they just need the formatting.
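Steps 2 and 3 combine into a small scoring and triage routine. This sketch assumes you record the six Yes/No answers per page by hand, plus whether the page already ranks on page 1 of Google. The 3-4 and 0-2 bands come from the checklist; the "GEO-ready" label for 5-6 is a placeholder assumption, and the page URLs are hypothetical.

```python
from dataclasses import dataclass

# The six audit questions from Step 2, in order.
CRITERIA = ("answer_first", "question_h2", "list_or_table",
            "sourced_stats", "faq_section", "definition")


@dataclass
class PageAudit:
    url: str
    ranks_page_one: bool  # from your rank data; not one of the six criteria
    answer_first: bool
    question_h2: bool
    list_or_table: bool
    sourced_stats: bool
    faq_section: bool
    definition: bool


def geo_score(page):
    """Count of Yes answers, out of 6."""
    return sum(getattr(page, name) for name in CRITERIA)


def bucket(score):
    if score >= 5:
        return "GEO-ready"  # placeholder label for pages that need little work
    if score >= 3:
        return "fastest win"  # targeted additions, not full rewrites
    return "structural work"


def prioritize(pages):
    """Fastest wins first, page-1 rankers first within a band, then by score."""
    order = {"fastest win": 0, "structural work": 1, "GEO-ready": 2}
    return sorted(
        pages,
        key=lambda p: (order[bucket(geo_score(p))], not p.ranks_page_one, -geo_score(p)),
    )


pages = [
    PageAudit("/a", False, True, False, False, False, False, False),  # score 1
    PageAudit("/b", True, True, True, True, False, False, False),     # score 3
    PageAudit("/c", False, True, True, True, True, False, False),     # score 4
]
for page in prioritize(pages):
    print(page.url, geo_score(page), bucket(geo_score(page)))
```

Sorting on ranking status before score encodes the preference for pages that already have authority signals and only need formatting.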

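One flag I check in every audit: whether robots.txt blocks AI-related crawlers, and whether each block does what the site owner thinks it does. Google-Extended is a robots.txt product token, not a separate crawler. According to Google's documentation, disallowing it controls whether content is used for Gemini models and grounding; it does not affect inclusion in Google Search, and AI Overviews depend instead on Googlebot access and snippet controls such as nosnippet. Disallowing GPTBot, OAI-SearchBot, or PerplexityBot, by contrast, opts a site out of crawling by those vendors' bots. I have found overly broad AI-crawler blocks on surprisingly large sites: a single robots.txt line quietly removing a site from an AI surface it wanted to appear in.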
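A quick way to run this check is Python's standard-library `urllib.robotparser`. The agent list and descriptions below are assumptions drawn from vendor documentation at the time of writing and are not exhaustive; confirm current tokens with each vendor. The sample robots.txt is hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Non-exhaustive, assumed list of tokens; meanings change, so re-check vendor docs.
AI_AGENTS = {
    "Googlebot": "Google Search crawling (AI Overviews draw on Search)",
    "Google-Extended": "Gemini training and grounding, not Search",
    "GPTBot": "OpenAI model training",
    "OAI-SearchBot": "ChatGPT search",
    "PerplexityBot": "Perplexity search",
}


def audit_robots(robots_txt, path="/"):
    """Return {agent: allowed?} for a robots.txt body and a URL path."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, path) for agent in AI_AGENTS}


SAMPLE = """\
User-agent: Google-Extended
Disallow: /

User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

for agent, allowed in audit_robots(SAMPLE).items():
    print(f"{agent:16} {'allowed' if allowed else 'BLOCKED'}  ({AI_AGENTS[agent]})")
```

For a live site, `RobotFileParser` can also fetch the file itself via `set_url()` and `read()`; parsing a string, as here, keeps the check testable offline.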

Measuring GEO When There's No Official Tool

This is where most content marketers get stuck. There's no "GEO rank tracker" you can subscribe to. There's no dashboard that shows your AI citation rate. So how do you know if GEO efforts are working?

I rely on four proxy metrics, used together, to get a clear enough picture to make decisions.

Brand mention tracking in AI outputs. Monitoring tools like Brandwatch and Mention, backed by manual weekly testing across ChatGPT and Perplexity, can track how often a brand appears in AI-generated answers. My recommended approach is a weekly testing protocol: 10 target queries, tested across 3 AI tools, results logged in a spreadsheet. Track citation frequency over time. It's manual, but it works.
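Once the weekly log exists, citation frequency is a simple ratio per week and tool. This sketch assumes each logged check is a `(week, tool, query, cited)` row; the sample rows are made up purely for illustration, and a full week under the protocol above would hold 10 × 3 = 30 rows.

```python
from collections import defaultdict


def citation_rates(log):
    """Return {week: {tool: share of queries where the brand was cited}}."""
    # week -> tool -> [cited_count, total_checks]
    counts = defaultdict(lambda: defaultdict(lambda: [0, 0]))
    for week, tool, _query, cited in log:
        tally = counts[week][tool]
        tally[0] += int(cited)
        tally[1] += 1
    return {
        week: {tool: cited / total for tool, (cited, total) in tools.items()}
        for week, tools in counts.items()
    }


# Made-up rows for illustration only.
log = [
    ("2025-W01", "Perplexity", "q1", True),
    ("2025-W01", "Perplexity", "q2", False),
    ("2025-W01", "ChatGPT", "q1", False),
    ("2025-W01", "ChatGPT", "q2", False),
    ("2025-W02", "Perplexity", "q1", True),
    ("2025-W02", "Perplexity", "q2", True),
]
for week, tools in sorted(citation_rates(log).items()):
    print(week, tools)
```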

Referral traffic from AI-adjacent sources. Perplexity sends referral traffic visible in Google Analytics. I tag it as a source and watch the trend. ChatGPT's browsing mode also generates referral traffic. These numbers are small right now — but they're growing fast, and the trend line matters more than the absolute volume.
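If you export session referrers, tagging AI sources can be scripted. The hostname mapping below is an assumption; check it against the referrers that actually appear in your own analytics, and note that in Google Analytics itself a custom channel group can do the same grouping.

```python
from urllib.parse import urlparse

# Assumed referrer hostnames; verify against your own analytics data.
AI_REFERRERS = {
    "perplexity.ai": "Perplexity",
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
}


def classify_referrer(referrer):
    """Map a referrer URL to an AI source label, or None if it isn't one."""
    host = (urlparse(referrer).hostname or "").lower()
    for domain, label in AI_REFERRERS.items():
        # Match the domain itself or any subdomain such as www.
        if host == domain or host.endswith("." + domain):
            return label
    return None


def ai_sessions_by_source(referrers):
    """Count sessions per AI source from a list of referrer URLs."""
    totals = {}
    for referrer in referrers:
        label = classify_referrer(referrer)
        if label:
            totals[label] = totals.get(label, 0) + 1
    return totals


sample = [
    "https://www.perplexity.ai/search?q=geo",
    "https://chatgpt.com/",
    "https://www.google.com/",
    "",
]
print(ai_sessions_by_source(sample))
```

Matching on exact domain or a dot-prefixed suffix avoids false positives from lookalike hosts such as `notperplexity.ai`.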

Google Search Console AI Overview impressions. This is the most underused signal I have found. Search Console counts AI Overview appearances inside the performance report's "Search type: Web" data rather than breaking them out under a separate filter, so watch impressions for the question-style queries that trigger AI Overviews in your manual testing. The Evergreen Media SEO trends guide notes that AI Overview impression data is one of the clearest leading indicators of GEO performance available through official Google tooling. I watch this number monthly.

Branded search volume trend. If GEO efforts are working — if AI engines are citing a brand and users are seeing it in AI answers — branded search volume should increase over time. People hear a brand name in an AI answer and then search for it directly. I track this in Search Console as a long-term signal.
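To turn branded search into a trend line, one option is to filter an exported query report by brand terms, total impressions per month, and fit a least-squares slope. This sketch assumes rows of `(month, query, impressions)` and a hypothetical brand term; months with no branded queries simply drop out, so check for gaps before reading the slope.

```python
import re
from collections import defaultdict


def branded_monthly(rows, brand_terms):
    """Sum impressions per month for queries containing any brand term."""
    pattern = re.compile("|".join(re.escape(t) for t in brand_terms), re.IGNORECASE)
    monthly = defaultdict(int)
    for month, query, impressions in rows:
        if pattern.search(query):
            monthly[month] += impressions
    return dict(sorted(monthly.items()))


def trend_slope(values):
    """Ordinary least-squares slope of values against 0, 1, 2, ..."""
    n = len(values)
    if n < 2:
        return 0.0
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    numerator = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    denominator = sum((x - mean_x) ** 2 for x in range(n))
    return numerator / denominator


# Made-up export rows; "acme" is a hypothetical brand.
rows = [
    ("2025-01", "acme content tools", 100),
    ("2025-01", "content marketing tips", 900),
    ("2025-02", "Acme pricing", 130),
    ("2025-03", "acme", 160),
]
monthly = branded_monthly(rows, ["acme"])
print(monthly, trend_slope(list(monthly.values())))
```

A positive slope means branded impressions are rising month over month; with only a few months of data it is a direction, not proof.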

In my experience, the measurement side of GEO is still immature. The entire industry is building the plane while flying it. But the marketers who set up these proxy metrics now will have 12 months of trend data when the official tools eventually arrive — and that data will be invaluable.

How to Optimize Content for AI Search: The Contrarian Take

Most people think GEO is about getting more traffic. In my view, they're wrong.

The marketers I have seen get the best results from GEO aren't chasing traffic volume — they're chasing authority positioning. When an AI engine cites a source for a definition, a statistic, or a process explanation, it's not just sending a click. It's associating that brand with expertise in the mind of the user. That brand association compounds over time in a way that a #3 ranking never does.

I have watched content teams obsess over optimizing for AI Overviews and then get frustrated when traffic numbers don't spike immediately — because they're measuring the wrong thing. The right question isn't "how much traffic did the AI citation generate?" It's "how many users now associate this brand with authoritative answers on this topic?"

This reframe changes everything about how I approach GEO at Meev. It shifts the focus away from chasing citation volume and toward building the kind of content that deserves to be cited — clear, structured, sourced, and useful. Which, not coincidentally, is also the kind of content that performs best in traditional search. GEO and SEO aren't in tension. They're converging on the same content quality standard.

For a deeper look at building the content infrastructure that supports both, the content cluster strategy guide covers how to structure topical authority in a way that signals expertise to both traditional and AI search systems.