The digital labor market for AI content creation is undergoing a silent transformation. While headlines focus on AI replacing jobs, a shadow economy of 'ghost workers'—humans managing, correcting, and feeding AI models—has emerged as the true backbone of the modern content pipeline. Here is why your current strategy ignores the people behind the prompts.

Who this is for: Content marketers, SEO leads, and brand operators who are already using AI in their workflows — or are about to. By the end of this guide, you'll be able to map the invisible roles running your AI content operation, identify where your pipeline is structurally fragile, and make smarter decisions about where humans still need to sit in the loop.

Key Takeaways

- AI content creation has spawned an invisible layer of unacknowledged roles — prompt engineers, AI content auditors, synthetic media managers — that teams are filling informally without job titles or accountability structures.

- Semrush's analysis of 42,000 blog pages found that position 1 results are 8x more likely to be human-written, even though 72% of SEOs believe AI content ranks just as well.

- Brands using GenAI extensively exceeded their revenue goals by 22% on average, but only when human oversight was embedded — not when AI ran the pipeline alone.

- The future isn't AI replacing content teams — it's AI agents doing the volume work while humans hold the roles that determine whether any of it actually ranks.

What Is the Ghost Workforce in AI Content Creation?

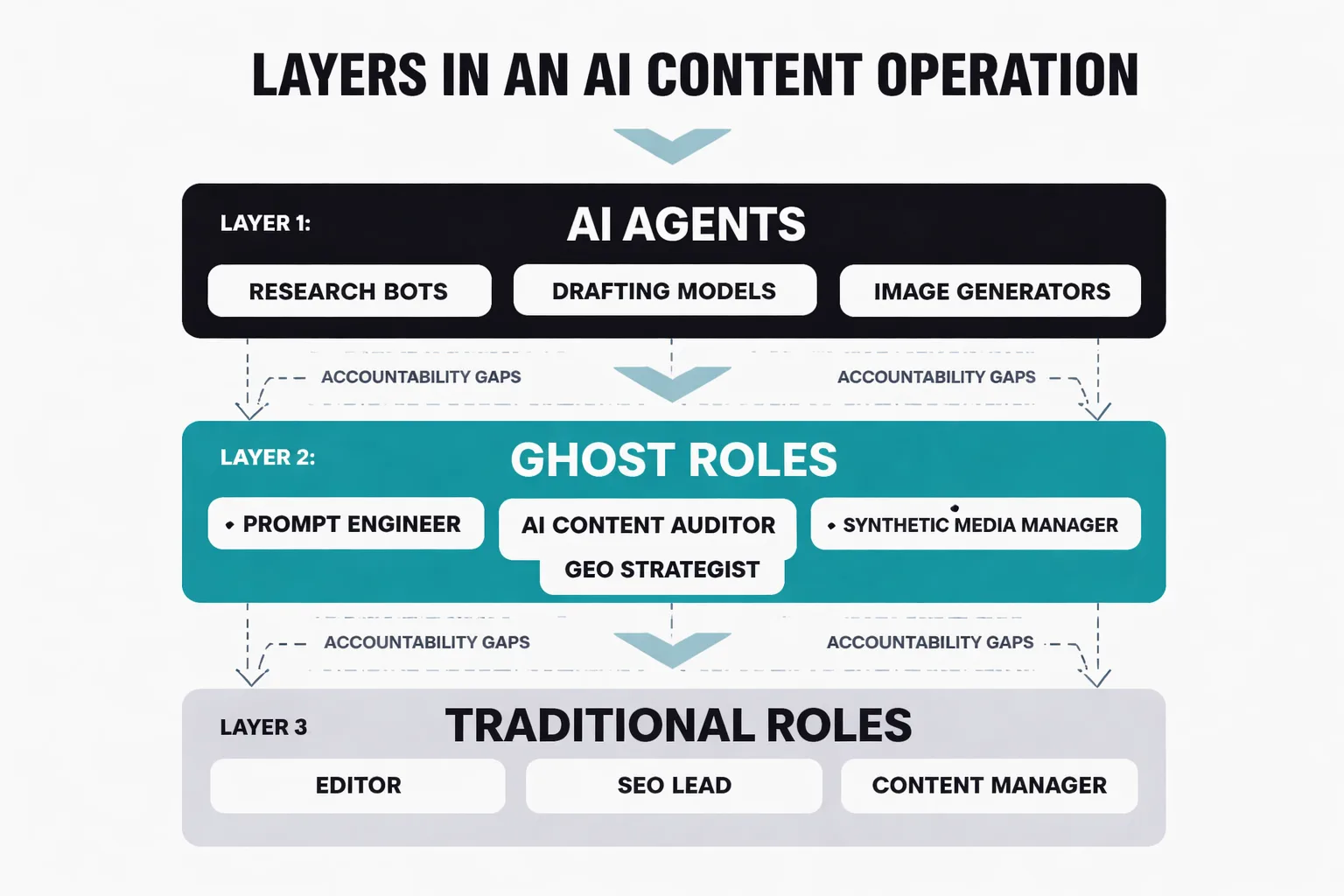

The ghost workforce in AI content creation refers to the invisible layer of human roles and AI agents that actively run content operations but have no formal titles, no spot on the org chart, and no accountability. They exist in the gap between "we use AI tools" and "we have an AI content strategy."

Here's what I mean. Over the past 18 months, I've watched dozens of content teams at brands ranging from mid-market SaaS to enterprise e-commerce quietly absorb new responsibilities without renaming them. Someone on the editorial team starts spending 40% of their week writing and refining prompts. Someone in SEO starts auditing AI-generated drafts for factual accuracy and brand voice. A social media manager becomes the de facto synthetic media manager, deciding which AI-generated visuals are safe to publish and which ones carry watermarking risk under tools like SynthID. None of these people have new job descriptions. None of them got raises for it. And none of their managers have a clear picture of how much of the content pipeline now depends on their informal expertise.

That's the ghost workforce. And it's bigger than most leadership teams realize.

The scale of this shift is real. A Deloitte Digital survey from late 2024 found that 29% of brands had already implemented GenAI in marketing operations — and among those, 41% reported reduced overall content production costs. That same cohort of brands using GenAI extensively exceeded their revenue goals by 22% on average. Those are compelling numbers. But they don't tell you who is doing the work that makes those numbers possible. That's the question nobody's asking.

What Are the AI Content Marketing Roles in the Ghost Workforce?

Let me be specific about what the ghost workforce actually looks like in practice, because vague warnings about "AI disruption" aren't useful. These are the four roles I see operating informally inside content teams right now — doing real work that determines whether AI content creation succeeds or fails.

The AI Prompt Engineer (Unofficial)

This person isn't called a prompt engineer. They're called a senior content strategist, or a managing editor, or sometimes just "the person who's good with ChatGPT." Their actual job has shifted substantially: they're now responsible for designing the instruction sets that determine the quality, tone, and structure of every AI-generated draft. When the output is bad, it's usually their prompt architecture that's the problem, but because the role isn't formalized, there are no feedback loops, no training, and no shared knowledge capture. I've seen teams where one person holds this knowledge entirely in their head, and when they leave, the AI output quality drops visibly within weeks.

The AI Content Auditor

This role exists because AI content creation at scale produces a specific category of failure: content that is grammatical but factually inaccurate, or content that's technically accurate but so generic it contributes nothing to topical authority. The AI content auditor is the person who catches this before it publishes by checking claims against primary sources, flagging brand voice drift, and identifying when two AI-generated articles are so similar they'll create duplicate content issues in Google's index. I treat duplicate content hygiene as non-negotiable in any repurposing workflow, and this role is why. Without it, teams discover the problem six months later when rankings soften and nobody can diagnose why.

The Synthetic Media Manager

As AI-generated images, video scripts, and audio become standard in content operations, someone has to manage the legal and reputational risk. Which images carry embedded watermarks from tools like SynthID? Which AI-generated visuals could create brand liability? Which synthetic assets need disclosure? This person exists on most content teams already; they just don't have a title that reflects what they're actually doing.

The GEO Strategist

This is the newest ghost role, and in my view the most strategically important one right now. Generative Engine Optimization (optimizing content to be cited and surfaced by AI search engines like ChatGPT, Perplexity, and Google's AI Overviews) requires a fundamentally different skill set than traditional SEO. It's not about keyword density or backlink profiles. It's about writing content that AI models find quotable and authoritative, with a clear structure that is easy to extract and surface. Someone on your team is already doing this intuitively. They just don't know that's what it's called, and they're not doing it systematically.

Why Do AI-Only Content Pipelines Break?

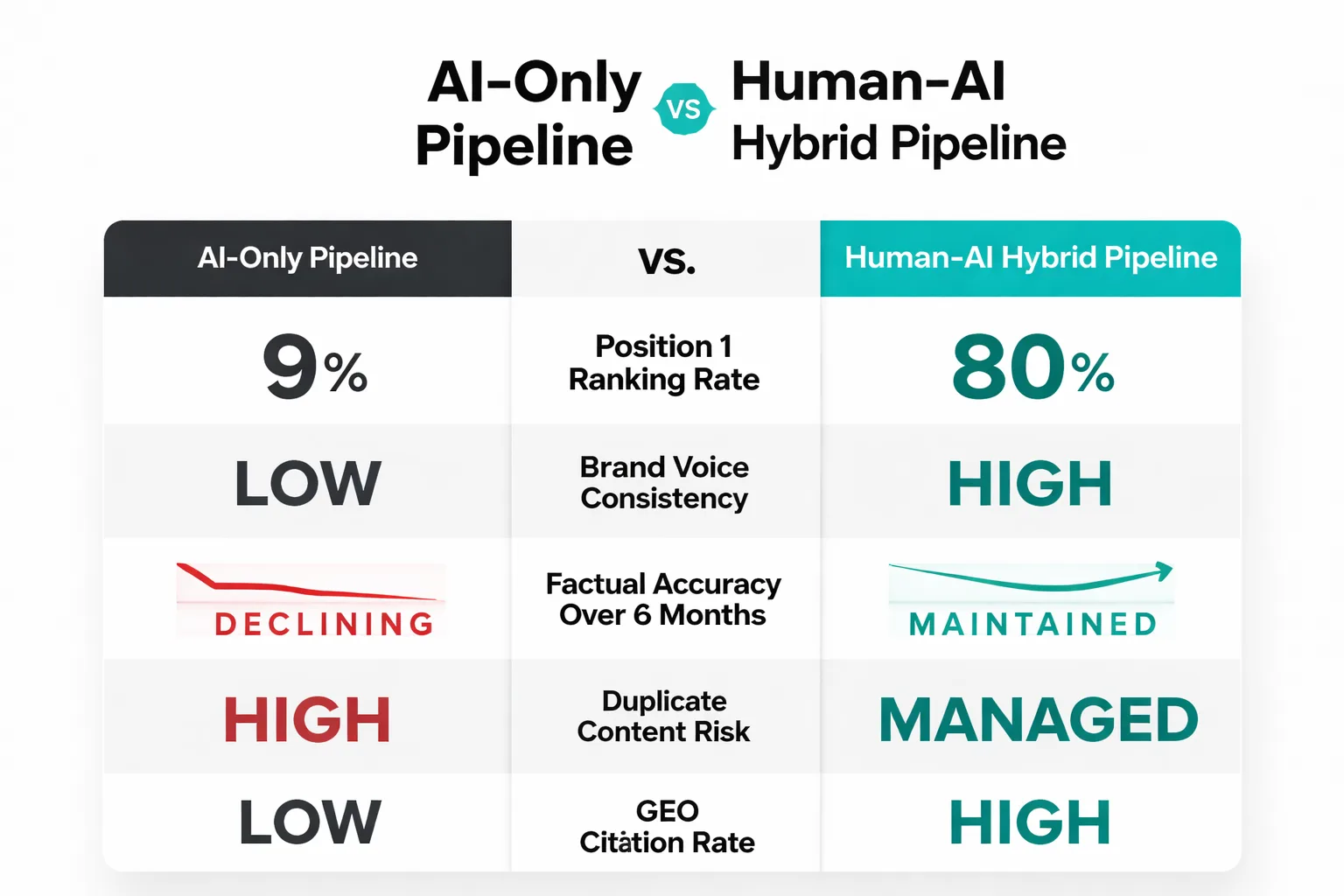

Here's the finding that genuinely surprised me when I first saw it: Semrush's analysis of 42,000 blog pages found that position 1 results are 8x more likely to be human-written. Content classified as purely AI-generated appeared in the top spot just 9% of the time. Human-written content held the top spot 80% of the time. And yet 72% of SEOs believe AI content ranks at least as well as human-written content. That gap between perception and reality is where AI-only pipelines go to die.

The failure mode isn't what most people expect. It's not that Google detects AI content and penalizes it directly. Google has been consistent on this: they don't penalize AI-generated content as a category. What they penalize is low-quality, unoriginal, or spammy content — and AI-only pipelines produce that at industrial scale when there's no human in the loop to catch it. Case studies documented by Hastewire show the pattern clearly: sites that deployed AI content at volume without editorial oversight saw rankings collapse, not because of an AI detection filter, but because the content failed Google's quality signals across the board.

The three specific failure modes I see most often in AI-only pipelines:

1. Topical cannibalization: AI models, given similar prompts, produce structurally similar articles. When a site publishes 40 AI-generated posts on adjacent topics without a topical architecture review, Google's index sees near-duplicate content competing against itself. Ranking signals dilute. The wrong URL surfaces. Organic performance softens across the cluster.

2. Brand voice drift: Over six months of AI content production with no human auditor reviewing for consistency, the brand voice gradually shifts toward the model's default register. It's subtle at first. By month four, the content sounds like it was written by a different company.

3. Factual decay: AI models have training cutoffs. Content published today about a fast-moving topic may be accurate at publication and wrong within 90 days. Without a refresh workflow owned by a human, that content stays live, accumulates backlinks, and eventually becomes a liability.
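To make the first failure mode concrete, here is a minimal sketch of how an auditor might flag near-duplicate drafts before they publish. It compares overlapping three-word phrases ("shingles") using Jaccard similarity. The function names, the sample URLs, and the 0.5 threshold are all my own illustrative assumptions, not a known Google signal or any vendor's method.

```python
from itertools import combinations


def shingles(text: str, size: int = 3) -> set:
    """Return the set of overlapping word n-grams ("shingles") in text."""
    words = text.lower().split()
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: set, b: set) -> float:
    """Shared shingles divided by all distinct shingles across both sets."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def find_near_duplicates(drafts: dict, threshold: float = 0.5) -> list:
    """Return (url_a, url_b, score) for every pair scoring at or above threshold.

    The threshold is a hypothetical starting point; tune it on your own content.
    """
    sets = {url: shingles(body) for url, body in drafts.items()}
    flagged = []
    for (url_a, set_a), (url_b, set_b) in combinations(sets.items(), 2):
        score = jaccard(set_a, set_b)
        if score >= threshold:
            flagged.append((url_a, url_b, round(score, 2)))
    # Most similar pairs first, so the auditor sees the worst offenders at the top
    return sorted(flagged, key=lambda row: row[2], reverse=True)


if __name__ == "__main__":
    sample = {
        "/ai-audits": "ai content audits catch factual errors before publishing",
        "/ai-audits-2": "ai content audits catch factual errors before publishing",
        "/page-speed": "page speed matters for technical site health and crawling",
    }
    for pair in find_near_duplicates(sample):
        print(pair)
```

A check like this only catches surface overlap. Two articles can use different wording and still compete for the same intent, so treat it as a first filter ahead of a human topical architecture review.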

For a deeper look at how to structure AI content so it builds authority rather than diluting it, The Complete Guide to Building Topical Authority With AI Content covers the architecture decisions that prevent cannibalization before it starts.

How Does GEO Differ from SEO?

Traditional SEO and Generative Engine Optimization are not the same discipline, and treating them as interchangeable is one of the most expensive mistakes I see content teams make right now. As Head of Content Strategy, I've watched teams optimize obsessively for structured data signals in Google Search Console and for page speed, and then wonder why their content never gets cited in AI Overviews or Perplexity answers.

The reason is structural. Traditional SEO optimizes for crawlability, keyword relevance, and link authority. GEO optimizes for extractability: the likelihood that an AI model will pull a specific sentence or paragraph from your content and surface it as an answer. The two require different writing patterns, different structures, and different instincts.

What GEO-optimized content actually looks like:

- Standalone sentences that make sense out of context (AI models extract sentences, not paragraphs)

- Direct answer paragraphs after every question-style heading (40-60 words, no pronouns referencing earlier sections)

- Specific, quotable data points with named sources

- Clear definitional statements: "[Topic] is..." format that AI models can lift cleanly
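The direct-answer rule in that list is mechanical enough to check with a script. Here's a rough sketch that tests a paragraph against the 40-60 word range and flags openers that lean on earlier context. The list of "dangling" opening words and the function name are my own assumptions. This is a crude heuristic, not a model of how any AI search engine actually selects passages.

```python
import string

# Assumption: opening words that usually point back to earlier text.
DANGLING_OPENERS = {"it", "this", "that", "these", "those", "they", "he", "she"}


def check_answer_paragraph(paragraph: str, min_words: int = 40, max_words: int = 60) -> list:
    """Return a list of problems; an empty list means the paragraph passes."""
    words = paragraph.split()
    problems = []

    # Rule 1: the direct answer should sit inside the target word range
    if not min_words <= len(words) <= max_words:
        problems.append(f"word count {len(words)} is outside {min_words}-{max_words}")

    # Rule 2: the first word should not depend on a previous section
    first = words[0].strip(string.punctuation).lower() if words else ""
    if first in DANGLING_OPENERS:
        problems.append(f"opens with '{first}', which depends on earlier context")

    return problems


if __name__ == "__main__":
    print(check_answer_paragraph("It works well."))
```

Run it over the first paragraph under each question-style heading and send anything with a non-empty result back to whoever owns GEO.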

What traditional SEO still requires that GEO doesn't replace:

- Technical site health (crawlability, Core Web Vitals, structured data)

- Backlink authority for competitive keywords

- Internal linking architecture for topical clusters

- Click-through rate optimization for SERP titles and meta descriptions

The division of labor I'd recommend: assign GEO strategy to whoever currently owns your featured snippet optimization — they already think in extractable answer units. Keep traditional SEO with whoever manages your technical audit workflow. These are different enough skill sets that combining them in one role produces mediocre results in both.

The uncomfortable truth about AI search visibility: SparkToro's research found that AI models are highly inconsistent when recommending brands or products. The same query asked to the same model on different days can produce different brand recommendations. That inconsistency means GEO isn't a one-time optimization — it's an ongoing content maintenance discipline. Someone has to own it. Right now, on most teams, nobody does.

Is your team running an AI content pipeline without formally naming who owns what?

What Is the Human Cost Nobody Measures?

I want to be direct about something that gets almost no coverage in the AI content creation conversation: what happens to the humans on content teams when AI agents absorb the volume work.

The optimistic framing — and it's not wrong, exactly — is that AI handles the repetitive, low-creativity tasks, freeing human writers for higher-value strategic work. I've seen this play out positively on teams that were intentional about the transition. But I've also seen the other version, and it's uglier. Writers who spent years developing craft find themselves doing QA on AI output all day. Editors who used to shape narratives are now running fact-check checklists. The work is less interesting, the career trajectory is less clear, and the psychological impact is real.

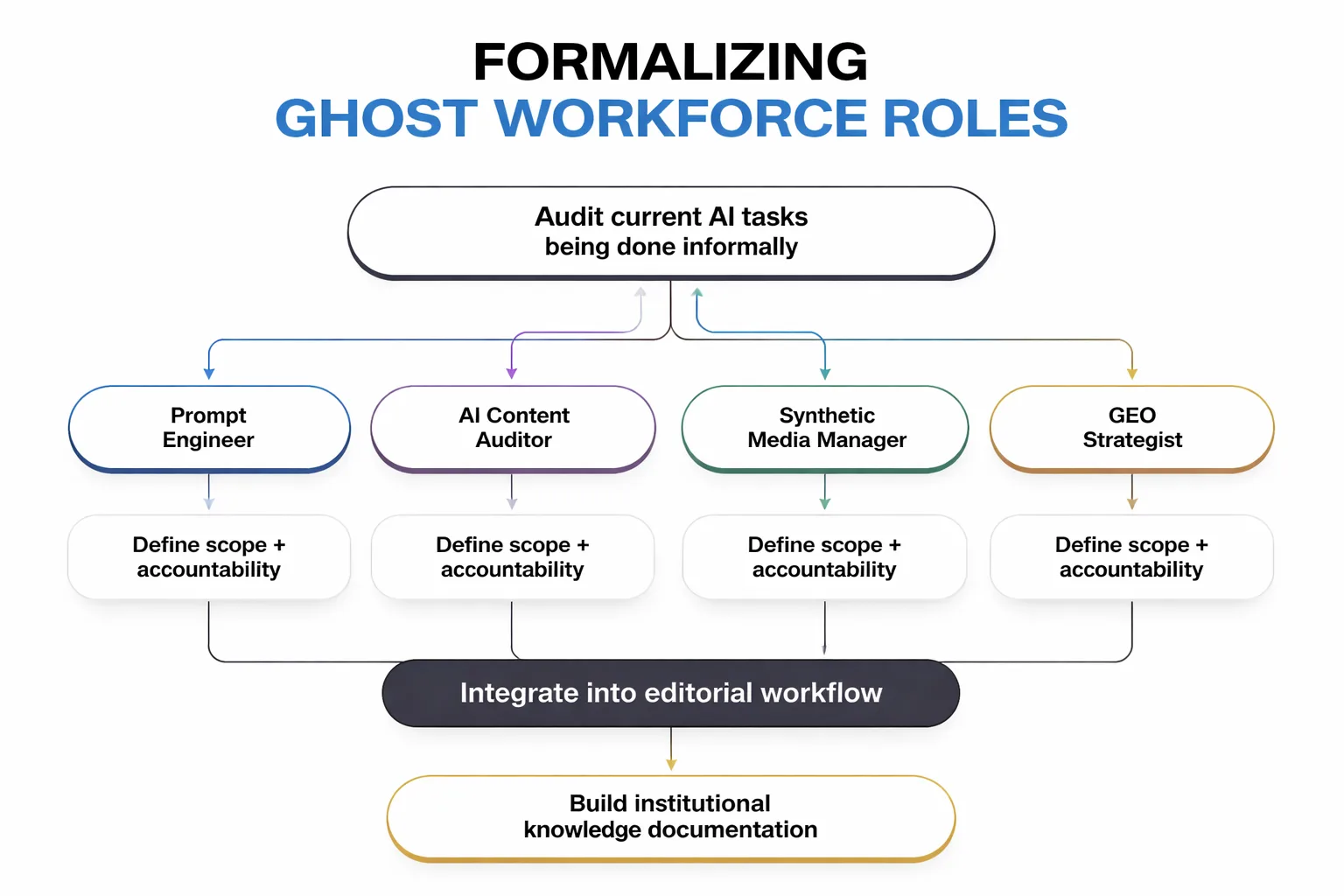

The teams that navigate this well share one characteristic: they named the new roles explicitly. They didn't just absorb AI into existing job descriptions — they created actual titles, actual accountability structures, and actual career paths for the ghost workforce roles I described earlier. The AI content auditor became a real position with a real scope. The GEO strategist got a seat in the editorial planning meeting. The prompt engineer's work got documented and institutionalized instead of living in one person's head.

This matters for retention, obviously. But it also matters for content quality. When the humans in your AI pipeline feel like they're doing meaningful, recognized work, they bring judgment and care to it. When they feel like they've been reduced to babysitting a language model, the quality of their oversight degrades. And that's when the AI-only failure modes I described earlier start showing up in your rankings.

How Do You Build a Sustainable AI Content Creation Pipeline?

Sustainable AI content creation isn't about finding the right tool. It's about building the right structure around the tools you already have. Here's the framework I use when auditing content operations that have scaled AI without scaling oversight.

Step 1: Map your ghost workforce. Spend one week tracking where human judgment is actually being applied in your AI pipeline. Who's writing the prompts? Who's reviewing the output? Who's making the call on what publishes? You'll find the ghost roles immediately. Write them down.

Step 2: Assign formal ownership. Every role you identified in Step 1 needs a named owner, a documented scope, and a feedback loop. This doesn't require new headcount — it requires clarity. The person who's already doing the AI auditing work should have that reflected in their role, their goals, and their performance reviews.
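If it helps to see Step 2 as a checklist, here is a small sketch of a role register that reports which roles are still missing an owner, a scope, or a feedback loop. The `GhostRole` class and its field names are hypothetical labels I made up for illustration. A shared spreadsheet does the same job.

```python
from dataclasses import dataclass


@dataclass
class GhostRole:
    """One informal role found in Step 1 (all field names are illustrative)."""
    name: str
    owner: str = ""
    scope: str = ""
    feedback_loop: str = ""

    def missing(self) -> list:
        """Names of the required fields that are still blank."""
        required = {
            "owner": self.owner,
            "scope": self.scope,
            "feedback_loop": self.feedback_loop,
        }
        return [field for field, value in required.items() if not value.strip()]


def unowned_roles(roles: list) -> dict:
    """Map each incomplete role to the fields it still needs."""
    return {role.name: role.missing() for role in roles if role.missing()}


if __name__ == "__main__":
    register = [
        GhostRole("AI content auditor", owner="Sam", scope="pre-publish fact check",
                  feedback_loop="monthly review"),
        GhostRole("GEO strategist", owner="Ana"),
    ]
    print(unowned_roles(register))
```

An empty result means every role you mapped has a named owner, a documented scope, and a feedback loop on record.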

Step 3: Build a quality gate before publication. Not every piece of AI-generated content needs the same level of review. Create a tiered system: high-competition, high-stakes content (pillar pages, product pages, anything targeting competitive keywords) gets full human editorial review. Supporting content (FAQ pages, long-tail informational posts) gets a lighter audit focused on factual accuracy and duplicate content risk. Purely automated content (meta descriptions, structured data, internal link anchor text) gets spot-checked monthly.
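The tiered gate can be written down as a simple lookup so nobody has to remember which content gets which review. This is a sketch under my own assumptions: the content-type keys are hypothetical labels for the categories named above, and sending unknown types to full review is a fail-safe choice I added, not part of the framework itself.

```python
from enum import Enum


class ReviewTier(Enum):
    FULL_EDITORIAL = "full human editorial review"
    LIGHT_AUDIT = "light audit: factual accuracy and duplicate content risk"
    MONTHLY_SPOT_CHECK = "monthly spot check"


# Hypothetical labels for the content categories described in Step 3.
TIER_BY_CONTENT_TYPE = {
    "pillar_page": ReviewTier.FULL_EDITORIAL,
    "product_page": ReviewTier.FULL_EDITORIAL,
    "faq_page": ReviewTier.LIGHT_AUDIT,
    "long_tail_post": ReviewTier.LIGHT_AUDIT,
    "meta_description": ReviewTier.MONTHLY_SPOT_CHECK,
    "structured_data": ReviewTier.MONTHLY_SPOT_CHECK,
    "anchor_text": ReviewTier.MONTHLY_SPOT_CHECK,
}


def review_tier(content_type: str, targets_competitive_keyword: bool = False) -> ReviewTier:
    """Pick the review tier for one piece of AI-generated content."""
    # Anything targeting a competitive keyword always gets the full review
    if targets_competitive_keyword:
        return ReviewTier.FULL_EDITORIAL
    # Assumption: unknown content types fall back to the strictest tier
    return TIER_BY_CONTENT_TYPE.get(content_type, ReviewTier.FULL_EDITORIAL)


if __name__ == "__main__":
    print(review_tier("faq_page").value)
    print(review_tier("faq_page", targets_competitive_keyword=True).value)
```

The value of writing it down is that the routing decision becomes visible and debatable, instead of living in one editor's judgment.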

Step 4: Separate your SEO and GEO workflows. Run a technical SEO audit on your existing content to identify crawl issues, structured data gaps, and page speed problems. Run a separate GEO audit to identify which pages have extractable answer paragraphs, quotable standalone sentences, and direct definitional statements. These are different checklists. Treat them that way.

Step 5: Build a refresh calendar. AI-generated content has a shorter shelf life than well-researched human content on fast-moving topics. Build a 90-day refresh cycle for any AI content touching topics with high factual decay risk: AI tools, algorithm updates, market data, regulatory changes. Assign the refresh work to your AI content auditor role.
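A refresh calendar is mostly date arithmetic. As a rough sketch, the snippet below takes a list of pages and returns the high-decay ones whose 90-day refresh date has arrived. The page fields (`url`, `topic`, `last_reviewed`) and the topic labels are assumptions about how you might export your content inventory, not a required schema.

```python
from datetime import date, timedelta

REFRESH_INTERVAL = timedelta(days=90)

# Topic labels taken from the high-decay list above (lowercased for matching).
HIGH_DECAY_TOPICS = {"ai tools", "algorithm updates", "market data", "regulatory changes"}


def next_refresh(last_reviewed: date) -> date:
    """Date on which a page is next due for review."""
    return last_reviewed + REFRESH_INTERVAL


def refresh_queue(pages: list, today: date) -> list:
    """URLs of high-decay pages that are due on or before today, most overdue first."""
    due = [
        (next_refresh(page["last_reviewed"]), page["url"])
        for page in pages
        if page["topic"].lower() in HIGH_DECAY_TOPICS
    ]
    return [url for due_date, url in sorted(due) if due_date <= today]


if __name__ == "__main__":
    inventory = [
        {"url": "/ai-tools-roundup", "topic": "AI tools", "last_reviewed": date(2025, 1, 1)},
        {"url": "/brand-story", "topic": "brand story", "last_reviewed": date(2024, 6, 1)},
    ]
    print(refresh_queue(inventory, today=date(2025, 4, 15)))
```

Hand the resulting queue to whoever holds the AI content auditor role, and rerun it on a fixed schedule so refreshes stay a standing workflow.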

The number that should anchor your expectations: Brands using GenAI extensively exceeded their revenue goals by 22% on average, according to Deloitte Digital's 2024 analysis. That's a real number. But it comes from brands that embedded human oversight into their AI operations — not from brands that handed the pipeline entirely to AI agents and walked away. The 22% upside requires the ghost workforce to be doing its job.

If you're thinking about how to structure the operational side of this — the actual pipeline mechanics — How to Build a Content Pipeline That Runs Without You covers the workflow architecture in detail.

One more thing I'd push back on: the assumption that AI content creation for social media operates by the same rules as long-form SEO content. It doesn't. Social AI content lives and dies on brand voice consistency and platform-native formats — neither of which AI models handle well without tight human guardrails. The ghost workforce roles that matter most for social are the prompt engineer and the synthetic media manager. Get those two roles formalized before you scale AI on social channels.

FAQ

What is the AI ghost workforce in content marketing?

The AI ghost workforce refers to the informal layer of human roles and AI agents that run content operations without official titles or accountability structures. These include prompt engineers, AI content auditors, synthetic media managers, and GEO strategists: people doing critical work that determines content quality and search performance, but whose contributions are unacknowledged in org charts or job descriptions.

Does AI-generated content rank on Google?

AI-generated content can rank, but the data is stark: Semrush's analysis of 42,000 blog pages found that position 1 results are 8x more likely to be human-written. Purely AI-generated content holds the top spot just 9% of the time. Google doesn't penalize AI content as a category; it penalizes low-quality, unoriginal content, which AI pipelines without human oversight tend to produce at scale.

What is GEO and how is it different from SEO?

Generative Engine Optimization (GEO) is the practice of structuring content to be cited and surfaced by AI search engines like ChatGPT, Perplexity, and Google's AI Overviews. Unlike traditional SEO, which optimizes for crawlability and keyword relevance, GEO optimizes for extractability: writing standalone sentences, direct answer paragraphs, and quotable data points that AI models can lift and surface as answers.

What are the biggest risks of running an AI-only content pipeline?

The three main failure modes are topical cannibalization (AI-generated articles on adjacent topics competing against each other in Google's index), brand voice drift (gradual shift away from brand identity over months of AI output), and factual decay (accurate content at publication becoming outdated without a human-owned refresh workflow). All three are manageable with the right oversight structure.

How do I formalize ghost workforce roles without adding headcount?

Start by auditing where human judgment is currently being applied in your AI pipeline: who's writing prompts, reviewing output, and making publication decisions. Those people are already doing the ghost workforce roles. Formalizing them means documenting their scope, reflecting the work in their job descriptions and performance goals, and building institutional knowledge so the expertise doesn't live in one person's head.

What is SynthID and why does it matter for content teams?

SynthID is Google DeepMind's tool for embedding invisible watermarks in AI-generated content: images, audio, video, and text. For content teams, it matters because AI-generated assets may carry embedded markers that could trigger disclosure requirements or platform flags. The synthetic media manager role exists partly to track which assets carry these markers and manage the associated risk.

How often should AI-generated content be refreshed?

For content on fast-moving topics (AI tools, algorithm updates, market data, regulatory changes), a 90-day refresh cycle is the minimum I'd recommend. AI models have training cutoffs, and content that was accurate at publication can become a liability within months. Assign refresh ownership to your AI content auditor role and build it into your editorial calendar as a standing workflow, not a reactive fix.

Can small teams realistically manage a human-AI hybrid content pipeline?

Yes, and in many ways small teams have an advantage, because the ghost workforce roles are easier to assign clearly when there are fewer people. A three-person content team can cover all four ghost roles with some overlap: one person owns prompt engineering and GEO strategy, one owns AI content auditing and quality gates, and one manages synthetic media and publication decisions. The key is making the roles explicit, not leaving them implicit.

Stop running a ghost workforce. Build an AI content system with real roles, real oversight, and content that actually ranks.