What Autoblogging Actually Looks Like in 2026

By Judy Zhou, Head of Content Strategy

Key Takeaways

- 74% of new webpages now contain AI-generated content (Ahrefs 2024), making the question not whether to automate, but how to do it without the E-E-A-T gaps that trigger Google penalties.

- The modern autoblogging stack has five stages — keyword ingestion, brief generation, LLM draft, human review gate, and structured CMS publish — and skipping the review gate is where most programs fail.

- A trained editor can maintain quality across 15–20 AI-drafted posts per week using a focused 10-minute review that catches factual drift, adds one human signal, and confirms no keyword cannibalization.

- Organic CTR on AI Overview-affected queries dropped from 1.76% to 0.61%, meaning autoblogging ROI now depends on targeting queries that Overviews don't fully answer — not just high-volume keywords.

Autoblogging in 2026 looks almost nothing like what that word meant ten years ago — and if you're still operating with the old mental model, you're either leaving serious capacity on the table or flying toward a Google penalty you won't see coming. As Head of Content Strategy at Meev, where I oversee AI-driven publishing for hundreds of brands, I've watched this space evolve from a black-hat curiosity into something that can genuinely compete — when it's built right.

The core tension hasn't changed, though: speed versus quality. What's changed is how much of that tradeoff you can actually engineer away.

Autoblogging Then vs. Now — Why the Old Definition Is Obsolete

The 2010s version of autoblogging was essentially content laundering. Scrapers pulled articles from RSS feeds, spinners swapped synonyms to dodge duplicate detection, and the whole thing ran on autopilot until a Panda update killed it. The strategy wasn't content — it was arbitrage on Google's inability to detect low-quality text at scale.

That era is gone. Not because the tools got worse, but because Google got dramatically better at behavioral signals. A spun article that technically passes a plagiarism check still gets buried if users bounce in four seconds — and they will, because the text is incoherent.

What replaced it is something more interesting and more demanding. Modern ai blog automation uses large language models to draft content that is, sentence by sentence, often indistinguishable from competent human writing. According to an Ahrefs 2024 study cited on Reddit, 74% of new webpages now contain AI-generated content. That number stopped me cold the first time I saw it.

Seventy-four percent. The web is already mostly AI-written.

So the question isn't whether to use AI in your content workflow. It's whether your implementation is sophisticated enough to survive what Google is increasingly good at detecting — not the AI origin of text, but the absence of genuine human judgment in it. Those are different problems with different solutions.

The Modern Autoblogging Stack (What Actually Works)

The workflows I've seen succeed in 2026 share a common architecture, even when the specific tools differ. It's a five-stage pipeline, and the order matters.

Stage 1 — Keyword ingestion. This is where most teams underinvest. You're not just pulling a keyword list; you're doing high-potential keyword discovery that filters for intent clusters, cannibalization risk, and AI Overview exposure. Tools like Semrush or Ahrefs handle the raw data. The judgment call — which keywords are worth automating versus which need a human-written flagship piece — is still a human decision. I'd argue it's the most important human decision in the whole system.

Stage 2 — Brief generation. A good brief isn't just a keyword and a word count. It should include: target intent, competing URLs to beat, required data points, E-E-A-T signals to hit (named author, specific examples, original angle), and any entities Google associates with this topic. Automated brief generators have gotten good enough that I'd trust them for 80% of informational queries. For YMYL topics — health, finance, legal — I still want a human building the brief.

Stage 3 — LLM draft. GPT-4o and Claude are both capable at this stage for standard informational content. What I found interesting — and the Search Engine Land reporting on newer model benchmarks confirmed it — is that newer models aren't automatically better for SEO tasks. There's reportedly a ~9% accuracy drop in some newer models on standard SEO workflows compared to previous versions. The lesson: don't chase the latest model release. Chase consistency and instruction-following.

Stage 4 — Human review gate. This is non-negotiable. I'll expand on what this actually looks like in practice below, but the short version: a trained editor should touch every post before it publishes. Not rewrite it. Touch it.

Stage 5 — CMS publish with structured data. Schema markup, internal linking, canonical tags, author attribution — these aren't optional decorations. They're how you tell Google's quality signals what to make of this page. Automated publishing pipelines that skip structured data are leaving ranking signals on the table.

The tools I've seen at each stage: Semrush or Ahrefs for keyword ingestion, custom GPT or Claude wrappers for brief and draft generation, a lightweight CMS integration (WordPress REST API or Webflow) for publish. The glue is usually a no-code orchestration layer — Zapier, Make, or a custom Python script for teams with engineering support.

Wondering if your current blog automation setup would survive a Google quality review?

Where Autoblogging Fails Without Human Oversight

I used to think the risk of AI-generated content at scale was quality — that it would read badly and audiences would notice. I was wrong about where the failure actually shows up.

The pattern I kept seeing was a traffic spike followed by a collapse to near-zero, sometimes within months. The sites that got wiped weren't always publishing garbage. Some of it read fine. The problem was structural.

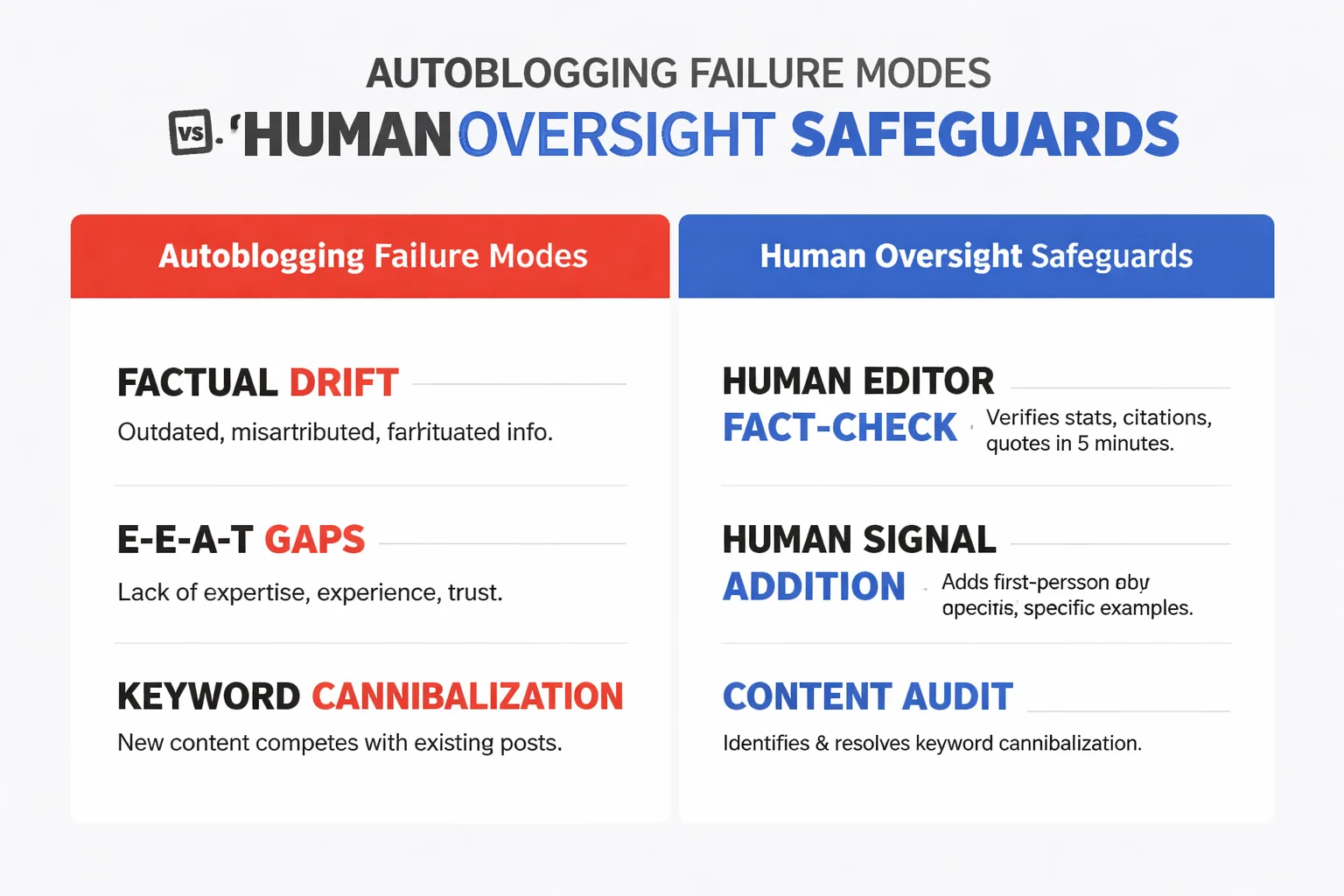

Factual drift is the most common failure mode. LLMs synthesize from training data, which means they confidently produce statistics that are outdated, misattributed, or simply fabricated. I've seen AI drafts cite studies that don't exist, attribute quotes to the wrong person, and present 2021 data as current. A human editor with five minutes and a browser catches this. An automated pipeline doesn't.

E-E-A-T gaps are subtler and more dangerous. Google's quality rater guidelines have always rewarded demonstrated expertise and lived experience — and what the post-2024 algorithm cycles showed is that this is now being enforced algorithmically, not just through manual review. The sites that held through those updates weren't the ones with better prompts. They were the ones where a person with domain knowledge had touched the content in a way that left a mark. A personal observation. A counterintuitive take. A specific comparison that required judgment. AI can't generate those authentically — it can imitate them, and the imitation is getting better, but Google's behavioral signals (time-on-page, return visits, engagement depth) catch the difference.

Keyword cannibalization is the failure mode I see most often in scaled autoblog operations. When you're publishing 50 posts a month from a keyword list, you will accidentally create multiple pages targeting the same intent. The result isn't doubled coverage — it's split authority, confused crawlers, and neither page ranking as well as one consolidated piece would. An SEO cannibalization strategy check before each publish is a five-minute task that prevents months of cleanup work.

There's also a timing dimension that most teams miss. What Glenn Gabe documented across the December 2024 Google spam update cases shows a more uncomfortable truth: sites can rank, accumulate impressions, and still get wiped — often right around that 90-day window when Google has enough behavioral and quality signal to make a harder call. I've started treating the first three months of any new automated content program as provisional. The question isn't "did it get indexed?" It's "does it have anything in it that a human editor actually contributed?"

The Human-in-the-Loop Model That Scales Without Sacrificing Quality

Here's the contrarian take that most autoblogging content gets wrong: the goal isn't to minimize human involvement. It's to concentrate human involvement where AI is weakest.

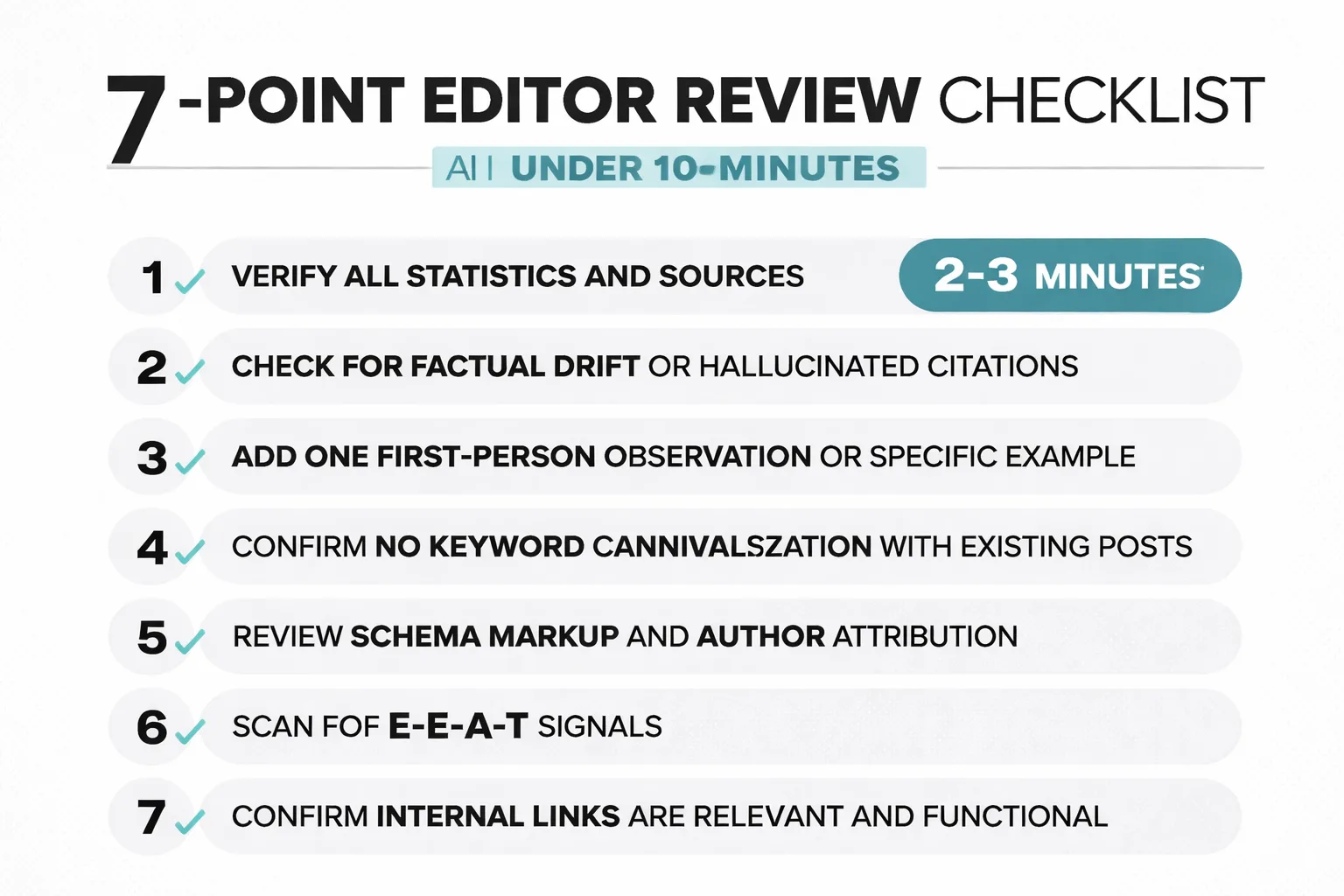

A well-trained editor can review an AI draft in under ten minutes if they know what to look for. Here's what that review actually covers:

1. Fact-check the statistics. Every number, every citation, every attributed quote. This takes two to three minutes with a browser open. It's not optional.

2. Add one human signal. This is the most important step and the one most teams skip. The editor should add something that couldn't have come from a language model summarizing the web — a specific client example, a counterintuitive observation from their own experience, a data point the team gathered themselves. One paragraph. That's the difference between a page Google trusts and one it tolerates.

3. Check intent alignment. Does this page actually answer what someone typing this query wants to know? AI drafts often drift toward what's easiest to write, not what the searcher needs. A 30-second read of the top three competing results tells you if the draft is on target.

4. Scan for cannibalization. Pull up your site's existing content on this topic. If you've already got a post targeting this intent, you're either consolidating or differentiating — not publishing a third version.

5. Confirm structured data and authorship. Author schema, article schema, breadcrumbs. These take 60 seconds to verify in the CMS and they matter more than most teams realize for AI search citation and Google Search Console structured data signals.

6. Internal link check. Does the post link to at least two relevant internal pages? Does it receive at least one internal link from existing content? Automated publishing pipelines often handle the outbound links but ignore the inbound — which means new posts sit in an orphan state and crawlers deprioritize them.

7. Read the first and last paragraph aloud. This sounds unnecessary until you do it. AI-generated openings and conclusions are often the weakest part of a draft — generic, hedging, and forgettable. Thirty seconds of editing here meaningfully improves engagement signals.

The BCG 2024 research found that 74% of companies have yet to show tangible value from AI use. I'd argue a significant portion of that failure comes from treating AI as a replacement for human judgment rather than an amplifier of it. The teams I've seen get consistent ROI from automated blog content are the ones who got ruthless about where editors spend their time — not less time total, but better-targeted time.

For teams comparing workflow options, the Meev vs. Jasper AI breakdown is worth reading if you're evaluating which platforms actually support this kind of human-in-the-loop publishing versus which ones are optimized for pure output volume.

How to Measure Whether Your Autoblog Is Actually Ranking

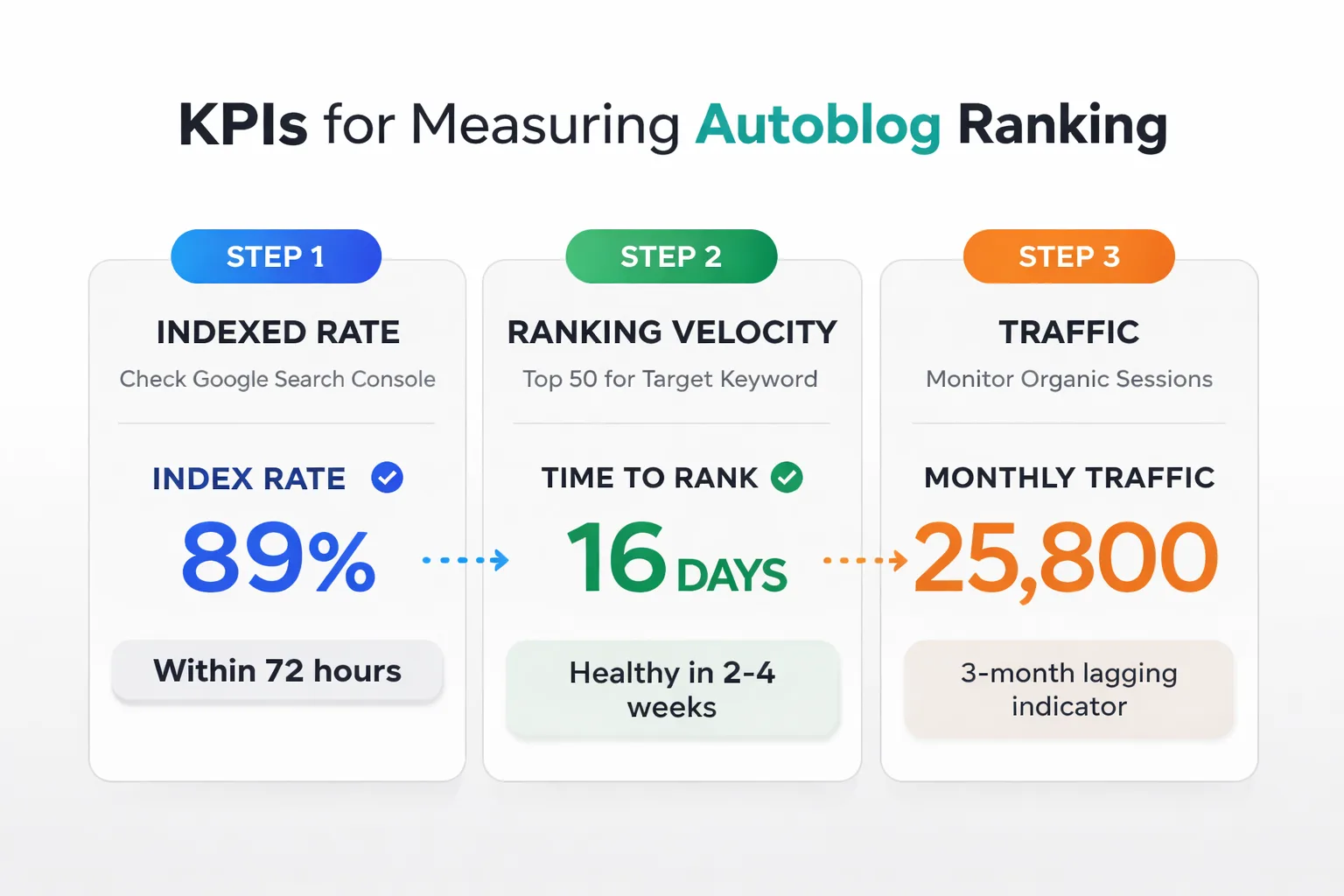

Most autoblogging post-mortems I've seen focus on the wrong metrics. Traffic is a lagging indicator. By the time you see traffic drop, the problem is three months old.

Here are the KPIs that actually tell you whether your automated blog content is working — and the order in which to watch them.

Indexed rate. Within 72 hours of publish, check Google Search Console to confirm the URL is indexed. If your indexed rate drops below 85% on new posts, something is wrong upstream — either crawl budget is constrained, the content is being flagged as low-quality, or your sitemap submission is broken. This is the earliest warning signal in the system.

Ranking velocity. Track how quickly new posts enter the top 50 for their target keyword. A healthy automated content program sees posts entering ranking positions within two to four weeks. Posts that don't move in 60 days typically have one of three problems: the keyword is too competitive for your domain authority, the intent match is off, or the content has an E-E-A-T gap that's suppressing it.

Organic CTR. This is where the AI Overviews impact hits hardest. When Google rolled out AI Overviews, organic CTR on affected queries dropped from 1.76% to 0.61% — a structural change, not a dip. If your autoblogged content is targeting queries that AI Overviews fully answer, you're publishing into a traffic void. I now run a pre-publish filter: would an AI Overview fully answer this? If yes, we either add a human angle that goes beyond what the Overview covers, or we don't publish it.

Engagement signals. Time on page, scroll depth, and return visit rate. These are the behavioral signals that determine whether early rankings hold. A post that ranks in position 8 but has a 15-second average session duration will fall. A post in position 12 with three-minute average sessions and 40% scroll depth will climb. Google's re-ranking systems are upstream of performance metrics — behavioral signals drive outcomes, not the other way around.

A simple dashboard setup that works: Google Search Console for indexed rate and ranking data, GA4 for engagement signals, and a spreadsheet tracking publish date, target keyword, first indexed date, 30-day rank, 60-day rank, and average session duration. That's it. Anything more elaborate and you're optimizing the dashboard instead of the content.

For SEO ROI calculation, the frame I use is straightforward: cost per post (writer time + tool costs + editor time) divided by the organic traffic value that post generates at 90 days (estimated traffic × average CPC for that keyword). Posts below a 3x return at 90 days get audited. Posts below 1x get consolidated or redirected.

What this means in practice: autoblogging works as a scaling mechanism when the measurement system is tight enough to catch failures early. Without that feedback loop, you're not running a content program — you're running a content lottery.

FAQ

Is autoblogging against Google's guidelines? Automated content generation is not inherently against Google's guidelines — Google's stance, updated in its spam policies, is that content produced primarily to manipulate search rankings is the problem, regardless of how it was created. AI-assisted content that provides genuine value to users is treated the same as human-written content. The risk comes from publishing at scale without editorial oversight, which produces the thin, undifferentiated content Google's quality systems are increasingly good at identifying.

How many posts per week can an autoblogging system realistically handle with quality controls? The pattern I keep seeing is that teams with one dedicated editor can maintain quality across 15 to 20 posts per week using the 10-minute review process. Beyond that, you need either additional editorial capacity or a tighter pre-publish filter that reduces the volume of posts requiring full review.

Does Google penalize AI-written content? Google penalizes low-quality content and spam. The origin — human or AI — is not the criterion. What Google's systems evaluate are quality signals: E-E-A-T indicators, behavioral engagement, factual accuracy, and uniqueness of perspective. AI-written content that passes those tests ranks. AI-written content that doesn't, doesn't — same as human-written content.

What's the minimum viable autoblogging setup for a small team? Keyword research tool (Ahrefs or Semrush), an LLM with a well-structured prompt template, one editor spending two to three hours per day on review, and Google Search Console for monitoring. That's genuinely sufficient to publish 10 to 15 posts per week with defensible quality.

How do AI Overviews affect autoblogging ROI? Significantly, for informational queries. When organic CTR drops from 1.76% to 0.61% on Overview-affected queries, the traffic math changes entirely. The autoblogging use cases that still generate strong ROI are: comparison content, specific how-to queries with procedural depth, opinion-driven pieces, and content targeting long-tail queries that AI Overviews don't yet cover reliably.

Should I block Google-Extended from crawling my autoblogged content? This is a nuanced call. Blocking Google-Extended (Google's AI training crawler) via robots.txt prevents your content from being used in AI training data, but it doesn't affect how Googlebot indexes or ranks your pages. For most publishers, the ranking benefit of being indexed outweighs the training-data concern. The exception: if your content contains proprietary research or original data you don't want absorbed into future AI models, selective blocking makes sense.

About the Author

Judy Zhou, Head of Content Strategy

Judy Zhou leads content strategy at Meev, where she oversees AI-driven content research and publishing for hundreds of brands. With a background in SEO and editorial operations, she focuses on building content systems that rank on Google, get cited by AI search engines, and drive measurable business results.

See how Meev's human-in-the-loop publishing pipeline handles AI blog automation at scale — without the E-E-A-T gaps that sink most autoblog programs.