By Judy Zhou, Head of Content Strategy

Key Takeaways

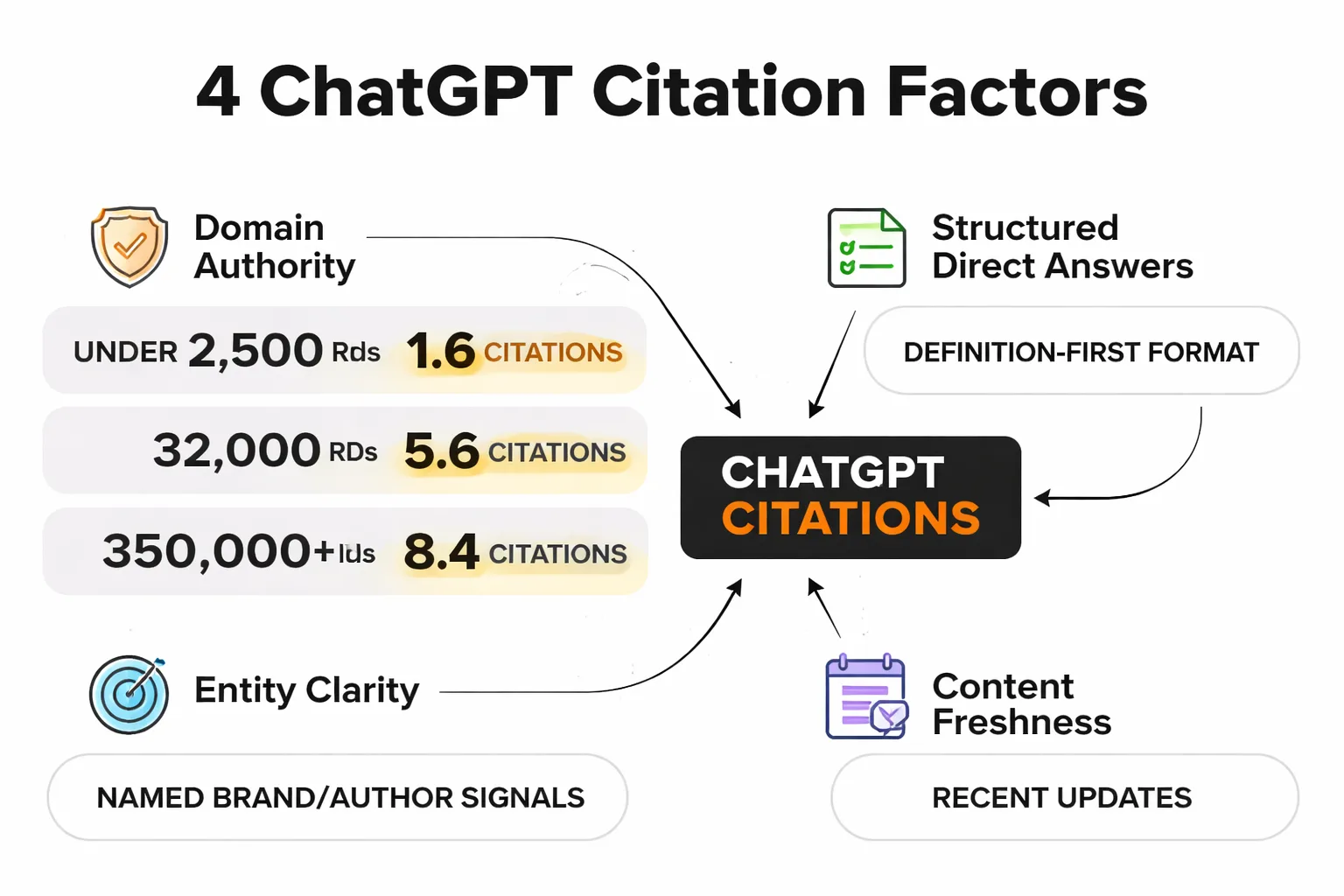

- Sites crossing 32,000 referring domains see ChatGPT citation rates nearly double (from 2.9 to 5.6 average citations), according to SE Ranking's analysis of 129,000 domains — making domain authority the single most measurable citation lever.

- Only 32.3% of ChatGPT citations are realistically influenceable through SEO or outreach — the rest are locked up by Wikipedia, Reuters, and institutional sources you can't displace.

- ChatGPT doesn't 'rank' pages the way Google does — it recalls entities, so brand consistency, named authorship, and cross-web citation signals matter as much as on-page optimization.

- The highest-ROI short-term move is reformatting existing content with definition-first paragraphs, FAQ blocks with 50-120 word answers, and FAQ schema markup — these structural changes can improve Browse-mode citation rates within 2-4 weeks.

Most advice on ranking in ChatGPT is guesswork dressed up as strategy.

I've read dozens of posts promising to reveal how to rank in ChatGPT, and most of them recycle the same five bullet points: write clearly, use headers, build backlinks, add schema, be authoritative. That's not wrong exactly — it's just incomplete in ways that matter. The real picture is messier, more specific, and frankly more actionable once you understand what's actually driving ChatGPT citation decisions.

Domain authority has a measurable threshold effect on ChatGPT citations. Wikipedia holds 16.3% of ChatGPT's citation share — far ahead of any other single source. Only 32.3% of ChatGPT citations are realistically influenceable through SEO or outreach. Sites crossing 32,000 referring domains see citation rates nearly double, from 2.9 to 5.6 citations on average.

Those numbers come from real research — and they should reshape how you think about this problem entirely.

Why 'Ranking' in ChatGPT Is the Wrong Mental Model (And What to Use Instead)

ChatGPT doesn't rank content the way Google does. There's no single ranked index you climb, no crawl budget to manage, no PageRank flowing through a link graph. When you ask ChatGPT a question, it's doing one of two things: drawing on its training data (a static snapshot with a knowledge cutoff), or using Browse to retrieve live web pages and synthesize an answer from them. Perplexity and similar answer engines work like that second mode by default, running a retrieval-augmented generation (RAG) pipeline that pulls chunks of text and synthesizes them. These are fundamentally different mechanisms, and optimizing for the wrong one is the most common mistake I see.

For most conversational queries without Browse enabled, ChatGPT is working from training data. That means the question isn't "does my page rank on Google today?" — it's "was my content prominent, clear, and widely referenced enough to make it into the training corpus and get weighted appropriately?" That's a very different problem. When Browse IS enabled, you're back in familiar territory: the model fetches URLs and synthesizes from what it retrieves, so traditional page-level signals matter more. The practical implication is that your AEO vs. SEO strategy needs to account for both modes — not just the one you're currently testing.

The mental model I've started using with my team: think of ChatGPT citation as brand recall, not page ranking. Google rewards pages. LLMs recall entities. If your brand, your content, and your named expertise don't appear consistently across the web — in citations, in third-party articles, in Wikipedia edits, in forum threads — the model has weak signal about who you are. You're not competing for a position. You're competing for salience in a probabilistic memory system.

The 4 Factors That Correlate With ChatGPT Citations

1. Domain authority — with a specific threshold that matters

SE Ranking's analysis of 129,000 unique domains across 216,524 pages found that sites with over 350,000 referring domains averaged 8.4 ChatGPT citations, while those with up to 2,500 referring domains averaged just 1.6 to 1.8. The finding that stopped me cold was the threshold effect at 32,000 referring domains — citations nearly doubled at that inflection point, jumping from 2.9 to 5.6. Below that threshold, incremental link building produces marginal citation gains. Above it, the model appears to treat your domain as a reliable entity worth referencing. Sites with Domain Trust scores below 43 averaged 1.6 citations — essentially invisible. This isn't a reason to give up if you're a smaller site. It's a reason to be strategic about where you concentrate authority signals rather than spreading thin.

2. Structured direct answers

LLMs are extractors, not readers. They pull the most parseable, self-contained answer from a block of text. Pages that open with a clear definition or direct answer to the implied question — before any context, caveats, or background — get extracted more reliably. I've tested this pattern repeatedly when optimizing content for AI Overviews and ChatGPT Browse mode: a 40-60 word direct answer in the first paragraph of a section outperforms a 300-word discursive treatment of the same topic, even when the longer version is objectively more thorough.
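If you want to check this across many pages before reformatting, a rough length test is enough to flag candidates. Below is a minimal Python sketch, assuming sections are plain text with paragraphs separated by blank lines. The function names are hypothetical, and the 40-60 word band is the heuristic from my own testing, not a documented model threshold.

```python
import re

# Heuristic band for the opening direct answer (40-60 words), taken from the
# guideline above; it is not a documented threshold of any model.
MIN_WORDS, MAX_WORDS = 40, 60


def first_paragraph(section_text: str) -> str:
    """Return the first non-empty paragraph; paragraphs are split on blank lines."""
    for block in re.split(r"\n\s*\n", section_text.strip()):
        if block.strip():
            return block.strip()
    return ""


def word_count(text: str) -> int:
    """Count words with a simple whitespace split."""
    return len(text.split())


def has_direct_answer(section_text: str) -> bool:
    """True if the section opens with a paragraph inside the 40-60 word band."""
    return MIN_WORDS <= word_count(first_paragraph(section_text)) <= MAX_WORDS


if __name__ == "__main__":
    section = " ".join(["word"] * 50) + "\n\nBackground and caveats follow here."
    print(has_direct_answer(section))
```

A whitespace split slightly miscounts hyphenated terms and numbers, which is fine for flagging pages but not for precise editing.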

3. Entity clarity

ChatGPT doesn't just cite pages — it cites sources it can identify as entities. That means your brand name, your authors, and your topical focus need to be consistent and recognizable across the web. Ahrefs' Brand Radar analysis found that homepages account for 23.8% of ChatGPT citations and educational pages account for 19.4% — both page types where entity identity is unambiguous. Reviews and media pages account for 11%. The pattern is clear: pages where the "who" and "what" are obvious get cited more. Named authorship, consistent brand signals, and clear topical focus aren't soft E-E-A-T hygiene — they're citation mechanics.

4. Content freshness

With Browse enabled, freshness matters directly. Without it, freshness only mattered at training time: content that was heavily linked and referenced before the training cutoff carries weight, while content published after the cutoff isn't in the model's training data at all. The practical implication: if you're trying to influence ChatGPT's knowledge base for the next model generation, you need to be building citation footprints now, not after the cutoff. This is a longer game than most content teams are playing.

Content Formatting That LLMs Prefer

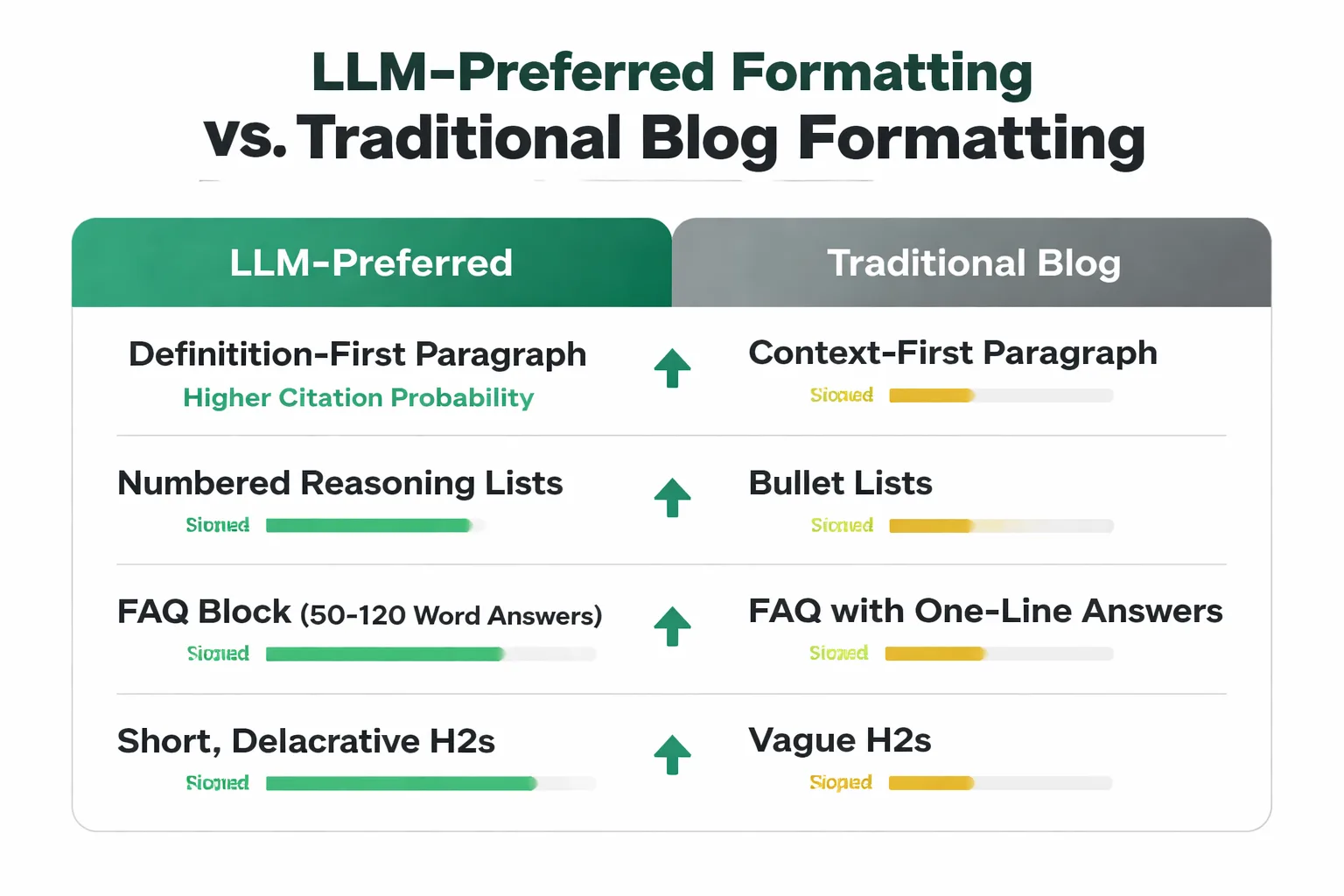

The formatting advice I give for LLM optimization is different from what I'd give for traditional SEO, and the difference is meaningful. Traditional SEO often rewards longer, more comprehensive treatments. LLM citation optimization rewards parseable, extractable units of meaning.

Definition-first paragraphs. Every section that introduces a concept should open with a direct definition or claim — not background, not context, not "many experts believe." The first sentence of any paragraph is the highest-probability extraction point. If that sentence is vague or scene-setting, the LLM skips to the next chunk. If it's a concrete, attributable claim, it gets pulled.

Numbered reasoning. When you're explaining a process or a set of factors, numbered lists tend to extract better than bullet lists, in my experience, because they signal sequence and completeness. A model that's synthesizing an answer wants to know "here are the three things," not "here are some things." Use numbered lists for anything where order or count matters. Use prose for nuance.

FAQ blocks with substantive answers. This is one of the highest-ROI structural moves for earning ChatGPT citations. FAQ sections with 50-120 word answers per question give LLMs a pre-packaged Q&A format that maps directly onto how users prompt ChatGPT. The question becomes the trigger; the answer becomes the citation. I've seen this pattern pull citations in Browse mode on queries that the main article text wasn't capturing. The key is that each FAQ answer must be self-contained: no "as mentioned above," no references to other sections.
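A quick way to enforce both rules before publishing is a lint-style check on each answer. This is a hypothetical sketch, not part of any tool: it counts words with a whitespace split and looks for a short, hand-picked list of cross-reference phrases that you'd extend for your own style.

```python
# Phrases that make an answer lean on surrounding text. This list is a
# hypothetical starting point; extend it for your own house style.
CROSS_REFERENCES = ("as mentioned above", "see above", "as noted earlier", "see below")


def faq_answer_issues(answer: str, min_words: int = 50, max_words: int = 120) -> list[str]:
    """Return problems with one FAQ answer: length outside the band or cross-references."""
    issues = []
    n = len(answer.split())
    if n < min_words:
        issues.append(f"too short ({n} words)")
    elif n > max_words:
        issues.append(f"too long ({n} words)")
    lowered = answer.lower()
    for phrase in CROSS_REFERENCES:
        if phrase in lowered:
            issues.append(f"cross-reference: {phrase!r}")
    return issues


if __name__ == "__main__":
    print(faq_answer_issues("As mentioned above, it depends."))
```

An empty list means the answer passes both checks.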

Short, declarative H2s. Heading text gets weighted as a signal for what the section is about. Vague headings like "Our Approach" or "Key Considerations" give the model nothing to work with. Specific headings like "Sites With 32,000+ Referring Domains Get Cited Nearly Twice as Often" give it an extractable claim before the section even starts. This is the same principle behind why optimizing content for Google AI Overviews requires heading specificity: the extraction logic is similar.

Structured data for FAQs and forums. One underused signal: schema markup for Q&A content. When a page has FAQ schema (schema.org FAQPage), Browse-mode LLMs may be able to parse the structured Q&A directly rather than inferring it from prose. For community Q&A content such as forum threads, where users submit the answers, the matching schema.org type is QAPage rather than FAQPage; marking those pages up can improve LLM readability in the same way.
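For reference, here's a minimal Python sketch that builds FAQPage JSON-LD using the schema.org Question, acceptedAnswer, and Answer structure. The helper names are hypothetical; run the output through a schema validator before shipping, and use QAPage instead for community Q&A pages.

```python
import json


def faq_page_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


def script_tag(jsonld: str) -> str:
    """Wrap JSON-LD in a script element, escaping "</" so no string can end the tag early."""
    safe = jsonld.replace("</", "<\\/")  # "<\/" is a valid JSON escape for "/"
    return f'<script type="application/ld+json">\n{safe}\n</script>'


if __name__ == "__main__":
    pairs = [("How long does LLM optimization take?",
              "Browse-mode changes can show impact within 2-4 weeks.")]
    print(script_tag(faq_page_jsonld(pairs)))
```

The escaping step matters only if an answer happens to contain markup like "</script>", but it costs nothing to keep.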

How to Test Whether ChatGPT Is Citing You

Most teams skip this step entirely. They publish content, assume it's working, and never verify. Here's the systematic method I use.

Step 1: Enable Browse mode. Open ChatGPT with web browsing (search) enabled, or use Perplexity for a more transparent citation view. This tests real-time retrieval, not training data, which is the mode you can actually influence in the short term.

Step 2: Build a query list from your target topics. Take your top 20-30 content pieces and distill each into 2-3 natural-language questions a user would actually ask. Don't use your exact title — use the implied question behind it. "How to rank in ChatGPT" becomes "how does ChatGPT decide which sources to cite?" and "what makes a website get referenced by ChatGPT?"

Step 3: Run each query and log the citations. Create a simple spreadsheet: query, cited domains, cited URLs, whether your domain appeared. Run this weekly or bi-weekly. The pattern you're looking for isn't just "did we get cited" — it's "which query types cite us vs. don't cite us?" That gap tells you where your content formatting or entity clarity is failing.
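If the spreadsheet gets unwieldy, the same log works as a CSV. The sketch below is assumption-heavy: the column names, the extra query_type column, and the helper functions are hypothetical, and you still paste in the cited URLs by hand from each ChatGPT or Perplexity answer. It computes the per-query-type citation rate that surfaces the gap described above.

```python
import csv
from collections import defaultdict
from urllib.parse import urlparse

# Hypothetical column layout: the spreadsheet fields above plus a query_type
# column so citation rates can be compared across kinds of questions.
FIELDS = ["date", "query", "query_type", "cited_domains", "cited_urls", "our_domain_cited"]


def normalize_domain(host: str) -> str:
    """Lowercase a host and drop a leading "www." (other subdomains stay distinct)."""
    return host.lower().removeprefix("www.")


def make_row(date: str, query: str, query_type: str,
             cited_urls: list[str], our_domain: str) -> dict:
    """Build one audit row from the URLs an answer cited."""
    domains = sorted({normalize_domain(urlparse(u).netloc) for u in cited_urls})
    return {
        "date": date,
        "query": query,
        "query_type": query_type,
        "cited_domains": ";".join(domains),
        "cited_urls": ";".join(cited_urls),
        "our_domain_cited": normalize_domain(our_domain) in domains,
    }


def write_log(path: str, rows: list[dict]) -> None:
    """Write audit rows to CSV (booleans are stored as "True"/"False")."""
    with open(path, "w", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=FIELDS)
        writer.writeheader()
        writer.writerows(rows)


def citation_rate_by_type(rows: list[dict]) -> dict[str, float]:
    """Share of queries, per query type, where our domain was cited."""
    hits: dict[str, int] = defaultdict(int)
    totals: dict[str, int] = defaultdict(int)
    for row in rows:
        # str() handles both live booleans and "True"/"False" read back from CSV.
        cited = str(row["our_domain_cited"]).lower() == "true"
        totals[row["query_type"]] += 1
        hits[row["query_type"]] += cited
    return {t: hits[t] / totals[t] for t in totals}
```

Comparing the string form rather than relying on truthiness matters once you reload the log, because the string "False" is truthy in Python.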

Step 4: Cross-reference with Perplexity. Perplexity shows citations more transparently than ChatGPT. Run the same queries in both tools. If Perplexity cites you but ChatGPT doesn't, the issue is likely entity recognition in training data. If neither cites you, the issue is page-level formatting or domain authority. If ChatGPT cites you but Perplexity doesn't, you may have strong training data presence but weak real-time retrieval signals.
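The cross-reference rules above are simple enough to encode directly, which helps when you're triaging dozens of queries. This is a sketch of my heuristic, not an established diagnostic; the function names are hypothetical and the labels are shorthand for the explanations in Step 4.

```python
from collections import Counter


def diagnose(chatgpt_cites: bool, perplexity_cites: bool) -> str:
    """Map one query's citation pattern to the likely issue (heuristic from Step 4)."""
    if perplexity_cites and not chatgpt_cites:
        return "entity recognition in training data"
    if chatgpt_cites and not perplexity_cites:
        return "weak real-time retrieval signals"
    if not chatgpt_cites and not perplexity_cites:
        return "page-level formatting or domain authority"
    return "cited by both"


def summarize(results: list[tuple[str, bool, bool]]) -> Counter:
    """Tally diagnoses over (query, chatgpt_cites, perplexity_cites) results."""
    return Counter(diagnose(chatgpt, perplexity) for _query, chatgpt, perplexity in results)
```

The tally is the useful part: if most misses land in one bucket, that tells you whether to prioritize formatting, entity work, or retrieval.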

Step 5: Track the 32.3% opportunity. Ahrefs' analysis found that only 32.3% of ChatGPT citations are realistically influenceable through SEO or outreach — the rest are dominated by Wikipedia, major news outlets, and institutional sources. Focus your audit on queries where the citation pool is actually contestable. Trying to displace Reuters on a breaking news query is not a winnable battle. Trying to become the cited source on a niche technical question in your domain is.
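To make "contestable" concrete per query, one option is to measure what share of a query's citations go to sources outside an entrenched set. This is a hypothetical sketch: the ENTRENCHED set and the 0.5 cutoff are placeholders to tune against your own audit, and a per-query share is only a rough proxy for the aggregate 32.3% figure.

```python
# Hypothetical set of sources treated as effectively uncontestable. Start with
# the examples named above and add whatever dominates your own audit.
ENTRENCHED = {"wikipedia.org", "reuters.com"}


def is_entrenched(domain: str) -> bool:
    """True for an entrenched domain or any of its subdomains (e.g. en.wikipedia.org)."""
    domain = domain.lower()
    return any(domain == d or domain.endswith("." + d) for d in ENTRENCHED)


def contestable_share(cited_domains: list[str]) -> float:
    """Fraction of a query's citations that go to non-entrenched sources."""
    if not cited_domains:
        return 0.0
    open_slots = [d for d in cited_domains if not is_entrenched(d)]
    return len(open_slots) / len(cited_domains)


def contestable_queries(audit: dict[str, list[str]], min_share: float = 0.5) -> list[str]:
    """Queries whose citation pool is at least min_share non-entrenched (placeholder cutoff)."""
    return [q for q, domains in audit.items() if contestable_share(domains) >= min_share]
```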

Curious how your content stacks up against ChatGPT's citation criteria right now?

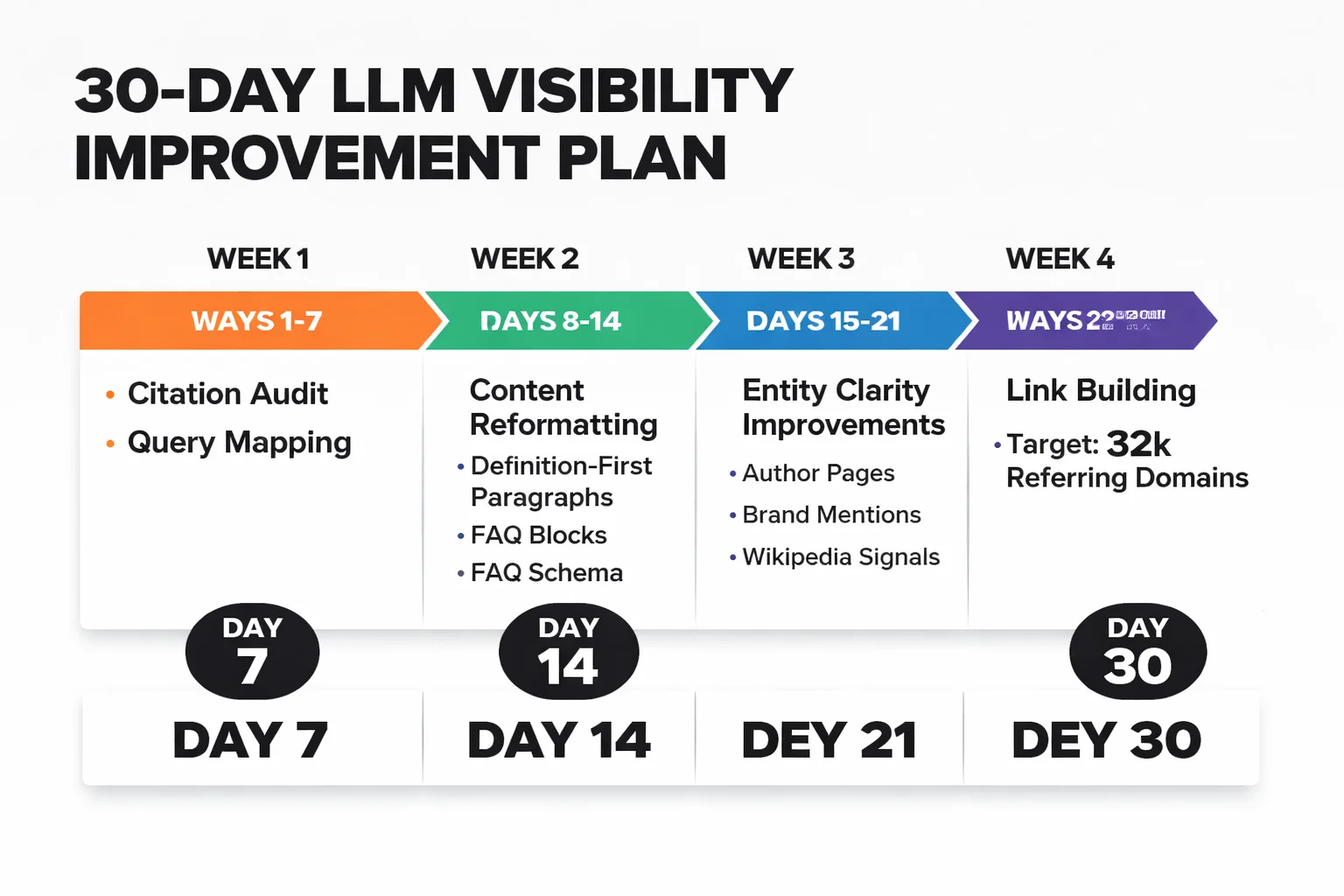

The 30-Day Action Plan to Improve Your LLM Visibility

This isn't a 30-day plan that produces 30-day results. LLM citation influence — especially for training data — operates on a 6-12 month cycle. What you can move in 30 days is your Browse-mode citation footprint and your structural readiness for the next model update.

Week 1 — Audit and prioritize (Days 1-7)

Run the citation audit described above. Map your current citation footprint across ChatGPT Browse and Perplexity. Identify your top 10 content pieces by organic traffic and check whether any are being cited. Build a gap list: high-traffic pages with zero LLM citations are your priority targets. Also check your domain's referring domain count against that 32,000 threshold — if you're significantly below it, link building needs to be a parallel workstream, not an afterthought.
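The gap list and the threshold check are easy to script once you've exported traffic and audit data. This sketch assumes a hypothetical Page record that joins analytics traffic with the citation count from your audit; 32,000 is the inflection point from SE Ranking's data, used here as a planning marker rather than a hard cutoff.

```python
from dataclasses import dataclass

# Inflection point from SE Ranking's data, used as a planning marker.
REFERRING_DOMAIN_THRESHOLD = 32_000


@dataclass
class Page:
    """Hypothetical record joining analytics traffic with audit results."""
    url: str
    organic_traffic: int
    llm_citations: int  # citations found across ChatGPT Browse and Perplexity


def gap_list(pages: list[Page], top_n: int = 10) -> list[str]:
    """URLs among the top_n pages by organic traffic that have zero LLM citations."""
    top = sorted(pages, key=lambda p: p.organic_traffic, reverse=True)[:top_n]
    return [p.url for p in top if p.llm_citations == 0]


def referring_domains_to_threshold(referring_domains: int) -> int:
    """How many more referring domains are needed to reach the threshold (0 if past it)."""
    return max(0, REFERRING_DOMAIN_THRESHOLD - referring_domains)
```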

Week 2 — Reformat for extraction (Days 8-14)

Take your top 5 gap pages and reformat them: add a 40-60 word direct answer in the opening paragraph, restructure body sections to open with declarative claims, add a FAQ block with 4-6 substantive Q&A pairs, and implement FAQ schema markup. This is the highest-leverage short-term move for earning ChatGPT citations. Don't rewrite the content; restructure the existing content so it's more parseable. The goal is extraction readiness, not comprehensiveness. If you use an AI content platform, its automation features can speed up this reformatting across many pages at once.

Week 3 — Strengthen entity signals (Days 15-21)

Audit your author pages, your About page, and your brand's Wikipedia presence (or lack thereof). Named authorship on every article is non-negotiable; anonymous content has weak entity signal. If your brand doesn't have a Wikipedia article, check whether it meets Wikipedia's notability guidelines, and consider contributing to relevant Wikipedia pages in your niche. Tread carefully with your own content there: Wikipedia's conflict-of-interest guideline discourages editing to promote your own organization, so cite your work only where it's genuinely the best source. Check that your brand name, domain, and topical focus are consistent across your site, your social profiles, and third-party mentions. Inconsistency is a silent citation killer.

Week 4 — Build the link foundation (Days 22-30)

With the 32,000 referring domain threshold in mind, assess your current position. If you're at 5,000 referring domains, you're not reaching 32,000 in 30 days, but you can start the trajectory. Focus link building on the highest-authority domains that are topically relevant: industry publications, academic sources, and authoritative blogs in your niche. In my experience, a single link from a domain with 500,000+ referring domains likely does more for your ChatGPT citation probability than 50 links from domains with 1,000 referring domains each. This is the same principle behind why over-linking from weak pages dilutes rather than builds authority.

What to measure at Day 30

Re-run your citation audit. Track: number of queries where your domain now appears (Browse mode), number of FAQ blocks indexed with schema, referring domain count delta, and — if you have access to a tool like Ahrefs Brand Radar — your share of ChatGPT mentions by topic. Don't expect dramatic movement in 30 days on training-data-dependent queries. Do expect measurable improvement in Browse-mode citation rates for the pages you reformatted.
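For the Browse-mode comparison, a small diff between the baseline and Day 30 audits shows which queries you gained and lost. This sketch assumes each audit is stored as a hypothetical mapping from query to whether your domain was cited, and it only compares queries present in both runs so new queries don't inflate the count.

```python
def audit_delta(baseline: dict[str, bool], day30: dict[str, bool]) -> dict:
    """Compare two Browse-mode audits keyed by query (True = our domain cited)."""
    shared = baseline.keys() & day30.keys()
    return {
        "cited_before": sum(baseline[q] for q in shared),
        "cited_after": sum(day30[q] for q in shared),
        "gained": sorted(q for q in shared if day30[q] and not baseline[q]),
        "lost": sorted(q for q in shared if baseline[q] and not day30[q]),
    }
```

Tracking losses alongside gains matters because Browse-mode citations can fluctuate between runs even when nothing on your site changed.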

The LLM content optimization work that actually compounds is the kind that makes your content more citable across every channel simultaneously: clearer structure, stronger entity signals, higher domain authority. The teams I see making real progress aren't chasing ChatGPT specifically. They're building content systems that are genuinely harder to ignore, across Google, AI Overviews, Perplexity, and ChatGPT at once. That's a more durable bet than any single-channel tactic.

Frequently Asked Questions

Does Google ranking position affect ChatGPT citation probability?

Not directly, and this is a common misconception. SE Ranking's research measured citation frequency by domain authority metrics (specifically referring domain counts and Domain Trust scores), not by Google ranking position. By that measure, a page ranked #1 on Google from a low-authority domain is likely less citable by ChatGPT than a page ranked #5 from a domain with 50,000+ referring domains. The two signals are correlated but not equivalent. Focus on domain-level authority, not page-level ranking position, when optimizing for LLM citations.

Can AI-generated content get cited by ChatGPT?

There's no evidence that ChatGPT discriminates between human-written and AI-generated content at the citation level. What matters is whether the content is clear, structured, and published on an authoritative domain. Detection would be a shaky basis for filtering anyway: researchers at UMD have concluded that current AI detectors are unreliable in practical scenarios. The quality and authority signals are what drive citation probability, regardless of how the content was produced.

Why does Wikipedia dominate ChatGPT citations so heavily?

Wikipedia's citation dominance (16.3% mention share according to one analysis, and as high as 29.7% in other datasets measuring top cited pages) reflects two things: its massive domain authority, backed by an enormous number of referring domains, and its structured, neutral, definition-first writing style, which maps closely onto LLM extraction patterns. Wikipedia articles are also heavily cross-linked and cited across the web, which reinforces their training data weight. You can't out-Wikipedia Wikipedia, but you can borrow its structural patterns.

Does ChatGPT cite pages it can't access or that block AI crawlers?

In Browse mode, no: ChatGPT can only cite pages it can retrieve. If your robots.txt blocks OpenAI's search crawler (OAI-SearchBot), you're excluding yourself from real-time retrieval. Note that Google-Extended is Google's control for its own AI models and has no effect on OpenAI's crawlers. For training data, the situation is more complex: content that was accessible before a crawl cutoff may still appear in the model's knowledge base even if you block crawlers such as GPTBot afterward. The practical advice: don't block AI crawlers unless you have a specific reason to, and audit your robots.txt for unintended exclusions.
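To audit robots.txt for unintended exclusions, Python's standard-library urllib.robotparser can evaluate your rules for specific user agents. This sketch checks three user agents OpenAI documents (GPTBot, OAI-SearchBot, ChatGPT-User); the list is my assumption about which agents matter, so confirm the names and how each one treats robots.txt against OpenAI's current crawler documentation.

```python
from urllib.robotparser import RobotFileParser

# User agents OpenAI documents for its crawlers: GPTBot (model training),
# OAI-SearchBot (ChatGPT search), ChatGPT-User (user-initiated fetches).
# Treat this list as an assumption and check OpenAI's current documentation.
OPENAI_AGENTS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User")


def blocked_agents(robots_txt: str, url: str, agents: tuple[str, ...] = OPENAI_AGENTS) -> list[str]:
    """Return the agents that the given robots.txt rules disallow for url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


if __name__ == "__main__":
    rules = "User-agent: GPTBot\nDisallow: /\n\nUser-agent: *\nAllow: /\n"
    print(blocked_agents(rules, "https://example.com/blog/post"))
```

Pass in the text of your live robots.txt and a representative URL, such as a post you want cited. Note that Python's parser implements the basic prefix-matching rules and may not interpret wildcard patterns the way a given crawler does.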

How long does it take to see results from LLM optimization?

For Browse-mode citations, structural improvements (FAQ blocks, definition-first formatting, schema markup) can show measurable impact within 2-4 weeks. For training-data-dependent citations, you're working on a timeline tied to model update cycles — typically 6-18 months. The most durable approach is to treat LLM visibility as a byproduct of genuine topical authority: consistent publishing, strong domain authority, clear entity signals. Those compound over time in ways that single-tactic optimization doesn't.

About the Author

Judy Zhou, Head of Content Strategy

Judy Zhou leads content strategy at Meev, where she oversees AI-driven content research and publishing for hundreds of brands. With a background in SEO and editorial operations, she focuses on building content systems that rank on Google, get cited by AI search engines, and drive measurable business results.

Stop guessing which content ChatGPT will cite — let Meev build you a structured, authority-first content system that's designed for LLM visibility from day one.