By Judy Zhou, Head of Content Strategy

Key Takeaways

- Only 12% of URLs cited by AI search engines appear in Google's top 10 results — meaning strong Google rankings give you almost no guarantee of AI citation visibility.

- AI citation drops trace back to three specific root causes: source freshness decay, competitor content displacing yours in the retrieval pool, and E-E-A-T signal degradation from domain or author changes.

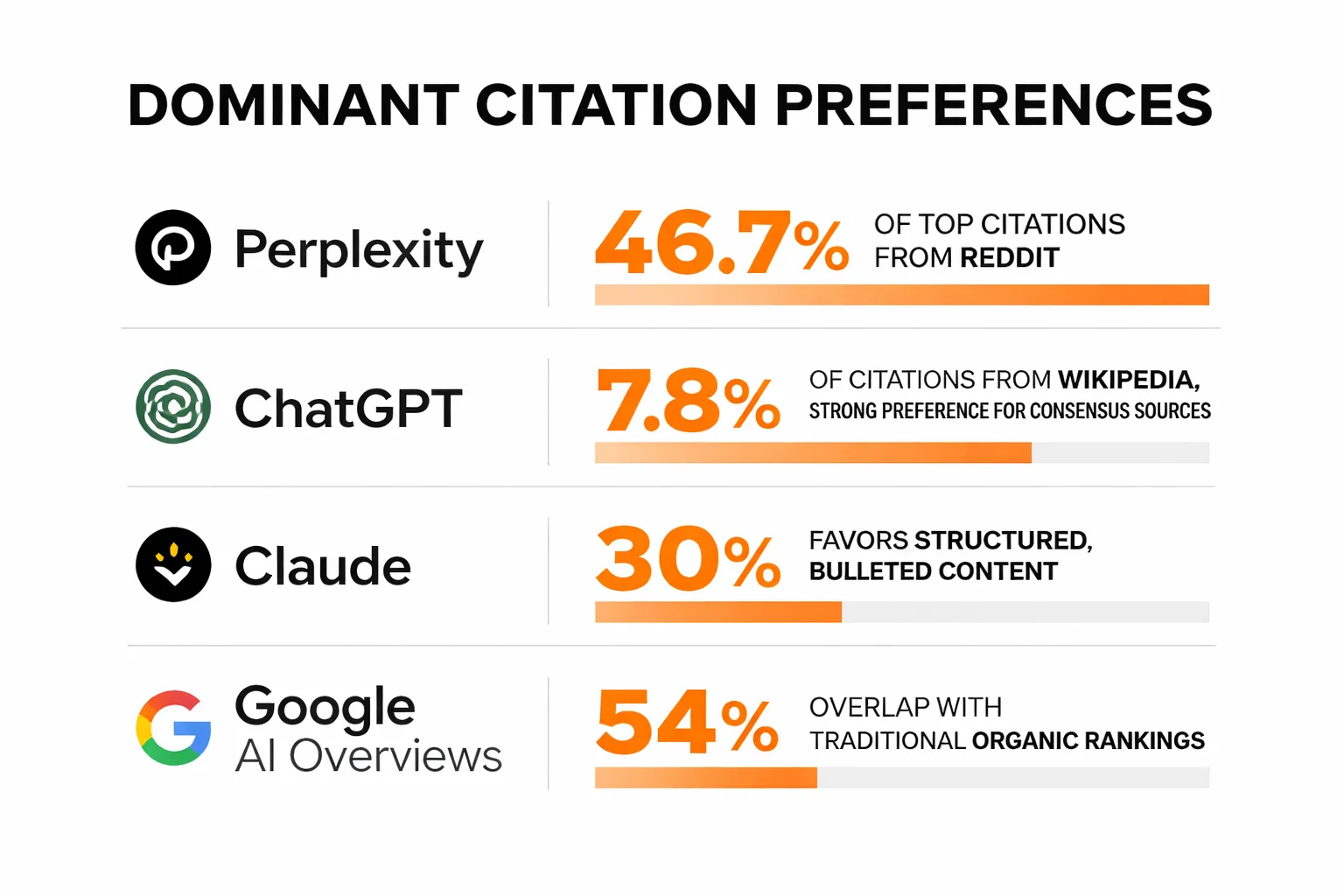

- Platform citation behavior varies dramatically: Perplexity draws 46.7% of citations from Reddit, ChatGPT favors Wikipedia-style consensus sources, and Claude is 30% more likely to cite bullet-pointed pages — so there is no single optimization strategy that works across all platforms.

- The highest-leverage fix for brand mention outreach is editorial, not tactical: pitch briefs must anchor specific claims to your brand name explicitly, not just link back, or AI systems have no signal to surface you in a named recommendation.

Your brand didn't disappear from AI answers—it was replaced, quietly, by someone more credible.

That sentence lands differently once you've watched it happen in real time. One week your brand appears in Perplexity responses for your core category query. The next week, after a retrieval index refresh or a model weight update, you're gone. A competitor you've never lost to in Google search is now the named recommendation. And your traditional SEO metrics haven't moved at all. AI search visibility operates on a completely different citation logic than Google rankings. Research from Search Atlas analyzing 18,377 matched queries found that only 12% of URLs cited by AI search engines appear in Google's top 10 results. ChatGPT shows just 8% overlap with Google's top 10. Google Gemini, despite being a Google product, shows only 6%. 28% of AI-cited pages have zero organic Google visibility whatsoever. If you've been treating your Google rankings as a proxy for AI citation health, you've been reading the wrong instrument.

The Volatility Problem Nobody Talks About

The pattern I keep seeing in content audits is this: brands don't lose AI visibility gradually. They fall off cliffs.

A content team will spend months building up consistent citation presence across ChatGPT, Perplexity, and Google AI Overviews. They'll start seeing referral traffic from Perplexity in GA4. Brand mentions in AI responses become a KPI someone actually tracks. And then a model update drops, or a retrieval pipeline gets refreshed, and the citations evaporate. Not reduced. Gone. The team runs the same queries they'd been monitoring and their brand doesn't appear in the first five responses. They check their Google rankings. Everything looks fine. They check their backlink profile. No drops. Nothing in Search Console. The traditional signals are all green while the AI visibility is dead.

What makes this so disorienting is that the two systems are genuinely decoupled. The Search Atlas research makes this concrete: source overlap between any two AI engines ranges from just 16% to 59%, meaning the citation pools these platforms draw from are substantially different from each other and from Google's index. Perplexity shows 28% overlap with Google's top 10. ChatGPT shows 8%. These aren't minor variances. They're different retrieval architectures pulling from different source pools, refreshed on different schedules, weighted by different signals. A brand that's visible in one system can be invisible in another, and the reasons for each are distinct.

The volatility compounds because most content teams have no monitoring infrastructure for this. They're running manual query tests sporadically, not tracking mention frequency across platforms systematically. By the time the drop registers, the refresh cycle that caused it is already two or three iterations old. You're debugging a problem that's already moved.

3 Root Causes of Citation Drop-Off

Not every AI visibility loss has the same cause, and the fix depends entirely on which failure mode is actually hitting you. I've identified three that account for the vast majority of cases.

Source freshness decay is the most common and the least understood. AI retrieval pipelines, especially those powering live-query systems like Perplexity, weight recency heavily. An article that earned citations because it was the freshest authoritative source on a topic will lose that position when a newer piece covers the same ground. This isn't about quality degradation. Your content didn't get worse. It just got old relative to something newer in the retrieval pool. The BrightEdge citation patterns research shows that Perplexity draws 46.7% of its top citations from Reddit, a platform where content is perpetually fresh because users are posting daily. If your static blog post from 18 months ago is competing against an active Reddit thread on the same topic, freshness alone can explain why Perplexity stopped citing you.

Competitor content displacing yours in the retrieval pool is subtler. This happens when a competitor publishes content that covers your topic with more structural clarity, more specific claims, or better entity co-occurrence with the query terms. LLMs don't just retrieve content that's relevant. They retrieve content that's retrievable, meaning it's structured in ways that make extraction easy. Research from Discovered Labs found that Claude is 30% more likely to cite bullet-pointed pages. ChatGPT cites competitor websites at a rate 11.1 percentage points higher than Google does. If a competitor restructures their content to be more extractable, they can displace you in the retrieval pool without outranking you in traditional search.

E-E-A-T signal degradation is the third cause, and it's the one that tends to follow organizational changes. A site migration, an author byline removal, a domain consolidation, a CMS switch that strips structured data. Any of these can weaken the entity signals that AI systems use to assess source credibility. The generative engine optimization research published on arXiv confirms that AI search systematically favors earned media from third-party authoritative sources over brand-owned content. If your E-E-A-T signals weaken, your content slides down the retrieval hierarchy even if the text itself is unchanged.

Is your brand still appearing in AI answers after the latest model updates?

How to Diagnose Which Cause Is Hitting You

Diagnosis before remediation. Running the wrong fix wastes months.

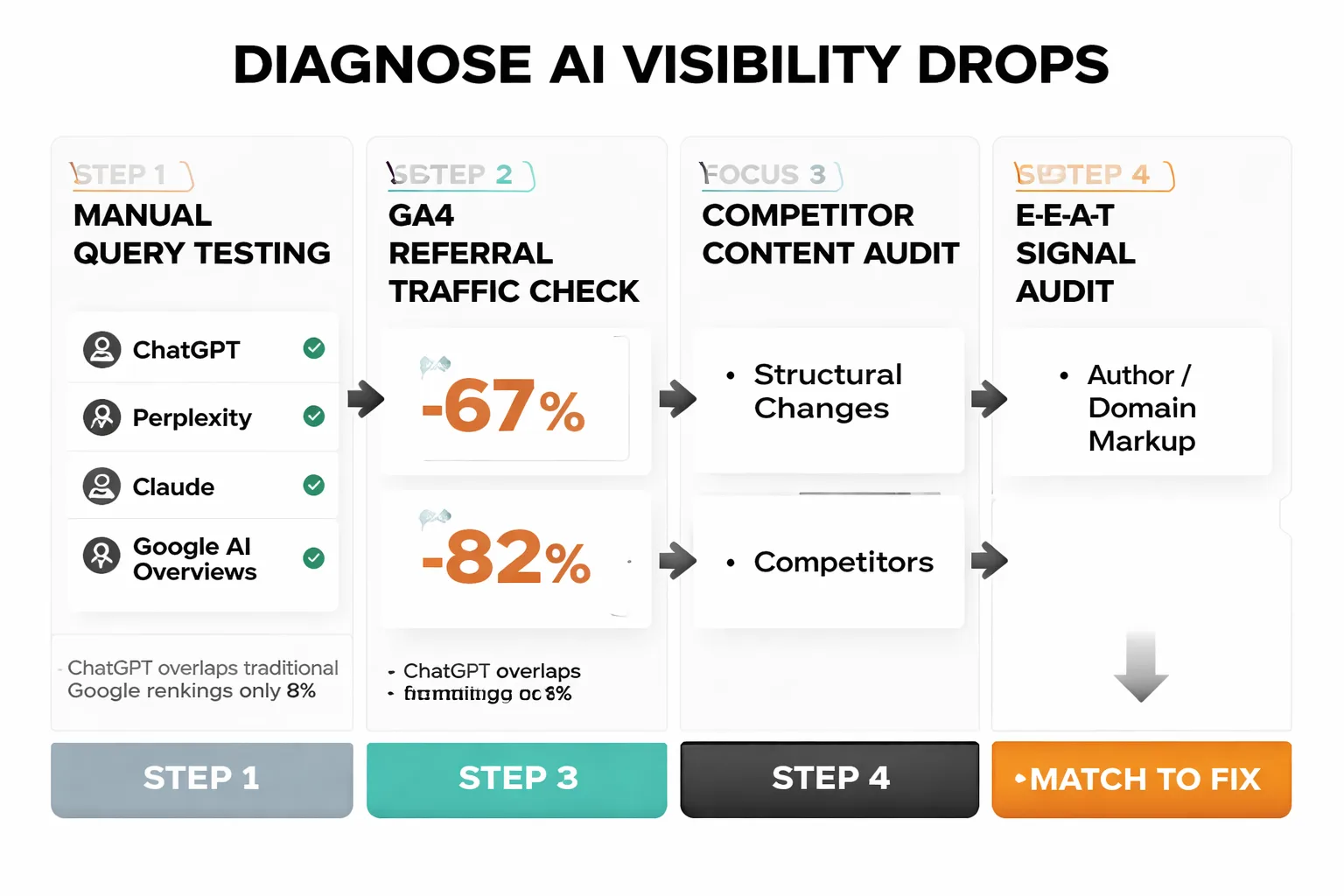

Start with manual query testing across the four major platforms: ChatGPT, Perplexity, Claude, and Google AI Overviews. Run the 10-15 queries where you previously appeared. Document which platforms still cite you and which don't. This matters because the platforms have genuinely different citation behaviors. According to the Search Atlas research, AI Overviews shows 76% overlap with traditional Google rankings, while ChatGPT shows only 8%. If you've dropped from Perplexity but still appear in AI Overviews, that's a freshness or retrieval pool problem, not an E-E-A-T problem. If you've dropped from AI Overviews specifically, your traditional SEO signals are likely involved.

Next, pull GA4 and filter referral traffic by source. Perplexity, ChatGPT, and Claude all show up as referral sources when they drive clicks. A sharp drop in referral traffic from these sources, correlated with the date you noticed the citation loss, confirms the drop is real and not a monitoring artifact. If referral traffic from Perplexity drops but referral from AI Overviews holds, you've narrowed the platform scope.

For the freshness hypothesis: find the competitor content that's now appearing where yours used to. Check its publication date and last-modified date. If it's newer than your content by more than six months and covers the same entity cluster, freshness decay is likely the culprit. For an LLM citation tracking baseline, tools like Meev's visibility platform let you monitor citation frequency across platforms over time, so you're not reconstructing history from memory.

For the E-E-A-T hypothesis: audit your content for author bylines, linked author profiles, structured data markup, and any domain or CMS changes in the six months before the drop. Check if your schema markup survived any platform migrations. Run a crawl to verify canonical tags are intact. A single CMS migration that strips JSON-LD from 200 pages can tank entity signals across your entire content library.

For the competitor displacement hypothesis: look at the structural format of the content that replaced yours. Is it more heavily bulleted? Does it include a definition block at the top? Does it open with a direct answer to the query? These structural choices matter more than most content teams realize, especially for Perplexity citations and Claude, where formatting signals are weighted heavily in retrieval selection.

Why Platform-Specific Signals Matter More Than Generic SEO

Here's the contrarian take that most GEO guides miss: there is no unified AI search optimization strategy. The platforms are too different.

Perplexity draws 46.7% of its top citations from Reddit, according to the BrightEdge data. That's not a rounding error. That's the dominant citation source for the platform that content marketers most want to appear in. ChatGPT, by contrast, pulls 7.8% of its citations from Wikipedia and shows a strong preference for consensus sources. Claude visibility favors structured, bulleted content at a rate 30% higher than unformatted prose. Google AI Overviews, as a Google product, maintains 54% overlap with traditional organic rankings, making it the one platform where conventional SEO still transfers meaningfully.

The implication is uncomfortable: a single piece of content optimized for one platform is likely underperforming on the others. A well-structured, bulleted explainer will do well on Claude but may not penetrate Perplexity's Reddit-heavy citation pool. A piece that earns strong organic rankings will surface in AI Overviews but may be invisible to ChatGPT.

I used to assume backlinks were doing the heavy lifting for AI visibility the same way they do for traditional search. That assumption was wrong. The pattern I keep seeing is that brand mentions in context outperform backlinks by a significant margin for AI citation purposes. An article that says "according to [Brand], the conversion rate for X is Y%" gets picked up in AI responses. An article that links to the brand but calls it "a recent study" does not. The model has no reliable signal to surface you by name if your name isn't co-occurring with the claim.

This is what I've started calling the mention-citation gap. Your brand earns a placement in a high-authority publication. The AI platform pulls that publication as a source. And then the response recommends a competitor by name because that competitor's name was explicitly anchored to the claim in the text, and yours wasn't. The fix isn't more placements. It's rewriting the pitch brief so the journalist anchors the claim to your brand name explicitly.

How Does the Retrieval Pipeline Actually Work?

Understanding why brands disappear requires understanding what retrieval systems are actually doing when they generate a response.

LLMs don't search the web the way a browser does. They operate through retrieval-augmented generation (RAG) pipelines that pull from pre-indexed source pools, apply relevance scoring against the query, and then pass the retrieved content to the language model for synthesis. The critical point is that the source pool itself is bounded. Not everything on the web is in the retrieval index. What gets included depends on licensing agreements, crawl frequency, domain authority thresholds, and platform-specific content policies.

This is why the Reddit data is so striking. Reddit's citation dominance across platforms, particularly its 46.7% share on Perplexity, isn't purely organic. It reflects commercial infrastructure. Reddit signed a $60M licensing deal with Google and a roughly $70M agreement with OpenAI that embedded its content directly into retrieval pipelines. That's not a content strategy. That's a data partnership that shapes what the retrieval system even has access to. Most brands will never operate at that scale, but understanding the architecture clarifies what the actionable levers actually are.

For content teams, the practical implication is this: the question isn't "how do we get AI labs to notice us." It's "are we structured well enough to appear in the retrieval layer when it does pull live sources." That means fresh content, clear entity signals, explicit brand-name co-occurrence with claims, and structural formatting that makes extraction easy. These are the inputs the retrieval layer scores on.

When Should You Prioritize Brand Mention Outreach?

Brand mention outreach is the highest-leverage tactic most content teams are underinvesting in, but only if the execution is right.

The failure mode I see constantly: a team runs a publisher pitching campaign, earns placements in DR 70+ outlets, and sees zero AI visibility lift. The placements are real. The coverage is real. The citations don't move. What went wrong is that the pitch brief was optimized for backlink acquisition, not mention co-occurrence. The resulting articles say "according to a recent study" and bury the brand name in a hyperlink. The AI retrieval system has no signal to surface the brand by name because the brand name never appears in context with the claim.

The fix is editorial, not tactical. Rewrite your pitch briefs so journalists are anchoring specific, verifiable claims to your brand name explicitly. "According to Meev's 2026 content velocity analysis, brands publishing more than 8 pieces per month see 3x the citation frequency" is a citable sentence. "A recent study found that publishing frequency matters" is not. The specificity and the named attribution are what make the difference for generative engine optimization.

For getting content cited in Perplexity specifically, the platform's heavy reliance on Reddit means that forum presence matters more than most B2B content teams expect. Authentic participation in relevant subreddits, where your brand's perspective is named and specific, creates the kind of mention co-occurrence that Perplexity's retrieval layer scores on. This isn't about spam. It's about being the named source in a community where Perplexity already looks.

The Fix: Rebuilding Citation Stability

Once you've diagnosed the root cause, the remediation is specific. Here's what works for each.

For source freshness decay: establish a content refresh cadence, not a rewrite schedule. The distinction matters. A full rewrite signals a new URL to crawlers and can temporarily reset your citation history. A refresh, updating statistics, adding a new data point, revising the publication date with a "last updated" timestamp, signals recency while preserving the URL's existing citation equity. For high-value pages, quarterly refreshes are the minimum. For pages in fast-moving categories, monthly is more appropriate. The goal is to never let a piece go more than six months without a substantive update if it's a core citation target.

For competitor displacement: conduct a structural audit of the content that replaced yours. If it's more heavily bulleted, restructure your content. If it opens with a direct definitional answer, add one. If it includes a summary table, add one. The arXiv GEO research is clear that AI search favors earned media and structured, extractable content. Match the structural signals that the retrieval layer rewards, then differentiate on depth and specificity. Being structurally competitive is table stakes. Winning on information gain is what creates durable citation advantage.

For E-E-A-T signal degradation: start with a structured data audit. Verify that JSON-LD is intact across all pages that were previously generating citations. Check author schema, article schema, and organization schema. If a CMS migration stripped these, restoring them is the highest-priority technical fix. Beyond markup, rebuild author entity signals: linked bylines, author pages with verifiable credentials, external mentions of the author in authoritative publications. These signals don't recover overnight. Budget 60-90 days to see citation recovery after E-E-A-T remediation.

Across all three root causes, the systemic fix is monitoring infrastructure. Manual query testing is not a strategy. By the time you notice a drop through manual testing, you're already weeks behind. An ai search visibility tool that tracks citation frequency across platforms on a scheduled basis gives you the early warning signal that manual testing can't. The goal is to catch a 20% citation frequency drop before it becomes a 100% disappearance.

The brands that maintain stable AI visibility aren't the ones with the highest domain authority. They're the ones running the tightest operational loops: monitoring citation frequency, refreshing content on schedule, maintaining publisher relationships so their brand name stays in active circulation in third-party content, and structuring every piece to be extractable by the retrieval systems that matter. That's not a one-time optimization. It's an ongoing content operation.

Topical authority for AI search works the same way it does in traditional SEO, but the signals are different. It's not about having the most pages on a topic. It's about having your brand name consistently co-occurring with specific claims across a range of authoritative third-party sources. The breadth of that co-occurrence is what creates citation stability across model updates. A single high-authority citation is fragile. Fifty mentions across twenty publications, each anchoring a specific claim to your brand name, is durable.

Frequently Asked Questions

How long does it take to recover AI visibility after a citation drop? Recovery time varies by root cause. Source freshness issues can resolve in 2-4 weeks once content is refreshed and re-crawled. Competitor displacement takes longer because you're competing against content that's already been indexed and scored. E-E-A-T signal recovery is the slowest: expect 60-90 days after restoring structured data and author signals before citation frequency returns to baseline. There's no shortcut here. The retrieval systems need time to re-index and re-score your content.

Does improving Google rankings help with AI citation visibility? For Google AI Overviews, yes, significantly. The Search Atlas research found 76% overlap between AI Overviews citations and Google's top 10 results. For ChatGPT and Gemini, the overlap is only 8% and 6% respectively, so traditional ranking improvements have minimal transfer. Perplexity sits at 28% overlap. The practical answer: improving Google rankings helps AI Overviews specifically, but won't fix visibility gaps in other AI platforms without platform-specific optimization.

What's the most common mistake in brand mention outreach for AI citations? Optimizing for backlink acquisition instead of mention co-occurrence. A placement that links to your brand but refers to it as "a recent study" or "one company" produces zero AI visibility lift. The brand name must appear explicitly in context with the specific claim for LLMs to surface it in a named recommendation. Rewrite your pitch briefs to anchor claims to your brand name directly.

How often should I run manual query tests to monitor AI visibility? Manual testing is better than nothing, but it's not a reliable monitoring system. Query results vary by session, location, and model version. For meaningful trend data, you need automated tracking across a fixed query set, run consistently over time. Weekly automated monitoring is the minimum for brands where AI visibility is a revenue-relevant metric. Monthly is acceptable for early-stage programs.

Does content volume hurt AI citation stability? Volume itself isn't the problem. Undifferentiated volume is. Sites that publish hundreds of pages with near-identical entity coverage and no unique data points per page are building toward a quality penalty, not away from one. The test I apply: can you extract one unique fact from each page that doesn't appear on any sibling page? If you can't pass that test on 60% or more of your inventory, the volume is working against you, not for you.

About the Author

Judy Zhou, Head of Content Strategy

Judy Zhou leads content strategy at Meev, where she oversees AI-driven content research and publishing for hundreds of brands. With a background in SEO and editorial operations, she focuses on building content systems that rank on Google, get cited by AI search engines, and drive measurable business results.

Track your citation frequency across ChatGPT, Perplexity, and Google AI Overviews before the next refresh wipes you out.