By Judy Zhou, Head of Content Strategy

Key Takeaways

- Only 12% of AI citations overlap with Google's top 10 results, meaning strong SEO rankings are a poor predictor of AI search visibility.

- Ahrefs analyzed 55.8 million AI Overviews and found brand mentions (correlation 0.664) are the single strongest predictor of AI citation — outranking backlinks and E-E-A-T signals.

- Perplexity pulls 46.7% of its citations from Reddit, while ChatGPT pulls 47.9% of its top citations from Wikipedia. Platform-specific distribution strategies are non-negotiable for AI citation coverage.

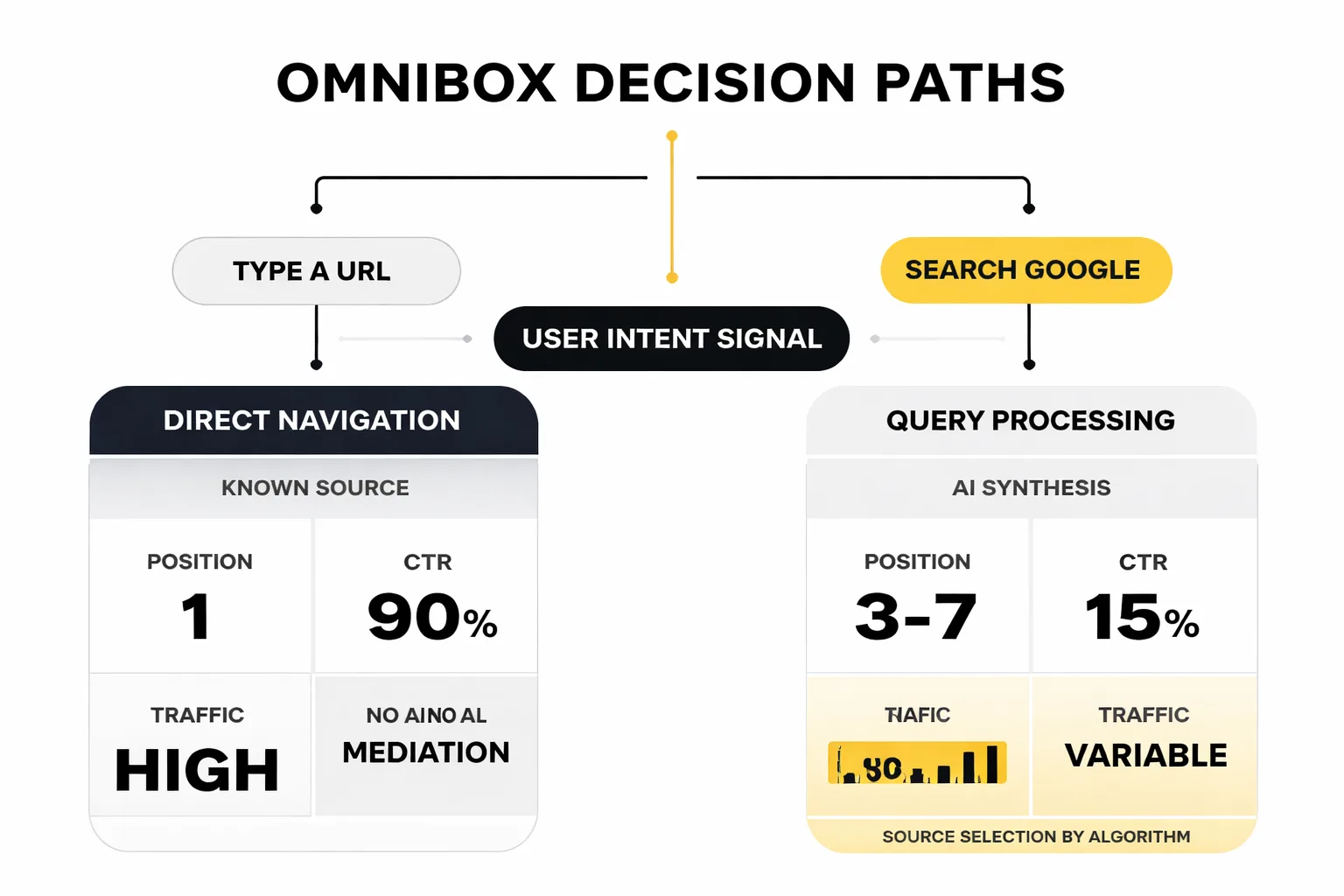

- The 'search vs. type a URL' decision is a proxy for query confidence, and AI engines are building citation hierarchies around open-ended discovery queries — not navigational ones.

In 2008, Google engineers made a quiet but consequential decision: they would merge the browser's address bar and its search box into a single input field, called the 'omnibox.' The 'Search Google or type a URL' placeholder was the user-facing manifestation of that merger, a small piece of microcopy that unified two fundamentally different user behaviors into one gesture. Eighteen years later, that merger looks like the earliest signal of a much larger convergence, one where the line between searching, answering, and arriving at a destination dissolves entirely into AI-mediated intent.

The phrase "search google or type a url" gets 60,500 monthly searches. That number stopped me cold the first time I saw it. Most people searching this phrase aren't asking a technical question — they're signaling a deeper confusion about what search even is anymore. And as someone who leads content strategy across hundreds of brands at Meev, that confusion matters enormously. Because the intent behind "do I search or go directly?" is exactly the same intent signal that AI search engines are trying to decode — and getting right or wrong — every single day.

Why This Query Has 60,500 Monthly Searches

The surface answer is simple: people see the placeholder text in Chrome and wonder what it means. But the deeper answer is that this phrase captures a genuine behavioral fork. When someone types into that bar, they're making a real-time decision about their own certainty. Do I know where I'm going? Or do I need help getting there?

That fork, known destination versus open discovery, is one of the oldest distinctions in information retrieval. Librarians call it a known-item search versus a subject search; web search researchers call it navigational versus informational intent. Google's own quality rater guidelines have used similar language for years. But what's changed in 2026 is that AI search engines don't just respond to that fork. They're actively reshaping it.

When a user types a direct URL, they've already resolved their intent. They know the source, they trust it, they're done deliberating. When they search, they're outsourcing that resolution to an intermediary. For two decades, that intermediary was a ranked list of ten blue links. Now it's increasingly a synthesized paragraph from ChatGPT, Perplexity, or Google AI Overviews — and the source selection happening inside that synthesis is where the real content strategy battle is being fought.

The 60,500 people searching this phrase every month are, in a roundabout way, asking: "Who do I trust to answer my question?" That's not a browser question. That's the central question of AI search visibility.

The Intent Fork AI Engines Are Actually Reading

Here's the contrarian take I don't see discussed enough: the distinction between "search" and "type a URL" isn't just a UX quirk. It's a proxy for query confidence — and AI engines are building citation hierarchies around exactly this signal.

When someone searches a broad, open-ended query, they're implicitly asking for synthesis. They want a trusted intermediary to weigh sources and return a verdict. AI engines love this task. But when someone navigates directly to a domain, they've already done that weighting themselves. They've chosen a source. The AI never gets a chance to intercede.

This matters for content strategy because the queries that AI engines answer — the ones where they synthesize, cite, and recommend — are almost entirely the "search" side of that fork. Nobody types "perplexity.ai" into the omnibox and then gets an AI Overview. They type "best project management tools for remote teams" and get a synthesized answer that may or may not include your brand.

The Ahrefs study of 55.8 million AI Overviews found that brand mentions were the factor most strongly correlated with AI Overview inclusion, with a correlation of 0.664. The top three correlated factors were all brand-related. Not backlinks. Not keyword density. Not even E-E-A-T signals in the traditional sense. Brand mentions: the kind that accumulate when other sources reference you in the context of answering open-ended queries.
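For readers who want to see what a figure like 0.664 means mechanically, here is a minimal Python sketch of a correlation coefficient. It uses Pearson's r (Ahrefs may well have used a different method, such as a rank correlation), and the five brands and their numbers are invented for illustration, not drawn from the study. Values run from -1 to 1; a score around 0.66 indicates a fairly strong tendency for the two measures to rise together, but on its own it says nothing about whether mentions cause citations.

```python
import math


def pearson_r(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient for two equal-length samples."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two samples of equal length (at least 2 points)")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of co-deviations: positive when both values sit on the same side of their means.
    co_dev = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    # Spread of each sample around its own mean.
    ss_x = sum((x - mean_x) ** 2 for x in xs)
    ss_y = sum((y - mean_y) ** 2 for y in ys)
    if ss_x == 0 or ss_y == 0:
        raise ValueError("correlation is undefined when a sample has no variation")
    return co_dev / math.sqrt(ss_x * ss_y)


# Hypothetical brands: web mentions (thousands) vs. AI Overview appearances.
mentions = [2.0, 5.0, 9.0, 14.0, 20.0]
ai_overview_hits = [3.0, 4.0, 12.0, 10.0, 25.0]
print(f"r = {pearson_r(mentions, ai_overview_hits):.3f}")
```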

That finding reframes the whole content strategy question. If you want to appear in the synthesized answers that AI returns for "search"-type queries, you need to be the brand that other trusted sources mention when they answer those questions. That's a very different brief than ranking for a keyword.

How Does Source Selection Actually Differ Across AI Engines?

Not all AI search engines fish from the same pond. This is the piece most "AI search visibility" guides gloss over, and it's where I've seen teams waste real budget.

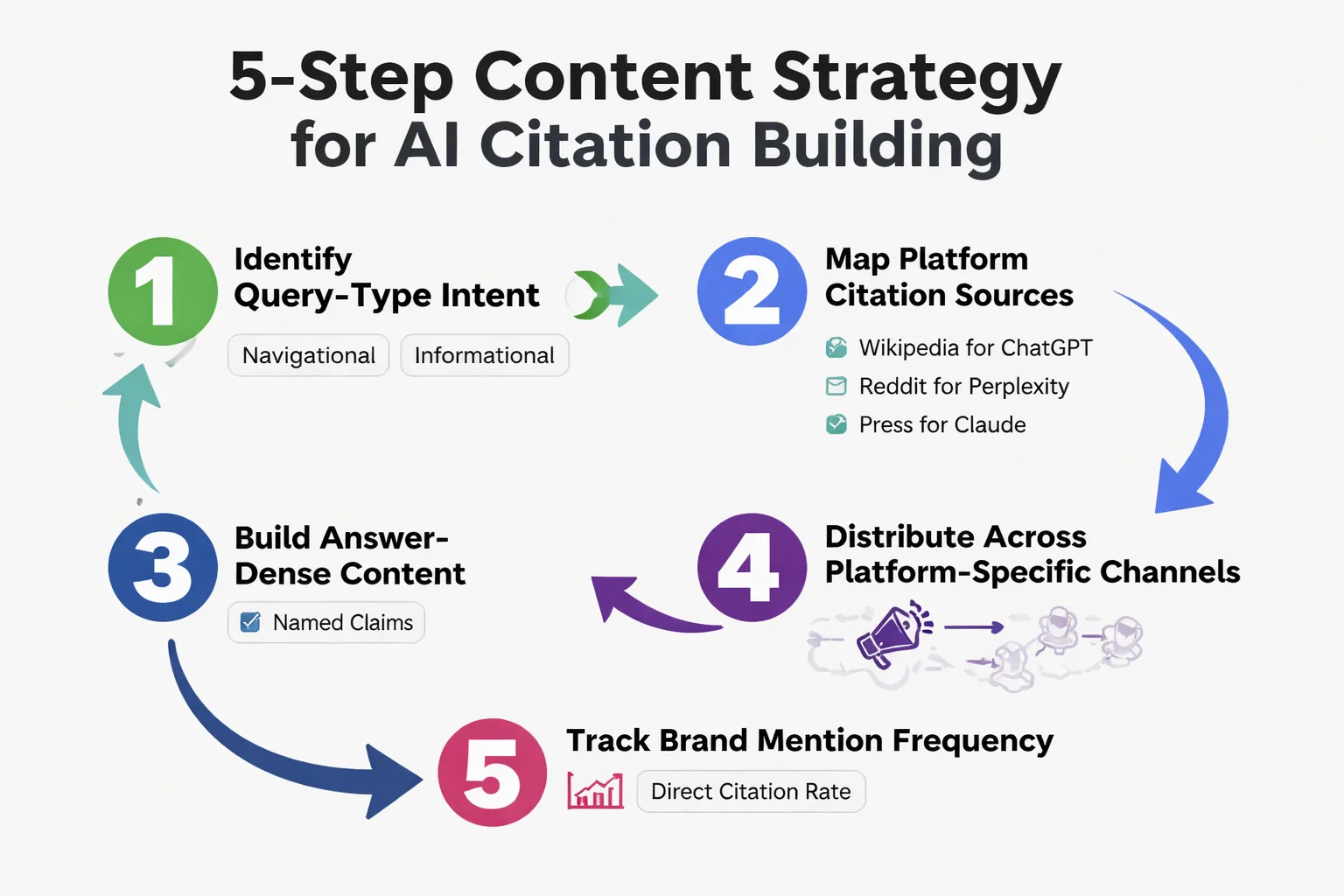

Search Atlas analyzed 18,377 matched queries and found that only 12% of AI citations overlap with Google's top 10 results. Perplexity showed 25-30% median domain overlap with Google — the closest of any engine tested. ChatGPT cited Wikipedia in 47.9% of its top citations. Perplexity cited Reddit in 46.7% of its citations.
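Search Atlas's exact methodology isn't described here, so the following is only a sketch of how an overlap figure like this could be computed, assuming that for each matched query you have the URLs an AI engine cited and Google's top 10 results. It counts how many cited items also appear in that top 10 and reports both a pooled share across all citations and a median per-query share, at URL or domain level. The function names and URLs are hypothetical placeholders.

```python
from statistics import median
from urllib.parse import urlparse


def match_key(url: str, by_domain: bool) -> str:
    """Matching key: the bare host (domain level) or the URL minus a trailing slash.

    Both forms are simplifications; real pipelines normalize URLs more carefully.
    """
    if by_domain:
        return urlparse(url).netloc.lower().removeprefix("www.")
    return url.lower().rstrip("/")


def overlap_counts(ai_citations: list[str], google_results: list[str],
                   by_domain: bool = False) -> tuple[int, int]:
    """(cited items that also appear in Google's top 10, total cited items) for one query."""
    top10 = {match_key(u, by_domain) for u in google_results[:10]}
    keys = [match_key(u, by_domain) for u in ai_citations]
    return sum(k in top10 for k in keys), len(keys)


def summarize(matched_queries, by_domain: bool = False) -> tuple[float, float]:
    """Pooled overlap across all citations, and the median per-query overlap."""
    counts = [overlap_counts(ai, google, by_domain) for ai, google in matched_queries]
    total = sum(t for _, t in counts)
    pooled = sum(m for m, _ in counts) / total if total else 0.0
    per_query = [m / t for m, t in counts if t]
    return pooled, (median(per_query) if per_query else 0.0)


# Two hypothetical matched queries: (AI-cited URLs, Google top-10 URLs).
matched_queries = [
    (["https://www.reddit.com/r/saas/comments/1", "https://site-a.example/guide"],
     ["https://site-a.example/guide/", "https://site-b.example/list"]),
    (["https://en.wikipedia.org/wiki/CRM", "https://site-c.example/post",
      "https://site-b.example/other"],
     ["https://site-b.example/list", "https://site-d.example/"]),
]
print("URL level:", summarize(matched_queries))
print("Domain level:", summarize(matched_queries, by_domain=True))
```

Pooled and per-query figures can diverge sharply when a few queries carry many citations, so it's worth knowing which one a published overlap number reports.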

Let that sink in. Nearly half of Perplexity's citations come from Reddit. Not from authoritative B2B blogs, not from long-form editorial pieces, not from the kinds of content most content teams are producing. From Reddit threads.

For B2B SaaS companies, this is a genuine crisis hiding in plain sight. According to Forrester, 94% of B2B buyers use AI search during vendor research. But if your content strategy is built entirely around Google rankings, you may be largely invisible in the AI engines those buyers are actually using. Strong Google rankings translate only weakly into ChatGPT or Perplexity citations; the overlap data is unambiguous on that point.

The practical implication: you need a platform-specific citation strategy. Press mentions and authoritative third-party coverage for ChatGPT visibility. Community presence and genuine Reddit engagement for Perplexity citations. Technical depth and structured documentation for Claude. These aren't interchangeable tactics — they're distinct distribution channels with different source preferences.

I'd also flag the mention-citation gap that Semrush's AI Visibility Index identifies as a core strategic distinction. Brands can win in AI search through two separate paths: direct citations of their own content, or mentions on third-party sources that AI engines then reference. These are genuinely different strategies, and most teams haven't decided which one they're pursuing. The index points to Bankrate and Patagonia as examples of brands executing each path well, but they're doing very different things to get there.

Why Google Rankings Don't Predict AI Citations

I keep running into teams that treat their Google rankings as a proxy for AI search visibility. It's an understandable assumption. It's also wrong.

The 12% overlap figure from Search Atlas is the clearest evidence I've seen. If AI engines were simply pulling from Google's top results, you'd expect 70-80% overlap. At 12%, you're looking at fundamentally different source selection logic. Google's ranking algorithm optimizes for relevance and authority signals built over years of link-based inference. AI engines are doing something different: they're optimizing for the sources that help them produce accurate, synthesized answers to open-ended queries.

Those aren't the same thing. A page can rank #1 for a keyword and never appear in an AI Overview if it's not being cited by other sources in the context of answering related questions. Conversely, a page with modest Google rankings can get heavy AI citation if it's the source that trusted communities and publications reference when discussing a topic.

The Ahrefs research on how to rank in AI Overviews reinforces this: the LLM consistently returns brands it sees mentioned frequently in the index results, not just brands with high domain authority. This is why answer engine optimization requires a different measurement framework than SEO. You're not optimizing for a ranking position. You're optimizing for citation frequency across the sources that AI engines trust.
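As a sketch of what measuring citation frequency can look like in practice, assume you've logged, for a fixed prompt set, which URLs each engine cited in its answer. The hypothetical `citation_report` function below computes, per engine, the share of logged answers that cite your domain and the domains that account for the largest share of all citations. The log format, brand domain, and data are invented for illustration; this isn't any particular tool's method.

```python
from collections import Counter, defaultdict
from urllib.parse import urlparse


def host(url: str) -> str:
    """Lowercased host with any leading 'www.' removed."""
    return urlparse(url).netloc.lower().removeprefix("www.")


def citation_report(answers, brand_domain: str, top_n: int = 3):
    """answers: iterable of (engine, prompt, cited_urls) tuples.

    Returns {engine: (brand_citation_rate, [(domain, share_of_all_citations), ...])}.
    """
    prompts = Counter()              # answers logged per engine
    brand_hits = Counter()           # answers citing the brand's domain or a subdomain
    domains = defaultdict(Counter)   # citation counts per engine, by domain
    for engine, _prompt, urls in answers:
        prompts[engine] += 1
        hosts = [host(u) for u in urls]
        domains[engine].update(hosts)
        if any(h == brand_domain or h.endswith("." + brand_domain) for h in hosts):
            brand_hits[engine] += 1
    report = {}
    for engine, n in prompts.items():
        total = sum(domains[engine].values())
        top = [(d, c / total) for d, c in domains[engine].most_common(top_n)] if total else []
        report[engine] = (brand_hits[engine] / n, top)
    return report


# Hypothetical log for a two-prompt set; "acme.example" stands in for your domain.
answers = [
    ("perplexity", "best crm for startups",
     ["https://www.reddit.com/r/startups/x", "https://acme.example/crm"]),
    ("perplexity", "crm pricing comparison",
     ["https://www.reddit.com/r/sales/y", "https://review.example/crm"]),
    ("chatgpt", "best crm for startups",
     ["https://en.wikipedia.org/wiki/CRM", "https://blog.acme.example/guide"]),
    ("chatgpt", "crm pricing comparison", ["https://en.wikipedia.org/wiki/Pricing"]),
]
for engine, (rate, top) in citation_report(answers, "acme.example").items():
    print(engine, f"brand cited in {rate:.0%} of answers;", top)
```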

For teams building out their AI search visibility strategy, I'd recommend looking at tools built specifically for this measurement gap — the Meev vs Profound comparison is a useful starting point for understanding what purpose-built AI visibility tracking actually looks like versus retrofitted SEO tools.


Does Citation Outreach Actually Move the Needle?

This is where I have to be honest about what the evidence does and doesn't support.

HubSpot claims a 300% increase in AI search citations through their publisher pitching strategy. That's a compelling number. But when I look at the broader research base, I find the evidence thin enough that I'd be cautious about treating it as a replicable playbook without more context on methodology and baseline.

What the University of Toronto's controlled experiments across multiple verticals actually established is that AI search citations track "earned media" authority signals — the kind that accumulate over years, not the kind you can manufacture through a 90-day outreach sprint. That finding is consistent with what the Ahrefs brand mention correlation data shows: the brands appearing in AI Overviews aren't there because they ran a citation campaign. They're there because they've built the kind of sustained, referenced presence that AI systems treat as authoritative.

The pattern I keep seeing in teams that struggle with this: they borrow the targeting logic from link-building campaigns and point it at AI citation goals. Same DA thresholds, same outreach templates, same 60-day timelines. Nothing moves. The problem isn't execution; it's that the framework was never validated for AI citation mechanics. There's no GEO-native targeting methodology that has been rigorously tested at scale yet. I'm aware of one Reddit practitioner account running a 6-month campaign and one academic preprint. That's the entire evidence base I've found.

For teams trying to get content cited in Perplexity, the more defensible strategy is building the conditions for citation rather than engineering individual citations. That means: genuine topical authority, structured content that AI engines can extract from cleanly, and distribution across the specific platforms each engine favors. It's slower. It's also what the data actually supports.

The GEO Tactics That Are Actually Working in 2026

One thing I haven't been able to find — and I've looked hard — is a retrospective audit of what GEO strategies were getting citations in 2023 and early 2024 that have since stopped working. Every guide I pull up is dated 2025 or later and written as a forward-looking playbook. The GEO/AEO industry is incentivized to publish optimization guides, not post-mortems. Nobody's tracking citation decay systematically. That's a real blind spot.

What I can say with more confidence, based on current data:

Answer-dense content structure matters. AI engines extract from content that answers questions directly and early. The same pattern that makes content extractable for Google AI Overviews makes it citable by Perplexity and ChatGPT. Structured headers, direct answers in the first paragraph of each section, and specific named claims with attributable sources all correlate with higher citation rates.
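To check your own drafts against this pattern, here is a rough Python heuristic that flags any section whose opening paragraph runs long, on the assumption that a direct answer should fit in a short first paragraph. That assumption and the 60-word cutoff are placeholders for illustration, not figures from the research cited here, and the parser assumes Markdown-style `#` headings.

```python
def first_paragraphs(text: str) -> dict[str, str]:
    """Map each '#'-style heading to the first paragraph beneath it.

    Simplification: any line starting with '#' counts as a heading, including
    lines inside code blocks.
    """
    result: dict[str, str] = {}
    heading = None
    para: list[str] = []
    for line in text.splitlines() + [""]:  # trailing "" flushes the last paragraph
        stripped = line.strip()
        if stripped.startswith("#"):
            # A new heading also ends an unfinished first paragraph of the previous section.
            if heading is not None and para and heading not in result:
                result[heading] = " ".join(para)
            heading, para = stripped.lstrip("#").strip(), []
        elif heading is not None and heading not in result:
            if stripped:
                para.append(stripped)
            elif para:  # first blank line after text closes the first paragraph
                result[heading] = " ".join(para)
    return result


def flag_buried_answers(text: str, max_words: int = 60) -> list[str]:
    """Headings whose first paragraph runs longer than max_words (an arbitrary threshold)."""
    return [h for h, p in first_paragraphs(text).items() if len(p.split()) > max_words]


draft = """# What is answer engine optimization?
AEO targets inclusion in synthesized AI answers rather than a ranking position.

Longer background follows here.

## Why does it matter?
""" + "Background context before the point. " * 15 + "\n"
print(flag_buried_answers(draft))
```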

Brand mention velocity matters more than link velocity. Given the Ahrefs correlation of 0.664 for brand mentions, the question isn't "how do I get more backlinks?" It's "how do I get more sources mentioning my brand in the context of answering questions in my topic area?" Those are different activities with different distribution channels.

Platform-specific content distribution is non-negotiable. If Perplexity is pulling 46.7% of its citations from Reddit and ChatGPT is pulling 47.9% of its top citations from Wikipedia, then a content strategy that only publishes to your own domain is leaving the majority of AI citation surface area untouched. This is the generative engine optimization insight that most SEO-first teams are slow to internalize.

For teams evaluating tools to track this, the AI search visibility measurement problem is real: most legacy SEO platforms weren't built to track citation frequency across AI engines, and retrofitting them produces noisy data.

The mention-citation gap identified in Semrush's AI Visibility Index is also worth building into your measurement framework. Tracking whether you're being cited directly versus mentioned on third-party sources that get cited is a different analytical question, and conflating the two gives you a misleading picture of where your AI search visibility actually comes from.
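One hypothetical way to operationalize that split, assuming you've logged each AI answer's text and cited URLs: count an answer as a direct citation when your own domain is among its sources, as a third-party mention when your brand is named but your domain isn't cited, and as absent otherwise. This is a simplified proxy, not Semrush's methodology; the brand name, domain, and answers are invented.

```python
import re
from collections import Counter
from urllib.parse import urlparse

CATEGORIES = ("direct_citation", "third_party_mention", "absent")


def classify(answer_text: str, cited_urls: list[str], brand_name: str, brand_domain: str) -> str:
    """Label one AI answer by how (or whether) the brand shows up in it."""
    hosts = {urlparse(u).netloc.lower().removeprefix("www.") for u in cited_urls}
    if any(h == brand_domain or h.endswith("." + brand_domain) for h in hosts):
        return "direct_citation"  # the brand's own site is among the sources
    # Whole-word, case-insensitive match on the brand name in the answer text.
    if re.search(rf"\b{re.escape(brand_name)}\b", answer_text, re.IGNORECASE):
        return "third_party_mention"  # named, but only via other sources
    return "absent"


def mention_citation_gap(answers: list[tuple[str, list[str]]],
                         brand_name: str, brand_domain: str) -> dict[str, float]:
    """Share of answers in each category."""
    if not answers:
        return {c: 0.0 for c in CATEGORIES}
    counts = Counter(classify(text, urls, brand_name, brand_domain) for text, urls in answers)
    return {c: counts[c] / len(answers) for c in CATEGORIES}


# Hypothetical answers: (answer text, cited URLs). "Acme" / acme.example stand in for your brand.
answers = [
    ("Acme and Globex are popular picks.", ["https://acme.example/pricing"]),
    ("Reviewers on Reddit often recommend Acme.", ["https://www.reddit.com/r/saas/z"]),
    ("Globex leads this category.", ["https://review.example/top-crms"]),
    ("Many teams start with a spreadsheet.", []),
]
print(mention_citation_gap(answers, "Acme", "acme.example"))
```

The name match is deliberately naive: a brand whose name starts or ends with punctuation, or doubles as a common word, would need a stricter matcher.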

The Omnibox Was Always a Mirror

That Chrome placeholder text — "Search Google or type a URL" — was never really about browser UX. It was a mirror held up to user intent. It asked: how confident are you? How much do you trust yourself to know the answer versus trusting an intermediary to find it?

AI search engines are increasingly that intermediary for open-ended queries. And the brands that will win in this environment aren't the ones with the best keyword strategies. They're the ones that show up consistently in the sources AI engines trust: the Reddit threads, the Wikipedia entries, the press coverage, the technical documentation that gets cited when someone asks an open-ended question in your category.

The omnibox merger of 2008 was the first signal. The AI search convergence of 2026 follows from it, and answer engine optimization is the discipline that convergence demands. Building for 'Search Google or type a URL' behavior means building for the full spectrum of user confidence, from the person who knows exactly where they're going to the person who needs a trusted intermediary to get them there. Both paths matter. But right now, the intermediary path is where the content strategy work is actually happening.

If you're tracking AI search visibility and trying to understand where your brand sits in that citation hierarchy, the Meev vs Jasper comparison is a useful framing for understanding what purpose-built citation tracking looks like versus general-purpose AI writing tools that have bolted on visibility features.

Frequently Asked Questions

What does 'Search Google or type a URL' actually mean in Chrome? It's the placeholder text in Chrome's omnibox, the unified address and search bar introduced in 2008. Typing a web address (like example.com) navigates directly to that site. Typing anything else runs a search with your default search engine, which is Google unless you've changed it. The distinction matters because navigational queries bypass AI synthesis entirely, while search queries are where AI Overviews, Perplexity, and ChatGPT intervene.

Why do AI search engines cite different sources than Google's top 10 results? Because they're optimizing for different things. Google ranks pages by relevance and link authority. AI engines select sources that help them synthesize accurate answers to open-ended queries. According to Search Atlas's analysis of 18,377 queries, only 12% of AI citations overlap with Google's top 10 results, so strong Google rankings are a weak predictor of AI citation.

How is answer engine optimization different from traditional SEO? SEO optimizes for ranking position in a list of results. Answer engine optimization (AEO) optimizes for inclusion in synthesized AI responses. The key metric shifts from keyword ranking to brand mention frequency and citation rate across AI engines. The Ahrefs study of 55.8 million AI Overviews found brand mentions (correlation 0.664) were the strongest predictor of AI inclusion — not backlinks or keyword optimization.

Should I focus on Perplexity or ChatGPT for AI citation strategy? Both, but with different tactics. ChatGPT cites Wikipedia in 47.9% of top citations, so Wikipedia presence and third-party editorial coverage matter most there. Perplexity cites Reddit in 46.7% of citations, making community engagement and forum presence more valuable for Perplexity visibility. Treating them as the same channel produces mediocre results on both.

Does a 90-day citation outreach campaign actually work? The evidence is thin. University of Toronto research found AI citation tracks earned media authority signals that accumulate over years, not sprints. The more defensible approach is building sustained topical authority, structured extractable content, and platform-specific distribution — the conditions for citation rather than engineered individual citations.

About the Author

Judy Zhou, Head of Content Strategy

Judy Zhou leads content strategy at Meev, where she oversees AI-driven content research and publishing for hundreds of brands. With a background in SEO and editorial operations, she focuses on building content systems that rank on Google, get cited by AI search engines, and drive measurable business results.
