By Judy Zhou, Head of Content Strategy

Key Takeaways

- Google Keyword Planner remains reliable for mapping informational demand and seasonal trends, but its volume data no longer predicts traffic accurately — AI Overviews now absorb significant click share on exactly the high-volume informational queries GKP highlights.

- Filter GKP results for question-format keywords (how, what, why, which) before exporting — these are the queries most likely to trigger AI Overviews and LLM citation opportunities.

- 44.2% of ChatGPT citations come from the first 30% of content (Kevin Indig, analysis of 1.2M AI answers) — meaning your content brief must require front-loaded, answer-dense openings to compete for citation placement.

- Cross-referencing GKP keywords against live Perplexity and ChatGPT responses reveals the mention-citation gap — terms where you have topical authority but no AI citation presence — which is your highest-priority GEO target.

When Google launched its Keyword Tool in 2008 — later rebranded as Google Keyword Planner in 2013 — it was designed for one purpose: helping advertisers bid smarter on paid search. For over a decade, SEOs quietly borrowed it for organic research, treating monthly search volume as a proxy for content priority. Then, in 2023, generative AI rewrote the rules of search visibility. Suddenly the same tool that helped marketers win auctions held an unexpected second life — as a map of the exact informational territory that large language models are trained to answer, and that answer engines compete to source.

Google Keyword Planner remains one of the most underrated tools for AI search keyword research. That isn't because it was designed for that purpose; it's because it maps the demand signals that LLMs are trained to satisfy. The pattern I keep seeing is this: teams either ignore GKP entirely in favor of shiny AI-native tools, or they use it exactly as they did in 2018 and wonder why their content doesn't surface in AI Overviews. Both approaches miss what GKP actually does well in 2026.

What Google Keyword Planner Still Gets Right in 2026

Let me be direct about something most tutorials won't say: GKP is not an AI search tool. It never will be. But it's an extraordinarily accurate map of human information demand — and human information demand is exactly what LLMs are trained to reflect.

Where GKP remains genuinely reliable: search volume trends over 12-month windows, seasonal demand patterns, and the relative scale of informational vs. transactional queries in a topic cluster. These signals haven't degraded. If GKP tells you that "how to file a small claims lawsuit" gets 10x the search volume of "small claims lawyer near me," that ratio reflects real population-level curiosity — and LLMs are trained on content that addresses that curiosity at scale.

Where GKP misleads you in 2026 is subtler. Monthly search volume no longer maps cleanly to traffic opportunity, because AI Overviews absorb a growing share of clicks on informational queries. A keyword showing 40,000 monthly searches might now deliver only a fraction of that to any individual publisher, because Google's own AI answer sits above the fold. The Semrush AI Overviews study found that AI Overviews appear for a substantial share of informational queries, which means the traditional traffic model built around high-volume keywords is broken for a meaningful subset of your target terms.

The competition column in GKP is also nearly useless for organic strategy. It reflects advertiser competition, not organic ranking difficulty. I've watched teams deprioritize genuinely authoritative content topics because GKP showed "high" competition — when that label meant CPCs were expensive, not that organic ranking was hard.

The right mental model for GKP in 2026: treat it as a demand census, not a traffic forecast. It tells you what questions people are asking. It does not tell you how much traffic answering those questions will send you. Those are now two different things.

Steps 1–3: Setting Up GKP for AI-Optimized Research

You need a Google Ads account to access Google Keyword Planner; there's no way around this. You don't need to run ads. Create an account at ads.google.com and skip the campaign setup when prompted. Google buries the skip option: look for "Switch to Expert Mode" during onboarding, then choose to create the account without a campaign. Once the account exists, open Keyword Planner from the Tools menu. If you accidentally complete the campaign setup, pause the campaign immediately; a paused campaign doesn't serve ads, so it can't accrue click charges.

Step 1: Choose "Discover New Keywords," not "Get Search Volume and Forecasts." The second option is for validating terms you already know. The first is where genuine research happens: it surfaces semantically related terms you wouldn't have thought to check, which is exactly where AI search intent gaps live. Enter 3–5 seed keywords representing your topic's core informational territory.

Step 2: Set your location and language deliberately. The default is often your account's billing country. If you're targeting a specific market, change this — GKP's volume data is location-sensitive in ways that matter. A keyword showing 1,000 monthly searches in the US might show 200 in the UK, and the AI Overview behavior can differ between markets too.

Step 3: Filter for informational intent before you export anything. This is the step most people skip, and it's the most important one for content strategy in the AI era. In the results panel, add a keyword-text filter that keeps terms containing question words: "how," "what," "why," "when," "which," "does," "can," "should." Informational queries, particularly question-format queries, are the ones most likely to trigger AI Overviews, and they're the queries where LLMs compete hardest to provide direct answers. If you're optimizing for Generative Engine Optimization (GEO) and citation visibility, these are your primary targets.

Transactional queries ("buy," "price," "near me," "hire") still matter for PPC and bottom-funnel content, but they rarely trigger the AI answer formats where citation opportunities exist. Filter them out for this phase of research.
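If the filter options in the interface feel limiting, the same split can be done on an exported keyword list. The sketch below is a minimal Python example, assuming you've already loaded the keyword column of your export into a list of strings. The question-word and transactional lists mirror the ones above and are illustrative, not exhaustive; this is plain word matching, not an intent classifier.

```python
import re

# Question words from Step 3 (illustrative; extend for your market).
QUESTION_WORDS = {"how", "what", "why", "when", "which", "does", "can", "should"}
# Transactional modifiers from the paragraph above (illustrative, not exhaustive).
TRANSACTIONAL_MODIFIERS = ("buy", "price", "near me", "hire")


def normalize(keyword: str) -> str:
    """Lowercase the keyword and rebuild it from word tokens separated by single spaces."""
    return " ".join(re.findall(r"[a-z0-9']+", keyword.lower()))


def is_question(keyword: str) -> bool:
    """True if any token is one of the question words."""
    return any(word in QUESTION_WORDS for word in normalize(keyword).split())


def is_transactional(keyword: str) -> bool:
    """True if a modifier appears as a whole word or phrase ('price' won't match 'priceless')."""
    text = normalize(keyword)
    return any(re.search(rf"\b{re.escape(m)}\b", text) for m in TRANSACTIONAL_MODIFIERS)


def informational_keywords(keywords: list[str]) -> list[str]:
    """Keep question-format keywords, dropping any that carry a transactional modifier."""
    return [k for k in keywords if is_question(k) and not is_transactional(k)]


keywords = [
    "how to file a small claims lawsuit",
    "small claims lawyer near me",
    "What is compound interest",
    "how much does it cost to hire a lawyer",
    "compound interest calculator",
]
print(informational_keywords(keywords))
# ['how to file a small claims lawsuit', 'What is compound interest']
```

Word matching misfires on edge cases ("can opener" reads as a question), so skim the output before moving on.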

Steps 4–6: Extracting the Keywords That Matter for GEO

Once you have a filtered list of informational keywords, the work splits into three phases that most practitioners collapse into one — and that's where precision gets lost.

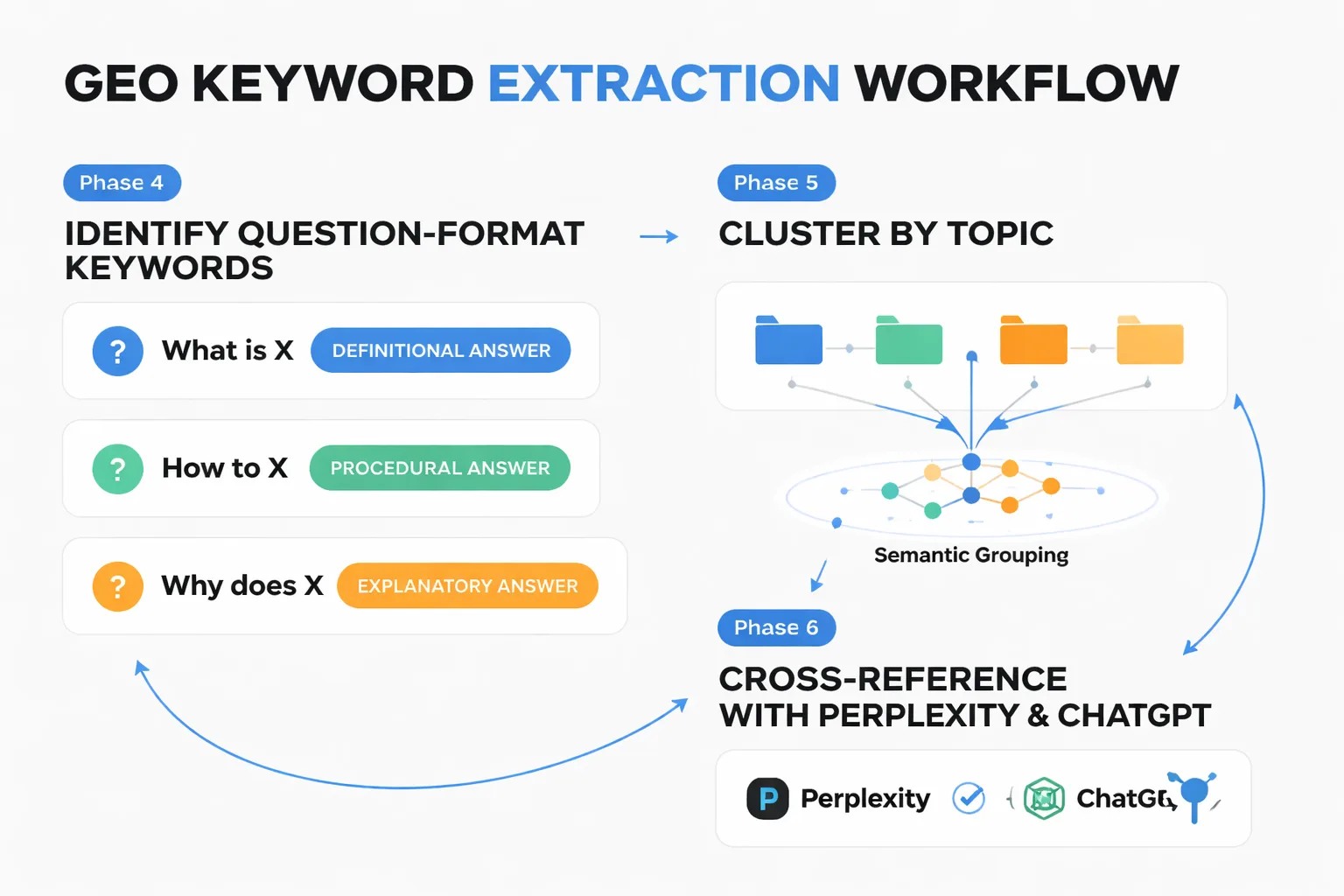

Step 4: Identify question-format keywords and tag them by answer type. Not all question keywords are equal for AI citation purposes. "What is X" queries tend to produce definitional AI answers that cite authoritative, encyclopedic sources. "How to X" queries produce procedural answers that cite step-by-step guides. "Why does X" queries produce explanatory answers that cite analytical content. Export your filtered keyword list and add a column tagging each keyword by answer type — this determines what content format will win citations, not just rankings.
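Tagging by answer type can be bootstrapped with simple prefix rules before a manual pass. This is a hypothetical Python sketch: the three prefixes come from the patterns above, the `Keyword` column name is an assumption about your export, and anything that doesn't match is left as `untagged` for a human to classify.

```python
# Prefix rules from Step 4; anything else is left for manual review.
ANSWER_TYPE_PREFIXES = [
    ("what is", "definitional"),  # definitional answers, encyclopedic sources
    ("how to", "procedural"),     # step-by-step answers, guide-style sources
    ("why", "explanatory"),       # explanatory answers, analytical sources
]


def answer_type(keyword: str) -> str:
    """Return the answer type implied by the keyword's opening words."""
    text = " ".join(keyword.lower().split())
    for prefix, label in ANSWER_TYPE_PREFIXES:
        if text == prefix or text.startswith(prefix + " "):
            return label
    return "untagged"


def add_answer_type_column(rows: list[dict]) -> list[dict]:
    """Copy each export row and add an 'Answer type' column.

    Assumes each row has a 'Keyword' key (hypothetical column name).
    """
    return [{**row, "Answer type": answer_type(row["Keyword"])} for row in rows]


rows = [{"Keyword": "What is compound interest"}, {"Keyword": "which index fund is best"}]
for row in add_answer_type_column(rows):
    print(row["Keyword"], "->", row["Answer type"])
```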

Step 5: Cluster by topic, not by volume. The instinct is to sort by monthly search volume and work down the list. Resist it. For AI search visibility, topical authority matters more than individual keyword volume — LLMs are more likely to cite sources that demonstrate deep coverage of a subject than sources that have one high-traffic page. Group your keywords into clusters of 8–15 semantically related terms. Each cluster becomes a content topic territory, not a single article target. This is the same logic behind building topical authority for AI search, and GKP gives you the raw material to map it.
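Proper semantic clustering usually relies on embeddings, but a rough first pass can group keywords that share content words. The sketch below swaps in a greedy lexical approach (Jaccard similarity on word sets) as a stand-in. The stopword list and the 0.3 threshold are arbitrary assumptions to tune, and the 8–15 size range from above is only flagged, not enforced.

```python
# Small, illustrative stopword list: question words and function words carry no topic.
STOPWORDS = {
    "how", "what", "why", "when", "which", "does", "do", "can", "should",
    "is", "are", "a", "an", "the", "to", "of", "for", "in", "on", "my", "i",
}


def content_words(keyword: str) -> set[str]:
    return {w for w in keyword.lower().split() if w not in STOPWORDS}


def jaccard(a: set[str], b: set[str]) -> float:
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cluster_keywords(keywords: list[str], threshold: float = 0.3) -> list[list[str]]:
    """Greedily add each keyword to the most similar existing cluster, or start a new one."""
    clusters: list[dict] = []
    for kw in keywords:
        words = content_words(kw)
        best, best_score = None, 0.0
        for cluster in clusters:
            score = jaccard(words, cluster["words"])
            if score > best_score:
                best, best_score = cluster, score
        if best is not None and best_score >= threshold:
            best["members"].append(kw)
            best["words"] |= words  # the cluster's vocabulary grows as members join
        else:
            clusters.append({"words": set(words), "members": [kw]})
    return [c["members"] for c in clusters]


def outside_size_range(clusters: list[list[str]], low: int = 8, high: int = 15) -> list[list[str]]:
    """Clusters smaller or larger than the 8-15 target, for manual merging or splitting."""
    return [c for c in clusters if not low <= len(c) <= high]


keywords = [
    "what is compound interest",
    "how does compound interest work",
    "compound interest formula",
    "how to file small claims",
    "small claims filing fee",
]
clusters = cluster_keywords(keywords)
print(clusters)
print(len(outside_size_range(clusters)), "clusters need merging or splitting")
```

Because the grouping is greedy, input order affects which cluster a borderline keyword lands in, so treat the output as a draft to review by hand.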

Step 6: Cross-reference with Perplexity and ChatGPT to validate AI answer triggers. This is the step that transforms GKP from a traditional SEO tool into an AI search research instrument. Take your top 20–30 question-format keywords and run them directly in Perplexity and ChatGPT. You're checking three things: Does this query produce an AI-generated answer (vs. a list of links)? What sources does the AI cite? Is there a "mention-citation gap" — meaning your brand or content exists in the topic space but isn't being pulled into the answer?
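Because these checks are manual, it helps to record each one in a consistent shape so gaps can be pulled out mechanically. In this hypothetical Python record, `covers_topic` means you already publish content on the query's topic, and a mention-citation gap is any query where an engine produced an AI answer, you cover the topic, and your domain isn't among the cited sources. The field names and the `www.` normalization are assumptions, not part of any tool's export.

```python
from dataclasses import dataclass, field


@dataclass
class AICheck:
    """One manual check of a keyword in one AI engine (hypothetical record format)."""
    keyword: str
    engine: str                 # e.g. "perplexity" or "chatgpt"
    ai_answer: bool             # did the engine return a generated answer rather than links?
    cited_domains: list[str] = field(default_factory=list)
    covers_topic: bool = False  # do you already publish content on this topic?


def normalize_domain(domain: str) -> str:
    domain = domain.strip().lower()
    return domain[4:] if domain.startswith("www.") else domain


def mention_citation_gaps(checks: list[AICheck], your_domain: str) -> list[tuple[str, str]]:
    """(keyword, engine) pairs where an AI answer exists, you cover the topic, and you aren't cited."""
    you = normalize_domain(your_domain)
    gaps = []
    for check in checks:
        cited = {normalize_domain(d) for d in check.cited_domains}
        if check.ai_answer and check.covers_topic and you not in cited:
            gaps.append((check.keyword, check.engine))
    return gaps


checks = [
    AICheck("what is compound interest", "perplexity", True, ["investopedia.com", "www.example.com"], True),
    AICheck("how to file small claims", "chatgpt", True, ["uscourts.gov"], True),
    AICheck("compound interest formula", "perplexity", False, [], True),
    AICheck("why does inflation happen", "perplexity", True, ["federalreserve.gov"], False),
]
print(mention_citation_gaps(checks, "example.com"))
# [('how to file small claims', 'chatgpt')]
```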

According to a Search Atlas analysis of 18,377 matched queries, Perplexity showed only 25–30% median domain overlap with Google results, which means ranking well on Google does not guarantee AI citation, and vice versa. That gap is where GKP-driven content strategy gets interesting: the terms where you rank on Google but don't appear in Perplexity answers are your highest-priority GEO targets.
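Once you've logged the domains ranking on Google and the domains Perplexity cites for each query, overlap and priority targets reduce to set operations. This sketch measures overlap as Jaccard similarity of the two domain sets. That metric is an assumption; Search Atlas may define overlap differently, so don't compare your numbers directly with theirs.

```python
from statistics import median

# keyword -> (domains ranking on Google, domains cited by Perplexity); hypothetical sample data
Results = dict[str, tuple[list[str], list[str]]]


def domain_overlap(google: list[str], perplexity: list[str]) -> float:
    """Jaccard overlap of two domain lists (an assumed metric, not necessarily Search Atlas's)."""
    g, p = set(google), set(perplexity)
    union = g | p
    return len(g & p) / len(union) if union else 0.0


def median_overlap(results: Results) -> float:
    """Median per-query overlap across all logged queries."""
    return median(domain_overlap(g, p) for g, p in results.values())


def geo_priority_targets(results: Results, your_domain: str) -> list[str]:
    """Keywords where you rank on Google but Perplexity doesn't cite you."""
    return sorted(kw for kw, (g, p) in results.items() if your_domain in g and your_domain not in p)


results: Results = {
    "what is compound interest": (["investopedia.com", "example.com", "nerdwallet.com"],
                                  ["investopedia.com", "bankrate.com"]),
    "compound interest formula": (["example.com", "calculator.net"], ["example.com", "calculator.net"]),
    "how to file small claims": (["uscourts.gov", "nolo.com"], ["nolo.com", "example.com"]),
}
print(round(median_overlap(results), 3))
print(geo_priority_targets(results, "example.com"))
```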

The pattern I keep seeing when I run this cross-reference: high-volume GKP keywords often have saturated AI answers from established publishers, while mid-volume question keywords (500–2,000 monthly searches) frequently produce AI answers that cite only 2–3 sources — meaning there's a real opening for a well-structured, authoritative piece to break into the citation set.
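To surface those openings from your logged checks, a filter on volume band and cited-source count is enough. This is a hypothetical sketch in which each row is `(keyword, avg_monthly_searches, cited_source_count)`, with a count of 0 meaning no AI answer appeared. The 500–2,000 band and the three-source ceiling come from the observation above and are starting points, not thresholds from any study.

```python
def citation_openings(
    rows: list[tuple[str, int, int]],
    min_volume: int = 500,
    max_volume: int = 2000,
    max_sources: int = 3,
) -> list[str]:
    """Mid-volume keywords whose AI answers cite only a few sources.

    Each row is (keyword, avg_monthly_searches, cited_source_count); rows with a
    count of 0 (no AI answer) are excluded.
    """
    picks = [
        (kw, volume, sources)
        for kw, volume, sources in rows
        if min_volume <= volume <= max_volume and 0 < sources <= max_sources
    ]
    # Fewest cited sources first, then higher volume first.
    picks.sort(key=lambda row: (row[2], -row[1]))
    return [kw for kw, _, _ in picks]


rows = [
    ("what is compound interest", 40000, 8),
    ("how does compound interest work", 1300, 3),
    ("why does compound interest matter", 700, 2),
    ("compound interest for kids", 300, 1),
    ("how to calculate apy", 900, 6),
]
print(citation_openings(rows))
```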

Step 7: Turning GKP Data Into a Content Brief That Wins Citations

Here's the contrarian take most keyword research tutorials won't give you: the content brief is where keyword research for AI search diverges most sharply from traditional SEO, and volume data is the least important input at this stage.

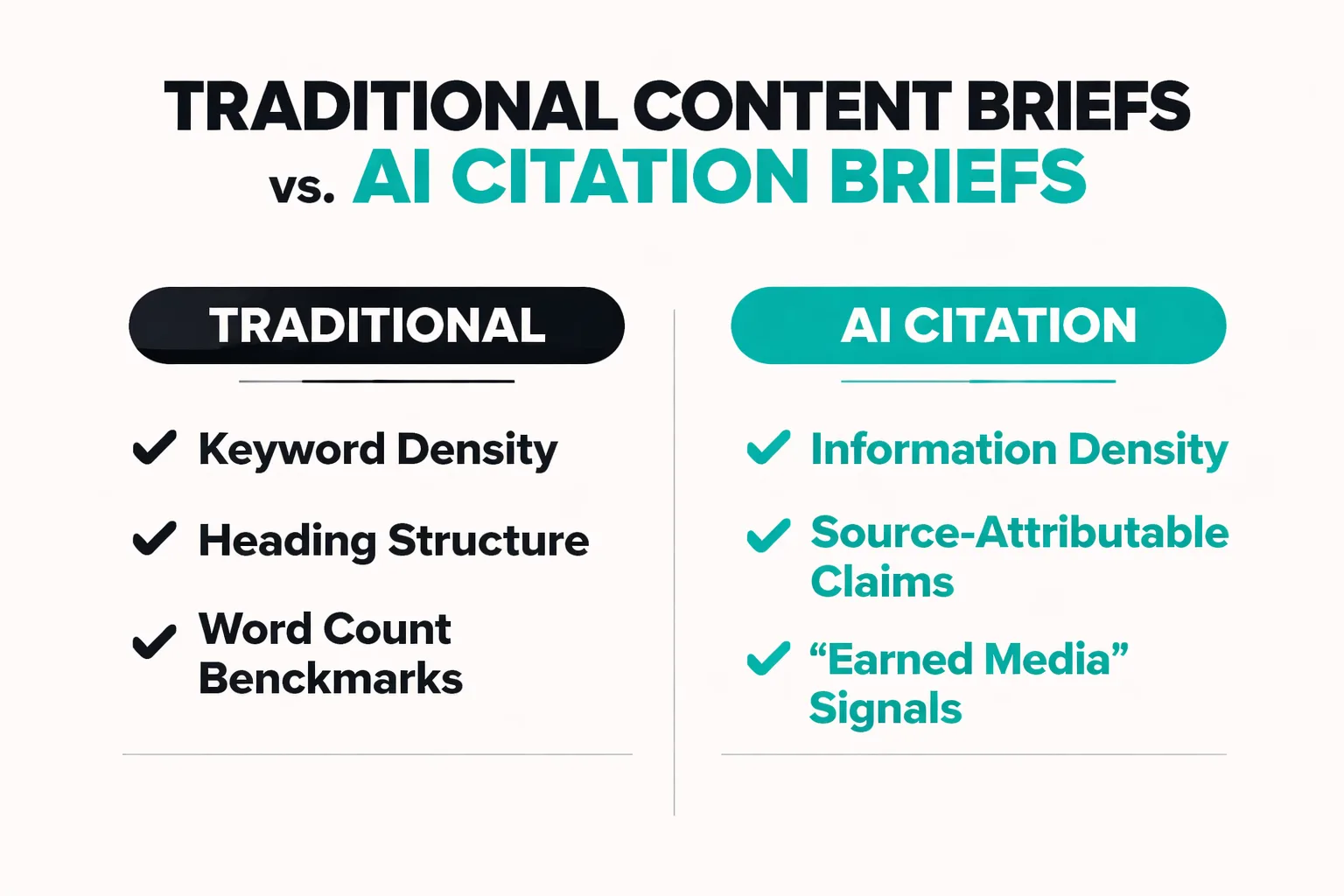

A brief built for AI citation has a different architecture than a brief built for Google rankings. Traditional briefs optimize for keyword density, heading structure, and word count benchmarks. AI citation briefs optimize for information density, source-attributable claims, and what researchers in a paper posted to arXiv call "earned media" signals: the third-party authoritative references that generative engines systematically favor over brand-owned content.

Here's how I structure a brief using GKP data plus AI search intent signals:

1. Lead with the cluster's primary question keyword as the brief's core thesis question. Not as a keyword to include — as the actual question the content must answer definitively. If GKP surfaces "how does compound interest work" as your highest-volume informational keyword, the brief's first requirement is: "This piece must answer this question in the first 200 words, completely, in a way that can be extracted and cited."

This connects directly to what Kevin Indig's analysis of 1.2 million AI answers and 18,012 verified citations found: 44.2% of ChatGPT citations come from the first 30% of content. That number stopped me cold when I first saw it. It means the answer-dense opening isn't just good writing practice — it's the primary citation surface. If your key claim is buried in paragraph 12, ChatGPT will likely cite someone else who said it in paragraph 2.

2. Map secondary keywords to specific sections, not to the document overall. Each GKP secondary keyword in your cluster should anchor a distinct section of the content. This creates the topical depth that signals authority to LLMs — not keyword repetition, but genuine multi-angle coverage of the topic territory.

3. Build the "information gain" angle explicitly into the brief. This is the piece most teams skip. Information gain means your content must contain something that isn't already in the top 5 existing sources on the topic — a specific data point, a named case study, a practitioner perspective, a synthesis that doesn't exist elsewhere. LLMs are trained to avoid redundancy; they cite sources that add something. If your brief doesn't specify what's new or different about this content, you're producing content that competes with existing citations rather than earning new ones.

For teams scaling this process across dozens of briefs simultaneously, tools that combine AI content generation with citation-aware structuring — like Meev's quality-gated publishing workflow — handle the brief-to-draft pipeline in a way that preserves these GEO signals without requiring manual enforcement on every piece.

4. Include a "citation target" field in every brief. This is a named external source (a study, a primary document, a recognized authority) that the content should reference and potentially earn a reciprocal mention from. It applies the logic of pitching publishers for AI citations at the brief level. If you're writing about compound interest and you cite a Federal Reserve educational resource accurately, you're creating the conditions for that source's domain authority to reflect on yours in AI answer contexts.

5. Specify front-loading requirements explicitly. Given the 44.2% first-30% citation finding, every brief I write now includes a hard requirement: the primary claim, the key statistic, and the direct answer to the core question must all appear before the 300-word mark. This isn't just for AI citation — it's also what makes content perform in AI Overviews, where Google's system extracts from early, dense, well-structured passages.
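The five brief requirements above can be captured as a structured record with a couple of automated checks. This Python sketch is hypothetical: the `Brief` fields mirror the list, the 300-word limit comes from requirement 5, and words are counted as regex `\w+` tokens, so counts will differ slightly from a word processor's. `first_mention_fraction` reports how far into a draft a phrase first appears, which you can compare against the first-30% finding.

```python
import re
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Brief:
    """Hypothetical brief record mirroring requirements 1-5."""
    thesis_question: str                                           # 1. core question to answer
    section_keywords: dict[str, str] = field(default_factory=dict)  # 2. secondary keyword -> section
    information_gain: str = ""                                      # 3. what's new vs. the top sources
    citation_target: str = ""                                       # 4. named external source
    front_load_terms: list[str] = field(default_factory=list)       # 5. must appear early
    front_load_limit: int = 300                                     # 5. word limit for those terms


def _words(text: str) -> list[str]:
    return re.findall(r"\w+", text.lower())


def _first_index(words: list[str], phrase: list[str]) -> Optional[int]:
    """Index of the first occurrence of a word sequence, or None."""
    n = len(phrase)
    for i in range(len(words) - n + 1):
        if words[i:i + n] == phrase:
            return i
    return None


def brief_problems(brief: Brief) -> list[str]:
    """List the brief fields that are still empty."""
    problems = []
    if not brief.section_keywords:
        problems.append("no secondary keywords mapped to sections")
    if not brief.information_gain.strip():
        problems.append("missing information-gain angle")
    if not brief.citation_target.strip():
        problems.append("missing citation target")
    if not brief.front_load_terms:
        problems.append("no front-loading terms specified")
    return problems


def late_terms(brief: Brief, draft: str) -> list[str]:
    """Front-load terms that are missing from the draft or first appear after the word limit."""
    words = _words(draft)
    late = []
    for term in brief.front_load_terms:
        position = _first_index(words, _words(term))
        if position is None or position >= brief.front_load_limit:
            late.append(term)
    return late


def first_mention_fraction(draft: str, term: str) -> Optional[float]:
    """How far into the draft (0.0 = start) the term first appears, or None if absent."""
    words = _words(draft)
    if not words:
        return None
    position = _first_index(words, _words(term))
    return None if position is None else position / len(words)


brief = Brief(
    thesis_question="how does compound interest work",
    section_keywords={"compound interest formula": "The formula"},
    citation_target="Federal Reserve educational resource",
    front_load_terms=["compound interest", "annual percentage yield"],
)
draft = "Compound interest means you earn interest on interest. " + "filler " * 400 + "annual percentage yield explained."
print(brief_problems(brief))   # ['missing information-gain angle']
print(late_terms(brief, draft))  # ['annual percentage yield']
```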

The AI Content Penalty Question — Answered Directly

I want to address something that comes up every time I discuss AI-optimized content workflows: the fear that using AI in the content production process triggers Google penalties.

The evidence doesn't support that fear — but it does support a more specific concern. An Ahrefs study of 600,000 top-ranking pages found AI content present in 86.5% of them, with a correlation between AI content percentage and ranking position of essentially zero (0.011). That's not a rounding error. That's a non-relationship. The sites that lost 60–90% of organic traffic in Google's March 2024 core update weren't penalized for using AI — they were penalized for publishing at volume with zero editorial oversight, zero original perspective, and zero information gain.

The distinction matters enormously for how you use GKP data. If you use keyword research to identify genuine informational gaps and produce content that addresses those gaps with real specificity, AI assistance in drafting is not your risk. If you use keyword research to generate templated content at scale with no editorial layer — treating GKP as a content printer queue rather than a research instrument — that's the scaled content abuse pattern Google's systems are built to detect.

For teams comparing AI content platforms to understand where editorial quality gates exist in different tools, the Meev vs. Sight AI comparison breaks down how quality-gated workflows differ from pure-volume approaches. The difference isn't which AI model you use — it's whether there's a human or system-level quality check before content publishes.

Why GKP + AI Search Validation Beats Either Alone

The honest answer to "should I use Google Keyword Planner for AI search research" is: yes, but not alone, and not the way you used it in 2020.

GKP gives you demand data at a scale and accuracy that no AI-native tool has replicated. It tells you what people are asking, at what volume, with what seasonal patterns. What it cannot tell you is whether those questions are being answered by AI engines already, which sources are being cited, and what information gain angle would make a new piece worth citing.

That's why the workflow I've described — GKP for demand mapping, Perplexity and ChatGPT for AI answer validation, and a citation-aware brief structure for content production — produces better results than either traditional keyword research or AI search optimization in isolation. The Search Atlas research showing only 25–30% domain overlap between Perplexity and Google results makes this concrete: you need both lenses to see the full picture.

For teams evaluating which platforms support this kind of integrated research-to-publishing workflow, the Meev vs. Profound comparison covers how AI search visibility tracking integrates with content production — which is increasingly where the real leverage lives.

The teams I've seen execute this well share one characteristic: they treat keyword research as the beginning of an AI search strategy, not the end of it. GKP tells you where the demand is. The cross-referencing tells you where the citation gaps are. The brief structure determines whether your content earns a place in the answer — or just adds to the noise.


Frequently Asked Questions

Do I need to run Google Ads to use Google Keyword Planner? You need a Google Ads account, but you don't need to spend money on ads. Create an account, select "Switch to Expert Mode" during setup to skip the campaign creation flow, and access Keyword Planner through the Tools menu. Your account can remain inactive with no budget — GKP access doesn't require active campaigns or billing.

How accurate is GKP search volume data for informational keywords? Accounts without active ad spend typically see search volume in ranges (100–1K, 1K–10K) rather than exact numbers, which limits precision. For trend direction and relative volume comparison between keywords, it's reliable. For exact traffic forecasting, it's not, and in 2026 traffic forecasting is further complicated by AI Overviews absorbing click share on informational queries. Use GKP for demand validation, not traffic prediction.
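If you work with range values, converting them into numeric bounds makes them sortable for relative comparison. The sketch below assumes range strings look like `1K – 10K` or `100-1K` (en dash or hyphen, optional `K`/`M` suffix) and that exact figures may contain commas; check these assumptions against your own export.

```python
import re
from decimal import Decimal

# One number with an optional K/M suffix, e.g. "100", "1K", "1.5M".
_NUMBER = r"(\d+(?:\.\d+)?)\s*([KkMm]?)"
_SCALE = {"": 1, "k": 1_000, "m": 1_000_000}


def _to_int(number: str, suffix: str) -> int:
    # Decimal avoids float rounding (e.g. "2.3K" becomes exactly 2300).
    return int(Decimal(number) * _SCALE[suffix.lower()])


def parse_volume(text: str) -> tuple[int, int]:
    """Parse '1K – 10K', '100-1K', or '2,400' into a (low, high) tuple."""
    cleaned = text.replace(",", "").strip()
    match = re.fullmatch(_NUMBER + r"\s*[-\u2013]\s*" + _NUMBER, cleaned)
    if match:
        return _to_int(match.group(1), match.group(2)), _to_int(match.group(3), match.group(4))
    match = re.fullmatch(_NUMBER, cleaned)
    if match:
        value = _to_int(match.group(1), match.group(2))
        return value, value  # an exact figure is its own lower and upper bound
    raise ValueError(f"unrecognized volume: {text!r}")


ranges = ["100 – 1K", "1K – 10K", "10 – 100"]
print(sorted(ranges, key=parse_volume, reverse=True))
```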

Which types of keywords are most likely to trigger AI Overviews? Informational queries — particularly question-format keywords beginning with "how," "what," "why," and "which" — trigger AI Overviews at the highest rate. Navigational queries (brand names, specific sites) and transactional queries ("buy," "price," "near me") trigger them far less frequently. When building a GEO-focused content strategy, prioritize the informational cluster from your GKP research.

Can GKP data tell me which keywords ChatGPT or Perplexity will cite content for? No — and this is a critical gap to understand. GKP measures search demand on Google, not citation likelihood in AI engines. The Search Atlas analysis of 18,377 matched queries found only 25–30% domain overlap between Perplexity and Google results, meaning Google ranking and AI citation are partially independent outcomes. Use GKP to identify which topics to pursue, then validate AI answer behavior manually in Perplexity and ChatGPT.

How often should I refresh my GKP keyword research for an AI search strategy? Quarterly is the minimum for topic clusters in fast-moving categories. GKP's 12-month trend data is useful for spotting demand shifts — a keyword that was stable for two years and is now trending upward often signals an emerging AI answer gap, because existing content was written before the topic accelerated. Set a calendar reminder to re-run your seed keyword lists every 90 days and compare the trending column against your previous export.
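Comparing two exports can be scripted once each is loaded as a mapping from keyword to average monthly searches. This sketch is hypothetical: it assumes exact figures (range values need converting to numbers first), the 30% growth threshold is arbitrary, and keywords absent from the earlier export are listed separately as new, since they have no baseline to compare against.

```python
def compare_exports(
    previous: dict[str, int],
    current: dict[str, int],
    min_growth: float = 0.3,
) -> tuple[list[str], list[tuple[str, float]]]:
    """Return (new keywords, rising keywords with growth rate) between two exports.

    Both arguments map keyword -> average monthly searches. min_growth=0.3 means +30%.
    """
    new = sorted(kw for kw in current if kw not in previous)
    rising = []
    for kw, now in current.items():
        before = previous.get(kw)
        if before:  # skips keywords with no baseline or a zero baseline
            growth = (now - before) / before
            if growth >= min_growth:
                rising.append((kw, growth))
    rising.sort(key=lambda item: item[1], reverse=True)  # fastest-growing first
    return new, rising


previous = {"what is compound interest": 1000, "how to file small claims": 500, "compound interest formula": 800}
current = {"what is compound interest": 1100, "how to file small claims": 900,
           "compound interest formula": 400, "compound interest ai": 200}
new, rising = compare_exports(previous, current)
print("new:", new)
print("rising:", rising)
```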

About the Author

Judy Zhou, Head of Content Strategy

Judy Zhou leads content strategy at Meev, where she oversees AI-driven content research and publishing for hundreds of brands. With a background in SEO and editorial operations, she focuses on building content systems that rank on Google, get cited by AI search engines, and drive measurable business results.
