By Judy Zhou, Head of Content Strategy

Key Takeaways

- 96% of AI Overview citations come from sources with strong E-E-A-T signals (Wellows analysis of 2,400 citations) — meaning citation is a portfolio-level trust judgment, not a page-level optimization win.

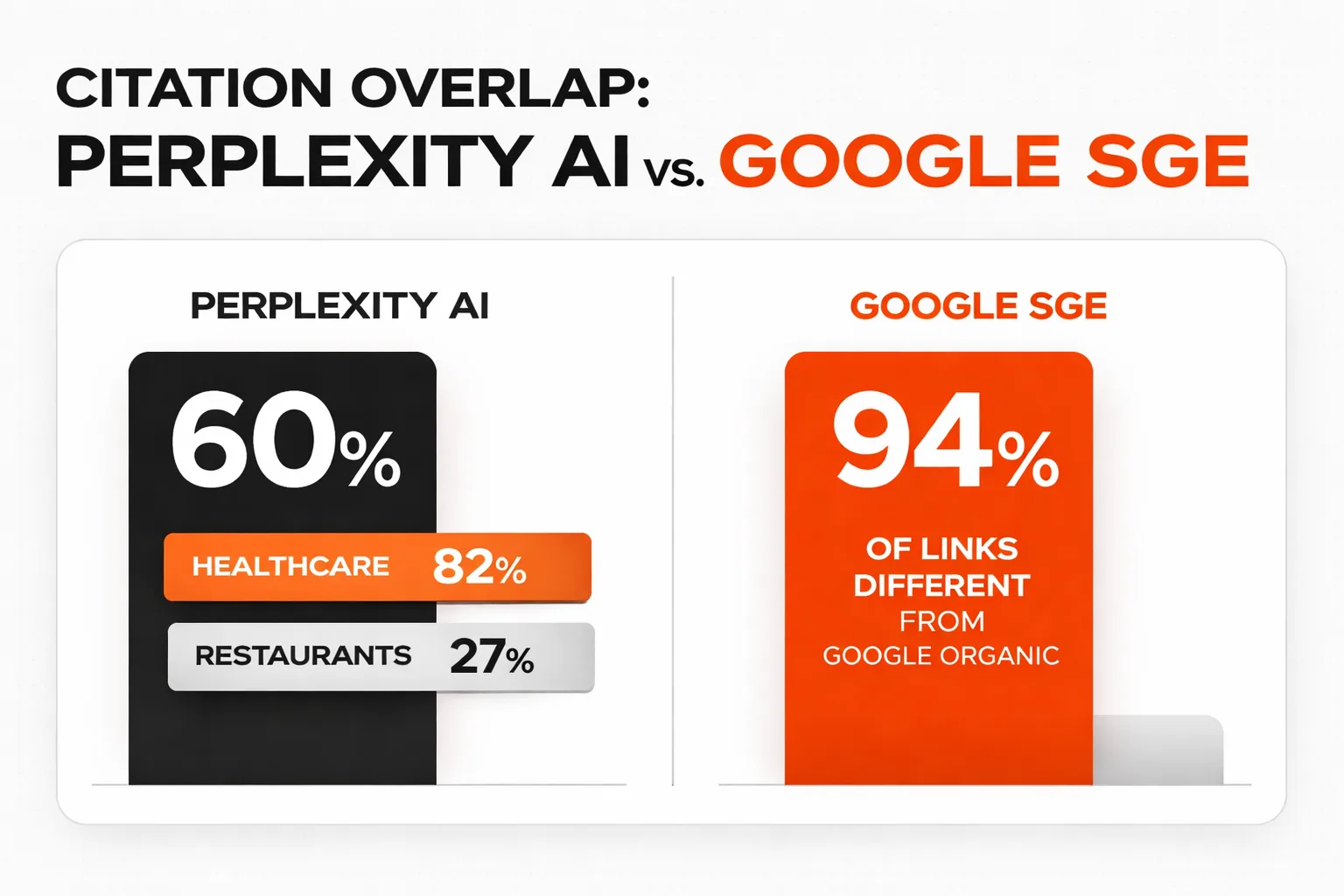

- 60% of Perplexity's citations overlap with Google's top 10 organic results, while Google AI Overviews deliberately diversifies (94% of its links differ from Google's own rankings), so your strategy must be platform-aware.

- Brands cited in AI Overviews earn 35% more organic clicks and AI-sourced traffic converts at 14.2% vs. 2.8% for traditional Google organic, making citation rate a higher-value KPI than most teams currently track.

- The GPRM (Generative Portfolio Quality & Relevance Model) framework has four dimensions: Depth Coherence, Entity Consistency, Relevance Freshness, and Cross-Platform Presence. All four must be built simultaneously; optimizing one in isolation produces inconsistent citation results.

AI search doesn't cite the best article — it cites the most trusted portfolio.

That sentence reframes everything. If you've been optimizing individual pages for AI search citations and wondering why results are inconsistent, you're solving the wrong problem. AI search citation isn't a page-level event. It's a portfolio-level judgment — and understanding the mechanics behind that judgment is the entire point of what I'm calling the GPRM: the Generative Portfolio Quality & Relevance Model.

Wellows' analysis of 2,400 AI Overview citations found that 96% came from sources with strong E-E-A-T signals. A Seer Interactive study confirmed that brands cited in AI Overviews earn 35% more organic clicks than those not cited, even as overall CTR has dropped 61% for queries where AI Overviews appear. BrightEdge's survey of 750+ marketers found 68% are already making strategic adjustments for AI search — but 57% admit they're still figuring out what actually works. Presence AI's study of 1,200+ content pages across ChatGPT, Claude, Perplexity, and Google AI Overviews revealed that citation rates vary dramatically by content type, not just content quality.

Here's the frame I use: AI citation is a trust inference problem. The model doesn't read your article and decide it's good. It infers trustworthiness from a cluster of signals that collectively describe your entity — your brand, your authors, your content history, your cross-platform presence. GPRM is my attempt to name and structure that cluster so content teams can actually build toward it.

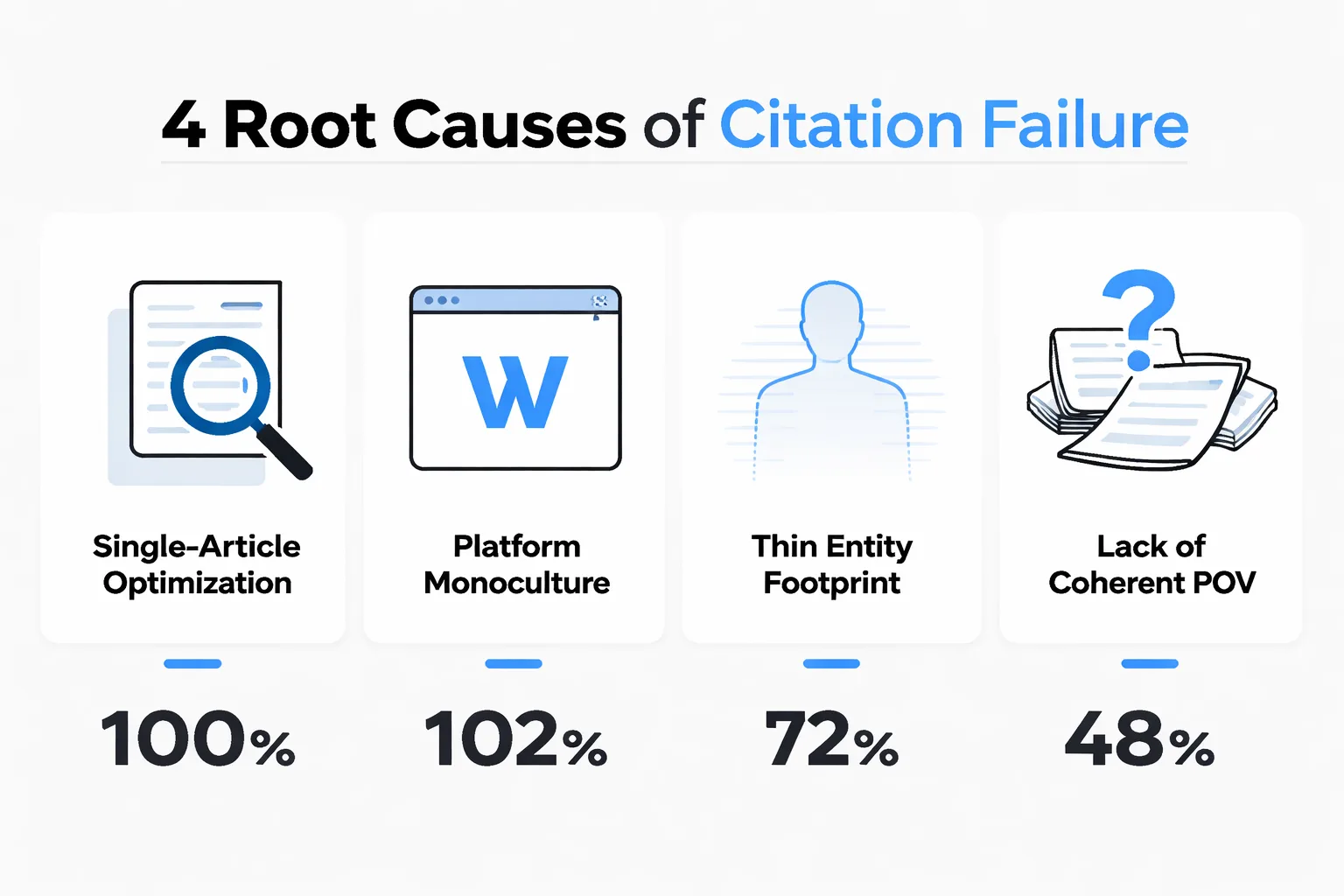

The Four Root Causes of Citation Failure

Before I explain the model, I want to name what's actually going wrong for most content programs that aren't getting cited. I've audited enough of these to see the pattern clearly.

First: single-article optimization. Teams treat each piece of content as a standalone citation candidate, optimizing it in isolation — adding author bios, structured data, internal links — without asking whether the surrounding content ecosystem signals topical authority. An AI model encountering your article doesn't just evaluate that article. It evaluates whether the domain behind it has a coherent, deep, consistent point of view on the topic.

Second: platform monoculture. If your entity only exists on your own domain, you're invisible to the inference layer. AI models build entity understanding from cross-platform signals — LinkedIn presence, third-party citations, mentions in other authoritative documents. A brand that publishes exclusively on its own blog has a thin entity footprint, regardless of how good the content is.

Third: content architecture that fragments authority. This is the one I keep coming back to. The failure mode I see most often in content programs is optimizing for connection volume rather than connection quality: linking pages that share keywords without asking whether those pages share intent hierarchy. The result is a site where no single page has enough concentrated authority to rank for high-intent terms, and where AI systems find no coherent topical signal to extract.

Fourth: no maintenance loop. AI systems de-prioritize stale content — not because it's wrong, but because freshness is itself an E-E-A-T signal. A page published in 2021 with no updates signals that the publisher may no longer be actively engaged with the topic. I think of this as citation decay: the gradual erosion of citation eligibility as content ages without refresh signals.

How Does GPRM Actually Work?

GPRM is a framework for evaluating, and building, the portfolio-level signals that AI search engines use to decide whether to cite you.

The model has four dimensions. Each one is necessary. None is sufficient alone.

Dimension 1 — Depth Coherence. This measures whether your content portfolio has genuine depth on a topic, not just breadth. A site with 200 articles touching on 50 different subjects has low depth coherence. A site with 60 articles that collectively cover one subject from every meaningful angle — definitions, comparisons, case studies, technical implementation, common failures — has high depth coherence. AI systems building a context window for a query want the most complete, authoritative source. Depth coherence is what makes you that source.

Dimension 2 — Entity Consistency. This measures whether your brand, your authors, and your claims are consistently represented across platforms. The Google Quality Rater Guidelines treat E-E-A-T as a multi-signal construct — experience, expertise, authoritativeness, trustworthiness — and AI systems operationalize this by looking for entity consistency across sources. If your author claims expertise on your site but has no LinkedIn presence, no third-party citations, and no bylines elsewhere, the entity signal is weak. If the same author appears in industry publications, has a verifiable professional history, and is cited by other authoritative sources, the entity signal is strong.

Dimension 3 — Relevance Freshness. This measures whether your content is actively maintained and whether your topical coverage reflects current developments. Semrush's study of the most-cited domains in AI found Wikipedia ranking as the #1 or #2 cited source in four of five verticals, partly because Wikipedia is relentlessly updated. Freshness isn't just about publication date; it's about whether the content reflects the current state of knowledge on a topic.

Dimension 4 — Cross-Platform Presence. This measures how widely your entity is represented outside your own domain. Semrush's analysis of 89,000 LinkedIn URLs cited in AI search found LinkedIn ranks #2 in citations at 11% of AI responses on average, with 54-64% of cited posts focused on knowledge sharing. That number surprised me — it means AI systems are actively pulling from LinkedIn as a citation source, which means your LinkedIn content strategy is now part of your AI search citation strategy whether you've acknowledged it or not.

Why Different AI Engines Cite Differently

One thing GPRM has to account for is that Perplexity, Google AI Overviews, and ChatGPT don't use identical citation logic — and the differences matter for how you build your portfolio.

Search Engine Land's analysis found that 60% of Perplexity AI citations overlap with top 10 Google organic results. Healthcare had the highest overlap at 82%; restaurants had the lowest at 27%. What this tells me: Perplexity leans heavily on traditional SEO authority signals. If you rank in Google's top 10, you have a strong baseline for Perplexity citation.
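If you want to check this overlap for your own queries, here is a minimal sketch. It assumes you have already collected the URLs an AI engine cited and the top 10 organic URLs for the same query; the function names and the normalization rules are my own illustration, and the study's exact methodology may differ.

```python
from urllib.parse import urlsplit

def normalize(url):
    """Reduce a URL to host + path so trivial variants compare equal (assumed rule)."""
    parts = urlsplit(url)
    host = parts.netloc.lower().removeprefix("www.")
    return host + parts.path.rstrip("/")

def top10_overlap(cited_urls, organic_top10):
    """Share of cited URLs that also appear in the organic top 10."""
    cited = {normalize(u) for u in cited_urls}
    if not cited:
        return 0.0
    organic = {normalize(u) for u in organic_top10}
    return len(cited & organic) / len(cited)

# Hypothetical example data for one query.
cited = ["https://www.example.com/guide/", "https://other.org/post"]
organic = ["https://example.com/guide", "https://third.net/x"]
overlap = top10_overlap(cited, organic)
```

Averaging this figure over a set of target queries per vertical gives you a rough local equivalent of the overlap numbers above.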

Google AI Overviews (formerly the Search Generative Experience, or SGE) is the opposite. A separate Search Engine Land study found 94% of SGE links are different from Google's own organic results. Google is deliberately diversifying its citation sources away from the same pages it ranks organically. This is the counterintuitive finding that most SEOs haven't fully processed yet: ranking #1 in Google doesn't automatically get you cited in Google AI Overviews. The model is actively looking for sources it doesn't already surface in organic results.

As Jason Barnard argued in Search Engine Land, topical authority alone isn't enough for AI search — "the missing layer isn't in content or structure. It's in the signals that determine selection once a topic is understood — the difference between being eligible and being chosen." That framing is exactly right, and it's what GPRM is designed to address.

For content teams, this means the portfolio strategy has to be platform-aware. You're not building one portfolio — you're building signals that work across different citation logics simultaneously. If you want to understand the broader strategic difference between traditional and AI-first optimization, the breakdown in AEO vs. SEO: Optimize for AI Search & Answer Engines is worth reading alongside this framework.

Building a Citation-Worthy Portfolio — The GPRM Approach

Here's where the framework becomes operational. GPRM isn't just diagnostic — it's a build plan.

Step 1: Audit your depth coherence score. Map every piece of content you have on your primary topics. For each topic cluster, ask: does this portfolio cover the full query landscape — definitions, comparisons, implementation guides, failure cases, advanced nuance? Most content programs I've reviewed have heavy coverage at the top of the funnel (definitions, introductions) and almost nothing at the middle and bottom (comparisons, decision frameworks, technical depth). AI systems preferentially cite sources that can answer the full range of queries on a topic, not just the introductory ones.
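A depth coherence audit can start as a simple coverage matrix. The sketch below is one possible shape, not a standard tool: the content-type labels, the `inventory` structure, and the function name are assumptions chosen to mirror the query landscape described in this step.

```python
from collections import defaultdict

# Assumed content-type buckets, mirroring the query landscape named in Step 1.
REQUIRED_TYPES = ["definition", "comparison", "implementation", "failure_case", "advanced"]

def coverage_gaps(inventory):
    """Return, per topic cluster, the content types that have no published piece."""
    covered = defaultdict(set)
    for page in inventory:
        covered[page["cluster"]].add(page["type"])
    return {
        cluster: [t for t in REQUIRED_TYPES if t not in types]
        for cluster, types in covered.items()
    }

# Hypothetical inventory: heavy on definitions, thin everywhere else.
inventory = [
    {"url": "/what-is-aeo", "cluster": "aeo", "type": "definition"},
    {"url": "/aeo-vs-seo", "cluster": "aeo", "type": "comparison"},
    {"url": "/what-is-schema", "cluster": "structured-data", "type": "definition"},
]
gaps = coverage_gaps(inventory)
```

Clusters with long gap lists in the middle and bottom of the funnel are the ones to prioritize.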

Step 2: Strengthen your entity signals. Every author who publishes on your site should have a verifiable professional presence: a LinkedIn profile with demonstrable expertise, bylines on third-party publications, and ideally some form of third-party validation (awards, speaking engagements, citations by other authoritative sources). This isn't optional for AI citation. In my experience, E-E-A-T works as a gatekeeping filter for AI systems rather than as a ranking signal: you either clear the threshold or you don't.

Step 3: Build a freshness maintenance loop. Assign a review cycle to every piece of content in your portfolio. I recommend quarterly reviews for high-traffic pages, annual reviews for evergreen reference content. The review doesn't need to be a full rewrite — updating statistics, adding new examples, and refreshing the publication date with substantive changes is enough to signal active maintenance. For teams running at scale, this is where automated content publishing tools can actually add value — not for generating content from scratch, but for flagging pages that haven't been updated and surfacing refresh opportunities.
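The flagging part of that loop is easy to sketch. This example assumes each page record carries a `tier` and a `last_reviewed` date (both hypothetical field names) and applies the quarterly and annual cycles recommended above, approximated as 90 and 365 days.

```python
from datetime import date, timedelta

# Review cycles from Step 3: quarterly for high-traffic, annual for evergreen.
REVIEW_CYCLE_DAYS = {"high_traffic": 90, "evergreen": 365}

def pages_due_for_review(pages, today):
    """Return URLs whose last review is at least one full cycle old."""
    due = []
    for page in pages:
        cycle = timedelta(days=REVIEW_CYCLE_DAYS[page["tier"]])
        if today - page["last_reviewed"] >= cycle:
            due.append(page["url"])
    return due

# Hypothetical portfolio snapshot.
pages = [
    {"url": "/pricing-guide", "tier": "high_traffic", "last_reviewed": date(2024, 1, 10)},
    {"url": "/glossary", "tier": "evergreen", "last_reviewed": date(2024, 1, 10)},
    {"url": "/aeo-vs-seo", "tier": "high_traffic", "last_reviewed": date(2024, 5, 1)},
]
due = pages_due_for_review(pages, today=date(2024, 6, 1))
```

Run on a schedule, a check like this surfaces refresh candidates without anyone having to remember which pages are aging.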

Step 4: Diversify your entity footprint. Start treating LinkedIn as a citation channel, not just a distribution channel. Semrush's research is clear: educational long-form content (500-2,000 words) and mid-length posts (50-299 words) account for the largest share of AI citations on LinkedIn, with knowledge-sharing content dominating cited posts. If your LinkedIn strategy is primarily promotional, you're leaving citation opportunities on the table.

Step 5: Implement structured data rigorously. Structured data (Schema.org markup, usually embedded as JSON-LD) is the technical layer that makes your content machine-readable for AI systems; Google Search Console and the Rich Results Test only report whether that markup is valid. Schema markup for articles, authors, organizations, and FAQs creates explicit entity signals that AI models can extract without inference. This is table stakes: not a differentiator, but a prerequisite.
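As an illustration, here is a minimal sketch that builds Article markup with a linked author and publisher using Schema.org's vocabulary. The helper name, the headline, the dates, and the profile URL are placeholders I made up for the example; swap in your real values and validate the output before shipping.

```python
import json

def article_jsonld(headline, author_name, author_profiles, publisher, published, modified):
    """Build a Schema.org Article object with explicit author and publisher entities."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published,
        "dateModified": modified,
        "author": {
            "@type": "Person",
            "name": author_name,
            # sameAs ties the on-site author to off-site profiles (entity consistency).
            "sameAs": author_profiles,
        },
        "publisher": {"@type": "Organization", "name": publisher},
    }

# Placeholder values; the profile URL and dates are hypothetical.
markup = article_jsonld(
    headline="Example headline",
    author_name="Judy Zhou",
    author_profiles=["https://www.linkedin.com/in/example-author"],
    publisher="Meev",
    published="2024-01-15",
    modified="2024-06-01",
)
script_tag = (
    '<script type="application/ld+json">'
    + json.dumps(markup, indent=2)
    + "</script>"
)
```

The `sameAs` links do the entity-consistency work from Dimension 2, and `dateModified` carries the freshness signal from Step 3.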

Is your content portfolio actually built to earn AI search citations — or just to rank?

The Measurement Problem Nobody Talks About

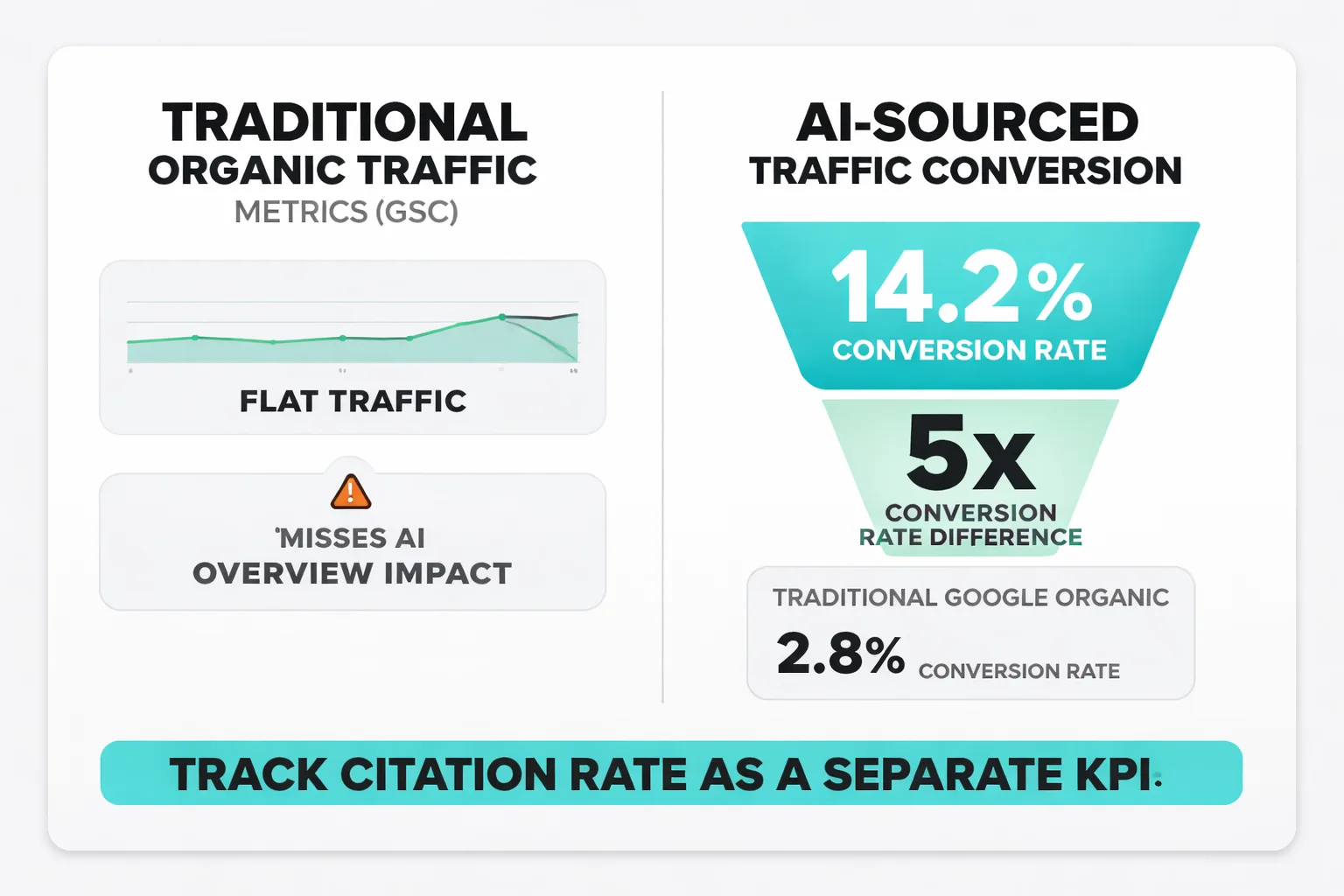

Here's my contrarian take: most teams are measuring AI citation impact wrong, and the measurement failure is causing them to underinvest in portfolio-level strategies.

Organizations tracking only traditional organic traffic metrics in Google Search Console will miss AI Overview impact entirely. GSC folds AI Overview (AIO) impressions into its standard organic search totals and offers no filter to separate them. In a hypothetical but realistic case, a brand could see flat traffic while losing 30% of leads to AI Overviews that never cite it, and never know it from its standard reporting.

The Seer Interactive data makes the stakes concrete: AI-sourced traffic converts at 14.2% compared to traditional Google organic at 2.8%. That's roughly a 5x conversion rate difference (14.2 / 2.8 ≈ 5.1). If you're not measuring citation rate as a separate KPI (frequency of brand mentions in AI responses, share of voice against competitors in AI Overviews), you're flying blind on your highest-converting traffic channel.

The measurement infrastructure you need: citation rate tracking (how often your brand appears in AI responses for target queries), share of voice in AI Overviews versus named competitors, and AI-sourced pipeline attribution using fractional models. None of this is available out of the box in standard analytics stacks. Building it requires intentional instrumentation — and most teams haven't started.
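The first two metrics reduce to simple counting once you have logged AI responses for your target queries. The sketch below assumes each logged response records which brands it cited; the data shape, the brand names, and the definition of share of voice (your mentions divided by all tracked-brand mentions) are my assumptions, not an industry standard.

```python
def citation_metrics(responses, brand, competitors):
    """Return (citation_rate, share_of_voice) for a brand across logged AI responses."""
    total = len(responses)
    tracked = [brand, *competitors]
    # Count the responses in which each tracked brand was cited.
    mentions = {b: sum(1 for r in responses if b in r["cited_brands"]) for b in tracked}
    citation_rate = mentions[brand] / total if total else 0.0
    all_mentions = sum(mentions.values())
    share_of_voice = mentions[brand] / all_mentions if all_mentions else 0.0
    return citation_rate, share_of_voice

# Hypothetical log of four AI responses to target queries.
responses = [
    {"query": "best crm for startups", "cited_brands": {"Acme", "Globex"}},
    {"query": "crm pricing comparison", "cited_brands": {"Globex"}},
    {"query": "how to migrate crm data", "cited_brands": {"Acme", "Globex", "Initech"}},
    {"query": "crm implementation checklist", "cited_brands": set()},
]
rate, sov = citation_metrics(responses, "Acme", ["Globex", "Initech"])
```

Here the brand is cited in 2 of 4 responses (a 50% citation rate) and holds 2 of 6 tracked mentions (about 33% share of voice). Tracking both over time shows whether portfolio work is moving the numbers.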

For teams thinking about how to calculate the ROI of this kind of investment, the framework for calculating SEO ROI applies directly — you need to assign a value to citation-driven visibility, not just click-driven traffic.

The 76% Convergence Signal

One finding from BrightEdge's research deserves its own moment: ChatGPT and AI Overviews recommend the same brands 76% of the time. That number stopped me cold.

It means the citation pool across the two dominant AI surfaces is largely shared. Build the right portfolio signals, and you're not optimizing for one platform — you're optimizing for most of the AI citation ecosystem simultaneously. The inverse is also true: if you're excluded from that shared pool, you're invisible across both surfaces at once.

This convergence is why GPRM treats portfolio quality as a platform-agnostic foundation. The specific citation mechanics differ between Perplexity and Google AI Overviews and ChatGPT — but the underlying trust signals that get you into the eligible set are largely consistent. E-E-A-T content quality, entity consistency, depth coherence, freshness — these aren't Google-specific signals. They're the signals AI systems use to infer trustworthiness, and they work across engines.

For teams evaluating which AI writing tools to use in building this portfolio, the tool choice matters less than the strategy. I've written about this distinction in the context of full automation vs. SEO research tools — the question isn't which tool generates content faster, but which approach produces the portfolio-level signals that earn citations.

Frequently Asked Questions

How long does it take to build AI citation authority through GPRM? There's no honest universal answer, but the pattern I keep seeing is that meaningful citation frequency starts appearing 3-6 months after a portfolio reaches depth coherence on a topic cluster — meaning you have 15-25 pieces covering a topic from multiple angles with strong entity signals. Isolated page optimization rarely produces citation results in under 90 days regardless of quality.

Does GPRM apply equally to small sites and large publishers? The framework applies to both, but the starting point differs. Large publishers typically have entity footprint advantages (cross-platform presence, third-party citations) but often have depth coherence problems — broad coverage with thin topical depth. Small sites often have the opposite problem: genuine depth on a narrow topic but weak entity signals. GPRM helps both diagnose where the gap actually is.

How does Google's AI Overviews citation logic differ from Perplexity's? The data is clear: 60% of Perplexity's citations overlap with Google's top 10 organic results, while Google AI Overviews deliberately diversifies, with 94% of its links differing from Google's own organic rankings. This means a Perplexity-first strategy leans on traditional SEO authority, while an AI Overviews strategy requires building entity signals that exist outside your organic ranking position.

Can structured data markup directly improve AI citation rates? Structured data creates explicit, machine-readable entity signals (author schema, organization schema, FAQ schema) that reduce the inference burden on AI systems. It doesn't guarantee citation, but it removes a barrier. Google's Quality Rater Guidelines ask raters to establish who is responsible for a site and its content, and structured entity information states that explicitly; AI systems shaped by similar quality signals plausibly carry that weighting forward.

What's the biggest mistake teams make when optimizing for AI search citations? Treating it as a page-level SEO problem. The 96% E-E-A-T citation rate from Wellows' analysis isn't telling you to optimize one page better — it's telling you that AI systems are making portfolio-level trust inferences. A single well-optimized article on a thin domain won't get cited. A moderately optimized article on a domain with deep topical authority, strong entity signals, and active content maintenance will.

About the Author

Judy Zhou, Head of Content Strategy

Judy Zhou leads content strategy at Meev, where she oversees AI-driven content research and publishing for hundreds of brands. With a background in SEO and editorial operations, she focuses on building content systems that rank on Google, get cited by AI search engines, and drive measurable business results.

Meev helps content teams build citation-worthy portfolios at scale — from topical depth mapping to structured publishing workflows that AI engines trust.