By Judy Zhou, Head of Content Strategy

Key Takeaways

- 73% of product discovery now begins with conversational queries, but most e-commerce product pages and category content are structurally invisible to AI retrieval systems — they contain specs and CTAs, not the explanatory prose LLMs can cite.

- The four content types that consistently earn AI citations are buying guides with explicit criteria, comparison articles, 'best X for Y' pieces, and individual FAQ articles — not product pages or category navigation.

- Google AI Overviews and Perplexity use retrieval-augmented generation that indexes fresh content on a rolling basis, meaning well-structured content can appear in AI answers within 2–6 weeks of publication — far faster than waiting for model retraining.

- 40–60% of cited domains change monthly across major AI platforms, making weekly LLM citation tracking and a documented mention-citation gap score essential metrics for any e-commerce GEO program.

In the spring of 2023, a small cohort of search researchers noticed something strange in early ChatGPT plugin logs and Bing Chat transcripts: certain domains were being cited repeatedly across unrelated queries while comparably authoritative sites were ignored entirely. That pattern — invisible to traditional rank trackers, inexplicable by conventional SEO metrics — became the first observable fingerprint of what academics at Princeton and Georgia Tech would later formally define as Generative Engine Optimization, or GEO. Less than two years later, it is the most consequential visibility discipline e-commerce content teams have never staffed for.

Generative Engine Optimization (GEO) is the practice of structuring content so that AI-powered answer engines — Google AI Overviews, Perplexity, ChatGPT, Microsoft Copilot — retrieve and cite it in response to user queries. 73% of product discovery now begins with conversational queries rather than traditional keyword searches. That shift alone should be enough to stop any e-commerce content director mid-roadmap. The question isn't whether your category pages rank on page one. It's whether an AI answers "what's the best standing desk for a small apartment" and mentions your brand at all.

The core problem for e-commerce teams is structural, not cosmetic. Product pages are optimized for conversion, not citation. Category pages are optimized for crawling, not comprehension. The content types that AI engines actually retrieve — buying guides, comparison articles, FAQ clusters, expert-attributed explainers — are exactly what most e-commerce content calendars deprioritize in favor of product launches and seasonal promotions. This playbook is for teams ready to fix that.

Why E-Commerce Content Has a GEO Problem Most Teams Don't See

Product pages are conversion artifacts, not knowledge objects. That distinction matters enormously to an LLM.

When a retrieval-augmented generation system like Google AI Overviews or Perplexity pulls content to construct an answer, it's looking for something that functions like a credible, self-contained explanation — a piece of text that answers a question without requiring the reader to also see a "Buy Now" button to understand it. Product pages fail this test structurally. They contain specs, prices, and CTAs. They rarely contain the kind of contextual, comparative, or explanatory prose that an LLM can excerpt and present as a useful answer. A product description that reads "Ergonomic mesh back, adjustable lumbar support, 5-year warranty" gives an LLM nothing to cite when someone asks "what should I look for in an ergonomic office chair?"

Category pages are worse. They're typically thin-text pages organized around facets and filters: useful for human navigation, opaque to AI retrieval. The LLM sees a page that says "Shop Office Chairs — 847 results — Filter by: Price, Brand, Color" and correctly concludes there's nothing citable there.

The deeper issue is that e-commerce content teams often operate in a split model: a small editorial team producing blog content, a separate merchandising team managing PDPs, and almost no coordination between the two on AI visibility goals. The blog content that could theoretically support GEO — buying guides, comparison posts — is often produced at low volume, lacks author attribution, and isn't structured with schema markup. I've seen this pattern repeatedly across content operations of all sizes: the editorial side knows something is wrong with their AI visibility, but the fix requires touching systems (PDP templates, schema implementations, author profiles) that sit outside their remit.

The Sunrise Integration case study makes this concrete in a way that surprised even me. Sunrise Integration is a 24-year Shopify Plus Partner with enterprise clients including Disney, DHL, and Live Nation, and its CEO Coby Pfaff has publicly stated: "Shoppers are asking ChatGPT what to buy. If your store isn't structured for AI, you won't get cited. That's GEO." When GEO Raiser ran a full audit of Sunrise Integration's own site, it scored 52 out of 100. Its quick score was 79, a gap that reveals exactly how surface-level most GEO self-assessments are. The audit found zero author attribution on over 100 pages, no Article schema on any blog content, and a missing llms.txt file. A 24-year agency publicly championing GEO for enterprise clients hadn't implemented the basics on its own domain. If that's the gap at an agency level, imagine what it looks like inside a mid-market e-commerce operation where content and engineering rarely share a roadmap.

The 4 Content Types E-Commerce Teams Should Prioritize for GEO

Not all content gets cited equally. The pattern I keep seeing is that AI engines overwhelmingly favor content that performs one of four functions: it recommends, it compares, it explains, or it answers a direct question. E-commerce teams that produce content in these four formats — deliberately, at scale, with proper structure — are the ones showing up in AI answers.

1. Buying guides with explicit criteria. "Best standing desks for small apartments" isn't a product page — it's a knowledge object. The LLM can extract "look for desks under 48 inches wide with a memory preset controller" as a direct, citable answer. Buying guides work because they contain the evaluative prose that AI engines need to answer "what should I buy?" questions. They need to be genuinely opinionated, not just a list of products with affiliate links. The guides that get cited name criteria, explain tradeoffs, and tell the reader why one option beats another for a specific use case.

2. Comparison content. "X vs Y" articles are structurally ideal for AI retrieval. They contain explicit comparisons, named entities, and conclusion sentences — all of which are easy for a RAG system to excerpt. According to data tracked by Profound and Bluefish, 40–60% of cited domains change monthly across major AI platforms, with Google AI Overviews showing 59.3% citation drift. That volatility makes comparison content especially valuable: it's the type that earns citations across multiple query variants, giving you more surface area against a moving target.

3. "Best X for Y" articles. These map almost perfectly to the conversational query patterns that now drive 73% of product discovery. "Best running shoes for wide feet," "best air purifier for a 500 sq ft room," "best mattress for side sleepers under $1,000" — these are exactly the queries that trigger AI Overviews and Perplexity summaries. Building a systematic library of these articles, mapped to your actual product catalog, is the single highest-leverage GEO investment most e-commerce teams aren't making.

4. FAQ clusters. Not a single FAQ page, but a cluster of individual, deeply answered FAQ articles targeting specific questions in your product category. "How often should you replace a water filter?" is a better GEO target than a generic FAQ page titled "Water Filter FAQs." Individual question-focused pages give AI engines a clean, extractable answer with a clear source URL. They also compound: a cluster of 30 FAQ articles covering a product category builds topical authority for AI search in a way that a single buying guide never will.

The scaling challenge is real. Producing all four content types at meaningful volume requires either a large editorial team or an AI-assisted production workflow. Meev's auto-blogging engine is built specifically for this use case — quality-gated content publishing at scale, with author attribution and schema built into the output. If you're evaluating tools for this, the comparison between Meev and Sight AI covers the quality-gating and citation outreach differences in detail.

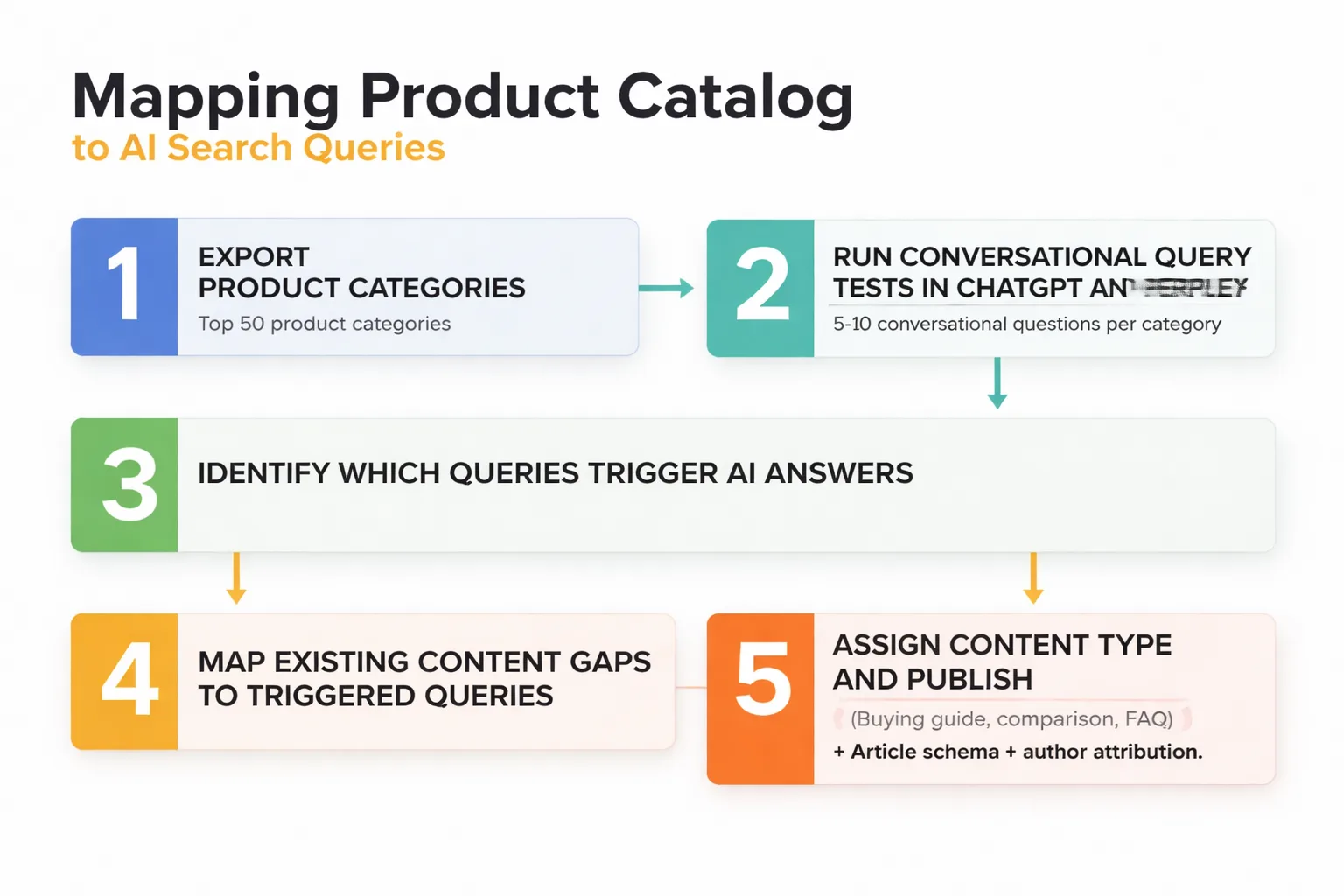

How to Map Your Product Catalog to AI Search Queries

The workflow I recommend starts with your product taxonomy, not a keyword tool.

Export your top 50 product categories. For each one, write out 5–10 conversational questions a shopper might ask before buying — not keyword-style queries, but actual questions. "What's the difference between a French press and a pour-over?" not "french press vs pour over." Then run those questions through ChatGPT and Perplexity and document what happens.

If an AI answer appears and your brand or domain isn't cited, you have a citation gap. If no AI answer appears at all, you have an opportunity to be the first credible source. Both are actionable. The ones that already trigger AI answers are your highest-priority targets — the retrieval layer is already active on those queries, which means a well-structured piece of content can enter the citation rotation relatively quickly.

Here's what I mean by "relatively quickly": this is where the RAG mechanics matter more than most teams realize. Google AI Overviews and Perplexity both use retrieval-augmented generation, which indexes fresh content on a rolling basis — it's not waiting for a model retraining cycle. I've watched well-structured posts surface in AI Overviews within 2–6 weeks of publication. That's not a training effect; that's the retrieval layer doing what it's designed to do. The implication for e-commerce teams is that you don't need a 12-month content calendar to start seeing GEO results. You need the right content type, properly structured, published now.
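To make the question audit above concrete, here is a minimal sketch of how the logged results could be sorted into the two actionable buckets. Everything in it is hypothetical: the `AuditResult` fields, the label names, and the assumption that you paste in full source URLs (with `https://`) by hand after running each question. It is a starting point for a spreadsheet replacement, not a tracking tool.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class AuditResult:
    """One manually logged run of a shopper question against an AI engine."""
    question: str
    engine: str             # free-form label, e.g. "perplexity"
    answer_shown: bool      # did the engine produce an AI answer at all?
    cited_urls: list[str]   # full source URLs copied from the answer


def normalize_domain(url: str) -> str:
    """Return the lowercase host of a full URL, without a leading 'www.'."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def classify(result: AuditResult, own_domain: str) -> str:
    """Label a logged result as 'opportunity', 'citation_gap', or 'cited'."""
    if not result.answer_shown:
        # No AI answer yet: a chance to be the first credible source.
        return "opportunity"
    domains = {normalize_domain(u) for u in result.cited_urls}
    return "cited" if own_domain in domains else "citation_gap"


def priority_targets(results: list[AuditResult], own_domain: str) -> list[str]:
    """Questions that already trigger an AI answer but do not cite us."""
    return [r.question for r in results
            if classify(r, own_domain) == "citation_gap"]
```

Running `priority_targets` over the full log gives the list of questions where the retrieval layer is already active, which are the ones worth writing for first.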

For the content structure itself: every piece targeting a conversational query needs a self-contained answer in the first 100 words, explicit author attribution (a named person with verifiable credentials, not "Staff Writer"), Article schema markup, and a clear conclusion that names a recommendation or summarizes the key finding. The Sunrise Integration audit found that missing author attribution and Article schema were the two most common structural failures blocking AI citation — and both are fixable in a content template update, not a site rebuild.
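As an illustration of the template-level fix, the sketch below builds an Article JSON-LD block with a named author. The property names (`headline`, `description`, `datePublished`, `author`) are standard schema.org vocabulary; the function names, the sample author, and the example.com URLs are placeholders I made up, and your CMS will have its own way of injecting the tag.

```python
import json
from datetime import date


def article_jsonld(headline: str, summary: str, published: date,
                   author_name: str, author_url: str) -> str:
    """Serialize a schema.org Article with a named Person as author."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "description": summary,
        "datePublished": published.isoformat(),
        "author": {
            "@type": "Person",
            "name": author_name,
            "url": author_url,  # link to a bio page with credentials
        },
    }
    return json.dumps(data, indent=2)


def as_script_tag(jsonld: str) -> str:
    """Wrap the JSON-LD so it can be placed in the page template's <head>."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


if __name__ == "__main__":
    # Placeholder values for illustration only.
    print(as_script_tag(article_jsonld(
        headline="Best Standing Desks for Small Apartments",
        summary="What to look for in a compact standing desk.",
        published=date(2026, 1, 15),
        author_name="Jane Doe",
        author_url="https://example.com/authors/jane-doe",
    )))
```

Generating the block from the same fields the template already holds (title, author, publish date) keeps the markup and the visible byline from drifting apart.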

For Perplexity specifically, the path to citation is harder and more PR-dependent than most content teams want to hear. Based on what practitioners tracking Perplexity's reranking system have observed, it operates closer to a domain whitelist than a content quality signal — meaning the content on your site matters less than whether your domain has been mentioned in Tier-1 trade publications. Perplexity processed 780 million queries in May 2025, growing 20% month-over-month. At that scale, a citation slot is functionally equivalent in reach to a feature placement in a top-tier trade publication. The uncomfortable implication: for Perplexity visibility, your best investment might be a media relations budget, not a content calendar.

For tracking which queries your brand is actually appearing in, tools purpose-built for LLM citation tracking are now table stakes. The article "The best AI visibility tools for 2026" covers the current field in detail, including how platforms like Profound and Peec AI approach citation monitoring differently.

Why Reddit Is Your Fastest GEO Distribution Channel

This is the contrarian take most e-commerce content teams aren't ready for: Reddit is a more effective near-term GEO distribution channel than digital PR.

I know how that sounds. But here's the mechanism. The debate in GEO circles about whether to pitch journalists or focus on owned content is mostly a distraction — and the reason is that pitching journalists to influence LLM training data is close to useless in the near term. Base model weights don't update on a rolling basis. You're waiting for a training run that may be 12–18 months out. What actually moves the needle right now is the retrieval layer, and Reddit feeds that layer continuously.

High-quality posts on relevant subreddits (r/homeoffice for standing desks, r/running for footwear, r/personalfinance for financial products) can surface in Google AI Overviews within 2–6 weeks of publication. That's not theoretical; it's the feedback loop I've watched play out. The Guardian's January 2026 investigation into health-query AI Overviews confirmed that Google was citing Reddit threads directly, sometimes incorrectly, which led Google to quietly pull health-related AI Overviews after the coverage ran. The quality problem is real, but the citation mechanism is working exactly as described.

For e-commerce teams, the Reddit strategy looks like this: identify the subreddits where your product category is actively discussed, write substantive posts that answer real purchase questions (not promotional content — actual helpful answers with specifics), and treat those posts as a publication channel with a 2–6 week feedback loop into AI responses. This is not the same as spamming Reddit with affiliate links. It's treating Reddit like a high-authority publication target, which in the context of AI retrieval, it functionally is.

The teams I see winning GEO citations in 2025 aren't the ones with the best publisher relationships. They're the ones posting substantive, well-structured content on Reddit and treating it like a near-real-time distribution channel. That's a hard mental model shift for a brand content team — but the mechanism is real.

Measuring GEO Performance for E-Commerce: Metrics That Matter

Most e-commerce content teams have no GEO measurement infrastructure at all. They're tracking organic traffic, rankings, and conversion rates — none of which capture AI visibility. That's a problem, because citation drift data shows that 40–60% of cited domains change monthly across major AI platforms. If you're not tracking your citation share, you're flying blind through a volatile environment.
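If you want a rough drift number from your own logs, one simple way to compute it is sketched below. How Profound or Bluefish define their drift figures isn't specified here, so the definition used (the share of last period's cited domains that are missing this period) is my assumption, and the function names are hypothetical.

```python
def citation_drift(previous: set[str], current: set[str]) -> float:
    """Fraction of previously cited domains absent from the current set."""
    if not previous:
        return 0.0  # nothing to drift from
    return len(previous - current) / len(previous)


def still_cited(own_domain: str, weekly_sets: list[set[str]]) -> list[bool]:
    """For each weekly snapshot, whether our own domain was among the cited."""
    return [own_domain in cited for cited in weekly_sets]


if __name__ == "__main__":
    # Made-up domains for illustration.
    last_month = {"a.example", "b.example", "c.example", "d.example", "e.example"}
    this_month = {"a.example", "b.example", "f.example", "g.example", "h.example"}
    print(citation_drift(last_month, this_month))  # 3 of 5 dropped out: 0.6
```

Collecting one set of cited domains per week for the same fixed list of questions is enough input for both functions.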

The metrics that actually matter for e-commerce GEO fall into four categories.

Brand mention frequency in AI answers. How often does your brand appear when someone asks a conversational query in your product category? This requires actively querying AI engines with your target questions and logging the results — either manually or through a dedicated LLM citation tracking tool. The Meev vs Profound comparison covers how these tools differ in their approach to tracking brand mentions across ChatGPT, Perplexity, and Google AI Overviews.

Citation share by product category. Which of your product categories are generating AI citations, and which have zero presence? This is the e-commerce-specific version of topical authority for AI search. A brand that sells both camping gear and kitchen appliances might have strong citation share in camping (because they've published detailed buying guides) and zero presence in kitchen (because their content there is mostly product descriptions). Category-level citation share tells you where to invest next.

The mention-citation gap. This is the delta between how often your brand is mentioned in AI answers versus how often it's cited as a source. A brand can be mentioned frequently ("many shoppers prefer Brand X") without ever being cited as the source of information. Closing that gap requires the structural work — author attribution, Article schema, self-contained answer formatting — that converts a brand mention into an actual citation. We spent the better part of Q1 optimizing on-site content specifically to close this gap for one client. Almost none of the on-site work moved the needle. What actually worked was getting the client mentioned in two Tier-1 trade publications. Citations followed within weeks. The lesson: the mention-citation gap is often a PR problem wearing a content problem's clothes.

Revenue attribution. This is the hardest metric and the most important one for justifying GEO investment to a CFO. The approach I recommend is indirect: track which content pieces are generating AI citations, then look at whether those pages show increased branded search volume, direct traffic, or assisted conversions in the weeks following citation activity. It's not a clean attribution model — but it's more defensible than "we think AI visibility is driving awareness."
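The first three metrics above reduce to simple arithmetic once each logged AI answer is tagged with its category, whether the brand was named, and whether your domain was listed as a source. The sketch below assumes that logging format; `AnswerLog` and `category_metrics` are hypothetical names, and "citation rate" here is a per-category proxy for citation share (the fraction of logged answers that cite you), not a competitor-relative figure.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class AnswerLog:
    category: str          # product category the question belongs to
    brand_mentioned: bool  # brand named anywhere in the answer text
    brand_cited: bool      # own domain listed as a source


def category_metrics(logs: list[AnswerLog]) -> dict[str, dict[str, float]]:
    """Mention rate, citation rate, and their gap for each product category."""
    grouped: dict[str, list[AnswerLog]] = defaultdict(list)
    for log in logs:
        grouped[log.category].append(log)

    report: dict[str, dict[str, float]] = {}
    for category, rows in grouped.items():
        total = len(rows)
        mention_rate = sum(r.brand_mentioned for r in rows) / total
        citation_rate = sum(r.brand_cited for r in rows) / total
        report[category] = {
            "mention_rate": mention_rate,
            "citation_rate": citation_rate,
            # Positive gap: named more often than cited as a source.
            "mention_citation_gap": mention_rate - citation_rate,
        }
    return report
```

A category with a citation rate of zero is a candidate for new buying guides; a category with a high mention rate and a large gap is where the structural or PR work is more likely to pay off.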

For teams building out this measurement infrastructure, the AI content creation landscape in 2026 has shifted significantly toward integrated visibility tracking — tools that combine content production with citation monitoring rather than treating them as separate workflows.

One honest caveat on measurement: Google AI Overviews citation quality is not reliable enough to use as a quality signal for your own content. The Guardian's January 2026 investigation showed that Google was citing demonstrably false sources at scale — Reddit threads, Wikipedia entries with errors — and that its Helpful Content System was running on a separate track from AIO source selection entirely. Google pulled health-related AI Overviews after that coverage. Until Google demonstrates that HCS actually gates AIO selection, I'm treating AIO citation as a reach metric, not a credibility signal. Track it, pursue it, but don't assume appearing in an AI Overview means Google has validated your content quality.

FAQ

Do product pages ever get cited in AI answers? Rarely, and almost never for informational queries. Product pages can occasionally appear in AI answers for highly specific, transactional queries ("where can I buy X brand Y model"), but they're structurally unsuited for the explanatory queries that dominate AI search. The fix isn't rewriting product pages — it's building a layer of editorial content (buying guides, comparisons, FAQs) that sits above the catalog and answers the questions shoppers ask before they're ready to buy.

How long does it take to see GEO results after publishing new content? For Google AI Overviews and Perplexity, which use retrieval-augmented generation and index fresh content on a rolling basis, well-structured content can appear in AI answers within 2–6 weeks of publication. For base model citations (ChatGPT without browsing, for example), you're waiting for a model retraining cycle — potentially 12–18 months. The practical implication: prioritize content formats and distribution channels (including Reddit) that feed the retrieval layer, not the training layer.

What's the minimum viable GEO setup for a small e-commerce team? Three things: named author attribution on all editorial content (a real person with a linked bio, not "Admin"), Article schema markup on blog posts and buying guides, and an llms.txt file at your domain root. These are the three structural gaps the Sunrise Integration audit found, and all three are implementable without a major technical project. Beyond that, publish one substantive buying guide or comparison article per week targeting conversational queries in your top product categories.

Is GEO replacing SEO for e-commerce, or do both matter? Both matter, but the resource allocation is shifting. Traditional organic search still drives significant e-commerce traffic, and ranking signals haven't disappeared. But the queries where AI answers now appear — "what's the best X for Y" — are exactly the high-intent, pre-purchase queries that drove the most valuable organic traffic. If AI answers those queries without citing you, you lose that traffic regardless of your ranking position. GEO isn't replacing SEO; it's becoming the upstream discipline that determines whether your content is even in the consideration set.

How do I track my brand's citation share across AI platforms? Manual tracking (querying ChatGPT, Perplexity, and Google AI Overviews with your target questions and logging results in a spreadsheet) is a viable starting point for small teams. At scale, dedicated LLM citation tracking tools — Profound, Peec AI, Bluefish — automate this process and provide citation drift data across platforms. Given that 40–60% of cited domains change monthly, weekly tracking is more useful than monthly snapshots.

Does structured data (schema markup) directly influence AI citations? Schema markup doesn't directly cause AI citations — LLMs don't read schema the way search crawlers do. But it signals content structure and entity clarity in ways that improve how AI systems interpret and retrieve your content. Article schema, specifically, helps AI engines identify the author, publication date, and content type — all of which factor into source selection. Think of schema as a label that makes your content easier to process correctly, not a ranking factor in the traditional sense.
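For the llms.txt piece of the minimum viable setup, the file itself is just Markdown served at the domain root. The sketch below follows the layout in the public llms.txt proposal as I understand it (an H1 site name, a blockquote summary, then H2 sections of annotated links); the function name, section titles, and example.com URLs are placeholders, so check the current proposal before relying on the exact layout.

```python
def build_llms_txt(site_name: str, summary: str,
                   sections: dict[str, list[tuple[str, str, str]]]) -> str:
    """Render an llms.txt body from (title, url, note) link tuples."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


if __name__ == "__main__":
    # Placeholder content for illustration only.
    print(build_llms_txt(
        "Example Store",
        "Buying guides and FAQs for home office furniture.",
        {"Buying guides": [
            ("Best standing desks for small apartments",
             "https://example.com/guides/standing-desks-small-apartments",
             "Criteria and tradeoffs for compact desks"),
        ]},
    ))
```

Pointing the link lists at your buying guides, comparisons, and FAQ articles rather than at product or category pages keeps the file consistent with the content types discussed above.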

About the Author

Judy Zhou, Head of Content Strategy

Judy Zhou leads content strategy at Meev, where she oversees AI-driven content research and publishing for hundreds of brands. With a background in SEO and editorial operations, she focuses on building content systems that rank on Google, get cited by AI search engines, and drive measurable business results.
