By Judy Zhou, Head of Content Strategy

Key Takeaways

- ChatGPT outages are rare and resolve quickly — check status.openai.com in under 10 seconds, but if the status page is green, your problem is content invisibility, not downtime.

- Outbound referral traffic from ChatGPT grew 206% in 2025, but over 30% of that traffic flows to just 10 domains — concentration that makes content structure and citability more important than domain authority alone.

- AI systems retrieve content differently than Google ranks it: answers buried in paragraph 18 of a pillar page rarely get cited, while a 400-word focused post with the answer in the first 150 words gets cited regularly.

- Run three diagnostic checks when your brand disappears from AI answers: systematic prompt testing across ChatGPT, Perplexity, and AI Overviews; citation monitoring via GA4 referral traffic; and a content signal audit focused on answer placement, entity density, and recency.

Checking whether ChatGPT is down is the wrong question, and the fact that so many marketers are asking it reveals exactly why their content keeps getting ignored by AI systems. Uptime is not your problem: ChatGPT outages are rare and short. What's actually failing is your content's legibility to large language models: its authority signals, its information density, its citability. Chasing server-status pages while competitors quietly build content libraries optimized for generative engines (GEO, or generative engine optimization) is how brands lose the AI search era before they realize it started.

The phrase "is ChatGPT down" gets searched thousands of times a month. Most of those searches aren't coming from people experiencing a real outage. They're coming from marketers and publishers who notice their brand, their data, or their perspective is simply absent from AI-generated answers — and they don't have a better frame for what's happening. I want to give them one.

When ChatGPT Is Actually Down: How to Check in 30 Seconds

The fastest, most reliable method: go directly to OpenAI's status page. It shows real-time system status across ChatGPT, the API, and individual model endpoints. Green across the board means ChatGPT is not down. If there's a yellow "degraded performance" or red "outage" indicator, you've found your answer in under ten seconds.

For a second opinion, Downdetector's ChatGPT page aggregates user-reported problems and plots them on a timeline. The spike charts are useful for distinguishing a genuine widespread outage from a local issue affecting only you. If OpenAI's status page is green and Downdetector shows no spike, the problem is almost certainly on your end: your internet connection, a browser extension conflict, a VPN routing issue, or a cached session that needs to be cleared. If Downdetector does show a spike while the status page is still green, treat it as a possible outage that the status page has not yet reflected.

Common error messages and what they actually mean:

- "ChatGPT is at capacity right now": high traffic, not a full outage. Try again in a few minutes or switch to a different browser.

- "Something went wrong": usually a session timeout. Refresh the page or log out and back in.

- "Network error": local connectivity problem in most cases. Check your connection before assuming OpenAI is at fault.

- API rate limit errors (429): you've hit your usage tier's request ceiling. Not a system outage.
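The error messages above are few enough to encode as a simple lookup, which can be useful in an internal runbook or support macro. The sketch below is illustrative only: the function name `explain_chatgpt_error` and the substring-matching approach are assumptions made for this example, not anything OpenAI provides, and real error text may be worded differently.

```python
# Hypothetical helper: maps the error strings listed above to their likely cause.
# Matching on lowercase substrings is an assumption; actual wording may vary.
ERROR_HINTS = [
    ("at capacity", "High traffic, not a full outage. Retry in a few minutes."),
    ("something went wrong", "Usually a session timeout. Refresh or log in again."),
    ("network error", "Likely a local connectivity problem. Check your connection."),
    ("429", "API rate limit reached for your usage tier. Not a system outage."),
]


def explain_chatgpt_error(message: str) -> str:
    """Return a likely explanation for an error message, or a fallback."""
    lowered = message.lower()
    for needle, hint in ERROR_HINTS:
        if needle in lowered:
            return hint
    return "Unrecognized error. Check the OpenAI status page first."
```

Anything the lookup does not recognize falls through to the same first step recommended above: check the status page.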

That's the literal answer to "is ChatGPT down." Bookmark the status page, check it first, and move on. Because if the status page is green and your brand still isn't showing up in ChatGPT answers, you have a different problem entirely.

The More Likely Problem: ChatGPT Can't Find Your Content

Most "ChatGPT not working" complaints I hear from content teams aren't about uptime at all. They're about invisibility. The model is running fine. It's just not citing them.

This distinction matters enormously. An outage is temporary and requires no action on your part. Content invisibility is structural and compounds over time. Every week your content fails to get cited is a week a competitor's content is filling that space instead.

Outbound referral traffic from ChatGPT grew 206% in 2025, and over 30% of that referral traffic flows to just 10 domains. Read that again. The traffic is real and growing fast — it's just extremely concentrated. If you're not in that top tier of cited sources, you're not getting a proportional share of a growing pie. You're getting close to zero.

The mechanics behind this are worth understanding. ChatGPT doesn't crawl the web in real time for most queries. It draws from its training data (which has a knowledge cutoff) and, when the search feature is enabled, from live web retrieval. According to Semrush's clickstream analysis, ChatGPT enables its search feature on roughly 34.5% of queries as of early 2026. For the other 65.5%, it's working entirely from what it already knows. If your content wasn't prominent enough to be absorbed into the model's training corpus, or if your site's structure makes it hard for retrieval systems to parse, you're invisible in both modes.

I've watched content teams spend months on outreach campaigns and link-building programs, operating on the assumption that domain authority would translate directly into AI citation outcomes. In my experience running content strategy across multiple brands, that assumption is wrong. Format and recency move the needle. Authority signals help at the margins, but they don't compensate for content that retrieval systems can't parse cleanly.

ChatGPT Citation Patterns: What the Evidence Shows

The data on ChatGPT citation behavior is uneven — partly because the system behaves differently across contexts, and partly because most published research conflates the ChatGPT UI with the API, which I've found are genuinely different products from a citation standpoint.

In the UI, community-validated content shows up constantly. I'd estimate Reddit appears in roughly half of ChatGPT UI responses for any topic with active community discussion. Pull the same query through the API and Reddit nearly disappears. That divergence suggests the UI is being tuned for engagement signals, not editorial quality. It also has real implications for how we think about "optimizing for ChatGPT citations": that isn't even a coherent goal until you specify which version of ChatGPT you mean.

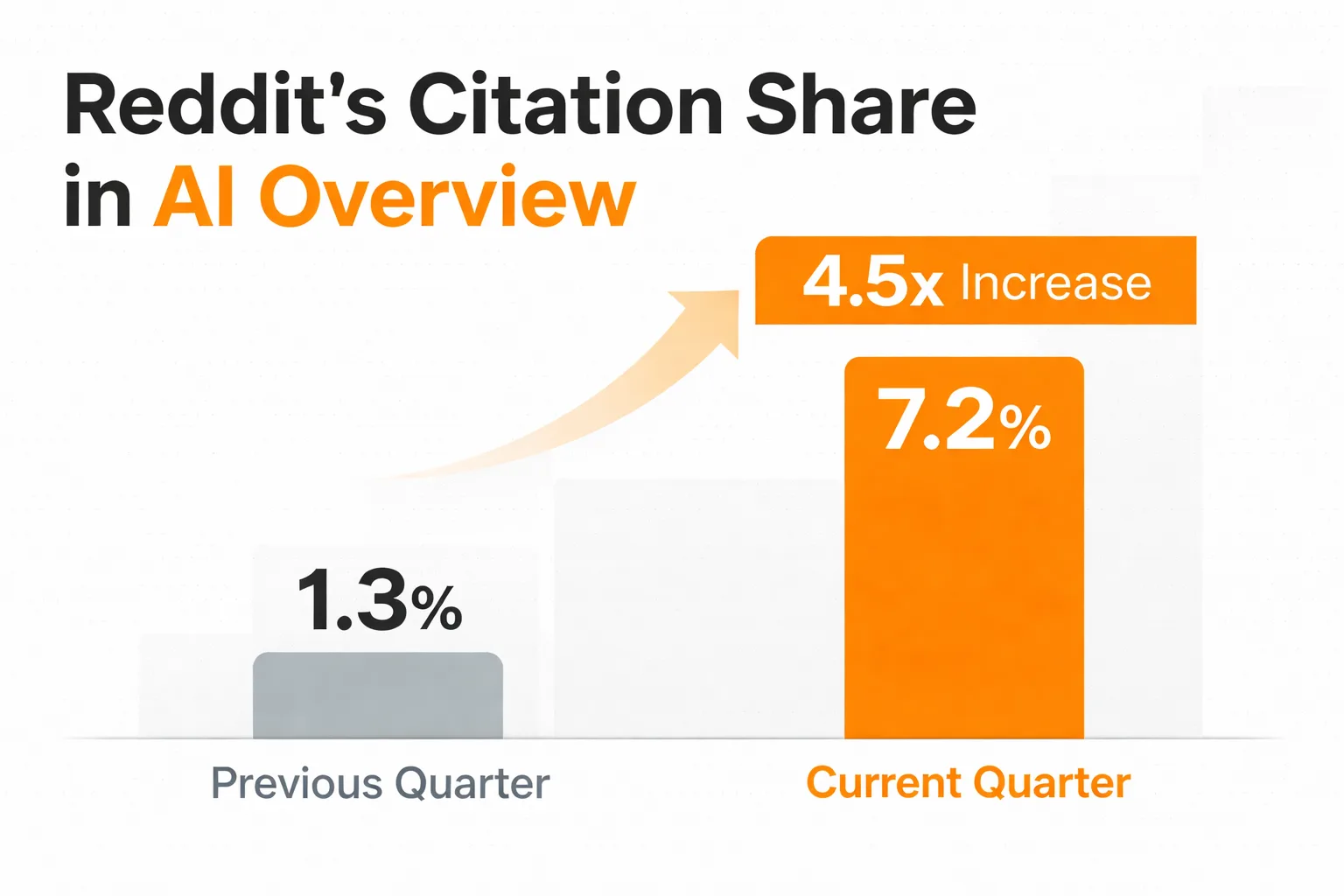

Reddit's AI Overview citation share went from 1.3% to 7.2% in a single quarter. That's roughly a 5.5x jump (7.2 / 1.3), driven by content characteristics rather than relationship building or domain authority. The structural features of Reddit content (specific answers to specific questions, community validation signals, dense entity references, direct language) match what retrieval systems are scanning for. Your brand article, however polished, often doesn't.

The pattern I keep seeing across content audits: content that gets cited shares a few structural features regardless of domain. It answers one question completely and specifically, rather than covering a topic broadly. It uses named entities (people, tools, companies, studies) rather than generic category language. It contains specific numbers that can be extracted and quoted. And it's structured so the answer appears in the first 100-150 words, not buried in paragraph seven after three paragraphs of context-setting.

Google's E-E-A-T guidelines have always pushed in this direction, but the AI citation layer makes the stakes higher. A page that ranks #3 on Google still gets clicks. A page that doesn't get cited by an AI engine gets nothing — no click, no mention, no brand signal. The visibility gap between cited and uncited content is more binary in AI search than it ever was in traditional search.

One more thing the evidence shows: recency matters more than most content leads realize. Posts published within 90 days are showing up in AI Overviews within 2-6 weeks. That's a faster feedback loop than most editorial calendars are built to support. Evergreen-only content strategies are leaving citation opportunities on the table.

For a deeper look at how different AI search tools evaluate and surface content, the best AI visibility tools comparison for 2026 is worth reading alongside this.

How Does AI Search Visibility Differ From Traditional SEO?

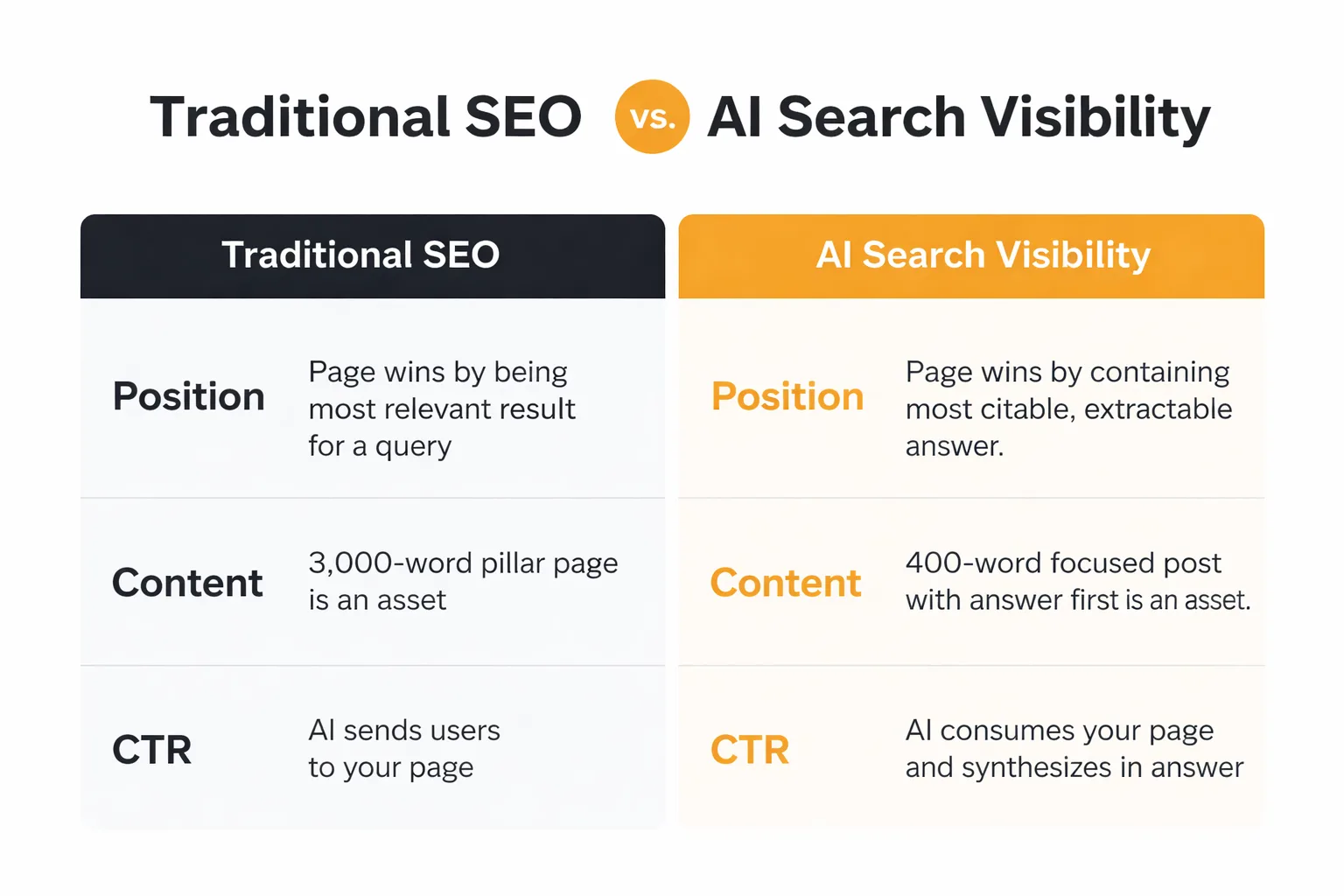

Traditional SEO rewards a page for ranking. AI search rewards a page for being extractable. Those are related but not identical goals, and conflating them is how content teams end up with pages that rank on page one of Google but never appear in a single AI-generated answer.

In traditional SEO, a page wins by being the most relevant result for a query. In AI search, a page wins by containing the most citable, extractable answer to a question the AI is trying to answer. The AI isn't sending users to your page — it's consuming your page and synthesizing an answer. That changes what "winning" looks like entirely.

The structural implications are significant. A 3,000-word pillar page that covers a topic comprehensively is a traditional SEO asset. But if the specific answer to a specific question sits in paragraph 18 of that page, surrounded by context and caveats, an AI retrieval system may never surface it. The same information in a 400-word focused post, with the answer in the first paragraph, is far more likely to get cited.

This is why I've started thinking about content architecture differently. Instead of asking "does this page cover the topic thoroughly," I ask "does this page answer one question so completely that an AI could extract a citable paragraph from it in under three seconds." Those are different design constraints.

For teams comparing tools to track this, Meev vs. Profound covers how AI search visibility platforms differ in how they measure citation performance — which is relevant if you're trying to build a monitoring workflow rather than just guessing.

Why Do Some Brands Disappear From AI Answers?

Disappearing from AI answers is almost never a single-cause problem. In my work overseeing content strategy across multiple brands, I've seen four root causes show up repeatedly, and they usually appear in combination.

First: training data recency gaps. If your best content was published more than 18 months ago and hasn't been updated, it may be underrepresented in the model's current training data. The fix isn't to delete and rewrite — it's to publish fresh, specific content that covers the same territory with current data and named sources.

Second: structural illegibility. Content written for human readers who will scroll, skim, and interpret context doesn't always parse cleanly for retrieval systems. Dense paragraphs, buried answers, heavy use of pronouns without clear antecedents, and vague category language all reduce extractability. According to Moz's analysis of AI tools and SEO, AI-generated content at scale also creates E-E-A-T risks when it lacks first-person experience signals and specific attribution — which is a structural problem, not a volume problem.

Third: the mention-citation gap. Your brand might be mentioned in content that gets cited, without being the cited source itself. That's a visibility problem with a specific fix: you need to be the primary source for claims in your area of expertise, not a secondary reference someone else's article mentions in passing.

Fourth: the wrong format for the query type. Perplexity optimizes for recency and community consensus. ChatGPT UI optimizes for engagement. Neither is optimizing for what we'd recognize as authoritative long-form content. Matching your content format to the specific AI system you're targeting matters more than most teams realize. For a practical comparison of how different platforms handle this, Meev vs. Peec AI walks through how visibility tracking and citation outreach differ across tools.


3 Checks to Run When Your Brand Disappears From AI Answers

When a brand's AI search visibility drops — or never existed in the first place — I run the same diagnostic sequence every time. These three checks surface the actual problem faster than any audit framework I've tried.

Check 1: Systematic prompt testing across multiple AI systems.

Don't just ask "what is [your brand]" — that's a brand query with a different retrieval logic than category queries. Run at least three query types: branded ("what does [your brand] do"), category ("best tools for [your category]"), and comparative ("[your brand] vs [competitor]" or "alternatives to [competitor]"). Run each query in ChatGPT UI, Perplexity, and Google AI Overviews. Document which queries surface your content, which surface competitors, and which return no relevant citations. This gives you a citation gap map — the specific query types and AI systems where you're invisible.

The reason to test all three systems separately: they pull from different source pools and reward different content characteristics. A brand that appears consistently in Perplexity but never in ChatGPT UI has a different problem than a brand that's absent from all three. For teams building out this kind of monitoring workflow, Meev vs. Sight AI covers how quality-gated content publishing and citation outreach tools compare — relevant if you're moving from diagnosis to systematic fix.
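The three query types and three AI systems described above form a nine-cell grid, and writing it out as data makes the citation gap map easier to keep up to date. The following is a minimal sketch, not a tool integration: the brand, category, and competitor values are placeholders, the function names are hypothetical, and the `result` field is meant to be filled in by hand after running each prompt.

```python
from itertools import product

# Query templates taken from the three query types described above.
QUERY_TEMPLATES = {
    "branded": "what does {brand} do",
    "category": "best tools for {category}",
    "comparative": "{brand} vs {competitor}",
}
SYSTEMS = ["ChatGPT UI", "Perplexity", "Google AI Overviews"]


def build_test_matrix(brand, category, competitor):
    """Return one row per (query type, AI system) pair, to be filled in manually."""
    rows = []
    for (qtype, template), system in product(QUERY_TEMPLATES.items(), SYSTEMS):
        rows.append({
            "query_type": qtype,
            # str.format ignores keyword arguments a template does not use.
            "query": template.format(
                brand=brand, category=category, competitor=competitor
            ),
            "system": system,
            "result": None,  # record "cited", "competitor", or "none" after testing
        })
    return rows


def citation_gaps(rows):
    """Return (query_type, system) pairs that were tested and did not cite you."""
    return [
        (r["query_type"], r["system"])
        for r in rows
        if r["result"] in ("competitor", "none")
    ]
```

Calling `build_test_matrix("ExampleBrand", "email marketing", "ExampleRival")` yields nine rows. Once the `result` fields are recorded, `citation_gaps` lists the query type and system combinations where the brand is invisible.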

Check 2: Citation monitoring setup.

Once you know where you're absent, set up ongoing monitoring so you catch changes quickly. Google Alerts for your brand name plus your primary topic area catches mentions in content that may later get cited. More directly, check your GA4 referral traffic for chatgpt.com and perplexity.ai as sources — Semrush's analysis found that ChatGPT referral traffic grew 206% in 2025, so if your referral numbers from these sources are flat or zero, that's a clear signal you're not being cited at scale. One important nuance from that same research: AI search visitors have a 4.1% higher bounce rate than traditional Google search visitors and view fewer pages per session. Don't optimize purely for citation volume — the quality of the query context matters too.
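One way to make the referral check repeatable is to export monthly traffic by source and total only the AI referrers. The sketch below assumes a hypothetical export where each row has `month`, `source`, and `sessions` fields; those column names and both function names are assumptions for illustration, not the GA4 export schema.

```python
# Referral sources named in the text above.
AI_SOURCES = {"chatgpt.com", "perplexity.ai"}


def ai_referral_sessions(rows):
    """Sum sessions per month for AI referral sources only."""
    totals = {}
    for row in rows:
        if row["source"].strip().lower() in AI_SOURCES:
            month = row["month"]
            totals[month] = totals.get(month, 0) + int(row["sessions"])
    # Sort by month key so the first and last entries are chronological
    # (assumes months are formatted like "2025-01").
    return dict(sorted(totals.items()))


def looks_flat_or_zero(totals):
    """True if AI referral sessions are absent or did not grow from first to last month."""
    values = list(totals.values())
    if not values or sum(values) == 0:
        return True
    return len(values) > 1 and values[-1] <= values[0]
```

A `True` result from `looks_flat_or_zero` is only a prompt to investigate further. It does not distinguish between not being cited and being cited for queries that generate few clicks.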

Check 3: Content signal audit on your highest-priority pages.

For the specific pages you want cited, run through this checklist. Does the primary answer appear in the first 150 words? Does the page contain at least three named entities (specific tools, studies, people, companies) rather than generic category terms? Does it include at least one specific, extractable number with a named source? Was it published or meaningfully updated within the last 90 days? Is the page's topic narrow enough that an AI could extract a single citable paragraph, rather than broad enough that it covers a whole topic area?

If you're failing more than two of those criteria on a priority page, that's where to start. The fix is usually a targeted rewrite of the opening 200 words plus a structural edit to surface the specific answer earlier — not a full page rebuild. For teams thinking about content operations at scale, AI content creation in 2026 covers the workflow considerations that affect citability, including the editorial review layer that separates content that compounds in visibility from content that stays invisible.
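The checklist and the "more than two failures" rule can be roughed out in code to triage a batch of pages before a human review. This is a simplified sketch under stated assumptions: `audit_page` and `needs_rewrite` are hypothetical names, the answer check is a plain substring match, the number check only looks for a digit (whether it has a named source still needs a manual look), and topic narrowness is passed in as a human judgment.

```python
import re
from datetime import date


def audit_page(text, answer_phrase, named_entities, last_updated,
               *, is_narrow_topic, today=None):
    """Run the five checklist items and return a dict of pass/fail booleans."""
    today = today or date.today()
    # Normalize whitespace and keep only the first 150 words.
    first_150 = " ".join(text.split()[:150]).lower()
    lowered = text.lower()
    entities_found = {e for e in named_entities if e.lower() in lowered}
    return {
        "answer_in_first_150_words": answer_phrase.lower() in first_150,
        "three_named_entities": len(entities_found) >= 3,
        # Crude proxy: any digit counts; source attribution is checked by hand.
        "has_specific_number": bool(re.search(r"\d", text)),
        "updated_within_90_days": (today - last_updated).days <= 90,
        "narrow_topic": bool(is_narrow_topic),  # manual editorial judgment
    }


def needs_rewrite(checks):
    """Apply the rule above: more than two failed criteria means start here."""
    failures = sum(1 for passed in checks.values() if not passed)
    return failures > 2
```

Pages flagged by `needs_rewrite` are candidates for the targeted rewrite of the opening described above. The automated checks are coarse and should be confirmed by an editor.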

One thing I've learned the hard way: don't try to fix everything at once. The teams I've seen make the fastest progress pick one AI system, one query type, and three to five priority pages. They optimize those specifically, measure citation changes over 60-90 days, and then expand. Trying to boil the ocean on AI search visibility is how you end up with a lot of activity and no measurable change.

The underlying point I keep coming back to is this: "is ChatGPT down" is a question about infrastructure. "Why isn't my brand showing up in AI answers" is a question about content strategy. They feel similar when you're frustrated and your brand is absent from AI-generated responses. But they have completely different answers — and only one of them is something you can actually fix.

Frequently Asked Questions

How long does a typical ChatGPT outage last?

Most ChatGPT outages resolve within 30 minutes to a few hours. Major incidents, such as those that affect API access and the UI simultaneously, have historically lasted under 24 hours. OpenAI posts incident updates on its status page with timestamps, so you can track resolution progress in real time rather than repeatedly testing the interface.

Does updating my content actually improve AI citation rates?

Yes, but the type of update matters. Adding a current statistic with a named source, restructuring the opening to answer the primary question in the first 150 words, or adding specific named entities to a previously generic page all improve extractability. Simply refreshing the publication date without changing the content structure doesn't move the needle in my experience.

Why does my content rank on Google but not appear in ChatGPT answers?

Ranking and citation use different signals. Google rewards relevance and authority for a query. AI systems reward extractability: whether a specific, citable answer can be pulled from your content cleanly. A broad pillar page can rank #1 on Google while being nearly invisible to AI retrieval if the specific answer to a specific question is buried in the middle of the page.

How is Perplexity's source selection different from ChatGPT's?

Perplexity optimizes heavily for recency and community consensus, which is why Reddit and recent news sources show up frequently. ChatGPT UI appears to weight engagement signals more heavily. For most brands, this means Perplexity is actually easier to get cited in for recent, specific content, while ChatGPT UI favors content with strong community validation signals. Testing both separately is worth the 20 minutes it takes.

What's the fastest way to improve LLM content visibility for a new page?

Focus on three things: answer placement (put the direct answer in the first 100 to 150 words), entity density (name specific tools, studies, and sources rather than using category language), and recency (AI systems with live search enabled favor content published within the last 90 days). A page that does all three well will outperform a longer, more authoritative page that buries its answer and uses generic language.

Should I use different content formats for different AI systems?

Yes, and this is an underappreciated point. Perplexity pulls heavily from recent, community-validated content, so short, specific, direct-answer formats work well. ChatGPT's training data favors content that was widely linked and referenced before its knowledge cutoff. Google AI Overviews pulls from pages already ranking in the top 10 for a query. Optimizing for one system doesn't automatically optimize for the others, which is why the prompt-testing diagnostic across all three systems is the right starting point.

About the Author

Judy Zhou, Head of Content Strategy

Judy Zhou leads content strategy at Meev, where she oversees AI-driven content research and publishing for hundreds of brands. With a background in SEO and editorial operations, she focuses on building content systems that rank on Google, get cited by AI search engines, and drive measurable business results.

Stop guessing why your brand is invisible to AI search engines. Meev tracks your citation patterns across ChatGPT, Perplexity, and Google AI Overviews — and shows you exactly what to fix.