AI Rewriter Tools Compared: Which One Preserves Your Voice?

By Judy Zhou, Head of Content Strategy

Key Takeaways

- Fewer than 10% of AI-rewritten blog pages sustain top-10 rankings beyond 60 days without a human differentiation layer added post-generation, per an audit of 1,000+ pages.

- AI content scores roughly 50% on E-E-A-T signals versus 89% for human-written content — making the human review layer non-negotiable, not optional.

- The biggest voice-preservation gap isn't the tool you choose — it's whether you include a style guide excerpt and real brand examples in your rewrite prompt.

- Use a four-variable triage (ranking position, intent match, content age, E-E-A-T density) before any rewrite to avoid accidentally disrupting content that's already working.

Every content team eventually reaches for an AI rewriter, and almost every one of them discovers the same ugly truth about three weeks in. The output is fluent. It's clean. It reads well. And it sounds absolutely nothing like you.

I run content strategy at Meev, where we oversee AI-driven publishing for hundreds of brands. Voice preservation isn't a nice-to-have for us — it's the whole game. A brand that publishes 40 articles a month through an AI rewriter and loses its editorial identity by article 12 hasn't scaled content production. It's scaled genericness. And Google's algorithm, increasingly, knows the difference.

The uncomfortable truth: most AI rewriting tools are optimized for fluency, not fidelity. They'll make your sentences cleaner. They won't make them yours.

Key takeaways before we get into the tools:

- Fewer than 10% of AI-rewritten blog pages sustain top-10 rankings beyond 60 days, according to an audit of 1,000+ pages cited by JustWords.

- AI content scores roughly 50% on E-E-A-T signals versus 89% for human-written content, per NeuraPlusAI's 2026 comparison.

- The fix isn't a better tool. It's a better workflow that treats the AI draft as a skeleton, not a finished product.

- Voice preservation requires explicit prompting, not just better models.

Why Most AI Rewriters Destroy Brand Voice (And How to Spot It)

Large language models are trained to produce text that reads smoothly and scores well on fluency benchmarks. They are not trained to sound like you. This distinction matters enormously in practice — and it's why you can hand the same paragraph to five different AI rewriters and get five outputs that all feel vaguely like the same anonymous content mill.

Here's a concrete example. Take this original sentence from a B2B SaaS brand with a deliberately blunt, no-hedging editorial voice: "Most onboarding flows fail because product teams build for the user they wish they had, not the one they actually got." Run that through a standard AI rewriter and you'll typically get something like: "Onboarding flows often fall short because product teams design for an idealized user rather than addressing the needs of their actual customer base." The rewrite is grammatically correct. It's also completely defanged — the specificity, the edge, the rhythm that made the original memorable are gone. What the model did was sand down the friction. And friction, in editorial voice, is often the point.

The pattern I keep seeing is that LLMs default to what I'd call "neutral professional" — a register that's inoffensive, moderately formal, and completely interchangeable with every other piece of neutral professional content on the internet. It's the editorial equivalent of beige. Brands that have spent years developing a recognizable voice — sardonic, direct, warm, technical — watch it evaporate in a single rewrite pass.

How do you spot the damage before it ships? Three signals:

1. Sentence length homogenization: your brand uses short, punchy sentences for emphasis, and the rewrite irons them all to the same medium length.

2. Hedge insertion: words like "often," "typically," and "may" appear in the output even when your style is declarative and certain.

3. Personality word removal: specific verbs, brand-specific terminology, and idiosyncratic phrasing get swapped for generic equivalents.

If you're seeing these in your rewritten output, the tool isn't preserving your voice. It's replacing it.
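If you want a rough automated first pass at those three checks, here is a minimal Python sketch. It is a heuristic, not a voice model: it assumes a naive punctuation-based sentence splitter, an illustrative hedge-word list, and a brand-term list you supply yourself. `drift_signals` and its helpers are hypothetical names, not part of any rewriter's API.

```python
import re
import statistics

# Illustrative hedge list; extend it with the qualifiers your style guide bans.
HEDGE_WORDS = {"may", "might", "could", "often", "typically", "generally", "perhaps"}


def split_sentences(text: str) -> list[str]:
    """Naive splitter: breaks after ., ! or ? followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s.strip()]


def sentence_lengths(text: str) -> list[int]:
    """Word count of each sentence."""
    return [len(s.split()) for s in split_sentences(text)]


def count_hedges(text: str) -> int:
    """Number of hedge words in the text (case-insensitive)."""
    words = re.findall(r"[a-z']+", text.lower())
    return sum(1 for w in words if w in HEDGE_WORDS)


def drift_signals(original: str, rewrite: str, brand_terms: list[str]) -> dict:
    """Compare an original passage with its rewrite on the three voice-drift signals."""
    orig_lengths = sentence_lengths(original)
    new_lengths = sentence_lengths(rewrite)
    # 1. Homogenization: the spread (population std dev) of sentence length shrinks.
    orig_spread = statistics.pstdev(orig_lengths) if orig_lengths else 0.0
    new_spread = statistics.pstdev(new_lengths) if new_lengths else 0.0
    # 2. Hedge insertion: more hedge words in the rewrite than in the original.
    hedges_added = count_hedges(rewrite) - count_hedges(original)
    # 3. Personality word removal: brand terms present before but missing after.
    dropped = [t for t in brand_terms
               if t.lower() in original.lower() and t.lower() not in rewrite.lower()]
    return {
        "length_spread_before": round(orig_spread, 1),
        "length_spread_after": round(new_spread, 1),
        "hedges_added": hedges_added,
        "brand_terms_dropped": dropped,
    }
```

Run against the onboarding example from earlier with `brand_terms=["onboarding flows", "actually got"]`, it reports one added hedge ("often") and flags "actually got" as dropped. Over a longer passage, a `length_spread_after` well below `length_spread_before` is the homogenization signal.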

The 5 AI Rewriter Tools Content Teams Actually Use

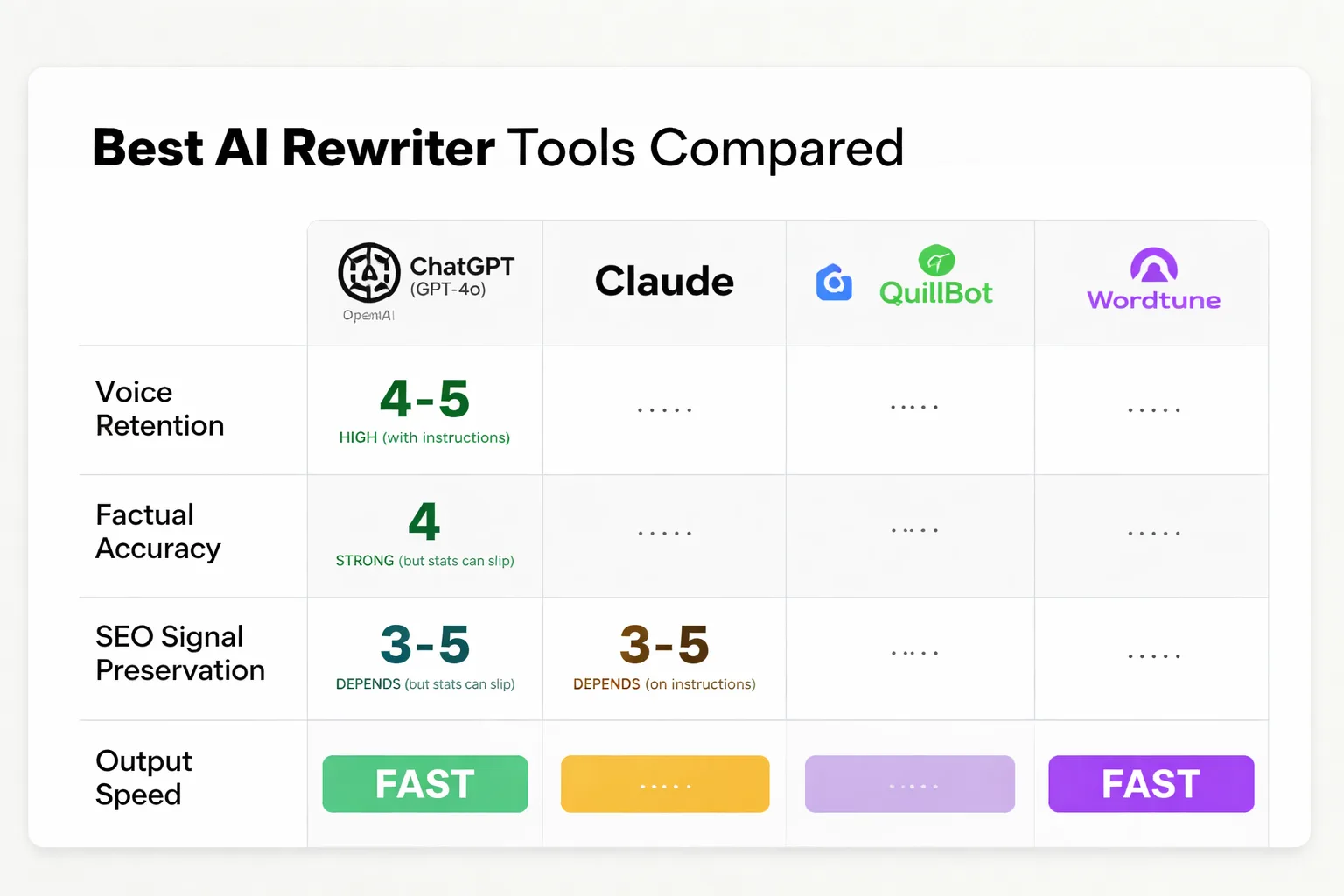

I've tested these across real content workflows — not toy examples. Here's my honest assessment across four criteria: voice retention, factual accuracy, SEO signal preservation, and speed.

ChatGPT (GPT-4o) is the most capable general rewriter if you know how to prompt it. Voice retention is high when you provide explicit style instructions; without them, it defaults to neutral professional like everyone else. Factual accuracy is strong for well-established claims, but it will confidently paraphrase a statistic into a slightly different (wrong) number. SEO signal preservation depends entirely on whether you tell it to keep heading structure and keyword placement. It's fast. The ceiling here is genuinely high; the floor is low if you're running it with generic prompts.

Claude (Anthropic) is my current preference for voice-sensitive rewrites. It's more likely to ask clarifying questions about tone when given ambiguous instructions, and it handles nuanced style guides better than GPT-4o in my testing, particularly for brands with unconventional punctuation habits or non-standard sentence structures. Prompt length matters here: longer, richer system prompts produce noticeably better voice fidelity. Factual accuracy is comparable to GPT-4o. It's slightly slower on large batches.

Gemini (Google) has improved significantly in 2025 but still lags on voice retention for distinctive editorial styles. Where it genuinely shines is SEO signal preservation — unsurprisingly, given the source. It tends to maintain keyword density and heading hierarchy better than the others out of the box. For commodity rewrites where voice isn't the priority, it's a solid choice.

QuillBot is the tool most content teams reach for first because it's cheap and fast. It's also the worst performer on voice retention of the five. QuillBot optimizes for paraphrase diversity — it will give you multiple "modes" of rewriting — but none of those modes are your mode. For avoiding plagiarism in academic or research contexts, it works. For brand content? I'd avoid it.

Wordtune sits between QuillBot and the LLM-based tools. It offers "tone" controls (formal, casual, etc.) that help, but the categories are too coarse for most brand voices. A brand that's "professional but irreverent" doesn't fit into either bucket. Factual accuracy is the weakest of the five — it will occasionally rewrite a specific claim into something plausible-sounding but inaccurate. Always verify outputs.

| Tool | Voice Retention | Factual Accuracy | SEO Signal Preservation | Speed |
|---|---|---|---|---|
| ChatGPT (GPT-4o) | High (with prompting) | Good | Medium | Fast |
| Claude | High | Good | Medium | Medium |
| Gemini | Medium | Good | High | Fast |
| QuillBot | Low | Medium | Low | Very Fast |
| Wordtune | Medium | Low | Low | Fast |

The honest takeaway: the gap between tools is smaller than the gap between prompting strategies. A well-prompted ChatGPT will outperform a poorly-prompted Claude every time.


How to Prompt Any AI Rewriter to Keep Your Style

This is where most teams leave money on the table. They open the tool, paste the content, hit "rewrite," and wonder why it sounds generic. The model had no idea what "your voice" meant. You didn't tell it.

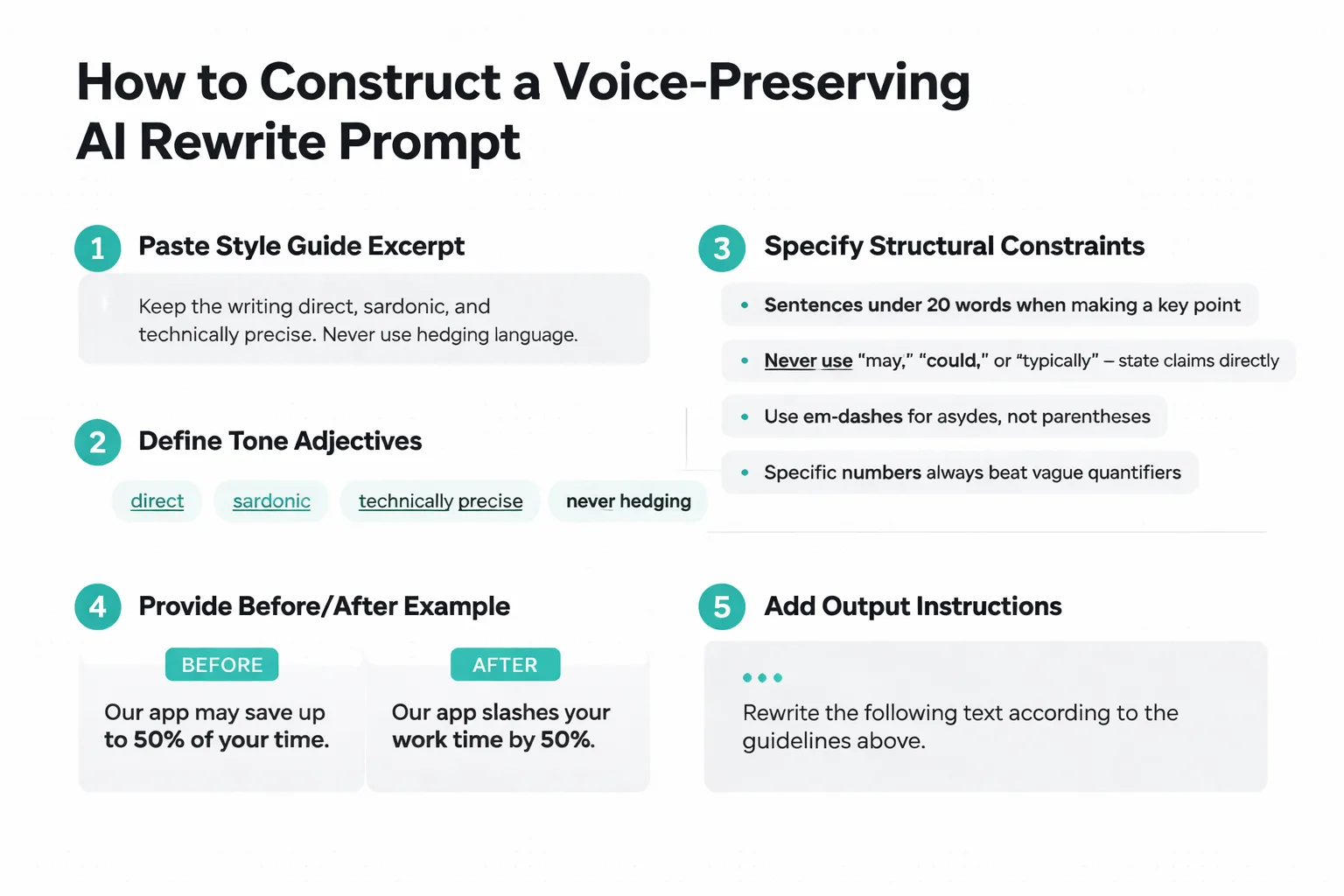

Here's the prompt structure I use for voice-sensitive rewrites:

You are rewriting content for [Brand Name]. Their editorial voice is [3-5 specific adjectives — e.g., "direct, sardonic, technically precise, never hedging"]. Style rules:

- Sentences under 20 words when making a key point

- Never use "may," "could," or "typically" — state claims directly

- Use em-dashes for asides, not parentheses

- Specific numbers always beat vague quantifiers

Here is an example of their voice at its best:

[PASTE 100-150 WORDS OF YOUR BEST EXISTING CONTENT]

Now rewrite the following, preserving the voice above:

[PASTE CONTENT TO REWRITE]

The example block is the most important part. Telling the model your voice is "sardonic" is abstract. Showing it 150 words of actual sardonic writing is concrete. The model pattern-matches against the example far more reliably than it interprets adjectives.

When I've added a style guide excerpt to prompts versus running without one, the difference in output quality is immediately visible — hedge words drop out, sentence rhythm tightens, and brand-specific terminology survives the rewrite. It's not a marginal improvement. It's the difference between content you'd publish and content you'd send back for revision.

For teams running high volume, build this into a template. Store your style block as a reusable prompt prefix. Every rewrite starts from the same foundation. This is how you get consistency at scale — not by finding a better tool, but by systematizing the instructions you give the tool you already have.
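Here is one way to store that prefix, sketched in Python. Everything in it is an assumption for illustration: the `StyleBlock` structure, the `PROMPT_LIBRARY` dictionary, the placeholder brand "Acme", and `build_rewrite_prompt` are hypothetical, and the output is just prompt text that you would then paste or send to whichever rewriter you use.

```python
from dataclasses import dataclass


@dataclass
class StyleBlock:
    """Reusable voice definition for one brand (all values below are placeholders)."""
    brand: str
    adjectives: list[str]
    rules: list[str]
    example: str  # 100-150 words of the brand's best existing content


def build_rewrite_prompt(style: StyleBlock, content: str) -> str:
    """Prefix every rewrite request with the same style block, then the content."""
    rules = "\n".join(f"- {rule}" for rule in style.rules)
    return (
        f"You are rewriting content for {style.brand}. "
        f"Their editorial voice is {', '.join(style.adjectives)}. Style rules:\n"
        f"{rules}\n\n"
        f"Here is an example of their voice at its best:\n\n{style.example}\n\n"
        f"Now rewrite the following, preserving the voice above:\n\n{content}"
    )


# Hypothetical shared library: one entry per brand or content type.
PROMPT_LIBRARY = {
    "acme": StyleBlock(
        brand="Acme",
        adjectives=["direct", "sardonic", "technically precise", "never hedging"],
        rules=[
            "Sentences under 20 words when making a key point",
            'Never use "may," "could," or "typically"; state claims directly',
            "Specific numbers always beat vague quantifiers",
        ],
        example="[PASTE 100-150 WORDS OF YOUR BEST EXISTING CONTENT]",
    ),
}

prompt = build_rewrite_prompt(PROMPT_LIBRARY["acme"], "[PASTE CONTENT TO REWRITE]")
print(prompt)
```

Because every writer calls the same function with the same library entry, two rewrites for the same brand start from an identical instruction block.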

One more thing: always specify what not to change. "Preserve all statistics, named examples, and direct quotes exactly as written" is a sentence that will save you hours of fact-checking. Models will paraphrase numbers if you don't explicitly tell them not to.
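Even with that instruction in the prompt, it's worth verifying mechanically. A rough sketch, assuming statistics appear as digits (percentages, decimals, comma thousands separators); spelled-out numbers such as "ten" slip past this regex, and `missing_numbers` is a hypothetical helper name.

```python
import re
from collections import Counter

# Matches numbers like 10, 1,000, 4.5 and 89% (a trailing % is kept).
NUMBER_PATTERN = re.compile(r"\d+(?:,\d{3})*(?:\.\d+)?%?")


def missing_numbers(source: str, rewrite: str) -> list[str]:
    """Return numeric tokens found in the source but missing (or rarer) in the rewrite."""
    before = Counter(NUMBER_PATTERN.findall(source))
    after = Counter(NUMBER_PATTERN.findall(rewrite))
    # Counter subtraction keeps only positive counts: numbers lost or altered.
    return sorted((before - after).elements())
```

For example, if the source says "roughly 50% ... versus 89%" and the rewrite says "about half ... versus 89%", the function returns `["50%"]`, which tells the fact-checker exactly where to look.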

When to Rewrite vs. When to Rewrite From Scratch

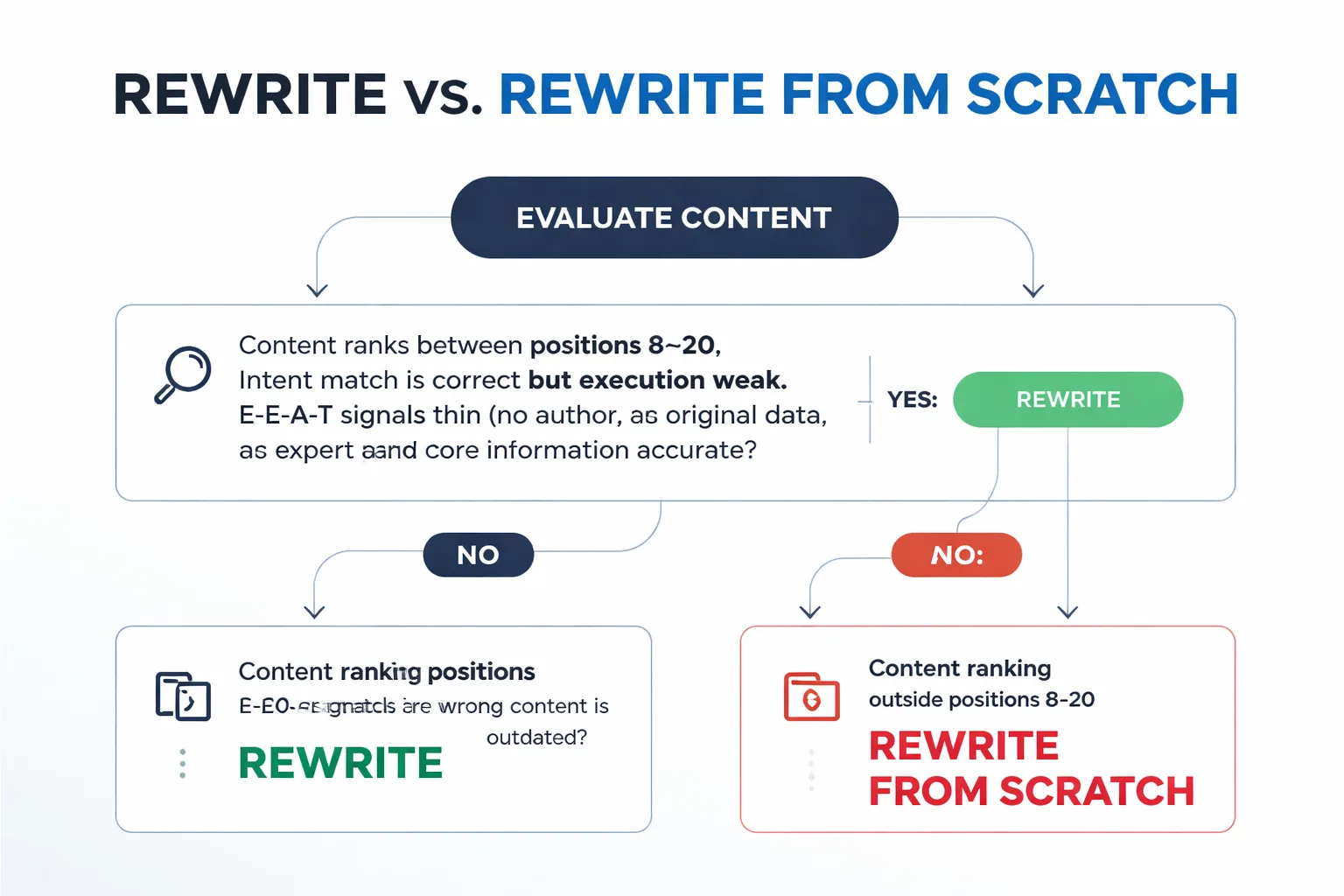

This is a question I get constantly, and the answer depends on four variables — content age, current ranking position, intent match, and E-E-A-T signal density. Get these wrong and you'll spend time rewriting content that should either be left alone or rebuilt entirely.

Content age alone is not a signal to rewrite. A three-year-old article ranking in position 4 with strong E-E-A-T signals is not a rewrite candidate — it's a monitor-and-update candidate. Touch it too aggressively and you risk disrupting whatever signals are keeping it there.

Here's the decision framework I use:

Rewrite when: The content ranks between positions 8–20, the intent match is correct but the execution is weak, the E-E-A-T signals are thin (no author, no original data, no expert perspective), and the core information is still accurate. This is the sweet spot for AI rewriting — the structure and topic are right, the execution needs lifting.

Rewrite from scratch when: The content is ranking outside position 30, the search intent has shifted since publication, or the factual foundation is outdated enough that preserving it would require more correction than creation. Rewriting bad content with an AI tool produces polished bad content. Start over.

Leave it alone when: It's ranking in positions 1–7 and traffic is stable or growing. The risk of disruption outweighs any potential gain. I've seen teams rewrite top-5 content "to make it better" and watch rankings drop within weeks. If it's working, the algorithm likes something about it — don't guess what that something is and accidentally remove it.
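Here's that framework as a first-pass triage function. It's a sketch, not a ranking model: the `PageSnapshot` fields are hypothetical inputs you'd fill from Search Console data and an editor's judgment, the "weak execution" condition is left to the editor, and the rules above don't cover every combination (positions 21 to 30, say, or a top-7 page with falling traffic), so those land in a manual-review bucket instead of a guessed answer. The sketch checks the leave rule first, as one reading of "if it's working, don't touch it," and treats "outdated facts" as a simple yes/no.

```python
from dataclasses import dataclass


@dataclass
class PageSnapshot:
    position: int                    # current average ranking position
    intent_matches: bool             # does the page still match today's search intent?
    traffic_stable_or_growing: bool
    eeat_thin: bool                  # no author, no original data, no expert perspective
    facts_current: bool              # is the core information still accurate?


def triage(page: PageSnapshot) -> str:
    """First-pass bucket: 'leave', 'rewrite', 'rebuild', or 'manual review'."""
    # Leave it alone: positions 1-7 with stable or growing traffic.
    if page.position <= 7 and page.traffic_stable_or_growing:
        return "leave"
    # Rebuild: outside position 30, intent has shifted, or the facts are outdated.
    if page.position > 30 or not page.intent_matches or not page.facts_current:
        return "rebuild"
    # Rewrite: positions 8-20, intent correct, E-E-A-T thin, facts still accurate.
    if 8 <= page.position <= 20 and page.eeat_thin:
        return "rewrite"
    # Anything else (e.g. positions 21-30) isn't covered by the rules above.
    return "manual review"
```

Content age is deliberately not an input, consistent with the point that age alone isn't a rewrite signal: a three-year-old article in position 4 with stable traffic comes back as `"leave"`.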

The E-E-A-T signal check is non-negotiable before any rewrite decision. NeuraPlusAI's 2026 comparison found AI content scores around 50% on E-E-A-T versus 89% for human-written content. If you're rewriting already-thin content with an AI tool and not adding a human layer — a practitioner quote, original data, an editorial perspective — you're moving the E-E-A-T score in the wrong direction.

A rule of thumb I've landed on: if the content can't pass the "why does this specific version need to exist" test, don't rewrite it — rebuild it.

Building a Rewrite Workflow That Scales Across a Content Team

Scaling rewrites without scaling quality failures requires SOPs, not just good intentions. Here's the workflow structure I'd implement for any team running 20+ rewrites per week.

Stage 1 — Triage and decision. Before anything touches an AI tool, a content strategist runs the four-variable check above (ranking position, intent match, content age, E-E-A-T density) and assigns each piece to one of three buckets: rewrite, rebuild, or leave. This takes 5–10 minutes per URL with a good audit template. Skip this stage and you'll waste rewrite capacity on content that should be left alone.

Stage 2 — Templated AI draft. Writers pull from a shared prompt library — not writing prompts from scratch each time. The library contains pre-built style blocks for each brand or content type in your portfolio, the factual preservation instruction, and any specific structural requirements (heading format, word count range, CTA placement). Consistency here is everything. One writer using a custom prompt and another using the standard template will produce outputs that look like they came from different brands.

Stage 3 — Human E-E-A-T layer. This is the non-negotiable gate. Every AI-rewritten piece requires at least one of: a practitioner quote sourced from a real subject matter expert, a proprietary data point not available in the source content, or an editorial perspective that takes a clear position the model couldn't have generated from the original. This is the layer that separates content that holds rankings from content that falls out of the top 10 within 60 days — and per that audit of 1,000+ blog pages, fewer than 10% of AI-rewritten pages sustain top-10 rankings beyond that window without this kind of differentiation.
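A minimal sketch of the Stage 3 gate, assuming each draft carries a set of hypothetical tags recording which human additions an editor has confirmed. It only checks that at least one qualifying addition exists; whether the quote, data point, or position is actually any good remains an editorial call.

```python
# The three kinds of human differentiation Stage 3 accepts (tag names are hypothetical).
HUMAN_LAYER_TYPES = {"practitioner_quote", "proprietary_data", "editorial_position"}


def passes_human_layer_gate(confirmed_additions: set[str]) -> bool:
    """True if the editor has confirmed at least one qualifying human addition."""
    return bool(HUMAN_LAYER_TYPES & confirmed_additions)
```

A draft tagged only with, say, an AI-generated summary fails the gate, which is the point: nothing the model produced on its own counts toward it.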

Stage 4 — Quality scoring rubric. Before anything goes to final edit, it gets scored against a 10-point rubric: voice fidelity (3 points — does it sound like the brand?), factual accuracy (3 points — are all statistics verified against source?), SEO signal preservation (2 points — primary keyword in correct positions, heading structure intact?), and E-E-A-T signal presence (2 points — is there a human layer?). Anything scoring below 7 goes back for revision. This rubric sounds bureaucratic until you realize it cuts your revision cycles in half because writers know exactly what "good" looks like before submission.
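The rubric arithmetic fits in a few lines, which makes it easy to share so every reviewer applies the same weights. A sketch, assuming reviewers enter whole-number points per criterion; the criterion keys are hypothetical labels for the four categories above.

```python
# Maximum points per criterion, as described in the Stage 4 rubric (10 total).
RUBRIC_MAX = {
    "voice_fidelity": 3,
    "factual_accuracy": 3,
    "seo_signal_preservation": 2,
    "eeat_signal_presence": 2,
}
PASS_THRESHOLD = 7  # anything below this goes back for revision


def score_draft(points: dict[str, int]) -> tuple[int, str]:
    """Validate each criterion's points, total them, and return (score, decision)."""
    total = 0
    for criterion, max_points in RUBRIC_MAX.items():
        awarded = points.get(criterion, 0)  # a missing criterion scores zero
        if not 0 <= awarded <= max_points:
            raise ValueError(f"{criterion} must be between 0 and {max_points}, got {awarded}")
        total += awarded
    decision = "final edit" if total >= PASS_THRESHOLD else "back for revision"
    return total, decision
```

A draft scoring 3/2/1/1 totals 7 and moves on; drop voice fidelity to 2 and the same draft totals 6 and goes back.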

Stage 5 — SEO verification pass. A separate check — not part of the quality rubric — confirms that the primary keyword appears in the first 100 words, at least two H2 headings, and the final paragraph. Check Google Search Console structured data if the content uses schema markup. This takes 3 minutes and prevents the embarrassing situation where a beautifully rewritten piece loses its keyword targeting because the model decided to paraphrase the focus term.
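Most of the keyword part of this pass can be scripted. A minimal sketch, assuming the draft is Markdown with `## ` H2 headings and blank-line-separated paragraphs, that heading lines don't count toward the first 100 words, and that a case-insensitive substring match is good enough (it won't catch inflected variants of the keyword). `seo_checks` is a hypothetical helper; structured data validation is left to Google's own tools.

```python
import re


def seo_checks(markdown: str, keyword: str) -> dict[str, bool]:
    """Check the Stage 5 keyword placements in a Markdown draft."""
    kw = keyword.lower()
    lines = markdown.splitlines()
    h2_headings = [line for line in lines if line.startswith("## ")]
    # Body text is everything except heading lines.
    body = "\n".join(line for line in lines if not line.lstrip().startswith("#"))
    first_100_words = " ".join(body.split()[:100]).lower()
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", body) if p.strip()]
    return {
        "in_first_100_words": kw in first_100_words,
        "in_two_or_more_h2s": sum(kw in h.lower() for h in h2_headings) >= 2,
        "in_final_paragraph": bool(paragraphs) and kw in paragraphs[-1].lower(),
    }
```

Any `False` in the result points at the exact placement the rewrite lost, which is usually the model paraphrasing the focus term.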

For teams comparing full-stack AI publishing platforms against point solutions for rewriting, I'd recommend reading our breakdown of Meev vs Jasper AI — the distinction between a rewriting tool and an end-to-end publishing workflow matters more than most teams realize until they're trying to scale past 50 pieces a month.

The workflow above isn't fast to implement. But it's the difference between a content operation that compounds and one that churns. Google's March 2024 update removed 45% of low-quality, unoriginal content from rankings — and the sites that got hit weren't running more AI than the ones that survived. They were running AI without the human differentiation layer.

That's the real lesson from every rewrite failure I've studied: the tool isn't the problem. The workflow is.

Frequently Asked Questions

Does Google penalize AI-rewritten content? Google doesn't penalize AI use directly — it penalizes low-quality, unoriginal content regardless of how it was produced. The March 2024 core update removed 45% of low-quality content from rankings, and the majority of deindexed sites had AI-rewritten or mass-produced content with no original insight layered on top. The method isn't the issue. The output quality is.

Which AI rewriter is best for SEO content specifically? For SEO signal preservation out of the box, Gemini performs best in my testing — it maintains keyword density and heading structure more reliably than the others without explicit instruction. For overall quality when voice matters, Claude with a detailed style prompt is my current recommendation.

How do I test whether an AI rewriter is preserving my voice? Take 10 sentences from your best-performing content and run them through the rewriter. Compare sentence length distribution, count hedge words ("may," "often," "typically"), and check whether brand-specific terminology survived. If the output fails two of those three checks, the tool or prompt needs adjustment.

Can I use AI rewriters to pass Google's helpful content assessment? Only if you add original value. The helpful content system evaluates whether content demonstrates first-hand expertise and provides genuine value beyond what's already ranking. An AI rewrite of existing content, by definition, adds no new information. The human E-E-A-T layer — original data, expert perspective, editorial position — is what makes rewritten content pass that assessment.

How many rewrites per week can one editor realistically review? With a quality scoring rubric in place, an experienced editor can review 8–12 rewritten pieces per day at the Stage 4 gate, or roughly 40–60 per week assuming a five-day review week. Without a rubric, that number drops to 4–6 per day (roughly 20–30 per week) because every piece requires judgment calls that should have been standardized. The rubric isn't overhead. It's leverage.

What's the biggest mistake teams make with AI rewriting at scale? Skipping the triage stage. Teams eager to move fast will run their entire content library through an AI rewriter without first identifying which pieces should be left alone. Rewriting content that's already ranking well is one of the most reliable ways to drop rankings I've seen — and it's entirely avoidable with a 10-minute audit step before the rewrite queue starts.

About the Author

Judy Zhou, Head of Content Strategy

Judy Zhou leads content strategy at Meev, where she oversees AI-driven content research and publishing for hundreds of brands. With a background in SEO and editorial operations, she focuses on building content systems that rank on Google, get cited by AI search engines, and drive measurable business results.

Stop rewriting content that loses your voice at scale — see how Meev builds brand-consistent AI content from the ground up.