By Judy Zhou, Head of Content Strategy

Key Takeaways

- Google AI Mode is a separate experience from AI Overviews — it replaces the full SERP with a conversational AI interface, and a Semrush study of 230,000+ prompts found its citation sidebar averages only ~7 unique domains per response.

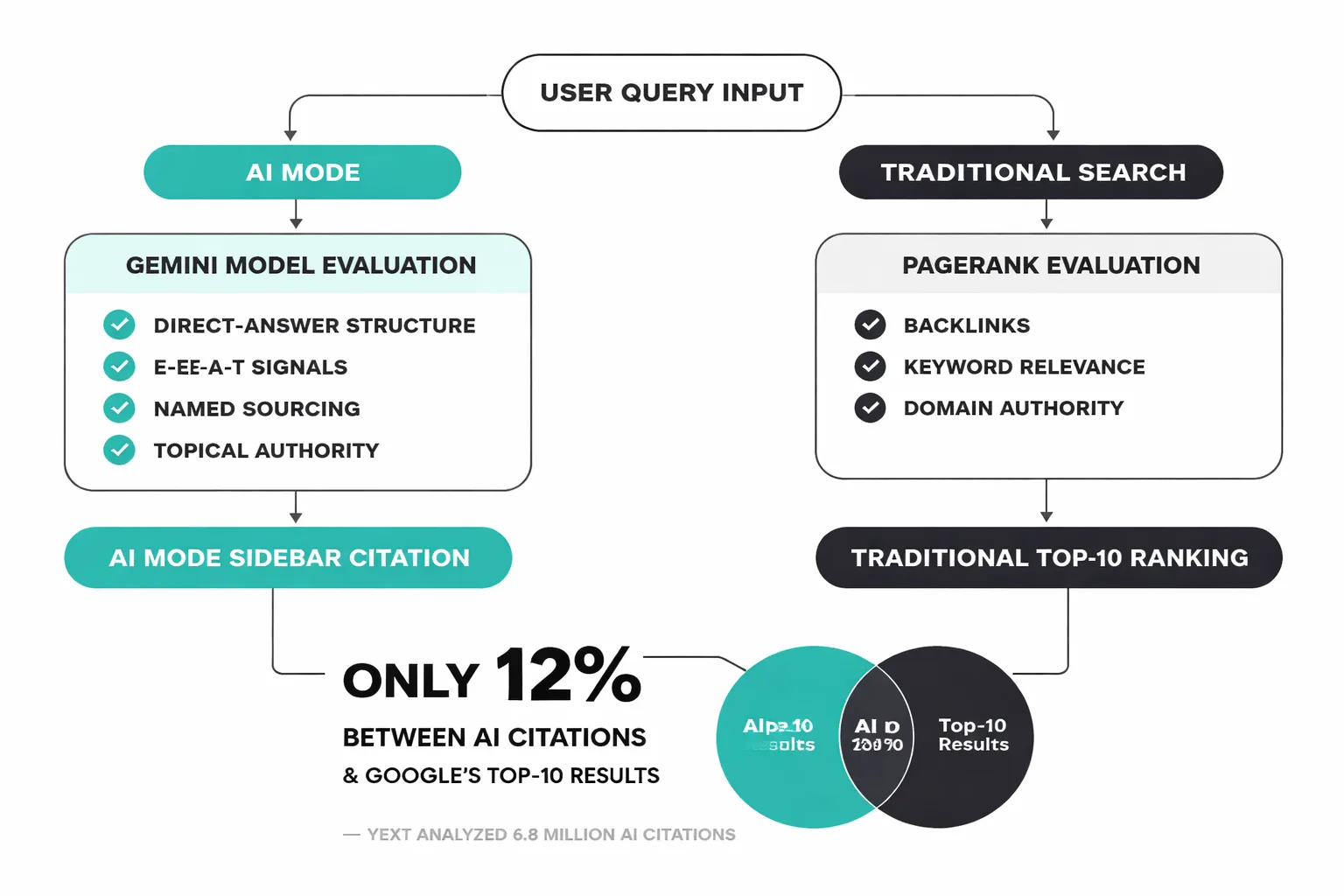

- Only 32% of URLs cited in Google AI Mode's sidebar overlap with Google's traditional top-10 results, meaning strong organic rankings do not reliably translate into AI Mode visibility.

- Each AI model (ChatGPT, Perplexity, Google AI Mode) rewards structurally different content — treating 'AI optimization' as a single task is the fastest way to underperform on all three platforms.

- Direct-answer formatting, explicit E-E-A-T signals, named sourcing, and genuine information gain are the content signals Google AI Mode appears to reward — and dedicated AI visibility tracking (not Search Console) is now required to measure whether it's working.

A user types 'best project management tools for remote teams' into Google. No list of blue links appears. Instead, a fluent, multi-paragraph AI answer fills the screen — recommending three tools by name, explaining each one's strengths, and pulling language that sounds almost editorial. At the very bottom, two small source links appear. Your competitor's blog is one of them. Your site, despite ranking in position two for that exact query for the past eighteen months, is nowhere. This is AI Mode — and it is already live.

AI Mode is Google's most significant restructuring of search in a decade. Powered by Gemini, it replaces the traditional ten-blue-links SERP with a conversational, multi-turn AI response for a growing share of queries. A Semrush study analyzing over 230,000 prompts found that 92% of Google AI Mode responses feature a sidebar with roughly seven unique domains — meaning the citation surface is radically narrower than traditional search. The overlap between those sidebar links and Google's classic top-10 results is only 51% at the domain level and 32% at the URL level, which tells you that ranking well in traditional search does not reliably translate to AI Mode visibility. And critically, each AI model — ChatGPT, Perplexity, and Google AI Mode — rewards structurally different content, so a single optimization strategy won't cover all three.

AI Mode vs. AI Overviews — The Distinction That Actually Matters

The naming confusion is real, and it's costing marketers strategy clarity. AI Overviews and AI Mode are not the same product — they're different surfaces with different triggers, different citation behaviors, and different implications for your content.

AI Overviews (formerly Search Generative Experience, or SGE) appear as an optional module within a standard Google SERP. You still see blue links below them. They activate on a subset of queries, primarily informational ones, and they're designed to coexist with traditional results. Think of AI Overviews as a feature layered onto the existing search page.

AI Mode is a separate search experience entirely. When a user switches into AI Mode — currently accessible via a tab in Google Search Labs — the interface shifts to a conversational format modeled more closely on ChatGPT or Perplexity than on traditional Google. There are no ten blue links. There's a primary AI-generated answer, a source panel, and the ability to ask follow-up questions in a threaded conversation. Google's AI Mode is built on a more advanced Gemini integration than AI Overviews, with multimodal input support (images, voice, text) and the capacity to handle significantly more complex, multi-part queries.

For a Google AI Mode search strategy, the distinction matters because the content that earns a citation in AI Mode is not identical to the content that earns an AI Overview snippet. AI Overviews tend to pull from pages already ranking in Google's top 10. AI Mode, as the Semrush data shows, has only a 32% URL overlap with those same top-10 results. If you've been optimizing purely for AI Overviews, you may be leaving your AI Mode visibility largely unaddressed.

The relationship to SGE is worth clarifying too. SGE was Google's experimental predecessor, tested in Search Labs through 2023 and early 2024. AI Overviews replaced SGE in May 2024 when Google rolled the feature out broadly. AI Mode is the next evolution — a more immersive, standalone surface that Google appears to be positioning as the future default for complex queries.

How AI Mode Changes the Search Results Page

The SERP in AI Mode looks nothing like what most marketers have spent a decade optimizing for.

Instead of a ranked list, the user sees a primary response block — typically three to five paragraphs of synthesized answer, written in a conversational register. Below or beside that block sits a source panel: a carousel of cited domains, each shown with a favicon, domain name, and brief excerpt. According to the Semrush multi-platform AI visibility analysis, that sidebar averages around seven unique domains — not ten, not twenty. Seven.

That compression is the first thing that should concern you. Traditional Google distributes traffic across ten organic results plus ads, featured snippets, and People Also Ask boxes. AI Mode concentrates citation into a much smaller set. If your domain isn't among those seven, your visibility isn't reduced; it's zero.

The second structural shift is conversational threading. Users can follow up directly within the same session: "Which of those tools has the best mobile app?" or "Compare the pricing on the first two." Each follow-up generates a new AI response, potentially pulling from a new set of sources. This means a single query session can generate multiple citation opportunities — or multiple opportunities to be excluded — depending on how well your content covers adjacent subtopics.

Traditional blue-link rankings don't disappear entirely. In the current implementation, a "More results" option can surface conventional SERP links below the AI response. But user behavior data from analogous AI search products suggests that most users don't scroll past the AI answer. The blue links are becoming the fine print.

What AI Mode Means for Content Visibility and Traffic

Here's the number that stopped me cold when I first saw it: a Yext analysis of 6.8 million AI citations found only a 12% overlap between AI citations and Google's top-10 results. Twelve percent. Years of traditional SEO investment, and the content that dominates Google's blue links is largely invisible to the AI models now answering those same queries.

The traffic implication follows directly. If AI Mode answers a query completely — and Gemini is increasingly capable of doing exactly that for informational queries — the user has no reason to click through to any source. Zero-click outcomes, already a concern with featured snippets and AI Overviews, become the dominant mode in AI Mode. The citation panel exists, but citation is not the same as a click. Getting named is better than not getting named. It's not the same as getting traffic.

Which content formats are surviving this shift? The pattern I keep seeing is that content built around explicit question-answer structure — where a clear question is posed in a heading and a direct, standalone answer appears in the first paragraph of that section — gets extracted more reliably than content written in a flowing editorial style. That's not a guess; it's the same logic that drove featured snippet optimization, applied at a higher level of sophistication. If I can't pull a clean, standalone answer out of a section in under fifteen seconds, an AI model probably can't either.

Content that's getting buried: thin overview pages that summarize what a topic is without adding any original analysis, perspective, or data. The Moz analysis of AI Overviews failures identified surface-level content as a primary driver of exclusion from AI-generated answers. The same logic applies to AI Mode — and the bar appears to be higher, not lower, because Gemini has more room to synthesize across multiple sources before settling on what to cite.

For marketers tracking AI search visibility, the mention-citation gap is the metric that matters most right now. Your brand may be mentioned in training data, discussed in forums, and referenced in industry publications — but none of that guarantees citation in an active AI Mode response. LLM citation tracking tools (see our breakdown of AI visibility tools for 2026) are now essential for understanding where that gap exists and which queries you're being excluded from.

Why AI Model Comparison Changes Your Strategy

One of the most operationally important things I've learned in this work is that "optimizing for AI" is not a single task. It's three or four different tasks depending on which model you're targeting.

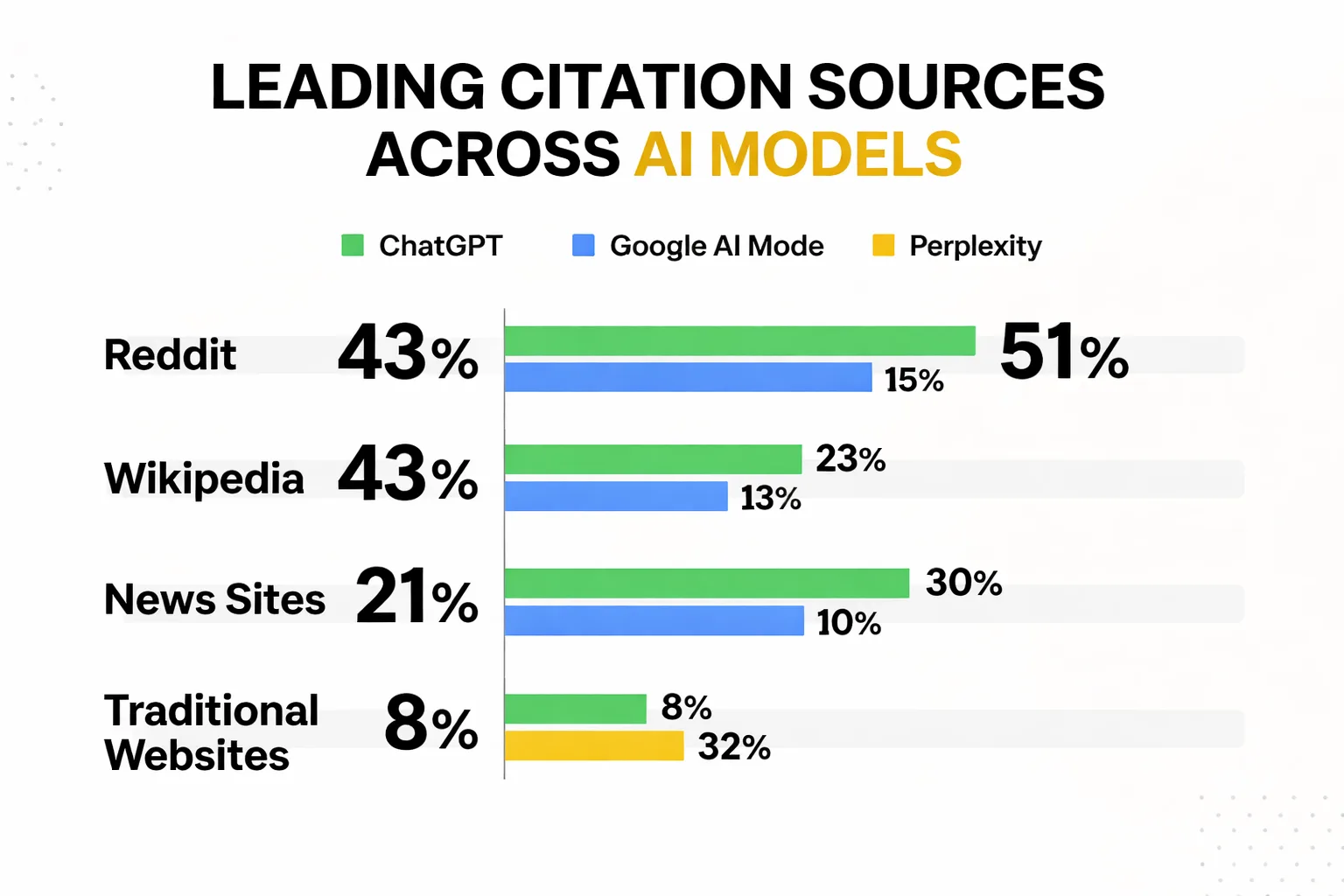

The Semrush AI visibility platform study tracked over 100 million citations across ChatGPT, Google AI Mode, and Perplexity over a three-month period and found that each model rewards structurally different sources. Reddit is the leading citation source across all three platforms — but the degree varies significantly. Perplexity leans heavily on Reddit-style conversational content and community-driven sources. ChatGPT skews toward encyclopedic, Wikipedia-style writing. Google AI Mode, being a Google product, has more overlap with traditional web content — but that 32% URL overlap figure means it's still pulling from a substantially different set than organic search.

The AI model comparison has a direct implication for content strategy: you cannot write one piece and expect it to perform across all three surfaces. For topics where ChatGPT dominates user behavior (technical queries, product research, code-related questions), structured and definitional writing wins. For community-driven or opinion-heavy topics where Perplexity is the primary surface, Reddit presence and conversational formatting matter more. For Google AI Mode specifically, the combination of E-E-A-T signals, direct-answer structure, and traditional web authority seems to be the operative formula, but the source selection is still distinct enough from traditional Google that you need to track it separately.

I've seen teams waste months treating AI visibility as a monolith. They'd run one content audit, make one set of formatting changes, and expect results across all three platforms. The source hierarchies are just too different for that to work.

The Auto-Blog Trap — What Grokipedia Got Wrong

Before getting to what actually works, it's worth being direct about what doesn't — because the failure mode is seductive and the case study is instructive.

Grokipedia, an Elon Musk-affiliated AI-generated encyclopedia, launched with significant resources and a clear mandate to produce content at scale. According to SEO practitioner Joel Gross's analysis, the site saw dramatic drops in Google search rankings starting in December, with further declines following Google's core update the following March. SEO consultant Jessica Bowman's assessment was blunt: "Producing a large amount of low quality content can actually hurt SEO." She also documented a separate publisher producing 100 posts per hour with zero human editing that failed to rank entirely.

The lesson isn't that AI content is penalized. It's that content with zero E-E-A-T signals, no human editorial layer, and no information gain over existing sources gets treated as what it is: noise. Google's scaled content abuse detection doesn't care whether a human or a model wrote the piece. It cares whether the piece offers anything a reader couldn't get from the ten sources that already exist on the topic.

For AI Mode citation specifically, this matters even more. Gemini is synthesizing across sources before deciding what to cite. Content that simply restates what's already widely known gives the model no reason to surface your page specifically. The content that earns AI Mode citations tends to have something extractable and distinct — a named data point, a specific framework, a practitioner perspective that isn't available elsewhere. That's the information gain principle, and it's not optional in this environment. If you're evaluating content systems, the AI content creation landscape in 2026 has shifted significantly toward quality-gated workflows for exactly this reason.

How to Optimize for AI Mode Right Now

Optimizing for Google AI Mode search is not a single tactic. It's a set of structural and editorial decisions that compound over time. Here's what the current evidence supports.

Direct-answer formatting is non-negotiable. Every section of your content that targets a specific question should open with a standalone answer of 40 to 60 words, one that would make sense if extracted and quoted without any surrounding context. This is the extractability principle: if a reader can't pull a clean answer from a section in under fifteen seconds, an AI model probably won't either. Structure your H2s as questions when the topic calls for it, and front-load the answer before elaborating.
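As a rough illustration of the 40 to 60 word guideline, the Python sketch below checks whether a section's opening paragraph falls in that range. The function names, and the assumption that paragraphs are separated by blank lines, are hypothetical choices for this example and not part of any tool mentioned in this article.

```python
import re


def opening_answer_word_count(section_text: str) -> int:
    """Count the words in the first non-empty paragraph of a section body.

    Assumption: paragraphs are separated by one or more blank lines.
    """
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", section_text) if p.strip()]
    if not paragraphs:
        return 0
    return len(paragraphs[0].split())


def is_extractable_opening(section_text: str, min_words: int = 40, max_words: int = 60) -> bool:
    """Return True when the opening paragraph is within the target word range."""
    count = opening_answer_word_count(section_text)
    return min_words <= count <= max_words


# Example: a hypothetical section whose opening is far too short.
draft = "It depends.\n\nHere is a much longer explanation that follows the opening."
print(opening_answer_word_count(draft), is_extractable_opening(draft))
```

A check like this only measures length. Whether the opening actually answers the heading's question still needs an editor's judgment.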

E-E-A-T signals need to be explicit, not implied. For AI Mode, this means named authors with verifiable credentials, first-person experience signals woven into the body copy (not just the bio), and specific data points attributed to named sources. Vague authority — "experts agree," "studies show" — is invisible to both Gemini and to human fact-checkers. Name the study. Name the researcher. Link to the primary source. This is especially true for YMYL (Your Money or Your Life) topics where Gemini appears to apply a higher citation threshold.

Structured data still matters, but differently. Schema markup for FAQPage, HowTo, and Article types helps Gemini understand content structure. But structured data is a signal amplifier, not a substitute for content quality. A well-structured page with thin content will not outperform a substantive page with minimal markup.
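For reference, here is a minimal sketch of generating FAQPage markup as JSON-LD using only Python's standard library. The schema.org types and properties used (FAQPage, mainEntity, Question, acceptedAnswer, Answer) are real vocabulary; the helper names and the sample question are illustrative assumptions.

```python
import json


def faq_page_jsonld(qa_pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


def as_script_tag(data) -> str:
    """Wrap the JSON-LD object in the script tag used to embed it in a page."""
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


# Hypothetical sample content, for illustration only.
markup = faq_page_jsonld([
    ("Does a top-10 ranking guarantee an AI Mode citation?",
     "No. A strong organic ranking helps but does not guarantee citation."),
])
print(as_script_tag(markup))
```

The markup should mirror questions and answers that are visible on the page, which is consistent with the point above: structure amplifies substance but does not replace it.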

Build topical authority, not just keyword coverage. The Wix AI Search Lab's guidance on AI search optimization notes that LLMs rely on training data built around associations rather than keyword matching, meaning a brand needs "a definitive digital footprint that is easy to understand" to be recognized as an authority in a niche. This is the argument for topical authority in AI search: covering a topic cluster deeply, with consistent entity signals and named expertise, outperforms targeting individual keywords in isolation.

Track your AI search visibility separately from organic rankings. The low overlap between AI citations and Google's top 10 (12% in the Yext analysis, and 32% at the URL level for AI Mode in the Semrush study) means your Search Console data is not telling you what's happening in AI Mode. You need dedicated tracking: prompt-level monitoring, citation audits, and mention-citation gap analysis. Tools like Meev's AI visibility platform and others in the category are now essential infrastructure, not nice-to-haves. If you're evaluating options, the comparison between Meev and Peec AI is worth reviewing for teams focused on citation outreach specifically.
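To make those measurements concrete, the sketch below computes URL-level and domain-level overlap between a response's cited URLs and the organic top 10, plus a simple mention-citation gap count. It assumes you have already collected the cited URLs, the organic URLs, and the response text with whatever tracking tool you use. The function names and the data shape are hypothetical, and this is not how any vendor named here calculates its metrics.

```python
from urllib.parse import urlparse


def domain_of(url: str) -> str:
    """Lowercased host with a leading 'www.' removed."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def overlap_share(cited, organic) -> float:
    """Share of distinct cited items that also appear in the organic set."""
    cited_set = set(cited)
    if not cited_set:
        return 0.0
    return len(cited_set & set(organic)) / len(cited_set)


def citation_overlap(cited_urls, top10_urls) -> dict:
    """URL-level and domain-level overlap for a single prompt."""
    return {
        "url_overlap": overlap_share(cited_urls, top10_urls),
        "domain_overlap": overlap_share(
            [domain_of(u) for u in cited_urls],
            [domain_of(u) for u in top10_urls],
        ),
    }


def mention_citation_gap(responses, brand: str, domain: str) -> dict:
    """Count responses that mention the brand, cite the domain, or mention without citing.

    Assumed shape: each response is a dict with 'text' and 'cited_urls' keys.
    """
    mentioned = cited = mentioned_not_cited = 0
    for response in responses:
        is_mentioned = brand.lower() in response["text"].lower()
        is_cited = any(domain_of(u) == domain for u in response["cited_urls"])
        mentioned += is_mentioned
        cited += is_cited
        mentioned_not_cited += is_mentioned and not is_cited
    return {"mentioned": mentioned, "cited": cited, "mentioned_not_cited": mentioned_not_cited}
```

Running `citation_overlap` per prompt and averaging the results gives figures comparable in spirit to the overlap percentages quoted earlier, though the studies' exact methodology is not described here.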

Content quality scoring should include information gain. Before publishing, ask: what does this piece say that isn't already said by the top five results for this query? If the answer is "not much," the piece is a candidate for consolidation or enrichment, not publication. A Rankability study of 487 search results found that 83% of top-ranking pages use human-generated content — but the more important signal is that those pages tend to contain original analysis, specific data, or practitioner perspective that the AI-generated alternatives don't.

The generative engine optimization (GEO) frame is useful here: think of every piece of content as a potential source document for an AI model. Would Gemini cite this page specifically, or would it synthesize the same answer from three other sources and leave yours out? If it's the latter, the content needs work before it needs distribution.

Frequently Asked Questions

Is Google AI Mode available to everyone right now?

As of mid-2025, AI Mode is available through Google Search Labs as an opt-in experience. Users in the US can enable it via the Labs tab in Google Search. Google has signaled broader rollout, but the timeline for making it the default experience hasn't been confirmed publicly. Marketers should treat it as a near-term default, not a distant possibility.

Does ranking in Google's top 10 guarantee AI Mode citation?

No, and this is the most important thing to internalize. The Semrush analysis found only 32% URL overlap between AI Mode sidebar citations and Google's traditional top-10 results. A strong organic ranking improves your chances but does not guarantee AI Mode visibility. You need to optimize for AI Mode's citation criteria directly, not assume your existing rankings will transfer.

How is AI Mode different from Perplexity or ChatGPT search?

All three use large language models to generate answers with cited sources, but their source hierarchies differ significantly. Google AI Mode has higher overlap with traditional web content than Perplexity or ChatGPT, and it integrates with Google's index in real time. Perplexity leans heavily on Reddit and community sources. ChatGPT favors encyclopedic, Wikipedia-style content. Each requires a somewhat different content strategy to earn consistent citation.

What types of content are most likely to be cited in AI Mode?

Content with explicit question-answer structure, named sourcing, first-person experience signals, and original data or analysis performs best based on current evidence. Thin overview pages, content that simply restates what's widely known, and pages with no identifiable author or expertise signals are the most likely to be excluded from AI Mode citations.

How do I track whether my content is being cited in AI Mode?

Search Console does not currently report AI Mode impressions or citations separately. Dedicated AI visibility tracking tools, which monitor specific prompts and track which domains appear in AI-generated responses, are now the primary way to measure this. The mention-citation gap (how often your brand is mentioned vs. actively cited) is the key metric to close.

About the Author

Judy Zhou, Head of Content Strategy

Judy Zhou leads content strategy at Meev, where she oversees AI-driven content research and publishing for hundreds of brands. With a background in SEO and editorial operations, she focuses on building content systems that rank on Google, get cited by AI search engines, and drive measurable business results.

Meev tracks your AI search visibility across Google AI Mode, ChatGPT, and Perplexity — so you can close the mention-citation gap with evidence, not guesswork.