By Judy Zhou, Head of Content Strategy

Key Takeaways

- ChatGPT Search accounts for 87.4% of AI-driven referral traffic despite citing sources less frequently than Perplexity — making it the highest-priority optimization target for most content marketers.

- AI search systematically favors earned third-party media over brand-owned content, according to GEO research published on arxiv.org — outreach strategy and content format are both required, not interchangeable.

- Only 28% of brands achieve both citations and named recommendations in AI-generated answers (Semrush) — being cited as a source and being recommended by name require completely different content and pitching strategies.

- The right engine to optimize for first depends on three variables: domain age and authority, publishing frequency, and content type — there is no universal answer, and aggregating citation rates across platforms hides the patterns that drive real decisions.

In 2012, Google's Knowledge Graph quietly redefined what a search engine was supposed to do — not just retrieve documents, but answer questions directly. Most marketers noticed, shrugged, and went back to building links. A decade later, the same transition is happening again, only faster and far more disruptive. The launch of ChatGPT in late 2022, followed by Perplexity, Google's AI Overviews, and a wave of competing AI-powered answer engines, has fundamentally changed where authoritative answers live — and who gets credit for producing them. History, it turns out, rewarded the marketers who adapted early the first time.

If you're a content marketer still treating these platforms as fancy search boxes, you're missing the actual question: not "which AI-powered answer engine is most useful to me as a user," but "which one is most likely to cite my content, recommend my brand, and send buyers my way." That framing shift changes everything about how you evaluate these tools.

Perplexity provides inline, numbered citations for every output (according to PCMag), making it the most citation-transparent platform in this comparison. ChatGPT accounts for 87.4% of AI-driven referral traffic despite citing in fewer responses than Perplexity. Research from arxiv.org's GEO analysis shows AI search systematically favors earned third-party media over brand-owned content, contrasting with Google's more balanced source mix. And only 28% of brands achieve both citations and named recommendations in AI-generated answers, according to Semrush's analysis.

Those four facts should make you uncomfortable. They made me uncomfortable. Here's how to use them.

What Makes an Answer Engine Different from a Search Engine

A traditional search engine is a librarian. It hands you a list of shelves and lets you walk the stacks yourself. An AI-powered answer engine is more like a research assistant who has already read the shelves, synthesized the relevant passages, and is now presenting you with a conclusion. The librarian shows you sources. The research assistant decides which sources were worth reading.

That distinction has enormous consequences for content marketers. When Google returns ten blue links, every result gets a click opportunity. When Perplexity or ChatGPT Search synthesizes an answer, typically one to four sources get cited — and the rest get nothing. The entire traffic distribution model collapses from a long tail to a very short head.

The mechanics behind this are worth a brief look. Most answer engines use a form of retrieval-augmented generation (RAG): they retrieve relevant documents from a live index or a curated knowledge base, then feed those documents into a large language model to generate a synthesized response. The LLM doesn't just quote from those documents: it reasons across them, resolving contradictions and filling gaps with what it learned during training. This is why generative engine optimization is a different discipline from SEO. You're not optimizing for a ranking algorithm. You're optimizing to be the document the retrieval layer selects as worth synthesizing from.
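To make the retrieval step concrete, here is a toy sketch in Python. It is not how Perplexity, ChatGPT Search, or any other engine actually works; production systems rely on learned embeddings, live indexes, and undisclosed ranking signals. It assumes a small in-memory corpus of hypothetical pages, scores each one against the query with a simple TF-IDF weighting, keeps the top `k`, and formats them as numbered sources for the model. The point is the shape of the pipeline: a page that never makes the top `k` never reaches the LLM, however good it is.

```python
from __future__ import annotations

import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def tfidf_score(query: str, doc: str, doc_freq: Counter, n_docs: int) -> float:
    """Sum term frequency x smoothed IDF for each query term found in the doc."""
    counts = Counter(tokenize(doc))
    total = 0.0
    for term in set(tokenize(query)):
        if counts[term]:
            idf = math.log((n_docs + 1) / (doc_freq[term] + 1)) + 1.0
            total += counts[term] * idf
    return total


def retrieve(query: str, docs: dict[str, str], k: int = 3) -> list[str]:
    """Return up to k URLs whose text scores above zero, best first."""
    doc_freq: Counter = Counter()
    for text in docs.values():
        doc_freq.update(set(tokenize(text)))  # count each term once per doc
    scores = {url: tfidf_score(query, text, doc_freq, len(docs)) for url, text in docs.items()}
    matching = [url for url, s in scores.items() if s > 0]
    return sorted(matching, key=scores.__getitem__, reverse=True)[:k]


def build_prompt(query: str, docs: dict[str, str], urls: list[str]) -> str:
    """Format the retrieved pages as numbered sources ahead of the question."""
    sources = "\n\n".join(
        f"[{i}] {url}\n{docs[url]}" for i, url in enumerate(urls, start=1)
    )
    return (
        "Answer using only the numbered sources below and cite them inline.\n\n"
        f"{sources}\n\nQuestion: {query}"
    )


if __name__ == "__main__":
    # Hypothetical pages; the URLs and text are placeholders.
    pages = {
        "https://example.com/answer-engines": "How answer engines cite sources in generated answers.",
        "https://example.com/bread": "A simple recipe for sourdough bread.",
        "https://example.com/seo-basics": "SEO basics: ranking pages in search engines.",
    }
    top = retrieve("how do answer engines cite sources", pages, k=2)
    print(build_prompt("How do answer engines cite sources?", pages, top))
```

The generation step that would consume the prompt is deliberately left out; the sketch only shows which pages make it into the model's context and which are dropped.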

For answer engine optimization (AEO) and broader LLM optimization strategy, the implication is clear: content that reads as authoritative to a human editor also reads as authoritative to a retrieval system. Structured claims, named sources, direct answers to specific questions, and demonstrated expertise all increase retrieval probability. Fluffy brand content, generic overviews, and keyword-stuffed filler all decrease it.

The other thing that changes: synthesis engines don't just cite you, they represent you. If your content contains a claim that gets picked up and synthesized into an AI answer, the AI may paraphrase it, contextualize it alongside competitors, or use it to support a conclusion you didn't intend. That's a new editorial risk category most content teams aren't managing yet.

The 6 Engines Ranked: Criteria and Scoring

I scored each engine across three dimensions that matter specifically to content marketers: citation transparency (can you see where the answer came from?), source diversity (does it pull from a range of publishers or favor a narrow set?), and content marketer accessibility (how easy is it to query these engines to understand your own citation footprint?). I'm not ranking these as consumer products — I'm ranking them as platforms you need to understand, optimize for, and monitor.

1. Perplexity is the most citation-transparent platform in this group. Every response includes inline numbered citations linking directly to source URLs. PCMag's reviewer noted this as its defining characteristic versus Google, and from a content marketer's perspective, it's genuinely useful: you can query your own topic space and see exactly which domains are getting cited, at the prompt level. Source diversity is good: Perplexity pulls from real-time web results rather than training data alone, which means fresh content has a real shot. Its weakness for marketers is that a high citation rate doesn't automatically translate to referral traffic. In my own testing over several months, Perplexity's citation behavior was generous, but the downstream traffic impact was modest compared to ChatGPT's.

2. ChatGPT Search (ChatGPT's built-in web search, powered by a fine-tuned version of GPT-4o) cites less frequently than Perplexity, but those citations punch far above their weight in traffic terms. The 87.4% AI referral traffic share I mentioned earlier is the single most important number in this entire ranking. If you're optimizing for business outcomes rather than citation vanity metrics, ChatGPT Search is the platform that deserves the most attention. Citation behavior here tends to favor high-authority domains and sources that have been referenced extensively in training data, which creates a compounding advantage for established publishers and a steeper hill for newer ones.

3. Google AI Overviews occupies a unique position because it sits inside the search engine that still drives the majority of organic traffic for most content marketers. Index.dev's developer testing found that Google AI Overviews "promise instant, ecosystem-aware summaries"; in practice, they heavily favor content that already ranks well in traditional Google search. That's a double-edged sword. If you have strong organic rankings, AI Overviews can amplify your visibility. If you don't, it's another layer of competition you're not winning. Structured markup, E-E-A-T signals, and topical authority for AI search all matter here more than on any other platform.

4. Bing Copilot (Microsoft's AI answer layer built on GPT-4) is underrated by content marketers who have written off Bing as irrelevant. Its integration into Microsoft 365, Windows, and Edge gives it a user base that skews professional and enterprise, a valuable audience if you're in B2B content. Its citation behavior is similar to ChatGPT Search (both run on OpenAI models), but the source pool it draws from is Bing's index rather than OpenAI's training data, which means Bing-indexed content has a more direct path to citation.

5. You.com is a smaller player but worth including because its source diversity is notably higher than the major platforms. It actively surfaces a wider range of publishers, including niche and specialist sites that would never crack a Google AI Overview. For content marketers at mid-market or specialist brands, You.com represents an accessible entry point for citation visibility — lower competition, more willingness to surface non-dominant sources.

6. Brave Leo rounds out the list. Built on Brave's independent search index and using a mix of open-source LLMs, Leo is the most privacy-focused option and has a loyal but niche user base. Citation transparency is moderate. For most content marketers, Leo is a long-tail consideration rather than a primary optimization target — but worth monitoring if your audience skews toward privacy-conscious tech users.


Citation Behavior Compared Across All 6

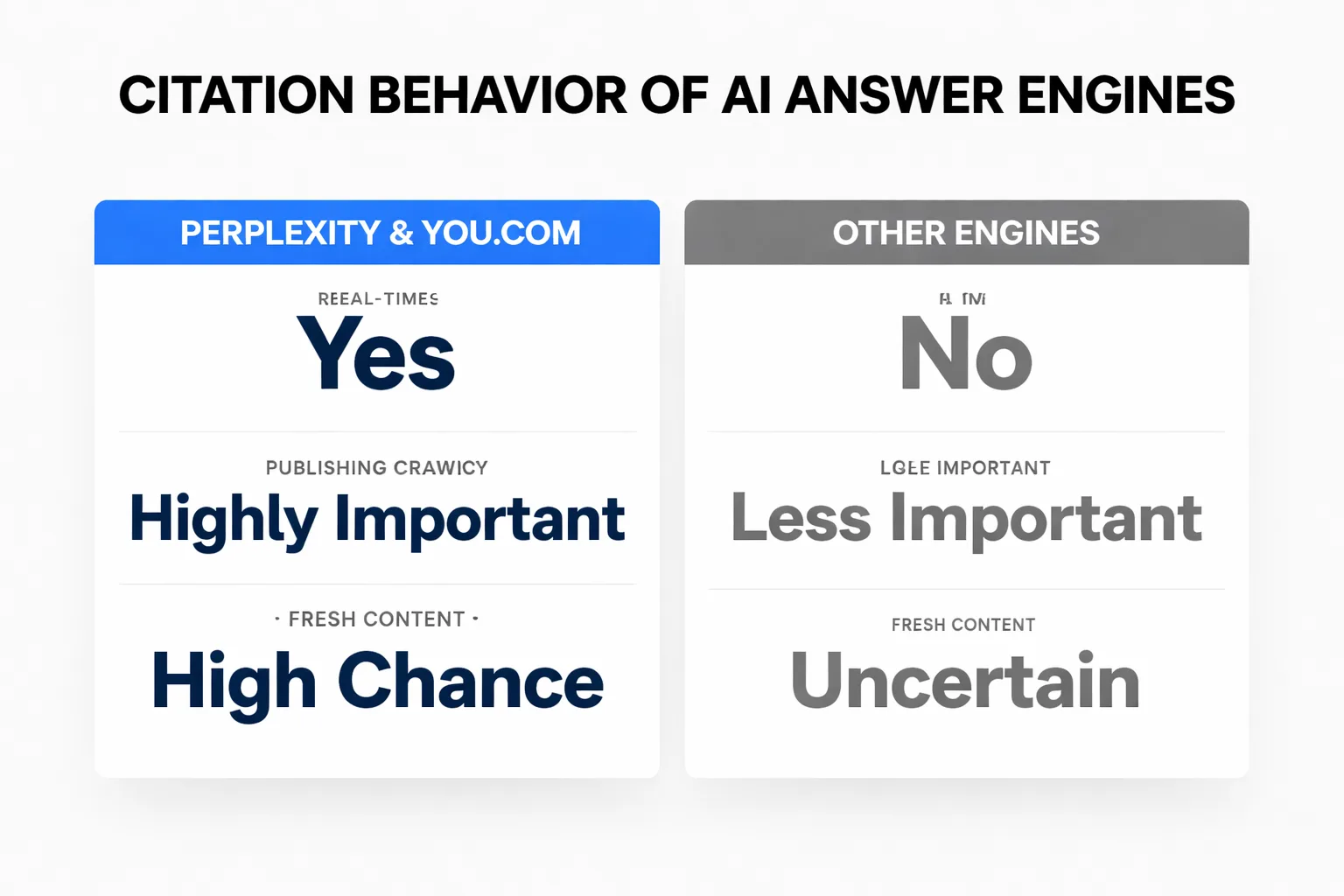

The pattern I keep seeing when I audit citation behavior across these platforms: they are not all fishing in the same pond, and the bait that works in one pond actively fails in another.

Perplexity and You.com both draw heavily from real-time web crawls, which means publishing frequency matters. A site that publishes well-sourced, structured content consistently will see citation rates climb over time because fresh content has a real shot at retrieval. This is where strategies for producing AI content at scale can actually pay off, provided the quality gate is real, not theatrical. The failure pattern I keep seeing with auto-blog workflows isn't bad grammar or thin structure. It's that the content passes every internal quality check and still collapses in AI retrieval. What scoring tools don't measure is demonstrated expertise and original perspective, which is exactly what retrieval systems are selecting for.

ChatGPT Search and Bing Copilot reward domain authority more than freshness. Both draw on training data and indexed content, and both show a strong bias toward sources that appear frequently across the web as authoritative references. The GEO research from arxiv.org makes this explicit: AI search systematically favors earned media — third-party, authoritative sources — over brand-owned content. This isn't a subtle preference. It's a structural feature of how these systems were trained. A journalist writing "brands like X and Y are leading this space" with no hyperlink anywhere is doing more for AI recommendation visibility than a linked feature in a high-DA outlet. That finding is genuinely uncomfortable if your entire pitching strategy is built around link acquisition metrics.

Google AI Overviews behaves differently from all of them. Its citation pool is tightly correlated with traditional Google rankings, which means the same structured markup, schema, and E-E-A-T signals that help you rank organically also help you appear in AI Overviews. Index.dev's analysis frames the trade-off this way: Google wins for "speed and simplicity," while Perplexity wins for "depth, citations, and reproducible research." Structured FAQ markup, HowTo schema, and clear heading hierarchies all increase retrieval probability here.
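For the structured FAQ markup this paragraph recommends, schema.org's `FAQPage` type is the standard vocabulary. A minimal Python sketch that turns question-and-answer pairs into a JSON-LD `<script>` block might look like this; the helper name `faq_jsonld` is hypothetical, the sample question is taken from this article's FAQ, and the markup only makes the structure machine-readable rather than guaranteeing an AI Overview citation.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Wrap (question, answer) pairs in a schema.org FAQPage JSON-LD script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    body = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so an answer containing "</script>" cannot close the tag early;
    # "\/" is a valid JSON escape, so parsers still read the original text.
    body = body.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


print(faq_jsonld([
    ("Can I appear in AI answer engines without ranking on Google first?",
     "Yes, but the path differs by platform."),
]))
```

Generating the block from the same question-and-answer source that renders the visible FAQ keeps the markup and the on-page text from drifting apart.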

Brave Leo is the outlier. It uses its own independent index and open-source models, which means the correlation between traditional SEO signals and Leo citation behavior is weaker. Structured content still helps — direct answers to specific questions, named claims with sources — but domain authority signals carry less weight than on ChatGPT or Google.

The mention-citation gap is real across all six platforms. I've started auditing placements specifically for named-without-linked patterns, and the number of placements that actually name the brand is almost always lower than teams assume when they report campaign success. Getting cited and getting recommended are not the same outcome.

We spent a full quarter pitching for backlinks and domain authority signals (the standard playbook), and our AI citation rate looked fine on paper. But when I started actually querying ChatGPT and Perplexity for our core topics, competitors were getting named. We were getting used as a source to support claims about them. The Ahrefs Brand Radar data puts a number on what I was seeing anecdotally: 80% of brands are stuck in this pattern.
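Auditing placements for the named-without-linked pattern can start as a small script. The sketch below assumes you already have each placement's HTML; it collects link targets and text content with Python's standard-library `html.parser`, then reports whether the brand is named, linked, both, or neither. The brand `Acme` and domain `acme.example` are hypothetical, and whole-word matching on the brand string is a simplification that will miss misspellings and abbreviations.

```python
import re
from html.parser import HTMLParser
from urllib.parse import urlparse


class PlacementParser(HTMLParser):
    """Collect every <a href> target and every text node in a page."""

    def __init__(self) -> None:
        super().__init__()
        self.hrefs: list[str] = []
        self.text: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)

    def handle_data(self, data):
        self.text.append(data)


def points_to(href: str, domain: str) -> bool:
    """True if href's host is the domain or one of its subdomains."""
    host = (urlparse(href).hostname or "").lower()
    return host == domain or host.endswith("." + domain)


def classify_placement(html: str, brand: str, domain: str) -> str:
    """Label a placement as named/linked/both/absent for one brand."""
    parser = PlacementParser()
    parser.feed(html)
    parser.close()
    text = " ".join(parser.text)
    # Whole-word, case-insensitive match on the brand string.
    named = re.search(rf"(?<!\w){re.escape(brand)}(?!\w)", text, re.IGNORECASE) is not None
    linked = any(points_to(h, domain.lower()) for h in parser.hrefs)
    if named and linked:
        return "named_and_linked"
    if named:
        return "named_not_linked"
    if linked:
        return "linked_not_named"
    return "absent"
```

Running it across a quarter's placements and counting each label turns the gap into a number rather than an impression.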

Which Engine Should You Optimize for First

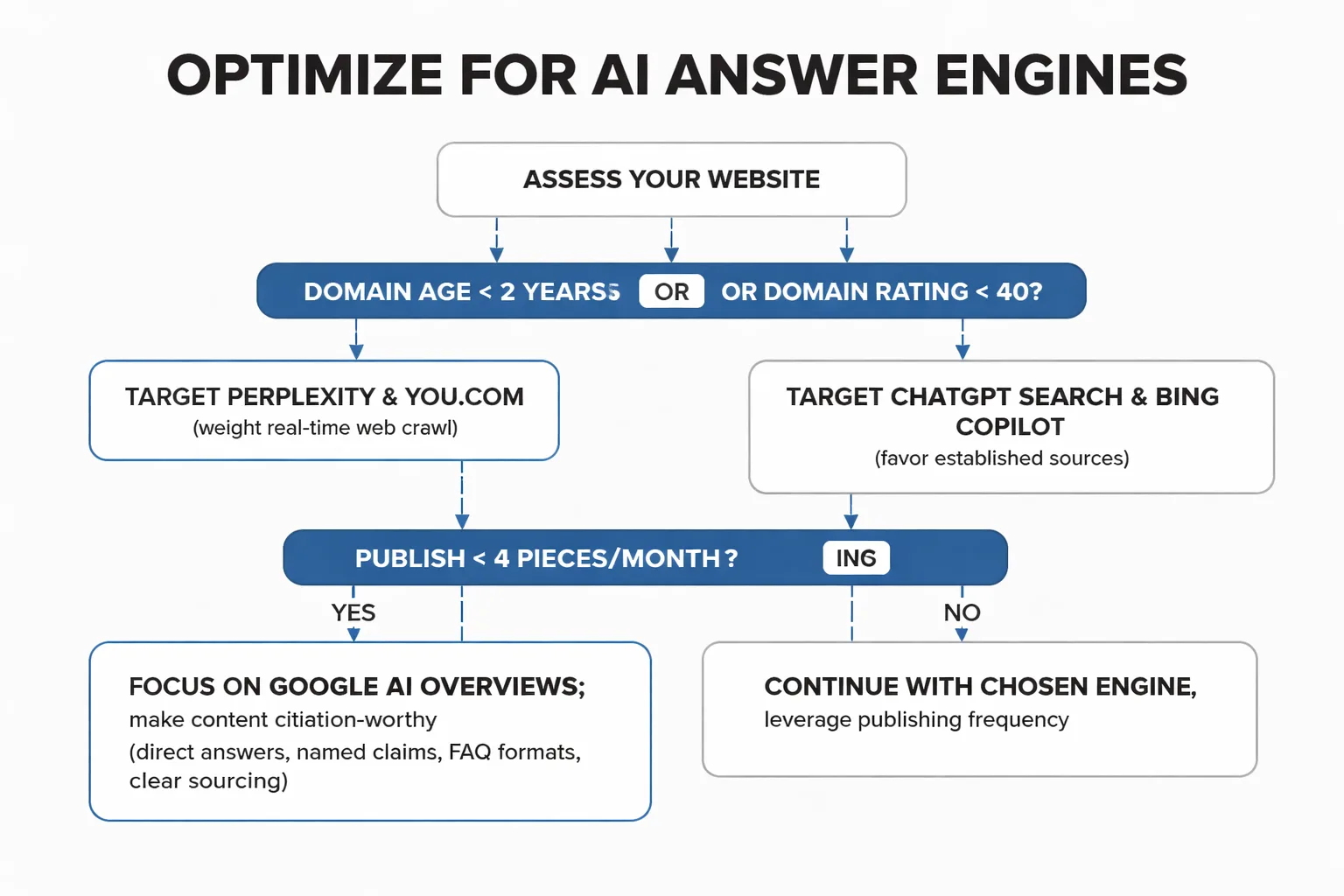

The honest answer: it depends on three variables that most "optimize for AI search" guides treat as constants.

Variable 1: Your domain age and authority. If your site is under two years old or has a domain rating below 40, ChatGPT Search and Bing Copilot are not going to be your fastest wins. Both platforms heavily favor established, frequently-cited sources. Perplexity and You.com are more accessible for newer publishers because they weight real-time web crawl results more heavily than training-data authority signals. Start there, build citation presence, and use that as the foundation for the harder platforms.

Variable 2: Your publishing frequency. If you publish fewer than four pieces per month, freshness-based platforms like Perplexity won't give you much to work with. Your priority should be making each piece of content as structurally citation-worthy as possible — direct answers, named claims, FAQ formats, clear sourcing — and targeting Google AI Overviews, where your existing organic rankings can amplify AI visibility without requiring high publishing volume. Teams publishing daily or near-daily have a different calculus: Perplexity rewards that cadence, and the compounding effect of consistent citation presence across a high-volume topic cluster is real.

Variable 3: Your content type and audience. B2B content marketers with professional audiences should weight Bing Copilot higher than most guides suggest. The Microsoft 365 integration means Copilot is being queried by enterprise buyers inside their workflow tools — a context where your brand appearing in an answer has direct commercial relevance. E-commerce and DTC brands should weight Google AI Overviews heavily because the purchase intent in those queries is highest and the overlap with traditional Google search is tightest. Media and publishing brands should prioritize Perplexity because its users are explicitly seeking cited, sourced answers — exactly the audience that values editorial credibility.

Here's the framework I use when advising on where to start:

- New domain, low authority, moderate publishing frequency: Perplexity first, You.com second, Google AI Overviews third as authority builds.

- Established domain, strong organic rankings, lower publishing frequency: Google AI Overviews first, ChatGPT Search second, Bing Copilot third.

- B2B SaaS or enterprise content: Bing Copilot first, ChatGPT Search second, Perplexity third.

- High-volume content operation with quality gates in place: all six simultaneously, with segmented reporting by platform so you're not aggregating citation rates into a number that looks healthy while the pipeline stays flat.
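The four scenarios can be encoded as a small rule function, which is mostly useful for making precedence explicit when a team fits more than one profile. This is a sketch under stated assumptions: the thresholds (under two years old or a domain rating below 40; fewer than four pieces a month) come from the three variables earlier in this section, the `HIGH_VOLUME_POSTS_PER_MONTH` cutoff standing in for "daily or near-daily" is hypothetical, and the order in which the rules are checked is an assumption rather than something the framework specifies.

```python
from __future__ import annotations

from dataclasses import dataclass

ALL_SIX = ["ChatGPT Search", "Perplexity", "Google AI Overviews",
           "Bing Copilot", "You.com", "Brave Leo"]

# Hypothetical stand-in for "daily or near-daily" publishing.
HIGH_VOLUME_POSTS_PER_MONTH = 20


@dataclass
class Profile:
    domain_age_years: float
    domain_rating: int              # 0-100 authority score
    strong_organic_rankings: bool
    posts_per_month: int
    b2b_or_enterprise: bool
    quality_gates_in_place: bool


def engine_priority(p: Profile) -> list[str]:
    """Return engines in the order to optimize for; empty if no rule matches."""
    # Rule precedence below is an assumption, not part of the framework.
    if p.quality_gates_in_place and p.posts_per_month >= HIGH_VOLUME_POSTS_PER_MONTH:
        return list(ALL_SIX)  # all six at once, reported per engine
    if p.domain_age_years < 2 or p.domain_rating < 40:
        return ["Perplexity", "You.com", "Google AI Overviews"]
    if p.b2b_or_enterprise:
        return ["Bing Copilot", "ChatGPT Search", "Perplexity"]
    if p.strong_organic_rankings and p.posts_per_month < 4:
        return ["Google AI Overviews", "ChatGPT Search", "Bing Copilot"]
    return []  # profile not covered by the four scenarios
```

Checking authority before audience means a new B2B domain lands on Perplexity first, in line with Variable 1's point that ChatGPT Search and Bing Copilot are not fast wins for newer sites; a team that weighs it differently can reorder the `if` blocks.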

The segmentation point matters more than most teams realize. We now segment AI visibility reporting by platform instead of aggregating it. A citation on Perplexity and a citation on ChatGPT Search are not the same commercial event. Treating them as equivalent is how you end up with impressive-looking dashboards and flat revenue. If you want a tool that tracks citation rates across platforms separately rather than blending them, the Meev vs Profound comparison breaks down exactly how different tools handle that segmentation problem.
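The arithmetic behind that warning is simple enough to show. This sketch assumes you log one `(engine, cited)` record per tracked prompt run, however you collect it; the function returns both a per-engine citation rate and the blended rate, and the hypothetical records in the usage lines show how a blended number can look healthy while one engine sits at zero.

```python
from __future__ import annotations

from collections import defaultdict
from typing import Iterable


def citation_rates(results: Iterable[tuple[str, bool]]) -> tuple[dict[str, float], float]:
    """Return (per-engine citation rate, blended rate across all runs)."""
    runs: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for engine, cited in results:
        runs[engine] += 1
        hits[engine] += int(cited)
    per_engine = {engine: hits[engine] / runs[engine] for engine in runs}
    total = sum(runs.values())
    blended = sum(hits.values()) / total if total else 0.0
    return per_engine, blended


# Hypothetical log: ten prompt runs per engine.
log = [("Perplexity", True)] * 10 + [("ChatGPT Search", False)] * 10
per_engine, blended = citation_rates(log)
print(blended)      # 0.5
print(per_engine)   # {'Perplexity': 1.0, 'ChatGPT Search': 0.0}
```

A 50% blended rate would pass most dashboard reviews; the per-engine view shows that the platform with the largest referral share is not citing you at all.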

For teams that want to understand the full answer engine optimization picture before committing to a platform strategy, the Meev vs Peec AI comparison covers how citation drift detection works in practice — specifically what happens when an engine that was citing you regularly stops, and how to diagnose whether it's a content quality issue or an index coverage issue. Those are different problems with different fixes, and conflating them wastes months of effort.

One contrarian take worth sitting with: most content marketers are optimizing for citation rate when they should be optimizing for citation quality. A single ChatGPT mention in a response to a high-intent buyer query is worth more than twenty Perplexity citations in informational responses that never convert. The generative engine optimization research from arxiv.org provides a strategic GEO agenda but doesn't resolve this quality-versus-quantity tension — because the answer is specific to your business model, not universal. Before you build an answer engine optimization strategy around any of these six platforms, define what a citation is actually worth to you commercially. Everything else follows from that.

For teams building out their broader AI search visibility infrastructure, the best AI visibility tools roundup for 2026 covers how monitoring platforms integrate with content workflows — because tracking citations you're already earning is a different problem from engineering the conditions that earn new ones. Both matter. Neither one alone is a strategy.

And if you're running a content operation at scale and wondering whether quality-gated auto-publishing can coexist with genuine AI citation visibility, the honest answer is: it can, but the quality gate has to be measuring the right things. The Meev vs Surfer SEO comparison gets into what "quality" actually means in an AI retrieval context versus a traditional on-page SEO context — they're related but not identical, and conflating them is one of the most common mistakes I see in content operations that are otherwise well-run.

Frequently Asked Questions

Can I appear in AI answer engines without ranking on Google first? Yes, but the path differs by platform. Perplexity and You.com index the real-time web independently of Google, so strong content can earn citations without Google rankings. ChatGPT Search and Bing Copilot draw partly on training data and Bing's index, meaning Google rankings are less directly relevant. Google AI Overviews is the exception: its citation pool correlates tightly with traditional Google rankings, so organic SEO performance is a meaningful prerequisite there.

How often do AI answer engines update their source pools? Perplexity and You.com update continuously from live web crawls, meaning fresh content can be cited within days of publication. ChatGPT Search's browsing mode also pulls live results, but the underlying model's training data creates a baseline bias toward historically authoritative sources. Google AI Overviews updates in line with Google's crawl frequency for your domain. Bing Copilot follows Bing's index refresh cycle. For content marketers, this means publishing frequency matters most for Perplexity and You.com, while domain authority matters more for ChatGPT and Google.

What content formats are most likely to earn AI citations? Direct-answer formats consistently outperform in retrieval. FAQ structures, numbered lists with clear claims, definition-first paragraphs, and content that names specific sources and statistics all increase citation probability. The GEO research from arxiv.org confirms that AI search favors earned third-party media over brand-owned content — so the format of your content matters, but where it's referenced matters equally. A structured article cited in a trade publication earns more retrieval weight than the same article sitting alone on your domain.

Is there a difference between being cited and being recommended by an AI engine? Yes, and it's a critical distinction. Being cited means your URL appears as a source for a claim. Being recommended means the AI names your brand as a solution or leader in a category. Only 28% of brands achieve both, according to Semrush's analysis. Citation-building and recommendation-building require different strategies: citations come from structured, authoritative content; recommendations come from consistent unlinked brand mentions in editorially independent contexts. Most content teams are optimizing for citations and wondering why AI engines never recommend them by name.
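When you query engines manually, the cited-versus-recommended split can be tallied per answer. A minimal sketch, assuming you have captured each answer's text and its list of cited URLs by hand or from an export: it treats a source URL on your domain as a citation and a by-name mention in the answer text as a recommendation. That second rule is a simplification, since a mention can be neutral or negative, and the brand `Acme` and domain `acme.example` are hypothetical.

```python
import re
from urllib.parse import urlparse


def _is_brand_url(url: str, domain: str) -> bool:
    """True if the URL's host is the brand domain or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return host == domain or host.endswith("." + domain)


def classify_answer(answer_text: str, cited_urls: list[str], brand: str, domain: str) -> str:
    """Place one AI answer in the cited/recommended quadrant for a brand."""
    domain = domain.lower()
    cited = any(_is_brand_url(u, domain) for u in cited_urls)
    # Approximation: any whole-word mention of the brand counts as a recommendation.
    pattern = rf"(?<!\w){re.escape(brand)}(?!\w)"
    named = re.search(pattern, answer_text, flags=re.IGNORECASE) is not None
    if cited and named:
        return "cited_and_recommended"
    if cited:
        return "cited_only"
    if named:
        return "recommended_only"
    return "absent"
```

A pile of `cited_only` results is the pattern described above: your pages are doing the supporting work while the answer names someone else.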

How do I track which AI engines are citing my content? Manual querying across all six platforms is the starting point but doesn't scale. Dedicated AI search visibility tools like Meev track citations across ChatGPT, Claude, Gemini, Perplexity, and Grok with a hybrid daily and twice-weekly cadence. The key capability to look for is platform-segmented reporting — not an aggregated citation count, but a breakdown by engine so you can see where your citation presence is strong and where it's absent. Aggregated numbers hide the platform-specific patterns that actually drive optimization decisions.

About the Author

Judy Zhou, Head of Content Strategy

Judy Zhou leads content strategy at Meev, where she oversees AI-driven content research and publishing for hundreds of brands. With a background in SEO and editorial operations, she focuses on building content systems that rank on Google, get cited by AI search engines, and drive measurable business results.
