By Judy Zhou, Head of Content Strategy

Key Takeaways

- Standard benchmarks like MMLU and HumanEval don't predict content quality — a model can score in the 90th percentile and still produce generic, unusable copy.

- ChatGPT favors Wikipedia at 47.9% of top citations while Perplexity prioritizes Reddit at 46.7%, meaning your content distribution strategy must differ by AI platform.

- Cost-per-quality-output — calculated as API cost divided by average quality score — is a more actionable ROI metric than cost-per-token alone.

- Run your model comparison chart every 60–90 days; silent model updates can shift quality and consistency scores significantly without any public announcement.

In the spring of 2023, when GPT-4 first landed in enterprise workflows, most teams compared AI models the only way they knew how: read the output, shrug, decide. It was the same informal judgment call editors had used for freelancer pitches for decades. But within eighteen months, the stakes had quietly transformed — models weren't just drafting copy, they were becoming the gatekeepers of citation, visibility, and brand authority inside AI search. The frameworks for predicting output quality never caught up. Until now.

The problem with ai model comparison today isn't a lack of data. It's a lack of signal. Leaderboards show you MMLU scores. Benchmarks show you HumanEval pass rates. Neither tells you whether a model will write a compelling intro, maintain your brand voice across 50 articles, or produce content that earns citations in Perplexity. Those are the outputs that actually move business metrics — and they require a completely different evaluation methodology.

Four things content teams need to know upfront: First, standard benchmarks like MMLU correlate poorly with real-world content quality for subjective writing tasks. Second, ChatGPT and Perplexity have fundamentally different citation behaviors — ChatGPT favors Wikipedia at 47.9% of top citations while Perplexity prioritizes Reddit at 46.7%, which means your content distribution strategy needs to differ by platform. Third, the best-performing model for your use case changes monthly as providers push updates. Fourth, cost-per-quality-output — not cost-per-token — is the metric that actually predicts ROI.

Why Standard Benchmarks Lie to Content Marketers

MMU and HumanEval were designed by researchers to test researchers. MMLU measures knowledge recall across 57 academic subjects. HumanEval measures whether a model can write syntactically correct Python. These are genuinely useful signals if you're building a coding assistant or a trivia engine. They are nearly useless if you're trying to predict whether a model will write a listicle that earns backlinks.

Here's the specific failure mode I keep running into. A model can score in the 90th percentile on MMLU and still produce introductions that open with "In today's rapidly evolving digital landscape..." — the kind of opener that signals to every experienced editor that no human touched this draft. The benchmark measured what the model knows. It didn't measure how the model writes.

The OlympicArena benchmark paper illustrates this gap well. Claude 3.5 Sonnet outperforms GPT-4o on Physics tasks in that evaluation — a genuinely useful signal for scientific content. But that same advantage doesn't transfer to marketing copy, where the variables are tone, specificity, and structural judgment rather than domain knowledge. The benchmark tells you something real. It just doesn't tell you the thing you actually need to know.

There's also the saturation problem. Vellum's leaderboard explicitly excludes pre-April 2024 models because legacy benchmarks like MMLU have become saturated — scores cluster so tightly at the top that they no longer differentiate models meaningfully. When the top ten models all score between 87% and 92% on the same test, you've learned nothing actionable about which one to use for your content workflow.

The contrarian take I'd push here: most ai model comparison chart exercises in marketing teams are theater. Teams screenshot a leaderboard, pick the model at the top, and call it a decision. What they've actually done is outsourced their judgment to a benchmark that was never designed for their use case. The frameworks below are built to fix that.

The 6 Frameworks, Ranked by Practical Signal

I rank these by how reliably each framework predicts real-world content performance — not by how easy they are to run. The hard ones are at the top for a reason.

Framework 1 — Task-Specific Prompt Testing This is the highest-signal framework and the most underused. The methodology is simple: take 5 real content briefs from your actual workflow, run each brief through every model you're evaluating, and grade the outputs blind. No model names visible during grading. Score each output on structure, specificity, tone match, and factual accuracy — four dimensions, 1–5 scale each. The model that wins this test wins for your use case. Not for someone else's. Yours. I've seen teams skip this entirely because it takes two hours. Those same teams spend months wondering why their AI content isn't performing.

Framework 2 — Consistency Scoring Across 10 Runs Run the same prompt ten times. Grade the variance. A model that produces a 4/5 output 70% of the time and a 2/5 output 30% of the time is a worse production tool than a model that produces a reliable 3.5/5 every single run. Consistency is what separates a drafting tool from a production system. The pattern I keep seeing is that models with higher benchmark scores often show more variance on creative tasks — they have a higher ceiling but a lower floor.

Framework 3 — Citation Accuracy Audits This one became non-negotiable for me after the arXiv study analyzing 24,000+ conversations across AI search systems showed that only 12% of AI citations overlap with Google's top 10 results. If your content strategy depends on AI citation — and increasingly it should — you need to know whether the model you're using for research actually cites real sources accurately. The test: give the model 10 factual prompts that have verifiable answers, ask it to cite sources, then manually verify every citation. Models that hallucinate citations at scale will eventually poison your content's credibility.

Framework 4 — Tone Preservation Tests Provide a 500-word brand voice sample. Ask the model to write three new pieces in that voice. Have your editorial team grade them blind against human-written samples. This test reveals something benchmarks completely miss: whether a model can subordinate its own stylistic defaults to yours. Some models are remarkably good at this. Others will sand down every edge until everything sounds like a corporate FAQ.

Framework 5 — Instruction-Following Rate Give the model 20 prompts with specific structural constraints: "write exactly 150 words," "use no more than 3 sentences per paragraph," "include a statistic in the first sentence." Count how many outputs comply with all constraints without requiring a follow-up prompt. This matters enormously for automated publishing workflows where human review is limited. A model with an 85% instruction-following rate in a 1,000-article pipeline produces 150 non-compliant articles that someone has to catch.

Framework 6 — Cost-Per-Quality-Output This is the framework that finally makes the ROI case to finance. Calculate it as: (API cost per 1,000 words) ÷ (average quality score from Framework 1). A model that costs $0.40 per 1,000 words and scores 3.8/5 on quality has a cost-per-quality-output of $0.105. A model that costs $0.15 per 1,000 words and scores 2.1/5 has a cost-per-quality-output of $0.071 — cheaper per token, but worse value per usable output. BenchLM's monthly API cost calculator is useful for the raw cost inputs here.

Wondering which AI model is actually earning citations in your niche right now?

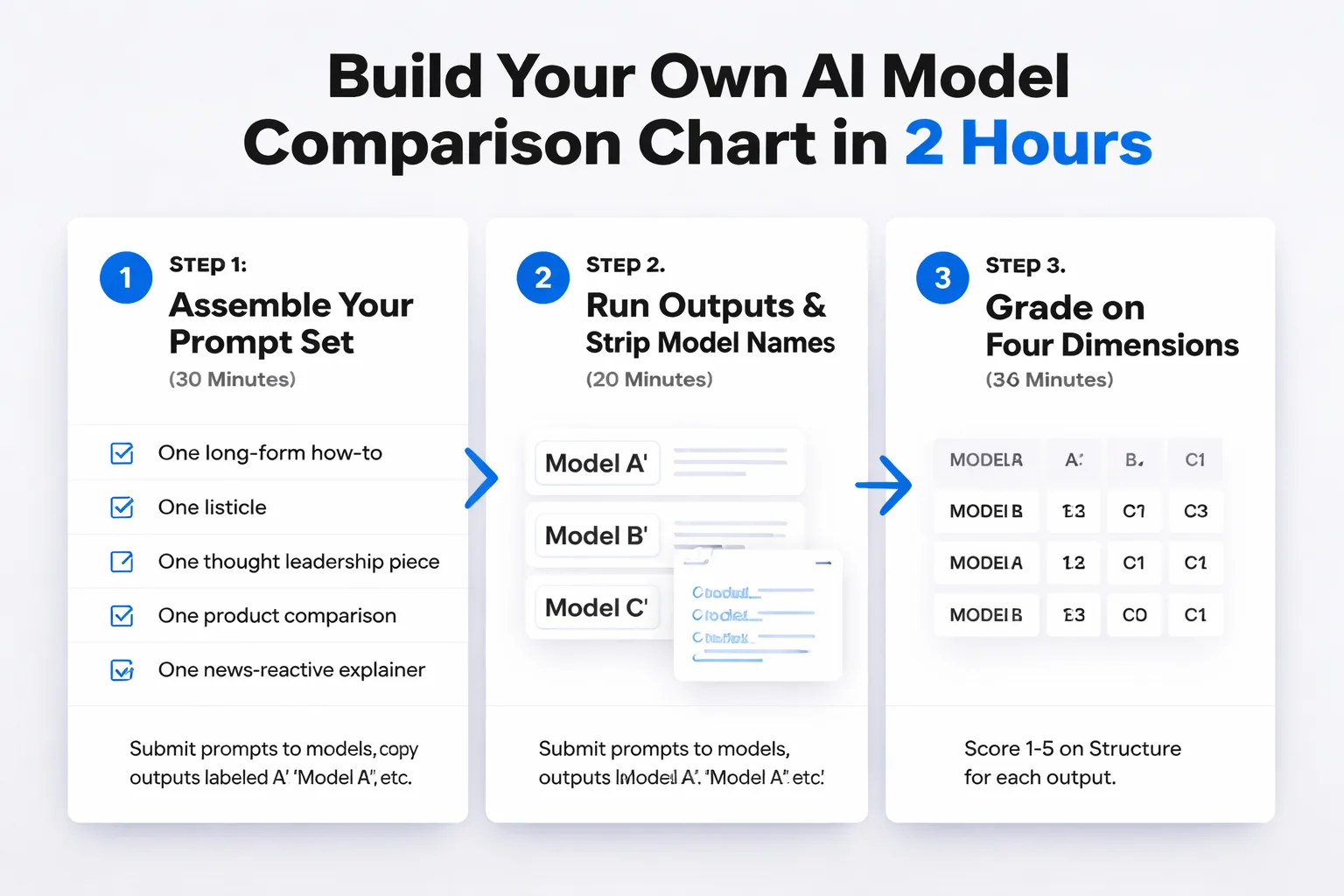

How to Build Your Own Comparison Chart in 2 Hours

A scoring template lives or dies by the prompts you choose. Generic prompts produce generic signal. Here's the repeatable process I use.

Step 1 — Assemble your prompt set (30 minutes) Pull five real briefs from your content calendar. If you don't have briefs, write five prompts that represent your actual use cases: one long-form how-to, one listicle, one thought leadership piece, one product comparison, one news-reactive explainer. These should be prompts you'd actually send to a writer — specific, not generic.

Step 2 — Run outputs and strip model names (20 minutes) Submit each prompt to every model you're evaluating. Copy outputs into a blank document, labeled only as "Model A," "Model B," etc. Do not let the graders know which model produced which output. Blind grading is non-negotiable — I've watched teams unconsciously inflate scores for models they already prefer.

Step 3 — Grade on four dimensions (30 minutes) For each output, score 1–5 on: Structure (does it follow the brief's format?), Specificity (does it include concrete details, not vague claims?), Tone Match (does it sound like your brand?), and Factual Density (does it include verifiable information rather than filler?). Average the four scores for a single quality number per output.

Step 4 — Build the comparison chart (20 minutes) Create a simple table: models as columns, frameworks as rows. Populate with scores from your testing. Add a "Cost-Per-Quality-Output" row using API pricing from Artificial Analysis, which tracks live price-per-1M-token across providers. The final row should be a weighted average — weight Framework 1 (task-specific quality) at 40%, Framework 2 (consistency) at 25%, and split the remaining 35% across the other four based on your workflow's specific needs.

Step 5 — Share and version-control (20 minutes) This chart has a shelf life of about 60 days before model updates make it stale. Put it in a shared doc, date it clearly, and assign someone to re-run the test quarterly. The teams I've seen get the most value from this process treat it like a recurring audit, not a one-time project. For a deeper look at how this connects to broader AI content creation workflows, the mechanics are similar — systematic evaluation beats intuition every time.

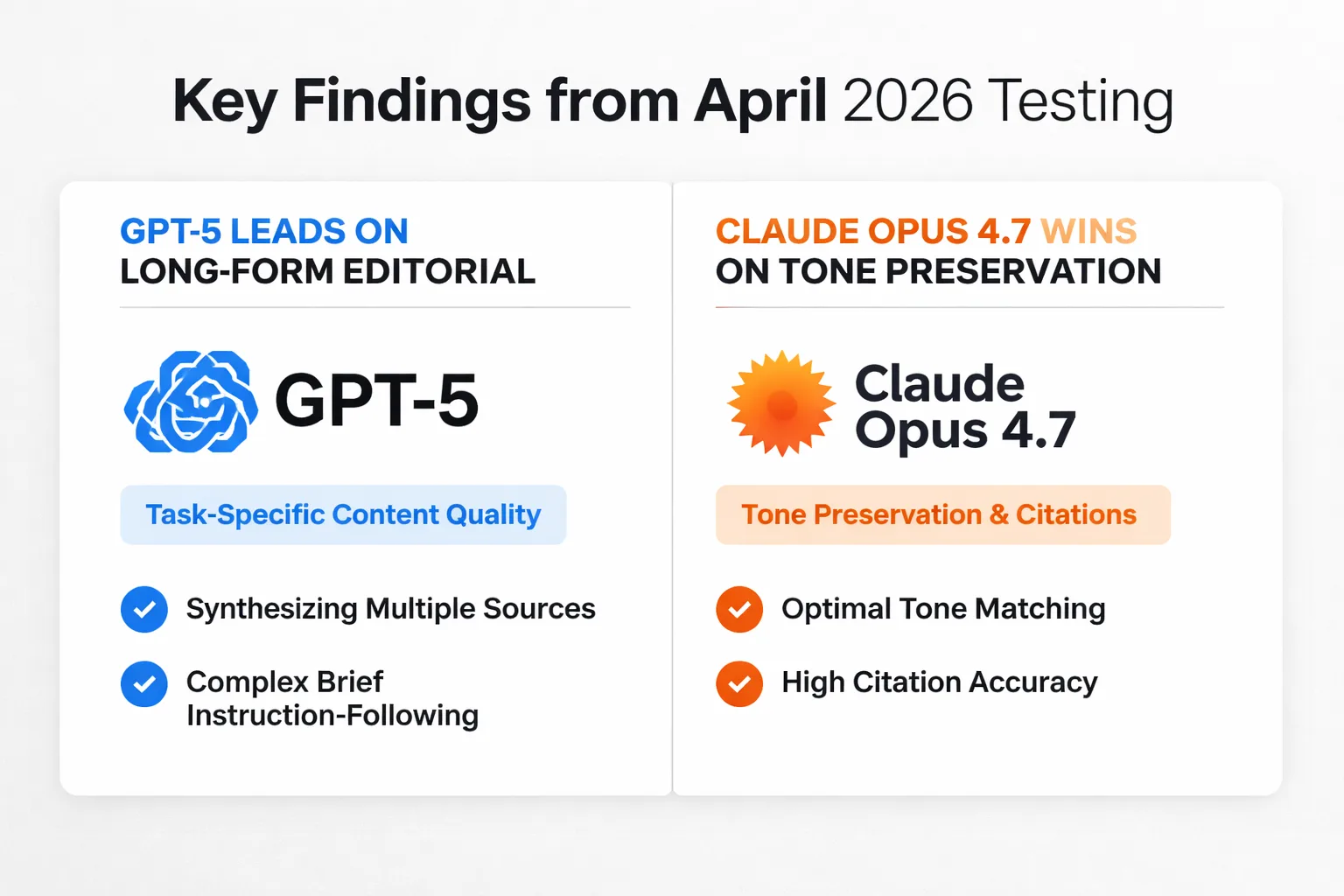

Which Models Win on Which Framework Right Now

I want to be explicit about the caveat before the data: this snapshot reflects April 2026 testing conditions. Model providers push updates without announcement. A model that leads on consistency today may regress after a silent fine-tuning update next month. Treat this as a starting point for your own testing, not a permanent ranking.

For task-specific content quality (Framework 1), GPT-5 currently leads on long-form editorial — specifically on pieces that require synthesizing multiple sources into a coherent argument. The output structure is more predictable, and the instruction-following rate on complex briefs is noticeably higher than earlier versions.

Claude Opus 4.7 wins on tone preservation (Framework 4). This isn't surprising — Anthropic has consistently prioritized instruction-following and stylistic adaptability. For brands with a distinctive editorial voice, Claude Opus 4.7 is the model I'd run through Framework 4 first. It also performs well on citation accuracy, though the arXiv citation patterns research is a useful reminder that what a model cites and what AI search engines pull from are separate questions entirely.

Gemini 2.5 currently leads on multilingual consistency — not a framework I listed above, but worth flagging for teams publishing in multiple languages. For English-only workflows, its advantage on the six frameworks is less pronounced.

Mistral sits in an interesting position. On cost-per-quality-output (Framework 6), it frequently wins for high-volume, lower-complexity content: product descriptions, FAQ expansions, category page copy. The quality ceiling is lower than GPT-5 or Claude Opus 4.7, but the economics are substantially better for tasks that don't require nuanced argumentation. For teams running AI visibility tools alongside content production, Mistral's cost profile makes it worth including in your comparison chart even if it doesn't top the quality rankings.

The one finding that stopped me cold from the benchmarking research: Artificial Analysis tracks live latency and throughput measurements updated 8× daily, and the speed rankings shift more than the quality rankings do. If your workflow has latency constraints — real-time content generation, live publishing pipelines — run Framework 6 with latency as a variable, not just API cost.

How this connects to AI search visibility: The model you use for content creation affects your citation potential, but it's not the whole story. The arXiv study of 366,000+ citations found that only 9% of citations reference news sources, and citation patterns differ dramatically by platform — Perplexity's Reddit preference vs. ChatGPT's Wikipedia preference means the same content gets cited differently depending on where users are searching. Model choice is one variable. Content structure, source attribution, and distribution strategy are the others. For teams serious about AI search visibility as a channel, model selection is the starting point, not the finish line.

For teams comparing platforms that track this end-to-end — from model selection through to citation monitoring — the Meev vs Peec AI comparison breaks down how citation tracking capabilities differ across tools, which is the measurement layer that makes the model selection work visible.

Frequently Asked Questions

How often should I re-run my AI model comparison chart? Every 60–90 days at minimum. Model providers push silent updates that can shift quality and consistency scores significantly without any public announcement. If you're running a high-volume content operation, assign one person to own the quarterly re-test as a recurring calendar item — not a project that happens when someone remembers.

Do benchmark leaderboards like MMLU predict content quality at all? For factual accuracy tasks — research synthesis, data summarization, technical explainers — there's a weak positive correlation. For tone, structure, and brand voice tasks, the correlation is essentially zero. Use benchmarks to eliminate models that fail basic capability thresholds, then use task-specific prompt testing to make the final selection.

Which framework matters most for SEO and GEO content specifically? Citation accuracy (Framework 3) and instruction-following rate (Framework 5) matter most for content targeting AI search visibility. Citation accuracy determines whether your research layer is trustworthy. Instruction-following rate determines whether your structured content formats — FAQ blocks, numbered lists, bolded claims — survive the generation process intact, which directly affects how AI engines extract and cite your content.

Can I use a free tool to build my comparison chart? Yes. WhatLLM provides a free four-dimension scoring framework across 310+ models with daily pricing updates — useful for the cost inputs in Framework 6. BenchLM covers 178 benchmarks with a confidence indicator showing how much verified data backs each score. Neither replaces your own task-specific testing, but both are solid starting points for narrowing the field before you invest time in manual evaluation.

How do I handle it when two models score nearly identically? Run Framework 2 (consistency scoring) as the tiebreaker. In a production content workflow, reliability beats ceiling performance. A model that produces a consistent 3.8/5 across 10 runs is more valuable than one that produces 4.5/5 twice and 2.0/5 three times. The variance tells you more about production risk than the average does.

About the Author

Judy Zhou, Head of Content Strategy

Judy Zhou leads content strategy at Meev, where she oversees AI-driven content research and publishing for hundreds of brands. With a background in SEO and editorial operations, she focuses on building content systems that rank on Google, get cited by AI search engines, and drive measurable business results.

Meev tracks AI citations across ChatGPT, Claude, Gemini, Perplexity, and Grok — so you can see which models and which content are driving your brand's visibility in AI search.