By Judy Zhou, Head of Content Strategy

Key Takeaways

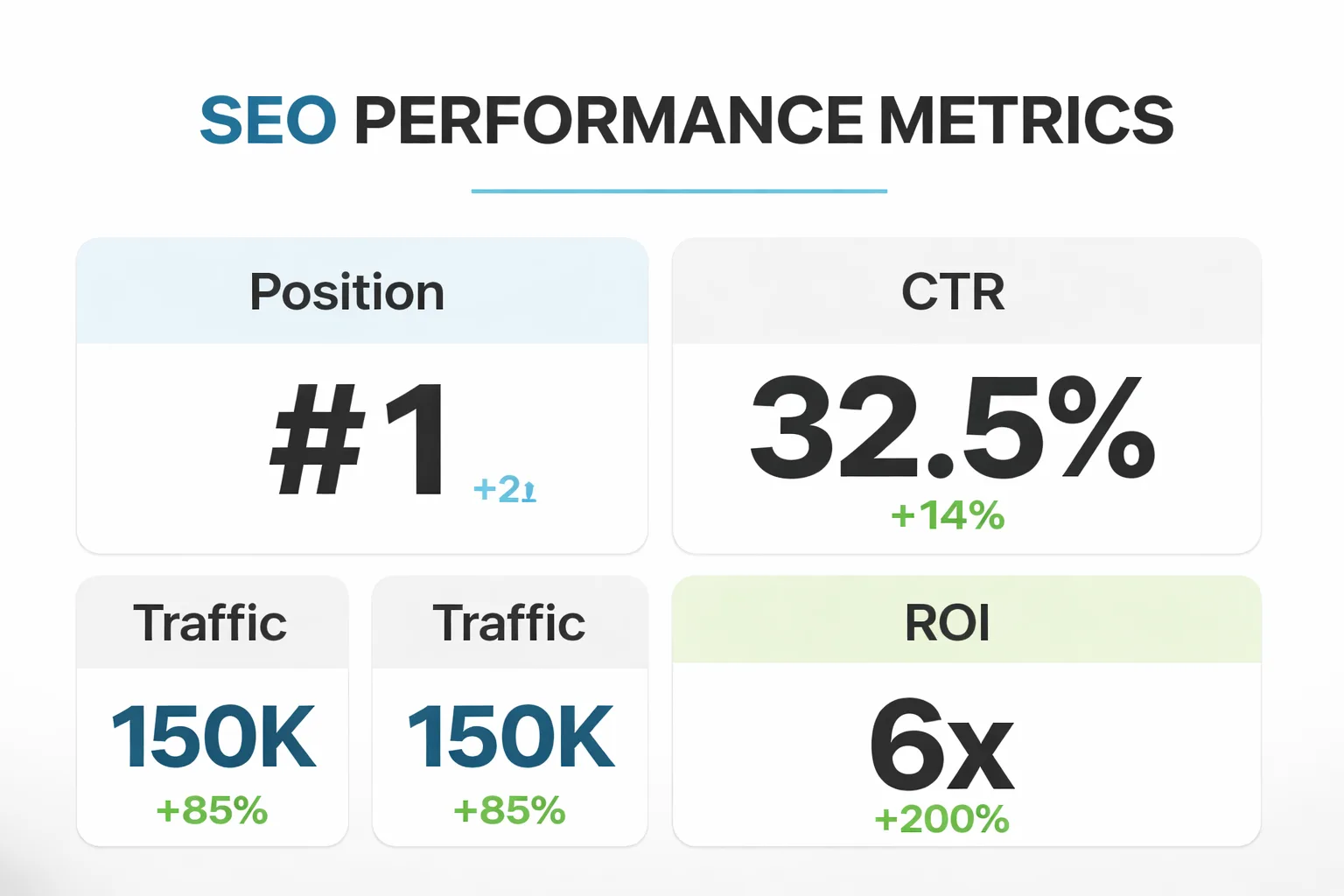

- Google AI Overviews appeared in 6.49% of keywords in January 2025, peaked at 25% by July, and settled below 16% by November. Organic CTR drops 61% on queries where an overview appears.

- 58% of U.S. Google users had run at least one search that produced an AI summary as of March 2025, making AI visibility essential for local businesses today.

- Brands cited in AI Overviews see CTR 35% higher than traditional organic results, a 96-point swing over uncited competitors, whose CTR falls 61%.

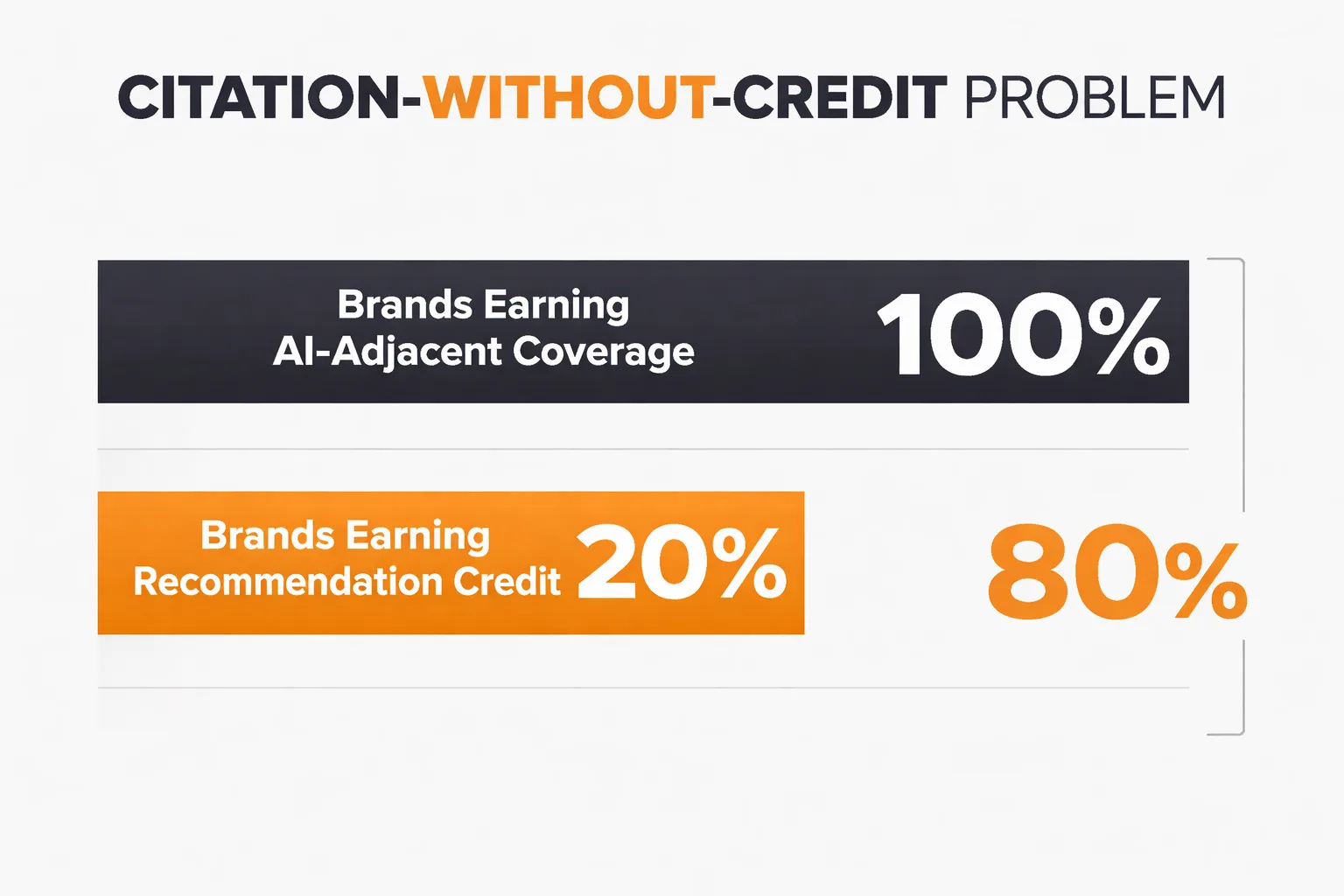

- Close the mention-citation gap, where roughly 80% of brands get mentioned but not credited, by optimizing for direct citations in AI responses to local intent queries.

When Google launched its Search Generative Experience in May 2023, most local business owners dismissed it as a curiosity — a flashy experiment that wouldn't disrupt the search habits that had governed their marketing for two decades. They were wrong. Within eighteen months, the way people discovered local services had begun a structural shift that accelerated sharply into 2025 and 2026. Understanding where that transformation started — and the specific moment AI answers began replacing ranked results in local intent queries — is the foundation for everything you need to do next.

AI visibility is no longer a future-state concern for local businesses in 2026. It's the present operating condition. Google AI Overviews appeared in 6.49% of all keywords in January 2025, peaked at roughly 25% by July, and settled below 16% by November, a volatile rollout that caught most local operators flat-footed. According to Pew Research Center, 58% of U.S. Google users conducted at least one search producing an AI summary as of March 2025. The stakes are asymmetric: organic CTR drops 61% for queries where a Google AI Overview appears, but when your brand is cited inside that overview, CTR runs 35% higher than traditional organic results, according to data surfaced by Frase's GEO Playbook. That 96-point swing between cited and uncited brands is what the mention-citation gap costs, and closing it is the entire project.
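To make the swing concrete, here is a minimal sketch of the arithmetic. It assumes both percentages are relative changes against the same baseline organic CTR, which is my reading of the figures rather than something the cited studies state. The 2.0% baseline is a made-up number for illustration.

```python
# Hypothetical baseline: organic CTR for a query with no AI Overview.
baseline_ctr = 0.020  # 2.0%, illustrative only, not from the cited studies

uncited_change = -0.61  # relative CTR drop when an AI Overview appears
cited_change = 0.35     # relative CTR lift when the brand is cited in it

uncited_ctr = baseline_ctr * (1 + uncited_change)
cited_ctr = baseline_ctr * (1 + cited_change)

# The swing is the distance between the two outcomes,
# expressed in points of the baseline CTR.
swing_points = round((cited_change - uncited_change) * 100)

print(f"uncited: {uncited_ctr:.2%}, cited: {cited_ctr:.2%}, swing: {swing_points} points")
```

The swing is measured against the baseline, so the absolute difference in clicks depends on how much traffic the query had to begin with.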

The Mention-Citation Gap Is Real and Widening

Most local businesses have heard of AI Overviews by now. Fewer understand that being mentioned in a source an AI engine pulls from is categorically different from being cited by name in the AI's response. I've started calling this the citation-without-credit problem, and the Ahrefs Brand Radar data puts it at roughly 80% of brands — meaning four in five businesses earning AI-adjacent coverage get none of the recommendation credit.

What's happening mechanically: large language models separate the source layer from the recommendation layer. If your business name isn't co-occurring with the specific claim in the original content, the model has no reliable signal to surface you when someone asks "best [service] near me" or "who should I call for [problem] in [city]." Domain authority doesn't fix this. I've watched campaigns with solid DR 70+ placements produce zero AI visibility lift because the pitches were optimized for backlink acquisition, not mention co-occurrence. The fix isn't more placements. It's rewriting the pitch brief so the resulting article anchors claims to your business name explicitly, not just links back.

For a local HVAC company or dental practice, this isn't abstract. If a prospective patient asks ChatGPT or Perplexity for a recommendation in your metro area and your name doesn't appear in the response, you've lost that lead before the search even registered in your analytics.

Generative Engine Optimization for Local Businesses in 2026: The Four Root Causes

Generative Engine Optimization for local businesses fails for predictable reasons. Understanding them saves months of wasted effort.

Root cause one: treating GEO as an extension of link building. Traditional SEO rewarded domain authority signals. AI retrieval systems reward named entity co-occurrence. These are different problems requiring different tactics. A backlink from a regional news outlet matters less than whether that outlet's article says "according to [Your Business Name], homeowners in [City] should expect..." versus "according to a local contractor."

Root cause two: ignoring the retrieval pipeline architecture. I spent a good chunk of last year advising our team to submit structured feedback to AI labs and optimize for citation-friendly formats — and I'll be honest, I can't point to a single measurable outcome from any of it. What actually changed my thinking was digging into how Reddit ended up with 40.1% citation share across major LLMs. It wasn't schema markup or a clever outreach campaign. It was a $60M licensing deal with Google and roughly a $70M agreement with OpenAI that embedded Reddit's content directly into retrieval pipelines. That's not a content strategy — that's a commercial infrastructure play. Most local businesses can't replicate it, but understanding it clarifies where the real leverage is: structured, retrievable content that appears in the sources AI engines already have licensed access to.

Root cause three: content that fails the uniqueness test. I've reviewed sites that got hit with Google's Scaled Content Abuse manual action, and the pattern is nearly identical every time. Thousands of pages, near-identical entity coverage, no behavioral depth signals, nothing that tells a crawler why page 847 exists separately from page 846. I ran a content audit on one scaled site using a simple test: could I extract one unique fact per page that didn't appear on any sibling page? Roughly 60% of the inventory failed. Those pages didn't survive. Volume itself isn't the liability. Undifferentiated volume is.
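The uniqueness test is easy to sketch once each page has been reduced to a set of facts. The version below is a rough illustration, not the audit tooling I used. The function name, the URLs, and the sample facts are hypothetical, and it assumes the facts have already been extracted and normalized upstream, which is the hard part.

```python
from collections import Counter


def pages_without_unique_fact(page_facts: dict[str, set[str]]) -> list[str]:
    """Return pages whose every fact also appears on at least one sibling page."""
    # Count how many pages each fact appears on (sets, so once per page).
    fact_counts = Counter(fact for facts in page_facts.values() for fact in facts)
    failing = []
    for url, facts in page_facts.items():
        # A page passes if at least one of its facts appears nowhere else.
        if not any(fact_counts[fact] == 1 for fact in facts):
            failing.append(url)
    return failing


# Hypothetical inventory of location pages and their extracted facts.
inventory = {
    "/hvac-denver": {"24/7 emergency service", "serves 2,000 homes a year in Denver"},
    "/hvac-aurora": {"24/7 emergency service", "licensed and insured"},
    "/hvac-boulder": {"24/7 emergency service", "licensed and insured"},
}

failing = pages_without_unique_fact(inventory)
fail_rate = len(failing) / len(inventory)
print(failing, f"{fail_rate:.0%}")
```

Exact string matching will miss paraphrased duplicates, so treat the fail rate from a check like this as a floor.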

Root cause four: E-E-A-T signals that don't translate to AI source selection. Google's helpful content system rewards demonstrated experience. AI engines reward the same signals, but the translation mechanism is different — they're looking for named authors with verifiable credentials, specific claims with attributed sources, and content that answers the exact question a user would type into a chat interface. A "meet the team" page doesn't accomplish this. A blog post where your head technician explains the three most common reasons furnaces fail in your climate, with their name attached, does.

How Does LLM Citation Tracking Work for Local Businesses?

LLM citation tracking is the practice of systematically querying AI engines with the questions your target customers would ask, then recording which sources get cited and whether your brand appears in the response. It's a different measurement framework than rank tracking, and most local businesses haven't set it up yet.

The mechanics are straightforward. You build a query library — 50 to 150 questions mapped to your service categories and geographic markets. You run those queries across ChatGPT, Perplexity, Google AI Overviews, and Claude on a regular cadence (weekly or bi-weekly is realistic). You record three things: whether your brand is named in the response, whether a source you own or earned coverage in is cited, and which competitors are getting the recommendation. Birdeye analyzed over 2,400 real-world searches across ChatGPT, Gemini, Claude, Perplexity, DeepSeek, and Grok in their local search accuracy report — the methodology is a useful template for building your own tracking cadence.
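One way to keep those three recordings consistent is a small log structure. This is a sketch under my own assumptions: the `Observation` fields, the engine labels, and the competitor name are hypothetical, and the responses are assumed to be read and entered by a person or by whatever tool you already use. Nothing here calls an AI engine.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Observation:
    engine: str               # e.g. "perplexity"; labels are our own convention
    query: str
    brand_named: bool         # is the brand named in the response text?
    owned_source_cited: bool  # is a page we own or earned coverage in cited?
    competitors_named: list[str] = field(default_factory=list)


def summarize(observations: list[Observation]) -> dict[str, dict[str, float]]:
    """Per-engine share of responses that name the brand or cite its sources."""
    grouped = defaultdict(list)
    for obs in observations:
        grouped[obs.engine].append(obs)
    return {
        engine: {
            "named_rate": sum(o.brand_named for o in rows) / len(rows),
            "cited_rate": sum(o.owned_source_cited for o in rows) / len(rows),
        }
        for engine, rows in grouped.items()
    }


# Hypothetical entries from one tracking run.
log = [
    Observation("perplexity", "best hvac repair in denver", True, True, ["Acme Heating"]),
    Observation("perplexity", "furnace not heating denver", False, True),
    Observation("chatgpt", "best hvac repair in denver", False, False, ["Acme Heating"]),
]
summary = summarize(log)
print(summary)
```

Keeping `brand_named` and `owned_source_cited` as separate fields is deliberate, because a response can cite your page while recommending someone else.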

The pattern I keep seeing in our own campaigns: Perplexity cites sources more explicitly and more frequently than ChatGPT, which makes it a better early signal for whether your content is entering the retrieval layer. Articles that mentioned our clients' brand names in the context of a specific claim got picked up in Perplexity responses; articles that linked to the brand but named it generically did not. If you're building a Perplexity citations strategy before tackling ChatGPT, that's the right sequence, because Perplexity's citation behavior is more transparent and therefore more actionable.

For tracking tools, Meev vs Profound is worth reviewing if you're evaluating AI search visibility platforms — the comparison covers how different tools handle citation monitoring versus just mention tracking, which is a meaningful distinction.

Build Content That AI Engines Actually Retrieve

The framing I keep seeing in GEO circles sets up a false binary: either citations are locked into training data and nothing you do matters, or direct outreach to AI labs moves the needle. Neither conclusion leads content teams somewhere useful. What the Semrush citation studies — pulling from 150,000+ LLM citations — actually show is that citation patterns are dynamic enough to be influenced, but the influence mechanism is retrieval pipeline design: what sources get indexed and surfaced at query time.

For local businesses, that means four concrete content moves.

Write answer-dense service pages. Every service page should open with a 40-60 word direct answer to the most common question about that service. "How much does [service] cost in [city]?" "How long does [service] take?" "What should I ask before hiring a [professional] in [state]?" AI engines extract from the top of content. If your answer isn't in the first 200 words, it often doesn't get pulled at all.

Attach named author credentials to every piece. A blog post attributed to "Staff Writer" carries no E-E-A-T weight for AI source selection. A post attributed to "Maria Chen, licensed electrician with 14 years in the Phoenix metro area" carries signal. This isn't vanity — it's entity architecture. The author name, the credential, and the geographic specificity all function as structured signals that AI engines use to assess source reliability.

Create FAQ content that mirrors natural language queries. Not "Frequently Asked Questions About Our Plumbing Services" with five bullet points. Actual questions typed into ChatGPT: "Is it worth fixing a 15-year-old water heater or should I replace it?" "What's the difference between a tankless and a traditional water heater for a family of four?" These match the query patterns AI engines are trained to answer. Getting your content into Google AI Overviews starts with matching the question format your customers are already using.

Use schema markup for local entities. LocalBusiness schema, Service schema, and FAQPage schema are the three that matter most for local AI visibility. They don't guarantee citation, but they structure your content in the format AI retrieval systems prefer. Think of schema as putting your content in the right file format — the AI engine can parse it faster and more reliably.
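For the schema move, a short sketch of generating the markup may help. It builds JSON-LD with schema.org's `LocalBusiness`, `Service`, and `FAQPage` types. The business name, phone number, and FAQ text are placeholders, the helper name is hypothetical, and attaching services through `makesOffer` is one reasonable modeling choice among several.

```python
import json


def local_business_jsonld(name, city, region, phone, services, faqs):
    """Build LocalBusiness and FAQPage JSON-LD objects as plain dicts."""
    business = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "telephone": phone,
        "address": {
            "@type": "PostalAddress",
            "addressLocality": city,
            "addressRegion": region,
        },
        # Each service is wrapped in an Offer so it can hang off the business.
        "makesOffer": [
            {"@type": "Offer", "itemOffered": {"@type": "Service", "name": s}}
            for s in services
        ],
    }
    faq_page = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return [business, faq_page]


# Placeholder values for illustration only.
markup = json.dumps(
    local_business_jsonld(
        name="Example Plumbing Co.",
        city="Phoenix",
        region="AZ",
        phone="+1-555-0100",
        services=["Water heater replacement"],
        faqs=[("Is it worth fixing a 15-year-old water heater?", "Placeholder answer text.")],
    ),
    indent=2,
)
print(markup)
```

The output goes inside a `<script type="application/ld+json">` tag on the page. Run it through a structured data validator before publishing.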

Publisher Pitching for AI Citations: What Actually Works

The most frustrating pattern in publisher pitching campaigns: a brand earns a citation in a high-authority outlet, the AI platform pulls that content as a source — and then recommends a competitor by name in the actual response. The pitch template matters more than the target publication.

Here's what a citation-optimized pitch looks like versus a standard link-building pitch.

A standard pitch gets you: "Local homeowners are increasingly concerned about energy costs, according to a recent survey." Your brand is linked in the byline. The AI engine reads the article, uses it as a source, and surfaces the generic claim with no brand attribution.

A citation-optimized pitch gets you: "According to [Your Business Name], which services over 2,000 homes annually in the Denver metro area, homeowners who upgrade to a smart thermostat see an average 18% reduction in heating costs." The brand name, the geographic specificity, the specific claim, and the credential are all co-occurring in a single sentence. That's what the AI engine needs to surface your brand when someone asks about energy savings in Denver.
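You can screen published coverage for that co-occurrence with a crude check like the one below. It is a rough proxy, not a model of how any AI engine reads text: it splits on sentence punctuation and treats any digit as a stand-in for a specific claim. "Summit Heating" is a hypothetical brand name.

```python
import re


def has_cooccurring_attribution(text: str, brand: str, place: str) -> bool:
    """True if any single sentence names the brand, the place, and a number."""
    # Naive sentence split on ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text)
    for sentence in sentences:
        lowered = sentence.lower()
        if (
            brand.lower() in lowered
            and place.lower() in lowered
            and re.search(r"\d", sentence)
        ):
            return True
    return False


standard = (
    "Local homeowners are increasingly concerned about energy costs, "
    "according to a recent survey. Learn more from Summit Heating."
)
optimized = (
    "According to Summit Heating, which services over 2,000 homes annually "
    "in the Denver metro area, homeowners who upgrade to a smart thermostat "
    "see an average 18% reduction in heating costs."
)

print(has_cooccurring_attribution(standard, "Summit Heating", "Denver"))
print(has_cooccurring_attribution(optimized, "Summit Heating", "Denver"))
```

The standard placement fails because the brand and the claim sit in different sentences, which is the failure mode described above.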

The brief you send to journalists has to specify this. Most journalists default to generic attribution because it reads more neutrally. Your job is to give them a quote so specific and credible that using it verbatim is easier than paraphrasing it. For guidance on getting content cited in Perplexity specifically, these five repeatable steps are worth working through before you build your outreach list.

One more thing on outreach: regional and trade publications outperform national outlets for local AI citation. A mention in a city business journal or a trade association newsletter that covers your service category will be pulled more reliably for local queries than a buried mention in a national publication. Smaller distribution, higher relevance signal.

Why Most Teams Skip the Quality Gate

This is the part of the GEO workflow that gets rationalized away fastest, and it's the part that matters most for long-term AI visibility.

Quality-gated content publishing means that before any piece goes live, it passes a minimum threshold: one unique data point, one named author with verifiable credentials, one answer-dense opener, and schema markup applied. That's it. Four checks. Most teams skip this because the production pressure is real — clients want volume, schedules are tight, and the quality gate feels like friction.
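The four checks are simple enough to automate as a pre-publish step. Here is a minimal sketch, assuming a `Draft` record that your own CMS or generation pipeline would populate. The field names are hypothetical, and the opener check reuses the 40-60 word guideline from the service page advice earlier.

```python
from dataclasses import dataclass


@dataclass
class Draft:
    body: str
    author_name: str
    author_credential: str
    unique_data_points: list[str]
    has_schema: bool


def quality_gate(draft: Draft) -> dict[str, bool]:
    """Run the four pre-publish checks and report each result by name."""
    # Treat the first blank-line-separated paragraph as the opener.
    first_paragraph = draft.body.strip().split("\n\n")[0]
    opener_words = len(first_paragraph.split())
    return {
        "unique_data_point": len(draft.unique_data_points) >= 1,
        "named_author": bool(
            draft.author_name.strip() and draft.author_credential.strip()
        ),
        "answer_dense_opener": 40 <= opener_words <= 60,
        "schema_applied": draft.has_schema,
    }


# Hypothetical draft with an opener that is far too short.
draft = Draft(
    body="We rewire older homes.\n\nCall us for details.",
    author_name="Maria Chen",
    author_credential="licensed electrician, 14 years in the Phoenix metro area",
    unique_data_points=["placeholder: a figure from the firm's own job logs"],
    has_schema=True,
)
results = quality_gate(draft)
failed = [check for check, ok in results.items() if not ok]
print(failed)
```

A gate like this only confirms that the fields are present. Whether the data point is actually unique still needs something like the sibling-page comparison from the audit.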

Here's what skipping it costs: Google has been issuing Scaled Content Abuse manual actions with increasing frequency. The pattern I've seen across affected sites is always the same: undifferentiated volume at scale, not AI-generated prose. The threshold is informational, not stylistic. Teams spend energy on AI detection avoidance when the actual risk is content that can't pass the "what's unique about this page?" test. If your content workflow can't inject a unique data point at generation time, it's building toward a manual action, not away from one.

For teams running auto-blog engine workflows, the quality gate has to be baked into the generation step, not bolted on afterward. Reviewing 500 posts manually for uniqueness after the fact isn't operationally viable. The injection of location-specific data, named author attribution, and service-specific claims has to happen at the template level.

When GEO Advice Breaks Down for Local Businesses

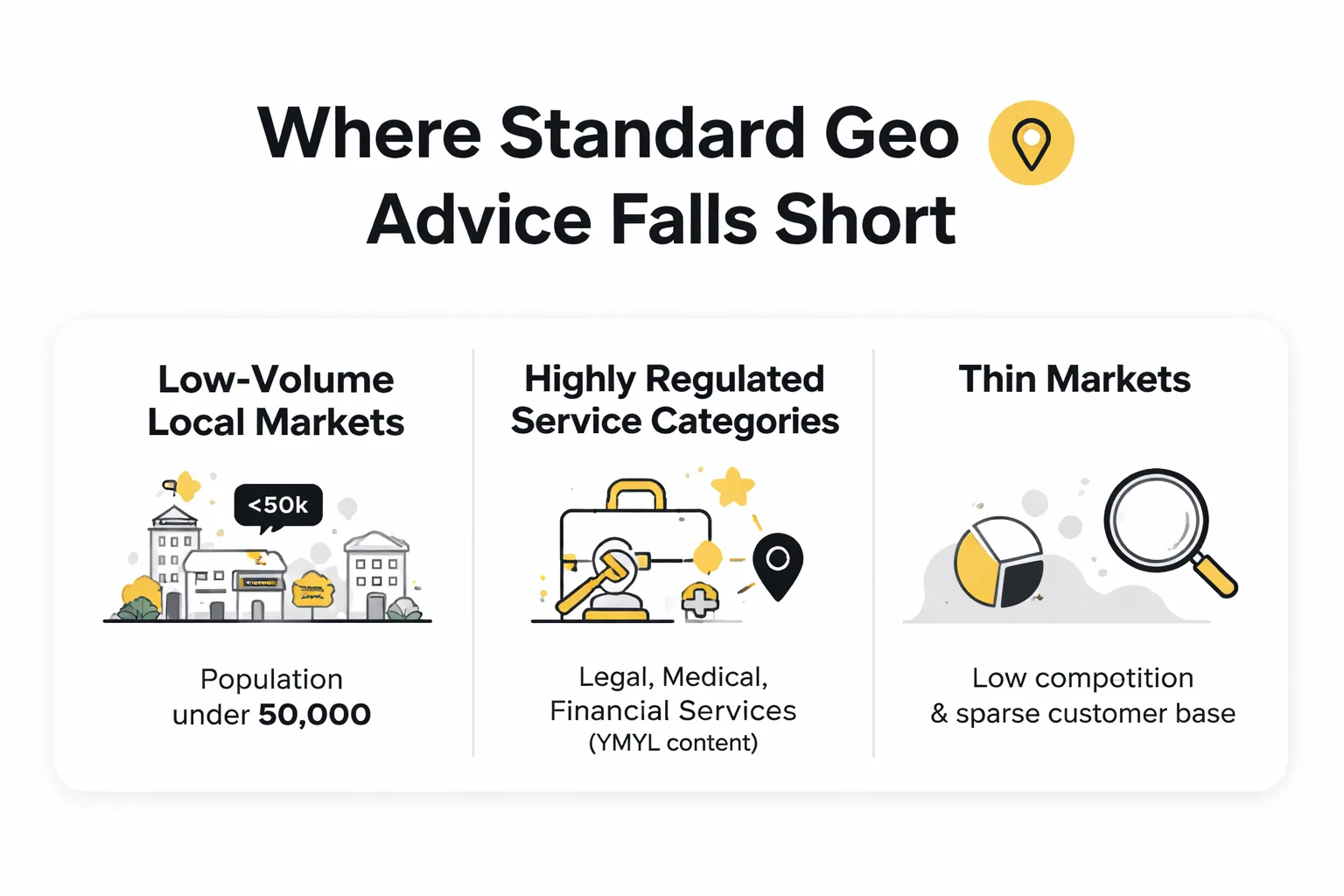

Not every GEO tactic that works for B2B SaaS translates to local service businesses. Here are three specific scenarios where the standard playbook fails.

Low-volume local markets. If your service area has a population under 50,000, AI engines may not have enough local query volume to develop strong citation patterns for your category. The standard "build topical authority" advice assumes enough search volume to create measurable signal. In thin markets, direct Google Business Profile optimization and local review volume often outperform GEO tactics because AI engines are pulling review aggregates and GBP data for low-volume local queries.

Highly regulated service categories. Legal, medical, and financial services face a different citation environment. AI engines apply additional scrutiny to YMYL (Your Money or Your Life) content, and the E-E-A-T bar is higher. A dental practice can't just publish FAQ content — the author credentials, the clinical claims, and the source citations all need to meet a higher standard. The tactics work, but the timeline is longer and the content investment is heavier.

Single-location businesses competing against franchise networks. Franchise brands have centralized content operations, national PR budgets, and established entity signals across hundreds of locations. A single-location competitor trying to out-publish a franchise on GEO is fighting the wrong battle. The better play is hyper-local specificity — content that covers your specific neighborhood, your specific service history in that market, and your specific team credentials — which franchise content can't replicate at scale.

How Should Local Businesses Track AI Search Visibility?

AI search visibility tracking for local businesses requires a different stack than traditional rank tracking. Here's the minimum viable setup.

Start with a query library built around your service categories and geographic modifiers. "Best [service] in [city]" is the obvious one, but the higher-value queries are the problem-framed ones: "what to do when [problem] happens in [city]," "how much does [service] cost in [state]," "is [service] worth it for [specific situation]." These match the actual prompts your customers are typing into AI engines.
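Expanding a handful of templates is the quickest way to build that library. The sketch below is illustrative: the template wording, the placeholder names, and the sample services, problems, and cities are all assumptions to swap for your own.

```python
from itertools import product

# Templates modeled on the query patterns above; wording is illustrative.
TEMPLATES = [
    "best {service} in {city}",
    "how much does {service} cost in {city}",
    "what to do when {problem} happens in {city}",
]


def build_query_library(services, problems, cities):
    """Expand every template against the service, problem, and city lists."""
    queries = set()
    for template, service, problem, city in product(
        TEMPLATES, services, problems, cities
    ):
        # str.format ignores keyword arguments a template does not use,
        # and the set removes the duplicates that creates.
        queries.add(template.format(service=service, problem=problem, city=city))
    return sorted(queries)


library = build_query_library(
    services=["furnace repair", "AC installation"],
    problems=["the furnace stops heating", "the AC leaks water"],
    cities=["Denver", "Aurora"],
)
print(len(library))
for query in library[:3]:
    print(query)
```

Three templates across two services, two problems, and two cities already yield 12 queries, so a few more of each reaches the 50 to 150 range quickly.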

Run those queries weekly across at least three platforms: ChatGPT, Perplexity, and Google AI Overviews. Claude is worth adding if you have the capacity — Claude visibility tracking has become more relevant as Claude's market share in conversational search has grown through 2026. Record brand mentions, competitor mentions, and which sources are cited in each response.

The metric to watch isn't just "did we appear" — it's the ratio of mentions to citations. If your brand appears in 30% of relevant responses but is named as a recommendation in only 8%, you have a mention-citation gap to close. That gap closes through the publisher pitching and content quality work described above, not through more content volume.
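As a worked version of that example, the sketch below computes the gap from raw counts. The function name and the 100-response sample size are assumptions for illustration; the 30% and 8% figures are the ones from the paragraph above.

```python
def mention_citation_gap(total_responses: int, mentioned: int, recommended: int):
    """Return mention rate, recommendation rate, and the gap between them."""
    if total_responses <= 0:
        raise ValueError("total_responses must be positive")
    mention_rate = mentioned / total_responses
    recommendation_rate = recommended / total_responses
    return mention_rate, recommendation_rate, mention_rate - recommendation_rate


# Hypothetical tracking run: 100 relevant responses reviewed.
mention_rate, recommendation_rate, gap = mention_citation_gap(100, 30, 8)

# Share of mentions that turn into a named recommendation.
conversion = recommendation_rate / mention_rate

print(f"mentioned: {mention_rate:.0%}, recommended: {recommendation_rate:.0%}")
print(f"gap: {gap:.0%}, mention-to-recommendation conversion: {conversion:.0%}")
```

Track the conversion figure over time alongside the gap, since it stays meaningful even when overall mention volume shifts.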

For teams evaluating platforms to automate this tracking, the Meev vs Sight AI comparison covers the quality-gated content and citation outreach dimensions that matter most for local businesses specifically.

The Contrarian Take on GEO for Local Businesses

Everyone in the GEO space is telling local businesses to optimize for AI citation. I want to say something more uncomfortable: for most local businesses in 2026, Google Business Profile and review volume still drive more measurable leads than any GEO tactic.

AI Overviews appear in roughly 18% of searches according to Originality.AI's LLM visibility data. For local service queries specifically, the AI Overview appearance rate is lower because these queries often trigger map pack results instead. The Semrush 2025 data actually shows that click-through rates for keywords with AI Overviews have steadily risen since January — which means the zero-click panic driving a lot of GEO investment may be overstated for local intent queries.

This doesn't mean ignore GEO. It means sequence your investment correctly. Get your Google Business Profile fully optimized, get your review velocity consistent, get your NAP citations clean. Then layer GEO tactics on top. The businesses I've seen waste money on GEO are the ones who treated it as a replacement for local SEO fundamentals rather than an addition to them.

The pattern I keep seeing: local businesses that win in AI search aren't doing exotic things. They're doing the fundamentals better than competitors — named authors, specific claims, geographic entity signals, and consistent review presence — and those fundamentals happen to satisfy both traditional local SEO and AI retrieval requirements simultaneously.

FAQ

How long does it take to see results from Generative Engine Optimization for a local business? Most local businesses see measurable changes in AI citation tracking within 60-90 days of implementing structured content changes — specifically named author attribution, answer-dense openers, and FAQ schema. Publisher pitching campaigns take longer: expect 90-120 days from pitch to publication to AI retrieval. The timeline accelerates significantly if you're targeting regional publications that AI engines already pull from regularly for local queries.

Does Google Business Profile data appear in AI Overviews for local queries? Yes, and this is underutilized. Google AI Overviews for local service queries frequently pull GBP data including business hours, review summaries, and service descriptions. Keeping your GBP fully populated — services listed with descriptions, Q&A section answered, photos updated — functions as structured data that AI Overviews can extract directly. This is often faster to implement than content-based GEO tactics.

What's the difference between GEO and AEO for local businesses? Generative Engine Optimization (GEO) focuses on getting your brand cited in AI-generated responses across LLMs like ChatGPT, Perplexity, and Google AI Overviews. Answer Engine Optimization (AEO) is the older practice of optimizing for featured snippets and voice search answers. In practice for local businesses, the tactics overlap significantly — both reward direct-answer content, FAQ structure, and schema markup. GEO adds the publisher pitching and brand mention co-occurrence layer that AEO didn't require.

How do different AI models select local business sources differently? Perplexity cites sources most explicitly and pulls from live web content, making it the most transparent platform for citation tracking. ChatGPT blends training data with live retrieval (via browsing) and tends to favor well-known brand names with broad web presence. Google AI Overviews prioritize sources that already rank well organically and have strong GBP signals. Claude tends to be more conservative with local recommendations and cites sources less frequently. This means your citation strategy should prioritize Perplexity for early signal detection, then work backward to the other platforms.

Should local businesses worry about Scaled Content Abuse penalties when using AI writing tools? The risk is real but specific. Google's Scaled Content Abuse action targets undifferentiated volume — pages that exist without unique informational value — not AI-generated prose per se. A local business publishing 50 location pages that each contain identical content with only the city name swapped is at risk. A local business using AI tools to draft content that's then enriched with location-specific data, named author credentials, and unique service claims is not. The quality gate described in this article (one unique data point, one named author, one answer-dense opener, schema applied) is sufficient protection for most local content operations.

About the Author

Judy Zhou, Head of Content Strategy

Judy Zhou leads content strategy at Meev, where she oversees AI-driven content research and publishing for hundreds of brands. With a background in SEO and editorial operations, she focuses on building content systems that rank on Google, get cited by AI search engines, and drive measurable business results.
