By Judy Zhou, Head of Content Strategy

Key Takeaways

- Wikipedia and Reddit together account for over 25% of ChatGPT citations across 600,000 events analyzed in the 5W Citation Source Audit Q1 2026.

- ChatGPT cites only about 50% of the URLs it retrieves in responses, according to Ahrefs research, creating a hidden visibility gap.

- 67.82% of sources cited in Google AI Overviews do not rank in the top 10 for the same query, per Goodfirms analysis.

- No single source dominates across the seven AI platforms covered in Tinuiti's Q1 2026 AI Citation Trends Report, so track citations per platform and per vertical instead of relying on traditional SEO metrics alone.

In November 2022, when OpenAI released ChatGPT to the public, search marketers filed it under 'interesting experiment' and moved on. Within eighteen months, major publishers were reporting double-digit referral traffic drops as users began resolving queries inside the chat window itself. By 2025, Google's own AI Overviews had restructured the SERP so fundamentally that the SEO playbook required a rewrite. Now, in 2026, a new tracking discipline has crystallized from that disruption: ChatGPT Visibility Tracking. Understanding it means understanding every inflection point that made traditional search measurement insufficient.

ChatGPT Visibility Tracking 2026 is the practice of monitoring when, how, and why large language models cite or mention your brand, content, or domain in generated responses. According to the 5W Citation Source Audit Q1 2026, Wikipedia and Reddit together account for over 25% of ChatGPT citations across roughly 600,000 citation events analyzed. Ahrefs research found that ChatGPT only cites approximately 50% of the URLs it actually retrieves in responses. A Goodfirms analysis found that 67.82% of sources cited in Google AI Overviews do not rank in Google's top 10 for the same query. And according to Tinuiti's Q1 2026 AI Citation Trends Report, citation patterns vary significantly by platform and vertical, with no single source dominating across all seven AI platforms they tracked. The measurement gap between traditional SEO rankings and AI citation behavior is real, it's widening, and most content teams are still flying blind.

The Measurement Gap Nobody Warned You About

Here's the uncomfortable truth I keep running into as I oversee content strategy across hundreds of brands: ranking well and being AI-visible are now measuring completely different things. I used to treat position one as a reasonable proxy for AI citation likelihood. That assumption is wrong, and the data is unambiguous about it.

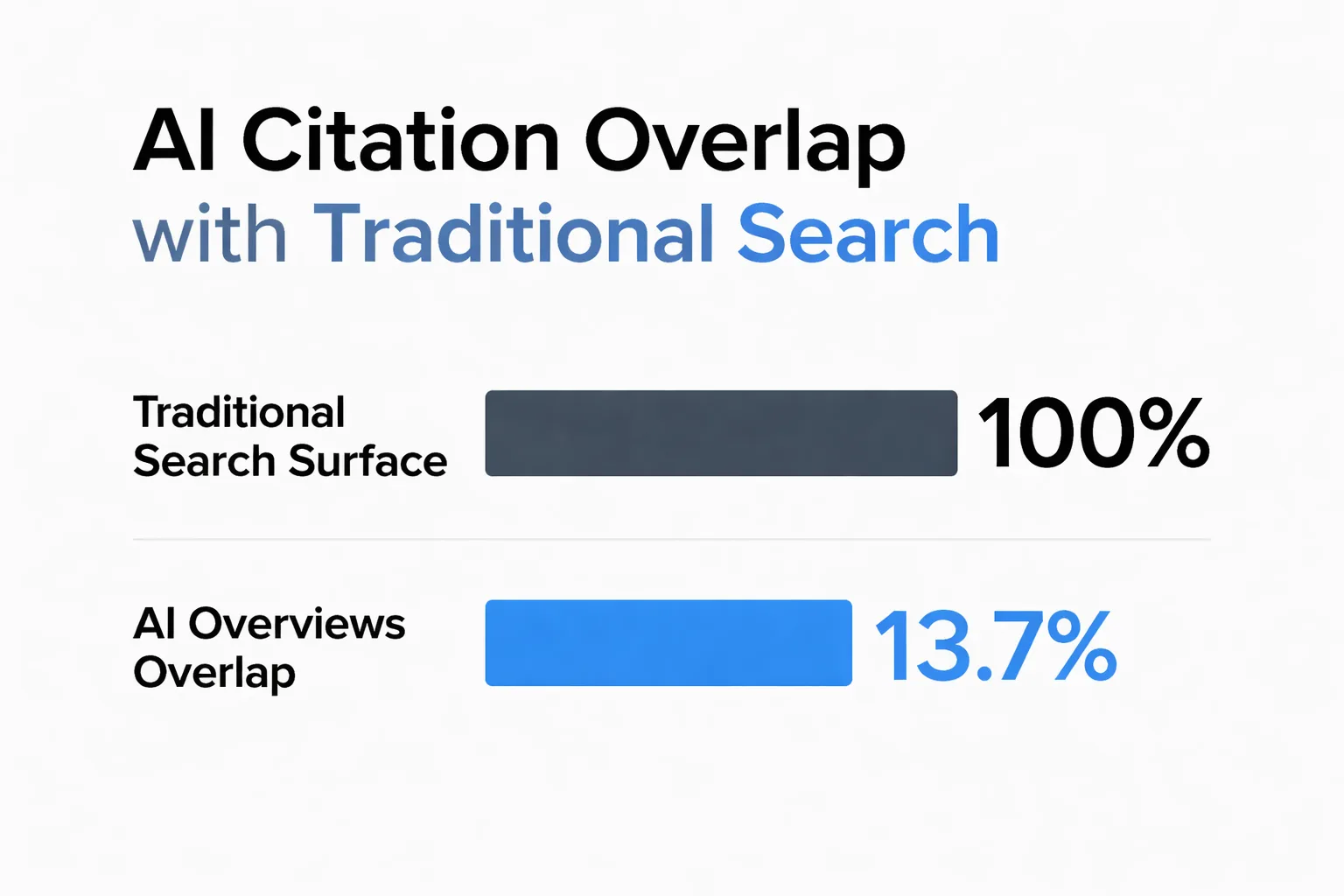

Ahrefs analyzed 730,000 AI responses and found only 13.7% overlap between what Google's traditional search surface cites and what AI Overviews pulls from. You can build a content pipeline that wins traditional rankings and still be architecturally invisible to AI retrieval, not because the content failed quality checks, but because it was optimized for the wrong retrieval system entirely.

The pattern I keep seeing is that content teams discover this gap only after they notice referral traffic collapsing. By then, the structural problem has been baked into their content architecture for months.

How LLMs Actually Select Sources

Most explanations of AI citation behavior treat it like a black box. It isn't. The selection mechanics are knowable, and understanding them is the foundation of any serious ChatGPT Visibility Tracking 2026 practice.

Large language models don't browse the web the way a search crawler does. When a model like ChatGPT or Perplexity generates a cited response, it's drawing from a combination of its training corpus, real-time retrieval (where enabled), and a ranking layer that scores candidate sources for relevance and credibility signals. The arXiv paper on Generative Engine Optimization by Chen, Wang, Chen, and Koudas documents something practitioners need to internalize: AI search engines show a systematic bias toward earned media over brand-owned content and social content. Your company blog, no matter how well-written, is structurally disadvantaged compared to a Wikipedia entry or a Reddit thread discussing the same topic.

This is why the 5W Citation Source Audit numbers hit so hard. Wikipedia and Reddit together hold 25%+ of ChatGPT's citation share. According to OtterlyAI's AI Citation Economy Report 2026, community platforms like Reddit and Quora capture 52.5% of citations versus 47.5% for brand domains. And the top 15 domains capture 68% of total AI citation share. That's a winner-take-most distribution that makes the long tail of brand content structurally marginal.

The second mechanism worth understanding is what I call answer-burial. AI retrieval systems scan for the most direct answer in the first retrievable chunk of a document. If your content buries the actual answer in paragraph four, after two sentences of topic framing and a definition nobody asked for, the piece is functionally skipped by AI retrieval regardless of how well it ranks or how long it is. I've started auditing content specifically for this: if the direct answer isn't in the first 60 to 80 words, it's invisible to AI context-window scanning. The fix isn't complicated, but most content templates are still structured the old way: introduction first, payoff later.
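The audit described above can be roughed out in a few lines of Python. This is a heuristic sketch, not a model of how any retrieval system actually chunks a page: it assumes you supply the phrase that constitutes the direct answer, and it uses the 80-word upper bound from the paragraph above. The function names and the `WORD_BUDGET` constant are illustrative.

```python
import re
from typing import Optional

# Assumption: the upper end of the 60-80 word window is used as the cutoff.
WORD_BUDGET = 80


def first_answer_word_index(text: str, answer_phrase: str) -> Optional[int]:
    """Return the 1-based word position where answer_phrase starts, or None if absent."""
    match = re.search(re.escape(answer_phrase), text, flags=re.IGNORECASE)
    if match is None:
        return None
    # Count the whitespace-separated words that come before the match.
    return len(text[:match.start()].split()) + 1


def is_answer_buried(text: str, answer_phrase: str, budget: int = WORD_BUDGET) -> bool:
    """True when the phrase is missing or starts after the word budget."""
    index = first_answer_word_index(text, answer_phrase)
    return index is None or index > budget
```

Running `is_answer_buried` over the opening of each article, with the answer phrase taken from the brief, gives a quick list of pieces to restructure. It only checks position, so a human still has to judge whether the opening actually answers the query.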

Third: claim distinctiveness matters more than claim volume. The citation hallucination problem compounds this. The GhostCite benchmark tested 13 LLMs across 40 domains and found hallucination rates ranging from 14% to 95% depending on the model. A competitor can publish a thin paraphrase of your original research, and an LLM can cite their version as the authoritative source while your original goes unattributed. The structural fix I keep coming back to is making claims so distinctively formatted (specific stat plus named methodology plus dated finding) that paraphrasing without attribution becomes harder to execute cleanly.

Why Citation Volume Is the Wrong Success Metric

I used to think getting cited by AI search engines was the win. Build authoritative content, optimize for LLM visibility, watch the referral traffic arrive. What I actually found was that citation and traffic are almost completely decoupled.

The Tow Center ran 1,600 test queries through AI search engines and found that even when publishers were cited, referral traffic was negligible. That tracks exactly with what I see in analytics across the brands I work with: brand mentions in AI responses, zero click-through. The model summarizes your work, maybe names you, and the user never leaves the interface. A mention in a Perplexity response is closer to a footnote in a book nobody opens than a backlink.

So what does winning in AI search actually look like in 2026?

The honest answer is that the field hasn't fully resolved this. But the practitioners making real progress are tracking three things beyond raw citation count: citation context (is the brand mentioned as the authoritative source or as a passing reference?), mention-citation conversion rate (how often does a brand mention in AI responses include a linked citation versus a bare name drop?), and citation consistency across platforms. Tinuiti tracked citation patterns across seven AI platforms and nine commercial categories in Q1 2026 and found no single platform dominated across all contexts. That means your AI visibility strategy has to be multi-platform by design, not an afterthought.

The Mention-Citation Gap and How to Close It

The mention-citation gap is the delta between how often your brand appears in AI-generated responses and how often it appears with an actual linked citation. Closing that gap is the most actionable lever in LLM Citation Tracking 2026, and it's the one most teams ignore because it requires editorial work, not just technical optimization.
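To make the definition concrete, here is a minimal sketch of the two numbers involved: the gap itself and the mention-citation conversion rate. It assumes you already have, per platform, a count of responses that name the brand and a count of those that also link to it. The `PlatformCounts` class and both function names are hypothetical, and any figures you pass in are your own sampled data, not anything from the studies cited here.

```python
from dataclasses import dataclass


@dataclass
class PlatformCounts:
    platform: str
    mentions: int          # responses that name the brand at all
    linked_citations: int  # subset of mentions that also link to the brand's domain


def mention_citation_gap(counts: PlatformCounts) -> int:
    """Mentions that did not come with a linked citation."""
    return counts.mentions - counts.linked_citations


def conversion_rate(counts: PlatformCounts) -> float:
    """Share of mentions that include a linked citation (0.0 when there are no mentions)."""
    if counts.mentions == 0:
        return 0.0
    return counts.linked_citations / counts.mentions
```

Tracking both is deliberate: the raw gap shows how much unattributed exposure exists, while the rate lets you compare platforms with very different mention volumes.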

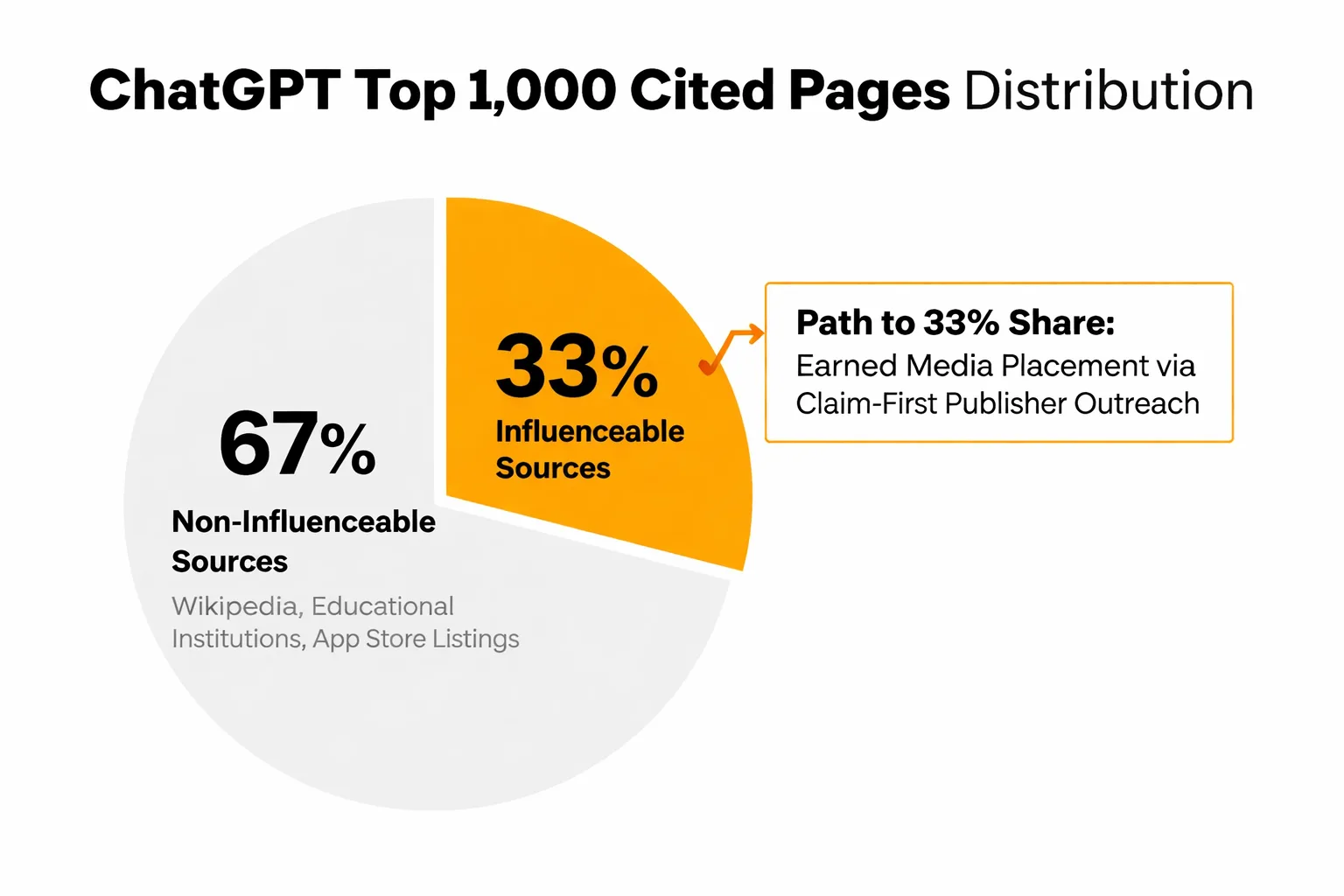

Here's what the citation distribution data tells us about where to focus. Ahrefs found that 67% of ChatGPT's top 1,000 cited pages come from sources that are essentially non-influenceable: Wikipedia entries, educational institution content, app store listings. That leaves 33% of citation share in territory where brand content can actually compete. The path into that 33% runs through earned media placement, not owned content optimization.

The strategy that's showing real traction is what I'd describe as claim-first publisher outreach. Instead of pitching your brand, you pitch a specific, citable finding: a named statistic, a methodology, a dated benchmark. When that finding gets picked up by a publication that already sits in AI citation pools, you've effectively seeded the LLM training and retrieval corpus with an attributed version of your claim. It's slower than publishing on your own domain. It works better.

Reddit's trajectory makes this concrete. Josh Blyskal's analysis of 100M+ ChatGPT responses found Reddit citations grew from 1% to 3.3% of ChatGPT responses in six months, while Wikipedia citations dropped from 11% to 3.9% in the same period. Human-generated, opinion-based content in community platforms is gaining citation share at the expense of encyclopedic sources. Brands that participate authentically in those communities, rather than just publishing on their own domains, are building citation infrastructure that the LLMs are actively rewarding.

Are you tracking where your brand appears in ChatGPT and Perplexity responses, or just hoping your SEO rankings are enough?

How Does AI Model Comparison Affect Your Citation Strategy?

Not all AI platforms cite the same way, and treating them as interchangeable is a strategic mistake. The arXiv GEO paper documents systematic differences in how AI search engines source information, but the practitioner-level reality is messier: citation behavior varies by platform, query type, and vertical.

Perplexity is structurally different from ChatGPT in one important way: it cites sources by default in nearly every response, with live linkable citations that users can follow. ChatGPT's citation behavior is more selective, and Ahrefs data shows it only cites approximately 50% of the URLs it retrieves. Google AI Overviews operates differently again, pulling from a source pool where 67.82% of cited pages don't rank in the traditional top 10. Claude tends toward structured research tasks and shows different citation preferences than ChatGPT for the same queries, though no published benchmark currently isolates Claude versus ChatGPT citation rates with controlled methodology.

What this means practically: you need platform-specific visibility tracking, not a single aggregate score. A brand that's well-cited by Perplexity may be nearly invisible in ChatGPT responses for the same topic, because the retrieval mechanisms differ. I'd recommend starting with ChatGPT visibility tracking and Perplexity citation tracking as separate measurement workstreams before trying to build a unified dashboard. The platforms are different enough that aggregating them prematurely hides the signal you need.
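A small sketch shows why the aggregate hides the signal. It assumes each sampled response is reduced to a `(platform, cited)` pair, and the sample below is invented purely for illustration. The function names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def citation_rate_by_platform(observations: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Share of sampled responses per platform that cited the brand."""
    totals: Dict[str, int] = defaultdict(int)
    cited: Dict[str, int] = defaultdict(int)
    for platform, was_cited in observations:
        totals[platform] += 1
        cited[platform] += int(was_cited)
    return {platform: cited[platform] / totals[platform] for platform in totals}


def aggregate_citation_rate(observations: Iterable[Tuple[str, bool]]) -> float:
    """Single blended rate across all platforms (0.0 for an empty sample)."""
    rows = list(observations)
    if not rows:
        return 0.0
    return sum(int(was_cited) for _, was_cited in rows) / len(rows)


# Invented sample: strong on one platform, weak on the other.
sample = (
    [("perplexity", True)] * 8 + [("perplexity", False)] * 2
    + [("chatgpt", True)] * 1 + [("chatgpt", False)] * 9
)
per_platform = citation_rate_by_platform(sample)
blended = aggregate_citation_rate(sample)
```

In this made-up sample the blended rate lands in the middle and describes neither platform, which is the argument for keeping the workstreams separate until you understand each one.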

For Claude AI visibility, the pattern I keep seeing is that structured, research-grade content with explicit methodology statements performs better than conversational content, even when the conversational version ranks higher in traditional search. That's a meaningful divergence from the SEO optimization model most teams are running.

Why Scaled Content Kills AI Visibility

This is the contrarian take I'll stand behind: the auto-blog playbook that dominated 2023 and 2024 is now actively damaging AI citation prospects, not just failing to help them.

The mechanism is what practitioners call the Mt. AI curve. Scaled AI content gets a traffic spike for two to three months as Google's freshness signals reward new content. Then Google's quality threshold enforcement kicks in, and the traffic collapses. Google's March 2024 core update deindexed hundreds of sites publishing AI-generated content at scale without editorial oversight. But the AI visibility damage is worse than the SEO damage, because LLMs are specifically trained to weight human-generated, experience-based content over templated outputs.

Reddit's citation growth from 1% to 3.3% in six months isn't a coincidence. It's a signal that AI systems are actively rewarding the human-layer credibility that scaled content lacks. The Ahrefs finding that 86.5% of top-ranking pages contain some AI content with near-zero correlation to ranking position tells you that AI content isn't the problem. Scaled AI content without editorial oversight is the problem. The distinction matters enormously for anyone running an auto-blog workflow.

The brands I see maintaining strong AI visibility are running quality-gated publishing: every piece goes through an editorial pass that adds first-person observations, named methodologies, and specific dated findings before it publishes. That's not a small operational change. It's a fundamental rethink of what a content pipeline is for. If you're comparing tools that support this approach, the Meev vs. Profound comparison breaks down which platforms actually enforce quality gates versus which ones just claim to.

How Do You Actually Track ChatGPT Visibility?

ChatGPT Visibility Tracking 2026 requires a different instrumentation stack than traditional SEO analytics. Here's the measurement framework I've found most reliable.

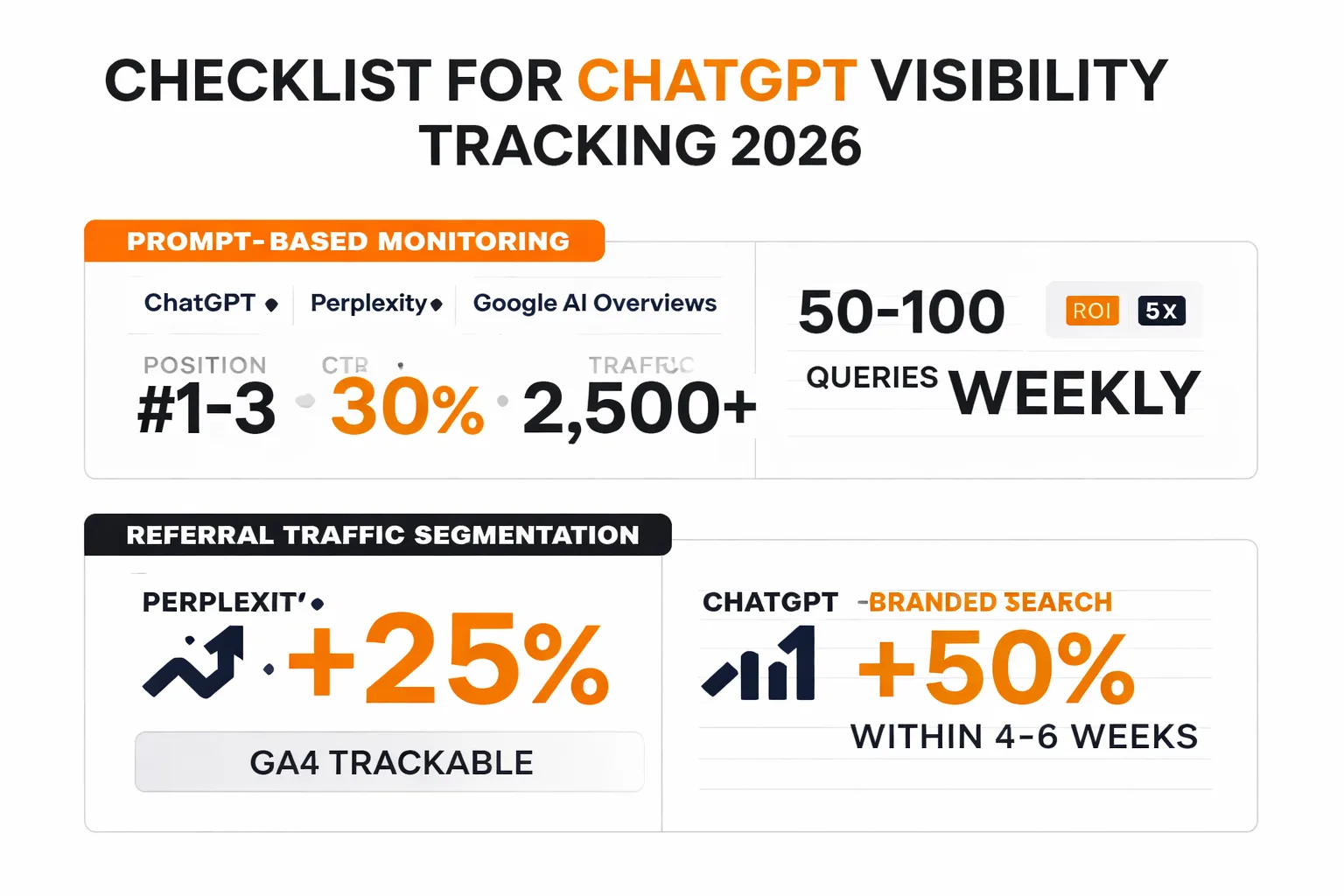

Start with prompt-based monitoring. Build a set of 50 to 100 queries that represent your target topics, and run them through ChatGPT, Perplexity, and Google AI Overviews on a weekly cadence. Track whether your domain appears in responses, whether it's cited with a link, and what context surrounds the mention. This is manual at first, but it gives you ground truth that no third-party tool can replicate.
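If you log those weekly runs in a spreadsheet or script, the record can be as simple as the sketch below. It assumes you paste or export each response as plain text. It does not call any platform API, and the `Observation` fields, the `classify_response` function, the brand "Acme" and the domain `example.com` are all placeholders.

```python
import re
from dataclasses import dataclass
from datetime import date


@dataclass
class Observation:
    run_date: date
    platform: str
    query: str
    mentioned: bool  # brand name appears in the response text
    linked: bool     # a URL on the brand's domain appears in the response text


def classify_response(response_text: str, brand: str, domain: str,
                      platform: str, query: str, run_date: date) -> Observation:
    """Record whether a saved response names the brand and whether it links to the domain."""
    mentioned = re.search(rf"\b{re.escape(brand)}\b", response_text, re.IGNORECASE) is not None
    # Match the domain or any subdomain, but not look-alikes such as example.com.evil.net.
    url_pattern = rf"https?://(?:[\w-]+\.)*{re.escape(domain)}(?![\w-])(?!\.[\w-])"
    linked = re.search(url_pattern, response_text, re.IGNORECASE) is not None
    return Observation(run_date, platform, query, mentioned, linked)
```

The surrounding context of each mention still needs a human read. This only captures the two yes/no fields, which is enough to compute mention and citation counts week over week.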

Layer in referral traffic segmentation. AI-driven referral traffic from Perplexity is trackable in GA4 because Perplexity sends referral headers. ChatGPT traffic is harder to isolate because users often navigate directly after reading a response. The proxy metric I use is branded search volume as a leading indicator: if AI mentions are increasing brand awareness, you should see branded query volume rise within four to six weeks.
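For teams exporting raw referrer URLs rather than working in the GA4 interface, a classifier along these lines can do the segmentation. The hostname list is an assumption to verify against your own logs, and as noted above, a ChatGPT label here will only capture sessions that arrived with a referrer, not users who navigated directly.

```python
from urllib.parse import urlparse

# Assumption: hostnames to treat as AI referrers; confirm against your own referral data.
AI_REFERRER_HOSTS = {
    "perplexity.ai": "perplexity",
    "chatgpt.com": "chatgpt",
    "chat.openai.com": "chatgpt",
}


def classify_referrer(referrer_url: str) -> str:
    """Label a referrer URL as an AI platform, 'direct' (no referrer), or 'other'."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if not host:
        return "direct"
    for suffix, label in AI_REFERRER_HOSTS.items():
        # Accept the bare host and any subdomain such as www.perplexity.ai.
        if host == suffix or host.endswith("." + suffix):
            return label
    return "other"
```

Sessions labeled "direct" are where the branded-search proxy earns its keep, since that bucket is where untagged AI-influenced visits would end up.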

For Google AI Overviews tracking, the measurement challenge is that Google doesn't expose AI Overview citation data in Search Console. You're working from manual query sampling and third-party tools that scrape SERP features. Only 14% of marketers currently track AI visibility in any systematic way, which means the competitive advantage of doing it well is still significant.

The schema markup question comes up constantly in these conversations. I'll be direct: the claimed 3.2x lift in AI citation frequency from structured data implementation that circulates in GEO communities is not reliable. When Ahrefs tracked 1,885 pages before and after schema addition, the citation lift disappeared entirely. Schema correlates with cited pages because high-authority sites use schema AND get cited, but the schema isn't doing the causal work. Don't build your AI visibility strategy around it.

Putting This Into Practice With the Right Tools

Generative Engine Optimization 2026 is not a single tactic. It's a measurement discipline, an editorial standard, and a distribution strategy running simultaneously. Most teams try to solve it with a single tool purchase and wonder why nothing changes.

The tool landscape for AI search visibility has matured significantly in 2026. Platforms now track citation frequency across multiple LLMs, monitor brand mention context, and flag the mention-citation gap in near real-time. If you're evaluating options, the Meev vs. Sight AI comparison covers quality-gated content and citation outreach specifically, which is the combination most teams underinvest in. For teams coming from traditional SEO tools, the Meev vs. Copy.ai comparison and Meev vs. Jasper comparison are useful for understanding where auto-blog workflows end and AI visibility strategy begins.

At Meev, where I oversee content strategy across hundreds of brands, the pattern we've found most reliable is this: treat AI citation as an editorial outcome, not a technical one. The brands winning citation share in 2026 are the ones publishing fewer pieces with more distinctive claims, placing those claims in earned media channels that already sit in LLM citation pools, and tracking the gap between mention and citation as a primary KPI rather than an afterthought.

The 83% of AI-generated answer queries that resolve without a click-through aren't going back to traditional search behavior. That traffic is gone. What remains is the brand authority signal that accumulates when your claims are cited consistently, accurately, and in the right context across AI surfaces. That's what ChatGPT Visibility Tracking 2026 is actually measuring. And right now, most of your competitors aren't measuring it at all.

Frequently Asked Questions

What tools are available for ChatGPT Visibility Tracking in 2026?

Several platforms now offer AI search visibility monitoring, including brand mention tracking across ChatGPT, Perplexity, Google AI Overviews, and Claude. The key differentiator to look for is whether a tool tracks citation context (not just mention frequency) and whether it surfaces the mention-citation gap. Ahrefs Brand Radar, Tinuiti's citation tracking methodology, and dedicated GEO platforms are the most referenced options in practitioner communities as of Q1 2026.

Does schema markup improve AI citation rates?

The short answer is no, not causally. Ahrefs tracked 1,885 pages before and after schema markup addition and found citation lift disappeared when controlling for domain authority. High-authority sites use schema AND get cited, but the schema isn't the driver. Focus editorial energy on claim distinctiveness and earned media placement instead.

How is AI visibility different from traditional SEO rankings?

Traditional SEO measures position in a ranked list of blue links. AI visibility measures whether your content is retrieved, cited, and attributed in generated responses. Ahrefs found only 13.7% overlap between what traditional search and AI Overviews cite from the same content pool. Optimizing for one does not reliably improve the other.

Why is Reddit appearing so frequently in ChatGPT citations?

Analysis of 100M+ ChatGPT responses found Reddit's citation share grew from 1% to 3.3% in six months while Wikipedia's dropped from 11% to 3.9%. LLMs appear to be rewarding human-generated, experience-based content in community platforms over encyclopedic or brand-owned sources. This reflects the earned media bias documented in GEO research.

What is the mention-citation gap and why does it matter?

The mention-citation gap is the difference between how often your brand appears in AI responses at all, including bare name drops, and how often it appears with an actual linked citation. A mention without a citation provides almost no referral traffic and is vulnerable to citation hallucination, where a competitor's paraphrase of your content gets attributed instead. Closing this gap through distinctive claim formatting and earned media placement is the highest-leverage AI visibility tactic available in 2026.

How do different LLMs compare in their citation behavior?

Perplexity cites sources by default with live linkable citations. ChatGPT cites approximately 50% of the URLs it retrieves, making its citation behavior more selective. Google AI Overviews pulls from a source pool where 67.82% of cited pages don't rank in the traditional top 10. Claude shows preference for structured, research-grade content with explicit methodology statements. Each platform requires separate tracking and different content optimization approaches.

About the Author

Judy Zhou, Head of Content Strategy

Judy Zhou leads content strategy at Meev, where she oversees AI-driven content research and publishing for hundreds of brands. With a background in SEO and editorial operations, she focuses on building content systems that rank on Google, get cited by AI search engines, and drive measurable business results.

Start measuring your AI citation share across ChatGPT, Perplexity, Google AI Overviews, and Claude before your competitors close the gap.