AEO Content Optimization: Why AI Overviews Skip Your Best Content

AI Overviews don't choose sources based on word count or traditional authority; they choose them based on information density and semantic accessibility. If your content is being passed over, it's probably not because your research is lacking. It's because the data is buried in prose that hasn't been structured for AEO, which makes it hard for AI systems to extract.

Picture this: the post is well-researched, hits 2,400 words, has a clean H2 structure, and covers the topic more thoroughly than anything on page one. Then Google's AI Overview runs for that exact query — and cites a thinner, older article from a domain no one has heard of. I've watched this pattern play out dozens of times across content teams I've worked with, and the frustration is completely understandable.

But here's what I've found after digging into the data: AI Overviews don't reward effort. They reward machine-readable clarity. And those are two very different things.

"Only 38% of AI Overview citations come from pages that rank in the top 10 of traditional search results." - BusySeed, 2026

That stat should reframe everything. If ranking high doesn't guarantee AI visibility, then the signals driving AI citation are fundamentally different from traditional SEO signals. Here's what I've found they actually are.

Is AEO about word count or freshness?

The two most common assumptions I hear from content teams who've been excluded from AI Overviews: "We need longer content" and "We need to publish more frequently." Both are wrong — and I've seen teams waste real resources chasing them.

Word count is a proxy metric that made sense when Google's ranking systems were simpler. AI Overviews use a retrieval architecture (often called Retrieval-Augmented Generation, or RAG) that pulls specific passages — not entire articles — to construct answers. The system doesn't care that a post is 3,000 words if the specific passage answering the query is buried in paragraph 14, surrounded by hedging language, and lacks a clear subject-predicate structure. RAG systems are essentially running a semantic search across content at the sentence and paragraph level. If those units aren't self-contained and answerable on their own, the system skips them.
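To make passage-level retrieval concrete, here's a toy sketch. It is not how Google's system works (that isn't public), and it swaps in a simple bag-of-words cosine similarity for the learned embeddings a real RAG pipeline would use. The function names are hypothetical; the point is only that each paragraph is scored on its own, independent of the article's total length.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rank_passages(query, article):
    """Score each blank-line-separated paragraph against the query.

    Stand-in for embedding search: a real system would compare dense
    vectors, not raw word counts.
    """
    passages = [p.strip() for p in article.split("\n\n") if p.strip()]
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(p))), p) for p in passages]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)
```

Run against an article where the answer sits in paragraph 14, the context-setting paragraphs above it contribute nothing to that paragraph's score, which is the behavior described above.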

Freshness matters even less than people think. I've seen posts from 2021 consistently cited in AI Overviews while fresher competitors get ignored. What those older posts have in common isn't recency — it's structural clarity. They answer the question in the first two sentences after a heading. They use specific numbers. They don't bury the lede under three paragraphs of context-setting.

The actual eligibility signals, based on patterns I've observed across dozens of sites, cluster around three things: semantic precision (does the content answer a specific question unambiguously?), source credibility signals (does the page demonstrate E-E-A-T, meaning experience, expertise, authoritativeness, and trustworthiness, in ways AI systems can detect?), and structural extractability (can the answer be lifted out of context and still make sense?). None of those are about length or publish date.

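One more thing worth checking on a lot of sites I audit: crawler directives. A common misreading is that Google-Extended is the crawler behind AI Overviews. Per Google's crawler documentation, Google-Extended is a robots.txt control token, not a separate crawler. It governs whether content is used for Gemini model training and grounding, and it does not affect inclusion in Google Search. AI Overviews are a Search feature, so eligibility depends on Googlebot being able to crawl the page and on snippet controls such as nosnippet or max-snippet not suppressing it. I recommend checking robots.txt and robots meta tags as an immediate priority. These misconfigurations show up on sites that are otherwise doing everything right.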

What content structures do AI systems prefer?

Getting concrete here matters, because most AEO advice I come across stays frustratingly vague. In my work leading content strategy at Meev, I've identified three specific structural patterns that dramatically increase the chances of being cited — and here's exactly what they look like.

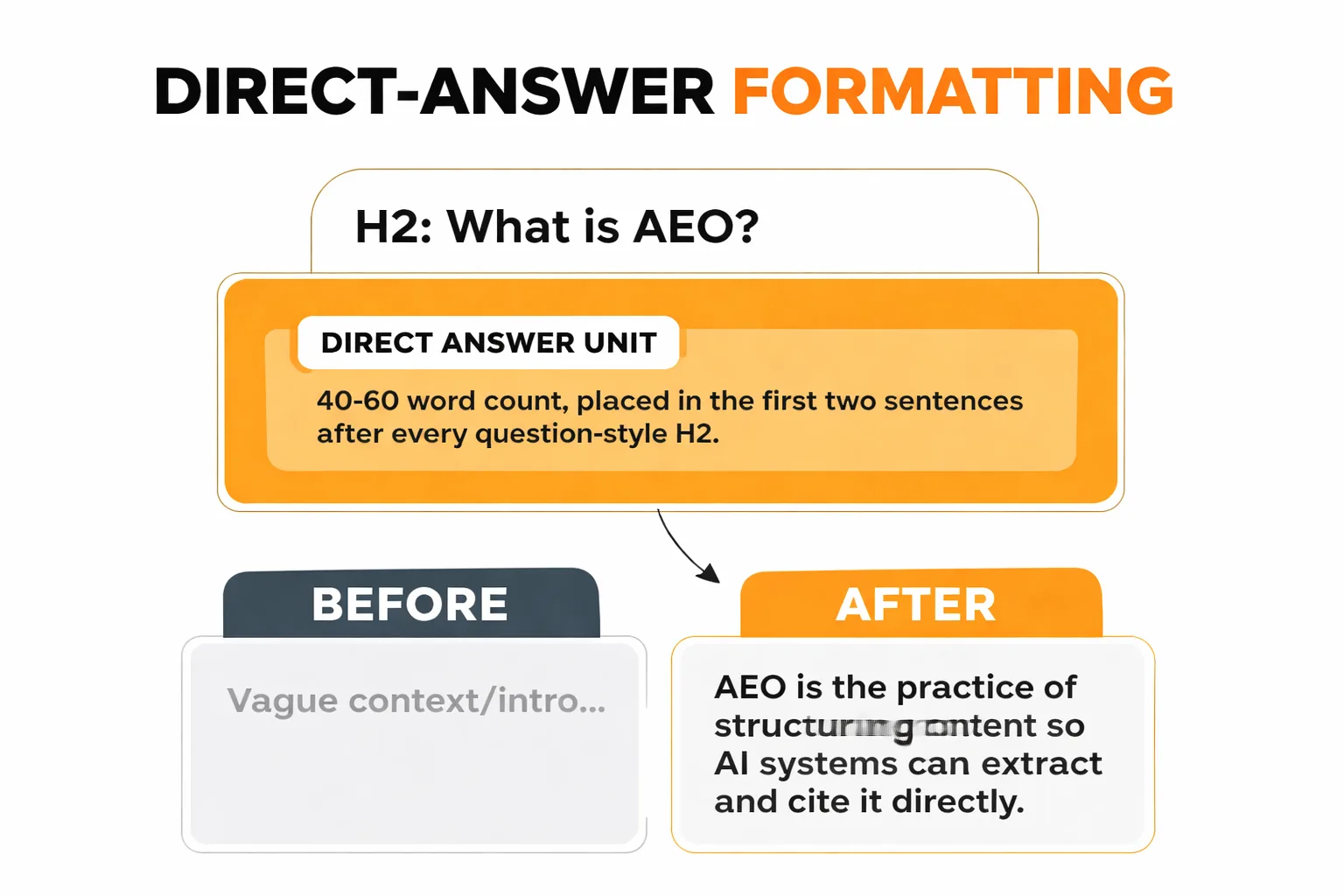

1. Direct-Answer Formatting

The single biggest structural change any content team can make — and the one I recommend first — is placing a 40-60 word direct answer in the first two sentences after every question-style H2. Not a teaser. Not context. The actual answer.

Before: "Understanding answer engine optimization requires looking at how search has evolved over the past decade. With the rise of voice search and AI assistants, the way users interact with search engines has fundamentally changed..."

After: "Answer engine optimization (AEO) is the practice of structuring content so AI systems can extract and cite it directly in generated responses. Unlike traditional SEO, AEO prioritizes semantic clarity and extractable answer units over keyword density or link authority."

The second version can be pulled out of context and still make complete sense. That's the test I apply to every section I edit.

2. Claim-Evidence Pairing

AI systems are trained to avoid hallucination risks. One of the ways they do this is by preferring content where claims are immediately followed by specific, verifiable evidence. In my experience, vague assertions get skipped. Specific, sourced claims get cited.

Before: "Content freshness is important for SEO performance."

After: "Content freshness influences crawl frequency but not AI Overview citation rates — pages from 2021 regularly outperform newer content when their answer structure is clearer."

The second version makes a specific, falsifiable claim. AI systems treat that as a lower hallucination risk than a vague generalization.

3. Entity Disambiguation

This one's underrated, and it's something I flag constantly in content reviews. AI systems build knowledge graphs, and they need to know exactly which entity the content is about. If a piece of content covers "optimization" without clearly specifying what is being optimized, for what system, and toward what outcome, the system can't confidently map that content to a query.

Before: "Optimization strategies vary depending on your goals."

After: "AEO content optimization — structuring web content for citation in AI-generated answers — requires different tactics than traditional on-page SEO, which targets human-readable ranking signals."

Every major concept in the content should be defined in context, not assumed. Think of it as writing for a very literal reader who has no prior knowledge of the topic. Because that's essentially what a RAG system is.

If you want a deeper look at how these structural signals interact with Google's broader quality assessment, this breakdown of the 7 signals Google uses to rank AI vs. human content is worth reading alongside this article.

How to Audit Your Existing Posts for AEO Gaps

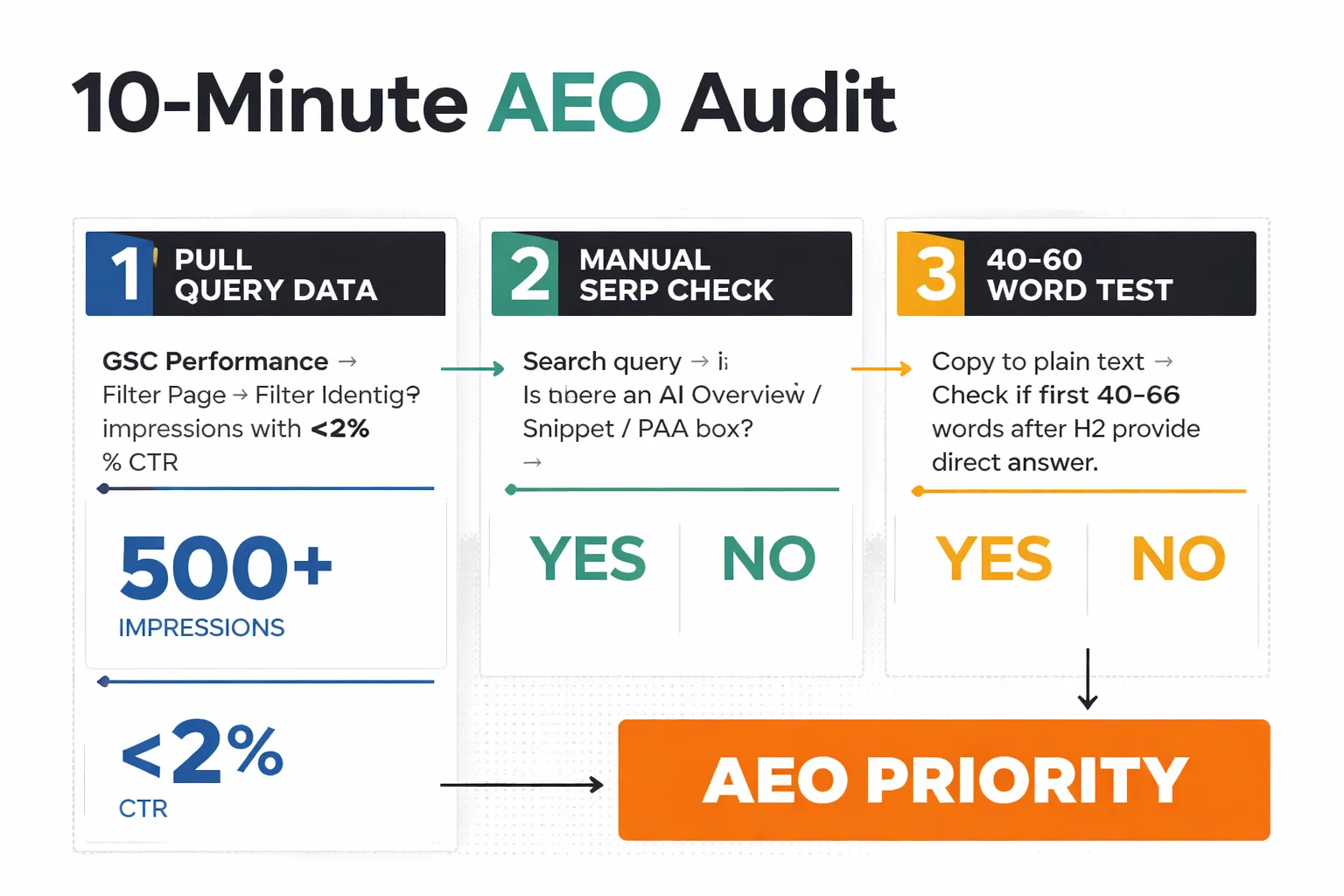

Here's a 10-minute audit I run on any post before deciding whether to rewrite it for AI Overview visibility. The only tools needed are Google Search Console and a plain-text version of the article.

Step 1: Pull your query data in Search Console. Go to Search Console → Performance → Search Results. Filter by the page being audited. Look at the queries driving impressions. If a page is getting 500+ impressions on a query but a CTR under 2%, that's a strong signal that an AI Overview is answering the query before users reach the result. In my work, these are the highest-priority AEO targets.
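If you export the query rows rather than eyeballing them, the filter in Step 1 can be sketched like this. The row shape (dicts with `query`, `clicks`, `impressions`) is an assumption about how you've loaded the export, not a Search Console format guarantee, and `find_aeo_targets` is a hypothetical helper name.

```python
def find_aeo_targets(rows, min_impressions=500, max_ctr=0.02):
    """Return high-impression, low-CTR queries, biggest first.

    rows: iterable of dicts with 'query', 'clicks', 'impressions'
    (assumed shape of your own export).
    """
    targets = []
    for row in rows:
        impressions = row["impressions"]
        if impressions < min_impressions:
            continue  # not enough visibility to matter yet
        ctr = row["clicks"] / impressions
        if ctr < max_ctr:
            targets.append({**row, "ctr": ctr})
    return sorted(targets, key=lambda r: r["impressions"], reverse=True)
```

The defaults mirror the 500-impression and 2% CTR thresholds from this step; adjust them if your site's baseline CTR runs unusually high or low.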

Step 2: Check for featured snippet presence. Search those high-impression, low-CTR queries manually. Is there an AI Overview? A featured snippet? A People Also Ask box? If yes, the content is competing in AEO territory and losing. If no, the low CTR might have a different cause.

Step 3: Run the 40-60 word test. Copy the article into a plain text editor. After each H2, highlight the first 40-60 words. Read them in isolation. Do they answer the implied question of that heading completely? Or do they set up context that requires the rest of the section to make sense? If it's the latter, rewrite the opening of that section.
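For longer articles, a small script can pull those openings for you. This is a minimal sketch that assumes the plain-text version marks H2s with a leading `## ` (markdown style); `section_openings` is a hypothetical name. Judging whether each opening actually answers the heading is still a manual read.

```python
def section_openings(markdown_text, max_words=60):
    """Map each H2 heading to the first max_words words that follow it."""
    openings = {}
    heading = None
    words = []
    for line in markdown_text.splitlines():
        if line.startswith("## "):
            if heading is not None:
                openings[heading] = " ".join(words[:max_words])
            heading = line[3:].strip()
            words = []
        elif heading is not None and not line.startswith("#"):
            # Body text under the current H2; deeper headings are skipped
            words.extend(line.split())
    if heading is not None:
        openings[heading] = " ".join(words[:max_words])
    return openings
```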

Step 4: Count your specific claims. Scroll through the article and highlight every sentence that contains a specific number, named source, or verifiable fact. Divide that by the total paragraph count. In my experience, posts that get cited in AI Overviews have at least one specific claim per 3-4 paragraphs. If the ratio falls below that, the content is written in generalities that AI systems distrust.
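A rough version of that ratio can be computed automatically. The sketch below uses a deliberately crude heuristic: it counts only sentences containing a digit as "specific claims," since named sources and verifiable facts can't be detected reliably with a regex. Treat the result as a floor, and note that `claim_density` is a hypothetical helper.

```python
import re


def claim_density(text):
    """Return (claim_count, paragraph_count, claims_per_paragraph).

    A 'claim' here is any sentence containing a digit, which
    undercounts named sources and non-numeric facts.
    """
    paragraphs = [p for p in re.split(r"\n\s*\n", text) if p.strip()]
    sentences = re.split(r"(?<=[.!?])\s+", text)
    claims = [s for s in sentences if re.search(r"\d", s)]
    ratio = len(claims) / len(paragraphs) if paragraphs else 0.0
    return len(claims), len(paragraphs), ratio
```

One specific claim per 3-4 paragraphs corresponds to a ratio of roughly 0.25 to 0.33, so anything well under 0.25 is worth a closer manual pass.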

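Step 5: Check your robots.txt for Google crawler rules. This takes 30 seconds. Go to yourdomain.com/robots.txt and look at the rules that apply to "Googlebot" and "Google-Extended". A Disallow: / that applies to Googlebot keeps the page out of Search, and therefore out of AI Overviews, so I recommend fixing that before doing anything else. A Disallow: / under Google-Extended is a different matter: per Google's documentation, that token controls use of content for Gemini models and does not affect Search inclusion, so treat it as a policy decision rather than an AI Overview blocker.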
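The same check can be scripted with Python's standard-library `urllib.robotparser`. This sketch parses robots.txt text you've already downloaded and reports whether the `Googlebot` and `Google-Extended` tokens may fetch a given path; `crawl_access` is a hypothetical helper. Keep in mind that, per Google's documentation, Google-Extended is a control token that doesn't affect Search inclusion, so the Googlebot result is the one that bears on Search visibility.

```python
from urllib.robotparser import RobotFileParser


def crawl_access(robots_txt, path="/"):
    """Report which Google user-agent tokens may fetch `path`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    agents = ("Googlebot", "Google-Extended")
    return {agent: parser.can_fetch(agent, path) for agent in agents}


sample = """\
User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""
print(crawl_access(sample))
```

To check a live site instead of a pasted file, `RobotFileParser` also offers `set_url()` and `read()`, which fetch and parse the file in one go.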

Step 6: Scan for entity clarity. Search the article for pronouns and vague references: "it", "this", "they", "the process". Each one is a potential disambiguation failure. Replace them with the specific entity name. It feels repetitive in the writing — but it's exactly what AI systems need.
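A quick way to surface those candidates is a whole-word search. This minimal sketch flags the four terms listed above by line number; the term list and the `find_vague_references` name are illustrative, and not every hit is a real problem, so review each one in context.

```python
import re

VAGUE_TERMS = ("it", "this", "they", "the process")

# Whole-word, case-insensitive match on any of the terms
_PATTERN = re.compile(
    r"\b(" + "|".join(re.escape(t) for t in VAGUE_TERMS) + r")\b",
    re.IGNORECASE,
)


def find_vague_references(text):
    """Return (line_number, term) for each vague reference found."""
    hits = []
    for number, line in enumerate(text.splitlines(), start=1):
        for match in _PATTERN.finditer(line):
            hits.append((number, match.group(0).lower()))
    return hits
```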

This audit consistently surfaces the same two or three issues on every site I run it on. The most common: strong content buried under weak openings, and specific knowledge hidden inside vague sentence structures. Both are fixable in an afternoon.

Answer Engine Optimization Strategy: Prioritizing Pages with the Highest AEO Upside

Most content teams make the mistake of starting their AEO rewrites with their newest posts or their highest-traffic pages. In my experience, that's backwards. Here's the framework I use to rank a content backlog by actual AEO opportunity.

Score each page on three dimensions:

| Dimension | What to Measure | High Score Signal |
| --- | --- | --- |
| Traffic Potential | Monthly impressions in Search Console | 1,000+ impressions, CTR under 3% |
| Query Intent Match | Is the primary query a direct question or definition? | "What is", "How does", "Why does" queries |
| SERP Feature Presence | Is there already an AI Overview or featured snippet? | Yes = high AEO territory |

Pages that score high on all three are the immediate priority. They're already getting seen for queries where AI Overviews dominate — they're just not being cited. In my work at Meev, I've seen structural rewrites on these pages move the needle within weeks, not months.

Pages with high traffic potential but no SERP features yet are the second tier. These are opportunities to establish AI Overview presence before competitors do. The answer engine optimization trends HubSpot tracked in 2026 show that early movers in AEO-optimized content are building citation authority that compounds over time — similar to how early domain authority worked in traditional SEO.

Pages with low traffic potential, regardless of their AEO quality, go to the bottom of the list. Don't spend time perfecting the structure of a post that nobody's searching for.
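The three tiers above can be sketched as a small function. The field names and `aeo_tier` are hypothetical, and one branch is my own assumption: pages with strong traffic potential that miss on query intent or SERP features are all placed in tier 2, since the framework doesn't spell out every combination.

```python
QUESTION_PREFIXES = ("what is", "how does", "why does")


def aeo_tier(page):
    """Return 1 (rewrite first), 2 (second wave), or 3 (bottom of the list).

    page: dict with 'query', 'clicks', 'impressions', and
    'has_ai_overview_or_snippet' (assumed field names).
    """
    impressions = page["impressions"]
    ctr = page["clicks"] / impressions if impressions else 0.0
    traffic = impressions >= 1000 and ctr < 0.03
    intent = page["query"].lower().startswith(QUESTION_PREFIXES)
    serp_feature = page["has_ai_overview_or_snippet"]

    if not traffic:
        return 3  # low traffic potential goes last regardless of AEO quality
    if intent and serp_feature:
        return 1  # high on all three dimensions
    return 2  # assumption: any other high-traffic page is second tier
```

Sorting a backlog by this tier, then by impressions within each tier, gives a reasonable first pass at the top-five list.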

My recommendation here is direct: don't rewrite everything at once. Pick the top five pages by this framework, rewrite them for AEO structure, and monitor Search Console for 4-6 weeks. The data will show which structural changes are actually driving AI Overview citations for a specific domain and audience — and that data will make the next batch of rewrites far more precise.

Also worth noting: AI visibility can increase direct traffic even when nobody clicks through, because of the brand recognition built in AI-generated answers. Being cited in an AI Overview builds the kind of ambient authority that eventually drives branded searches. That's a longer-term play, but it's real, and it's why AEO investment compounds in ways that pure traffic metrics don't capture. At Meev, we've seen this dynamic play out firsthand.

The bottom line on answer engine optimization strategy: AI systems aren't ignoring content because it's bad. They're ignoring it because it's not structured for machine extraction. Fix the structure, and the citations follow.