By Judy Zhou, Head of Content Strategy

Key Takeaways

- Pages ranking for Google AI Overview fan-out queries are 161% more likely to be cited than pages ranking only for the main query.

- Research analyzing 274,951 references generated by GPT-4o across 10,000 papers shows that LLMs systematically favor already-cited sources, creating a Matthew effect that rewards early topical authority builders.

- Build AI citation authority using the 5-stage framework: start with topical cluster building and fan-out query coverage to outpace competitors.

- Track AI citations as the new measure of brand awareness, which the HubSpot State of Marketing Report names as a top marketing priority through 2026.

In 2011, Google's Panda update drew a line in the sand: thin content would no longer be rewarded, and topical depth would begin to matter. Fifteen years later, a far more consequential line is being drawn. The rise of generative engines (ChatGPT, Perplexity, Google AI Overviews) has decoupled ranking from being cited. The publishers who recognized Panda's signal early captured a decade of organic advantage. The publishers who recognize the generative search shift in 2026 will define the next era of AI content authority.

AI content authority in 2026 is no longer determined by backlink count or keyword density. It's determined by whether AI systems trust your content enough to cite it when a user asks a question you could answer. That's a fundamentally different game, and most content teams are still playing the old one.

As Head of Content Strategy at Meev, where I oversee AI-driven publishing for hundreds of brands, I've watched this shift happen in real time. Pages ranking for Google AI Overview fan-out queries are 161% more likely to be cited than pages ranking only for the main query. The HubSpot State of Marketing Report confirms brand awareness is a top marketing priority through 2026, with AI citation tracking becoming the new measurement standard. And research analyzing 274,951 references generated by GPT-4o across 10,000 papers shows LLMs systematically favor already-cited sources, reinforcing a Matthew effect that rewards early movers. The window to establish AI content authority is open. It won't stay open.

The Old Ranking Model Is Broken for AI Search

Generative Engine Optimization (GEO) and traditional SEO share some DNA, but they diverge at the most important point: what counts as a win. In SEO, a win is a position-one ranking. In GEO, a win is being the source an AI cites when it synthesizes an answer. Those two outcomes increasingly come from different content strategies.

Here's the number that reframed my thinking: according to Louise Linehan's AI Overview citation study, only about 38% of AI Overview citations overlap with Google's top 10 results today. In July 2024, that overlap was roughly 76%. That's not a gradual drift. That's a structural decoupling happening over months, not years. Google's AI Overviews are pulling from a different pool than the standard SERP, and that pool is increasingly defined by fan-out query coverage rather than main-query dominance.

Fan-out queries are the sub-questions Google's systems generate internally when processing a complex search. A user types "best CRM for small businesses." Google's AI fans out to related queries: "CRM pricing tiers for startups," "CRM integrations with Gmail," "CRM onboarding time for five-person teams." Pages that rank across those fan-out variants are dramatically more likely to appear in the AI Overview response. The Surfer SEO study of 10,000 keywords found that pages ranking for both the main query and at least one fan-out query account for 51% of all AI Overview citations. Pages ranking only for the main query account for under 20%.

The implication is direct: topical authority for AI search isn't about ranking for one keyword. It's about building content clusters deep enough to rank across the semantic neighborhood of a topic.

How Does Fan-Out Coverage Actually Work?

Fan-out coverage is the mechanism by which AI systems decide whether your content is authoritative enough to cite, and it's more systematic than most practitioners realize.

When a generative engine processes a query, it doesn't just retrieve the top result. It expands the query into multiple related sub-questions, retrieves content for each, and synthesizes a response from the most credible sources across all of them. A site that ranks for the primary query but misses the sub-questions loses citation opportunities to sites that cover the full semantic territory. The Surfer SEO study reports a Spearman correlation of 0.77 between fan-out ranking count and citation likelihood, which is a strong association for this kind of analysis.
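For readers who want to check a correlation like this against their own data, here is a minimal sketch of Spearman's rank correlation in plain Python. The function names and the sample numbers are hypothetical and are not taken from the study; the method is simply the Pearson correlation computed on ranks, with tied values sharing an average rank.

```python
from statistics import mean

def average_ranks(values):
    """Assign 1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to the end of the run of equal values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        tied_rank = (i + j) / 2 + 1  # mean of positions i..j, converted to 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = tied_rank
        i = j + 1
    return ranks

def spearman(xs, ys):
    """Spearman's rho: the Pearson correlation of the two rank vectors."""
    rx, ry = average_ranks(xs), average_ranks(ys)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5

# Hypothetical per-page data: number of fan-out queries the page ranks for,
# and how many times the page was cited in AI responses.
fanout_rank_counts = [0, 1, 2, 3, 5, 8]
citation_counts = [0, 2, 1, 4, 6, 9]
rho = spearman(fanout_rank_counts, citation_counts)
print(f"Spearman rho: {rho:.2f}")
```

The sketch assumes both lists have the same length and that neither is constant; a constant list has zero rank variance and would cause a division by zero.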

The practical implication: content teams need to build what I call "semantic neighborhoods" around every core topic. This isn't keyword stuffing or padding. It's recognizing that a page on "content marketing ROI" should be supported by pages on "how to measure content attribution," "content marketing benchmarks by industry," and "content marketing budget allocation" — not because Google told you to, but because an AI system trying to answer a complex question about content marketing ROI will fan out to exactly those sub-questions. If you're not ranking for the neighborhood, you're not getting cited for the destination.

I use Gemini to extract fan-out query variants for our priority topics, then map existing content against those variants. The gaps are almost always surprising. Teams think they've covered a topic because they have one strong pillar page. They haven't. They've covered the main query. The fan-out territory is often 60-70% uncovered. For Google AI Overviews tracking, this gap analysis is the first thing I run before any content refresh.
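The gap analysis described above can be sketched in a few lines once you have two lists: the fan-out variants for a topic and the queries your content already ranks for. This is an illustration under assumptions, not the exact process I run: `fanout_gaps` is a hypothetical helper, matching is exact after simple normalization, and both input lists are assumed to come from your own tooling.

```python
def normalize(query):
    """Lowercase and collapse whitespace so trivially different strings match."""
    return " ".join(query.lower().split())

def fanout_gaps(fanout_queries, ranking_queries):
    """Return (coverage_rate, uncovered_queries) for one core topic."""
    ranked = {normalize(q) for q in ranking_queries}
    uncovered = [q for q in fanout_queries if normalize(q) not in ranked]
    if not fanout_queries:
        return 0.0, uncovered
    covered = len(fanout_queries) - len(uncovered)
    return covered / len(fanout_queries), uncovered

# Fan-out variants for the "content marketing ROI" example (assumed to be
# extracted beforehand) and the queries the site currently ranks for.
fanout = [
    "how to measure content attribution",
    "content marketing benchmarks by industry",
    "content marketing budget allocation",
]
ranking = ["Content marketing benchmarks by industry", "content marketing roi"]

rate, gaps = fanout_gaps(fanout, ranking)
print(f"Fan-out coverage: {rate:.0%}")
for query in gaps:
    print("Uncovered:", query)
```

Exact matching is deliberately strict. A real audit would probably need fuzzy or semantic matching, since a page can rank for a close paraphrase of a fan-out variant.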

Why E-E-A-T for AI Search Differs From Traditional Signals

E-E-A-T for AI search operates differently than E-E-A-T for traditional rankings, and conflating the two is one of the most common strategic errors I see.

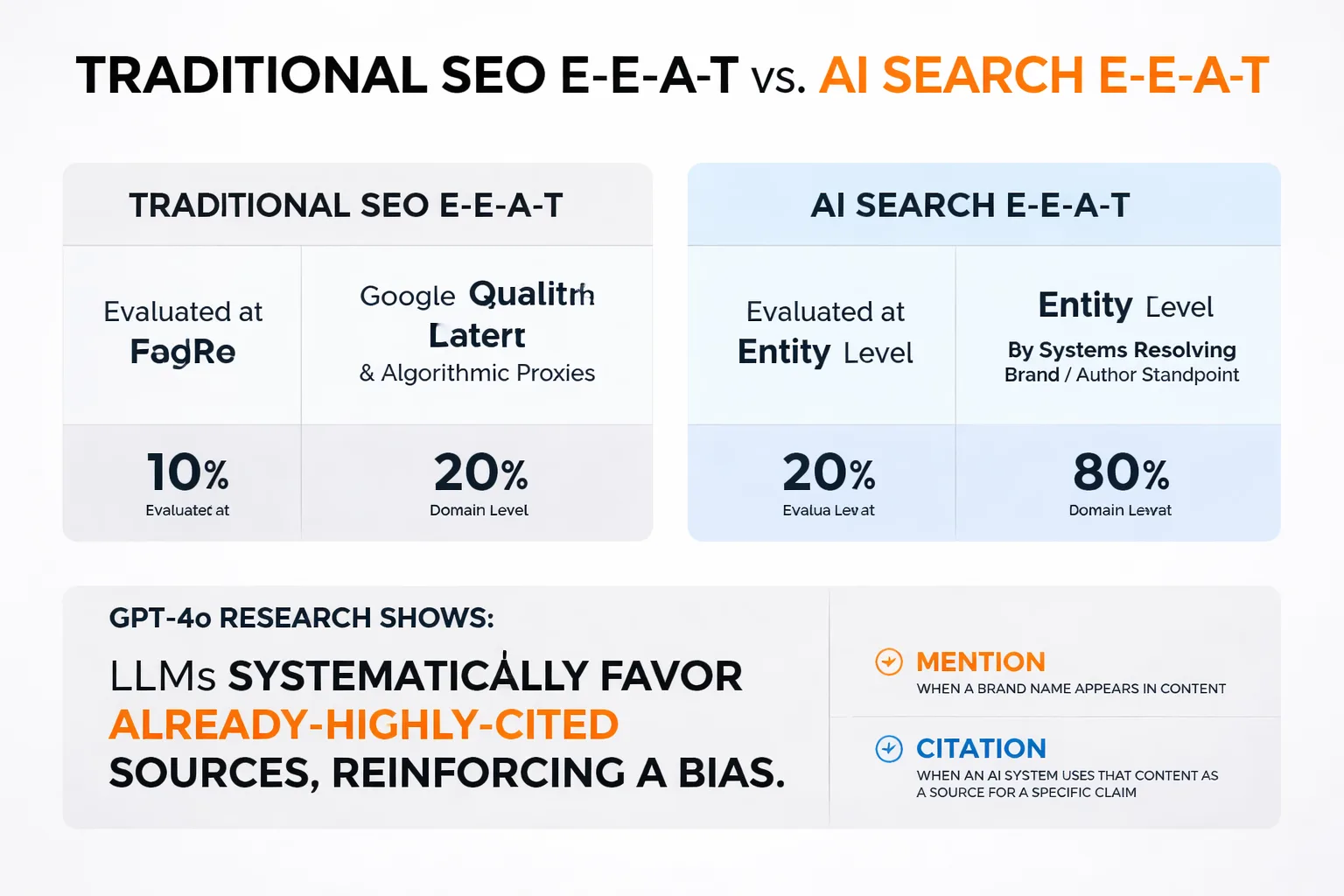

In traditional SEO, E-E-A-T signals are evaluated at the page and domain level by Google's quality raters and algorithmic proxies. In AI search, E-E-A-T is evaluated at the entity level by systems that are trying to resolve what a brand or author specifically stands for. The research on GPT-4o citation practices makes this concrete: LLMs systematically favor already-highly-cited sources, reinforcing a citation bias toward established entities. If your brand hasn't been clearly defined as an authoritative entity in the training data and retrieval corpus, you're competing against a structural disadvantage.

This is where the mention-citation gap becomes critical. A mention is when a brand name appears in content. A citation is when an AI system uses that content as a source for a specific claim. The gap between the two is enormous for most brands, and it's almost entirely explained by semantic hollowness in the mentions themselves.

The failure mode I see most often in brand mention outreach is that teams treat placement volume as the deliverable and positioning as someone else's problem. I've seen campaigns generate dozens of mentions in a quarter with zero measurable lift in AI-sourced referrals. When I dig into why, the mentions are almost always semantically hollow. They name the brand but don't reinforce what the brand specifically stands for. AI systems aren't running a popularity contest. They're trying to resolve what a brand is clearly enough to recommend it confidently. If your mentions don't consistently anchor a specific, differentiated idea, you're not building that resolution.

What I'd actually test: take your next outreach campaign and score every placed mention on whether it contains your core positioning language, not just your brand name. My guess is fewer than 30% do. That's the real gap, and no amount of volume fixes it.
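A rough way to run that test is to score each placed mention for positioning language with a simple phrase check. The sketch below is hypothetical throughout: the brand name "Acme", the positioning phrases, and the sample mentions are invented for illustration, and substring matching is a crude stand-in for a human read of each placement.

```python
def has_positioning(mention, brand, positioning_phrases):
    """True if the mention names the brand AND includes a positioning phrase."""
    text = mention.lower()
    if brand.lower() not in text:
        return False
    return any(phrase.lower() in text for phrase in positioning_phrases)

def positioning_rate(mentions, brand, positioning_phrases):
    """Share of placed mentions that carry core positioning language."""
    if not mentions:
        return 0.0
    hits = sum(has_positioning(m, brand, positioning_phrases) for m in mentions)
    return hits / len(mentions)

# Hypothetical campaign data.
brand = "Acme"
phrases = ["quality-gated publishing", "human editorial review"]
mentions = [
    "Tools like Acme are also worth a look.",
    "Acme's quality-gated publishing workflow keeps editors in the loop.",
    "We tried Acme last year.",
]

rate = positioning_rate(mentions, brand, phrases)
print(f"Mentions with positioning language: {rate:.0%}")
```

In this invented sample only one of three mentions passes, which is the semantically hollow pattern described above: the brand is named, but nothing says what it stands for.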

How Does the Mention-Citation Gap Get Bridged?

Bridging the mention-citation gap requires a shift in how you think about outreach deliverables. The goal isn't placement. The goal is contextual embedding.

The "add us as a resource" pitch fails at roughly three times the rate of pitches that identify a specific factual gap in an existing article. I've seen this play out directly: generic resource requests get ignored, or the publisher adds a link buried in a "further reading" section that neither humans nor AI systems treat as authoritative. When we shifted our outreach to leading with a concrete gap — "your section on X is missing current data on Y, here's a stat that fills it" — response rates improved and the placements we earned were woven into the actual argument of the article.

That distinction matters for AI visibility because buried links get treated as background evidence, while contextually embedded citations are what get surfaced in Perplexity or ChatGPT responses. For teams tracking Perplexity citation performance, the difference between a buried link and an embedded citation is often the difference between zero AI referrals and consistent ones.

Here's the framework I use for citation-ready outreach:

1. Identify a specific factual claim in a target article that is outdated, missing, or unsourced.

2. Match that gap to a data point, study, or original finding your brand can own.

3. Pitch the gap first, the brand second. "Your readers are missing X" lands better than "we'd love to be featured."

4. After placement, verify the mention contains your core positioning language, not just your brand name.

5. Track whether the placed citation appears in AI responses on the target topic using an AI search visibility tool.

Step five is where most teams stop tracking, which means they have no feedback loop on what's actually working.


Stop Treating Schema as a GEO Strategy

There's a practitioner thread on Reddit claiming a 3.2x citation lift from schema implementation. I wanted it to be true. It would have made my roadmap much simpler. But when you stack that single claimed test against large-scale citation frequency studies, the signal breaks down fast.

The Semrush and Tinuiti data both point to the same uncomfortable reality: the dominant citation driver in 2026 isn't content structure, it's platform identity. Reddit and Wikipedia are being treated by LLMs as trusted source categories, not just individual pages that happened to rank. That realization changed how I think about content distribution. We've started prioritizing getting our team's genuine expertise into Reddit threads and contributing to Wikipedia where we legitimately can, not as a hack, but because that's where AI models are actually pulling from.

Schema still goes on our pages. But I've stopped treating it as a GEO differentiator. It's hygiene, not strategy.

The same applies to the "optimize for concise answer blocks" advice that's been circulating in GEO communities. That strategy was largely built on the assumption that Reddit-style formatting was a reliable citation surface for AI platforms. Then Ahrefs Brand Radar data confirmed that ChatGPT sharply reduced Reddit citations around September 2025. If your GEO playbook was tuned to how ChatGPT was pulling Reddit content six months ago, you may have optimized for a surface that no longer exists at the same scale.

For teams using ChatGPT visibility tracking, the practical implication is to diversify citation surfaces rather than over-indexing on any single platform's current behavior. What ChatGPT cites today may not match what it cites in six months.

The GEO vs. Helpful Content System Tension

The GEO vs. Google Helpful Content System tension is the most unresolved strategic question I'm sitting with right now, and I don't think anyone has clean answers yet.

Google's Helpful Content System still structurally rewards depth and demonstrated expertise. It hasn't reformed to favor snippet-friendly brevity. But GEO advice keeps pushing toward tighter, more extractable formats. I tried leaning harder into the concise answer block approach for a content refresh earlier this year and saw no measurable Helpful Content signal improvement. What I suspect is happening: the tactics are being reverse-engineered from AI output patterns rather than from controlled tests, and the two optimization targets are genuinely in conflict.

Until someone runs actual A/B data, I'm treating GEO formatting as additive, not as a replacement for the depth signals the Helpful Content System still seems to require. The practical approach: write for depth first, then layer in extractable answer blocks at the top of sections. Don't sacrifice the 1,000-word section that demonstrates genuine expertise just to lead with a 60-word answer block. Do both.

This tension also shows up in scaled content operations. Teams using quality-gated publishing workflows, where AI drafts content but human editors validate before publication, tend to navigate this better than teams running fully automated pipelines. The human editorial layer catches the cases where AI-generated brevity has gutted the expertise signals that both Google and LLMs need to trust the content. If you're comparing tools for this workflow, the Meev vs Jasper comparison covers how quality-gating differs across platforms.

How to Track LLM Citations Without Guessing

LLM citation tracking is the discipline that separates teams who are building AI content authority from teams who are hoping they are. Without tracking, you have no feedback loop. Without a feedback loop, you're optimizing blind.

The core metrics I track across AI platforms:

- Citation frequency: How often does a specific URL appear as a cited source in AI responses on target queries?

- Mention-citation ratio: Of all brand mentions in AI responses, what percentage include a linked or attributed citation?

- Fan-out coverage rate: What percentage of identified fan-out queries for a core topic does your content rank for?

- Positioning language presence: Of placed brand mentions, what percentage include your core differentiation language?
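The first two metrics can be computed from a simple log of AI responses. The sketch below assumes you already collect, for each target query, the response text and the list of URLs the engine cited. The `AIResponse` record, the function names, the brand "Acme", and the sample log are all hypothetical and do not reflect any particular tracking tool's data model.

```python
from dataclasses import dataclass, field
from urllib.parse import urlparse

@dataclass
class AIResponse:
    query: str
    text: str
    cited_urls: list[str] = field(default_factory=list)

def cites_domain(url, domain):
    """True if the URL's host is the domain or one of its subdomains."""
    host = urlparse(url).hostname or ""
    return host == domain or host.endswith("." + domain)

def citation_frequency(responses, url):
    """Share of logged responses that cite the given URL."""
    if not responses:
        return 0.0
    return sum(url in r.cited_urls for r in responses) / len(responses)

def mention_citation_ratio(responses, brand, domain):
    """Of responses that mention the brand, the share that also cite its domain."""
    mentioned = [r for r in responses if brand.lower() in r.text.lower()]
    if not mentioned:
        return 0.0
    cited = sum(any(cites_domain(u, domain) for u in r.cited_urls) for r in mentioned)
    return cited / len(mentioned)

# Hypothetical log for one target query.
log = [
    AIResponse("best crm", "Acme is a popular choice.", ["https://example.com/crm-guide"]),
    AIResponse("best crm", "Acme and others offer free tiers."),
    AIResponse("best crm", "Several vendors exist.", ["https://example.com/crm-guide"]),
    AIResponse("best crm", "Pricing varies.", ["https://other.example.org/pricing"]),
]

freq = citation_frequency(log, "https://example.com/crm-guide")
ratio = mention_citation_ratio(log, "Acme", "example.com")
print(f"Citation frequency: {freq:.0%}, mention-citation ratio: {ratio:.0%}")
```

Keeping one log per platform makes it possible to compare these numbers across ChatGPT, Perplexity, Claude, and Google AI Overviews rather than averaging them together.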

For Claude visibility specifically, citation patterns differ meaningfully from ChatGPT. Claude tends to cite longer-form, more structured content with clear authorial attribution. ChatGPT's citation behavior has shifted multiple times in the past year, which reinforces why platform-specific tracking matters rather than assuming one strategy works across all engines.

The research on GPT-4o citation practices found that LLMs diverge from traditional citation patterns by preferring more recent references with shorter citation windows. That means publishing cadence matters. A brand that publishes authoritative content consistently will accumulate citation advantage over a brand that publishes sporadically, even if the sporadic publisher's individual pieces are higher quality.

According to HubSpot's tracking guidance, users increasingly rely on AI-driven responses before clicking through to websites, which means AI citation is becoming a top-of-funnel awareness channel, not just a traffic source. That reframes the ROI calculation entirely.

Scaling AI Content Without Triggering Quality Penalties

Scaled content abuse is a real penalty vector, and teams running high-volume AI publishing operations need to take it seriously. Google's scaled content guidance is explicit: the issue isn't volume, it's whether the content provides genuine information gain over what already exists.

Information gain strategy is how I think about this operationally. Every piece of content we publish at scale needs to answer one question: what does this page tell a reader that they couldn't get from the top three existing results? If the answer is "nothing," the piece doesn't ship. This isn't a creativity standard. It's a quality gate that happens to align with both Google's Helpful Content System requirements and LLM citation preferences, since AI systems favor content that adds to the conversation rather than rephrasing it.

The practical implementation: before any AI draft goes into production, we run a gap analysis against the top-ranking content on the target query. The draft must contain at least one of the following: original data, a named case study with specific outcomes, a practitioner perspective not present in existing results, or a structural framework that organizes the topic differently than competitors. If none of those are present, the draft gets flagged for human enrichment before publication.
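As a sketch of how this gate might be encoded in a publishing pipeline, the check reduces to "at least one of four signals is present." The `DraftAudit` record and the status strings below are hypothetical, and the sketch assumes the four flags are set upstream by an editor or a separate check, which is the hard part and is not shown here.

```python
from dataclasses import dataclass

@dataclass
class DraftAudit:
    """Information-gain signals for one draft, filled in by an upstream review."""
    has_original_data: bool = False
    has_named_case_study: bool = False
    has_practitioner_perspective: bool = False
    has_new_framework: bool = False

def information_gain_gate(audit):
    """Pass the draft if any one signal is present; otherwise flag it."""
    signals = [
        audit.has_original_data,
        audit.has_named_case_study,
        audit.has_practitioner_perspective,
        audit.has_new_framework,
    ]
    return "ready_for_production" if any(signals) else "needs_human_enrichment"

# Hypothetical drafts: one with a named case study, one with no signals at all.
print(information_gain_gate(DraftAudit(has_named_case_study=True)))
print(information_gain_gate(DraftAudit()))
```

The gate is intentionally binary. It does not rank drafts by quality; it only stops drafts that add nothing over the existing top results from shipping without human enrichment.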

For teams evaluating tools that support this workflow, the Meev vs Copy.ai comparison breaks down how quality-gating and information gain checks differ across platforms built for scale.

The LLM citation bias toward already-cited sources creates a compounding dynamic: content that earns early citations gets cited more, which increases its authority signal, which increases future citation likelihood. Getting into that cycle early, with genuinely differentiated content, is the strategic advantage available to teams who move now. The publishers who recognize this shift in 2026 will define the next era of AI content authority. That line is being drawn today.

Frequently Asked Questions

What is the difference between GEO (Generative Engine Optimization) and traditional SEO?

Traditional SEO optimizes for position in a ranked list of results. GEO optimizes for being cited as a source in an AI-synthesized answer. The key difference is that AI citation depends on semantic authority and fan-out query coverage, not just main-query ranking. A page can rank position one and never appear in an AI Overview if it doesn't cover the sub-questions the AI is also evaluating.

How do I know if my content is being cited by AI engines?

You need dedicated LLM citation tracking, not just Google Search Console. Tools that monitor citation frequency across ChatGPT, Perplexity, Claude, and Google AI Overviews give you platform-specific data. Track citation frequency, mention-citation ratio, and whether your positioning language, not just your brand name, appears in the responses that cite you.

Does schema markup improve AI citation rates?

Schema is hygiene, not a GEO differentiator. Large-scale citation frequency studies point to platform identity and topical authority as the dominant citation drivers, not content structure signals. Implement schema for standard SEO reasons, but don't expect it to meaningfully move your AI citation rates on its own.

How many fan-out queries should I target per core topic?

There's no fixed number, but the Surfer SEO study found a Spearman correlation of 0.77 between fan-out ranking count and citation likelihood. Practically, I aim to cover at least 5-8 fan-out variants per core topic before considering a cluster complete. Use Gemini or a similar tool to extract the semantic neighborhood around your priority queries, then audit your existing content against those variants.

What makes a brand mention "citation-ready" for AI systems?

A citation-ready mention contains three things: your brand name, a specific claim that an AI system could use to answer a question, and your core positioning language. Generic name-drops don't build entity resolution. The mention needs to reinforce what your brand specifically stands for, not just that it exists.

How does publishing frequency affect AI citation rates?

Research on GPT-4o citation practices found that LLMs prefer more recent references with shorter citation windows. Consistent publishing cadence builds citation advantage over time. A brand publishing authoritative content regularly will outperform a brand publishing sporadically, even if individual pieces from the sporadic publisher are stronger.

About the Author

Judy Zhou, Head of Content Strategy

Judy Zhou leads content strategy at Meev, where she oversees AI-driven content research and publishing for hundreds of brands. With a background in SEO and editorial operations, she focuses on building content systems that rank on Google, get cited by AI search engines, and drive measurable business results.
