What is AEO?

Answer engine optimization (AEO) is the practice of structuring your content so AI-powered systems (Google AI Overviews, Perplexity, ChatGPT search) can extract, cite, and surface your answers directly to users. If organic traffic has been flattening while rankings technically hold, answer engines resolving the query before the click are a likely reason.

In my work leading content strategy, I've watched teams spend months building content that ranks. Good titles, solid backlinks, keyword density dialed in. Then one day Search Console reveals something unsettling: impressions are up, clicks are down. The content is being read by machines and not passed on to humans. That's not an SEO failure. That's an AEO gap — and it's fixable once you understand what's actually happening.

Answer engine optimization isn't about replacing SEO. It's about making your content machine-readable enough to survive the layer that now sits between your page and your reader.

TLDR
- AEO structures content for AI extraction, not just keyword ranking; the two goals require different writing techniques.
- Answer engines prefer four specific formats: direct-answer paragraphs, structured lists, FAQ schema, and definition blocks.
- A 3-step audit (query mapping, structure check, schema gap analysis) can identify your biggest AEO weaknesses in under an hour.
- The single most common AEO mistake is burying the answer: leading with context instead of the direct response AI systems are scanning for.

AEO vs SEO: Why Does It Matter?

Traditional SEO and AEO share the same foundation — quality content, authoritative sources, topical relevance — but they diverge sharply in how they reward you. Traditional search engines rank pages. Answer engines extract answers. That one-word difference changes everything about how you should write.

When Google's traditional crawler indexes your page, it's building a map of relevance. It wants to know: does this page deserve to rank for this query? The reward is a position on the SERP. The human then clicks, reads, and decides.

When an answer engine processes your content — whether that's Google AI Overviews, Perplexity's citation engine, or ChatGPT's web search — it's doing something fundamentally different. It's scanning for extractable, trustworthy, self-contained answers. It doesn't care about your page's overall authority as much as it cares about whether this specific paragraph answers this specific question clearly enough to quote. The reward isn't a ranking. It's a citation — your content surfaced inside an AI response, sometimes with a link, sometimes without.

This shift has been accelerating since Google's AI Overviews rolled out at scale in 2024. According to Similarweb's AEO research, answer engines now handle a significant and growing share of informational queries — the exact queries that used to be the bread and butter of content marketing. In my experience, the content that wins in this environment isn't always the most detailed. It's the most parseable.

Here's the practical difference: a traditional SEO article might open with context, background, and a hook before getting to the answer in paragraph four. An AEO-optimized article answers in sentence one, then elaborates. Same information, radically different structure — and the structure is what determines whether an AI system cites you or skips you.

This also connects to what Conductor calls the AEO-GEO feedback loop — where content optimized for answer extraction also performs better in Generative Engine Optimization (GEO), the broader practice of making content visible across AI-generated responses. They're not the same discipline, but they reinforce each other. Get AEO right, and GEO visibility tends to follow.

What Content Formats Do Answer Engines Prefer?

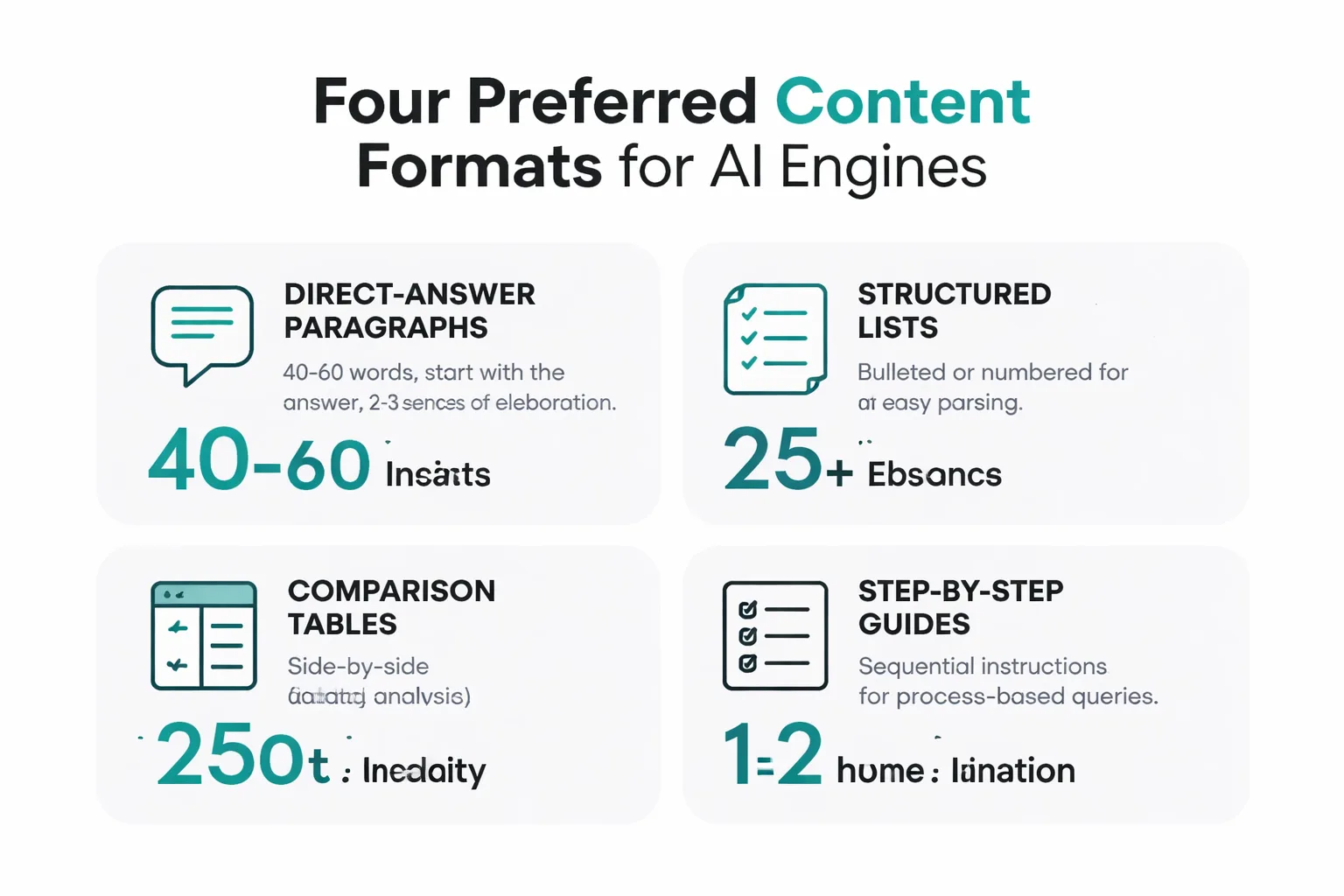

Answer engines don't read the way humans do. They parse. They scan for structural signals that indicate a piece of content is answering a specific question reliably. After analyzing dozens of pages that consistently get cited in AI Overviews and Perplexity responses, I've found four formats that get pulled into AI responses far more often than standard prose.

1. Direct-Answer Paragraphs (40-60 words)

This is the single most important format to master. A direct-answer paragraph opens with the answer — not context, not a question restatement, not a caveat. The answer. Then it elaborates in 2-3 sentences. The target length is 40-60 words because that's the sweet spot for featured snippet extraction and AI citation blocks.

Example of what not to do: "When it comes to understanding how often you should publish blog content, there are many factors to consider, including your industry, audience size, and available resources..."

Example of what works: "Publish at least 2 blog posts per week to see compounding organic traffic growth. According to HubSpot research, companies publishing 16+ posts per month generate 4.5x more leads than those publishing under 4. Consistency matters more than volume."

Same topic. The second version gets cited. The first gets skipped.
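A quick way to hold drafts to this format is a small script that checks an opening paragraph for length and for throat-clearing openers. This is a minimal sketch, not a tool any answer engine uses: the function name `check_direct_answer` and the `FILLER_OPENERS` phrases are illustrative assumptions, and the 40-60 word window simply mirrors the target above.

```python
# Hypothetical opener phrases that usually signal context-first writing.
FILLER_OPENERS = (
    "when it comes to",
    "there are many",
    "in today's",
    "it depends",
)


def check_direct_answer(paragraph, min_words=40, max_words=60):
    """Report word count and whether the paragraph opens with filler."""
    words = paragraph.split()
    opener = paragraph.strip().lower()
    return {
        "word_count": len(words),
        "within_target": min_words <= len(words) <= max_words,
        # str.startswith accepts a tuple of candidate prefixes.
        "filler_opener": opener.startswith(FILLER_OPENERS),
    }


draft = "When it comes to publishing, there are many factors to consider."
print(check_direct_answer(draft))
```

A paragraph that fails either check is a candidate for rewriting answer-first; passing both says nothing about whether the answer is actually correct or useful.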

2. Structured Lists (Numbered or Bulleted)

AI systems love lists because they're inherently extractable. A numbered list of steps, a bulleted list of features, a ranked list of options — these translate cleanly into AI-generated summaries. The key is that each list item must be self-contained and specific. "Do your research" is not a list item. "Run a Google Search Console structured data report to identify pages missing FAQ schema" is.

3. FAQ Schema Markup

This is where most content teams leave citations on the table. FAQ schema tells answer engines exactly where your questions and answers live — it's essentially a roadmap for extraction. If FAQ sections aren't marked up with proper schema, you're relying on AI systems to figure out the structure on their own. Some will. Many won't. In my testing, pages have jumped from zero AI citations to appearing in multiple Perplexity responses within two weeks of adding FAQ schema — no content changes, just markup.
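For teams adding the markup by hand, the sketch below generates FAQ structured data from question-and-answer pairs. `FAQPage`, `Question`, `Answer`, `mainEntity`, and `acceptedAnswer` are schema.org vocabulary; the helper names `build_faq_jsonld` and `to_script_tag` are assumptions made for illustration, and the output should still be validated before publishing.

```python
import json


def build_faq_jsonld(pairs):
    """Build a schema.org FAQPage dict from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def to_script_tag(data):
    """Wrap structured data in the script tag used for JSON-LD."""
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


faq = build_faq_jsonld([
    (
        "What is AEO?",
        "Answer engine optimization (AEO) is the practice of structuring "
        "content so AI-powered systems can extract, cite, and surface it.",
    ),
])
print(to_script_tag(faq))
```

The answer text in the markup should match the visible answer on the page; the script only restates existing content in a form parsers can read directly.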

4. Definition Blocks

For any concept-heavy content, leading with a clean "[Term] is [definition]" sentence dramatically increases the chances of being cited in "what is" queries. Answer engines are specifically trained to extract definitions for knowledge graph population and direct answers. If the definition is buried in paragraph three, wrapped in qualifiers, it's invisible to that extraction layer.
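The "[Term] is [definition]" pattern can be spot-checked with a regular expression. The sketch below is a rough heuristic under stated assumptions: the pattern, the 80-character cap on the term, and the name `leading_definition` are all illustrative, and it will misfire on sentences such as "There is a lot to consider."

```python
import re

# Term: starts with a capital, no commas or sentence punctuation, then
# "is" or "are", then the rest of the first sentence as the definition.
DEFINITION_PATTERN = re.compile(
    r"^\s*(?P<term>[A-Z][^.!?,]{0,80}?)\s+(?:is|are)\s+"
    r"(?P<definition>[^.!?]+[.!?])"
)


def leading_definition(paragraph):
    """Return (term, definition) if the paragraph opens with one, else None."""
    match = DEFINITION_PATTERN.match(paragraph)
    if match is None:
        return None
    return match.group("term"), match.group("definition")


opening = (
    "Answer engine optimization (AEO) is the practice of structuring "
    "content for AI extraction. It complements SEO."
)
print(leading_definition(opening))
```

Running this over the first paragraph of each concept page gives a quick list of pages where the definition is missing or buried.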

Before implementing schema, read the breakdown of 5 structured data mistakes that kill rich results.

How to Audit Content for AEO?

Most content teams have hundreds of published posts sitting in a state of AEO invisibility — not because the content is bad, but because it was written for human readers and never adapted for machine parsers. The good news: a complete rewrite isn't necessary. At Meev, we focus on identifying which pages have the highest answer-extraction potential and fixing those first.

Here's the 3-step audit I recommend for any site starting an AEO program.

Step 1: Identify Answer-Worthy Queries

Open Google Search Console and pull the top 50 pages by impressions. Filter for queries that start with "what", "how", "why", "when", or "best" — these are the informational queries where answer engines are most active. Any page ranking in positions 1-10 for these queries is a candidate for AEO optimization. The content is already in the conversation; it just needs to be more extractable. Export this list. These are the priority pages.
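Once the performance data is exported, the filtering in this step is easy to script. The sketch below assumes the export has already been loaded into rows; the `QueryRow` fields and the function `aeo_candidates` are hypothetical names, not a Search Console API.

```python
from dataclasses import dataclass

QUESTION_LEADS = ("what", "how", "why", "when", "best")


@dataclass
class QueryRow:
    page: str
    query: str
    impressions: int
    position: float  # average ranking position


def starts_with_lead(query):
    """True when the first word of the query is an informational lead word."""
    first_word = query.strip().lower().split(" ", 1)[0]
    return first_word in QUESTION_LEADS


def aeo_candidates(rows, max_position=10.0):
    """Keep question-led queries ranking on page one, highest impressions first."""
    picked = [
        row for row in rows
        if starts_with_lead(row.query) and row.position <= max_position
    ]
    return sorted(picked, key=lambda row: row.impressions, reverse=True)


rows = [
    QueryRow("/what-is-aeo", "what is aeo", 1200, 3.2),
    QueryRow("/tools", "aeo tools", 5000, 2.0),
    QueryRow("/audit", "how to audit content", 800, 14.5),
    QueryRow("/checklist", "Best aeo checklist", 300, 9.0),
]
for row in aeo_candidates(rows):
    print(row.page, row.query, row.impressions)
```

Sorting by impressions keeps the pages that are already most visible at the top of the priority list.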

Step 2: Check Content Structure

For each priority page, ask three questions:
- Does the first paragraph directly answer the primary query in 40-60 words?
- Are there at least two structured lists or numbered sequences?
- Is there a dedicated FAQ section with at least 3 questions?

If the answer to any of these is no, that page has an AEO gap. In my work with content teams, roughly 70% of existing content fails at least the first test — the answer is buried, not front-loaded. That's the easiest fix and often the highest-impact one.
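The three questions can be approximated with Python's standard-library HTML parser. This is a sketch with loud assumptions: it only measures the first paragraph's length (it cannot judge whether the paragraph answers the query), it counts nested lists separately, and it treats `h2`/`h3` headings ending in a question mark as a stand-in for FAQ entries. The names `StructureScan` and `audit_structure` are illustrative.

```python
from html.parser import HTMLParser


class StructureScan(HTMLParser):
    """Collect the first paragraph, list count, and question-style headings."""

    def __init__(self):
        super().__init__()
        self.first_paragraph = None
        self.list_count = 0
        self.question_headings = 0
        self._tag = None      # tag whose text is currently being buffered
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag in ("ul", "ol"):
            self.list_count += 1
        if tag in ("p", "h2", "h3"):
            self._tag = tag
            self._buffer = []

    def handle_data(self, data):
        if self._tag:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag != self._tag:
            return
        text = "".join(self._buffer).strip()
        if tag == "p" and self.first_paragraph is None:
            self.first_paragraph = text
        elif tag in ("h2", "h3") and text.endswith("?"):
            self.question_headings += 1
        self._tag = None


def audit_structure(html):
    """Return a pass/fail flag for each of the three structure checks."""
    scan = StructureScan()
    scan.feed(html)
    words = len((scan.first_paragraph or "").split())
    return {
        "first_paragraph_40_60_words": 40 <= words <= 60,
        "two_or_more_lists": scan.list_count >= 2,
        "three_or_more_question_headings": scan.question_headings >= 3,
    }


print(audit_structure("<p>Too short.</p><ul><li>One list</li></ul>"))
```

Any `False` flag marks the page for a manual look; the script narrows the review list rather than replacing it.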

Step 3: Flag Schema Gaps

Use Google's Rich Results Test, or the rich result reports under the Enhancements section of Google Search Console, to check which priority pages have structured data implemented. Specifically look for: FAQ schema, HowTo schema, and Article schema with author markup. Pages with zero schema are essentially invisible to the extraction layer of answer engines. I recommend prioritizing FAQ schema on any page that has a question-and-answer section: it takes about 20 minutes per page and the citation impact is measurable within 4-6 weeks.
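For a first pass across many pages, the declared schema types can also be read straight from the page source. The sketch below collects `application/ld+json` blocks and reports which of the three types named above are absent. It assumes top-level JSON-LD objects or arrays and does not follow `@graph` containers or microdata; `JsonLdCollector`, `schema_types`, and `schema_gaps` are hypothetical helper names.

```python
import json
from html.parser import HTMLParser

WANTED_TYPES = {"FAQPage", "HowTo", "Article"}


class JsonLdCollector(HTMLParser):
    """Gather the raw text of every JSON-LD script block in a page."""

    def __init__(self):
        super().__init__()
        self.blocks = []
        self._in_jsonld = False
        self._chunks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._chunks = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._chunks.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append("".join(self._chunks))
            self._in_jsonld = False


def schema_types(html):
    """Return the set of @type values declared in the page's JSON-LD."""
    collector = JsonLdCollector()
    collector.feed(html)
    found = set()
    for raw in collector.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            continue  # malformed markup is a gap in its own right
        items = data if isinstance(data, list) else [data]
        for item in items:
            if not isinstance(item, dict):
                continue
            declared = item.get("@type", [])
            found.update([declared] if isinstance(declared, str) else declared)
    return found


def schema_gaps(html):
    """Return which of the wanted schema types the page does not declare."""
    return WANTED_TYPES - schema_types(html)


page = '<script type="application/ld+json">{"@type": "Article"}</script>'
print(sorted(schema_gaps(page)))
```

This only confirms that a type is declared, not that the markup is valid, so pages that pass still belong in a proper validation run.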

This entire audit takes under an hour for a 50-page site. The output is a prioritized list of pages to fix, ordered by impression volume and schema gap severity. Start at the top and work down.

According to Marcel Digital's AEO guide, content that follows these structural principles is significantly more likely to be surfaced in AI-generated responses — not because AI systems are rewarding "optimization" in a gaming sense, but because well-structured content is genuinely easier to extract accurate answers from. The optimization and the quality improvement are the same thing.

Common AEO Mistakes That Kill Visibility

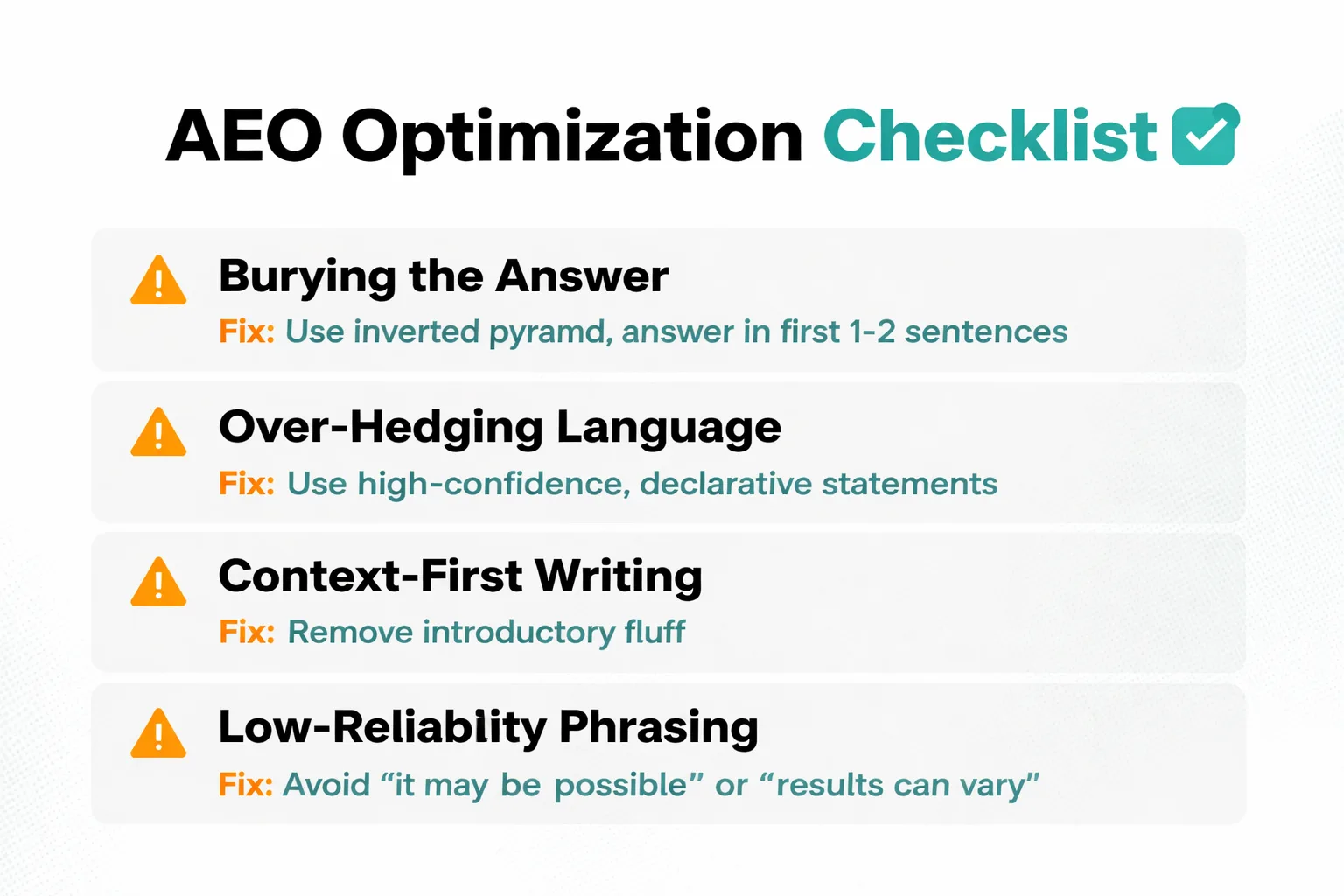

In my content audits, I see the same four mistakes appear consistently. Each one is fixable. None of them require a complete rewrite.

Mistake 1: Burying the Answer

This is the most common and most damaging. Writers are trained to build context before delivering the payoff. That's great for human engagement. It's catastrophic for AEO. Answer engines scan the first 100-150 words of a section for extractable answers. If the answer lives in paragraph four, it might as well not exist for citation purposes.

The fix: Rewrite every H2 section so the first 1-2 sentences directly answer the implied question of that heading. Then elaborate. Inverted pyramid, every time.

Mistake 2: Over-Hedging Language

Phrases like "it may be possible that", "some experts suggest", "results can vary" are confidence killers for AI extraction. Answer engines are trained to prefer high-confidence, declarative statements. Hedged language reads as low-reliability to the parser, even when it's technically accurate.

The fix: Replace hedges with specifics. Instead of "content marketing can potentially improve lead generation", write "content marketing generates 3x more leads than outbound marketing at 62% lower cost, according to Demand Metric." Specific numbers eliminate the need to hedge.
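Hedges are easy to miss in a long draft, so a phrase scan can help during editing. The sketch below is a plain substring search; the `HEDGES` list is an assumption seeded with the phrases mentioned above and should be extended per style guide, and `find_hedges` is an illustrative name.

```python
import re

# Starter list taken from the examples in this section; extend as needed.
HEDGES = (
    "it may be possible that",
    "some experts suggest",
    "results can vary",
    "can potentially",
)


def find_hedges(text):
    """Return (offset, phrase) pairs for each hedge found, in text order."""
    lowered = text.lower()
    hits = []
    for phrase in HEDGES:
        for match in re.finditer(re.escape(phrase), lowered):
            hits.append((match.start(), phrase))
    return sorted(hits)


draft = "Content marketing can potentially improve lead generation."
for offset, phrase in find_hedges(draft):
    print(offset, phrase)
```

Each hit is a prompt to look for a sourced number that can replace the hedge, not a sentence to delete automatically.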

Mistake 3: Missing Schema Markup

FAQ sections without FAQ schema, how-to sections without HowTo schema, and author bios without Person schema all leave extraction signals on the table. Google Search Console's rich result reports show which pages have structured data errors or missing fields. I check them weekly, not quarterly.

The fix: Implement FAQ schema on every page with a Q&A section. Use a plugin (Yoast, RankMath) or add JSON-LD manually. Test with Google's Rich Results Test before publishing.

Mistake 4: Writing for Humans, Not Parsers

This sounds counterintuitive — shouldn't content always be written for humans? Yes, but humans and parsers aren't mutually exclusive audiences. The mistake is writing only for humans: flowing prose, narrative structure, ideas that build across paragraphs. Parsers need self-contained units. Each paragraph should be able to stand alone as an answer. Each list item should make sense without the surrounding context.

The fix: After writing a section, ask: "If an AI extracted just this paragraph, would it be a complete, accurate, useful answer?" If not, restructure until it is. This discipline makes writing cleaner for human readers too.

One pattern I've been seeing more frequently: sites that block the Google-Extended token in their robots.txt to keep their content out of Google's AI model training. The instinct is understandable, but the tradeoff deserves precision. According to Google's crawler documentation, Google-Extended governs use of content for Gemini model training and grounding and does not affect inclusion in Google Search, so blocking it is not what keeps a page out of AI Overviews. It can still limit exposure in Gemini-based products. That decision warrants careful consideration before implementing it broadly.
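Before weighing that decision, it helps to confirm what a site's robots.txt actually blocks. The sketch below uses Python's standard `urllib.robotparser` to test user-agent tokens against robots.txt text. The sample rules and the helper name `is_blocked` are assumptions for illustration, and the parser reflects Python's matching logic, which may not mirror every crawler's exact behavior.

```python
from urllib.robotparser import RobotFileParser


def is_blocked(robots_txt, user_agent, url="/"):
    """Return True when the given robots.txt text disallows the URL for the agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


# Hypothetical robots.txt that opts out of Google-Extended only.
sample = """\
User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""

for agent in ("Google-Extended", "Googlebot"):
    print(agent, "blocked:", is_blocked(sample, agent))
```

Checking each token separately makes it clear which systems a rule touches and which it leaves alone.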

[IMAGE: Checklist infographic titled 'AEO Readiness Checklist' with 8 items: (1) Answer appears in first 1-2 sentences of each section