## What a Quality Gate Actually Is

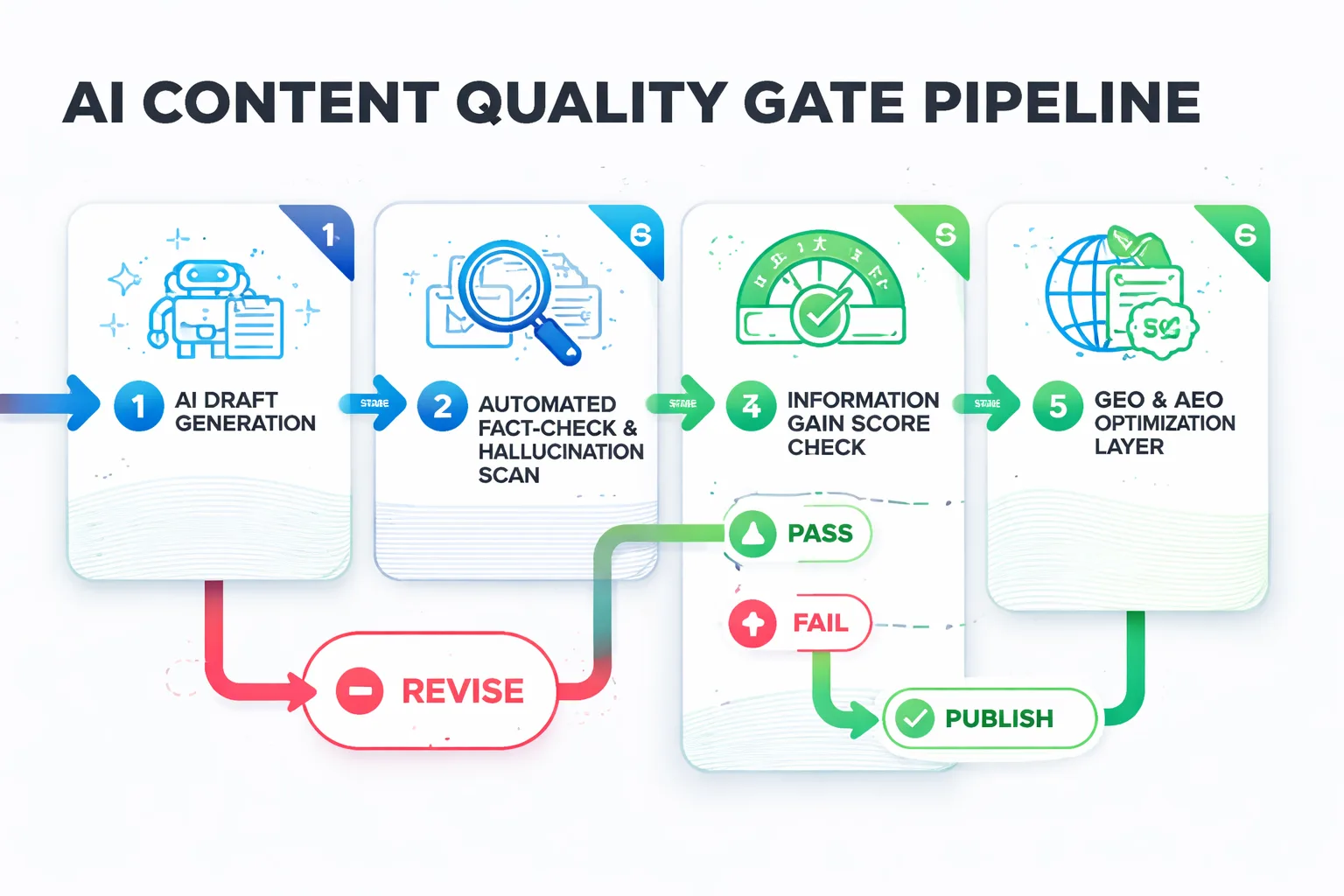

A quality gate is a programmatic checkpoint — or series of checkpoints — that AI-generated content must pass before it goes live. Think of it as the editorial equivalent of a CI/CD pipeline in software engineering: you don't ship code without automated tests, and you shouldn't ship AI content without automated validation. The gate can be human, algorithmic, or hybrid, but the key word is systematic. It's not a one-time review — it's a repeatable process baked into your publishing workflow.

Key Takeaways

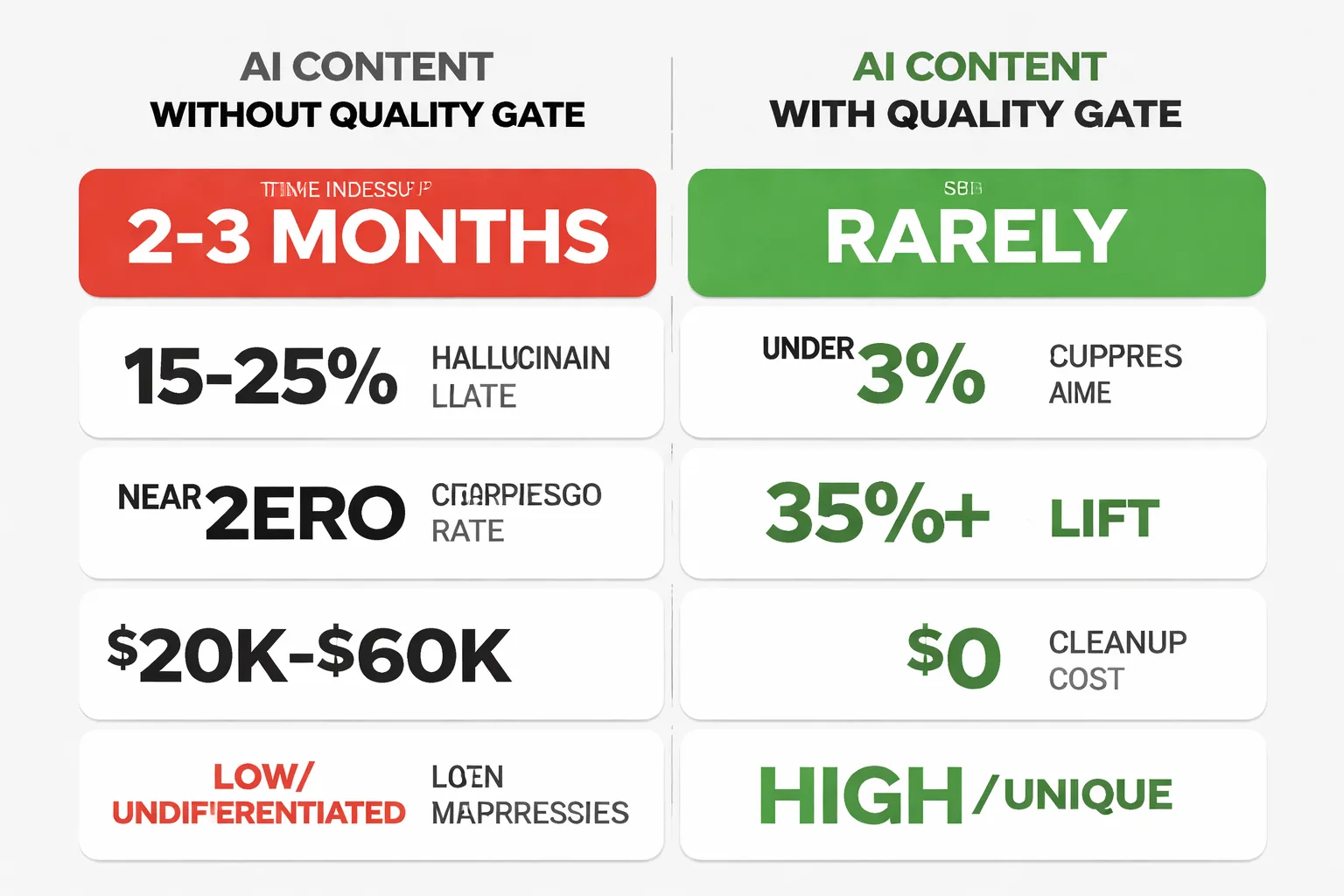

- Publishing AI content without a quality gate creates compounding financial damage — cleanup costs routinely exceed original production savings by 3–5x, with mid-size blogs facing $20,000–$60,000 in direct audit and consolidation fees.

- Google's helpful content system doesn't penalize AI-generated text — it penalizes content with no information gain, no expertise signal, and no original perspective, which is exactly what unreviewed AI output produces.

- Brands cited inside AI Overviews earn roughly 35% more organic clicks, making GEO optimization a core function of any quality gate — not an optional add-on.

- A three-checkpoint quality gate (automated fact-check, human-in-the-loop expert review, GEO optimization layer) can catch 90%+ of the failure patterns that cause index suppression, and adds only 35–60 minutes per article.

Most teams don't have one. They have a vague sense that someone should "check" the output before it goes live, but no defined criteria, no tooling, and no enforcement mechanism. That gap is where AI content quality issues accumulate: quietly, until they're not quiet anymore.

"The failure mode of AI content isn't technological; it's strategic. Google doesn't penalize AI-generated text. It penalizes content with no unique information, no expert layer, and no quality gate."


## What Are AI Content Quality Issues?

I've watched a pattern play out repeatedly across the brands I work with — what I now call the "Mount AI" curve. A content team ships a wave of AI-generated posts. Rankings spike encouragingly in the first few weeks. Then, around the two-to-three month mark, traffic falls off a cliff and pages start disappearing from the index entirely. The team panics, publishes more content to compensate, and the hole gets deeper.

The data from Radyant's content engineering research confirms what I've seen firsthand: deleting roughly 80% of a blog's AI-generated posts has, in documented cases, doubled organic traffic. Let that sink in. The dead weight of undifferentiated AI content was actively suppressing the pages that deserved to rank. What's counterintuitive here is that Google isn't running some AI-detection algorithm and flagging your posts. Over 85% of pages ranking in the top 20 contain AI-generated content — so the technology itself isn't the problem. The problem is content that has no information gain, no unique perspective, and no signal that a human expert touched it.

The three failure patterns I see most often:

- Hallucinations and factual drift: AI models confidently state statistics that don't exist, attribute quotes to the wrong people, or describe product features that were deprecated two years ago. In regulated industries, this isn't just an SEO problem; it's a liability.

- Homogenization: When every competitor is using the same foundation models with similar prompts, the outputs converge. Your "unique" article on content strategy reads identically to the one your competitor published yesterday. Google's quality rater guidelines explicitly flag this as a low-quality signal.

- No information gain: The content accurately summarizes what's already on the internet. It adds nothing. This is the most common failure mode and the hardest to catch without a deliberate review step.

As a Head of Content Strategy, I've seen teams spend $8,000–$15,000 on AI content production, then spend $40,000+ on cleanup — manual audits, redirect mapping, content consolidation, and the lost revenue during the traffic trough. The math doesn't work without a gate.

## What Are the Hidden Costs of AI Content?

Here's what the content cleanup bill actually looks like when you skip the quality gate. I'm not talking about hypotheticals — I'm talking about the line items I've seen in post-mortem audits.

First, there's the direct cleanup cost: a technical SEO audit to identify which pages are suppressed or deindexed, content consolidation work (merging thin posts into substantive ones), redirect mapping, and the editorial hours to add the expert layer that should have been there from the start. For a mid-size blog with 200–400 AI-generated posts, this runs $20,000–$60,000 in agency or contractor fees, conservatively.

Then there's the opportunity cost — the traffic and revenue you didn't earn during the suppression period. If your blog was generating $15,000/month in attributed pipeline before the content flood, and you lose six months of that while you recover, that's $90,000 in foregone revenue. That number doesn't appear on any invoice, which is exactly why it gets ignored in the original production decision.
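To make the arithmetic concrete, here is a minimal sketch that adds the direct cleanup fees to the foregone pipeline revenue. The function name and parameters are illustrative, and the inputs are the figures quoted above, not measured data.

```python
def skipped_gate_cost(cleanup_fees: float, monthly_pipeline: float, months_suppressed: int) -> float:
    """Direct cleanup fees plus pipeline revenue lost during the suppression period."""
    opportunity_cost = monthly_pipeline * months_suppressed
    return cleanup_fees + opportunity_cost


# Figures from the text: $15,000/month in attributed pipeline lost for six months,
# plus the low and high ends of the $20,000-$60,000 cleanup range.
low = skipped_gate_cost(20_000, 15_000, 6)
high = skipped_gate_cost(60_000, 15_000, 6)
print(f"${low:,.0f} to ${high:,.0f}")  # $110,000 to $150,000
```

Under those assumptions the opportunity cost alone ($90,000) is larger than even the high end of the cleanup invoice.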

Brand reputation damage is the third cost, and it's the hardest to quantify. When a hallucinated statistic gets picked up by a journalist, or a factually wrong how-to guide gets shared in a professional community, the correction cycle is brutal. I've watched brands spend months trying to rebuild trust with audiences who caught the errors. A single high-profile factual failure can cost more in brand equity than an entire year of content production savings.

The irony is that a quality gate isn't expensive to build. The cost is in the discipline of actually running it.

## How to Build a Programmatic Quality Gate

This is the section most articles skip — the actual architecture. Here's the three-checkpoint system I recommend for teams publishing at scale.

### Checkpoint 1: Automated Pre-Publish Validation

Before any human reviews the content, run it through automated checks. This is your first filter and it should catch the obvious failures:

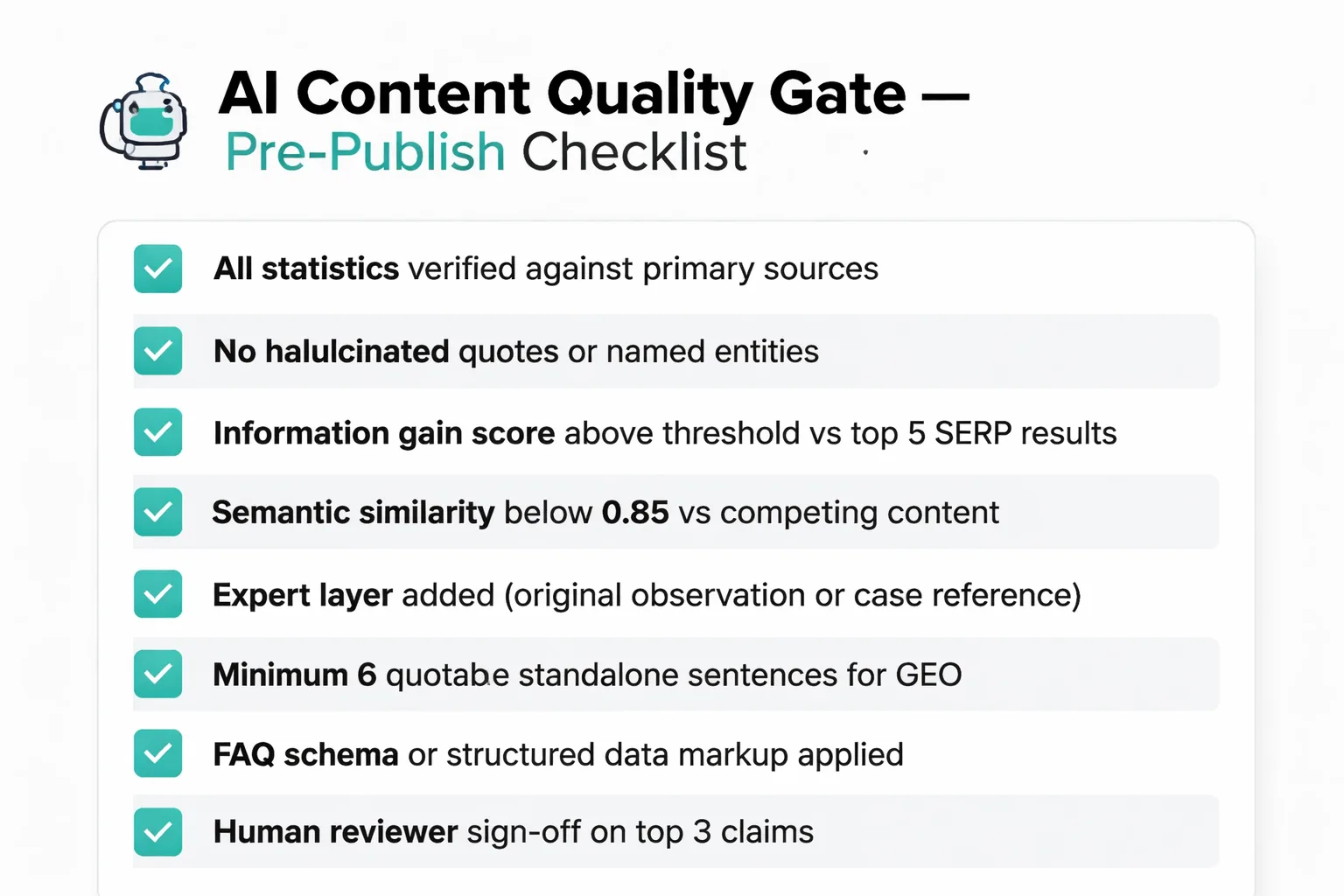

1. Factual claim extraction: Use an LLM-based fact-checking layer (tools like Factmata, or a custom GPT-4 prompt chain) to extract every specific claim (statistics, dates, named entities) and flag ones that can't be verified against a trusted source.

2. Plagiarism and homogenization check: Run the draft through Copyscape or a semantic similarity tool against your own published content and the top 10 SERP results. If cosine similarity is above 0.85 with existing content, it's a rewrite, not a new article.

3. Information gain score: This is the one most teams miss. Prompt an LLM to compare your draft against the top 5 ranking pages and score how many genuinely new data points, perspectives, or frameworks your article introduces. If the score is below a defined threshold, the draft goes back for enrichment: not to a human editor, but back to the AI with a specific prompt: "Add three insights not found in these competing articles."
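The three checks above can be sketched in a few lines of Python. This is a rough illustration under stated assumptions, not a production validator: the regex is a crude stand-in for LLM-based claim extraction, word-count vectors stand in for the embeddings a real semantic similarity tool would use, and the information gain score is assumed to arrive from a separate LLM comparison step. All function names are hypothetical. The 0.85 limit comes from the text; the minimum of 3 novel points is the threshold mentioned in the FAQ.

```python
import math
import re
from collections import Counter

SIMILARITY_LIMIT = 0.85  # above this, the draft is a rewrite, not a new article
MIN_INFO_GAIN = 3        # minimum number of novel points (threshold from the FAQ)

# Rough patterns for checkable claims: dollar amounts, percentages, four-digit years.
CLAIM_PATTERN = re.compile(r"\$\d[\d,]*(?:\.\d+)?|\d+(?:\.\d+)?%|\b(?:19|20)\d{2}\b")


def extract_claims(text: str) -> list[str]:
    """Return sentences that contain a number-like claim worth verifying."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if CLAIM_PATTERN.search(s)]


def cosine_similarity(a: str, b: str) -> float:
    """Cosine similarity between the word-count vectors of two texts."""
    va = Counter(re.findall(r"\w+", a.lower()))
    vb = Counter(re.findall(r"\w+", b.lower()))
    dot = sum(va[w] * vb[w] for w in va.keys() & vb.keys())
    norm = math.sqrt(sum(c * c for c in va.values()) * sum(c * c for c in vb.values()))
    return dot / norm if norm else 0.0


def pre_publish_check(draft: str, existing: list[str], info_gain_score: int) -> list[str]:
    """Return the reasons a draft fails; an empty list means it passes."""
    failures = []
    if any(cosine_similarity(draft, doc) > SIMILARITY_LIMIT for doc in existing):
        failures.append("too similar to existing content")
    if info_gain_score < MIN_INFO_GAIN:
        failures.append("information gain below threshold")
    return failures
```

The sentences returned by `extract_claims` would still need to be verified against a trusted source; the sketch only builds the list of what to check.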

### Checkpoint 2: Human-in-the-Loop Expert Review

Automation catches the obvious failures. Humans catch the subtle ones. But human review at scale only works if you scope it tightly — you can't ask an expert to rewrite every AI draft. What you can ask them to do is:

- Validate the top 3 claims in each article against their domain knowledge

- Add one original observation, case study reference, or data point that the AI couldn't have generated

- Flag any advice that's technically accurate but contextually wrong for your audience

This takes 15–20 minutes per article, not 2 hours. The key is giving reviewers a structured checklist rather than an open-ended editing task. I've found that unstructured review requests get ignored or rubber-stamped; structured checklists with specific yes/no gates get taken seriously.
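One way to encode a structured checklist with yes/no gates is a small dataclass. This is a minimal sketch; the class and field names are illustrative and simply mirror the three reviewer tasks listed above.

```python
from dataclasses import dataclass


@dataclass
class ReviewChecklist:
    """Yes/no gates for the expert reviewer (field names are hypothetical)."""
    top_claims_validated: bool        # top 3 claims checked against domain knowledge
    original_insight_added: bool      # one observation, case study, or data point added
    contextual_issues_resolved: bool  # advice that is wrong for this audience flagged and fixed

    def failed_gates(self) -> list[str]:
        """Names of the gates the reviewer answered 'no' to."""
        return [name for name, passed in vars(self).items() if not passed]

    def approved(self) -> bool:
        """The article moves on only when every gate is a 'yes'."""
        return not self.failed_gates()


review = ReviewChecklist(True, False, True)
print(review.approved())      # False
print(review.failed_gates())  # ['original_insight_added']
```

Because every field is a required boolean, a reviewer cannot submit the form without answering each gate explicitly, which is the point of replacing an open-ended editing request.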

### Checkpoint 3: GEO and AEO Optimization Layer

This is the checkpoint that most quality gate frameworks don't include — and it's increasingly the one that determines whether your content earns citations in AI search surfaces. According to Seer Interactive's analysis of AI Overview impact, brands cited inside AI Overviews earn roughly 35% more organic clicks and over 90% more paid clicks than those that aren't cited. That gap is only going to widen.

For GEO optimization, your quality gate should check:

- Does the article contain at least 6 quotable, standalone sentences that make sense extracted out of context? These are what AI search engines pull for citations.

- Does every question-format H2 have a 40–60 word self-contained answer paragraph directly beneath it?

- Are there structured data elements (FAQ schema, HowTo schema, checked with Google Search Console's structured data tooling) that help AI parsers understand the content's structure?

If the answer to any of these is no, the article doesn't publish until it does.
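Two of these checks are mechanical enough to automate. The sketch below assumes the draft is markdown with `## ` headings separated from paragraphs by blank lines; the function names are hypothetical. The first function flags question-format H2s whose answer paragraph falls outside 40–60 words, and the second builds FAQ markup using the schema.org `FAQPage` structure. Counting quotable standalone sentences is left to a human or an LLM pass.

```python
import json
import re


def question_headings_with_bad_answers(markdown: str, low: int = 40, high: int = 60) -> list[str]:
    """Return question-format H2 headings whose first paragraph is not low..high words."""
    problems = []
    # Blocks are runs of text separated by one or more blank lines.
    blocks = [b.strip() for b in re.split(r"\n\s*\n", markdown) if b.strip()]
    for i, block in enumerate(blocks):
        if block.startswith("## ") and block.endswith("?"):
            answer = blocks[i + 1] if i + 1 < len(blocks) else ""
            # A heading directly after a heading means there is no answer paragraph.
            words = 0 if answer.startswith("#") else len(answer.split())
            if not low <= words <= high:
                problems.append(block[3:])
    return problems


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    return json.dumps({
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {"@type": "Question", "name": question,
             "acceptedAnswer": {"@type": "Answer", "text": answer}}
            for question, answer in pairs
        ],
    }, indent=2)
```

An article publishes only when `question_headings_with_bad_answers` returns an empty list; the JSON-LD string would go inside a `<script type="application/ld+json">` tag in the page template.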

## What Is the GEO Impact of AI Content Quality Issues?

Most quality gate conversations focus on Google rankings. That's the right instinct for 2023. For 2025 and beyond, the conversation has to include generative engine optimization — because the traffic dynamics have fundamentally shifted.

What I'm seeing in performance data is a collapse in click-through behavior tied directly to AI Overviews. On informational queries where Google surfaces an AI Overview, organic CTR has fallen roughly 60% since mid-2024 — from around 1.76% to 0.61%. Even queries without AI Overviews saw organic CTR fall around 40%. The old model of "rank in position 1, get clicks" is broken.

The new model is: get cited inside the AI Overview, or accept dramatically reduced visibility. And here's the brutal truth about AI-generated content without a quality gate — it almost never gets cited. AI search engines are pulling from content that demonstrates genuine expertise, contains verifiable specific claims, and is structured for extraction. Thin, homogenized AI content fails all three criteria simultaneously.

This means the quality gate isn't just an SEO risk-management tool anymore. It's a GEO prerequisite.

If you're building a content pipeline at scale (and you should be, because the volume advantage of AI is real), the gate is what separates content that compounds in value from content that accumulates as technical debt. For a deeper look at how to structure that pipeline sustainably, "How to Build a Content Pipeline That Runs Without You" walks through the operational architecture in detail.

The teams I see winning right now aren't publishing less AI content. They're publishing AI content that has passed a gate — and that distinction is everything.

Is your AI content pipeline set up to catch failures before they cost you rankings?

## What Is Google's Actual Stance on AI Content?

Google's position is frequently misrepresented, so let me be direct about what the guidelines actually say. Google's helpful content system doesn't penalize AI-generated text. It penalizes content that fails to demonstrate experience, expertise, authoritativeness, and trustworthiness — the E-E-A-T framework embedded in the Google search quality rater guidelines.

The practical implication: an AI-generated article that includes verified data, a named expert's perspective, and original analysis can outrank a human-written article that's vague and generic. The technology of production is irrelevant. The quality of the output is everything.

What the quality gate does, structurally, is force your AI content to meet the E-E-A-T bar before it publishes. The automated fact-check addresses the expertise and authoritativeness signals. The human-in-the-loop review adds the experience layer. The GEO optimization layer ensures the content is structured for the trust signals that AI search engines use to decide what to cite.

Skip the gate, and you're gambling that your AI output naturally clears a bar that most AI output doesn't clear. That's not a content strategy — that's wishful thinking.

## How Can You Build the Gate Without Slowing Down?

The objection I hear most often: "We're publishing at scale because speed matters. A quality gate will kill our velocity."

This is a false tradeoff, and I've seen it proven wrong enough times that I'm no longer patient with it. A well-architected gate adds 20–40 minutes of processing time per article — most of which is automated. The human checkpoint, scoped correctly, adds another 15–20 minutes. You're not slowing down your content operation. You're adding a quality filter that runs in parallel with your production queue.

The teams that have implemented this architecture (automated validation, structured human review, GEO optimization layer) consistently report that their rejection rate at the gate is highest in the first two weeks, then drops sharply as the AI prompts get refined based on what's failing. The gate teaches you how to prompt better. Within a month, most teams see their pass rate climb above 80%, which means the gate is catching the remaining 20% or so of drafts that would have caused the most damage.
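Tracking that trend takes very little code. The sketch below assumes each gate run is logged as a week number plus a pass/fail flag, and each rejection is logged with a short reason string; both log formats and the function names are hypothetical.

```python
from collections import Counter


def weekly_pass_rates(results: list[tuple[int, bool]]) -> dict[int, float]:
    """Map week number to the share of drafts that passed the gate that week."""
    totals, passes = Counter(), Counter()
    for week, passed in results:
        totals[week] += 1
        passes[week] += passed  # True counts as 1, False as 0
    return {week: passes[week] / totals[week] for week in sorted(totals)}


def top_failure_reasons(reasons: list[str], n: int = 3) -> list[tuple[str, int]]:
    """Most frequent rejection reasons, to feed back into prompt revisions."""
    return Counter(reasons).most_common(n)


log = [(1, False), (1, True), (2, True), (2, True)]
print(weekly_pass_rates(log))  # {1: 0.5, 2: 1.0}
```

The most common rejection reasons are the ones worth addressing in the prompt first, which is how the gate turns into a feedback loop.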

That's the compounding return nobody talks about: the quality gate isn't just a filter, it's a feedback loop that improves your entire content production system over time by preventing AI content quality issues.

## FAQ

What is a quality gate in AI content workflows?

A quality gate is a systematic checkpoint (automated, human, or hybrid) that AI-generated content must pass before publishing. It validates factual accuracy, information gain, and structural optimization for search and AI citation. Without one, content teams have no reliable mechanism to catch the failure patterns that cause index suppression and brand damage.

Does Google penalize AI-generated content?

Google does not penalize AI-generated text as a category. Its helpful content system penalizes content that lacks experience, expertise, authoritativeness, and trustworthiness signals, regardless of how it was produced. AI content that passes a rigorous quality gate can and does outrank human-written content that lacks those signals.

What are the most common AI content quality issues?

The three most common AI content quality issues are hallucinations (fabricated statistics, misattributed quotes, outdated facts), homogenization (outputs that are semantically identical to competing content), and zero information gain (accurate summaries of existing content that add no new perspective or data). All three are catchable with a structured quality gate.

How much does it cost to clean up bad AI content?

Cleanup costs for a mid-size blog with 200–400 undifferentiated AI posts typically run $20,000–$60,000 in direct audit and consolidation work, plus significant opportunity cost from the traffic trough during recovery. This routinely exceeds the original production savings by 3–5x.

What is GEO optimization and why does it matter for AI content?

Generative Engine Optimization (GEO) is the practice of structuring content to earn citations inside AI search surfaces like Google AI Overviews, ChatGPT, and Perplexity. Brands cited inside AI Overviews earn roughly 35% more organic clicks. AI-generated content without a quality gate almost never earns these citations because it lacks the verifiable specificity and structural clarity that AI search engines require.

What tools can I use for automated fact-checking in a quality gate?

Factmata and custom LLM-based prompt chains (using GPT-4 or similar) are the most practical options for automated claim extraction and verification. For semantic similarity checks, Copyscape handles plagiarism, while vector-based similarity tools can flag homogenization against SERP competitors. Google Search Console structured data testing tools handle the schema validation layer.

How do I add a human-in-the-loop review without slowing down content production?

Scope the human review task tightly: validate the top 3 claims, add one original observation, and flag contextually wrong advice. Use a structured checklist with yes/no gates rather than open-ended editing requests. This takes 15–20 minutes per article and can run in parallel with your automated validation queue; it doesn't require sequential blocking of your production pipeline.

What is information gain and how do I measure it?

Information gain is the degree to which a piece of content introduces facts, perspectives, frameworks, or data points not already present in competing content. You can measure it by prompting an LLM to compare your draft against the top 5 ranking pages and score the number of genuinely novel elements. Set a minimum threshold (I use a score of at least 3 unique substantive points) and reject drafts that fall below it.

Stop publishing AI content blind. Build a quality gate that protects your rankings and your brand.