Google Search Quality Rater Guidelines: What They Actually Say About AI Content

Google Search quality rater guidelines (QRG) are often treated like a secret manifesto, but in my experience, the truth is far more practical. While many content teams fear that any AI involvement triggers a manual penalty, what I've found buried in the QRG is far more nuanced: Google doesn't care about the tool used to create the content—it cares about the E-E-A-T signals that AI often fails to generate on its own.

I've watched this pattern play out repeatedly across teams I've worked with who were doing everything "right" by the old playbook. The problem isn't that they used AI. The problem is that they never read what Google's quality raters are actually trained to look for — and those raters are the human feedback loop that shapes the ranking systems everyone obsesses over.

The Google Search Quality Rater Guidelines are 170 pages long. Most people skim the E-E-A-T section, nod along, and move on. That's a mistake this guide will help you stop making.

TLDR - Google quality raters are explicitly trained to flag AI-generated content that lacks originality, first-hand experience, or information gain — not just content that "sounds robotic." - E-E-A-T is not a checklist; it's a holistic judgment call raters make about whether a real, credible human is behind the content. - The "Lowest" quality rating — the one that can trigger manual action — is applied to content that is "copied, auto-generated, or paraphrased" without added value. - You can audit your existing content against rater standards using four specific signals: author credentials, sourcing depth, factual accuracy markers, and original insight.

What Do Quality Raters Do?

Google quality raters are contractors — real humans, tens of thousands of them globally — who evaluate search results using a standardized rubric. They don't directly change rankings. That bears repeating because it's the most misunderstood thing in SEO: raters cannot manually demote your page. What they do is generate labeled data that Google uses to train and validate its ranking algorithms. Think of them as the ground truth that the machine learning models are trying to approximate.

This distinction matters enormously for how you think about the guidelines. When raters are trained to flag AI-generated content that lacks originality, Google is essentially signaling: "We are building systems to detect exactly this." The guidelines are a preview of the algorithm's future behavior, not just a philosophical document. In my work tracking these guidelines across multiple update cycles, I've found that the 2024 revisions made the AI content language significantly more specific — not vague gestures toward "quality" but concrete behavioral markers raters are told to look for.

The two primary rating tasks raters perform are Page Quality (PQ) ratings and Needs Met (NM) ratings. PQ asks: is this page high quality in isolation? NM asks: does this page actually satisfy the user's search intent? Both matter for AI content, but PQ is where most AI-generated pages fail first. A page can technically answer a question while still being rated low quality because it shows no evidence of genuine expertise or original thought. That combination — technically responsive but experientially hollow — is exactly what mass-produced AI content produces at scale.

What Language Flags E-E-A-T AI Content?

I want to be precise here rather than paraphrase, because paraphrasing the guidelines is how misinformation spreads. The Google Search Quality Rater Guidelines explicitly instruct raters to assign the "Lowest" quality rating to pages that are "automatically generated with little to no editing or review" and content that is "copied or paraphrased" from other sources without adding value.

That phrase — "little to no editing or review" — is doing a lot of work. It's not a ban on AI. Google's own guidance confirms that using AI or automation isn't against their policies, provided it isn't used primarily to manipulate rankings. The line is drawn at effort and value, not at the tool used.

The guidelines also introduce the concept of "information gain" — the idea that a page should add something to the existing body of knowledge on a topic, not just restate what's already ranking. This is the criterion that eliminates most AI content briefs I review. When an AI model is prompted to "write a 1,500-word article about X," it synthesizes existing content. By definition, it cannot add information gain without deliberate human intervention — a proprietary study, a first-hand account, a novel analysis.

Raters are also trained to look for what the guidelines call "Lowest" E-E-A-T signals, which include: - No author information or clearly fake credentials - Content that contradicts well-established expert consensus - Pages where the main content is "thin" relative to the page's stated purpose - Excessive ads or affiliate links that suggest the primary purpose is monetization, not helpfulness

One line from the guidelines stood out to me on careful reading: raters are told that a page can be rated Lowest even if it contains accurate information, if the presentation suggests the creator has no genuine expertise. Accuracy alone isn't enough. That's a fundamentally different bar than most content teams are optimizing for.

E-E-A-T AI Content in Practice: What Passes vs. Gets Flagged

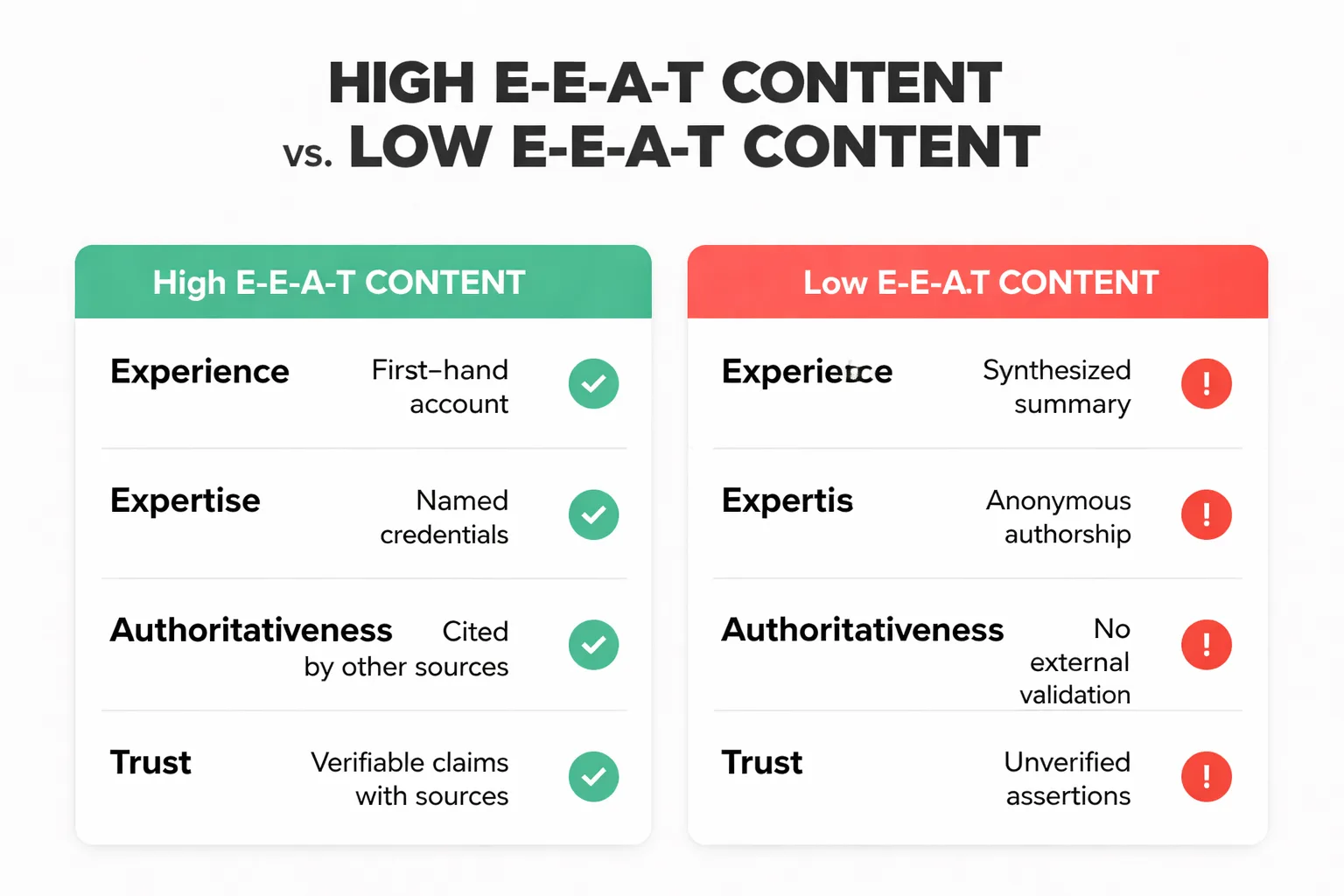

E-E-A-T — Experience, Expertise, Authoritativeness, and Trust — is not a four-box checklist. The guidelines are explicit that raters make a holistic judgment, not a point-by-point audit. That said, there are concrete signals I've seen consistently move content toward high or low ratings.

Experience is the newest addition to the framework, added specifically because Google recognized that AI could fake expertise but couldn't (yet) fake lived experience. A medical article written by someone who has managed a chronic condition reads differently than one written by someone who researched it. Raters are trained to look for first-person specificity: not "patients often report" but "when managing a personal diagnosis, the thing nobody told me was..."

For AI content creators, this is the hardest signal to manufacture — and the most important one to inject deliberately. If AI is being used to draft content, the human editor's job is to layer in genuine experience. Not fake anecdotes. Real observations, real data from actual testing, real client outcomes. In my work with content teams at Meev, I've found that a single specific, verifiable detail consistently improves content quality in ways that generic synthesis cannot replicate.

Expertise is demonstrated through accuracy, appropriate use of technical language, and the ability to acknowledge nuance and uncertainty. AI models are notoriously bad at the last one — they tend toward confident, smooth prose even when the underlying topic is genuinely contested. Raters are trained to notice when a page presents complex topics as settled when they aren't.

Authoritativeness is largely an off-page signal — it's about whether other credible sources cite or reference the content. But on-page, raters look for whether the author is named, whether their credentials are verifiable, and whether the publication has a clear editorial standard.

Trust is the foundation. The guidelines state that Trust is the most important E-E-A-T dimension. For AI content specifically, trust signals include: accurate factual claims, transparent disclosure of AI use where relevant, clear sourcing, and a website that demonstrates accountability (real contact information, clear ownership, consistent editorial voice).

Here's a comparison that illustrates the difference in practice:

| Signal | Passes Rater Review | Gets Flagged |

| Author attribution | Named author with verifiable bio and relevant credentials | "Staff Writer" or no author listed |

| Sourcing | Inline citations to primary sources (studies, official data) | Vague references to "experts say" |

| Experience markers | Specific first-hand observations or original data | Generic synthesis of existing content |

| Factual accuracy | Claims match current expert consensus with caveats where appropriate | Confident assertions on contested topics |

| Content depth | Covers the topic beyond what top-ranking pages already say | Restates the same points as competitors |

How to Audit Your Existing Content Against Google Content Quality Standards

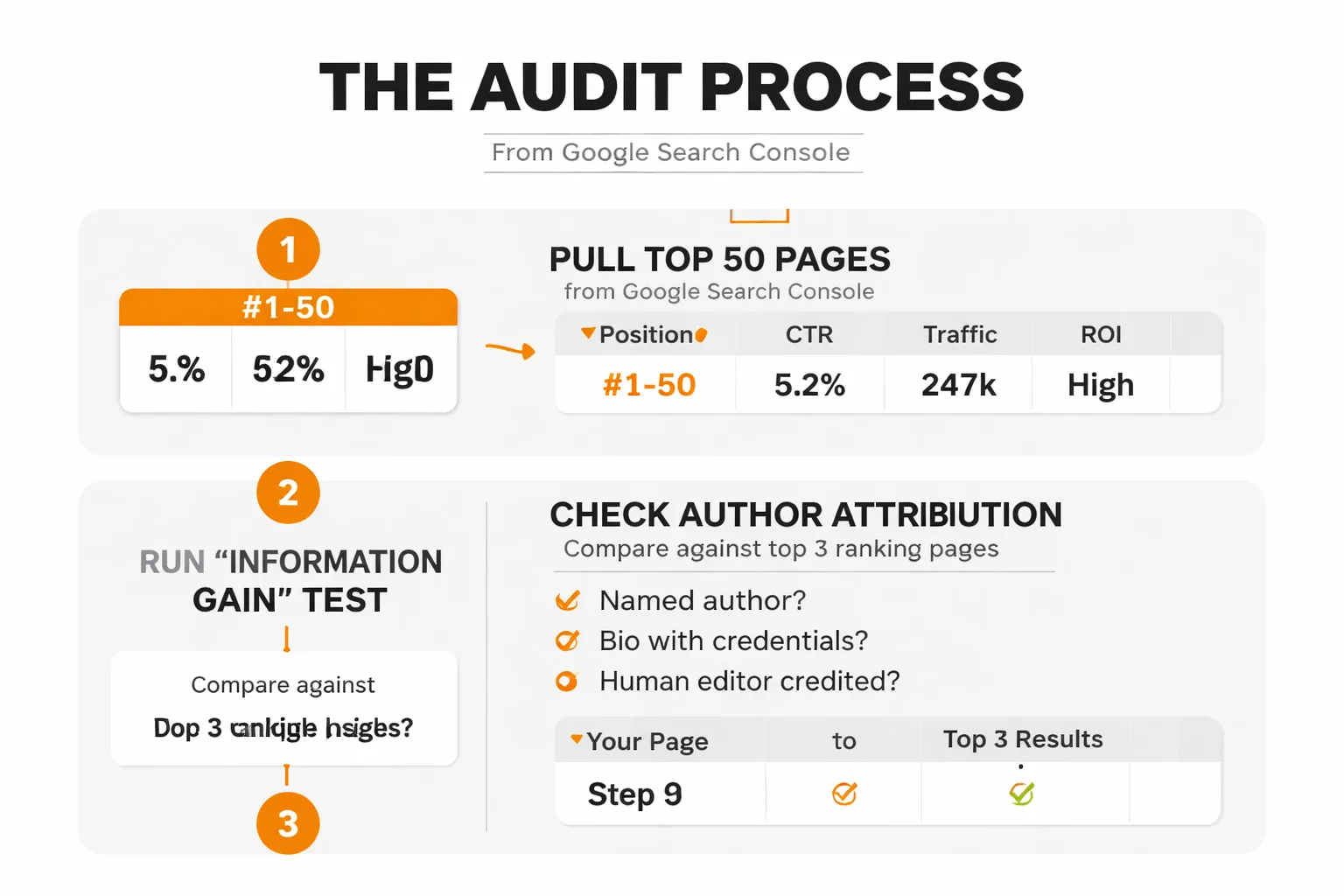

I recommend a quarterly audit of existing content for any team serious about QRG alignment. The goal isn't to retroactively fix everything — it's to identify which pages are most exposed to a quality rating downgrade and prioritize accordingly.

Here's the process I use, step by step:

1. Pull your top 50 pages by impressions from Google Search Console. These are your highest-exposure pages — the ones raters are most likely to encounter if your site is sampled. 2. Check author attribution on each page. Does every page have a named author? Does that author have a bio with verifiable credentials? If AI assistance was used, is there a human editor credited? Fix the ones that don't pass this check first — it's the lowest-effort, highest-impact fix. 3. Run the "information gain" test. Open the top 3 ranking pages for your target keyword. Read your page. Ask honestly: does your page say anything those three pages don't? If the answer is no, that page is a candidate for a content refresh, not just a meta description tweak. 4. Check every factual claim against a primary source. Not another blog post — a study, an official report, a named expert. If a claim can't be sourced to something verifiable, either remove it or qualify it explicitly. 5. Evaluate the "Needs Met" dimension. Read the page as if you just searched for the primary keyword. Did you actually get what you came for? Or did you get a lot of context-setting before the real answer? Front-load your answers. 6. Flag thin pages for consolidation or expansion. The guidelines define "thin" content not by word count but by whether the main content fulfills the page's stated purpose. A 300-word page that fully answers a simple question can be high quality. A 2,000-word page that circles the topic without landing can be thin.

For teams using AI content creation at scale, I'd add a seventh step: review your AI prompts against the E-E-A-T framework. If the brief doesn't explicitly ask for first-hand experience, sourced claims, and original analysis, the AI output won't contain them — and no amount of post-editing will fully compensate. This connects directly to the content brief work covered in the next section, and it's also why the 7 signals Google uses to rank AI vs. human content matter — the overlap with rater criteria is significant.

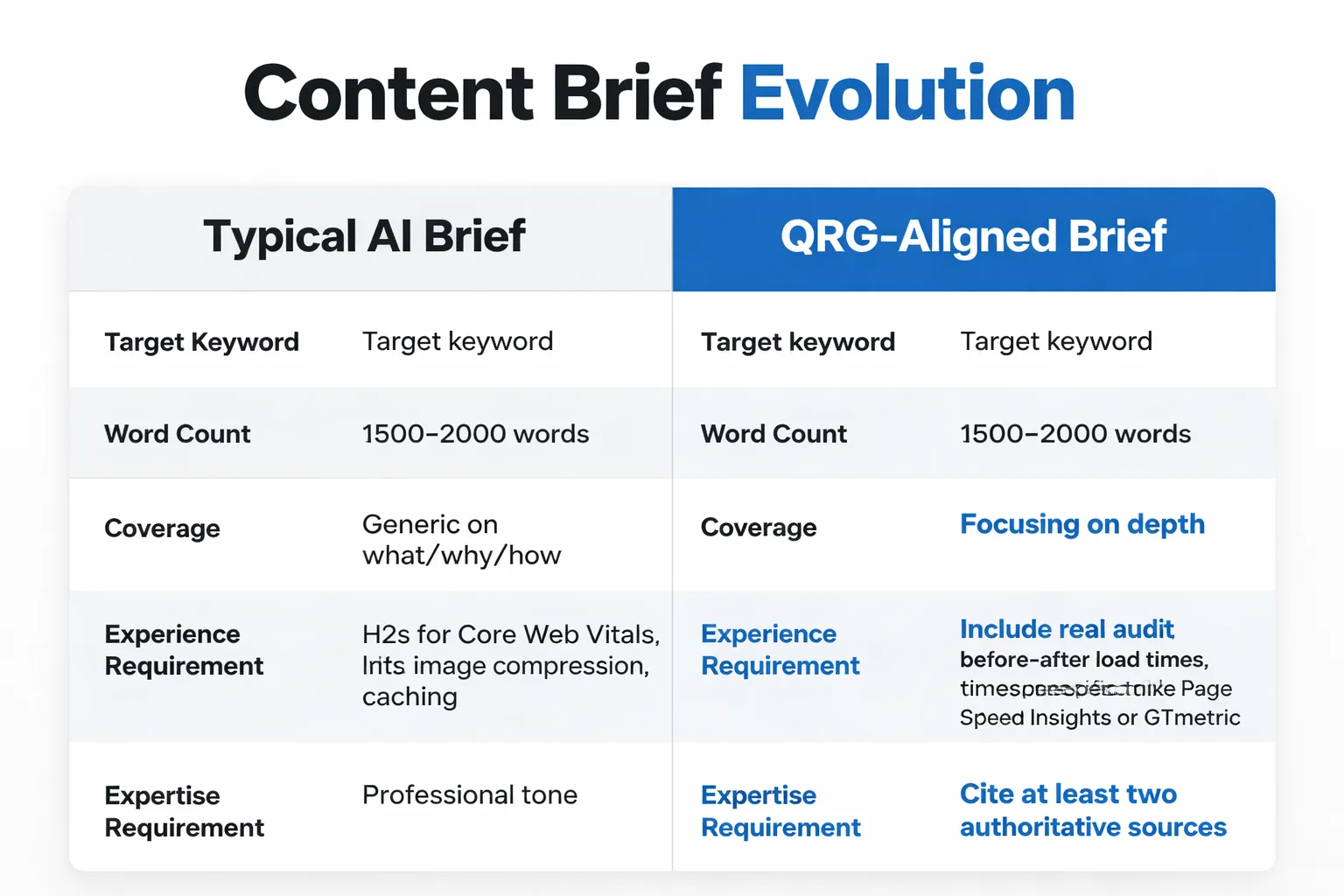

Building a Content Brief That Bakes In Quality

Most content briefs I see in the wild are keyword briefs dressed up as content briefs. They tell writers what to cover and how long to write — but they don't tell writers how to demonstrate quality at the rater level. Here's what that looks like before and after.

Before (typical AI content brief): - Target keyword: "page speed optimization for SEO" - Word count: 1,500-2,000 words - Cover: what page speed is, why it matters, how to improve it - Include H2s for: Core Web Vitals, image compression, caching - Tone: informative, professional

After (QRG-aligned content brief): - Target keyword: "page speed optimization for SEO" - Word count: 1,500-2,000 words — but depth over length. Every section must pass the "so what?" test. - Experience requirement: Include at least one specific result from a real site audit — actual before/after load times, actual tool used (e.g., PageSpeed Insights, GTmetrix), actual impact on rankings or conversions. - Expertise requirement: Cite at least two primary sources (Google's Core Web Vitals documentation, a published study on speed/conversion correlation). No citing other blog posts as sources. - Information gain requirement: Before writing, review the top 3 ranking pages. Identify one angle, data point, or recommendation they don't cover. That's your lead. - Trust requirement: Author bio must include relevant credentials. If AI-assisted, name the human editor responsible for factual accuracy. - Needs Met requirement: The primary query must be answered in the first 100 words. No preamble. - Thin content check: Each H2 section must include either a concrete example, a specific number, or a step-by-step process. No section that is purely definitional.

The difference isn't just philosophical — it's operational. When these requirements are baked into the brief, writers (human or AI-assisted) have a quality floor, not just a content outline. At Meev, we've seen teams that front-load these requirements into the brief rather than catching problems after the draft is done consistently report significant reductions in post-publication editing time.

The most important shift: Stop thinking of the content brief as a keyword targeting document. Think of it as a quality rater pre-flight checklist. If the brief can't pass a rater's Page Quality review on paper, the published content won't either.

One more point I want to make: the guidelines treat Google Search Console structured data as a trust signal in a roundabout way. Pages with proper schema markup — author schema, article schema, fact-check schema — give raters (and the systems trained on their feedback) more structured signals to evaluate. It's not a direct ranking factor, but it's part of the trust infrastructure that supports a high PQ rating. If structured data isn't being implemented correctly, that's a separate problem worth addressing.

The Contrarian Take on AI Content Rules

Most people think the solution to AI content quality problems is to write less AI content. In my view, that's the wrong conclusion. The solution is to use AI for what it's actually good at — structure, speed, coverage — and to invest human effort in the three things AI cannot generate: original experience, verified sourcing, and genuine information gain.

At Meev, we've found that content teams publishing 30% less content but investing the saved time in deeper research and real expert input consistently outperform teams publishing at maximum AI velocity. The Google Search quality rater guidelines aren't anti-AI. They're anti-hollow. That's a meaningful distinction, and it's one that changed my entire approach to production strategy when I took it seriously.

The teams winning right now aren't the ones blocking AI (Google-Extended blocking is a separate conversation about training data, not rankings). They're the ones who treat AI as a first draft engine and human expertise as the quality layer that makes the draft worth publishing.