You've stared at a Screaming Frog export with 47,000 rows, a Google Search Console report flagging 312 pages with declining impressions, and a content inventory spreadsheet that hasn't been touched since Q3. The audit is already two weeks late. The client wants answers, not a pivot table. This is the exact situation where an AI-driven SEO audit workflow stops being a nice-to-have and starts being the only realistic path forward.

I've been running technical SEO audits for over a decade, and the truth is that the bottleneck was never data collection — it was interpretation at scale. Claude Code changes that equation. Not by replacing judgment, but by collapsing the gap between "here's what the data says" and "here's what we actually fix today."

The shift isn't from manual to automated. It's from reporting to remediation. Claude Code doesn't just tell you what's broken — it writes the fix.

TLDR: - Claude Code transforms SEO audits from passive reporting into active remediation by executing code against your actual site data — not just summarizing it. - Marketing teams using agentic tools like Claude Code report a 75% reduction in time spent on repetitive strategic analysis like SEO, according to Stormy.ai. - The biggest failure mode isn't hallucination — it's context window overflow on large crawl datasets. Chunking your inputs is non-negotiable. - A repeatable Monday morning audit routine using GSC exports and Screaming Frog CSVs can surface cannibalization signals and thin content clusters in under 90 minutes, no engineering support required.

Why Manual Audits Break Down

Manual SEO audits fail at scale for a specific, predictable reason: the cognitive load of pattern recognition across thousands of URLs exceeds what any human analyst can sustain. It's not that auditors are bad at their jobs — it's that the work is structurally mismatched to human attention spans.

Here's where the breakdown happens every single time. Crawl data interpretation is the first wall. Pulling a Screaming Frog CSV with 15,000 URLs and filtering by status code is straightforward, but identifying which redirect chains are creating PageRank dilution versus which ones are benign legacy redirects — that requires cross-referencing three or four data sources simultaneously. Most analysts do this manually, which means they catch 60% of the real issues and spend four hours doing it. content gap analysis is the second wall. Comparing existing content clusters against competitor topical coverage, then mapping that against internal link structure, then layering in GSC impression data to find high-potential keyword opportunities that are being cannibalized by near-duplicate pages — that's not a spreadsheet task. That's a reasoning task. And the third wall is the one nobody talks about: the translation layer between "audit finding" and "implementation ticket." Even when a human auditor correctly identifies a schema markup gap or a cluster of thin content pages, converting that finding into an actionable fix — with the actual code or copy — takes another full work cycle. According to Stormy.ai, marketing teams using agentic tools like Claude Code report a 75% reduction in time spent on exactly this kind of repetitive strategic analysis. That number tracks with what I've seen in practice.

Claude Code SEO Workflow

Here's the setup. This isn't theoretical — it's the pipeline I use for mid-size sites with 5,000 to 50,000 indexed pages.

First, the inputs. I require three files minimum: 1. Screaming Frog export (CSV): All crawled URLs with status codes, title tags, meta descriptions, word count, canonical tags, and inbound internal link count. 2. GSC Performance export (CSV): Last 16 weeks, filtered to queries with at least 10 impressions. Include URL, query, clicks, impressions, CTR, and average position. 3. GSC Coverage export (CSV): Valid, excluded, and error URLs with their specific exclusion reasons.

Once those three files are ready, open Claude Code in the terminal and start a new session. The key distinction here — and this is what separates Claude Code from a standard ChatGPT prompt — is that Claude Code is an agentic CLI tool. It doesn't just read data and respond. It can write Python scripts, execute them against files, read the output, and iterate. It's the difference between asking a consultant to review a spreadsheet versus handing a developer the data and saying "find the problems and fix them."

I always structure the opening prompt the same way:

You are an SEO technical auditor. I'm providing three CSV files:

[screaming_frog.csv], [gsc_performance.csv], [gsc_coverage.csv].

Your task:

1. Write and execute a Python script to identify pages where GSC

impressions dropped >20% in the last 8 weeks vs. prior 8 weeks.

2. Cross-reference those URLs against the Screaming Frog data to flag

any with word count under 400, missing H1, or non-canonical status.

3. Output a prioritized CSV with columns: URL, issue_type,

recommended_action, priority_score (1-10).

That's it. Claude Code writes the script, runs it, catches errors, fixes them, and hands back a prioritized output file. What used to take me three hours of manual cross-referencing now takes twelve minutes. The Claude Code SEO workflow here isn't magic — it's just agentic execution applied to a well-defined task.

For schema injection specifically, I add a fourth step to the prompt: ask Claude Code to generate the actual JSON-LD markup for the top 20 priority pages based on their content type. It pulls the page title, meta description, and URL pattern to infer the correct schema type — Article, FAQPage, HowTo — and outputs ready-to-deploy code blocks. This directly addresses one of the biggest gaps in standard audits: the finding exists, but nobody writes the fix.

If you're also thinking about how structured data connects to rich result performance, the 5 Structured Data Mistakes Killing Rich Results piece on Meev is worth reading alongside this workflow — it covers the implementation errors that even good audits miss.

What Spreadsheets Can't Surface

This is where the real value lives.

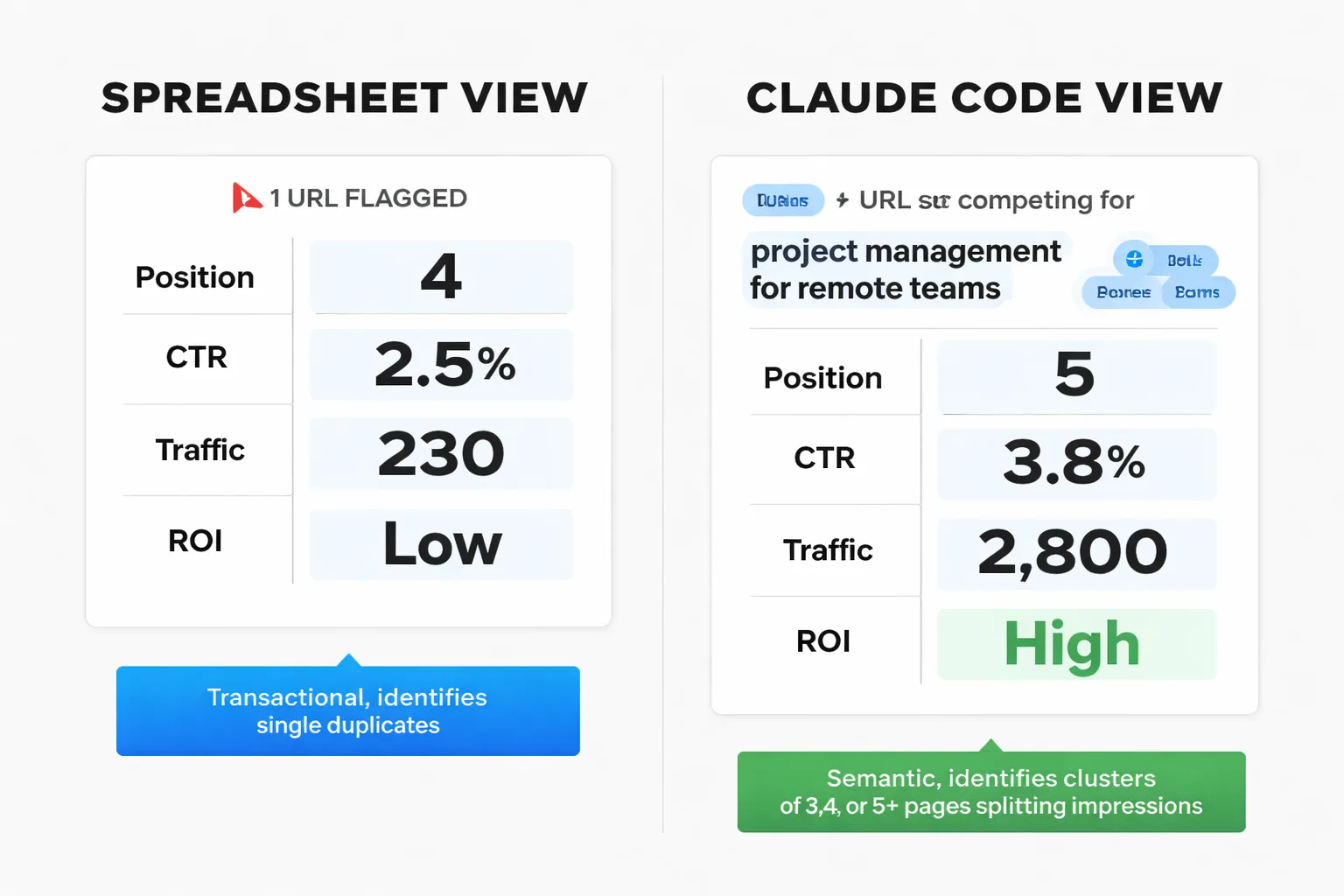

Spreadsheets are transactional. They tell you what exists. Claude Code reasons about what it means. The patterns it surfaces that pivot tables simply cannot include semantic clustering failures, cannibalization signal clusters, and thin content networks — and in my experience, the differences between these categories matter enormously for how you fix them.

SEO keyword cannibalization is the clearest example. A standard audit flags two URLs targeting the same keyword. But when I give Claude Code the full GSC query-to-URL mapping, it identifies clusters of three, four, or five pages that are collectively splitting impressions on a single topic — none of them ranking well, all of them undermining each other. I tested this on a B2B SaaS site and found a cluster of seven blog posts all competing for variations of "project management for remote teams." No individual post was obviously a duplicate. But together, they were cannibalizing each other across 340 related queries. The fix wasn't deleting pages — it was consolidating four of them into one authoritative pillar and redirecting the others. Claude Code identified the cluster, ranked the pages by authority signals, and drafted the consolidation recommendation with specific redirect mappings. That's a half-day of analyst work compressed into 20 minutes.

Thin content clusters are subtler. A page with 600 words isn't automatically thin — but a cluster of 15 pages averaging 580 words, all targeting bottom-of-funnel queries, with zero internal links pointing to them and average positions between 18 and 35? That's a thin content network that's actively suppressing the domain's topical authority on that subject. Claude Code catches this because it's doing multi-variable pattern matching across the entire URL set simultaneously, not row-by-row filtering.

98% of marketers plan to increase their investment in AI SEO in 2026, according to Typeface. In my experience, the ones who will actually see ROI from that investment are the ones using it for pattern recognition at this level — not just content generation.

One more pattern I recommend watching for: Google title tag rewrites. Claude Code can cross-reference current title tags against GSC click data to identify pages where Google is consistently rewriting the title in the SERP — a strong signal that the title tag is misaligned with searcher intent. It then generates replacement title tags based on the query language that's actually driving impressions. That's a genuinely novel insight that no standard audit checklist surfaces, and it's one of my favorite outputs to bring into client reviews.

Where AI Content Audit Automation Still Fails

The failure modes matter as much as the wins.

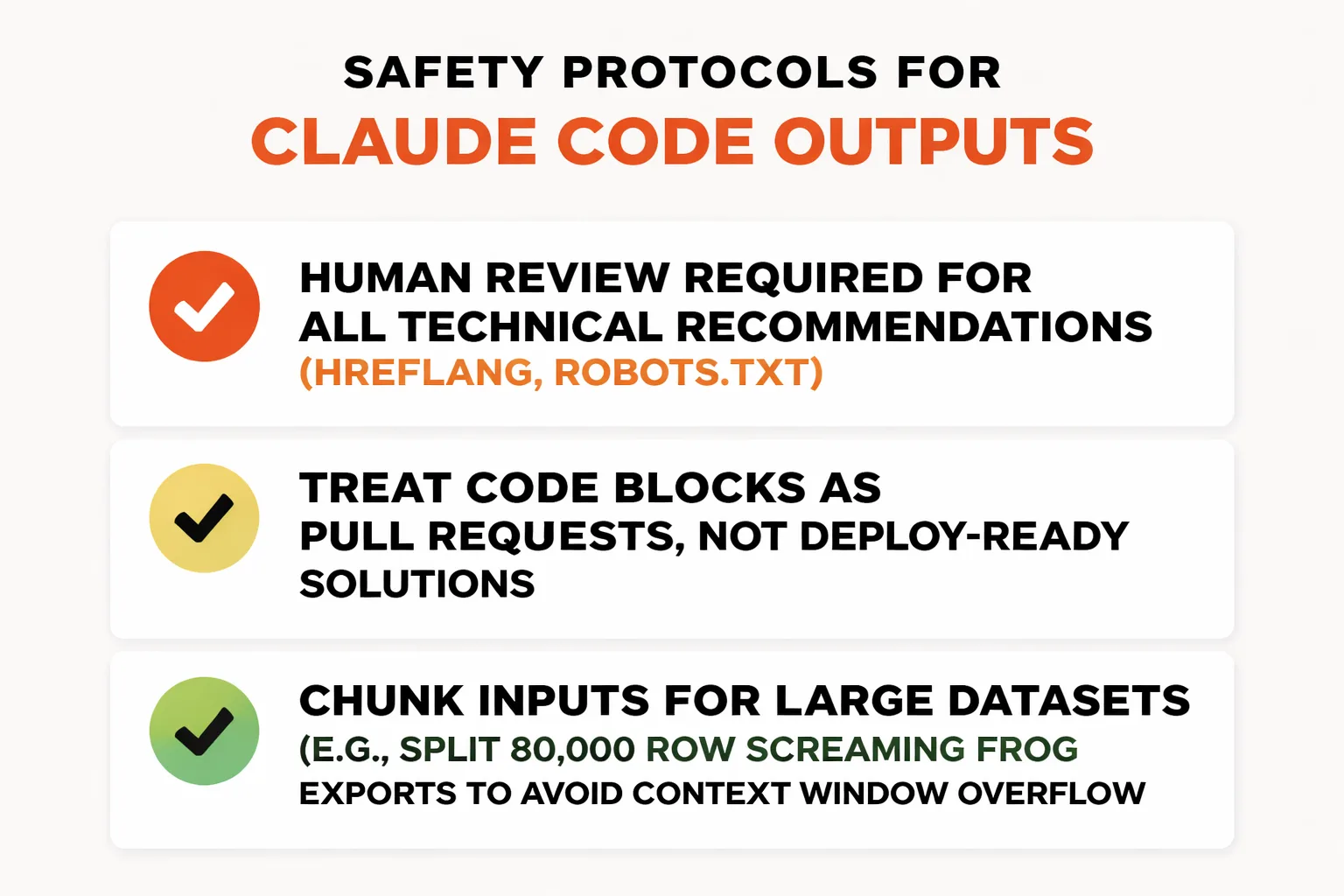

The most dangerous failure is confident hallucination on implementation recommendations. Claude Code sometimes suggests a technical fix — a specific hreflang configuration, a particular robots.txt directive — that is syntactically plausible but contextually wrong for a given site architecture. It doesn't know the CMS constraints, CDN configuration, or deployment pipeline. I've caught recommendations that would have broken staging environments if implemented without review. This is why human review on the output layer is non-negotiable. Treat every generated code block as a pull request that needs approval, not a deploy-ready solution.

The second failure mode is context window overflow. Feeding Claude Code a Screaming Frog export with 80,000 rows will result in either silent truncation or pattern inferences based on incomplete data. My rule: chunk the inputs. Split large crawls by subdirectory or content type. Run separate audit passes for technical issues versus content quality versus internal linking. Merge the outputs manually at the end. It's less elegant, but it's accurate.

The third issue is that Claude Code has no real-time web access during a standard session. It can't check live rankings, pull competitor data, or verify that a recommended fix actually resolved the issue post-deployment. For AI content audit automation to be truly closed-loop, I build a verification step into the workflow — re-export GSC data two weeks after implementation and run a comparison pass.

Finally, there's the question of what martech.org calls the fundamental tension in AI-driven SEO: the tools that make optimization faster also make it easier for everyone to optimize, which compresses competitive advantage. The answer isn't to avoid the tools — it's to use them on the strategic layer (architecture decisions, cluster consolidation, schema implementation) where human judgment adds irreplaceable value, not just on the execution layer.

How to Build a Weekly AI-Driven SEO Audit Routine

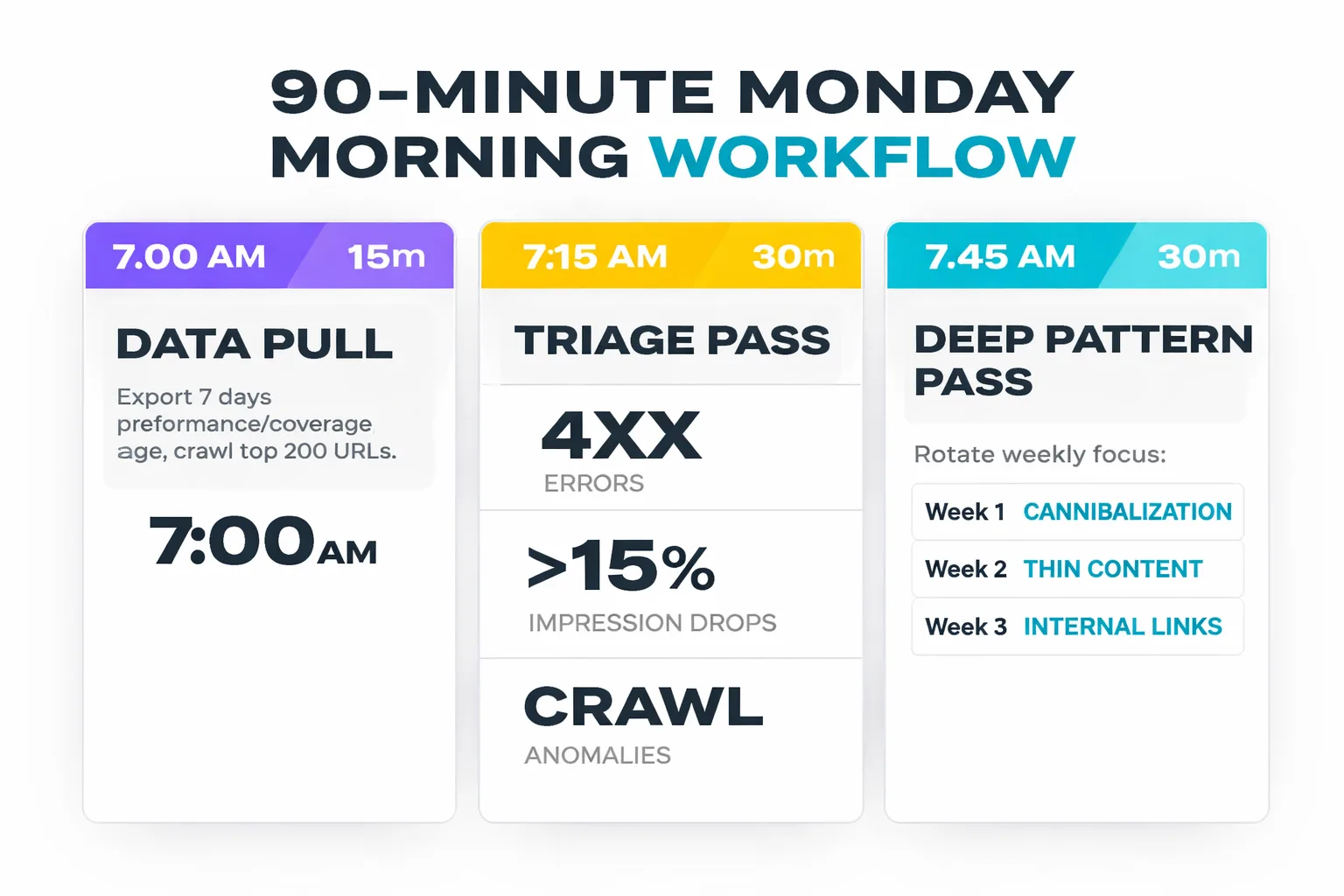

Here's the Monday morning workflow I've developed through sustained iteration. It takes 90 minutes and requires no engineering support — just Claude Code, GSC access, and a Screaming Frog license.

7:00 AM — Data pull (15 minutes) Export last 7 days of GSC performance data (queries + pages). Export last 7 days of GSC coverage data. If a Screaming Frog crawl ran in the last two weeks, use that file. If not, run a quick crawl on the top 200 URLs by traffic.

7:15 AM — Triage pass (30 minutes) Open Claude Code. Run the standard triage prompt: identify any new 4xx errors, any pages that dropped more than 15% in impressions week-over-week, and any new crawl anomalies (sudden changes in word count, missing canonical tags, new redirect chains). This is the "nothing is on fire" check.

7:45 AM — Deep pattern pass (30 minutes) This is where I rotate focus weekly. Week 1: cannibalization signals. Week 2: thin content clusters. Week 3: internal link gaps (pages with high impressions but low internal link count). Week 4: schema coverage audit. Rotating focus prevents audit fatigue and ensures systematic coverage of the full technical surface area over a month.

8:15 AM — Output review and ticketing (15 minutes) I review Claude Code's prioritized output, then approve, modify, or reject each recommendation. Approved items get converted into implementation tickets. For any code-level fixes (schema injection, meta tag updates), I attach the generated code block directly to the ticket.

This routine compounds over time. After four weeks, I have a rolling baseline. After eight weeks, trend analysis becomes possible — not just "what's broken this week" but "what's been slowly degrading for two months." That's when the workflow starts catching issues before they become ranking drops.

For teams thinking about how this fits into a broader content strategy, the connection to content cluster architecture is direct: the weekly audit identifies which clusters are underperforming, and the cluster strategy determines whether to consolidate, expand, or redirect. At Meev, we've designed these two workflows to feed each other — and I've seen the compounding effect firsthand when both are running in sync.