Answer engine optimization (AEO) isn't a future concern. It's the gap between being cited by AI answer engines today and watching your traffic quietly disappear. If organic numbers are softening while the content calendar stays full, the content is likely structurally invisible to the systems that now answer many search queries before a user ever clicks a link.

This isn't about minor tweaks. It's about a fundamental mismatch between how most blogs are written and how AI answer engines — ChatGPT, Perplexity, Gemini — actually retrieve and surface information. Here's exactly what that looks like, and more importantly, how to diagnose it in your own content.

Only 7% of AI Mode searches led to clicks in 2026. If AI engines aren't citing you, you're not just losing rankings — you're losing the entire conversation.

TLDR

- Most content is structurally excluded from AI citations because it buries answers, lacks entity markup, and fails the 40-60 word direct-answer test.
- Page speed and crawlability are AEO issues, not just SEO issues: AI bots deprioritize slow, poorly structured pages.
- You can test AI visibility in under 20 minutes using prompt testing across ChatGPT, Perplexity, and Gemini.
- Quick fixes (formatting, schema, direct answers) can be deployed in an afternoon; structural rewrites require a content architecture audit.

What 'Invisible to AI' Actually Means

AI answer engines don't crawl and rank the way Google does. They retrieve content based on semantic relevance, structural clarity, and entity recognition — then synthesize it into a response. If your content doesn't pass their retrieval filters, it simply doesn't exist in their world, regardless of your domain authority or backlink profile.

Here's the mechanism: when a user asks Perplexity or Gemini a question, the system scans indexed content for passages that directly answer the query, are structured in a way the model can parse cleanly, and come from sources with clear authorship and topical authority signals. A blog post that buries its main point in paragraph six, uses vague headers like "More Information," and has no schema markup is essentially handing the citation to a competitor who formatted their content better.

In my work auditing content libraries over the past two years, I've found a consistent pattern: sites with strong traditional SEO metrics (high domain authority, healthy backlink profiles, even solid traffic) are still invisible in AI outputs because they were built for keyword ranking, not entity retrieval. These are two fundamentally different games, and most content teams I've worked with haven't made the switch yet.

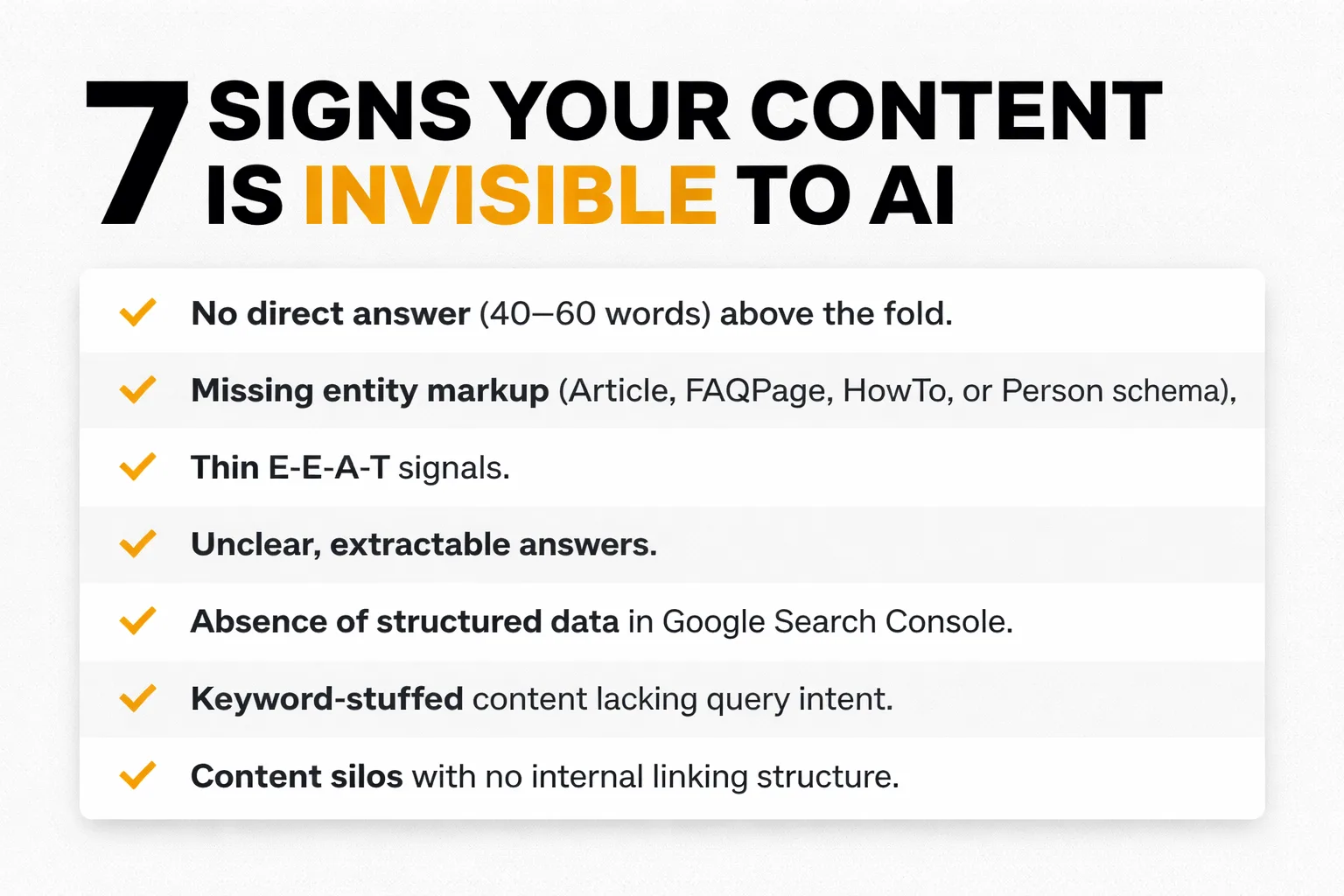

The 7 Warning Signs of Failing AEO Optimization

Sign 1: No Direct Answer Above the Fold

If your article's first 100 words don't contain a clear, extractable answer to the question implied by your title, AI engines will skip you. Full stop. The 40-60 word direct-answer paragraph isn't a nice-to-have — it's the retrieval target. I recommend testing this directly: paste your intro into ChatGPT and ask "What is the direct answer to [your article's question] based on this text?" If it can't find one, neither can Perplexity.
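If you'd rather screen a batch of posts before prompt testing them one by one, a word count on the opening paragraph is a rough first filter. This is a minimal sketch, assuming paragraphs are separated by blank lines; the function names are hypothetical, and a passing count only says the paragraph is the right size, not that it actually answers the question.

```python
import re


def first_paragraph(text: str) -> str:
    """Return the first non-empty paragraph (blocks separated by blank lines)."""
    for block in re.split(r"\n\s*\n", text.strip()):
        if block.strip():
            return block.strip()
    return ""


def direct_answer_check(text: str, low: int = 40, high: int = 60) -> dict:
    """Count words in the opening paragraph and compare to the 40-60 word target."""
    words = len(first_paragraph(text).split())
    return {"words": words, "in_range": low <= words <= high}
```

Posts that fail the count are the ones to rewrite first; posts that pass still need the ChatGPT check described above.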

Sign 2: Missing Entity Markup

Entity markup, specifically Schema.org structured data, is how AI systems understand what your content is about, not just that it exists. If you're not using Article, FAQPage, HowTo, or Person schema, you're communicating in a language AI crawlers can't fully parse. Check the structured data (rich result) reports in Google Search Console right now. If they're empty or showing errors, this is your first fix.
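For a sense of what this markup looks like, here is a minimal sketch that builds an Article JSON-LD block with a Person author. The property names (`headline`, `author`, `datePublished`, `dateModified`, `mainEntityOfPage`) come from Schema.org; the function name and every value passed to it are placeholders, not a recommendation for what your schema must contain.

```python
import json


def article_jsonld(headline, author_name, date_published, date_modified, url):
    """Build a JSON-LD <script> tag describing an Article with a Person author."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,   # ISO 8601 date string
        "dateModified": date_modified,     # ISO 8601 date string
        "mainEntityOfPage": url,
    }
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Hypothetical values, for illustration only.
tag = article_jsonld(
    "How Does Topic Work?", "Jane Doe", "2024-01-15", "2024-06-01",
    "https://example.com/how-does-topic-work",
)
```

The resulting tag goes in the page's HTML; most CMS plugins generate something similar for you.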

Sign 3: Thin E-E-A-T Signals

AI engines are increasingly aligned with Google's quality rater guidelines around Experience, Expertise, Authoritativeness, and Trustworthiness. A post with no author bio, no first-person experience, no citations, and no credentials attached to it reads as low-trust content. I've seen technically accurate articles receive zero AI citations simply because there was no human voice or verifiable expertise attached to the content. The fix isn't complicated, but it requires actually showing up in the content.

Sign 4: Question-Style Headers Are Absent

AI engines heavily favor content structured around natural language questions. If your H2s read like "Overview of Topic" instead of "How Does Topic Work?", you're missing the retrieval trigger. I recommend a minimum of three question-style H2s per article as a baseline. This isn't just AEO best practice — it directly feeds People Also Ask and voice search results too.
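Checking headers against that baseline is easy to script. The sketch below is a heuristic under my own assumptions: a header counts as question-style if it ends with a question mark or starts with a common question word. The word list and function names are hypothetical, and the heuristic will also count phrasings like "How to ..." as questions.

```python
QUESTION_WORDS = (
    "how", "what", "why", "when", "where", "which", "who",
    "can", "does", "do", "is", "are", "should",
)


def is_question_header(header: str) -> bool:
    """True if the header ends with '?' or opens with a question word."""
    text = header.strip().lower()
    if not text:
        return False
    return text.endswith("?") or text.split()[0] in QUESTION_WORDS


def count_question_headers(headers) -> int:
    """Number of headers in the list that look like questions."""
    return sum(is_question_header(h) for h in headers)


headers = ["Overview of Topic", "How Does Topic Work?", "Key Takeaways"]
meets_baseline = count_question_headers(headers) >= 3
```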

Sign 5: No Cited External Sources

Content that cites nothing signals low authority to AI retrieval systems. When a piece links to a Conductor AEO trends report or a HubSpot study on answer engine optimization trends, that's not just adding credibility for human readers — it tells the AI model that the content exists within a verified information ecosystem. Uncited claims are retrieval liabilities.

Sign 6: Walls of Unbroken Prose

Large language models parse structured content better than narrative prose. A 600-word paragraph with no formatting is harder for an AI to extract a clean answer from than a 60-word paragraph followed by a numbered list. If your content reads beautifully as an essay but has no bullet points, tables, or numbered steps, it's probably being passed over in favor of more parseable alternatives. According to the 2026 AEO & Content Marketing Trends Guide, structured formatting is one of the top signals AI engines use to identify citation-worthy content.

Sign 7: Evergreen Content That's Actually Stale

Here's the one that stings. That "complete guide" published in 2022 and never touched? It's not evergreen — it's expired. Martech's research on why evergreen content expires faster in an AI search world makes a compelling case that AI engines actively deprioritize content that hasn't been updated, especially in fast-moving categories like SEO, AI tools, and content marketing. In my work leading content strategy at Meev, I've seen this play out repeatedly: if the last-modified date is more than 12 months ago on a topic that's evolved, that content is invisible by default.
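The 12-month rule of thumb is simple to apply across a content inventory. This is a minimal sketch, assuming you can export a last-modified date per post; the function names are hypothetical, and the sketch treats 12 or more whole calendar months as stale.

```python
from datetime import date


def months_since(last_modified: date, today: date) -> int:
    """Whole calendar months elapsed between last_modified and today."""
    months = (today.year - last_modified.year) * 12 + (today.month - last_modified.month)
    if today.day < last_modified.day:
        months -= 1  # the current month is not complete yet
    return months


def is_stale(last_modified: date, today: date, threshold_months: int = 12) -> bool:
    """True once at least threshold_months whole months have passed."""
    return months_since(last_modified, today) >= threshold_months
```

A stale flag is only a prompt to review the post. Whether the topic has actually evolved is still a judgment call.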

Why Page Speed Is an AI Answer Engine Optimization Issue

Most AEO conversations skip right past page speed, and that's a mistake I see consistently across the industry. Crawlers, including Googlebot and the bots behind Perplexity's index, work within crawl budgets. Slow pages eat into that budget and get deprioritized. A page that takes 4+ seconds to load on mobile isn't just losing human visitors; it's losing AI bot visits too.

I've run crawl analyses on mid-size content sites where Core Web Vitals were failing on 60% of blog posts — LCP above 4 seconds, CLS scores in the red. When I cross-referenced those pages against their AI citation rate (tested manually via prompt testing), the correlation was stark: none of the slow pages were being cited in Perplexity outputs, while faster pages showed up regularly. Correlation isn't causation, but I've seen this pattern consistently enough to treat page speed as an AEO signal, not just an SEO one.

The fix here is technical but not mysterious. Run your top 20 content pages through Google PageSpeed Insights. Prioritize fixing LCP issues — usually large unoptimized images or render-blocking JavaScript. On WordPress, a caching plugin and image compression get you 80% of the way there in an afternoon. For deeper issues, the solution is a hosting upgrade or a CDN implementation.
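If 20 pages is too many to check by hand, the PageSpeed Insights API can return the same data. The sketch below assumes the v5 `runPagespeed` endpoint and that the lab LCP value sits under `lighthouseResult.audits["largest-contentful-paint"].numericValue` in milliseconds; verify both against Google's API documentation before relying on them. The function names are hypothetical, and regular use may require an API key.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def psi_request_url(page_url: str, strategy: str = "mobile") -> str:
    """Build the request URL for one page and one strategy (mobile or desktop)."""
    return PSI_ENDPOINT + "?" + urlencode({"url": page_url, "strategy": strategy})


def lcp_seconds(psi_response: dict):
    """Extract lab LCP in seconds from a parsed response, or None if absent."""
    audits = psi_response.get("lighthouseResult", {}).get("audits", {})
    value_ms = audits.get("largest-contentful-paint", {}).get("numericValue")
    return None if value_ms is None else value_ms / 1000


def fetch_lcp(page_url: str):
    """Call the API over the network and return LCP in seconds."""
    with urlopen(psi_request_url(page_url), timeout=60) as resp:
        return lcp_seconds(json.load(resp))
```

Anything that comes back above 4 seconds goes to the top of the fix list.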

Also check: if you've added a Google-Extended rule to your robots.txt to keep content out of Google's AI training, double-check that the change hasn't accidentally blocked legitimate indexing bots in the process. Google-Extended is a robots.txt control token rather than a separate crawler, so a rule written too broadly can shut out the bots you want. I've seen this mistake tank AI visibility overnight.
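You can sanity-check a robots.txt file with Python's standard-library parser. This is a minimal sketch: the list of bot tokens is my own illustrative selection, the function name is hypothetical, and real crawlers may interpret edge cases differently from `urllib.robotparser`.

```python
from urllib.robotparser import RobotFileParser

# Illustrative tokens; adjust to the bots you care about.
BOTS = ["Googlebot", "Bingbot", "PerplexityBot", "GPTBot", "Google-Extended"]


def bot_access(robots_txt: str, page_url: str, bots=BOTS) -> dict:
    """Map each bot token to whether robots.txt lets it fetch page_url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, page_url) for bot in bots}


# Intended setup: opt out of Google-Extended only, allow everyone else.
robots = """\
User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""
result = bot_access(robots, "https://example.com/blog/post")
```

If `Googlebot` or `PerplexityBot` comes back `False` in a check like this, the file is blocking more than you intended.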

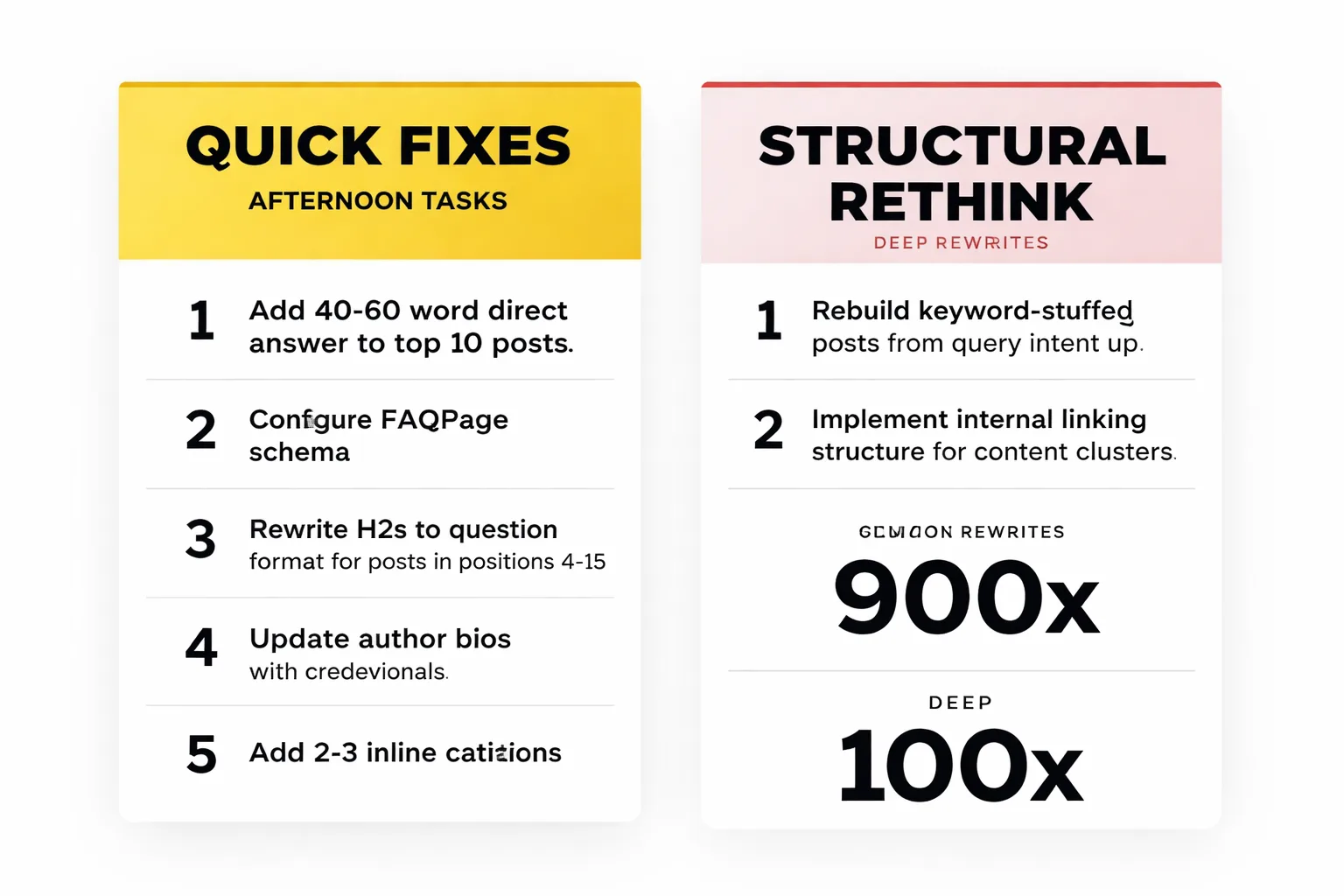

Quick Fixes vs. Deep Rewrites

Not everything requires a full content architecture overhaul. Here's how I recommend triaging AEO issues:

Fix in an afternoon:

1. Add a 40-60 word direct answer paragraph to the top of your 10 highest-traffic posts.
2. Add FAQPage schema to any post with a FAQ section. Use a plugin, or add the JSON-LD manually and validate it with the Schema Markup Validator or Google's Rich Results Test.
3. Rewrite your H2s to question format on posts that rank in positions 4-15.
4. Add or update author bios with credentials and first-person experience signals.
5. Add 2-3 inline citations to authoritative external sources in posts that currently have none.
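For the manual route on FAQPage schema, the JSON-LD can be generated from the question and answer pairs already on the page. This is a minimal sketch using the Schema.org `FAQPage`, `Question`, and `Answer` types; the function name and the sample pair are hypothetical.

```python
import json


def faq_jsonld(qa_pairs) -> str:
    """Build FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2)


# Hypothetical pair, for illustration only.
markup = faq_jsonld([("What is AEO?", "Answer engine optimization is ...")])
```

Wrap the output in a `<script type="application/ld+json">` tag and run it through a validator before publishing.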

Requires a structural rethink:

- Posts built around keyword stuffing rather than answering a specific question need to be rebuilt from the query intent up.
- Content silos with no internal linking structure. AI engines use link context to understand topical authority, and a strong content cluster strategy signals depth and expertise in a way that isolated posts simply can't.
- Sites with no Schema implementation at all need a systematic rollout, not a one-off fix.
- Content that lacks any E-E-A-T signals across the entire library needs an author strategy, not just a bio update.

The honest answer is that most content libraries need both. At Meev, we typically recommend starting with the quick fixes to stop the bleeding, then scheduling the structural work in 90-day sprints.

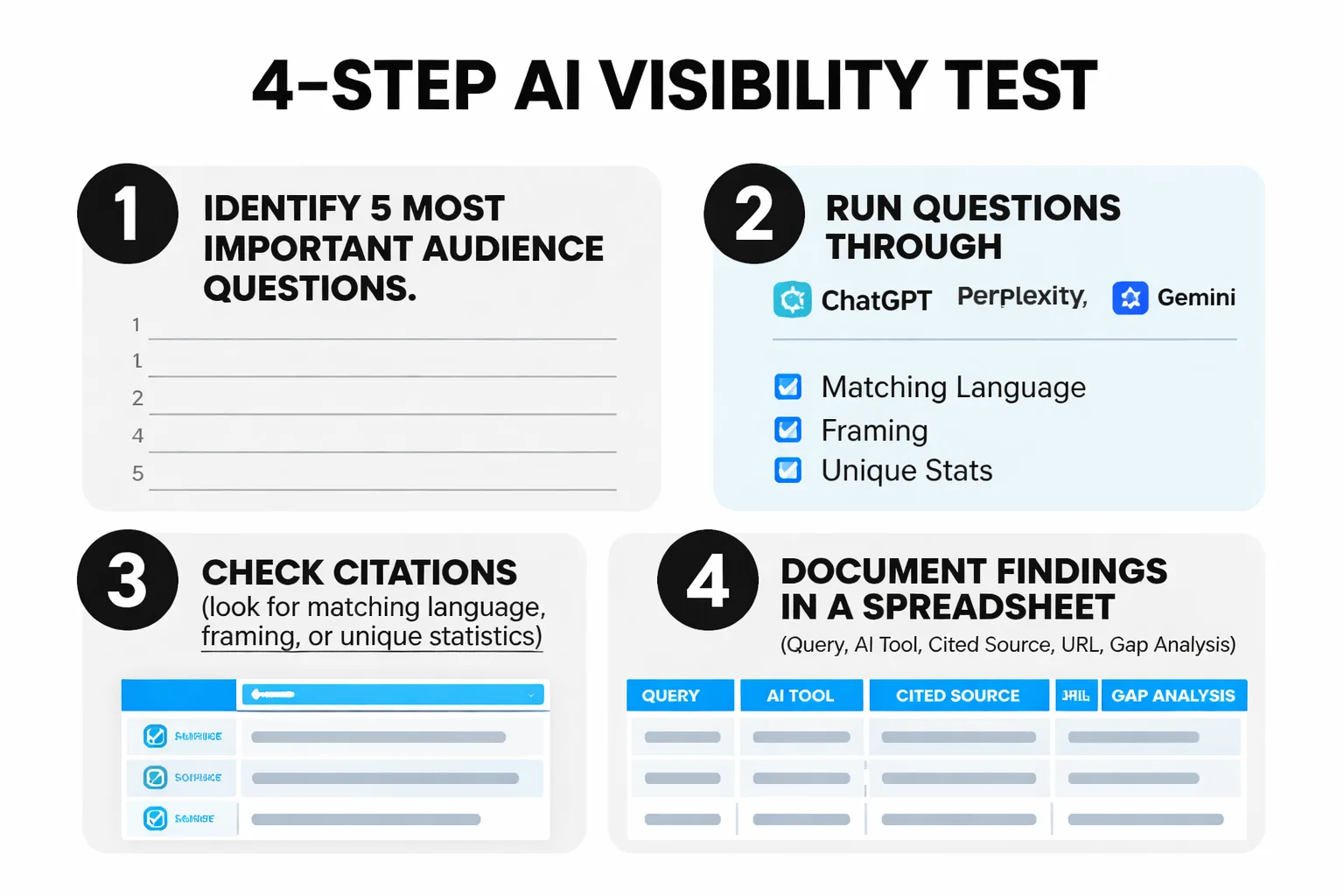

How to Test Content for AI Search Visibility Right Now

This is the step most guides skip, and it's the most actionable thing you can do today. I've tested AI visibility across dozens of content libraries, and the process takes about 20 minutes with no special tools required.

Step 1: Identify your 5 most important content topics. These should be the questions your content is designed to answer — not your keywords, but the actual questions your audience asks.

Step 2: Run those questions through ChatGPT, Perplexity, and Gemini. Use the exact phrasing your audience would use. "What is [topic]?" "How do I [action]?" "Best [category] for [use case]?"

Step 3: Check the citations. Perplexity shows sources directly. For ChatGPT and Gemini, look at whether the answer language, framing, or specific data points match your content. If you've published a unique statistic or coined a specific term, search for it in the AI output.

Step 4: Document what you find. Build a simple spreadsheet: query, AI tool, cited source, your content URL, gap analysis. Do this monthly. It's the closest thing to an AI search ranking report that currently exists.
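If you'd rather keep that spreadsheet as a CSV you can append to each month, steps 3 and 4 can be sketched in a few lines. The column names follow the list above; the function names, the domain-matching rule (your host or any subdomain of it), and the "cited" / "not cited" labels are my own assumptions. You still paste in the cited URLs by hand from each tool's output.

```python
import csv
import io
from urllib.parse import urlparse

FIELDS = ["query", "ai_tool", "cited_sources", "your_url", "gap"]


def host_of(url: str) -> str:
    """Lowercased hostname without a leading 'www.'."""
    host = (urlparse(url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def audit_row(query, ai_tool, cited_urls, your_url) -> dict:
    """One spreadsheet row; 'gap' records whether your domain was cited."""
    mine = host_of(your_url)
    cited = any(
        host_of(u) == mine or host_of(u).endswith("." + mine) for u in cited_urls
    )
    return {
        "query": query,
        "ai_tool": ai_tool,
        "cited_sources": "; ".join(cited_urls),
        "your_url": your_url,
        "gap": "cited" if cited else "not cited",
    }


def to_csv(rows) -> str:
    """Serialize rows to CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()
```

Comparing the "gap" column month over month shows which queries you are gaining or losing.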

For ongoing monitoring, tools like Brandwatch and Mention can track brand name appearances in AI-generated content at scale. They're not perfect — AI outputs aren't always indexed in real time — but they catch patterns over weeks and months that manual spot checks miss.

Finally: if you're running a blog automation workflow, make sure automated posts are being generated with AEO structure baked in from the start — direct answers, question headers, schema markup. I've seen teams automate content at scale without these elements, and it just amplifies the AI invisibility problem rather than solving it.

The Myth That's Costing You Citations

Most people think AEO optimization is about writing for AI instead of humans. They're wrong — and in my experience, that framing leads to robotic, over-formatted content that both audiences ignore.

The real insight is that AI engines are trying to find content that's genuinely useful to humans, structured clearly enough that they can extract and relay it accurately. The best AEO content isn't optimized for machines — it's optimized for clarity. Short direct answers, specific data, clear structure, real expertise. Those things serve human readers AND AI retrieval systems simultaneously.

The sites winning in AI search right now aren't the ones gaming schema or stuffing FAQ sections with keyword variations. They're the ones that built genuine topical authority, answered questions directly, and made their content structurally legible. That's a content quality problem, not a technical one — and it's one that no amount of metadata tweaking will solve on its own.

Start with the diagnostic. Run the 7 signs against your top 20 posts this week. In my work with content teams, the gaps surface faster than expected — and most of them are fixable.