By Judy Zhou, Head of Content Strategy

Key Takeaways

- Only 28% of brands earn both AI citations and named recommendations — most campaigns produce one or the other, not both.

- ChatGPT accounts for 87.4% of AI-driven referral traffic despite citing less often than Perplexity, making it the priority engine for commercial citation campaigns.

- The mention-citation gap is the metric most teams never measure: your content may be used as background evidence while competitors get named — audit this before pitching a single publisher.

- Effective AI citation outreach pitches a specific factual gap in an existing article, not a request to be added as a resource — the distinction determines whether editors respond.

What does it actually take for an AI model to cite your brand by name — not just mention a topic you cover, but specifically attribute a claim, statistic, or recommendation to your company? It's a question most marketing teams haven't seriously asked yet, and the window to act before the answer becomes obvious to everyone is closing fast. AI citation outreach is the emerging discipline that sits at the intersection of PR, content strategy, and generative engine optimization. This seven-step framework gives you a concrete process for earning those citations at scale.

The uncomfortable truth I keep running into: only 28% of brands achieve both citations and named recommendations in AI-generated answers, according to SEMrush's analysis. ChatGPT accounts for 87.4% of AI-driven referral traffic despite citing in only 62% of responses. Perplexity's citation behavior is structurally uneven — just 6% of URLs account for 47% of all citations, per Peec AI's analysis of 1M+ AI citations. And Google AI Mode cites 93% of URLs less than once per answer on average, making consistent placement there genuinely difficult. These numbers shape everything in this framework — which engines to prioritize, which publishers to target, and how to pitch.

Steps 1–2: Identify Your Citation Gap and Target Engines

Before you pitch a single publisher, you need to know where your brand stands right now across the AI engines your buyers actually use. Most teams skip this audit and go straight to outreach. That's how you end up spending a quarter building Perplexity citations while your competitors own the ChatGPT answers that actually send buyers.

Start with manual query testing. Pull your top 10-15 informational queries — the questions your target audience asks before buying — and run them through ChatGPT, Perplexity, and Google AI Overviews on the same day. Document three things for each query: whether your brand appears at all, whether you're cited as a source or just mentioned in passing, and which competitors are named. Do this in an incognito browser to strip personalization. The pattern I keep seeing when I run this exercise with new teams: they're generating traffic from AI referrals but have no idea which engine it's coming from. That matters enormously because the citation mechanics differ by platform.

Next, open GA4 and filter referral traffic by source. Look specifically for traffic from chatgpt.com, perplexity.ai, and bing.com (which carries Copilot traffic). If you're seeing meaningful volume from Perplexity but near-zero from ChatGPT, your content may be structured for retrieval but not for the kind of authoritative attribution ChatGPT prioritizes. If you're seeing the reverse, you likely have strong brand signals but weak citation-format content. Either way, you now have a baseline.
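As a rough illustration of that baseline, the sketch below buckets referral sources into AI engines. It assumes you have exported GA4 referral data as (source, sessions) pairs; that export shape, the `AI_ENGINE_HOSTS` mapping, the function names, and the sample numbers are all hypothetical. Only the three hostnames come from the text above.

```python
from collections import Counter
from typing import Optional

# Hostnames named in the text, mapped to the engine each is assumed to represent.
AI_ENGINE_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "bing.com": "Copilot (via Bing)",
}

def engine_for(source: str) -> Optional[str]:
    """Return the engine label for a referral source, or None if it is not listed."""
    host = source.lower().strip()
    for known, engine in AI_ENGINE_HOSTS.items():
        # Match the bare domain and any subdomain such as www.perplexity.ai.
        if host == known or host.endswith("." + known):
            return engine
    return None

def sessions_by_engine(rows):
    """Sum sessions per AI engine from (source, sessions) pairs."""
    totals = Counter()
    for source, sessions in rows:
        engine = engine_for(source)
        if engine is not None:
            totals[engine] += sessions
    return dict(totals)

# Hypothetical export rows, for illustration only.
sample_rows = [
    ("chatgpt.com", 120),
    ("www.perplexity.ai", 45),
    ("google.com", 900),
    ("perplexity.ai", 15),
]
baseline = sessions_by_engine(sample_rows)
```

Treating every bing.com session as Copilot traffic overstates it, since ordinary Bing search referrals share the same source, so read that bucket as an upper bound.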

The mention-citation gap is the metric most teams never measure. You can appear in an AI answer as supporting evidence for a competitor's claim — your data used, your brand invisible. I've audited campaigns where the team was celebrating "AI visibility" while every actual appearance was a background source, not a named recommendation. Map this explicitly: for each query where you appear, is your brand named or just your content used? That gap tells you exactly what kind of outreach you need.
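One way to put a number on this gap is sketched below. The ratio used here (appearances where your content was used but your brand was not named, divided by all appearances) is an assumed definition for illustration, not an industry standard, and the `AuditRow` fields and sample rows are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class AuditRow:
    query: str
    engine: str
    content_used: bool  # your page appears as a source or background evidence
    brand_named: bool   # the answer names your brand explicitly

def mention_citation_gap(rows):
    """Share of appearances where the brand was not named (None if no appearances)."""
    appearances = [r for r in rows if r.content_used or r.brand_named]
    if not appearances:
        return None
    unnamed = sum(1 for r in appearances if not r.brand_named)
    return unnamed / len(appearances)

# Hypothetical audit results from a manual query test.
rows = [
    AuditRow("best crm for startups", "ChatGPT", True, False),
    AuditRow("best crm for startups", "Perplexity", True, True),
    AuditRow("crm pricing benchmarks", "ChatGPT", True, False),
    AuditRow("crm onboarding checklist", "ChatGPT", False, False),
]
gap = mention_citation_gap(rows)  # 2 unnamed out of 3 appearances
```

A gap near 1.0 would suggest your content is doing the work while other brands get the name; a gap near 0 would suggest most appearances already name you.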

For engine prioritization, the AI platform citation patterns data from Profound makes the decision fairly clear. In that data, 93% of the URLs ChatGPT cites appear more than 1.5 times per answer, so if you earn a placement in a source ChatGPT trusts, it will use you repeatedly. That's compounding value. Perplexity's 64% non-citation rate means a lot of your retrieved content never surfaces visibly. I'd start every campaign with ChatGPT-priority targeting, then layer in Perplexity citation building once the ChatGPT foundation is set.

Steps 3–4: Find the Publishers AI Engines Already Trust

Reverse-engineering which domains the AI engines actually cite for your topic cluster is the single highest-leverage research step in this entire process. Most publisher outreach lists are built on DA scores and organic traffic. That's the wrong input for AI citation outreach.

Here's the method I use. Take your 10-15 target queries and run them through Perplexity, noting every cited source in the answer panel. Do the same with ChatGPT Browse. Build a spreadsheet: domain, query it appeared for, citation type (direct quote, data source, named recommendation), and how many of your target queries it appeared in. After 15 queries, patterns emerge fast. You'll typically find 8-12 domains that appear repeatedly across your topic cluster. Those are your tier-one targets.
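The spreadsheet tally can also be scripted. The sketch below assumes you have logged each observed citation as a (query, domain) pair; the function names, the `min_queries` cutoff, and the example domains are hypothetical, and the right cutoff depends on how many queries you test.

```python
from collections import defaultdict

def domain_query_coverage(citations):
    """Map each cited domain to the set of target queries it appeared for."""
    coverage = defaultdict(set)
    for query, domain in citations:
        coverage[domain].add(query)  # a set ignores repeat citations within one query
    return coverage

def tier_one(citations, min_queries):
    """Domains cited for at least min_queries distinct queries, most frequent first."""
    coverage = domain_query_coverage(citations)
    ranked = sorted(coverage.items(), key=lambda kv: (-len(kv[1]), kv[0]))
    return [(domain, len(queries)) for domain, queries in ranked
            if len(queries) >= min_queries]

# Hypothetical citation log from three target queries.
citations = [
    ("q1", "industryweekly.example"),
    ("q1", "industryweekly.example"),  # cited twice in the same answer
    ("q2", "industryweekly.example"),
    ("q3", "industryweekly.example"),
    ("q1", "bignews.example"),
    ("q2", "nichejournal.example"),
    ("q3", "nichejournal.example"),
]
targets = tier_one(citations, min_queries=2)
```

Counting distinct queries rather than raw citations keeps one citation-heavy answer from inflating a domain's rank.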

What you're looking for isn't the highest-traffic publishers. It's the publishers the AI engines have already decided are authoritative for your specific topic space. A mid-size industry publication that appears in 9 of your 15 target queries is worth more than a major outlet that appears in one. The AI engine has already expressed a preference — you're just reading it.

For ChatGPT specifically, look at what sources appear in Browse mode responses versus base model responses. Browse-mode citations tend to favor recent, well-structured pages with clear authorship. Base model citations tend to favor content that was heavily linked and discussed before the training cutoff. Both matter, but they require slightly different publisher profiles.

Once you have your tier-one list, segment publishers into three categories: those that already mention your brand (upgrade targets), those that mention competitors but not you (displacement targets), and those that mention neither (new placement targets). Upgrade targets are your fastest wins — the relationship with the publisher may already exist, and the ask is smaller. Displacement targets require the strongest pitch because you're asking an editor to consider you alongside an established reference. New placement targets are the longest play but often the most durable.
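The three-way segmentation reduces to a small decision rule. This is a minimal sketch, assuming you have already recorded two yes/no observations per publisher; the field names and domains are hypothetical.

```python
def segment_publisher(mentions_you: bool, mentions_competitor: bool) -> str:
    """Classify a publisher using the three categories described above."""
    if mentions_you:
        return "upgrade"          # already mentions your brand
    if mentions_competitor:
        return "displacement"     # mentions competitors but not you
    return "new placement"        # mentions neither

# Hypothetical tier-one publishers with manually recorded observations.
publishers = [
    {"domain": "industryweekly.example", "mentions_you": True, "mentions_competitor": True},
    {"domain": "nichejournal.example", "mentions_you": False, "mentions_competitor": True},
    {"domain": "tradeblog.example", "mentions_you": False, "mentions_competitor": False},
]
segments = {
    p["domain"]: segment_publisher(p["mentions_you"], p["mentions_competitor"])
    for p in publishers
}
```

Checking `mentions_you` first means a publisher that names both you and a competitor lands in the upgrade bucket, which matches the "fastest wins" ordering.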

For contact research, go beyond the generic contact page. Find the specific editor or writer who covers your topic cluster. Use LinkedIn to identify editorial staff, then cross-reference with their recent bylines to confirm they're still active on that beat. A pitch that lands in a general inbox converts at a fraction of the rate of one addressed to the person who actually wrote the article you want to be added to.


Step 5: Craft the Pitch That Gets You Included

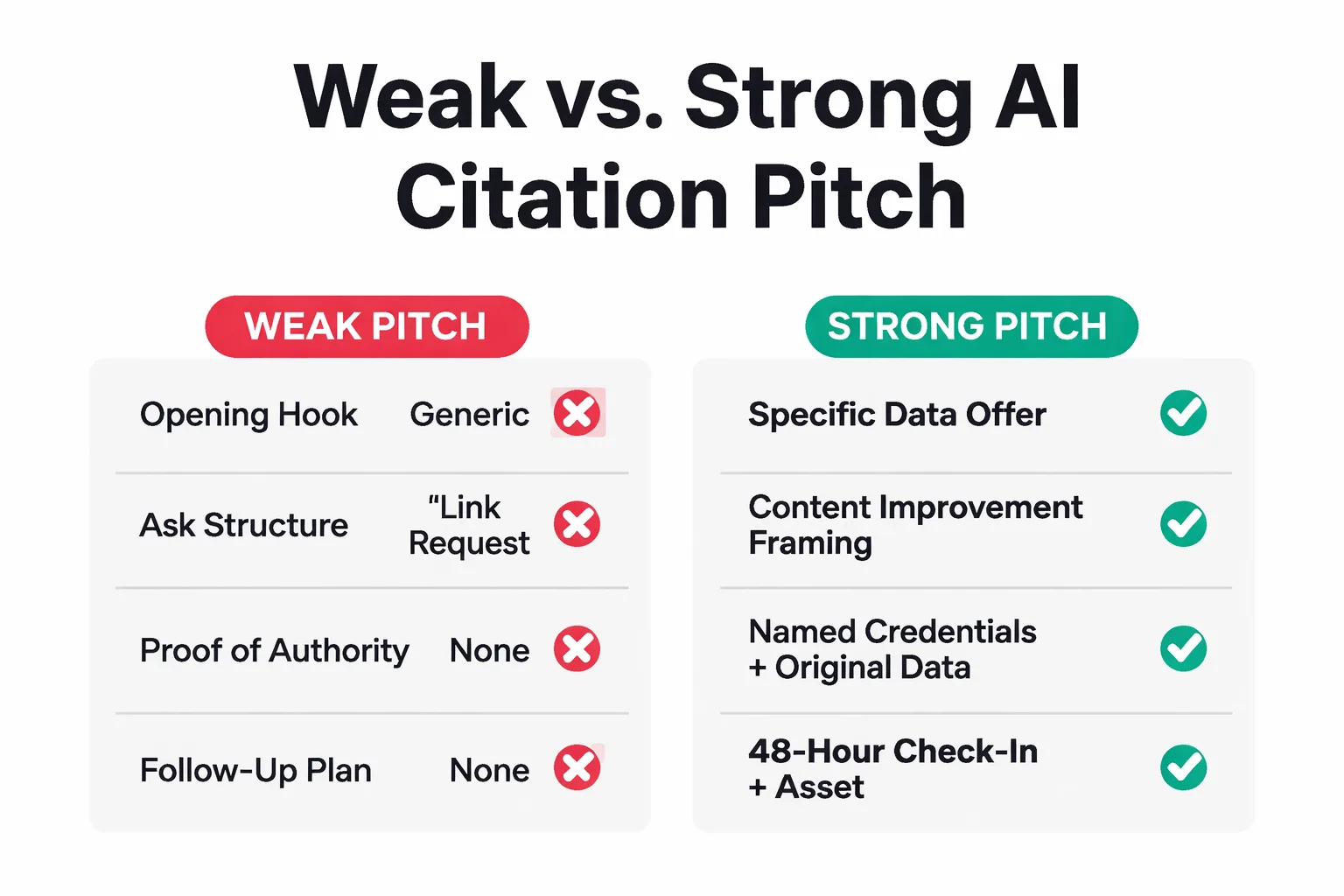

Most publisher pitches for AI citations fail because they read like link-building requests with better vocabulary. Editors who maintain the kind of authoritative content AI engines cite are not interested in your domain authority. They're interested in making their content better.

The pitch structure that works has four components. First, a specific observation about their existing content — not flattery, but a genuine gap you can fill. "Your guide on [topic] covers X and Y well, but doesn't include the updated data on Z that's changed significantly this year." This signals you actually read the piece and have something concrete to offer.

Second, the offer itself. Original data outperforms quotes, which outperform summaries of existing research. If you have proprietary findings — even from a small internal study — that's the strongest possible offer. If not, a well-sourced expert quote from a named person with verifiable credentials is second. What doesn't work: offering to "contribute a section" or asking to be "added as a resource." Those phrases flag you as a link builder immediately.

Third, the credential anchor. One sentence establishing why your brand is a legitimate source on this specific topic. Not your company history — your demonstrated expertise on the exact claim you're offering. "We've tracked [specific metric] across [specific context] for [time period]" is concrete. "We're a leading provider of [category]" is noise.

Fourth, a frictionless next step. Don't ask for a call. Don't attach a PDF. Offer the data point or quote directly in the email body so the editor can evaluate it immediately. If it's good, they'll use it. If they want more, they'll ask.

What to avoid is equally important. Don't mention SEO, backlinks, or domain authority anywhere in the pitch. Don't use the phrase "mutually beneficial." Don't pitch roundups or listicles by asking to be added — pitch specific factual gaps in specific articles. The editors maintaining content that AI engines trust are running editorial operations, not link exchanges. Treat them accordingly.

For brand mention outreach specifically targeting AI visibility, frame the ask around content accuracy and freshness rather than inclusion. "Your 2024 data on X has been superseded by Y — here's the updated figure with source" is a genuinely useful pitch. It positions you as a resource, not a supplicant.

Step 6: Optimize the Asset Being Cited

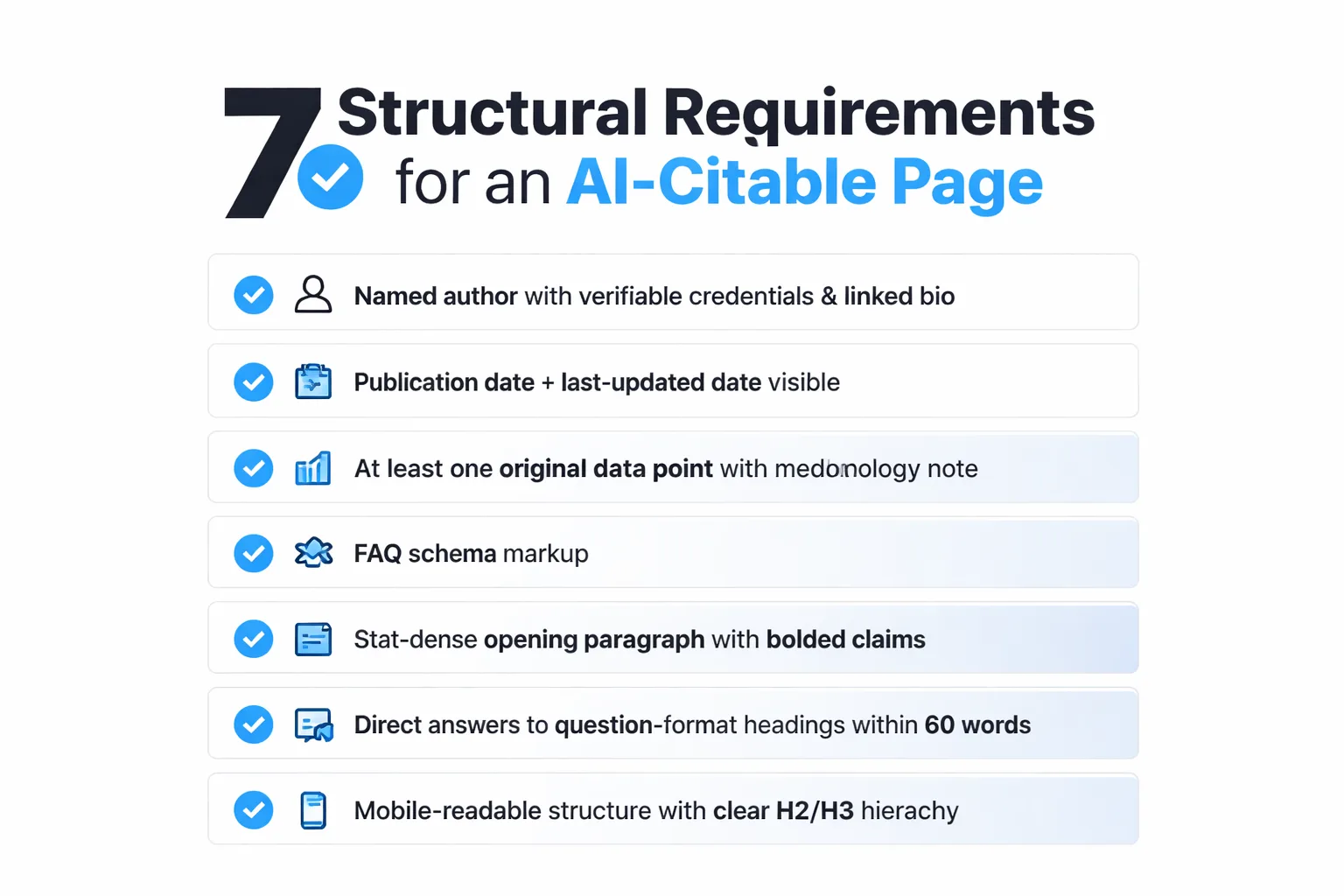

Getting a publisher to include your brand is only half the equation. The page being cited has to meet the structural and semantic requirements AI engines use to decide whether to pull from it. A placement in a trusted publisher that links to a thin, poorly structured page is nearly worthless for AI citation purposes.

Author credentials are the first thing to fix. A page attributed to "Staff Writer" or "Marketing Team" carries almost no E-E-A-T weight for AI engines. The author needs a name, a linked bio with verifiable expertise signals, and ideally a publication history on the topic. This isn't just an SEO consideration — it's how AI engines assess whether the content represents genuine expertise or manufactured authority.

Stat freshness matters more than most teams realize. AI engines, particularly ChatGPT in Browse mode and Perplexity, actively weight recency for factual claims. A page with 2023 statistics that hasn't been updated signals stale content even if the surrounding analysis is strong. Build a quarterly refresh process into any page you're actively pitching as a citation source. Update the key statistics, add a "last updated" date that's visible in the page metadata, and use schema markup to surface that date to crawlers.
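For the author and "last updated" signals, a minimal sketch of Article structured data is shown below. The property names (`headline`, `author`, `datePublished`, `dateModified`) are standard schema.org vocabulary; the helper function, the author, the URL, and the dates are hypothetical, and how much weight any given AI engine puts on this markup is an assumption rather than something the code demonstrates.

```python
import json
from datetime import date

def article_jsonld(headline, author_name, author_url, published, modified):
    """Build schema.org Article markup with a named author and a refresh date."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name, "url": author_url},
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),  # update on every quarterly refresh
    }

# Hypothetical page details, for illustration only.
markup = article_jsonld(
    headline="Checkout Abandonment Benchmarks",
    author_name="Jane Doe",
    author_url="https://example.com/authors/jane-doe",
    published=date(2024, 1, 15),
    modified=date(2024, 7, 1),
)
script_tag = (
    '<script type="application/ld+json">'
    + json.dumps(markup, indent=2)
    + "</script>"
)
```

The resulting `script_tag` string would go in the page's HTML; keep the visible "last updated" date on the page in sync with `dateModified`.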

FAQ schema is the structural element I see most consistently missing from pages that should be earning AI citations. AI engines are retrieval systems — they prefer content that answers questions directly. A page with FAQ schema that matches the exact question phrasing your audience uses in AI queries is structurally optimized for extraction. Without it, even strong content gets passed over for a more parseable alternative.
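A minimal sketch of FAQ markup follows, using the schema.org `FAQPage`, `Question`, and `Answer` types. The helper name is hypothetical, and the sample question is borrowed from this article's own FAQ with a shortened answer.

```python
import json

def faq_jsonld(pairs):
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,  # phrase it the way your audience asks it
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }

faq = faq_jsonld([
    (
        "How long does it take to see results from AI citation outreach?",
        "Most campaigns show measurable citation lift at 30-45 days for "
        "Perplexity and 60-90 days for ChatGPT.",
    ),
])
faq_json = json.dumps(faq, indent=2)
```

The question and answer text in the markup should match what is visibly on the page, so generate both from the same source rather than maintaining them separately.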

The opening paragraph is disproportionately important. Research on AI platform citation patterns consistently shows that AI engines extract from the top of the document first. If your most citable claim — the specific data point or named recommendation you want attributed to your brand — is buried in paragraph eight, it may never get pulled. Put your strongest, most specific claim in the first 100 words.
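A quick pre-publish check for this can be sketched as below. It assumes plain page text and an exact-phrase match, which is a simplification; the function name, the 100-word default, and the sample claim are illustrative.

```python
def claim_in_opening(text: str, claim: str, word_limit: int = 100) -> bool:
    """Return True if the claim appears in full within the first word_limit words."""
    opening = " ".join(text.split()[:word_limit]).lower()
    normalized_claim = " ".join(claim.split()).lower()  # collapse whitespace
    return normalized_claim in opening

# Hypothetical claim and two page layouts padded with filler words.
claim = "Acme tracked 1,200 checkout sessions"
filler = " ".join(["filler"] * 200)
page_with_claim_first = claim + " " + filler
page_with_claim_buried = filler + " " + claim

top_ok = claim_in_opening(page_with_claim_first, claim)
buried_ok = claim_in_opening(page_with_claim_buried, claim)
```

A claim that starts inside the limit but runs past it counts as missing here, which errs on the side of moving it earlier.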

One thing I've learned from running content through Google's Helpful Content System evaluation: a clean score on readability and keyword tools tells you almost nothing about whether the content will earn citations. What matters is demonstrated expertise and original perspective. Peec AI's portfolio analysis found consistent "massive visibility drops" across companies running AI content generation without genuine expertise signals. The quality gate most teams are using is measuring the wrong thing.

For teams scaling this process, Meev's quality-gated publishing workflow handles the structural requirements automatically — schema injection, author attribution, stat freshness flags — so the citation optimization layer doesn't require manual auditing of every published page.

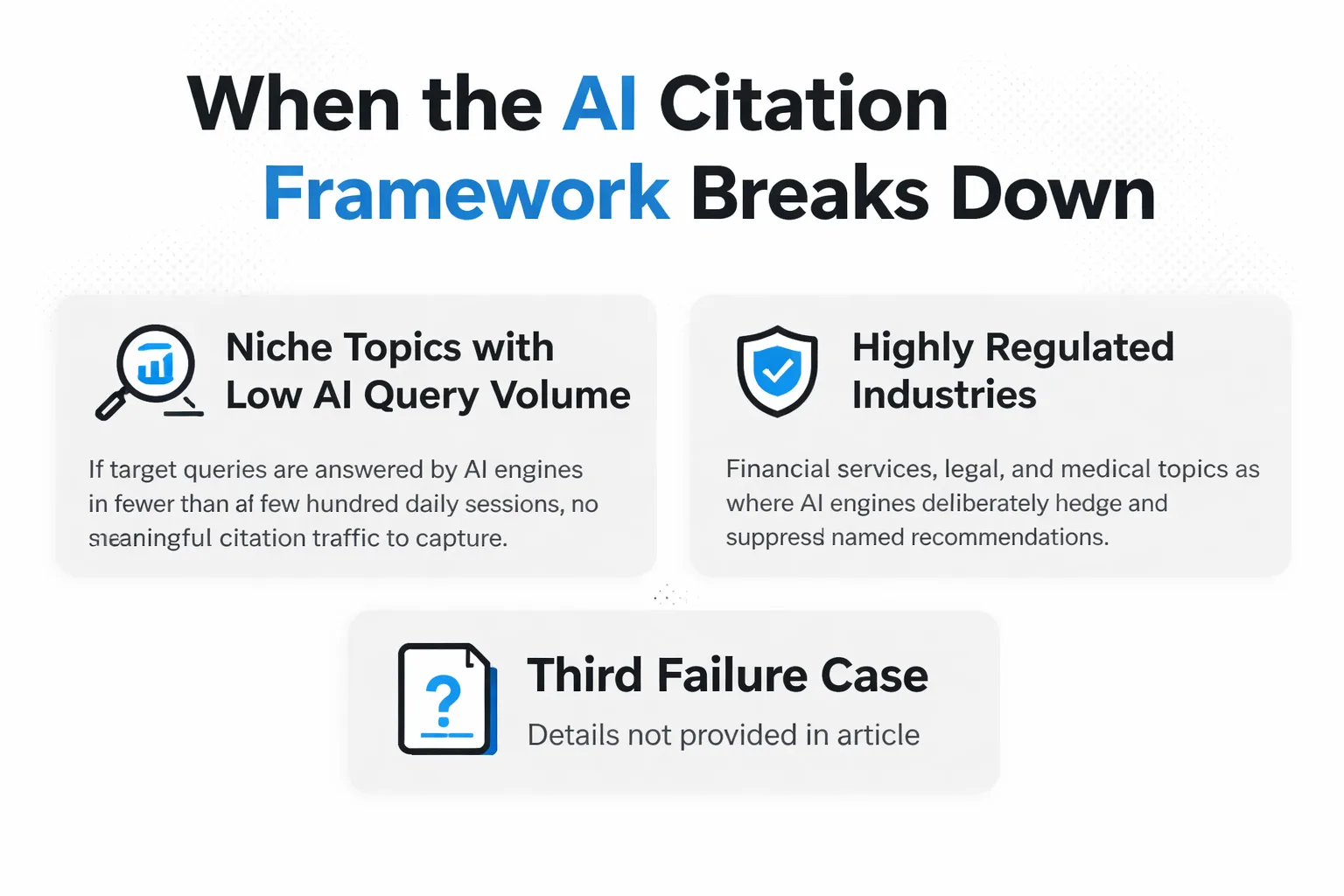

Where This Framework Breaks Down

This seven-step process works well under specific conditions. It doesn't work everywhere, and being honest about that saves teams from wasting quarters on the wrong approach.

First failure case: niche topics with low AI query volume. If AI engines answer your target queries in no more than a few hundred sessions a day, there's no meaningful citation traffic to capture regardless of how well you execute the outreach. Check the actual AI query volume for your topic before investing in this framework.

Second failure case: highly regulated industries where AI engines deliberately hedge. In financial services, legal, and medical topics, ChatGPT and Perplexity frequently decline to name specific brands as recommendations precisely to avoid liability. You can earn citations as data sources in these verticals, but named recommendations are structurally suppressed. The outreach strategy needs to shift toward citation-as-evidence rather than citation-as-recommendation.

Third failure case: brands with weak topical authority signals. If your domain has no existing presence in your topic cluster — no indexed content, no inbound links from relevant publishers, no entity recognition in AI engines — outreach alone won't move the needle. You need six to twelve months of topical authority building before publisher pitches carry weight. Outreach accelerates an existing signal; it can't manufacture one from nothing.

Step 7: Track, Iterate, and Scale

Most teams measure AI citation campaigns by counting mentions. That's the wrong metric, and I made this mistake myself for a full quarter before the data forced a correction.

The tracking framework I now use segments by platform from day one. Citations in Perplexity and citations in ChatGPT are not equivalent outcomes. ChatGPT accounts for 87.4% of AI-driven referral traffic in the data I've seen, while Perplexity's citation behavior is more generous but less commercially valuable in most verticals. If you're aggregating citation counts across platforms, a campaign that earns 20 Perplexity citations and zero ChatGPT citations will look healthier than it is.

For the 30-day check, run your baseline query set again manually and compare against your original audit. Track three metrics: citation rate (did your brand appear), citation type (named recommendation vs. background source), and citation platform. The mention-citation gap should be narrowing — if you're appearing more but still as background evidence rather than named recommendations, your content structure needs work before the next outreach wave.
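The three metrics can be computed per platform rather than in aggregate. The sketch below assumes each manual test is logged as a dict with `platform`, `appeared`, and `named` keys; those keys, the `named_share` metric name, and the sample rows are hypothetical.

```python
def rates(rows):
    """Per-platform citation rate and share of appearances that name the brand."""
    by_platform = {}
    for r in rows:
        stats = by_platform.setdefault(
            r["platform"], {"queries": 0, "appeared": 0, "named": 0}
        )
        stats["queries"] += 1
        stats["appeared"] += int(r["appeared"])
        stats["named"] += int(r["named"])
    return {
        platform: {
            "citation_rate": s["appeared"] / s["queries"],
            "named_share": s["named"] / s["appeared"] if s["appeared"] else 0.0,
        }
        for platform, s in by_platform.items()
    }

# Hypothetical audit rows: original baseline vs. the 30-day rerun.
baseline = [
    {"platform": "ChatGPT", "appeared": True, "named": False},
    {"platform": "ChatGPT", "appeared": False, "named": False},
    {"platform": "Perplexity", "appeared": True, "named": True},
    {"platform": "Perplexity", "appeared": True, "named": False},
]
day_30 = [
    {"platform": "ChatGPT", "appeared": True, "named": True},
    {"platform": "ChatGPT", "appeared": True, "named": False},
    {"platform": "Perplexity", "appeared": True, "named": True},
    {"platform": "Perplexity", "appeared": True, "named": False},
]
before, after = rates(baseline), rates(day_30)
```

A rising `citation_rate` with a flat `named_share` is the pattern described above: more appearances, still as background evidence.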

At 60 days, pull GA4 referral data and segment by AI engine source. Look for movement in chatgpt.com and perplexity.ai referral sessions. A successful outreach campaign should produce measurable referral lift by this point if the placements are in genuinely high-citation-rate publishers. If referral traffic hasn't moved, the placements are in publishers the AI engines aren't actually pulling from — go back to your publisher research and tighten the criteria.
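Referral lift per engine is simple arithmetic once both snapshots exist. This sketch assumes two dicts of sessions keyed by referral source; the function name and the session counts are hypothetical.

```python
def referral_lift(baseline, current):
    """Fractional change in sessions per source; None when there is no baseline."""
    lift = {}
    for source in sorted(set(baseline) | set(current)):
        before = baseline.get(source, 0)
        after = current.get(source, 0)
        # A zero baseline has no meaningful percentage change.
        lift[source] = None if before == 0 else (after - before) / before
    return lift

# Hypothetical session counts at the baseline audit and at day 60.
sessions_baseline = {"chatgpt.com": 100, "perplexity.ai": 50}
sessions_day_60 = {"chatgpt.com": 130, "perplexity.ai": 50, "bing.com": 12}
lift = referral_lift(sessions_baseline, sessions_day_60)
```

Here `lift["chatgpt.com"]` works out to 0.3, a 30% increase, while a source that only appears at day 60 is reported as `None` rather than as an infinite gain.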

At 90 days, add pipeline attribution. This is the metric almost no team tracks but the only one that actually justifies the investment. Tag AI referral sessions in your CRM and track them through to opportunity creation or conversion. The goal isn't citation volume — it's whether the people AI engines send to your site are buyers. In my experience, AI-referred visitors from ChatGPT convert at a meaningfully higher rate than average organic traffic because they've already been pre-qualified by the AI answer. That's the business case for this entire framework.
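Comparing conversion by channel can be sketched as follows, assuming your CRM export reduces to (channel, became_opportunity) pairs. The channel labels, function name, and sample sessions are hypothetical and say nothing about real conversion rates.

```python
from collections import defaultdict

def conversion_by_channel(sessions):
    """Opportunity rate per channel from (channel, became_opportunity) pairs."""
    totals = defaultdict(lambda: [0, 0])  # channel -> [sessions, opportunities]
    for channel, became_opportunity in sessions:
        totals[channel][0] += 1
        totals[channel][1] += int(became_opportunity)
    return {channel: opps / count for channel, (count, opps) in totals.items()}

# Hypothetical tagged sessions, for illustration only.
tagged_sessions = [
    ("chatgpt", True),
    ("chatgpt", False),
    ("organic", False),
    ("organic", False),
    ("organic", True),
    ("organic", False),
]
conversion = conversion_by_channel(tagged_sessions)
```

With small session counts these rates swing widely, so compare channels only once each has enough volume to be meaningful.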

For scaling, Meev's AI search visibility tracking handles the query monitoring and citation lift measurement across platforms, so you're not running manual audits every 30 days as your campaign grows. The manual process works for the first cycle. It doesn't scale past 50 target queries without tooling.

The outreach itself scales through systematization, not volume. A well-researched pitch to 20 tier-one publishers outperforms 200 cold emails to generic contacts every time. Build the publisher research process into a repeatable workflow — query audit, citation frequency analysis, contact identification, pitch customization — and run it quarterly as your topic cluster evolves and new publishers enter the AI engines' trusted sources.

For teams comparing visibility platforms to support this tracking, the Meev vs. Profound comparison breaks down exactly which citation monitoring features matter at different campaign scales. The right tool depends heavily on how many queries you're tracking and whether you need multi-engine attribution or single-platform depth.

Frequently Asked Questions

How long does it take to see results from AI citation outreach? Most campaigns show measurable citation lift at 30-45 days for Perplexity (which indexes and cites new content faster) and 60-90 days for ChatGPT. Named recommendations — where AI engines specifically recommend your brand rather than just using your content as background evidence — typically take a full quarter to build, because they require multiple corroborating citations across independent sources, not just a single placement.

Should I target the same publishers for ChatGPT and Perplexity citations? Not necessarily. The publisher sets that ChatGPT and Perplexity draw from overlap significantly, but not completely. Perplexity tends to cite more recent, web-crawled sources and pulls from a wider range of mid-tier publishers. ChatGPT in Browse mode favors sources with strong structural signals and consistent citation history. Start with publishers that appear in both engines for your target queries — those are the highest-leverage targets — then segment by engine for secondary outreach waves.

How is AI citation outreach different from standard digital PR? Standard digital PR optimizes for backlinks and brand awareness. AI citation outreach optimizes for the specific editorial contexts AI engines use to surface named recommendations. The difference is structural: you're not just trying to appear in an article, you're trying to appear in an article in a way that AI engines read as authoritative attribution. That means the pitch, the asset being cited, and the publisher selection criteria are all different from a traditional PR campaign.

What content formats are most likely to earn AI citations? Original data and proprietary research earn the most consistent citations across all major AI engines. After that, expert quotes with named credentials, FAQ-structured content with direct answers to specific questions, and comparison frameworks with specific benchmarks. What underperforms: general thought leadership without specific claims, content that summarizes existing research without adding original perspective, and brand-forward content that reads as marketing rather than editorial.

Can I do AI citation outreach without original research? Yes, but your pitch leverage is lower. Without original data, your strongest offer is a named expert quote with verifiable credentials on a specific factual claim, or a synthesis of existing research that fills a genuine gap in the publisher's coverage. The key is specificity — a vague expert perspective is no more citable than a generic blog post. A named expert making a specific, falsifiable claim with a methodology note behind it is genuinely useful to editors maintaining authoritative content.

About the Author

Judy Zhou, Head of Content Strategy

Judy Zhou leads content strategy at Meev, where she oversees AI-driven content research and publishing for hundreds of brands. With a background in SEO and editorial operations, she focuses on building content systems that rank on Google, get cited by AI search engines, and drive measurable business results.
