Most SEO tools show you the same data they show your competitors, which creates a feedback loop of mediocrity. If you still build your content calendar by filtering on search volume and Keyword Difficulty (KD), you are essentially outsourcing your strategy to the same algorithms everyone else uses. Here's how to break that cycle by finding the 'blind spots' in your competitors' keyword profiles.

The contrarian take: the keyword research process most SEOs follow is optimized for finding the same opportunities everyone else is already targeting. It's a race to the middle, and the middle is crowded. The real wins in high-potential keyword research aren't in the tools; they're in the signals those tools haven't indexed yet.

The keywords worth targeting in 2026 aren't the ones with the highest search volume — they're the ones where intent is clear, competition is thin, and your content can be the definitive answer.

What's the TL;DR on unclaimed keywords?

- Chasing high search volume is a trap: keywords in positions 4–15 with clear conversion intent often outperform high-volume head terms in actual revenue impact.
- Reddit threads, People Also Ask clusters, and AI chatbot query patterns often surface emerging demand 3–6 months before it appears in Ahrefs or Semrush.
- A keyword gap audit using Semrush's Keyword Gap tool, filtered for KD of 35 or below and competitor positions 1–10, can surface 20–40 actionable targets in under an hour.
- Validating with Search Console impressions data before investing content resources prevents wasted effort on keywords that look good in tools but don't convert in practice.

Why 'High Volume' Is the Wrong Metric

Treating search volume as the primary signal of keyword value is an assumption worth challenging. Volume is a proxy for value, and often a misleading one.

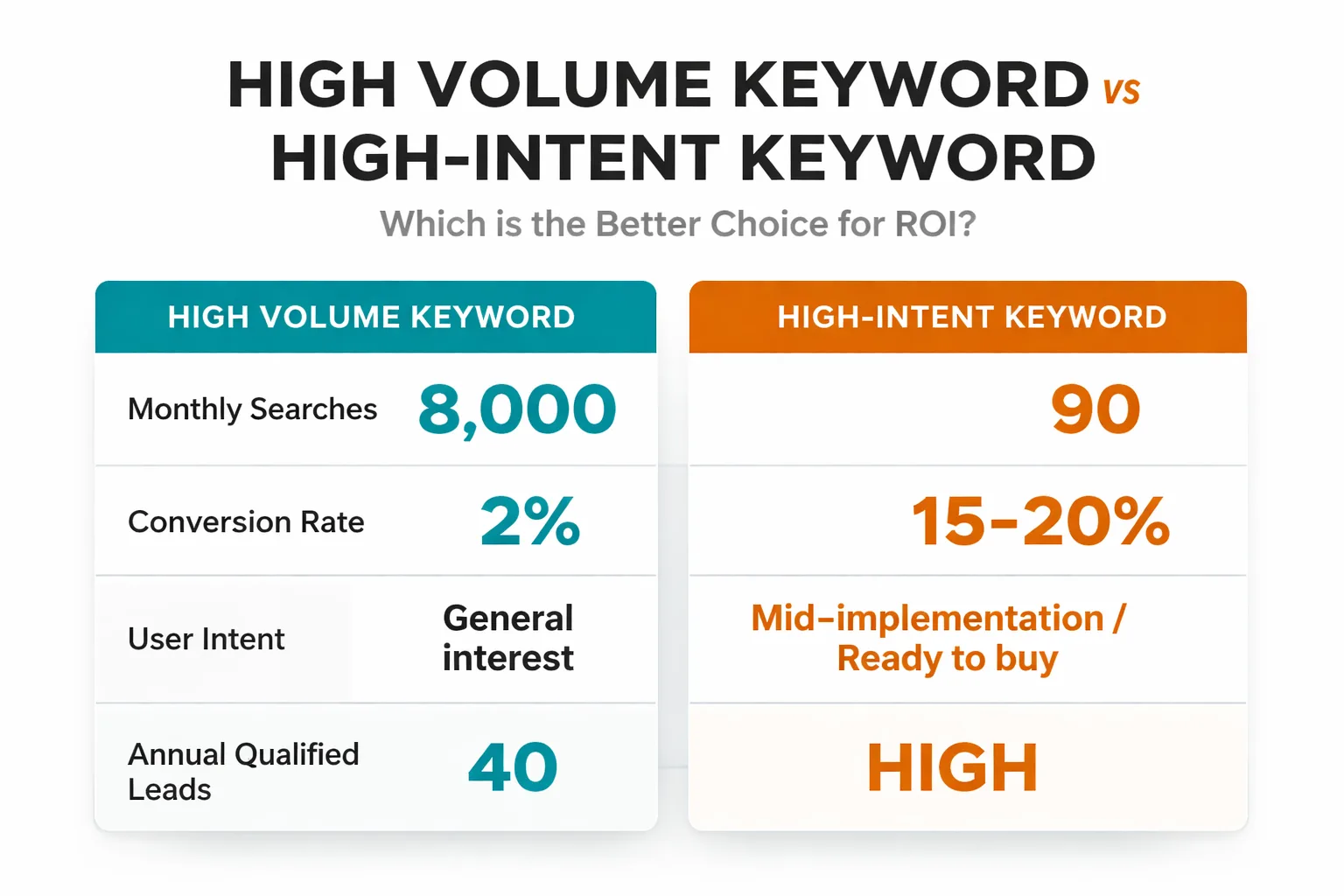

In my work leading content strategy, I've found a consistent pattern across dozens of content audits. A keyword pulling 8,000 monthly searches in a competitive SaaS vertical delivers just 40 qualified leads per year if a site cracks page one. Meanwhile, a keyword that tools list as zero-volume, like "how to migrate HubSpot contacts to Salesforce without duplicates", actually pulls 90 searches a month, and nearly every one of those searchers is mid-implementation, credit card already out, looking for a solution provider. The conversion rate on that second keyword isn't 2%. It's 15–20%.

According to The Complete B2B SEO Guide for 2026, keywords currently ranking in positions 4 to 15 represent the fastest tactical wins available — not because they're high-volume, but because the ranking gap is closeable with focused effort. I've seen this play out repeatedly: a site sitting at position 7 for a transactional keyword with 400 monthly searches generates more pipeline than a position 3 ranking for a 5,000-search informational term. Intent wins every time.

The three metrics that actually matter when evaluating a keyword:

- Traffic potential (not just the keyword's own volume, but the cluster of related terms a single piece of content can capture)
- Ranking difficulty relative to your site's authority (a KD of 28 can still be out of reach if your DR is 15)
- Conversion intent (is the searcher researching, comparing, or ready to act?)

When I reframe keyword value this way, a lot of the "obvious" targets fall off the list — and a lot of genuinely high-potential keywords appear that competitors have completely ignored.

Untapped SEO Keywords: The 3 Sources Most Keyword Tools Miss

In my experience, this is where the strongest opportunities tend to surface — and where most keyword tools fall short.

Reddit threads are a goldmine that almost nobody is mining systematically. When someone posts in r/marketing or r/SEO asking "why does my Google Search Console show impressions but zero clicks," that's a keyword signal. They're phrasing a real problem in natural language, and that phrasing often maps directly to a long-tail query that hasn't hit Semrush's database yet. I spend 20 minutes a week scanning relevant subreddits for question patterns — it's one of my most reliable discovery methods. The goal isn't posts with thousands of upvotes, but questions that appear repeatedly across different threads, because repetition signals latent search demand.
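Repetition across threads is easy to measure once the questions are in a spreadsheet. A minimal sketch, assuming you've pasted each thread's questions into a list; the function names and the deliberately crude normalization (lowercase, punctuation stripped) are illustrative, not part of any tool:

```python
import re
from collections import Counter


def normalize(question: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace so trivially
    different phrasings of the same question are counted together."""
    text = re.sub(r"[^\w\s]", "", question.lower())
    return " ".join(text.split())


def repeated_questions(threads: list[list[str]], min_threads: int = 3) -> dict[str, int]:
    """Return normalized questions that appear in at least `min_threads`
    different threads (the default of 3 means 'more than twice')."""
    counts = Counter()
    for questions in threads:
        # A set, so a question repeated inside one thread counts only once.
        counts.update({normalize(q) for q in questions})
    return {q: n for q, n in counts.items() if n >= min_threads}


threads = [
    ["Why does GSC show impressions but zero clicks?"],
    ["why does GSC show impressions but zero clicks", "How do I fix crawl errors?"],
    ["Why does GSC show impressions, but zero clicks??"],
    ["How do I fix crawl errors?"],
]
print(repeated_questions(threads))
# {'why does gsc show impressions but zero clicks': 3}
```

Counting per thread rather than per mention keeps one long, repetitive thread from looking like broad demand.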

People Also Ask clusters are underused as a discovery mechanism. Most SEOs glance at PAA boxes and move on. I've found that treating them as a branching keyword map yields far better results. When you search a seed term and expand every PAA question, then search those questions and expand their PAA boxes, you end up with a semantic web of related intent that no keyword tool has fully mapped. The questions buried two or three levels deep in that expansion are often the highest-intent, lowest-competition targets I've come across. Google is literally showing you what people want to know — and most competitors are ignoring it.
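The branching expansion is a breadth-first search with a depth limit. A sketch under stated assumptions: get_paa is a hypothetical callable that returns the PAA questions shown for a query (Google offers no official PAA API, so plug in however you collect them), and the stub dictionary stands in for real SERP observations:

```python
from collections import deque
from typing import Callable


def expand_paa(seed: str, get_paa: Callable[[str], list[str]], max_depth: int = 2) -> dict[str, int]:
    """Breadth-first expansion of People Also Ask questions.

    Maps every discovered question to the level where it first appeared
    (the seed is level 0).
    """
    level = {seed: 0}
    queue = deque([seed])
    while queue:
        query = queue.popleft()
        if level[query] >= max_depth:
            continue  # at the depth limit: keep the question, don't expand it
        for question in get_paa(query):
            if question not in level:  # skip questions already discovered
                level[question] = level[query] + 1
                queue.append(question)
    return level


# Stub data standing in for real SERP observations.
paa = {
    "crm migration": ["how to migrate crm data", "what is crm migration"],
    "how to migrate crm data": ["how to migrate hubspot contacts to salesforce"],
    "what is crm migration": ["how long does a crm migration take"],
    "how to migrate hubspot contacts to salesforce": ["level three question"],
}
for question, depth in expand_paa("crm migration", lambda q: paa.get(q, [])).items():
    print(depth, question)
```

Raising max_depth to 3 reaches the "two or three levels deep" questions; recording the level makes it easy to sort the deepest ones to the top of a review list.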

AI chatbot query patterns are the newest signal, and in my view the most underappreciated. When users ask ChatGPT, Claude, or Gemini a question, they phrase it differently than they'd type into Google: more conversational, more specific, more problem-focused. I pay close attention to questions clients raise that they've clearly lifted from an AI conversation, and to what the SEO and content marketing trends for 2026 research shows about how AI-mediated search is reshaping query structure. These query patterns are leading indicators of how search behavior is evolving. The shift toward longer, more specific, answer-seeking queries is real, and it's creating keyword opportunities that volume-based tools are structurally blind to.

Here's the workflow I use for turning these sources into actionable keyword targets:

1. Spend 15 minutes on Reddit searching your niche + "how do I" or "why does", and copy every question that appears more than twice into a spreadsheet.
2. Run your top 5 seed keywords through Google and expand every PAA box two levels deep. Note any questions that don't have strong existing content in the top results.
3. Review the last 30 days of Search Console data for queries with 50+ impressions and under 5 clicks. These are keywords where your site is visible but not compelling, and they often point to adjacent intent that hasn't been addressed.
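The third step is a plain filter over a Search Console export. A sketch, assuming the export has been loaded as a list of dicts with query, clicks, and impressions keys (the column names in your actual export may differ, and the sample rows are made up):

```python
def visible_but_ignored(rows: list[dict], min_impressions: int = 50, click_ceiling: int = 5) -> list[dict]:
    """Queries with at least `min_impressions` impressions but fewer than
    `click_ceiling` clicks, sorted by impressions (most visible first)."""
    hits = [
        r for r in rows
        if r["impressions"] >= min_impressions and r["clicks"] < click_ceiling
    ]
    return sorted(hits, key=lambda r: r["impressions"], reverse=True)


# Hypothetical rows from a 30-day Search Console query export.
rows = [
    {"query": "gsc impressions but no clicks", "clicks": 2, "impressions": 120},
    {"query": "keyword gap analysis", "clicks": 5, "impressions": 300},
    {"query": "paa keyword research", "clicks": 0, "impressions": 49},
    {"query": "reddit keyword research", "clicks": 4, "impressions": 50},
]
print([r["query"] for r in visible_but_ignored(rows)])
# ['gsc impressions but no clicks', 'reddit keyword research']
```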

That three-step process takes about 45 minutes and consistently turns up 10–15 targets that standard Ahrefs research would miss.

How to Run Keyword Gap Analysis

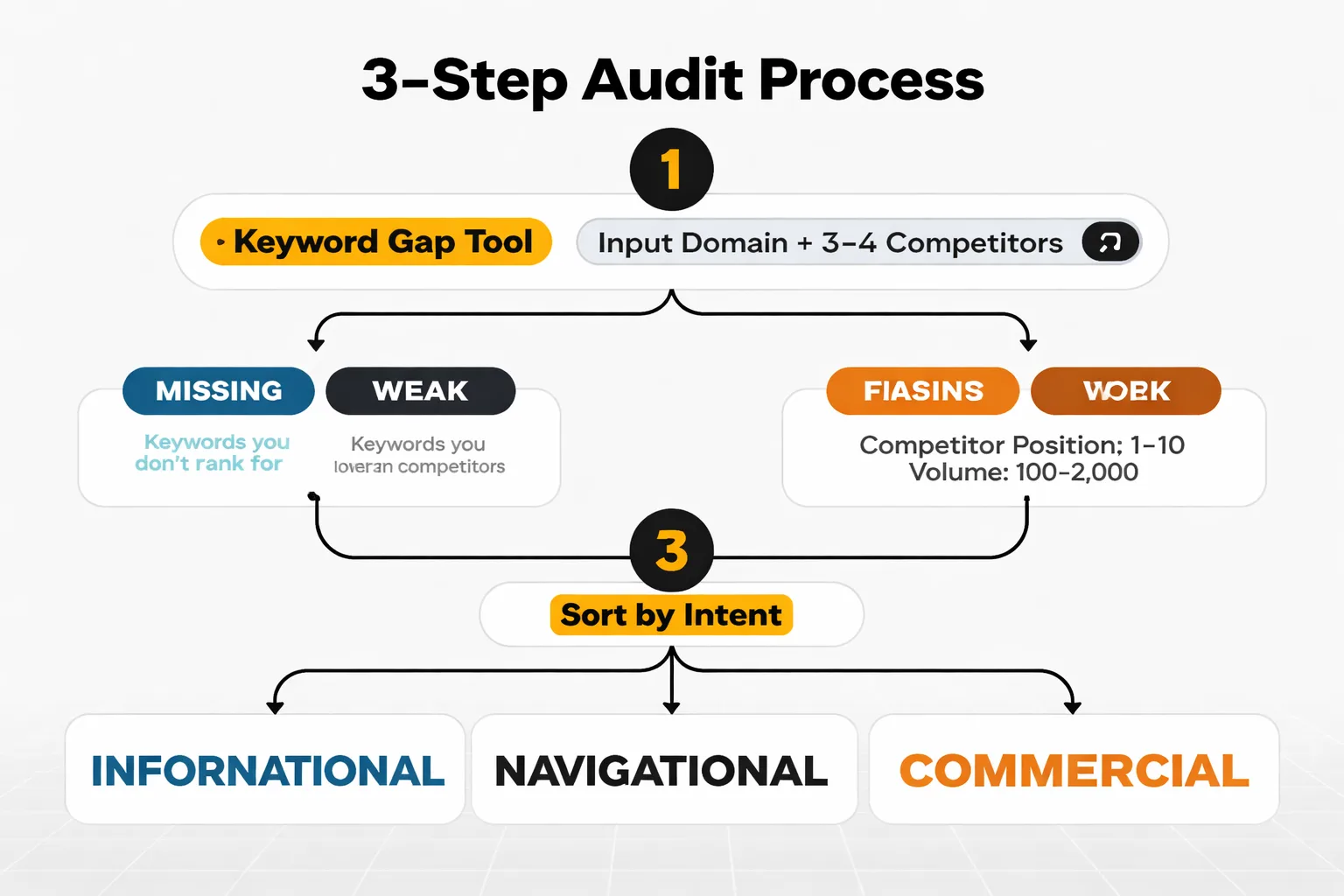

A keyword gap audit is the fastest way to find high-potential keywords competitors are already capturing — and you're not. Here's the exact process I recommend, start to finish, in under an hour.

Step 1: Set up the gap analysis. In Semrush, go to Keyword Gap and enter your domain plus 3–4 direct competitors. Use the "Missing" filter first: keywords all of those competitors rank for that you don't appear for at all (the "Untapped" filter loosens this to keywords at least one competitor ranks for). Then switch to "Weak": keywords where you rank, but lower than your competitors.

Step 2: Apply filters aggressively. Set KD to 0–35. Set competitor position to 1–10 (you want keywords where at least one competitor is actually ranking well, which confirms the keyword is real and rankable). Set volume to 100–2,000 — this cuts out the noise at both ends. You're left with a list of genuinely achievable targets.
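If you export the gap list rather than filtering in the Semrush UI, the same thresholds translate directly into code. A sketch, assuming each row carries KD, volume, and each competitor's best position; the GapKeyword record is hypothetical, not Semrush's export schema, and the sample rows are invented:

```python
from dataclasses import dataclass, field


@dataclass
class GapKeyword:
    keyword: str
    kd: int
    volume: int
    # Best ranking position of each competitor that ranks at all.
    competitor_positions: list[int] = field(default_factory=list)


def achievable(rows: list[GapKeyword], kd_max: int = 35,
               volume_range: tuple[int, int] = (100, 2000),
               best_position: int = 10) -> list[GapKeyword]:
    """Keep keywords with KD <= kd_max, volume inside volume_range, and at
    least one competitor ranking in positions 1..best_position."""
    low, high = volume_range
    return [
        r for r in rows
        if r.kd <= kd_max
        and low <= r.volume <= high
        and any(1 <= p <= best_position for p in r.competitor_positions)
    ]


rows = [
    GapKeyword("crm migration checklist", kd=22, volume=480, competitor_positions=[4, 18]),
    GapKeyword("crm software", kd=78, volume=40000, competitor_positions=[2]),
    GapKeyword("crm data cleanup tool", kd=15, volume=90, competitor_positions=[6]),
    GapKeyword("salesforce dedupe rules", kd=30, volume=260, competitor_positions=[14, 25]),
]
print([r.keyword for r in achievable(rows)])
# ['crm migration checklist']
```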

Step 3: Sort by intent. Export the filtered list and tag each keyword by intent: informational, navigational, commercial, or transactional. I use a simple color code in a Google Sheet. Transactional and commercial keywords go to the top of the priority list regardless of volume.
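Tagging is a judgment call, but a keyword-modifier heuristic can pre-fill the intent column so you're correcting tags rather than typing them. A hypothetical first-pass tagger; the modifier lists below are illustrative assumptions and will misfire on plenty of keywords, so every tag still needs a human look:

```python
import re

# Hypothetical modifier lists, checked in order: a keyword containing both
# "buy" and "best" is tagged transactional.
INTENT_MODIFIERS = [
    ("transactional", ["buy", "pricing", "price", "coupon", "free trial", "discount"]),
    ("commercial", ["best", "vs", "versus", "review", "reviews",
                    "alternative", "alternatives", "comparison"]),
    ("navigational", ["login", "sign in", "dashboard"]),
]


def tag_intent(keyword: str) -> str:
    """Return the first intent whose modifiers appear as whole words."""
    text = keyword.lower()
    for intent, modifiers in INTENT_MODIFIERS:
        # \b word boundaries stop "best" from matching inside "bestseller".
        if any(re.search(rf"\b{re.escape(m)}\b", text) for m in modifiers):
            return intent
    return "informational"  # default when no modifier matches


for kw in ["hubspot vs salesforce", "salesforce login", "how to migrate hubspot contacts"]:
    print(kw, "->", tag_intent(kw))
```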

Step 4: Identify content type. For each priority keyword, do a quick SERP check. Is the top result a blog post, a landing page, a comparison page, or a tool? This tells you what content format Google is rewarding for that query — and building the wrong format is one of the most common reasons I see good keywords underperform.

Step 5: Check for keyword cannibalization. Before adding a keyword to the pipeline, run a Google site: search (site:yourdomain.com plus the keyword) to confirm there isn't already a page targeting it. SEO keyword cannibalization, where two pages on the same site compete for the same term, is a silent traffic killer that a gap audit can accidentally make worse if you skip this check.
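A site search catches most conflicts, but if you keep a map of which page targets which keyword, a rough overlap check can run over the whole list at once. A sketch, assuming such a map exists; Jaccard similarity on word sets and the 0.75 threshold are arbitrary stand-ins for your own judgment, and the URLs are hypothetical:

```python
def tokens(text: str) -> set[str]:
    return set(text.lower().split())


def possible_cannibals(candidate: str, page_targets: dict[str, list[str]],
                       threshold: float = 0.75) -> list[str]:
    """Return URLs whose target keywords overlap heavily with `candidate`.

    Overlap is the Jaccard similarity of word sets: |A & B| / |A | B|.
    """
    cand = tokens(candidate)
    hits = []
    for url, targets in page_targets.items():
        for target in targets:
            other = tokens(target)
            union = cand | other
            if union and len(cand & other) / len(union) >= threshold:
                hits.append(url)
                break  # one matching target is enough to flag the page
    return hits


# Hypothetical map of existing URLs to the keywords they target.
pages = {
    "/blog/crm-migration-checklist": ["crm migration checklist"],
    "/pricing": ["crm pricing"],
}
print(possible_cannibals("crm data migration checklist", pages))
# ['/blog/crm-migration-checklist']
```

Anything flagged still gets a manual look: high word overlap doesn't always mean identical intent.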

A well-executed gap audit typically surfaces 20–40 actionable targets. I recommend prioritizing the top 10 and adding the rest to a backlog for quarterly review.

Validating Keywords Before You Invest

Finding a keyword is not the same as confirming it's worth a 2,000-word article and three hours of time. Skipping validation is a mistake I see constantly — and it leads to published content that ranks for keywords that turn out to be dead ends. Here's how I validate before committing resources.

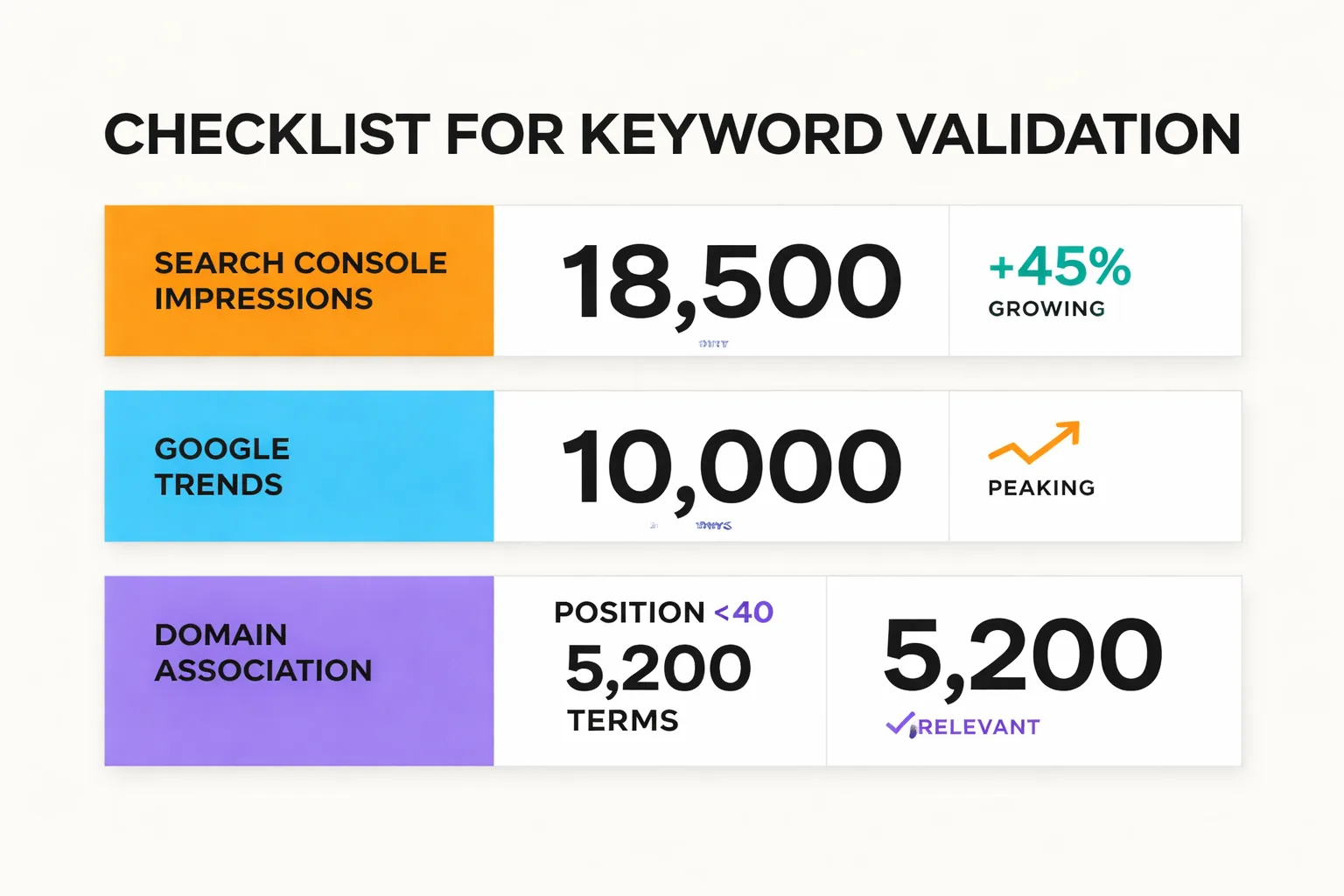

Search Console impressions data is my first check. If a keyword I'm considering already shows any impressions, even at position 40+, that's a strong signal the keyword is real and that Google already associates the domain with the topic. I open the Queries tab of the Performance report in Search Console, filter by the keyword or a close variant, and review the impression trend over the last 90 days. Flat or growing impressions mean the keyword is live. Declining impressions on a keyword I'm not yet targeting can indicate a trend that's already peaking, which is worth noting before investing.
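The flat, growing, or declining call can be made consistent with a simple before-and-after comparison. A minimal sketch, assuming you've exported daily impressions for the query; the 30-day windows and the ±10% band that counts as "flat" are hypothetical thresholds, not Search Console settings:

```python
from statistics import mean


def impression_trend(daily: list[int], window: int = 30, tolerance: float = 0.10) -> str:
    """Classify a daily impression series (e.g. the last 90 days) by comparing
    the average of the first `window` days with the last `window` days."""
    if len(daily) < 2 * window:
        raise ValueError(f"need at least {2 * window} days of data")
    start, end = mean(daily[:window]), mean(daily[-window:])
    if start == 0:
        return "growing" if end > 0 else "flat"
    change = (end - start) / start  # relative change between the two windows
    if change > tolerance:
        return "growing"
    if change < -tolerance:
        return "declining"
    return "flat"


print(impression_trend([20] * 45 + [10] * 45))
# declining
```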

Google Trends is my second check, specifically for anything that feels like it might be seasonal or trend-driven. The goal isn't absolute search volume — it's trajectory. A keyword with 500 monthly searches and a rising Trends line is worth more than a keyword with 1,200 monthly searches and a declining one. Trends also helps me check geographic concentration — if 80% of the search interest is from one country and that's not my target audience, the volume number is misleading.
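Both Trends checks reduce to small calculations. A sketch with hypothetical function names: the slope runs on an exported interest-over-time series, while the geography check assumes you have per-country search shares that sum to about 1 (for example from your keyword tool's country breakdown, since Trends' regional scores are relative indices rather than search counts):

```python
def trend_slope(values: list[float]) -> float:
    """Ordinary least-squares slope of an evenly spaced series, such as a
    Google Trends interest-over-time export (index points per period)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two points")
    x_bar = (n - 1) / 2
    y_bar = sum(values) / n
    num = sum((x - x_bar) * (y - y_bar) for x, y in enumerate(values))
    den = sum((x - x_bar) ** 2 for x in range(n))
    return num / den


def misleading_geography(shares: dict[str, float], target_markets: set[str],
                         threshold: float = 0.8) -> bool:
    """True when one country holds at least `threshold` of the searches
    and that country is not a market you serve."""
    country, share = max(shares.items(), key=lambda kv: kv[1])
    return share >= threshold and country not in target_markets


print(trend_slope([1, 2, 3, 4]))  # 1.0
print(misleading_geography({"US": 0.85, "GB": 0.10, "CA": 0.05}, {"GB", "CA"}))  # True
```

A positive slope corresponds to the "rising Trends line" above; comparing slopes, not raw volumes, is what lets the 500-search keyword beat the 1,200-search one.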

A quick SERP analysis is the final gate. I look at the top 5 results and ask three questions: Are these pages from domains with significantly higher authority? Are they recent (published or updated within 18 months)? And — critically — do any of them actually answer the query well? If the top results are thin, outdated, or tangentially related to the query, that's a gap worth filling. If the top results are in-depth, well-organized pages from DR 70+ domains, I either need a genuinely differentiated angle or I'll deprioritize the keyword.
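The three questions can be recorded per result and turned into a consistent verdict. A sketch under stated assumptions: the SerpResult fields are filled in by hand (or from your SEO tool's DR data), and the rule that every top-5 result must be weak before calling it a gap is one hypothetical reading of the criteria above, not a fixed formula:

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class SerpResult:
    domain_rating: int
    last_updated: date
    answers_query: bool  # your judgment: does it actually answer the query?
    in_depth: bool       # your judgment: thorough and well organized?


def months_between(earlier: date, later: date) -> int:
    return (later.year - earlier.year) * 12 + (later.month - earlier.month)


def serp_verdict(top: list[SerpResult], today: date,
                 strong_dr: int = 70, fresh_months: int = 18) -> str:
    if not top:
        raise ValueError("need at least one result")

    def is_weak(r: SerpResult) -> bool:
        # Thin, outdated, or off-target results are what leave a gap open.
        outdated = months_between(r.last_updated, today) > fresh_months
        return outdated or not r.answers_query or not r.in_depth

    weak = [r for r in top if is_weak(r)]
    if len(weak) == len(top):
        return "gap worth filling"
    if not weak and all(r.domain_rating >= strong_dr for r in top):
        return "needs a differentiated angle, or deprioritize"
    return "mixed: judgment call"


today = date(2026, 1, 15)
strong = [SerpResult(80, date(2025, 6, 1), True, True) for _ in range(5)]
print(serp_verdict(strong, today))
```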

This validation process takes about 10 minutes per keyword. For a list of 20 targets, that's under four hours — a worthwhile investment before committing to a full content calendar.

One additional check I always make: whether the keyword is likely to trigger an AI Overview in Google's results. If it does, the question becomes whether the content can be the source that gets cited — which means structuring it with clear definitions, numbered steps, and direct answers after every H2. There's more on this in how to write for AI Overviews without losing your audience, but the short version is: AEO-optimized structure and keyword targeting are now the same discipline.

Building a High-Potential Keyword Research Pipeline

Here's the part most keyword research guides skip entirely: what happens after finding a keyword. Because finding 40 great targets and then losing them in a spreadsheet somewhere is exactly as useful as not finding them at all.

At Meev, we use a simple four-stage pipeline in Notion, though Airtable works equally well. The stages are: Discovery → Validated → Briefed → Published. Every keyword lives in exactly one stage at any given time, and the rule is that nothing moves to Briefed until it's been through the validation process I described above.
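The stage rule is essentially a small state machine. A sketch of the idea in code, not a Notion or Airtable integration; KeywordCard and advance are hypothetical names:

```python
from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    DISCOVERY = "Discovery"
    VALIDATED = "Validated"
    BRIEFED = "Briefed"
    PUBLISHED = "Published"


# Stages only move forward, one step at a time.
NEXT_STAGE = {
    Stage.DISCOVERY: Stage.VALIDATED,
    Stage.VALIDATED: Stage.BRIEFED,
    Stage.BRIEFED: Stage.PUBLISHED,
}


@dataclass
class KeywordCard:
    keyword: str
    stage: Stage = Stage.DISCOVERY  # a single field, so exactly one stage at a time
    validation_passed: bool = False


def advance(card: KeywordCard) -> KeywordCard:
    if card.stage not in NEXT_STAGE:
        raise ValueError(f"{card.keyword!r} is already published")
    target = NEXT_STAGE[card.stage]
    # Briefed is only reachable from Validated, and Validated requires the
    # validation checks, so nothing unvalidated can be briefed.
    if target is Stage.VALIDATED and not card.validation_passed:
        raise ValueError(f"{card.keyword!r} has not passed validation")
    card.stage = target
    return card


card = KeywordCard("crm migration checklist", validation_passed=True)
print(advance(card).stage)  # Stage.VALIDATED
```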

The fields I track for each keyword:

- Keyword (exact match)
- Monthly volume (from Semrush or Ahrefs)
- KD score
- Search intent tag (informational / commercial / transactional)
- Target content format (blog post / landing page / comparison / tool)
- Competitor URL (the page to outrank)
- Priority score (0–5, based on intent + difficulty + strategic fit)
- Assigned writer and target publish date

The priority score is the most important field. My calculation is straightforward: transactional intent = +2, commercial intent = +1, KD under 20 = +2, KD 20–35 = +1, strategic fit with existing content cluster = +1. Anything scoring 4 or 5 goes into the next sprint. Anything scoring 2 or below goes into the quarterly backlog.
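The scoring rule fits in a few lines. A sketch that encodes the points exactly as listed; a score of exactly 3 isn't assigned to either bucket above, so the code leaves it unassigned rather than guessing:

```python
INTENT_POINTS = {"transactional": 2, "commercial": 1}  # other intents score 0


def priority_score(intent: str, kd: float, fits_cluster: bool) -> int:
    """Score 0-5: intent points + difficulty points + 1 for cluster fit."""
    score = INTENT_POINTS.get(intent, 0)
    if kd < 20:
        score += 2
    elif kd <= 35:
        score += 1
    if fits_cluster:
        score += 1
    return score


def bucket(score: int) -> str:
    if score >= 4:
        return "next sprint"
    if score <= 2:
        return "quarterly backlog"
    return "unassigned"  # the rules above don't say where a 3 goes


s = priority_score("transactional", kd=15, fits_cluster=True)
print(s, bucket(s))  # 5 next sprint
```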

This system does one thing really well: it forces prioritization. Without a scoring system, every keyword feels equally urgent, and content gets published in random order with no compounding effect. With a scoring system, the work naturally builds toward content clusters — groups of related content that reinforce each other's rankings — because the highest-scoring keywords tend to cluster around the same topics.

I recommend a pipeline review every two weeks. New keywords from Reddit, PAA, and gap audits go straight into Discovery. Anything that's been in Validated for more than 30 days without moving to Briefed gets re-evaluated — either it gets prioritized or it gets cut. The pipeline should feel like a living document, not a graveyard.
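The 30-day rule is easy to check during each review if every card records when it entered its current stage. A minimal sketch; the (keyword, stage, entered) tuple layout is an assumption about how you'd export the board:

```python
from datetime import date


def stale_validated(cards: list[tuple[str, str, date]], today: date,
                    max_days: int = 30) -> list[str]:
    """Keywords that have sat in Validated for more than `max_days` days.
    Each card is (keyword, stage, date it entered that stage)."""
    return [
        keyword
        for keyword, stage, entered in cards
        if stage == "Validated" and (today - entered).days > max_days
    ]


cards = [
    ("crm migration checklist", "Validated", date(2026, 1, 15)),
    ("salesforce dedupe rules", "Validated", date(2026, 2, 20)),
    ("hubspot export csv", "Discovery", date(2025, 12, 1)),
]
print(stale_validated(cards, today=date(2026, 3, 1)))
# ['crm migration checklist']
```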

The Automation Layer

Once the pipeline is running manually, I add automation without losing the judgment that makes it work. A few lightweight automations save me about three hours a week without replacing the human analysis.

For Search Console monitoring, I run a weekly automated export that flags any query with 100+ impressions and under 3% CTR that doesn't have a dedicated page. These are keywords where Google is already surfacing the site — searchers just haven't been given a compelling reason to click. That report alone has consistently surfaced strong content ideas for the teams I've worked with.
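The weekly flag is a filter over the query report. A sketch, assuming rows come from a scheduled export (the Search Console API's searchanalytics.query method can return clicks and impressions by query) and that you maintain a set of queries that already have a dedicated page; both the row shape and that set are hypothetical:

```python
def ctr_opportunities(rows: list[dict], dedicated_queries: set[str],
                      min_impressions: int = 100,
                      max_ctr: float = 0.03) -> list[tuple[str, float]]:
    """Queries with enough impressions, CTR below `max_ctr`, and no
    dedicated page yet. CTR is recomputed as clicks / impressions."""
    flagged = []
    for row in rows:
        if row["impressions"] < min_impressions:
            continue  # too little visibility to judge CTR
        ctr = row["clicks"] / row["impressions"]
        if ctr < max_ctr and row["query"] not in dedicated_queries:
            flagged.append((row["query"], ctr))
    return flagged


rows = [
    {"query": "gsc zero clicks", "clicks": 2, "impressions": 200},
    {"query": "keyword gap audit", "clicks": 10, "impressions": 200},
    {"query": "paa mining", "clicks": 0, "impressions": 99},
    {"query": "reddit keyword ideas", "clicks": 1, "impressions": 150},
]
print(ctr_opportunities(rows, dedicated_queries={"reddit keyword ideas"}))
```

Recomputing CTR from clicks and impressions avoids depending on how a particular export formats its CTR column.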

For Reddit monitoring, I use a simple RSS feed from Reddit search results for core topic clusters, piped into a Notion database via Zapier. New posts that match keyword filters appear in the Discovery stage automatically. Manual review is still required — the automation handles collection, not judgment.
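Zapier handles the plumbing in this setup, but the filtering logic is simple enough to script if you'd rather not depend on it. A sketch that parses an already-downloaded feed, assuming it is in Atom format, as Reddit's .rss search endpoints typically return (verify against a real response); fetching the feed and writing to Notion are left out, and the sample URLs are placeholders:

```python
import xml.etree.ElementTree as ET

ATOM = "{http://www.w3.org/2005/Atom}"  # Atom XML namespace


def matching_posts(feed_xml: str, filters: list[str]) -> list[dict[str, str]]:
    """Return title/URL pairs for feed entries whose title contains any
    of the keyword filters (case-insensitive substring match)."""
    root = ET.fromstring(feed_xml)
    wanted = [f.lower() for f in filters]
    posts = []
    for entry in root.iter(f"{ATOM}entry"):
        title = entry.findtext(f"{ATOM}title", default="")
        link = entry.find(f"{ATOM}link")
        url = link.get("href", "") if link is not None else ""
        if any(f in title.lower() for f in wanted):
            posts.append({"title": title, "url": url})
    return posts


sample = """<feed xmlns="http://www.w3.org/2005/Atom">
  <entry>
    <title>Why does Search Console show impressions but zero clicks?</title>
    <link href="https://www.reddit.com/r/SEO/comments/example1/"/>
  </entry>
  <entry>
    <title>Best coffee near downtown</title>
    <link href="https://www.reddit.com/r/example/comments/example2/"/>
  </entry>
</feed>"""
print(matching_posts(sample, ["search console", "keyword"]))
```

As in the manual setup, this only collects candidates; deciding which ones become Discovery entries stays a human call.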

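For Google Search Console structured data monitoring, I track which published pieces are generating rich results and which aren't. Pages that aren't generating rich results despite having FAQ or How-To markup get flagged for a structured data audit. Keep in mind that since Google's 2023 changes, How-To rich results are no longer shown and FAQ rich results appear only for well-known government and health sites, so for most sites this check is more useful for markup types such as Product or Review snippets. This connects directly to keyword performance: rich results can substantially improve CTR on keywords where a page is already ranking, which is often faster than trying to rank for new keywords entirely.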
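The flagging rule is a simple comparison between the markup each page declares and the rich results it actually earns. A sketch, assuming you've assembled per-page data by hand or from Search Console's enhancement reports; the page dict shape is hypothetical, and tracked_types should list whichever schema.org types you expect to produce rich results:

```python
def needs_markup_audit(pages: list[dict], tracked_types: set[str]) -> list[str]:
    """URLs that carry at least one tracked markup type but aren't showing
    any rich result."""
    return [
        page["url"]
        for page in pages
        if page["markup"] & tracked_types and not page["has_rich_result"]
    ]


# Hypothetical per-page data.
pages = [
    {"url": "/a", "markup": {"FAQPage"}, "has_rich_result": False},
    {"url": "/b", "markup": {"FAQPage"}, "has_rich_result": True},
    {"url": "/c", "markup": set(), "has_rich_result": False},
    {"url": "/d", "markup": {"Product"}, "has_rich_result": False},
]
print(needs_markup_audit(pages, tracked_types={"FAQPage", "HowTo"}))  # ['/a']
```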

The goal isn't to automate keyword research. It's to automate the collection so I can spend time on analysis, prioritization, and content quality — the parts that actually require human judgment.

The SEOs who win in 2026 aren't the ones with the best tools. They're the ones who've built systems that surface the right signals, validate them quickly, and execute consistently. High-potential keyword research isn't a one-time project. It's a repeatable process — and in my experience, the compounding effect of running that process every two weeks for 12 months produces a content library that competitors can't replicate quickly, no matter how good their tools are.