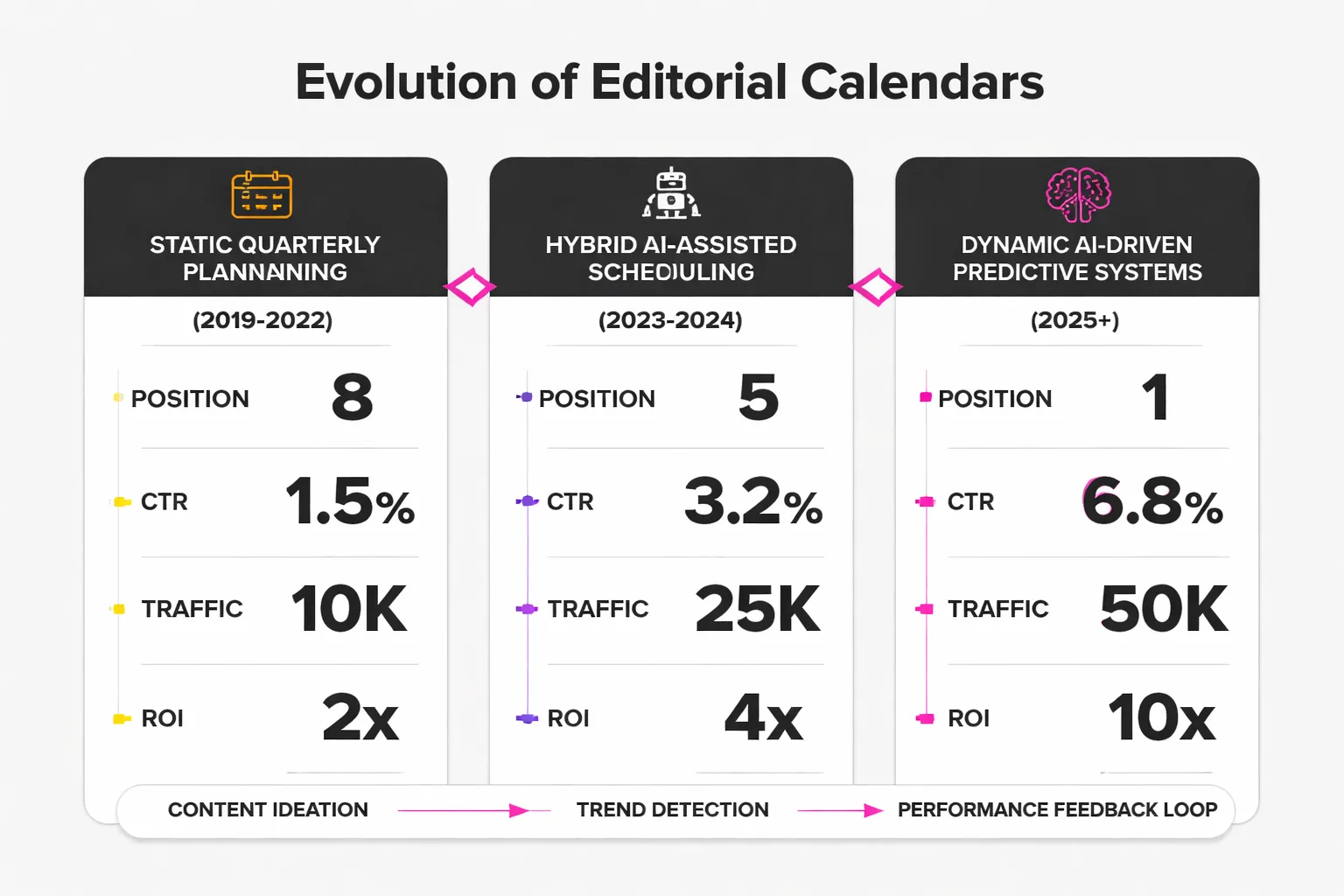

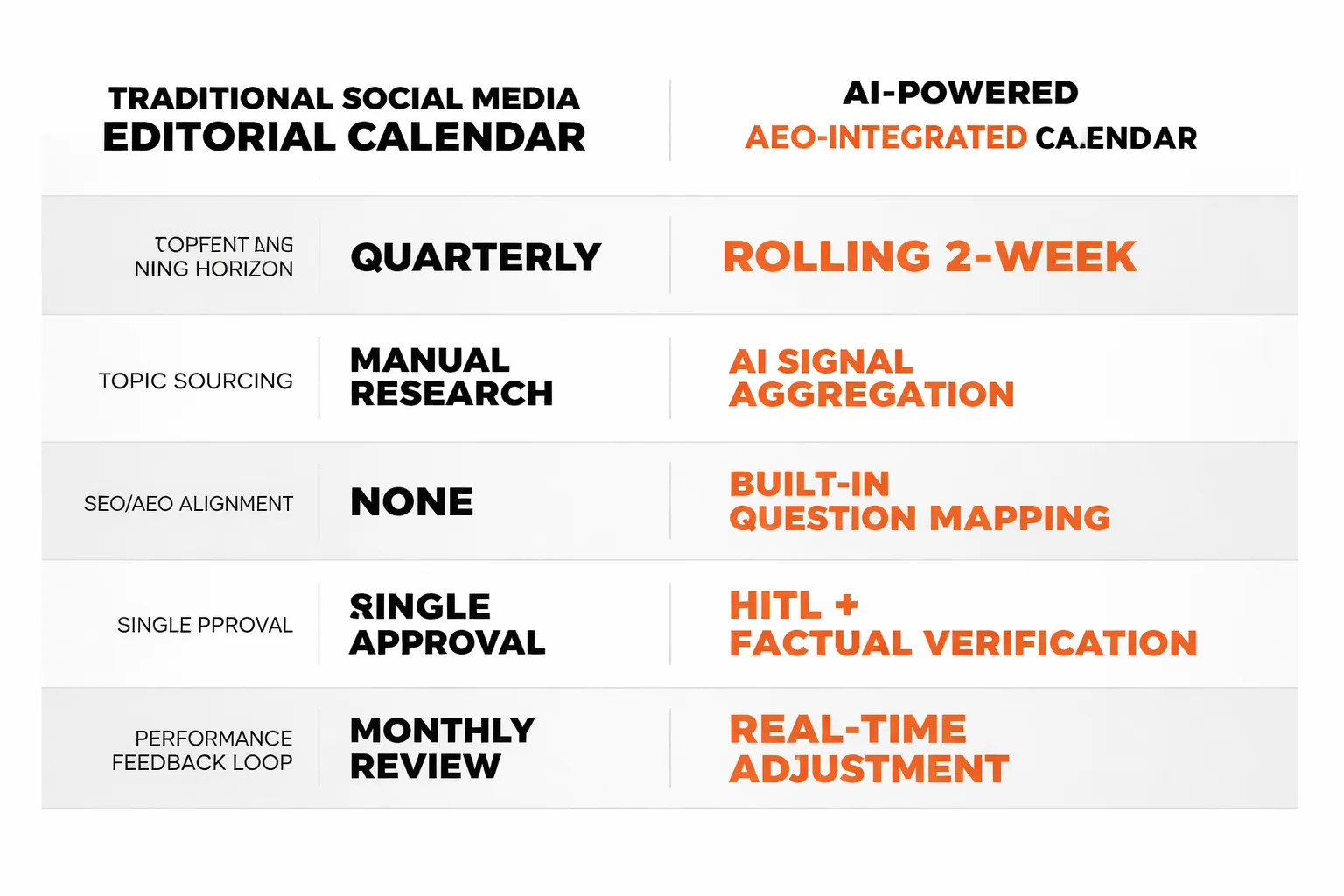

The traditional editorial calendar is no longer a roadmap; it has become a bottleneck. Manual planning once provided structure, but social media trends now rise and fade faster than a static, month-long content schedule can follow. To remain relevant, content teams must shift from rigid planning to agile, AI-augmented workflows.

AI content creation for social media isn't just speeding up the old process. It's making the old process obsolete.

The editorial calendar used to be a static document: a plan built once a quarter in the hope that it would hold up. What I've found replacing it, both at Meev and across the content teams I work with, is something fundamentally different: a living, predictive system that adjusts in real time, surfaces high-potential keyword opportunities before they peak, and generates platform-specific content variations without anyone writing a brief by hand. That's not automation. That's strategic intelligence.

TL;DR

- AI content creation for social media is shifting editorial calendars from static quarterly plans to dynamic, real-time systems driven by predictive analytics.
- 83.2% of content marketers planned to use AI tools in 2024, but most are still using AI as a drafting tool rather than a strategic planning layer.
- GEO and AEO optimization must be built into social content workflows, not treated as afterthoughts, because AI-generated posts now influence how search engines understand your brand's topical authority.
- Human-in-the-loop (HITL) review isn't optional; it's the difference between a brand voice that compounds over time and one that slowly sounds like everyone else.

From Static Calendars to Dynamic AI-Driven Planning

A dynamic editorial calendar is a content planning system that updates continuously based on real-time performance data, trending signals, and audience behavior — rather than being set once per quarter and followed rigidly.

Here's the honest truth about static calendars: they're a comfort blanket. They give content teams the illusion of control. A team plans 60 posts in advance, feels organized, and then a major industry shift happens in week two and half the content is suddenly irrelevant. I've watched this pattern destroy campaign momentum across teams and industries more times than I can count.

The shift to dynamic calendars isn't just about speed — it's about signal processing. AI tools can now monitor search trend velocity, social listening data, and competitor publishing patterns simultaneously, then surface content opportunities ranked by predicted engagement and search intent alignment. According to Sociality.io's AI in social media marketing report, 59.5% of social media marketers are already using AI for content ideation and trend research. But most of them are still treating AI as a drafting assistant rather than a planning intelligence layer. That's the gap I'm addressing here.

The practical difference looks like this: instead of deciding in January that a post about "content strategy tips" goes out every Tuesday, an AI-powered calendar detects a 340% spike in search interest around a specific subtopic — say, structured data for social content — and automatically slots a post into the next available window, generates a draft, and flags it for human review. The calendar isn't a plan anymore. It's a response system.
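The spike-and-slot behavior described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any particular tool works: each subtopic has a list of weekly search-interest values (for example, numbers exported from Google Trends), a "spike" is the percent rise of the latest value over the mean of the previous four, and the 300% threshold and the names `Slot`, `spike_pct`, and `inject_spikes` are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean
from typing import Dict, List, Optional


@dataclass
class Slot:
    day: date
    topic: Optional[str] = None
    status: str = "open"


def spike_pct(series: List[float], baseline_window: int = 4) -> float:
    """Percent increase of the latest value over the mean of the preceding window."""
    if len(series) < baseline_window + 1:
        raise ValueError("need at least baseline_window + 1 data points")
    baseline = mean(series[-baseline_window - 1:-1])
    latest = series[-1]
    if baseline == 0:
        return float("inf") if latest > 0 else 0.0
    return (latest - baseline) * 100 / baseline


def inject_spikes(interest: Dict[str, List[float]], calendar: List[Slot],
                  threshold_pct: float = 300.0) -> List[Slot]:
    """Fill the earliest open slots with the biggest spikes, each flagged for review."""
    ranked = sorted(((spike_pct(s), topic) for topic, s in interest.items()), reverse=True)
    open_slots = iter(sorted((s for s in calendar if s.status == "open"), key=lambda s: s.day))
    filled = []
    for pct, topic in ranked:
        if pct < threshold_pct:
            break  # ranked is descending, so nothing after this qualifies either
        slot = next(open_slots, None)
        if slot is None:
            break  # no open windows left
        slot.topic = topic
        slot.status = "needs_human_review"  # drafts are never auto-approved
        filled.append(slot)
    return filled
```

With a subtopic whose interest goes from a flat 10 to 44, `spike_pct` returns 340.0, which clears the threshold, so the earliest open slot receives that topic with status `needs_human_review` rather than being published directly.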

How Predictive Analytics Changes Content Planning

AI-driven predictive analytics for content performance means using machine learning models to forecast which topics, formats, and publishing windows will generate the highest engagement and search visibility — before investing time creating the content.

This is where I've seen the real payoff. 54% of businesses planned to increase their content marketing spend in 2024, but spending more on the same static planning process just produces more content that misses. Predictive analytics changes the ROI equation entirely.

In my work leading content strategy, I've seen high-performing teams implement this in a consistent pattern. They're not just using AI to write faster; they're using it to decide what to write with far more precision. The workflow typically looks like this:

1. Signal aggregation: AI tools pull from Google Trends, social listening platforms, competitor content analysis, and internal performance data simultaneously.
2. Intent mapping: Each trending signal gets mapped to a specific search intent category: informational, navigational, commercial, or transactional.
3. Format matching: Based on historical performance data, the system recommends whether the topic should be a short-form video, a carousel, a long-form post, or a thread.
4. Calendar injection: High-confidence opportunities get automatically inserted into the editorial calendar with a draft brief, target keywords, and a suggested publishing window.
5. Human review gate: Every AI-generated recommendation passes through a human editor before it's approved (more on why this matters in a moment).

The teams skipping steps 2 and 5 are the ones producing high-volume, low-quality content that's actively hurting their brand authority. Volume without intent mapping is just noise.
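The five stages can be sketched as a small Python pipeline to make the hand-offs concrete. Treat it as an illustration only: the `Signal` confidence score is assumed to come from some upstream model, the keyword rules in `INTENT_RULES` are a crude stand-in for a real intent classifier, and every name here is hypothetical. The point it shows is structural: low-confidence signals never reach the calendar, and nothing becomes publishable without an explicit editor decision.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Signal:
    topic: str
    source: str        # e.g. "google_trends", "social_listening", "competitor", "internal"
    confidence: float  # 0..1 opportunity score from some upstream model (assumed)


@dataclass
class Brief:
    topic: str
    intent: str
    fmt: str
    approved: bool = False


# Naive keyword rules standing in for a real intent classifier (hypothetical).
INTENT_RULES = [
    ("buy", "transactional"), ("pricing", "transactional"),
    ("best", "commercial"), ("vs", "commercial"),
    ("login", "navigational"),
]


def map_intent(topic: str) -> str:
    words = topic.lower().split()
    for keyword, intent in INTENT_RULES:
        if keyword in words:
            return intent
    return "informational"


def match_format(intent: str, history: Dict[str, Dict[str, float]]) -> str:
    """Pick the format with the best historical engagement rate for this intent."""
    by_format = history.get(intent, {})
    return max(by_format, key=by_format.get) if by_format else "long_form_post"


def run_pipeline(signals: List[Signal], history: Dict[str, Dict[str, float]],
                 min_confidence: float = 0.7) -> List[Brief]:
    calendar = []
    for sig in signals:                          # 1. aggregated signals
        if sig.confidence < min_confidence:
            continue                             # low-confidence ideas never reach the calendar
        intent = map_intent(sig.topic)           # 2. intent mapping
        fmt = match_format(intent, history)      # 3. format matching
        calendar.append(Brief(sig.topic, intent, fmt))  # 4. calendar injection
    return calendar


def review_gate(brief: Brief, editor_approves: bool) -> Brief:
    """5. Human review gate: approval comes only from an explicit editor decision."""
    brief.approved = editor_approves
    return brief


def publishable(calendar: List[Brief]) -> List[Brief]:
    return [b for b in calendar if b.approved]
```

Skipping step 2 here would mean every brief falls through to a default format, and skipping step 5 would mean `publishable` returns everything, which is exactly the high-volume, low-intent failure mode described above.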

[Figure: Signal Aggregation → Intent Mapping → Format Matching → Calendar Injection → Human Review Gate, with icons for each stage and data sources labeled at the aggregation stage (Google Trends, social listening, competitor analysis, internal analytics). Source: https://meev.ai/articles/build-content-pipeline-runs-without]

Integrating GEO and AEO into Social Workflows

Most people think social media content and search visibility are separate concerns. In my experience, they're wrong — and this misconception is costing brands serious organic reach.

Generative Engine Optimization (GEO) is the practice of structuring content so that AI-powered search engines (Google AI Overviews, ChatGPT search, Perplexity) can extract, cite, and surface it in response to user queries. What almost nobody in the social media space is talking about: AI-generated social posts feed into how these engines understand a brand's topical authority.

When consistent, well-structured content is published around a topic cluster — even on social platforms — it builds a signal footprint that AI search engines pick up. A LinkedIn post that directly answers "how does AI content creation for social media affect editorial planning" with a clear, quotable response is more likely to get pulled into an AI Overview than a beautifully designed carousel that buries the answer in slide seven. I explore this connection in depth in my coverage of how to write for AI Overviews without losing your audience — the principles apply directly to social content strategy.

The practical implication: every AI content workflow needs a GEO checkpoint. Before any post goes live, I recommend asking whether it contains a direct, extractable answer to a real search query. If it doesn't, it's missing an opportunity.
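One way to make the GEO checkpoint mechanical is a quick pre-publish heuristic on the post's opening sentence. The sketch below is an assumption of mine, not a standard: it treats an opener as "extractable" if it names the target term, is a statement rather than a question, and is short enough to quote on its own (40 words is an arbitrary cutoff). It cannot judge whether the answer is actually good; that stays with the editor.

```python
import re


def first_sentence(text: str) -> str:
    # Split once on sentence-ending punctuation followed by whitespace.
    return re.split(r"(?<=[.!?])\s+", text.strip(), maxsplit=1)[0]


def has_extractable_answer(post: str, key_term: str, max_words: int = 40) -> bool:
    """Heuristic GEO check: does the opening sentence state an answer about key_term?"""
    opener = first_sentence(post)
    return (
        key_term.lower() in opener.lower()    # the answer names the topic explicitly
        and not opener.endswith("?")          # a question is a hook, not an answer
        and len(opener.split()) <= max_words  # short enough to be quoted on its own
    )
```

A post opening "AI content creation for social media works by combining predictive analytics with automated drafting tools..." passes for the key term "AI content creation"; a carousel caption that opens with a teaser question does not.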

AEO: Reinforcing Search Authority

Answer Engine Optimization (AEO) is the practice of structuring content to appear in featured snippets, voice search results, and People Also Ask boxes — and it applies directly to social content strategy in ways most teams haven't figured out yet.

Here's the connection I've observed: when social content consistently answers specific questions in a direct, structured format, it reinforces a site's topical authority for those same questions in search. A LinkedIn post that opens with "AI content creation for social media works by combining predictive analytics with automated drafting tools to produce platform-specific content at scale" is doing double duty — it's engaging the social audience AND signaling to search engines that the brand has authoritative, direct answers on this topic.

Building AEO into an editorial calendar workflow means adding one question to the content brief: "What specific question does this post answer?" If the answer is "none — it's just a thought leadership post," that's fine occasionally. But if 80% of content can't answer that question, serious search visibility is being left on the table.
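If briefs live in a structured format, the "what question does this post answer?" field can be audited automatically. This sketch assumes a hypothetical `question_answered` field on each brief; the 20% ceiling on unanswered briefs is an arbitrary stand-in for "occasionally", not a recommendation from any source.

```python
from typing import List, Optional, TypedDict


class ContentBrief(TypedDict):
    title: str
    question_answered: Optional[str]  # hypothetical brief field


def unanswered_share(briefs: List[ContentBrief]) -> float:
    """Fraction of briefs that name no specific question."""
    if not briefs:
        return 0.0
    missing = sum(1 for b in briefs if not (b["question_answered"] or "").strip())
    return missing / len(briefs)


def aeo_audit(briefs: List[ContentBrief], max_unanswered: float = 0.2) -> dict:
    share = unanswered_share(briefs)
    return {"unanswered_share": share, "needs_attention": share > max_unanswered}
```

A calendar where four of five briefs leave the field blank reports an unanswered share of 0.8 and is flagged; blank or whitespace-only answers count as missing.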

To integrate high-potential keyword research, I recommend cross-referencing the social content calendar against the query data in Google Search Console's Performance report at least monthly. The queries driving impressions to a site are often the exact questions social content should be answering, and most teams I've worked with never make that connection.
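The monthly cross-reference can be roughed out as a gap finder. This sketch assumes you have already exported query and impression pairs from Search Console (for example, from the Performance report's export) into a list of tuples; the stopword list, the 100-impression floor, and the 50% word-overlap rule are all hypothetical choices, and plain word overlap with no stemming will miss paraphrases.

```python
import re
from typing import Iterable, List, Set, Tuple

STOPWORDS = {"a", "and", "do", "does", "for", "how", "in", "is", "of", "the", "to", "what"}


def content_tokens(text: str) -> Set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def uncovered_queries(queries: Iterable[Tuple[str, int]], planned_topics: List[str],
                      min_impressions: int = 100,
                      min_overlap: float = 0.5) -> List[Tuple[str, int]]:
    """High-impression queries that no planned post appears to cover, biggest first."""
    topic_tokens = [content_tokens(t) for t in planned_topics]
    gaps = []
    for query, impressions in sorted(queries, key=lambda q: q[1], reverse=True):
        if impressions < min_impressions:
            continue
        q = content_tokens(query)
        if not q:
            continue
        # "Covered" = at least min_overlap of the query's words appear in one topic.
        if not any(len(q & t) / len(q) >= min_overlap for t in topic_tokens):
            gaps.append((query, impressions))
    return gaps
```

The output is a short list of questions searchers are already asking that the calendar does not yet answer, ranked by impressions.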

Safeguarding Quality: Hallucinations, Brand Voice, and HITL

The AI Hallucination Problem

Here's a content risk that almost never appears in editorial calendar guides: AI hallucinations aren't confined to long-form articles. They show up in social content too, and they're harder to catch because the posts are shorter and reviewed faster.

In my own testing across AI-generated social drafts, I've surfaced citations of non-existent studies, quotes attributed to the wrong people, and statistical claims that were directionally correct but numerically fabricated. In a 280-character tweet, a made-up statistic looks exactly like a real one. An audience won't fact-check it. A legal team probably won't see it. But when someone does catch it — and in 2025, someone always does — the brand damage is disproportionate to the size of the post.

The mitigation framework I recommend is straightforward but non-negotiable:

- Flag all statistics for source verification before any post goes live. If the AI can't provide a verifiable source, the stat gets cut.
- Run a factual accuracy check on any post that references industry data, research findings, or named individuals.
- Maintain a brand fact library (a verified database of statistics, case studies, and claims the team has approved) that AI tools reference when generating content.
- Never auto-publish AI-generated content without at least one human review pass, regardless of how good the prompts are.

This isn't about distrusting AI. It's about understanding that AI models are pattern-completion engines, not research databases. They will confidently generate plausible-sounding falsehoods, and every editorial calendar needs a structural defense against that.
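The first, third, and fourth rules can be partly enforced in code before a post reaches a reviewer. This is a minimal sketch under loud assumptions: the brand fact library is a plain dictionary mapping an approved stat string to its source, and the regex only catches percentages and "Nx" multipliers, so spelled-out numbers or "one in five" claims slip through and still need the human factual check.

```python
import re
from typing import Dict, List

# Matches percentages ("83.2%") and multipliers ("3x"). Deliberately narrow (assumption):
# spelled-out numbers and "N out of M" claims would need more patterns.
STAT_PATTERN = re.compile(r"\b\d+(?:\.\d+)?(?:%|x\b)")


def extract_stats(post: str) -> List[str]:
    return STAT_PATTERN.findall(post)


def flag_unverified(post: str, fact_library: Dict[str, str]) -> List[str]:
    """Stats in the post with no approved source in the fact library."""
    return [s for s in extract_stats(post) if s not in fact_library]


def ready_to_publish(post: str, fact_library: Dict[str, str], human_reviewed: bool) -> bool:
    # Every stat must be verified AND a human must have reviewed the post.
    return human_reviewed and not flag_unverified(post, fact_library)
```

For example, with a library containing only "59.5%" (sourced to Sociality.io's report), a draft claiming "engagement grows 3x" gets "3x" flagged and cannot publish until the stat is sourced or cut.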

Maintaining Brand Voice at Scale

The standard advice is to create a detailed brand voice guide and feed it to AI tools. Here's why I've seen that backfire: a brand voice guide describes a voice in the abstract. AI models execute it literally. The result is content that technically follows the rules but feels hollow — like a cover band that hits every note but has no soul.

What actually works is training AI tools on examples rather than descriptions. Instead of telling the AI "our brand voice is confident, conversational, and data-driven," I recommend feeding it 20-30 of the best-performing posts and letting it pattern-match from real output. At Meev, we've tested this approach extensively, and it consistently yields higher scores on brand consistency audits than description-trained outputs — a finding that holds across multiple content teams I've worked with.
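In practice, "training on examples" with most AI writing tools means few-shot prompting: putting the best real posts directly into the prompt. The sketch below assumes hypothetical post records with `text`, `engagements`, and `impressions` fields, ranks them by engagement rate (one possible reading of "best-performing"), and builds a prompt string with made-up wording; it does not call any model API.

```python
from typing import Dict, List


def top_posts(posts: List[Dict], n: int = 25) -> List[Dict]:
    """Rank by engagement rate (engagements / impressions), best first."""
    return sorted(posts, key=lambda p: p["engagements"] / max(p["impressions"], 1),
                  reverse=True)[:n]


def build_few_shot_prompt(posts: List[Dict], topic: str, n: int = 25) -> str:
    examples = "\n\n".join(
        f"Example {i}:\n{p['text']}" for i, p in enumerate(top_posts(posts, n), start=1)
    )
    return (
        "Write a new social post in the same voice as these examples.\n\n"
        f"{examples}\n\n"
        f"Topic: {topic}\nPost:"
    )
```

The default of 25 examples sits inside the 20-30 range recommended above; ranking by rate rather than raw engagement keeps a few high-reach posts from crowding out smaller ones that carry the voice better.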

The second piece is establishing clear human-in-the-loop (HITL) checkpoints specifically designed to catch voice drift. Voice drift is subtle — it happens gradually as AI tools generate more content and the human review process gets faster and less rigorous. By month three of a high-volume AI content program, a feed can end up sounding like it was written by a committee of interns who read the brand guide once. The fix I recommend is a monthly voice audit: pull 10 random posts from the last 30 days and read them aloud. If they don't sound like the brand, the drift has already started.
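Drawing the monthly voice-audit sample is trivial to automate, which removes the temptation to hand-pick the posts you already like. This sketch assumes each post is a dictionary with a hypothetical `published_at` datetime; the reading aloud stays human.

```python
import random
from datetime import datetime, timedelta
from typing import Dict, List, Optional


def voice_audit_sample(posts: List[Dict], now: datetime, k: int = 10,
                       window_days: int = 30, seed: Optional[int] = None) -> List[Dict]:
    """Pick up to k random posts published in the last window_days for a read-aloud audit."""
    cutoff = now - timedelta(days=window_days)
    recent = [p for p in posts if cutoff <= p["published_at"] <= now]
    rng = random.Random(seed)  # pass a seed to make an audit reproducible
    return rng.sample(recent, min(k, len(recent)))
```

Sampling without replacement means no post is read twice, and if fewer than ten posts went out in the window, the function simply returns all of them.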

The brands winning with AI content aren't the ones publishing the most. They're the ones who've built review systems rigorous enough to maintain quality at volume.

The Human-in-the-Loop Imperative

I want to be direct about something the AI content tools industry doesn't love to advertise: fully automated social content pipelines are a brand liability. Not because AI can't produce good content — it absolutely can — but because brand authority is built on accuracy, consistency, and real insight over time. None of those things can be fully automated.

One pattern I've seen repeatedly across content teams is a move to near-full automation on social channels after strong initial results. For the first two months, engagement is up, publishing frequency triples, and the team is thrilled. By month four, audiences start commenting that the content feels "off"; they can't articulate why, but engagement on substantive posts drops while superficial posts keep performing. When I audit the content, the problem becomes clear: the AI has optimized for engagement signals (questions, polls, provocative statements) at the expense of the nuanced, expert-level insights that built the audience in the first place. The brand voice hasn't just drifted; it has been replaced by an engagement-optimized facsimile of itself. In my experience, rebuilding that trust typically takes six months of deliberately human-led content.

The HITL framework I recommend has three tiers. Tier one is a quick factual and voice check that takes about 90 seconds per post and catches hallucinations and obvious voice drift. Tier two is a weekly editorial review in which a senior team member reads the full week's scheduled content as a narrative; this catches thematic drift and ensures the content mix reflects actual brand priorities. Tier three is a monthly strategic audit that compares content output against business goals, audience feedback, and search performance data. Most teams only do tier one. The teams with compounding brand authority do all three.
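The three tiers map naturally onto a daily checklist. In this sketch the 90-second tier-one estimate comes from the framework above, while running tier two on Fridays and tier three on the first of the month are assumed cadences chosen purely for illustration; `reviews_due` is a hypothetical name.

```python
from datetime import date
from typing import List

TIER_ONE_SECONDS = 90  # per-post estimate from the framework above


def reviews_due(day: date, posts_scheduled: int, weekly_review_weekday: int = 4) -> List[str]:
    """List the HITL review tasks due on a given day (cadence choices are assumptions)."""
    tasks = []
    if posts_scheduled > 0:
        minutes = posts_scheduled * TIER_ONE_SECONDS / 60
        tasks.append(f"tier 1: fact and voice check on {posts_scheduled} posts (~{minutes:.0f} min)")
    if day.weekday() == weekly_review_weekday:  # date.weekday(): Monday=0, so 4 = Friday
        tasks.append("tier 2: senior editor reads the week's scheduled content as a narrative")
    if day.day == 1:
        tasks.append("tier 3: audit output against business goals, audience feedback, and search data")
    return tasks
```

On Friday, August 1, 2025, with eight posts scheduled, all three tiers land on the same day, and tier one alone budgets about 12 minutes; a quiet Tuesday with nothing scheduled returns an empty list.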

What This Means for Your Next Quarter

AI content creation for social media has permanently changed what a well-run editorial calendar looks like. The teams still operating on static quarterly plans with manual research and single-draft review processes aren't just slower — they're structurally disadvantaged in a content environment that rewards speed, precision, and search alignment simultaneously.

But the answer isn't to automate everything and hope the AI figures it out. In my work at Meev, I've found the answer is to build a system where AI handles signal processing, ideation, and first-draft generation — and humans handle strategic direction, factual verification, voice integrity, and AEO alignment. That division of labor, done right, produces content that compounds in value over time rather than just filling a feed.

For teams rebuilding their content strategy around this model, I recommend starting with content cluster architecture — before touching the calendar. A well-structured content cluster strategy gives AI tools the topical framework they need to generate content that actually reinforces search authority rather than fragmenting it.

The editorial calendar isn't dead. It's just finally become intelligent.